Google hasn't open-sourced PaLM, but netizens have! Miniature versions of the hundred-billion-parameter model: the largest has only 1 billion parameters, with 8k context

Google has never open-sourced PaLM, but netizens have now released an open-source version of it.

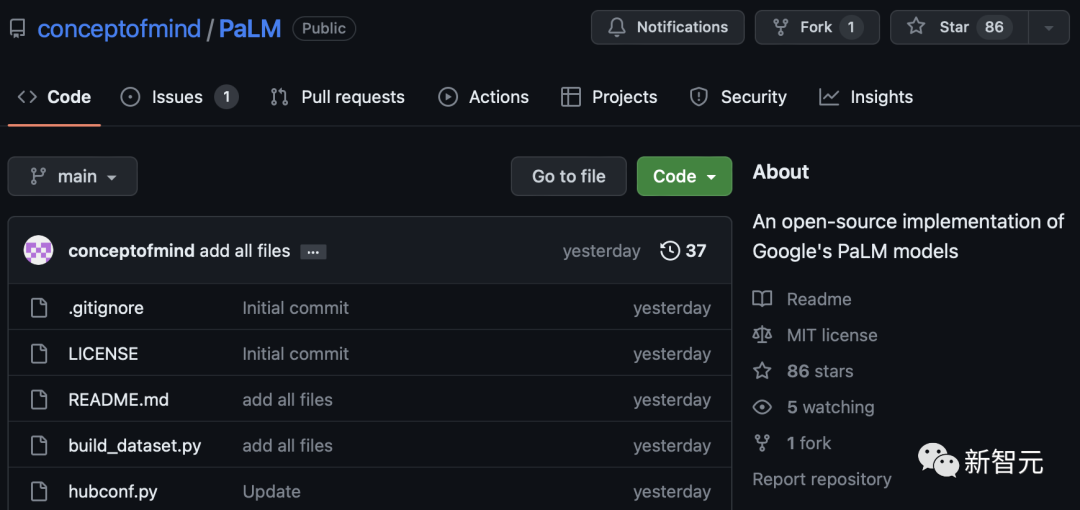

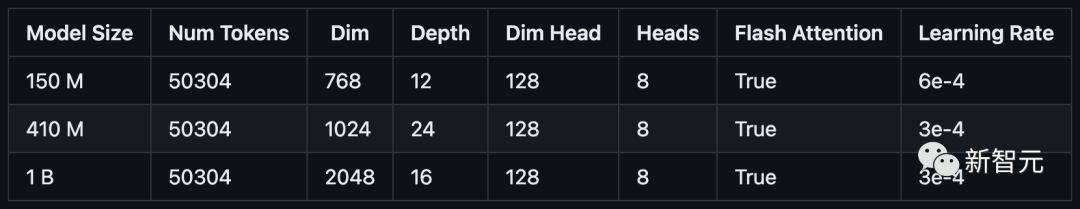

Yesterday, a developer open-sourced three miniature versions of the PaLM model on GitHub, with 150 million (PaLM-150m), 410 million (PaLM-410m) and 1 billion (PaLM-1b) parameters.

Project address: https://github.com/conceptofmind/PaLM

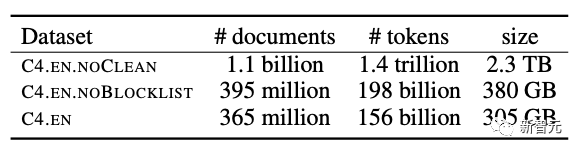

These three models were trained on Google's C4 dataset with a context length of 8k. A 2-billion-parameter model is currently being trained.

Google C4 Dataset

Open Source PaLM

Here is an example generated by the 410-million-parameter model:

My dog is very cute, but not very good at socializing with other dogs. The dog loves all new people and he likes to hang out with other dogs . I do need to take him to the park with other dogs. He does have some bad puppy breath, but it is only when he runs off in a direction he doesn't want to go. currently my dog is being very naughty. He would like to say hi in the park, but would rather take great care of himself for a while. He also has bad breath. I am going to have to get him some oral braces. It's been 3 months. The dog has some biting pains around his mouth. The dog is very timid and scared. The dog gets aggressive towards people. The dog is very playful and they are a little spoiled. I am not sure if it's a dog thing or if he is spoiled. He loves his toys and just wants to play. He plays with his toys all the time and even goes on walks. He is a little picky, not very good with other dogs. The dog is just a little puppy that goes to the park. He is a super friendly dog. He has not had a bad mouth or bad breath.

Admittedly the parameter count is small, but the generated text is still a bit hard to describe...

These models are compatible with many popular repositories by lucidrains, such as toolformer-pytorch, PaLM-rlhf-pytorch and PaLM-pytorch.

The three newly released models are baselines and will be further trained on larger datasets.

All models will also be instruction-tuned on FLAN to produce Flan-PaLM models.

For optimization, AdamW (Adam with decoupled weight decay) is used, but you can also choose Mitchell Wortsman's StableAdamW instead.
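To show what "decoupled" means in practice, here is a minimal plain-Python sketch of a single AdamW update on a list of scalar weights. It is an illustration of the standard AdamW rule, not the repository's training code; the helper name adamw_step is hypothetical, and its default hyperparameters simply mirror the defaults of PyTorch's torch.optim.AdamW.

```python
import math

def adamw_step(params, grads, m, v, t, lr=1e-3, betas=(0.9, 0.999),
               eps=1e-8, weight_decay=0.01):
    """One AdamW update on lists of floats (hypothetical illustrative helper).

    params, m and v are updated in place; t is the 1-based step count.
    """
    b1, b2 = betas
    for i, g in enumerate(grads):
        # Decoupled weight decay: shrink the weight directly, independent of the gradient.
        params[i] -= lr * weight_decay * params[i]
        # Standard Adam first and second moment estimates.
        m[i] = b1 * m[i] + (1 - b1) * g
        v[i] = b2 * v[i] + (1 - b2) * g * g
        # Bias correction for the zero-initialized moments.
        m_hat = m[i] / (1 - b1 ** t)
        v_hat = v[i] / (1 - b2 ** t)
        # Adaptive gradient step.
        params[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return params
```

With a zero gradient only the decay term acts, so a weight of 1.0 with lr=0.1 and weight_decay=0.1 becomes 0.99 after one step. In plain Adam with L2 regularization, the decay would instead be added to the gradient and then rescaled by the adaptive denominator, which is the coupling AdamW avoids.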

The models are currently available through Torch Hub, and the files are also hosted on the Hugging Face Hub.

If the model does not download correctly from Torch Hub, be sure to clear the checkpoints and model folders in .cache/torch/hub/. If that still does not resolve the issue, you can download the files from the Hugging Face repository. Hugging Face integration is currently in progress.
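The cache cleanup described above can be scripted. The helper below is a minimal sketch and not part of the repository. It assumes Torch Hub's default cache location ($TORCH_HOME/hub, else $XDG_CACHE_HOME/torch/hub, else ~/.cache/torch/hub; torch.hub.get_dir() reports the real path on your machine), and the repository folder name conceptofmind_PaLM_main is only a guess based on Torch Hub's owner_repo_branch naming. Check what is actually in the folder before deleting anything.

```python
import os
import shutil
from pathlib import Path

def default_hub_dir():
    # Assumption: mirrors Torch Hub's default lookup ($TORCH_HOME/hub, else
    # $XDG_CACHE_HOME/torch/hub, else ~/.cache/torch/hub). torch.hub.get_dir() is authoritative.
    torch_home = os.getenv("TORCH_HOME") or os.path.join(
        os.getenv("XDG_CACHE_HOME", "~/.cache"), "torch")
    return Path(os.path.expanduser(torch_home)) / "hub"

def clear_hub_cache(hub_dir=None, names=("checkpoints", "conceptofmind_PaLM_main")):
    """Delete the named subfolders of the Torch Hub cache and return the removed paths.

    The default folder names are hypothetical; adjust them to what you find on disk.
    """
    hub_dir = Path(hub_dir) if hub_dir is not None else default_hub_dir()
    removed = []
    for name in names:
        target = hub_dir / name
        if target.is_dir():
            shutil.rmtree(target)
            removed.append(target)
    return removed
```

Calling clear_hub_cache() with no arguments targets the default location; passing an explicit directory makes it easy to try on a copy first.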

All training data has been pre-tokenized with the GPT-NeoX tokenizer and split into blocks of 8192 tokens. This saves considerable cost in data preprocessing.
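The "pre-tokenize, then split into fixed-length blocks" step can be sketched as follows: token IDs from many documents are concatenated and cut into consecutive blocks of seq_len tokens. This follows the common grouping approach in Hugging Face training examples; whether the repository drops the leftover tail, pads it, or inserts an end-of-text token between documents is an assumption here, and the function name block_tokens and its eos_id parameter are hypothetical.

```python
from itertools import chain

def block_tokens(tokenized_docs, seq_len=8192, eos_id=None):
    """Concatenate tokenized documents and split them into blocks of exactly seq_len tokens.

    tokenized_docs: iterable of lists of token IDs (e.g. GPT-NeoX tokenizer output).
    eos_id: optional separator appended after each document (an assumption, not confirmed).
    The final partial block is dropped so every block has the same length.
    """
    if eos_id is not None:
        tokenized_docs = (doc + [eos_id] for doc in tokenized_docs)
    flat = list(chain.from_iterable(tokenized_docs))
    usable = (len(flat) // seq_len) * seq_len  # largest multiple of seq_len that fits
    return [flat[i:i + seq_len] for i in range(0, usable, seq_len)]
```

For example, block_tokens([[1, 2, 3], [4, 5, 6, 7]], seq_len=3) returns [[1, 2, 3], [4, 5, 6]]; the trailing 7 does not fill a block and is discarded. Doing this once, ahead of training, is what saves the repeated preprocessing cost.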

These datasets are stored on the Hugging Face Hub in Parquet format. You can find the individual data chunks here: C4 Chunk 1, C4 Chunk 2, C4 Chunk 3, C4 Chunk 4 and C4 Chunk 5.

The distributed training script also has an option to skip the provided pre-tokenized C4 dataset and instead load and process another dataset, such as OpenWebText.

Installation

A few installation steps are required before you can run the model.

<code>git clone https://github.com/conceptofmind/PaLM.git
cd PaLM/
pip3 install -r requirements.txt</code>

Usage

You can load a pretrained model through Torch Hub for additional training or fine-tuning:

<code>import torch
model = torch.hub.load("conceptofmind/PaLM", "palm_410m_8k_v0").cuda()</code>

Alternatively, you can load the PyTorch model checkpoint directly:

<code>from palm_rlhf_pytorch import PaLM
# Architecture settings for the 410m checkpoint
model = PaLM(
    num_tokens=50304,
    dim=1024,
    depth=24,
    dim_head=128,
    heads=8,
    flash_attn=True,
    qk_rmsnorm=False,
).cuda()
# Load the downloaded weights (adjust the path to where the file is saved)
model.load('/palm_410m_8k_v0.pt')</code>

To generate text with the model, you can use the command line:

prompt - Prompt used to generate text.

seq_len - Sequence length of the generated text. Default: 256.

temperature - Sampling temperature. Default: 0.8.

filter_thres - Filter threshold used for sampling. Default: 0.9.

model - The model to use for generation. Three sizes are available (150m, 410m, 1b): palm_150m_8k_v0, palm_410m_8k_v0, palm_1b_8k_v0.

<code>python3 inference.py "My dog is very cute" --seq_len 256 --temperature 0.8 --filter_thres 0.9 --model "palm_410m_8k_v0"</code>
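The temperature and filter_thres arguments can be illustrated with a small pure-Python sketch. It assumes filter_thres behaves like the top-k filter in lucidrains' PaLM-rlhf-pytorch, keeping roughly the top (1 - filter_thres) fraction of the vocabulary (so 0.9 keeps about 10% of tokens), and it samples from a temperature-scaled softmax. The actual inference.py may differ; lucidrains' code, for instance, uses Gumbel-max sampling, which draws from the same distribution. The function names are hypothetical.

```python
import math
import random

def top_k_filter(logits, filter_thres=0.9):
    """Keep the top ceil((1 - filter_thres) * vocab_size) logits; mask the rest with -inf."""
    k = max(1, math.ceil((1 - filter_thres) * len(logits)))
    keep = set(sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k])
    return [x if i in keep else float("-inf") for i, x in enumerate(logits)]

def sample_token(logits, temperature=0.8, filter_thres=0.9, rng=random):
    """Sample one token ID from temperature-scaled, top-k-filtered logits."""
    filtered = top_k_filter(logits, filter_thres)
    # Lower temperature sharpens the distribution; guard against division by zero.
    scaled = [x / max(temperature, 1e-10) for x in filtered]
    peak = max(scaled)
    # Numerically stable softmax weights; masked entries get weight 0.0.
    weights = [math.exp(x - peak) for x in scaled]
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]
```

With a 10-token vocabulary and filter_thres=0.9, only the single highest-scoring token survives the filter, so sampling becomes greedy. With a real 50k-token vocabulary, the same threshold leaves about 5,000 candidates for the temperature to choose among.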

To improve performance, inference uses torch.compile(), Flash Attention and Hidet.

If you want to extend generation with streaming or other features, the author provides a general-purpose inference script, inference.py.
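As a rough illustration of what adding streaming could look like, the generator below yields each new token as soon as it is chosen instead of returning the whole sequence at the end. The next_token_fn callback is a hypothetical stand-in for one model forward pass plus sampling; neither it nor stream_generate is part of inference.py.

```python
from typing import Callable, Iterator, List, Optional

def stream_generate(
    prompt_ids: List[int],
    next_token_fn: Callable[[List[int]], int],
    max_new_tokens: int = 256,
    eos_id: Optional[int] = None,
) -> Iterator[int]:
    """Autoregressively generate tokens, yielding each one as soon as it is produced."""
    ids = list(prompt_ids)
    for _ in range(max_new_tokens):
        # Hypothetical callback: run the model on the current ids and sample one token.
        token = next_token_fn(ids)
        ids.append(token)
        yield token
        # Stop early once the end-of-text token has been emitted.
        if eos_id is not None and token == eos_id:
            break
```

A caller can then decode and print each token as it arrives (for example, with the tokenizer's decode method and print(..., end="", flush=True)) rather than waiting for all seq_len tokens.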

Training

These "Open Source PaLM" models were trained on 64 A100 (80GB) GPUs.

To make training easier, the author also provides a distributed training script, train_distributed.py.

You are free to change the model's layers and hyperparameters to fit your hardware, and you can also load the pretrained weights and modify the training script to fine-tune the model.
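When resizing the model to fit your hardware, a quick parameter estimate helps. The function below is a back-of-the-envelope sketch, not the author's code. It assumes a PaLM-style parallel block (one fused input projection shared by attention and feed-forward, multi-query attention with a single shared key/value head, a SwiGLU feed-forward with ff_mult=4, no biases) and tied input/output embeddings, which is our reading of lucidrains' PaLM implementations. The name estimate_params is hypothetical, and small terms other than layer-norm gains are ignored.

```python
def estimate_params(num_tokens=50304, dim=1024, depth=24, dim_head=128, heads=8, ff_mult=4):
    """Rough parameter count for a PaLM-style decoder (assumptions stated in the lead-in)."""
    attn_inner = heads * dim_head
    ff_inner = ff_mult * dim
    # Fused input projection: queries for all heads + one shared key + one shared value
    # + the two SwiGLU branches (2 * ff_inner).
    fused_in = dim * (attn_inner + 2 * dim_head + 2 * ff_inner)
    attn_out = attn_inner * dim
    ff_out = ff_inner * dim
    norm = dim  # layer-norm gain per block
    per_layer = fused_in + attn_out + ff_out + norm
    embedding = num_tokens * dim  # assumed shared with the output projection (weight tying)
    final_norm = dim
    return depth * per_layer + embedding + final_norm

print(f"{estimate_params() / 1e6:.1f}M")  # 410.1M
```

Under these assumptions, the 410m configuration used in the checkpoint-loading example comes out at about 410.1 million parameters, consistent with its name. Each additional layer at dim=1024 adds roughly 15 million parameters, so depth is the easiest knob to turn when memory is tight.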

Finally, the author said he plans to add a dedicated fine-tuning script and to explore LoRA in the future.

Data

You can preprocess other datasets the same way as the C4 dataset used in training by running the build_dataset.py script. It pre-tokenizes the data, splits it into chunks of the specified sequence length, and uploads the result to the Hugging Face Hub.

For example:

<code>python3 build_dataset.py --seed 42 --seq_len 8192 --hf_account "your_hf_account" --tokenizer "EleutherAI/gpt-neox-20b" --dataset_name "EleutherAI/the_pile_deduplicated"</code>

PaLM 2 is coming

In April 2022, Google first unveiled PaLM, a model with 540 billion parameters. Like other LLMs, PaLM can perform a wide range of text generation and editing tasks.

PaLM was Google's first large-scale use of the Pathways system, scaling training to 6,144 chips, the largest TPU-based system configuration used for training at the time.

Its language understanding is outstanding: it can not only understand jokes but also explain what makes them funny to readers who don't get them.

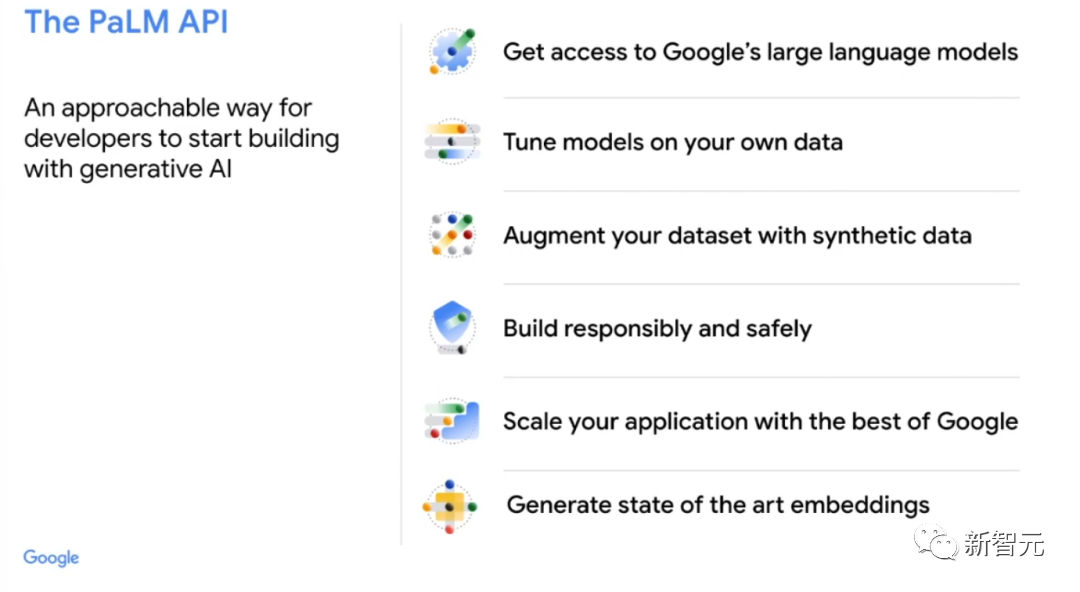

In mid-March, Google opened up the API for its PaLM large language model for the first time.

This means people can use it for tasks such as summarizing text and writing code, and can even build PaLM into a ChatGPT-like conversational chatbot.

At Google's upcoming annual I/O conference, CEO Sundar Pichai will announce the company's latest developments in AI.

Reportedly, PaLM 2, Google's latest and most advanced large language model, will launch soon.

PaLM 2 covers more than 100 languages and has been developed under the internal code name "Unified Language Model". It has also been extensively tested on coding, mathematics and creative writing.

Last month, Google said its medical LLM, Med-PaLM 2, can answer medical exam questions at an "expert doctor level", with 85% accuracy.

In addition, Google will also release Bard, a chatbot powered by large models, as well as a generative AI experience for search.

It remains to be seen whether these latest AI releases can help Google regain its footing.
