How to build a distributed second-level cache component with Redis + Caffeine

What is a second-level cache

Caching means reading data from a slower medium and keeping it on a faster one, for example from disk to memory.

Data is usually stored on disk, for example in a database. If every read goes to the database, read speed is limited by disk IO. That is why in-memory caches such as Redis exist: data is read once and kept in memory, so later requests can be answered directly from memory, which greatly improves speed.

However, Redis is usually deployed separately as a cluster, so every access costs network IO. Even though connections to the Redis cluster are managed by a connection pool, transferring the data still has some overhead. This is where an in-process cache such as Caffeine comes in: when the in-application cache already holds the requested data, it is used directly, without a network round trip to Redis. Together they form a two-level cache: the in-application cache is called the first-level cache, and the remote cache (such as Redis) is called the second-level cache.
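To make the lookup order concrete, here is a minimal, hypothetical sketch of the read path using only the JDK. It assumes two `ConcurrentHashMap`s standing in for Caffeine (level 1) and Redis (level 2), and a `Function` standing in for the database query; none of these names come from the component itself.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Function;

// Hypothetical sketch: `local` stands in for Caffeine (L1), `remote` for Redis (L2).
class TwoLevelReadPath<K, V> {
    final Map<K, V> local = new ConcurrentHashMap<>();   // in-process cache (level 1)
    final Map<K, V> remote = new ConcurrentHashMap<>();  // shared remote cache (level 2)
    private final Function<K, V> database;               // slow source of truth

    TwoLevelReadPath(Function<K, V> database) {
        this.database = database;
    }

    V get(K key) {
        V value = local.get(key);            // 1. in-process: no network, no serialization
        if (value != null) {
            return value;
        }
        value = remote.get(key);             // 2. remote cache: one network round trip in reality
        if (value == null) {
            value = database.apply(key);     // 3. miss on both levels: query the database
            if (value == null) {
                return null;                 // nothing to cache
            }
            remote.put(key, value);          // populate level 2 for the other application nodes
        }
        local.put(key, value);               // populate level 1 for this node
        return value;
    }
}
```

After `local.clear()` (for example, a freshly started node), the next read is served from `remote` without touching the database.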

Does the system need a cache at all? Two signals are worth checking.

CPU usage: if some of your results take a lot of CPU to compute, caching them avoids recomputing them on every request.

Database IO: if your database connection pool is mostly idle, you probably do not need a cache yet. Consider caching when the pool is busy or frequently reports warnings about running out of connections.

Advantages of a distributed second-level cache

Redis is typically used to store hot data; data that is not in Redis is read directly from the database.

If we already have Redis, why do we still need in-process caches such as Guava Cache and Caffeine?

Availability: if Redis becomes unavailable, every request can only go to the database, which can easily cause a cache avalanche, although this rarely happens in practice.

Performance: accessing Redis involves network I/O plus serialization and deserialization. Redis is very fast, but it is still slower than a local in-memory lookup, so keeping the hottest data locally speeds up access further. The idea is not unique to Internet architectures: computers use L1, L2, and L3 caches to reduce direct access to main memory for the same reason.
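The in-process level only pays off if it stays small and keeps the hottest entries. Caffeine does this with `maximumSize` and its own W-TinyLFU eviction policy. As a simpler stand-in that needs nothing but the JDK, the sketch below swaps in plain LRU eviction via `LinkedHashMap`; the class name is hypothetical and LRU is a deliberate simplification, not what Caffeine does.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical size-bounded local cache with LRU eviction (a simplification of Caffeine's policy).
// Not thread-safe: wrap it with Collections.synchronizedMap(...) before sharing it across threads.
class LruLocalCache<K, V> extends LinkedHashMap<K, V> {
    private final int maximumSize;

    LruLocalCache(int maximumSize) {
        super(16, 0.75f, true); // accessOrder = true: get() moves an entry to the most-recent end
        this.maximumSize = maximumSize;
    }

    @Override
    protected boolean removeEldestEntry(Map.Entry<K, V> eldest) {
        // Called after each put: evict the least recently used entry once the bound is exceeded
        return size() > maximumSize;
    }
}
```

With a bound of 2, reading `"a"` before inserting a third key means `"b"` (the least recently used entry) is the one evicted.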

So Redis alone meets most needs, but when you need higher performance and higher availability, you have to understand multi-level caching.

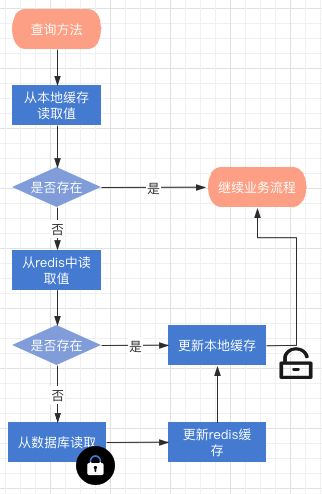

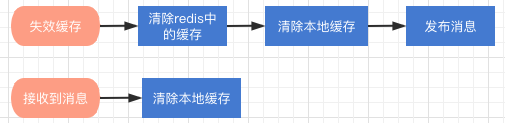

How the second-level cache works

Read process: the local (Caffeine) cache is checked first, then Redis. When neither returns a value, the update process is triggered: the cached method is executed and its result is written back. The whole update runs under a per-key lock, so concurrent misses on the same key load the value only once.
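The locked update on a double miss can be sketched with the JDK alone. This is a hypothetical, simplified version of the idea (class and method names are illustrative, and one map stands in for both cache levels): one `ReentrantLock` per key, plus a second lookup after acquiring it so that threads which waited do not reload the value.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.locks.ReentrantLock;
import java.util.function.Function;

// Hypothetical sketch: `store` stands in for the local + Redis lookup, `loader` for the database.
class PerKeyLockingLoader<K, V> {
    final Map<K, V> store = new ConcurrentHashMap<>();
    private final Map<K, ReentrantLock> locks = new ConcurrentHashMap<>();
    private final Function<K, V> loader;

    PerKeyLockingLoader(Function<K, V> loader) {
        this.loader = loader;
    }

    V get(K key) {
        V value = store.get(key);
        if (value != null) {
            return value;                       // fast path: no locking on a hit
        }
        // Atomically obtain the single lock object for this key
        ReentrantLock lock = locks.computeIfAbsent(key, k -> new ReentrantLock());
        lock.lock();                            // acquired outside try so unlock() is always paired
        try {
            value = store.get(key);             // double check: another thread may have loaded it
            if (value == null) {
                value = loader.apply(key);      // only one thread per key reaches the database
                if (value != null) {
                    store.put(key, value);
                }
            }
            return value;
        } finally {
            lock.unlock();
        }
    }
}
```

Like a lock map that is never pruned, `locks` grows with the number of distinct keys; for large key spaces a bounded or striped set of locks is the usual alternative.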

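Cache invalidation process: updating or deleting a cache key triggers invalidation. After the Redis entry is written or cleared, a message is published on a Redis topic so that every node removes that key from its local cache.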
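The invalidation broadcast can be simulated in-process to show why every node, including the writer, ends up dropping its local copy. In this hypothetical sketch a list of subscribers plays the role of the Redis pub/sub topic and a map plays the role of Redis; in the component the publishing side is `redisTemplate.convertAndSend(topic, message)`, while the subscribing listener is not shown in the article.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CopyOnWriteArrayList;

// Hypothetical simulation: `remote` stands in for Redis, `topic` for a Redis pub/sub channel.
class InvalidationBus {
    final Map<String, String> remote = new ConcurrentHashMap<>();
    final List<CacheNode> topic = new CopyOnWriteArrayList<>();

    // Every subscribed node receives the message, including the one that published it
    void publish(String key) {
        for (CacheNode node : topic) {
            node.onMessage(key);
        }
    }
}

class CacheNode {
    final Map<String, String> local = new ConcurrentHashMap<>(); // this node's in-process cache
    private final InvalidationBus bus;

    CacheNode(InvalidationBus bus) {
        this.bus = bus;
        bus.topic.add(this);                   // subscribe on startup
    }

    String get(String key) {
        return local.computeIfAbsent(key, bus.remote::get); // L1 miss falls back to the shared cache
    }

    void put(String key, String value) {
        bus.remote.put(key, value);            // 1. write the shared cache first
        bus.publish(key);                      // 2. then tell every node to drop its stale copy
    }

    void onMessage(String key) {
        local.remove(key);                     // the next get() reloads from the shared cache
    }
}
```

Real Redis pub/sub is asynchronous and does not store messages for disconnected subscribers, so a node can briefly serve a stale local value; a local TTL such as `expireAfterWrite` bounds that window.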

How to use the component?

The component is built on the Spring Cache framework. To use the distributed second-level cache in a project, you only need to add `cacheManager = "L2_CacheManager"` to the cache annotation (or reference the same bean name through the corresponding constant in `CacheRedisCaffeineAutoConfiguration`):

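```java
// This method is served through the distributed second-level cache
@Cacheable(cacheNames = CacheNames.CACHE_12HOUR, cacheManager = "L2_CacheManager")
public Config getAllValidateConfig() {
    // ... load and return the configuration (body omitted in the original)
}
```

If you want to use both the plain distributed cache (Redis only) and the distributed second-level cache in the same project, you need to register a `@Primary` `CacheManager` bean with Spring; otherwise Spring cannot decide which of the two managers to use for annotations that do not name one: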

```java
// Declared inside a @Configuration class
@Primary
@Bean("defaultCacheManager")
public RedisCacheManager cacheManager(RedisConnectionFactory factory) {
    // Create a default configuration; the config object is used to customize the cache
    RedisCacheConfiguration config = RedisCacheConfiguration.defaultCacheConfig();
    // Set the default entry TTL (also via Duration) and do not cache null values
    config = config.entryTtl(Duration.ofMinutes(2)).disableCachingNullValues();
    // Set of cache names to initialize up front
    Set<String> cacheNames = new HashSet<>();
    cacheNames.add(CacheNames.CACHE_15MINS);
    cacheNames.add(CacheNames.CACHE_30MINS);
    // Apply a different configuration to each cache
    Map<String, RedisCacheConfiguration> configMap = new HashMap<>();
    configMap.put(CacheNames.CACHE_15MINS, config.entryTtl(Duration.ofMinutes(15)));
    configMap.put(CacheNames.CACHE_30MINS, config.entryTtl(Duration.ofMinutes(30)));
    // Build the cacheManager from the custom configuration
    RedisCacheManager cacheManager = RedisCacheManager.builder(factory)
            // Without cacheDefaults, caches not listed in configMap would not get the 2-minute TTL above
            .cacheDefaults(config)
            // Call order matters: initialCacheNames(...) assigns the default configuration to every
            // listed name, so it must come before the per-cache configurations
            .initialCacheNames(cacheNames)
            .withInitialCacheConfigurations(configMap)
            .build();
    return cacheManager;
}
```

Then:

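```java
// This method uses the distributed second-level cache
@Cacheable(cacheNames = CacheNames.CACHE_12HOUR, cacheManager = "L2_CacheManager")
public Config getAllValidateConfig() {
    // ... (body omitted in the original)
}

// This method uses the plain distributed cache (the @Primary RedisCacheManager)
@Cacheable(cacheNames = CacheNames.CACHE_12HOUR)
public Config getAllValidateConfig2() {
    // ... (body omitted in the original)
}
```

Core implementation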

At its core, the component implements the `org.springframework.cache.CacheManager` interface and extends `org.springframework.cache.support.AbstractValueAdaptingCache`, so that cache reads and writes go through the Spring Cache framework.
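Much of what `AbstractValueAdaptingCache` contributes is the translation between user values and stored values: when null values are allowed, a `null` returned by the cached method is stored as a sentinel (Spring uses `NullValue.INSTANCE`), so "cached null" can be told apart from "not cached". The JDK-only sketch below mirrors that idea with hypothetical names; it is not Spring's code.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical sketch of the store/user value translation done by Spring's AbstractValueAdaptingCache.
class NullAdaptingCache {
    private static final Object NULL_SENTINEL = new Object(); // plays the role of NullValue.INSTANCE
    private final Map<Object, Object> store = new ConcurrentHashMap<>(); // cannot hold null values itself
    private final boolean allowNullValues;

    NullAdaptingCache(boolean allowNullValues) {
        this.allowNullValues = allowNullValues;
    }

    private Object toStoreValue(Object userValue) {
        if (userValue != null) {
            return userValue;
        }
        if (allowNullValues) {
            return NULL_SENTINEL;
        }
        throw new IllegalArgumentException("null values are not allowed in this cache");
    }

    private Object fromStoreValue(Object storeValue) {
        return storeValue == NULL_SENTINEL ? null : storeValue;
    }

    void put(Object key, Object value) {
        store.put(key, toStoreValue(value));
    }

    boolean contains(Object key) {
        return store.containsKey(key);          // true even when the cached user value is null
    }

    Object get(Object key) {
        return fromStoreValue(store.get(key));  // null here means "missing" or "cached null"
    }
}
```

Caching the sentinel shields the database from repeated queries for keys that genuinely have no value; in this component the behavior is switched by `isCacheNullValues()` in its properties.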

RedisCaffeineCacheManager implements the CacheManager interface

`RedisCaffeineCacheManager` manages the cache instances: for each cache name it lazily creates a `RedisCaffeineCache` (backed by its own Caffeine instance) on first use and stores it in a map.
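The lazy, one-instance-per-name creation described above can also be written with `ConcurrentHashMap.computeIfAbsent`, which guarantees a single instance per name without separate `get`/`putIfAbsent` steps. This sketch is hypothetical and JDK-only: a `Function` replaces the construction of `RedisCaffeineCache`, and the `dynamic` flag keeps the component's meaning (when false, only predefined names are served).

```java
import java.util.Collections;
import java.util.Map;
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Function;

// Hypothetical sketch of a lazy, per-name cache registry.
class LazyCacheRegistry<C> {
    private final Map<String, C> caches = new ConcurrentHashMap<>();
    private final Set<String> predefinedNames;
    private final boolean dynamic;
    private final Function<String, C> factory; // stands in for `new RedisCaffeineCache(name, ...)`

    LazyCacheRegistry(Set<String> predefinedNames, boolean dynamic, Function<String, C> factory) {
        this.predefinedNames = predefinedNames;
        this.dynamic = dynamic;
        this.factory = factory;
    }

    C getCache(String name) {
        if (!dynamic && !predefinedNames.contains(name)) {
            return null;                              // static mode: unknown names are not created
        }
        return caches.computeIfAbsent(name, factory); // created at most once, even under concurrency
    }

    Set<String> getCacheNames() {
        return Collections.unmodifiableSet(caches.keySet()); // names actually created so far
    }
}
```

Returning the names that were actually created is one design option; the component instead returns its predefined name set from `getCacheNames()`.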

```java
package com.axin.idea.rediscaffeinecachestarter.support;

import com.axin.idea.rediscaffeinecachestarter.CacheRedisCaffeineProperties;
import com.github.benmanes.caffeine.cache.Caffeine;
import com.github.benmanes.caffeine.cache.stats.CacheStats;
import lombok.extern.slf4j.Slf4j;
import org.springframework.cache.Cache;
import org.springframework.cache.CacheManager;
import org.springframework.data.redis.core.RedisTemplate;
import org.springframework.util.CollectionUtils;

import java.util.*;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;
import java.util.concurrent.TimeUnit;

@Slf4j
public class RedisCaffeineCacheManager implements CacheManager {

    // Never reassigned, so cache instances already handed out to callers stay registered
    private static final ConcurrentMap<String, Cache> cacheMap = new ConcurrentHashMap<>();

    private final CacheRedisCaffeineProperties cacheRedisCaffeineProperties;

    private final RedisTemplate<Object, Object> stringKeyRedisTemplate;

    // When false, only the predefined cache names below can be created
    private final boolean dynamic;

    private final Set<String> cacheNames = new HashSet<>(Arrays.asList(
            CacheNames.CACHE_15MINS,
            CacheNames.CACHE_30MINS,
            CacheNames.CACHE_60MINS,
            CacheNames.CACHE_180MINS,
            CacheNames.CACHE_12HOUR));

    public RedisCaffeineCacheManager(CacheRedisCaffeineProperties cacheRedisCaffeineProperties,
                                     RedisTemplate<Object, Object> stringKeyRedisTemplate) {
        this.cacheRedisCaffeineProperties = cacheRedisCaffeineProperties;
        this.stringKeyRedisTemplate = stringKeyRedisTemplate;
        this.dynamic = cacheRedisCaffeineProperties.isDynamic();
    }

    // ------------------------- cache utilities -------------------------

    /**
     * Clears the in-process caches on all nodes (a message with a null cache name means "clear all").
     */
    public void clearAllCache() {
        stringKeyRedisTemplate.convertAndSend(cacheRedisCaffeineProperties.getRedis().getTopic(),
                new CacheMessage(null, null));
    }

    /**
     * Returns the statistics of every in-process (Caffeine) cache.
     * result: {"cache name": statistics}
     *
     * @return statistics per cache name, or null if no cache has been created yet
     */
    public static Map<String, CacheStats> getCacheStats() {
        if (CollectionUtils.isEmpty(cacheMap)) {
            return null;
        }
        Map<String, CacheStats> result = new LinkedHashMap<>();
        for (Cache cache : cacheMap.values()) {
            RedisCaffeineCache caffeineCache = (RedisCaffeineCache) cache;
            result.put(caffeineCache.getName(), caffeineCache.getCaffeineCache().stats());
        }
        return result;
    }

    // ------------------------------ core ------------------------------

    @Override
    public Cache getCache(String name) {
        Cache cache = cacheMap.get(name);
        if (cache != null) {
            return cache;
        }
        if (!dynamic && !cacheNames.contains(name)) {
            return null;
        }
        cache = new RedisCaffeineCache(name, stringKeyRedisTemplate, caffeineCache(name), cacheRedisCaffeineProperties);
        // Another thread may have created the same cache concurrently; keep whichever was registered first
        Cache oldCache = cacheMap.putIfAbsent(name, cache);
        log.debug("create cache instance, the cache name is : {}", name);
        return oldCache == null ? cache : oldCache;
    }

    @Override
    public Collection<String> getCacheNames() {
        return Collections.unmodifiableSet(this.cacheNames);
    }

    /**
     * Clears this node's local (Caffeine) cache for the given cache name and key.
     */
    public void clearLocal(String cacheName, Object key) {
        // A null cacheName means: clear every in-process cache
        if (cacheName == null) {
            log.info("Clearing all local caches");
            // Invalidate in place instead of replacing the map, so existing instances are cleared too
            for (Cache cache : cacheMap.values()) {
                ((RedisCaffeineCache) cache).clearLocal(null);
            }
            return;
        }
        Cache cache = cacheMap.get(cacheName);
        if (cache == null) {
            return;
        }
        RedisCaffeineCache redisCaffeineCache = (RedisCaffeineCache) cache;
        redisCaffeineCache.clearLocal(key);
    }

    /**
     * Builds the local first-level (Caffeine) cache for a cache name.
     */
    private com.github.benmanes.caffeine.cache.Cache<Object, Object> caffeineCache(String name) {
        Caffeine<Object, Object> cacheBuilder = Caffeine.newBuilder();
        CacheRedisCaffeineProperties.CacheDefault cacheConfig;
        switch (name) {
            case CacheNames.CACHE_15MINS:
                cacheConfig = cacheRedisCaffeineProperties.getCache15m();
                break;
            case CacheNames.CACHE_30MINS:
                cacheConfig = cacheRedisCaffeineProperties.getCache30m();
                break;
            case CacheNames.CACHE_60MINS:
                cacheConfig = cacheRedisCaffeineProperties.getCache60m();
                break;
            case CacheNames.CACHE_180MINS:
                cacheConfig = cacheRedisCaffeineProperties.getCache180m();
                break;
            case CacheNames.CACHE_12HOUR:
                cacheConfig = cacheRedisCaffeineProperties.getCache12h();
                break;
            default:
                cacheConfig = cacheRedisCaffeineProperties.getCacheDefault();
        }
        long expireAfterAccess = cacheConfig.getExpireAfterAccess();
        long expireAfterWrite = cacheConfig.getExpireAfterWrite();
        int initialCapacity = cacheConfig.getInitialCapacity();
        long maximumSize = cacheConfig.getMaximumSize();
        long refreshAfterWrite = cacheConfig.getRefreshAfterWrite();

        log.debug("Initializing local cache: {}", name);
        if (expireAfterAccess > 0) {
            log.debug("Local cache expires {} seconds after access", expireAfterAccess);
            cacheBuilder.expireAfterAccess(expireAfterAccess, TimeUnit.SECONDS);
        }
        if (expireAfterWrite > 0) {
            log.debug("Local cache expires {} seconds after write", expireAfterWrite);
            cacheBuilder.expireAfterWrite(expireAfterWrite, TimeUnit.SECONDS);
        }
        if (initialCapacity > 0) {
            log.debug("Local cache initial capacity {}", initialCapacity);
            cacheBuilder.initialCapacity(initialCapacity);
        }
        if (maximumSize > 0) {
            log.debug("Local cache maximum size {}", maximumSize);
            cacheBuilder.maximumSize(maximumSize);
        }
        if (refreshAfterWrite > 0) {
            // Caffeine only supports refreshAfterWrite on a LoadingCache: build() without a CacheLoader
            // throws IllegalStateException, so the setting is ignored for this manually populated cache
            log.warn("refreshAfterWrite={}s ignored for cache {}: it requires a LoadingCache", refreshAfterWrite, name);
        }
        cacheBuilder.recordStats();
        return cacheBuilder.build();
    }
}
```

RedisCaffeineCache extends AbstractValueAdaptingCache

The core logic lives in the `get` and `put` methods, with `lookup` performing the actual two-level read.
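Every Redis operation in `RedisCaffeineCache` relies on two small rules: keys are laid out as `[cachePrefix:]cacheName:key`, and the TTL is looked up per cache name with a global default as fallback. The JDK-only sketch below reproduces those two rules in isolation; the class name, the sample cache name `cache12h`, and the sample values are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.TimeUnit;

// Hypothetical, isolated version of the key-layout and TTL-fallback rules.
class KeyAndTtlRules {
    private final String cachePrefix;           // optional global prefix, may be null or empty
    private final String cacheName;
    private final long defaultExpirationSeconds;
    private final Map<String, Long> expiresByCacheName = new HashMap<>();

    KeyAndTtlRules(String cachePrefix, String cacheName, long defaultExpirationSeconds) {
        this.cachePrefix = cachePrefix;
        this.cacheName = cacheName;
        this.defaultExpirationSeconds = defaultExpirationSeconds;
        expiresByCacheName.put("cache12h", TimeUnit.HOURS.toSeconds(12)); // sample per-name TTL
    }

    String redisKey(Object key) {
        String keyStr = cacheName + ":" + key;
        return (cachePrefix == null || cachePrefix.isEmpty()) ? keyStr : cachePrefix + ":" + keyStr;
    }

    long expireSeconds() {
        // A per-cache-name TTL wins; otherwise fall back to the global default
        return expiresByCacheName.getOrDefault(cacheName, defaultExpirationSeconds);
    }
}
```

Because the prefix is part of every key, a pattern used to find all keys of one cache (for `KEYS` or `SCAN`) must include the prefix as well, for example `app:cache12h:*`.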

```java
package com.axin.idea.rediscaffeinecachestarter.support;

import com.axin.idea.rediscaffeinecachestarter.CacheRedisCaffeineProperties;
import com.github.benmanes.caffeine.cache.Cache;
import lombok.Getter;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.cache.support.AbstractValueAdaptingCache;
import org.springframework.data.redis.core.RedisTemplate;
import org.springframework.util.StringUtils;

import java.time.Duration;
import java.util.HashMap;
import java.util.Map;
import java.util.Set;
import java.util.concurrent.Callable;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.ReentrantLock;

public class RedisCaffeineCache extends AbstractValueAdaptingCache {

    private final Logger logger = LoggerFactory.getLogger(RedisCaffeineCache.class);

    private String name;

    private RedisTemplate<Object, Object> redisTemplate;

    @Getter
    private Cache<Object, Object> caffeineCache;

    private String cachePrefix;

    /**
     * Default Redis key TTL: 3600 s
     */
    private long defaultExpiration = 3600;

    private Map<String, Long> defaultExpires = new HashMap<>();
    {
        defaultExpires.put(CacheNames.CACHE_15MINS, TimeUnit.MINUTES.toSeconds(15));
        defaultExpires.put(CacheNames.CACHE_30MINS, TimeUnit.MINUTES.toSeconds(30));
        defaultExpires.put(CacheNames.CACHE_60MINS, TimeUnit.MINUTES.toSeconds(60));
        defaultExpires.put(CacheNames.CACHE_180MINS, TimeUnit.MINUTES.toSeconds(180));
        defaultExpires.put(CacheNames.CACHE_12HOUR, TimeUnit.HOURS.toSeconds(12));
    }

    private String topic;

    // One lock per key so a missing value is loaded only once; entries are never removed
    private final Map<String, ReentrantLock> keyLockMap = new ConcurrentHashMap<>();

    protected RedisCaffeineCache(boolean allowNullValues) {
        super(allowNullValues);
    }

    public RedisCaffeineCache(String name, RedisTemplate<Object, Object> redisTemplate,
                              Cache<Object, Object> caffeineCache, CacheRedisCaffeineProperties cacheRedisCaffeineProperties) {
        super(cacheRedisCaffeineProperties.isCacheNullValues());
        this.name = name;
        this.redisTemplate = redisTemplate;
        this.caffeineCache = caffeineCache;
        this.cachePrefix = cacheRedisCaffeineProperties.getCachePrefix();
        this.defaultExpiration = cacheRedisCaffeineProperties.getRedis().getDefaultExpiration();
        this.topic = cacheRedisCaffeineProperties.getRedis().getTopic();
        defaultExpires.putAll(cacheRedisCaffeineProperties.getRedis().getExpires());
    }

    @Override
    public String getName() {
        return this.name;
    }

    @Override
    public Object getNativeCache() {
        return this;
    }

    @Override
    @SuppressWarnings("unchecked")
    public <T> T get(Object key, Callable<T> valueLoader) {
        Object value = lookup(key);
        if (value != null) {
            // lookup() returns the stored form; unwrap a cached null (NullValue) back to null
            return (T) fromStoreValue(value);
        }
        // The key exists neither in Redis nor in the local cache: load it under a per-key lock
        ReentrantLock lock = keyLockMap.computeIfAbsent(key.toString(), k -> {
            logger.debug("create lock for key : {}", k);
            return new ReentrantLock();
        });
        lock.lock();
        try {
            // Double check: another thread may have loaded the value while we were waiting
            value = lookup(key);
            if (value != null) {
                return (T) fromStoreValue(value);
            }
            // Invoke the original method to obtain the value
            T loaded;
            try {
                loaded = valueLoader.call();
            } catch (Exception e) {
                throw new ValueRetrievalException(key, valueLoader, e);
            }
            // put() stores null as NullValue, or evicts the key if null values are not allowed
            put(key, loaded);
            return loaded;
        } finally {
            lock.unlock();
        }
    }

    @Override
    public void put(Object key, Object value) {
        if (!super.isAllowNullValues() && value == null) {
            this.evict(key);
            return;
        }
        long expire = getExpire();
        logger.debug("put:{},expire:{}", getKey(key), expire);
        redisTemplate.opsForValue().set(getKey(key), toStoreValue(value), expire, TimeUnit.SECONDS);
        // The value changed: notify the other nodes to clear their local cache
        push(new CacheMessage(this.name, key));
        // caffeineCache.put(key, value) would be pointless here: this node also receives
        // its own invalidation message, which removes the entry again
    }

    @Override
    public ValueWrapper putIfAbsent(Object key, Object value) {
        Object cacheKey = getKey(key);
        // SET NX with an expiry is a single atomic Redis command
        Boolean setSuccess = redisTemplate.opsForValue()
                .setIfAbsent(cacheKey, toStoreValue(value), Duration.ofSeconds(getExpire()));
        if (Boolean.TRUE.equals(setSuccess)) {
            push(new CacheMessage(this.name, key));
            // Spring's contract: return null when there was no previous mapping
            return null;
        }
        // The key already existed: cache and return the value that is actually stored in Redis
        Object existing = redisTemplate.opsForValue().get(cacheKey);
        if (existing != null) {
            caffeineCache.put(key, existing);
        }
        return toValueWrapper(existing);
    }

    @Override
    public void evict(Object key) {
        // Clear Redis first, then Caffeine: if Caffeine were cleared first, another request could
        // reload the old value from Redis into Caffeine during the short window before Redis is cleared
        redisTemplate.delete(getKey(key));
        push(new CacheMessage(this.name, key));
        caffeineCache.invalidate(key);
    }

    @Override
    public void clear() {
        // Same ordering as evict(): Redis first, then Caffeine.
        // The pattern is built with getKey() so that it includes cachePrefix. KEYS blocks Redis
        // while it walks the keyspace, so SCAN is preferable on large production instances.
        Set<Object> keys = redisTemplate.keys(getKey("*"));
        if (keys != null && !keys.isEmpty()) {
            redisTemplate.delete(keys);
        }
        push(new CacheMessage(this.name, null));
        caffeineCache.invalidateAll();
    }

    /**
     * Lookup logic: the local Caffeine cache first, then Redis.
     */
    @Override
    protected Object lookup(Object key) {
        Object cacheKey = getKey(key);
        Object value = caffeineCache.getIfPresent(key);
        if (value != null) {
            logger.debug("got value from the local cache, the key is : {}", cacheKey);
            return value;
        }
        value = redisTemplate.opsForValue().get(cacheKey);
        if (value != null) {
            logger.debug("got value from redis and put it into the local cache, the key is : {}", cacheKey);
            caffeineCache.put(key, value);
        }
        return value;
    }

    /**
     * Clears the local cache (all entries when key is null).
     */
    public void clearLocal(Object key) {
        logger.debug("clear local cache, the key is : {}", key);
        if (key == null) {
            caffeineCache.invalidateAll();
        } else {
            caffeineCache.invalidate(key);
        }
    }

    // ------------------------- private methods -------------------------

    private Object getKey(Object key) {
        String keyStr = this.name.concat(":").concat(key.toString());
        return StringUtils.hasLength(this.cachePrefix) ? this.cachePrefix.concat(":").concat(keyStr) : keyStr;
    }

    private long getExpire() {
        Long cacheNameExpire = defaultExpires.get(this.name);
        return cacheNameExpire == null ? defaultExpiration : cacheNameExpire;
    }

    /**
     * Notifies the other nodes to clear their local cache when the cache changes.
     */
    private void push(CacheMessage message) {
        redisTemplate.convertAndSend(topic, message);
    }
}
```

The above is the detailed content of How Redis+Caffeine implements distributed second-level cache components. For more information, please follow other related articles on the PHP Chinese website!
