OpenAI and Google have a double standard: use other people's data to train large models, but never allow their own data to leak out

In the new era of generative AI, big tech companies are pursuing a "do as I say, not as I do" strategy when it comes to using online content. To some extent, this strategy amounts to a double standard and an abuse of their influence.

Meanwhile, as large language models (LLMs) become the mainstream of AI development, large companies and startups alike are sparing no effort to build their own. Training data is a key prerequisite for what these models can do.

According to a recent Insider report, Microsoft-backed OpenAI, Google, and Google-backed Anthropic have for years been using online content from other websites and companies to train their generative AI models. All of this was done without asking for specific permission, and it will form part of a brewing legal battle that will determine the future of the web and how copyright law is applied in this new era.

These big tech companies may argue that this is fair use; whether that is really the case is up for debate. But they won't let their own content be used to train other AI models. So we can't help but ask: why are these large technology companies free to use other companies' online content when training their large models?

These companies are smart, but also very hypocritical

Is there any solid evidence for the claim that big tech companies use other people’s online content but don’t allow others to use their own? This can be seen in the terms of service and use of some of their products.

First, let's look at Claude, an AI assistant similar to ChatGPT launched by Anthropic. It can handle tasks such as summarization, search, creative assistance, question answering, and coding. It was upgraded again some time ago, expanding its context window to 100k tokens, which greatly accelerated processing.

Claude's Terms of Service read as follows. You may not access or use the Service in the following ways (only some are listed here). If any of these restrictions are inconsistent with the Acceptable Use Policy or unclear, the latter shall prevail:

- Develop any product or service that competes with our Services, including developing or training any AI or machine learning algorithms or models

- Crawl, scrape, or otherwise obtain data or information from our Services

Claude Terms of Service Address: https://vault.pactsafe.io/s/9f502c93-cb5c-4571-b205-1e479da61794/legal.html#terms

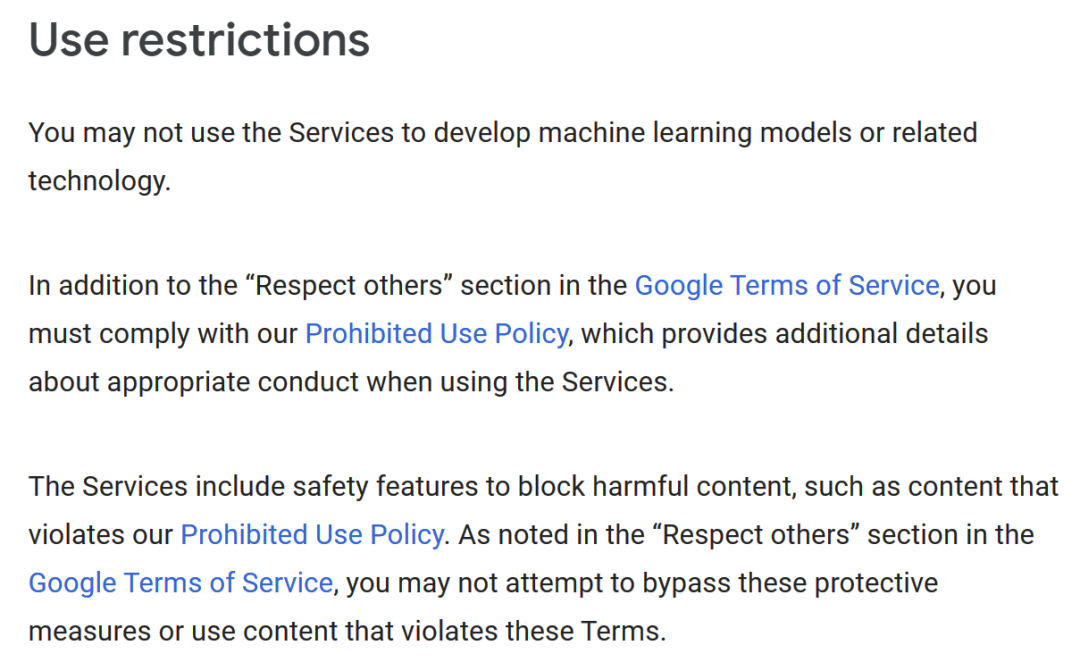

Similarly, Google's Generative AI Terms of Use state, "You may not use the Service to develop machine learning models or related technologies."

Google Generative AI Terms of Use Address: https://policies.google.com/terms/generative-ai

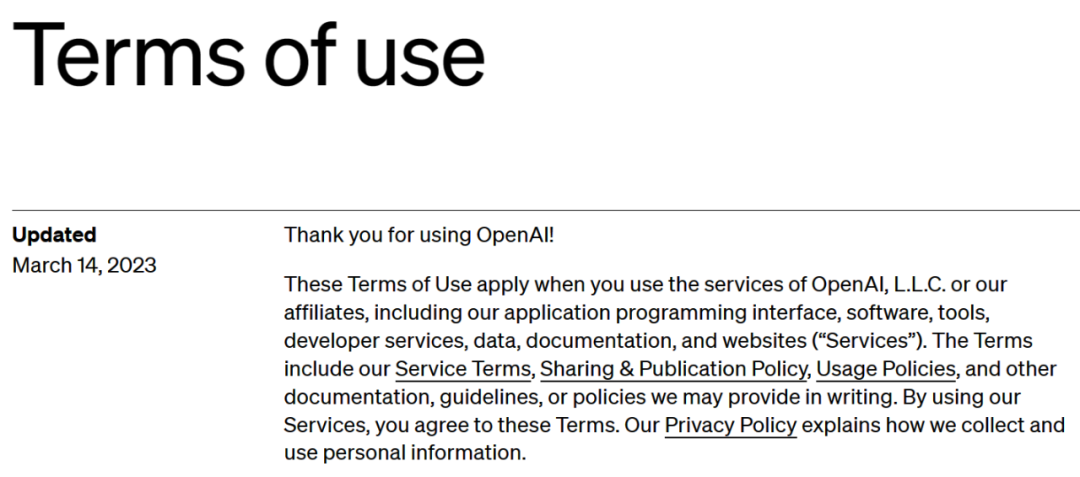

What about OpenAI's terms of use? They are similar to Google's: "You may not use the output of this service to develop models that compete with OpenAI."

OpenAI Terms of Use Address: https://openai.com/policies/terms-of-use

These companies are smart: they know that high-quality content is critical to training new AI models, so it makes sense for them not to allow others to use their output this way. Yet they have no scruples about using other people's data to train their own models. How can this be explained?

OpenAI, Google, and Anthropic did not respond to Insider's request for comment.

Reddit, Twitter and Others: Enough is Enough

In fact, other companies were unhappy once they realized what was happening. In April, Reddit, whose content has been used for years to train AI models, said it plans to start charging for access to its data.

Reddit CEO Steve Huffman said, “Reddit’s data corpus is too valuable to give away that value to the largest companies in the world for free.”

Also in April this year, Musk accused Microsoft, OpenAI's main backer, of illegally using Twitter data to train AI models. "Time for litigation," he tweeted.

However, when asked by Insider for comment, Microsoft said, "There are so many things wrong with this premise that I don't even know where to start."

OpenAI CEO Sam Altman has tried to address this problem more thoughtfully by exploring new AI models that respect copyright. According to Axios, he recently said, "We are trying to develop a new model. If the AI system uses your content or uses your style, you will get paid for it."

Publishers (including Insider) all have a vested interest here. In addition, some publishers, including News Corp, are already pushing for tech companies to pay for using their content to train AI models.

The current way AI models are trained "breaks" the web

A former Microsoft executive says something must be wrong here. Steven Sinofsky, a Microsoft veteran and well-known software developer, believes that the current way AI models are trained "breaks" the web.

One of his posts reads, "In the past, crawled data was used in exchange for click-throughs. But now it is only used to train a model and brings no value to creators and copyright owners."

Perhaps, as more companies wake up to this, the lopsided use of data in the era of generative AI will soon change.
