Technology peripherals

Technology peripherals

AI

AI

5 million token monster, read the entire 'Harry Potter' in one go! More than 1000 times longer than ChatGPT

5 million token monster, read the entire 'Harry Potter' in one go! More than 1000 times longer than ChatGPT

5 million token monster, read the entire 'Harry Potter' in one go! More than 1000 times longer than ChatGPT

Poor memory is the main pain point of current mainstream large-scale language models. For example, ChatGPT can only input 4096 tokens (about 3000 words). I often forget what I said before while chatting, and it is not even enough to read. of a short story.

The short input window also limits the application scenarios of the language model. For example, when summarizing a scientific paper (about 10,000 words), the article needs to be manually segmented. When it is input into the model, the associated information between different chapters is lost.

Although GPT-4 supports a maximum of 32,000 tokens and the upgraded Claude supports a maximum of 100,000 tokens, they can only alleviate the problem of insufficient brain capacity.

Recently a entrepreneurial team Magic announced that it will soon release the LTM-1 model, which can support up to 5 million token, It is about 500,000 lines of code or 5,000 files, which is 50 times higher than Claude. It can basically cover most storage needs. This is really a quantitative change that leads to a qualitative change!

The main application scenario of LTM-1 is code completion, for example, it can generate longer and more complex code suggestions.You can also reuse and synthesize information across multiple files.

The bad news is that Magic, the developer of LTM-1, did not release the specific technical principles, but only said that it designed a brand new method the Long-term Memory Network (LTM Net).

But there is also good news. In September 2021, researchers from DeepMind and other institutions once proposed a model called ∞-former, which includes long-term memory (long- term memory (LTM) mechanism, which theoretically allows the Transformer model to have infinite memory, but it is not clear whether the two are the same technology or an improved version.

Paper link: https://arxiv.org/pdf/2109.00301.pdf

The development team stated that although LTM Nets can see more context than GPT, the number of parameters of the LTM-1 model is much smaller than the current sota model, so it is more intelligent. Low, but continuing to increase the model size should improve the performance of LTM Nets.Currently LTM-1 has opened alpha test applications.

Application link: https://www.php. cn/link/bbfb937a66597d9646ad992009aee405

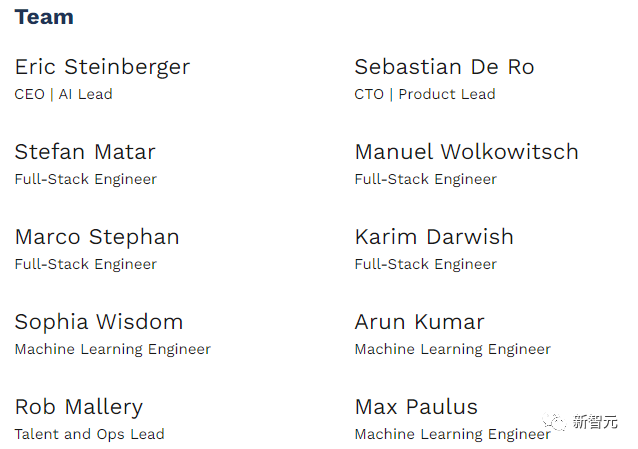

LTM-1 developer Magic was founded in 2022. It mainly develops products similar to GitHub Copilot, which can help software engineers write and review , debugging and modifying code, the goal is to create an AI colleague for programmers whose main competitive advantage is that the model can read longer codes.Magic is committed to public benefit and its mission is to build and safely deploy AGI systems that exceed human capabilities. It is currently a startup company with only 10 people.

Eric Steinberger, CEO and co-founder of Magic, graduated from Cambridge University with a bachelor's degree in computer science and has done machine learning research at FAIR.

Before founding Magic, Steinberger also founded ClimateScience to help children around the world learn about the impacts of climate change.

Infinite Memory Transformer

The design of the attention mechanism in the Transformer, the core component of the language model, will cause the time complexity to increase every time the length of the input sequence is increased. Quadratic growth.

Although there are some variants of the attention mechanism, such as sparse attention, etc., to reduce the complexity of the algorithm, its complexity is still related to the input length and cannot be infinitely expanded.

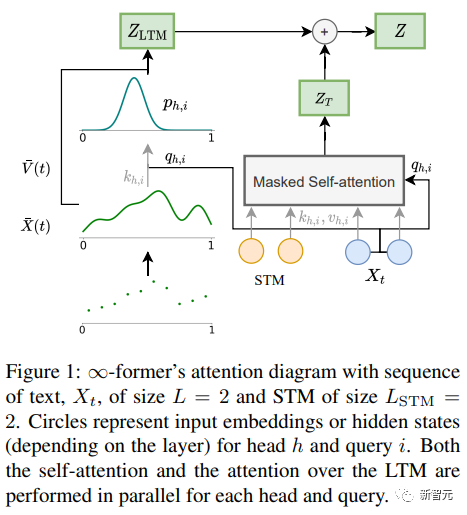

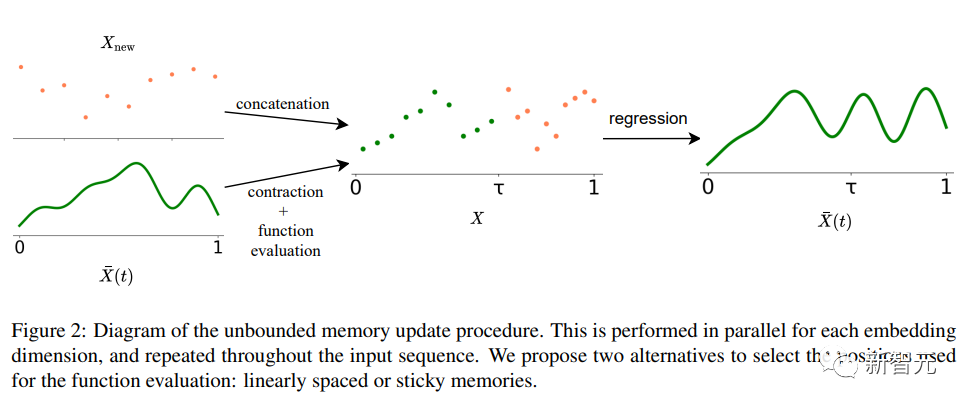

∞-former The key to the long-term memory (LTM) Transformer model that can extend the input sequence to infinity is a continuous spatial attention framework that reduces the representation granularity Increase the number of memory information units (basis functions).

In the framework, the input sequence is represented as a "continuous signal", representing N radial basis functions ( RBF), in this way, the attention complexity of ∞-former is reduced to O(L^2 L × N), while the attention complexity of the original Transformer is O(L×(L L_LTM)), where L and L_LTM correspond to the Transformer input size and long-term memory length respectively.

This representation method has two main advantages:

#1. The context can be represented by a basis function N that is smaller than the number of tokens, reducing The computational cost of attention is reduced;

2. N can be fixed, thereby being able to represent unlimited context in memory without increasing the complexity of the attention mechanism.

Of course, there is no free lunch in the world, and the price is the reduction of resolution: when using a smaller number of basis functions, Can result in reduced accuracy when representing the input sequence as a continuous signal.

In order to alleviate the problem of resolution reduction, researchers introduced the concept of "sticky memories", which attributes larger spaces in the LTM signal to more frequently accessed memory areas , created a concept of "permanence" in LTM, which enables the model to better capture long-term background without losing relevant information. It is also inspired by the long-term potential and plasticity of the brain.

Experimental part

In order to verify whether ∞-former can model long context, the researchers first Experiments are conducted on a synthesis task of sorting tokens by frequency in a long sequence; then experiments are conducted on language modeling and document-based dialogue generation by fine-tuning a pre-trained language model.

Sort

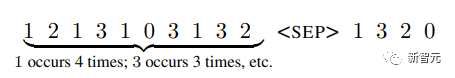

The input consists of a token sampled according to a probability distribution (unknown to the system) sequence, the goal is to generate tokens in descending order of frequency in the sequence

In order to study whether long-term memory is effectively utilized, and Transformer Whether ranking is done simply by modeling the most recent tag, the researchers designed the tag probability distribution to change over time.

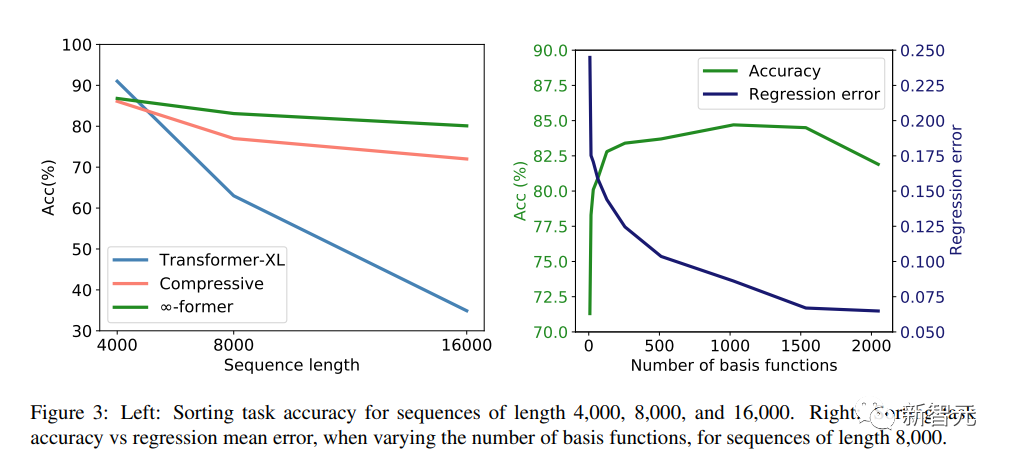

There are 20 tokens in the vocabulary, and experiments were conducted with sequences of lengths of 4,000, 8,000, and 16,000 respectively. Transformer-XL and compressive transformer were used as baseline models for comparison.

The experimental results can be seen that in the case of short sequence length (4,000), Transformer-XL achieves slightly higher accuracy than other models; but when the sequence length increases, its accuracy also drops rapidly, but For ∞-former, this decrease is not obvious, indicating that it has more advantages when modeling long sequences.

Language Modeling

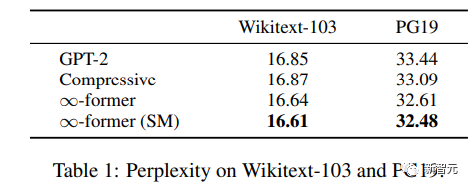

To understand whether long-term memory can be used to extend pre-training For the language model, the researchers fine-tuned GPT-2 small on a subset of Wikitext103 and PG-19, including approximately 200 million tokens.

The experimental results can be seen that ∞-former can reduce the confusion of Wikitext-103 and PG19, and ∞- The improvement obtained by former is larger on the PG19 dataset because books rely more on long-term memory than Wikipedia articles.

Document-based dialogue

In document-based dialogue generation, except In addition to the conversation history, the model can also obtain documents about the topic of the conversation.

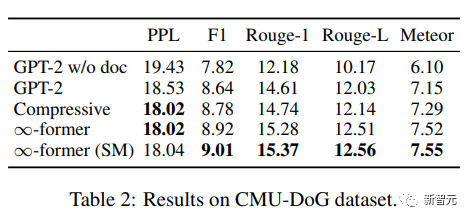

In the CMU Document Grounded Conversation dataset (CMU-DoG), the conversation is about a movie, and a summary of the movie is given as an auxiliary document; considering that the conversation contains multiple different In continuous discourse, auxiliary documents are divided into parts.

To assess the usefulness of long-term memory, the researchers made the task more challenging by giving the model access to the file only before the conversation began.

After fine-tuning GPT-2 small, in order to allow the model to keep the entire document in memory, a continuous LTM (∞-former) with N=512 basis functions is used to extend GPT -2.

To evaluate the model effect, use perplexity, F1 score, Rouge-1 and Rouge-L, and Meteor indicators.

From the results, ∞-former and compressive Transformer can generate better corpus, although the confusion between the two The degree is basically the same, but ∞-former achieves better scores on other indicators.

The above is the detailed content of 5 million token monster, read the entire 'Harry Potter' in one go! More than 1000 times longer than ChatGPT. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

How to set the Debian Apache log level

Apr 13, 2025 am 08:33 AM

How to set the Debian Apache log level

Apr 13, 2025 am 08:33 AM

This article describes how to adjust the logging level of the ApacheWeb server in the Debian system. By modifying the configuration file, you can control the verbose level of log information recorded by Apache. Method 1: Modify the main configuration file to locate the configuration file: The configuration file of Apache2.x is usually located in the /etc/apache2/ directory. The file name may be apache2.conf or httpd.conf, depending on your installation method. Edit configuration file: Open configuration file with root permissions using a text editor (such as nano): sudonano/etc/apache2/apache2.conf

How to optimize the performance of debian readdir

Apr 13, 2025 am 08:48 AM

How to optimize the performance of debian readdir

Apr 13, 2025 am 08:48 AM

In Debian systems, readdir system calls are used to read directory contents. If its performance is not good, try the following optimization strategy: Simplify the number of directory files: Split large directories into multiple small directories as much as possible, reducing the number of items processed per readdir call. Enable directory content caching: build a cache mechanism, update the cache regularly or when directory content changes, and reduce frequent calls to readdir. Memory caches (such as Memcached or Redis) or local caches (such as files or databases) can be considered. Adopt efficient data structure: If you implement directory traversal by yourself, select more efficient data structures (such as hash tables instead of linear search) to store and access directory information

How to implement file sorting by debian readdir

Apr 13, 2025 am 09:06 AM

How to implement file sorting by debian readdir

Apr 13, 2025 am 09:06 AM

In Debian systems, the readdir function is used to read directory contents, but the order in which it returns is not predefined. To sort files in a directory, you need to read all files first, and then sort them using the qsort function. The following code demonstrates how to sort directory files using readdir and qsort in Debian system: #include#include#include#include#include//Custom comparison function, used for qsortintcompare(constvoid*a,constvoid*b){returnstrcmp(*(

Debian mail server firewall configuration tips

Apr 13, 2025 am 11:42 AM

Debian mail server firewall configuration tips

Apr 13, 2025 am 11:42 AM

Configuring a Debian mail server's firewall is an important step in ensuring server security. The following are several commonly used firewall configuration methods, including the use of iptables and firewalld. Use iptables to configure firewall to install iptables (if not already installed): sudoapt-getupdatesudoapt-getinstalliptablesView current iptables rules: sudoiptables-L configuration

Debian mail server SSL certificate installation method

Apr 13, 2025 am 11:39 AM

Debian mail server SSL certificate installation method

Apr 13, 2025 am 11:39 AM

The steps to install an SSL certificate on the Debian mail server are as follows: 1. Install the OpenSSL toolkit First, make sure that the OpenSSL toolkit is already installed on your system. If not installed, you can use the following command to install: sudoapt-getupdatesudoapt-getinstallopenssl2. Generate private key and certificate request Next, use OpenSSL to generate a 2048-bit RSA private key and a certificate request (CSR): openss

How debian readdir integrates with other tools

Apr 13, 2025 am 09:42 AM

How debian readdir integrates with other tools

Apr 13, 2025 am 09:42 AM

The readdir function in the Debian system is a system call used to read directory contents and is often used in C programming. This article will explain how to integrate readdir with other tools to enhance its functionality. Method 1: Combining C language program and pipeline First, write a C program to call the readdir function and output the result: #include#include#include#includeintmain(intargc,char*argv[]){DIR*dir;structdirent*entry;if(argc!=2){

How Debian OpenSSL prevents man-in-the-middle attacks

Apr 13, 2025 am 10:30 AM

How Debian OpenSSL prevents man-in-the-middle attacks

Apr 13, 2025 am 10:30 AM

In Debian systems, OpenSSL is an important library for encryption, decryption and certificate management. To prevent a man-in-the-middle attack (MITM), the following measures can be taken: Use HTTPS: Ensure that all network requests use the HTTPS protocol instead of HTTP. HTTPS uses TLS (Transport Layer Security Protocol) to encrypt communication data to ensure that the data is not stolen or tampered during transmission. Verify server certificate: Manually verify the server certificate on the client to ensure it is trustworthy. The server can be manually verified through the delegate method of URLSession

How to do Debian Hadoop log management

Apr 13, 2025 am 10:45 AM

How to do Debian Hadoop log management

Apr 13, 2025 am 10:45 AM

Managing Hadoop logs on Debian, you can follow the following steps and best practices: Log Aggregation Enable log aggregation: Set yarn.log-aggregation-enable to true in the yarn-site.xml file to enable log aggregation. Configure log retention policy: Set yarn.log-aggregation.retain-seconds to define the retention time of the log, such as 172800 seconds (2 days). Specify log storage path: via yarn.n