Implement time series-based data recording and analysis using Scrapy and MongoDB

With the rapid development of big data and data mining technology, more and more attention is being paid to recording and analyzing time series data. For web crawling, Scrapy is a capable crawler framework, and MongoDB is a popular NoSQL document database. This article shows how to use Scrapy and MongoDB together to record crawled data with timestamps and analyze it as a time series.

1. Installation and use of Scrapy

Scrapy is a web crawler framework written in Python. We can install it with the following command:

pip install scrapy

Once Scrapy is installed, we can write our crawler. The simple example below walks through the basic workflow.

1. Create a Scrapy project

In the command line terminal, create a new Scrapy project through the following command:

scrapy startproject scrapy_example

After the project is created, we enter the project's root directory with the following command:

cd scrapy_example

2. Write a crawler

We can create a new crawler through the following command:

scrapy genspider example www.example.com

Here, example is the name we give the crawler (spider), and www.example.com is the domain of the site to crawl. Scrapy generates a spider template file, scrapy_example/spiders/example.py, which we then edit to write the crawler.

In this example, we crawl a single web page and save its content (the page's HTML source) to a text file. The crawler code is as follows:

import scrapy


class ExampleSpider(scrapy.Spider):
    name = "example"
    start_urls = ["https://www.example.com/"]

    def parse(self, response):
        # Save the raw HTML of the downloaded page to a local file.
        filename = "example.txt"
        with open(filename, "w", encoding="utf-8") as f:
            f.write(response.text)
        self.log(f"Saved file {filename}")

3. Run the crawler

Before running the crawler, we first adjust the Scrapy configuration. Open scrapy_example/settings.py (the settings.py file inside the project package) and set ROBOTSTXT_OBEY to False, so that Scrapy no longer checks the site's robots.txt rules before sending requests.

ROBOTSTXT_OBEY = False
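To make clear what this setting switches off, the sketch below uses Python's standard urllib.robotparser to apply the kind of allow/disallow check that Scrapy performs when ROBOTSTXT_OBEY is True. It is only an illustration: Scrapy uses its own robots.txt parser, so edge cases may differ, and the robots.txt content, user-agent string and URLs here are made up.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt, used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def allowed(url: str, user_agent: str = "scrapy_example") -> bool:
    """Return True if the sample robots.txt lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


print(allowed("https://www.example.com/"))                   # True
print(allowed("https://www.example.com/private/page.html"))  # False
```

With ROBOTSTXT_OBEY = False, Scrapy skips this kind of check entirely and requests every URL the spider asks for.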

Next, we can run the crawler through the following command:

scrapy crawl example

When the run finishes, an example.txt file appears in the directory where we ran the command (the project root). It contains the HTML source of the page we crawled.

2. Installation and use of MongoDB

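MongoDB is a widely used NoSQL document database. On Debian/Ubuntu releases whose repositories still include the mongodb package, we can install it with the following command (recent Ubuntu releases no longer ship this package; MongoDB's official installation guide uses its own mongodb-org packages instead):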

sudo apt-get install mongodb

After the installation is complete, we need to start the MongoDB service. Enter the following command in the terminal (with the mongodb-org packages, the service is named mongod instead):

sudo service mongodb start

Once the service is running, we can work with data through the MongoDB shell.

1. Create a database

Enter the following command in the command line terminal to connect to the MongoDB database:

mongo

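After connecting (newer MongoDB releases replace the mongo shell with mongosh), we can switch to a new database with the following command:

use scrapytest

Here, scrapytest is the database name we chose. MongoDB only creates the database once data is first stored in it.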

2. Create a collection

In MongoDB, data is stored in collections. We can create a new collection with the following command:

db.createCollection("example")

Here, example is the collection name we chose. (MongoDB also creates a collection automatically the first time a document is inserted into it.)

3. Insert data

In Python, we can use the pymongo library to access the MongoDB database. We can use the following command to install the pymongo library:

pip install pymongo

After the installation is complete, we can use the following code to insert data:

import pymongo

# Connect to the local MongoDB server on the default port.
client = pymongo.MongoClient(host="localhost", port=27017)
db = client["scrapytest"]
collection = db["example"]

data = {"title": "example", "content": "Hello World!"}
collection.insert_one(data)

Here, data is the document we want to insert; it has two fields, title and content.

4. Query data

We can use the following code to query data:

import pymongo

client = pymongo.MongoClient(host="localhost", port=27017)
db = client["scrapytest"]
collection = db["example"]

# Return the first document whose title field equals "example".
result = collection.find_one({"title": "example"})
print(result["content"])

The query condition {"title": "example"} matches documents whose title field equals example. find_one returns the whole matching document (or None if nothing matches), and we read the value of its content field with result["content"].
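For plain key/value filters like this one, the matching rule is simple: a document matches when every field in the filter equals the corresponding field in the document. The pure-Python sketch below mimics that rule on a couple of made-up documents; the helpers matches and find_one_in are hypothetical names, and they only handle equality filters, not operators such as $gte, array matching or nested fields.

```python
def matches(doc: dict, query: dict) -> bool:
    """True if every key in an equality-only query equals the document's value."""
    return all(doc.get(key) == value for key, value in query.items())


def find_one_in(docs, query):
    """Return the first matching document in list order, or None (like find_one)."""
    return next((doc for doc in docs if matches(doc, query)), None)


docs = [
    {"title": "other", "content": "Not this one"},
    {"title": "example", "content": "Hello World!"},
]

result = find_one_in(docs, {"title": "example"})
print(result["content"])  # Hello World!
```

The None return for a missing match is the case to guard against in real code before indexing result["content"].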

3. Combined use of Scrapy and MongoDB

In real crawler applications, we often need to save the crawled data to a database and record when each piece of data was collected, so that it can be analyzed as a time series. Combining Scrapy and MongoDB meets this requirement well.

In Scrapy, we can use pipelines to process the crawled data and save the data to MongoDB.

1. Create a pipeline

Scrapy has already generated a pipelines.py file inside the project package (scrapy_example/pipelines.py), and we define our pipeline in that file. In this example, the pipeline saves each crawled item to MongoDB and adds a timestamp field recording when the item was stored. The code is as follows:

import pymongo
from datetime import datetime, timezone


class ScrapyExamplePipeline:
    def open_spider(self, spider):
        # Open one MongoDB connection for the whole crawl.
        self.client = pymongo.MongoClient("localhost", 27017)
        self.db = self.client["scrapytest"]

    def close_spider(self, spider):
        self.client.close()

    def process_item(self, item, spider):
        # One collection per spider, named after it ("example" here).
        collection = self.db[spider.name]
        record = dict(item)
        # Store the time in UTC so that date operators such as $hour,
        # which default to UTC, give consistent results.
        record["timestamp"] = datetime.now(timezone.utc)
        collection.insert_one(record)
        return item

Scrapy calls process_item for every item the spider produces. We copy the item into a dictionary, add a timestamp field, and save the dictionary to MongoDB as one document.
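Because process_item only needs an object with an insert_one method, the timestamping logic can be exercised without Scrapy or a running MongoDB server. The sketch below is a hypothetical, test-friendly variant of the pipeline above: TimestampPipeline, FakeCollection and the injected clock parameter are illustrative names, not part of Scrapy or pymongo.

```python
from datetime import datetime, timezone


class FakeCollection:
    """Hypothetical in-memory stand-in for a pymongo collection."""

    def __init__(self):
        self.docs = []

    def insert_one(self, doc):
        self.docs.append(doc)


class TimestampPipeline:
    """Same idea as ScrapyExamplePipeline, with the collection and clock injected."""

    def __init__(self, collection, clock=lambda: datetime.now(timezone.utc)):
        self.collection = collection
        self.clock = clock

    def process_item(self, item, spider):
        record = dict(item)                 # copy, so the item itself is unchanged
        record["timestamp"] = self.clock()  # when the record was stored
        self.collection.insert_one(record)
        return item


# Usage: a fixed clock makes the stored timestamp predictable.
fixed = datetime(2021, 6, 1, 12, 0, tzinfo=timezone.utc)
coll = FakeCollection()
pipe = TimestampPipeline(coll, clock=lambda: fixed)
item = {"text": "Hello"}
returned = pipe.process_item(item, spider=None)
print(coll.docs)
```

Injecting the clock is a design choice for testing: in production the default clock gives the current UTC time, while a test can pin it to a known value.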

2. Configure the pipeline

Open scrapy_example/settings.py and set ITEM_PIPELINES to enable the pipeline we just defined:

ITEM_PIPELINES = {
    "scrapy_example.pipelines.ScrapyExamplePipeline": 300,
}

The value 300 sets the pipeline's position in the processing order: items pass through the enabled pipelines from the lowest value to the highest, and the values are customarily kept in the 0-1000 range.
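The ordering rule can be illustrated without Scrapy: the sketch below sorts a settings-style dictionary by its values, lowest first, which is the order the paragraph above describes. The second entry, CleanTextPipeline, is a hypothetical pipeline added only so there is something to sort.

```python
ITEM_PIPELINES = {
    "scrapy_example.pipelines.ScrapyExamplePipeline": 300,
    "scrapy_example.pipelines.CleanTextPipeline": 100,  # hypothetical pipeline
}


def execution_order(pipelines: dict) -> list:
    """Return pipeline paths from lowest to highest order value."""
    return [path for path, _ in sorted(pipelines.items(), key=lambda kv: kv[1])]


for path in execution_order(ITEM_PIPELINES):
    print(path)
```

With these values, a cleaning step at 100 would see each item before the MongoDB pipeline at 300 stores it.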

3. Modify the crawler code

Modify the crawler we wrote earlier so that it yields items, which Scrapy then passes to the pipeline.

import scrapy


class ExampleSpider(scrapy.Spider):
    name = "example"
    start_urls = ["https://www.example.com/"]

    def parse(self, response):
        # Yield one item per text node directly inside a <p> element.
        for text in response.css("p::text").getall():
            yield {"text": text}

Here we extract the text inside the page's paragraphs and store each piece in a text field. Scrapy passes every yielded item to the enabled pipeline for processing.
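The selector p::text picks the text nodes that are direct children of p elements, so text nested inside, say, a b tag within a paragraph is not included. The sketch below reproduces that behavior with the standard library's html.parser on a made-up, well-formed HTML snippet. It is only an illustration of what the selector selects, not how Scrapy (which uses parsel and lxml) works internally, and it does not handle HTML's implicit closing of unclosed p tags.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they are not pushed on the stack.
VOID_ELEMENTS = {"area", "base", "br", "col", "embed", "hr", "img",
                 "input", "link", "meta", "source", "track", "wbr"}


class ParagraphTextParser(HTMLParser):
    """Collect text nodes whose innermost enclosing element is <p>."""

    def __init__(self):
        super().__init__()
        self.stack = []  # names of currently open elements
        self.texts = []  # collected text nodes

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_ELEMENTS:
            self.stack.append(tag)

    def handle_endtag(self, tag):
        # Pop back to the matching open element, if there is one.
        if tag in self.stack:
            while self.stack.pop() != tag:
                pass

    def handle_data(self, data):
        if self.stack and self.stack[-1] == "p":
            self.texts.append(data)


def paragraph_texts(html: str) -> list:
    parser = ParagraphTextParser()
    parser.feed(html)
    parser.close()
    return parser.texts


html = "<html><body><p>Hello <b>bold</b> world</p><div>skip</div><p>Second</p></body></html>"
print(paragraph_texts(html))  # ['Hello ', ' world', 'Second']
```

Each of those strings would become one {"text": ...} item in the spider above. To include nested text as well, Scrapy's descendant form "p ::text" (with a space) is the usual choice.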

4. Query data

Now the crawled data is saved in MongoDB, each document with its timestamp. What remains is the time series analysis, which we can do with MongoDB's query and aggregation operations.

Find data within a specified time period:

import pymongo
from datetime import datetime

client = pymongo.MongoClient("localhost", 27017)
db = client["scrapytest"]
collection = db["example"]

# Half-open range [start_time, end_time): all of 2021.
start_time = datetime(2021, 1, 1)
end_time = datetime(2022, 1, 1)

result = collection.find({"timestamp": {"$gte": start_time, "$lt": end_time}})
for item in result:
    print(item["text"])

Here we find all data recorded in 2021. pymongo interprets naive datetimes such as these as UTC.
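Boundaries are the easiest part of a range query to get wrong: an inclusive end of datetime(2021, 12, 31) means midnight at the start of 31 December, so that day's records would be missed. A half-open interval, $gte the first instant of the year and $lt the first instant of the next, covers the whole year. The pure-Python sketch below applies both comparisons to a few made-up records so the difference is visible without a database; in_range is a hypothetical helper name.

```python
from datetime import datetime


def in_range(records, start, end):
    """Keep records with start <= timestamp < end,
    the same test as {"$gte": start, "$lt": end}."""
    return [r for r in records if start <= r["timestamp"] < end]


records = [
    {"text": "new year", "timestamp": datetime(2021, 1, 1, 0, 0)},
    {"text": "last day", "timestamp": datetime(2021, 12, 31, 18, 30)},
    {"text": "next year", "timestamp": datetime(2022, 1, 1, 0, 0)},
]

half_open = [r["text"] for r in in_range(records, datetime(2021, 1, 1), datetime(2022, 1, 1))]

# An inclusive end at 2021-12-31 00:00 drops everything later on 31 December.
inclusive = [r["text"] for r in records
             if datetime(2021, 1, 1) <= r["timestamp"] <= datetime(2021, 12, 31)]

print(half_open)  # ['new year', 'last day']
print(inclusive)  # ['new year']
```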

Count the number of records for each hour of the day:

import pymongo

client = pymongo.MongoClient("localhost", 27017)
db = client["scrapytest"]
collection = db["example"]

pipeline = [
    # Group documents by the hour (0-23) of their timestamp and count them.
    {"$group": {"_id": {"$hour": "$timestamp"}, "count": {"$sum": 1}}},
    # Sort the groups by hour.
    {"$sort": {"_id": 1}},
]

result = collection.aggregate(pipeline)
for item in result:
    print(f"{item['_id']}: {item['count']}")

Here we use MongoDB's aggregation pipeline to count the number of records in each hour of the day.
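Note that $hour extracts only the hour of the day (0-23, evaluated in UTC unless a timezone is given), so records from different days land in the same group. The sketch below reproduces that grouping in plain Python with collections.Counter on made-up timestamps, and contrasts it with grouping by calendar hour (date plus hour), which in MongoDB 5.0 and later can be expressed with the $dateTrunc operator. The function names are hypothetical.

```python
from collections import Counter
from datetime import datetime

timestamps = [
    datetime(2021, 3, 1, 9, 5),
    datetime(2021, 3, 1, 9, 40),
    datetime(2021, 3, 2, 9, 15),
    datetime(2021, 3, 2, 14, 0),
]


def count_by_hour_of_day(ts):
    """Like $group on {"$hour": "$timestamp"} followed by $sort on _id."""
    return sorted(Counter(t.hour for t in ts).items())


def count_by_calendar_hour(ts):
    """Group by each timestamp truncated to the start of its hour."""
    return sorted(Counter(t.replace(minute=0, second=0, microsecond=0) for t in ts).items())


for hour, count in count_by_hour_of_day(timestamps):
    print(f"{hour}: {count}")  # 9: 3, then 14: 1

for hour_start, count in count_by_calendar_hour(timestamps):
    print(f"{hour_start:%Y-%m-%d %H}:00 -> {count}")
```

Which grouping is right depends on the question: hour of day suits daily activity patterns, while calendar hours give a true time series.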

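By combining Scrapy and MongoDB, we can conveniently record data as a time series and analyze it. The advantages of this approach are its extensibility and flexibility, which make it suitable for many different application scenarios. However, because a real implementation may involve more complex data structures and algorithms, it will usually need some optimization and tuning in practice.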

The above is the detailed content of Implement time series-based data recording and analysis using Scrapy and MongoDB. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Which version is generally used for mongodb?

Apr 07, 2024 pm 05:48 PM

Which version is generally used for mongodb?

Apr 07, 2024 pm 05:48 PM

It is recommended to use the latest version of MongoDB (currently 5.0) as it provides the latest features and improvements. When selecting a version, you need to consider functional requirements, compatibility, stability, and community support. For example, the latest version has features such as transactions and aggregation pipeline optimization. Make sure the version is compatible with the application. For production environments, choose the long-term support version. The latest version has more active community support.

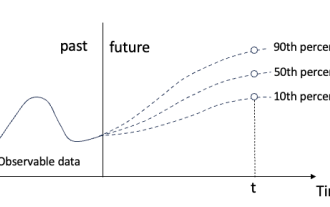

Quantile regression for time series probabilistic forecasting

May 07, 2024 pm 05:04 PM

Quantile regression for time series probabilistic forecasting

May 07, 2024 pm 05:04 PM

Do not change the meaning of the original content, fine-tune the content, rewrite the content, and do not continue. "Quantile regression meets this need, providing prediction intervals with quantified chances. It is a statistical technique used to model the relationship between a predictor variable and a response variable, especially when the conditional distribution of the response variable is of interest When. Unlike traditional regression methods, quantile regression focuses on estimating the conditional magnitude of the response variable rather than the conditional mean. "Figure (A): Quantile regression Quantile regression is an estimate. A modeling method for the linear relationship between a set of regressors X and the quantiles of the explained variables Y. The existing regression model is actually a method to study the relationship between the explained variable and the explanatory variable. They focus on the relationship between explanatory variables and explained variables

The difference between nodejs and vuejs

Apr 21, 2024 am 04:17 AM

The difference between nodejs and vuejs

Apr 21, 2024 am 04:17 AM

Node.js is a server-side JavaScript runtime, while Vue.js is a client-side JavaScript framework for creating interactive user interfaces. Node.js is used for server-side development, such as back-end service API development and data processing, while Vue.js is used for client-side development, such as single-page applications and responsive user interfaces.

Time Series Forecasting NLP Large Model New Work: Automatically Generate Implicit Prompts for Time Series Forecasting

Mar 18, 2024 am 09:20 AM

Time Series Forecasting NLP Large Model New Work: Automatically Generate Implicit Prompts for Time Series Forecasting

Mar 18, 2024 am 09:20 AM

Today I would like to share a recent research work from the University of Connecticut that proposes a method to align time series data with large natural language processing (NLP) models on the latent space to improve the performance of time series forecasting. The key to this method is to use latent spatial hints (prompts) to enhance the accuracy of time series predictions. Paper title: S2IP-LLM: SemanticSpaceInformedPromptLearningwithLLMforTimeSeriesForecasting Download address: https://arxiv.org/pdf/2403.05798v1.pdf 1. Large problem background model

Where is the database created by mongodb?

Apr 07, 2024 pm 05:39 PM

Where is the database created by mongodb?

Apr 07, 2024 pm 05:39 PM

The data of the MongoDB database is stored in the specified data directory, which can be located in the local file system, network file system or cloud storage. The specific location is as follows: Local file system: The default path is Linux/macOS:/data/db, Windows: C:\data\db. Network file system: The path depends on the file system. Cloud Storage: The path is determined by the cloud storage provider.

What are the advantages of mongodb database

Apr 07, 2024 pm 05:21 PM

What are the advantages of mongodb database

Apr 07, 2024 pm 05:21 PM

The MongoDB database is known for its flexibility, scalability, and high performance. Its advantages include: a document data model that allows data to be stored in a flexible and unstructured way. Horizontal scalability to multiple servers via sharding. Query flexibility, supporting complex queries and aggregation operations. Data replication and fault tolerance ensure data redundancy and high availability. JSON support for easy integration with front-end applications. High performance for fast response even when processing large amounts of data. Open source, customizable and free to use.

What does mongodb mean?

Apr 07, 2024 pm 05:57 PM

What does mongodb mean?

Apr 07, 2024 pm 05:57 PM

MongoDB is a document-oriented, distributed database system used to store and manage large amounts of structured and unstructured data. Its core concepts include document storage and distribution, and its main features include dynamic schema, indexing, aggregation, map-reduce and replication. It is widely used in content management systems, e-commerce platforms, social media websites, IoT applications, and mobile application development.

Where are the mongodb database files?

Apr 07, 2024 pm 05:42 PM

Where are the mongodb database files?

Apr 07, 2024 pm 05:42 PM

The MongoDB database file is located in the MongoDB data directory, which is /data/db by default, which contains .bson (document data), ns (collection information), journal (write operation records), wiredTiger (data when using the WiredTiger storage engine ) and config (database configuration information) and other files.