How to use Apache Hadoop for distributed computing and data storage in PHP development

As the Internet and the volume of data keep growing, a single machine can no longer compute over or store large data sets. Distributed computing and distributed storage address this problem. Apache Hadoop, an open source distributed computing framework, is a common choice for big data processing projects.

How can Apache Hadoop be used for distributed computing and data storage in PHP development? This article covers three aspects: installation, configuration, and practice.

1. Installation

Installing Apache Hadoop requires the following steps:

- Download the binary file package of Apache Hadoop

You can download the latest version from the official Apache Hadoop website (http://hadoop.apache.org/releases.html).

- Install Java

Apache Hadoop is written in Java, so Java must be installed before Hadoop can run.

- Configure environment variables

After installing Java and Hadoop, configure the environment variables. On Windows, add the bin directories of Java and Hadoop to the system PATH variable. On Linux, add the same bin directories to PATH in .bashrc or .bash_profile.
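A quick way to confirm the variables are visible to PHP is a small check script. The sketch below assumes the conventional variable names JAVA_HOME and HADOOP_HOME and that each bin directory appears on PATH exactly as written; checkHadoopEnv() is a hypothetical helper, not part of PHP or Hadoop.

```php
<?php
// Minimal sketch: report which Hadoop-related environment settings are missing.
// checkHadoopEnv() is a hypothetical helper; JAVA_HOME and HADOOP_HOME are
// the conventional variable names and are assumed here.
function checkHadoopEnv(array $env): array
{
    $problems = [];

    // Both home variables should be set.
    foreach (['JAVA_HOME', 'HADOOP_HOME'] as $name) {
        if (empty($env[$name])) {
            $problems[] = "$name is not set";
        }
    }

    // PATH should contain the bin directory of each installation.
    $pathEntries = explode(PATH_SEPARATOR, $env['PATH'] ?? '');
    foreach (['JAVA_HOME', 'HADOOP_HOME'] as $name) {
        if (empty($env[$name])) {
            continue;
        }
        $bin = rtrim($env[$name], '/\\') . DIRECTORY_SEPARATOR . 'bin';
        if (!in_array($bin, $pathEntries, true)) {
            $problems[] = "$bin is not on PATH";
        }
    }

    return $problems;
}

// Check the environment of the current PHP process.
$problems = checkHadoopEnv(getenv());
echo $problems ? implode(PHP_EOL, $problems) . PHP_EOL : 'Environment looks fine' . PHP_EOL;
```

Run it from the same shell (or web server user) that will later call Hadoop, since each process sees its own environment.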

2. Configuration

After installation, Hadoop needs some configuration before it can be used. The most important configuration files are:

- core-site.xml

Configuration file path: $HADOOP_HOME/etc/hadoop/core-site.xml

In this file, you need to define the default file system URI of HDFS and the storage path of temporary files generated when Hadoop is running.

Sample configuration (for reference only):

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/usr/local/hadoop/tmp</value>
  </property>
</configuration>

- hdfs-site.xml

Configuration file path: $HADOOP_HOME/etc/hadoop/hdfs-site.xml

In this file, you define HDFS settings such as the number of replicas kept for each block and the block size.
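To get a feel for what these two settings mean, the sketch below estimates how many blocks a file is split into and how much raw disk space its replicas take. hdfsFootprint() is a hypothetical helper written for this illustration, and the 1000 MB file size is an arbitrary example value.

```php
<?php
// Hypothetical helper: estimate how HDFS lays out a single file.
function hdfsFootprint(int $fileBytes, int $blockBytes, int $replication): array
{
    // A file occupies ceil(size / blockSize) blocks; the last block may be partial.
    $blocks = intdiv($fileBytes + $blockBytes - 1, $blockBytes);

    return [
        'blocks'         => $blocks,                   // distinct blocks of the file
        'block_replicas' => $blocks * $replication,    // block copies stored in the cluster
        'raw_bytes'      => $fileBytes * $replication, // disk space used across all replicas
    ];
}

$mb = 1024 * 1024;

// A 1000 MB file with a 128 MB block size and 3 replicas.
$result = hdfsFootprint(1000 * $mb, 128 * $mb, 3);
printf(
    "%d blocks, %d block replicas, %d MB of raw storage\n",
    $result['blocks'],
    $result['block_replicas'],
    intdiv($result['raw_bytes'], $mb)
);
```

With these numbers the file is split into 8 blocks, stored as 24 block replicas, and takes 3000 MB of raw storage.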

Sample configuration (for reference only):

<configuration>
  <property>
    <name>dfs.replication</name>
    <value>3</value>
  </property>
  <property>
    <name>dfs.blocksize</name>
    <value>128M</value>
  </property>
</configuration>

- yarn-site.xml

Configuration file path: $HADOOP_HOME/etc/hadoop/yarn-site.xml

In this file, you define YARN-related settings, such as the ResourceManager address and the memory and CPU cores that each NodeManager can allocate.

Sample configuration (for reference only):

<configuration>
  <property>
    <name>yarn.resourcemanager.address</name>
    <value>localhost:8032</value>
  </property>
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>8192</value>
  </property>
  <property>
    <name>yarn.nodemanager.resource.cpu-vcores</name>
    <value>4</value>
  </property>
</configuration>

- mapred-site.xml

Configuration file path: $HADOOP_HOME/etc/hadoop/mapred-site.xml

This file holds the settings of the MapReduce framework, such as the runtime that executes the jobs.

Sample configuration (for reference only):

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>yarn.app.mapreduce.am.env</name>
    <value>HADOOP_MAPRED_HOME=/usr/local/hadoop</value>
  </property>
</configuration>

3. Practice

After completing the installation and configuration above, you can start using Apache Hadoop for distributed computing and data storage in PHP development.
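Before moving on, it can help to read the configuration back from PHP and confirm the values Hadoop will actually see. The sketch below parses a *-site.xml document with PHP's SimpleXML extension; parseHadoopConfig() is a hypothetical helper, and the embedded XML simply repeats the core-site.xml sample from section 2.

```php
<?php
// Hypothetical helper: turn a Hadoop *-site.xml document into a name => value array.
function parseHadoopConfig(string $xml): array
{
    $doc = simplexml_load_string($xml);
    if ($doc === false) {
        throw new InvalidArgumentException('Not a well-formed XML document');
    }

    $settings = [];
    // Each <property> element carries one <name>/<value> pair.
    foreach ($doc->property as $property) {
        $settings[trim((string) $property->name)] = trim((string) $property->value);
    }

    return $settings;
}

$xml = <<<XML
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/usr/local/hadoop/tmp</value>
  </property>
</configuration>
XML;

$settings = parseHadoopConfig($xml);
echo $settings['fs.defaultFS'], PHP_EOL;
```

To check a real installation, pass the contents of the file instead, for example file_get_contents(getenv('HADOOP_HOME') . '/etc/hadoop/core-site.xml').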

- Storing Data

In Hadoop, data is stored in HDFS. From PHP, you can operate HDFS through an Hdfs client class (https://github.com/vladko/Hdfs).
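Where a client library is not available, HDFS can also be reached over WebHDFS, the REST API that ships with Hadoop. This is a different technique from the client class used in this article. The sketch below only builds the request URL; webHdfsUrl() is a hypothetical helper, and the port 9870 (the default NameNode HTTP port in Hadoop 3.x) and the directory path are assumptions to adapt to your cluster.

```php
<?php
// Hypothetical helper: build a WebHDFS REST URL for an HDFS path and operation.
function webHdfsUrl(string $host, int $port, string $path, string $op, array $params = []): string
{
    // Encode each path segment separately so the slashes survive.
    $segments = array_map('rawurlencode', explode('/', ltrim($path, '/')));
    $query = http_build_query(['op' => $op] + $params, '', '&');

    return sprintf('http://%s:%d/webhdfs/v1/%s?%s', $host, $port, implode('/', $segments), $query);
}

// 9870 is assumed here as the NameNode HTTP port; adjust it for your cluster.
$url = webHdfsUrl('localhost', 9870, '/path/to/hdfs/dir', 'LISTSTATUS');
echo $url, PHP_EOL;

// Fetching the listing needs a running cluster, so this part is left commented out:
// $json = file_get_contents($url);
// $entries = json_decode($json, true)['FileStatuses']['FileStatus'];
```

Keeping URL construction in one small function makes it easy to add further operations, such as OPEN or GETFILESTATUS, without repeating the encoding logic.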

Sample code:

<?php
require_once '/path/to/hdfs/vendor/autoload.php';

// The namespace separators are assumed from the class name; adjust them to the installed library.
use Aliyun\Hdfs\HdfsClient;

$client = new HdfsClient(['host' => 'localhost', 'port' => 9000]);

// Upload a local file to HDFS
$client->copyFromLocal('/path/to/local/file', '/path/to/hdfs/file');

// Download an HDFS file to the local file system
$client->copyToLocal('/path/to/hdfs/file', '/path/to/local/file');

- Distributed computing

Hadoop usually uses the MapReduce model for distributed computing. In PHP, MapReduce jobs can be implemented with the HadoopStreaming class (https://github.com/andreas-glaser/php-hadoop-streaming), a PHP library.

Sample code:

(Note: The following code simulates the operation of word counting in Hadoop.)

Mapper PHP code:

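#!/usr/bin/php
<?php
// Read the input line by line from standard input.
while (($line = fgets(STDIN)) !== false) {
    // Lowercase the line and split it on whitespace, dropping empty tokens.
    $words = preg_split('/\s+/', strtolower(trim($line)), -1, PREG_SPLIT_NO_EMPTY);
    foreach ($words as $word) {
        // Emit each word as "word<TAB>1"; tab is the default key/value separator in Hadoop Streaming.
        echo $word, "\t1\n";
    }
}

Reducer PHP code:

#!/usr/bin/php
<?php
$counts = [];
while (($line = fgets(STDIN)) !== false) {
    // Skip blank or malformed lines instead of failing on them.
    $parts = explode("\t", trim($line));
    if (count($parts) !== 2) {
        continue;
    }
    [$word, $count] = $parts;
    // Add the count to the running total for this word.
    $counts[$word] = ($counts[$word] ?? 0) + (int) $count;
}
// Output the result
foreach ($counts as $word => $count) {
    echo "$word: $count\n";
}

Execution command: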

$ cat input.txt | ./mapper.php | sort | ./reducer.php

This command pipes input.txt into mapper.php, sorts the mapper output so that identical words are adjacent, and pipes the sorted lines into reducer.php, which prints the number of occurrences of each word. The pipeline runs entirely on the local machine, which makes it a convenient way to test the scripts before submitting them to Hadoop.
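The same map, sort, and reduce flow can also be reproduced inside a single PHP script, which is handy for unit testing the logic without a shell. This is only a sketch: mapLine() and reducePairs() are hypothetical helpers written for this illustration, and the two input lines are made-up sample data.

```php
<?php
// Map step: one [word, 1] pair per word, as mapper.php would emit.
function mapLine(string $line): array
{
    $words = preg_split('/\s+/', strtolower(trim($line)), -1, PREG_SPLIT_NO_EMPTY);
    return array_map(fn (string $word): array => [$word, 1], $words);
}

// Reduce step: sum the counts of each word, as reducer.php would.
function reducePairs(array $pairs): array
{
    $counts = [];
    foreach ($pairs as [$word, $count]) {
        $counts[$word] = ($counts[$word] ?? 0) + $count;
    }
    return $counts;
}

$lines = ['Hello Hadoop', 'hello PHP'];

// Map every line, then flatten the per-line results into one list of pairs.
$pairs = array_merge(...array_map('mapLine', $lines));

// Sort step: order the pairs by key, like `sort` in the shell pipeline.
usort($pairs, fn (array $a, array $b): int => strcmp($a[0], $b[0]));

foreach (reducePairs($pairs) as $word => $count) {
    echo "$word: $count", PHP_EOL;
}
```

Keeping the map and reduce steps as plain functions means the same logic can be called from the streaming scripts and from tests.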

The HadoopStreaming class implements the basic logic of the MapReduce model: it converts the input into key-value pairs, calls the map function to produce new key-value pairs, and calls the reduce function to merge them.

Sample code:

<?php
require_once '/path/to/hadoop/vendor/autoload.php';

// The namespace separators are assumed from the class names; adjust them to the installed library.
use HadoopStreaming\Tokenizer\TokenizerMapper;
use HadoopStreaming\Count\CountReducer;
use HadoopStreaming\HadoopStreaming;

$hadoop = new HadoopStreaming();
$hadoop->setMapper(new TokenizerMapper());
$hadoop->setReducer(new CountReducer());
$hadoop->run();

The Hadoop ecosystem also includes many other tools, such as HBase, Hive, and Pig. Choose among them according to the needs of your application.

Summary:

This article introduces how to use Apache Hadoop for distributed computing and data storage in PHP development. It first describes the installation and configuration of Apache Hadoop, then shows how to operate HDFS from PHP to store data, and finally uses the HadoopStreaming class as an example of implementing MapReduce distributed computing in PHP.

The above is the detailed content of How to use Apache Hadoop for distributed computing and data storage in PHP development. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

CakePHP Project Configuration

Sep 10, 2024 pm 05:25 PM

CakePHP Project Configuration

Sep 10, 2024 pm 05:25 PM

In this chapter, we will understand the Environment Variables, General Configuration, Database Configuration and Email Configuration in CakePHP.

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 brings several new features, security improvements, and performance improvements with healthy amounts of feature deprecations and removals. This guide explains how to install PHP 8.4 or upgrade to PHP 8.4 on Ubuntu, Debian, or their derivati

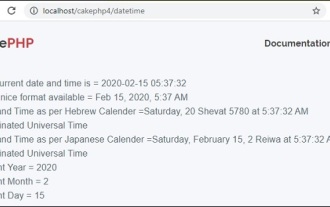

CakePHP Date and Time

Sep 10, 2024 pm 05:27 PM

CakePHP Date and Time

Sep 10, 2024 pm 05:27 PM

To work with date and time in cakephp4, we are going to make use of the available FrozenTime class.

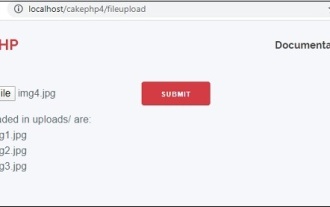

CakePHP File upload

Sep 10, 2024 pm 05:27 PM

CakePHP File upload

Sep 10, 2024 pm 05:27 PM

To work on file upload we are going to use the form helper. Here, is an example for file upload.

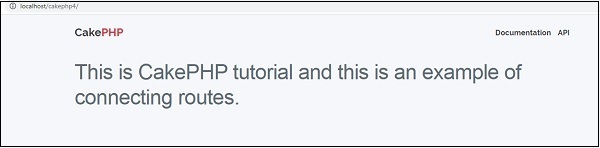

CakePHP Routing

Sep 10, 2024 pm 05:25 PM

CakePHP Routing

Sep 10, 2024 pm 05:25 PM

In this chapter, we are going to learn the following topics related to routing ?

Discuss CakePHP

Sep 10, 2024 pm 05:28 PM

Discuss CakePHP

Sep 10, 2024 pm 05:28 PM

CakePHP is an open-source framework for PHP. It is intended to make developing, deploying and maintaining applications much easier. CakePHP is based on a MVC-like architecture that is both powerful and easy to grasp. Models, Views, and Controllers gu

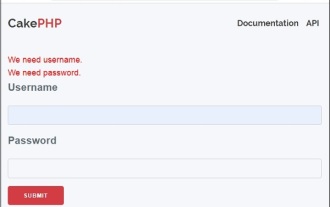

CakePHP Creating Validators

Sep 10, 2024 pm 05:26 PM

CakePHP Creating Validators

Sep 10, 2024 pm 05:26 PM

Validator can be created by adding the following two lines in the controller.

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

Visual Studio Code, also known as VS Code, is a free source code editor — or integrated development environment (IDE) — available for all major operating systems. With a large collection of extensions for many programming languages, VS Code can be c