The 'golden partner' of large models is here! Tencent Cloud officially releases an AI-native vector database with billion-scale vector retrieval

On July 4, Tencent Cloud officially released Tencent Cloud VectorDB, an AI-native (AI Native) vector database. It can be used in scenarios such as large model training, inference, and knowledge base supplementation, and it is the first vector database in China to provide AI capabilities across the full life cycle, from the access layer through the computing layer to the storage layer.

Vector databases, known in the industry as the "hippocampus" of large models, are designed specifically to store and query vector data. According to reports, Tencent Cloud's vector database supports retrieval over up to 1 billion vectors with millisecond-level latency. Compared with traditional stand-alone plug-in databases, this is a 10-fold increase in retrieval scale, and it also supports peak throughput on the order of one million queries per second (QPS).
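To make "querying vector data" concrete: a query is itself a vector, and the database returns the stored vectors most similar to it. The Python sketch below shows the idea with an exhaustive scan, assuming cosine similarity as the metric; the function names `cosine` and `top_k` and the toy `store` are hypothetical illustrations, not Tencent Cloud VectorDB's API. At the billion-vector scale described above, systems generally rely on approximate nearest-neighbor indexes rather than scoring every stored vector.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], vectors: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Score every stored vector against the query and return the k most similar ids."""
    scored = [(vid, cosine(query, vec)) for vid, vec in vectors.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical toy data: three stored "document" vectors.
store = {
    "doc-a": [1.0, 0.0, 0.0],
    "doc-b": [0.9, 0.1, 0.0],
    "doc-c": [0.0, 1.0, 0.0],
}
print(top_k([1.0, 0.0, 0.0], store, k=2))
```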

Tencent Cloud defines the AI Native vector database

With the advent of the large model era, embracing large models has become a necessity for enterprises.

By storing data as vectors, vector databases can significantly improve efficiency and reduce costs. They help address several problems of large models: high pre-training costs, the lack of "long-term memory", infrequent knowledge updates, and complex prompt engineering. In doing so, they overcome the time and space limitations of large models and accelerate their adoption in industry scenarios.

Statistics show that using Tencent Cloud Vector Database to classify, deduplicate, and clean large model pre-training data can be 10 times more efficient than traditional methods. When the vector database is used as an external knowledge base for model inference, costs can be reduced by 2 to 4 orders of magnitude.
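Deduplication with vectors typically works by treating items whose embeddings are nearly identical as duplicates. The following is a minimal greedy sketch, assuming each sample has already been embedded and that a cosine similarity at or above a chosen threshold (0.95 here, an arbitrary illustrative value) marks a near-duplicate; `dedupe` is a hypothetical helper, not the method Tencent Cloud uses.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def dedupe(items: list[tuple[str, list[float]]], threshold: float = 0.95) -> list[str]:
    """Keep an item only if its similarity to every already-kept item is below `threshold`."""
    kept: list[tuple[str, list[float]]] = []
    for item_id, vec in items:
        if all(cosine(vec, kept_vec) < threshold for _, kept_vec in kept):
            kept.append((item_id, vec))
    return [item_id for item_id, _ in kept]


# Hypothetical pre-embedded samples.
samples = [
    ("s1", [1.0, 0.0]),
    ("s2", [0.99, 0.01]),  # nearly the same direction as s1
    ("s3", [0.0, 1.0]),
]
print(dedupe(samples))
```

The greedy loop compares each item against everything kept so far, which is quadratic in the number of items; at pre-training scale, those comparisons would instead go through a vector index's nearest-neighbor search.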

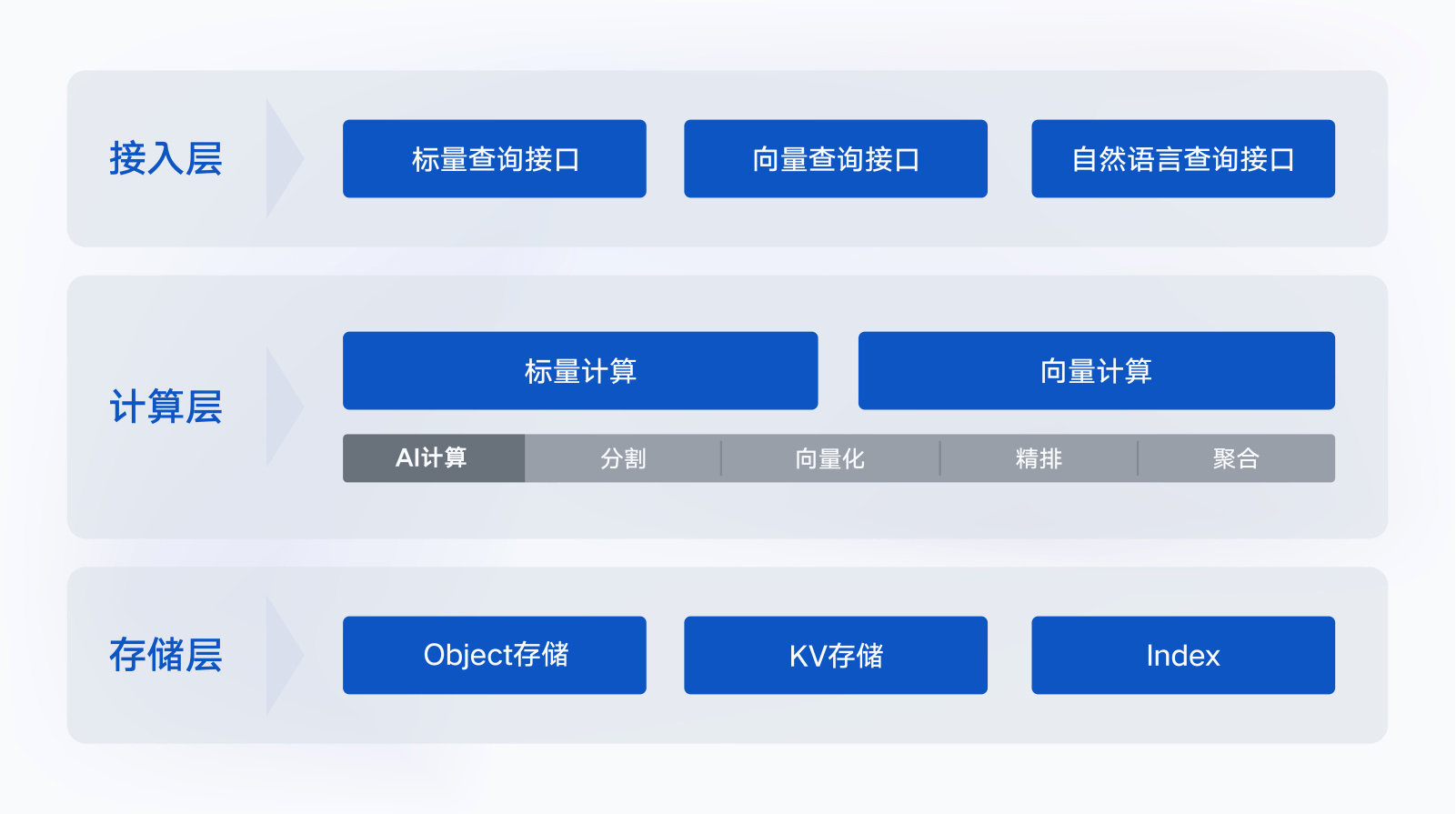

Notably, Tencent Cloud has redefined the AI Native development paradigm, providing a comprehensive AI solution across the access, computing, and storage layers so that users can apply AI capabilities throughout the entire life cycle of working with a vector database.

Specifically, at the access layer, Tencent Cloud Vector Database accepts natural-language text as input, uses a combined "scalar + vector" query method, supports fully in-memory indexing, and handles up to one million queries per second (QPS). At the computing layer, the AI Native development paradigm enables AI computation over the full volume of data and provides a one-stop solution to problems such as text segmentation and vectorization (embedding) that enterprises face when building private-domain knowledge bases. At the storage layer, Tencent Cloud Vector Database intelligently distributes data across storage, helping enterprises cut storage costs by 50%.
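The access- and computing-layer steps above (segmenting text, embedding the segments, and combining a scalar filter with vector similarity) can be sketched end to end in Python. This is a toy under stated assumptions: `split_text` uses fixed-size character windows with overlap, `toy_embed` is a hypothetical hashed bag-of-words stand-in for a real embedding model, and `hybrid_search` is an illustrative filter-then-rank query, not Tencent Cloud VectorDB's actual interface.

```python
import math
import zlib


def split_text(text: str, chunk_size: int = 40, overlap: int = 10) -> list[str]:
    """Segment text into fixed-size character windows that overlap by `overlap` chars."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    # Start positions stop before len(text) - overlap; a later window would lie
    # entirely inside the previous one.
    return [text[i:i + chunk_size] for i in range(0, max(len(text) - overlap, 1), step)]


def toy_embed(text: str, dim: int = 64) -> list[float]:
    """Hypothetical stand-in for an embedding model: hashed bag of words, L2-normalized."""
    vec = [0.0] * dim
    for token in text.lower().split():
        vec[zlib.crc32(token.encode("utf-8")) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def hybrid_search(query: str, records: list[dict], filters: dict, k: int = 2) -> list[tuple[float, str]]:
    """Scalar pre-filter on metadata, then rank the survivors by vector similarity."""
    q = toy_embed(query)
    candidates = [r for r in records
                  if all(r["meta"].get(key) == value for key, value in filters.items())]
    # Non-empty embeddings are unit length, so the dot product equals cosine similarity.
    scored = [(sum(a * b for a, b in zip(q, r["vector"])), r["text"]) for r in candidates]
    scored.sort(key=lambda item: item[0], reverse=True)
    return scored[:k]


# Hypothetical private-domain documents with one scalar field ("dept").
docs = [
    {"text": "refund policy for premium members", "meta": {"dept": "billing"}},
    {"text": "how to reset your account password", "meta": {"dept": "support"}},
    {"text": "premium refund requests take three days", "meta": {"dept": "billing"}},
]
records = [
    {"text": chunk, "meta": doc["meta"], "vector": toy_embed(chunk)}
    for doc in docs
    for chunk in split_text(doc["text"])
]
print(hybrid_search("premium refund", records, {"dept": "billing"}))
```

Filtering on scalar metadata first shrinks the candidate set before any similarity scoring. Real segmenters also usually respect sentence or token boundaries rather than cutting at fixed character offsets.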

Connecting an enterprise to a large model used to take about a month; with Tencent Cloud Vector Database it can be done in 3 days, greatly reducing integration costs.

The vectorization (embedding) capability of Tencent Cloud Vector Database has reportedly been recognized by authoritative organizations many times. In 2021 it topped the MS MARCO leaderboard, and related results were published at ACL, a top NLP conference.

Luo Yun, deputy general manager of Tencent Cloud Database, said that the AI Native era has arrived: the vector database, large models, and data will together create a "flywheel effect" and jointly help enterprises enter the AI Native era.

Tencent Cloud Vector Database increases data access efficiency 10-fold

Tencent Cloud Vector Database is built on Tencent Group's vector engine, OLAMA, which handles hundreds of billions of searches every day. Proven across Tencent's massive internal workloads, it makes connecting data to AI 10 times more efficient than traditional solutions, with operational stability of up to 99.99%. It is already used in more than 30 nationally popular products, including Tencent Video, QQ Browser, and QQ Music.

Tencent Cloud Vector Database can effectively help products improve operational efficiency. Data show that after adopting it, average listening time per user on QQ Music rose by 3.2%, average effective exposure time per user on Tencent Video rose by 1.74%, and QQ Browser's costs fell by 37.9%.

Take Tencent Video as an example. Images, audio, title text, and other content in its video library are handled by Tencent Cloud Vector Database, with an average monthly retrieval and computation volume of up to 20 billion operations, meeting the needs of scenarios such as copyright protection, originality identification, and similarity retrieval.

Driven by large models, vector databases have entered a period of rapid development. According to a forecast by Northeast Securities, the global vector database market is expected to reach US$50 billion by 2030, and China's domestic vector database market is expected to exceed RMB 60 billion.

Vector databases can help enterprises use large models more efficiently and conveniently to maximize the value of data. With the continuous development and popularization of large models, AI Native vector databases will become the standard for enterprise data processing.

Big model app Tencent Yuanbao is online! Hunyuan is upgraded to create an all-round AI assistant that can be carried anywhere

Jun 09, 2024 pm 10:38 PM

Big model app Tencent Yuanbao is online! Hunyuan is upgraded to create an all-round AI assistant that can be carried anywhere

Jun 09, 2024 pm 10:38 PM

On May 30, Tencent announced a comprehensive upgrade of its Hunyuan model. The App "Tencent Yuanbao" based on the Hunyuan model was officially launched and can be downloaded from Apple and Android app stores. Compared with the Hunyuan applet version in the previous testing stage, Tencent Yuanbao provides core capabilities such as AI search, AI summary, and AI writing for work efficiency scenarios; for daily life scenarios, Yuanbao's gameplay is also richer and provides multiple features. AI application, and new gameplay methods such as creating personal agents are added. "Tencent does not strive to be the first to make large models." Liu Yuhong, vice president of Tencent Cloud and head of Tencent Hunyuan large model, said: "In the past year, we continued to promote the capabilities of Tencent Hunyuan large model. In the rich and massive Polish technology in business scenarios while gaining insights into users’ real needs

Bytedance Beanbao large model released, Volcano Engine full-stack AI service helps enterprises intelligently transform

Jun 05, 2024 pm 07:59 PM

Bytedance Beanbao large model released, Volcano Engine full-stack AI service helps enterprises intelligently transform

Jun 05, 2024 pm 07:59 PM

Tan Dai, President of Volcano Engine, said that companies that want to implement large models well face three key challenges: model effectiveness, inference costs, and implementation difficulty: they must have good basic large models as support to solve complex problems, and they must also have low-cost inference. Services allow large models to be widely used, and more tools, platforms and applications are needed to help companies implement scenarios. ——Tan Dai, President of Huoshan Engine 01. The large bean bag model makes its debut and is heavily used. Polishing the model effect is the most critical challenge for the implementation of AI. Tan Dai pointed out that only through extensive use can a good model be polished. Currently, the Doubao model processes 120 billion tokens of text and generates 30 million images every day. In order to help enterprises implement large-scale model scenarios, the beanbao large-scale model independently developed by ByteDance will be launched through the volcano

Using Shengteng AI technology, the Qinling·Qinchuan transportation model helps Xi'an build a smart transportation innovation center

Oct 15, 2023 am 08:17 AM

Using Shengteng AI technology, the Qinling·Qinchuan transportation model helps Xi'an build a smart transportation innovation center

Oct 15, 2023 am 08:17 AM

"High complexity, high fragmentation, and cross-domain" have always been the primary pain points on the road to digital and intelligent upgrading of the transportation industry. Recently, the "Qinling·Qinchuan Traffic Model" with a parameter scale of 100 billion, jointly built by China Vision, Xi'an Yanta District Government, and Xi'an Future Artificial Intelligence Computing Center, is oriented to the field of smart transportation and provides services to Xi'an and its surrounding areas. The region will create a fulcrum for smart transportation innovation. The "Qinling·Qinchuan Traffic Model" combines Xi'an's massive local traffic ecological data in open scenarios, the original advanced algorithm self-developed by China Science Vision, and the powerful computing power of Shengteng AI of Xi'an Future Artificial Intelligence Computing Center to provide road network monitoring, Smart transportation scenarios such as emergency command, maintenance management, and public travel bring about digital and intelligent changes. Traffic management has different characteristics in different cities, and the traffic on different roads

Uncovering the NVIDIA large model inference framework: TensorRT-LLM

Feb 01, 2024 pm 05:24 PM

Uncovering the NVIDIA large model inference framework: TensorRT-LLM

Feb 01, 2024 pm 05:24 PM

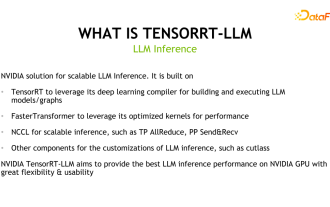

1. Product positioning of TensorRT-LLM TensorRT-LLM is a scalable inference solution developed by NVIDIA for large language models (LLM). It builds, compiles and executes calculation graphs based on the TensorRT deep learning compilation framework, and draws on the efficient Kernels implementation in FastTransformer. In addition, it utilizes NCCL for communication between devices. Developers can customize operators to meet specific needs based on technology development and demand differences, such as developing customized GEMM based on cutlass. TensorRT-LLM is NVIDIA's official inference solution, committed to providing high performance and continuously improving its practicality. TensorRT-LL

Benchmark GPT-4! China Mobile's Jiutian large model passed dual registration

Apr 04, 2024 am 09:31 AM

Benchmark GPT-4! China Mobile's Jiutian large model passed dual registration

Apr 04, 2024 am 09:31 AM

According to news on April 4, the Cyberspace Administration of China recently released a list of registered large models, and China Mobile’s “Jiutian Natural Language Interaction Large Model” was included in it, marking that China Mobile’s Jiutian AI large model can officially provide generative artificial intelligence services to the outside world. . China Mobile stated that this is the first large-scale model developed by a central enterprise to have passed both the national "Generative Artificial Intelligence Service Registration" and the "Domestic Deep Synthetic Service Algorithm Registration" dual registrations. According to reports, Jiutian’s natural language interaction large model has the characteristics of enhanced industry capabilities, security and credibility, and supports full-stack localization. It has formed various parameter versions such as 9 billion, 13.9 billion, 57 billion, and 100 billion, and can be flexibly deployed in Cloud, edge and end are different situations

Advanced practice of industrial knowledge graph

Jun 13, 2024 am 11:59 AM

Advanced practice of industrial knowledge graph

Jun 13, 2024 am 11:59 AM

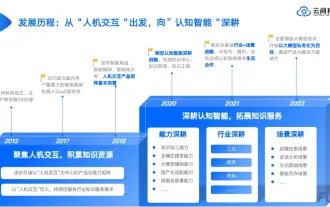

1. Background Introduction First, let’s introduce the development history of Yunwen Technology. Yunwen Technology Company...2023 is the period when large models are prevalent. Many companies believe that the importance of graphs has been greatly reduced after large models, and the preset information systems studied previously are no longer important. However, with the promotion of RAG and the prevalence of data governance, we have found that more efficient data governance and high-quality data are important prerequisites for improving the effectiveness of privatized large models. Therefore, more and more companies are beginning to pay attention to knowledge construction related content. This also promotes the construction and processing of knowledge to a higher level, where there are many techniques and methods that can be explored. It can be seen that the emergence of a new technology does not necessarily defeat all old technologies. It is also possible that the new technology and the old technology will be integrated with each other.

New test benchmark released, the most powerful open source Llama 3 is embarrassed

Apr 23, 2024 pm 12:13 PM

New test benchmark released, the most powerful open source Llama 3 is embarrassed

Apr 23, 2024 pm 12:13 PM

If the test questions are too simple, both top students and poor students can get 90 points, and the gap cannot be widened... With the release of stronger models such as Claude3, Llama3 and even GPT-5 later, the industry is in urgent need of a more difficult and differentiated model Benchmarks. LMSYS, the organization behind the large model arena, launched the next generation benchmark, Arena-Hard, which attracted widespread attention. There is also the latest reference for the strength of the two fine-tuned versions of Llama3 instructions. Compared with MTBench, which had similar scores before, the Arena-Hard discrimination increased from 22.6% to 87.4%, which is stronger and weaker at a glance. Arena-Hard is built using real-time human data from the arena and has a consistency rate of 89.1% with human preferences.

Xiaomi Byte joins forces! A large model of Xiao Ai's access to Doubao: already installed on mobile phones and SU7

Jun 13, 2024 pm 05:11 PM

Xiaomi Byte joins forces! A large model of Xiao Ai's access to Doubao: already installed on mobile phones and SU7

Jun 13, 2024 pm 05:11 PM

According to news on June 13, according to Byte's "Volcano Engine" public account, Xiaomi's artificial intelligence assistant "Xiao Ai" has reached a cooperation with Volcano Engine. The two parties will achieve a more intelligent AI interactive experience based on the beanbao large model. It is reported that the large-scale beanbao model created by ByteDance can efficiently process up to 120 billion text tokens and generate 30 million pieces of content every day. Xiaomi used the beanbao large model to improve the learning and reasoning capabilities of its own model and create a new "Xiao Ai Classmate", which not only more accurately grasps user needs, but also provides faster response speed and more comprehensive content services. For example, when a user asks about a complex scientific concept, &ldq