How to use PHP and phpSpider to automatically crawl web content at regular intervals?

As the web has grown, collecting and processing web content has become increasingly important. Often we need to fetch the content of specific web pages automatically, at regular intervals, for later analysis and processing. This article shows how to do that with PHP and phpSpider, and provides code examples.

- What is phpSpider?

phpSpider is a lightweight crawler framework based on PHP that helps us crawl web content quickly. With phpSpider, you can not only fetch the HTML source of a web page but also parse the data and process it accordingly.

- Install phpSpider

First, we need to install phpSpider into the PHP project with Composer. Run the following command in a terminal from the project directory:

composer require phpspider/phpspider

- Create a simple scheduled task

Next, we will create a simple scheduled task that automatically crawls the content of a specified web page at set times.

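First, create a file named spider.php and load Composer's autoloader at the top of the file. Building the path from __DIR__ keeps it valid even when the script is started from a different working directory, which is what happens when cron runs it.

    <?php
    require_once __DIR__ . '/vendor/autoload.php';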

Next, we define a class that inherits from phpSpiderSpider and implements our scheduled task.

    class MySpider extends phpSpiderSpider
    {
        // URL to crawl
        public $start_url = 'https://example.com';

        // Runs before the page is downloaded
        public function beforeDownloadPage($page)
        {
            // Do any preprocessing here, such as setting request headers
            return $page;
        }

        // Runs after the page has been downloaded successfully
        public function handlePage($page)
        {
            // Process the downloaded content here, for example by extracting data
            $html = $page['raw'];
            // Process the downloaded page content
            // ...
        }
    }

    // Create a spider object
    $spider = new MySpider();

    // Start the spider
    $spider->start();

The code above works as follows:

- First, we create a class MySpider that inherits from phpSpiderSpider. In this class, we define the URL to crawl in $start_url.

- In the beforeDownloadPage method, we can perform preprocessing operations such as setting request headers. The result returned by this method is passed to the handlePage method as the content of the web page.

- In the handlePage method, we can process the downloaded page content, for example by extracting data.
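To make the "extracting data" step more concrete, here is a minimal sketch of what the body of handlePage might do with the raw HTML. It uses only PHP's bundled DOM extension, not phpSpider itself; the function name extractPageData and the sample HTML are assumptions made for illustration.

```php
<?php
// Hypothetical helper: extractPageData() is not part of phpSpider.
// It relies only on PHP's bundled DOM extension.

/**
 * Return the page title and all link targets found in an HTML string.
 *
 * @return array{title: string, links: string[]}
 */
function extractPageData(string $html): array
{
    // DOMDocument::loadHTML() rejects an empty string, so handle it up front.
    if (trim($html) === '') {
        return ['title' => '', 'links' => []];
    }

    $doc = new DOMDocument();

    // Real-world HTML is rarely valid; collect parser warnings instead of printing them.
    $previous = libxml_use_internal_errors(true);
    $doc->loadHTML($html);
    libxml_clear_errors();
    libxml_use_internal_errors($previous);

    $xpath = new DOMXPath($doc);

    // string(...) evaluates to '' when the document has no <title> element.
    $title = trim((string) $xpath->evaluate('string(//title)'));

    // Collect the href attribute of every anchor that has one.
    $links = [];
    foreach ($xpath->query('//a[@href]') as $anchor) {
        $links[] = $anchor->getAttribute('href');
    }

    return ['title' => $title, 'links' => $links];
}

// Usage with an inline sample document instead of a downloaded page.
$sample = '<html><head><title>Example</title></head>'
        . '<body><a href="/a">A</a><a href="/b">B</a></body></html>';
$data = extractPageData($sample);
echo $data['title'], PHP_EOL;
echo implode(', ', $data['links']), PHP_EOL;
```

Inside handlePage, the same function could be called with the $html variable taken from $page['raw'].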

- Set scheduled tasks

To crawl web pages automatically on a schedule, we can use crontab, the scheduled task tool on Linux. Open a terminal and run the crontab -e command to open the scheduled task editor.

Add the following line in the editor:

* * * * * php /path/to/spider.php > /dev/null 2>&1

Here, /path/to/spider.php must be replaced with the full path to spider.php.

This entry runs the spider.php script every minute and redirects its output, including error messages, to /dev/null, which means nothing the script prints is saved.

Save and exit the editor, and the scheduled task is set up.
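Because this entry fires every minute, a crawl that takes longer than a minute could still be running when cron starts the next one. One common way to avoid overlapping runs is a non-blocking file lock at the top of spider.php. The sketch below is an illustration only: the function name runExclusively and the lock file location in the system temporary directory are assumptions, not part of phpSpider.

```php
<?php
// Hypothetical helper: runExclusively() and the lock-file path are illustrative.

/**
 * Run $job only if no other run currently holds the lock file.
 * Returns true if the job ran, false if another run was still active.
 */
function runExclusively(string $lockFile, callable $job): bool
{
    // Mode 'c' creates the file if needed without truncating it.
    $handle = fopen($lockFile, 'c');
    if ($handle === false) {
        throw new RuntimeException("Cannot open lock file: $lockFile");
    }

    // LOCK_NB makes flock() return immediately instead of waiting for the lock.
    if (!flock($handle, LOCK_EX | LOCK_NB)) {
        fclose($handle);
        return false;
    }

    try {
        $job();
        return true;
    } finally {
        // Release the lock even if the job throws.
        flock($handle, LOCK_UN);
        fclose($handle);
    }
}

$lockFile = sys_get_temp_dir() . '/spider.lock';
$ran = runExclusively($lockFile, function (): void {
    // The crawl itself would start here, e.g. $spider->start();
    echo 'crawl started', PHP_EOL;
});
if (!$ran) {
    echo 'previous run still active, skipping', PHP_EOL;
}
```

If the lock is already held, the script exits quietly and the next scheduled minute simply tries again.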

- Run scheduled tasks

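Once the entry has been saved through crontab -e, cron picks it up automatically and no separate start command is needed. Alternatively, if you wrote the entry to a file instead (named spider.cron here), install it with the following command. Note that this replaces the current user's entire crontab with the contents of that file:

crontab spider.cron

From then on, cron runs the spider.php script once every minute and crawls the content of the specified web page. You can confirm that the entry is installed with crontab -l.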
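Since the cron entry discards the script's output, each run needs to store its result somewhere if it is to be analyzed later. The following is a minimal sketch of saving every crawl to its own timestamped file. The function name saveSnapshot, the file naming scheme, and the target directory are assumptions chosen for illustration.

```php
<?php
// Hypothetical helper: saveSnapshot() and the directory layout are illustrative.

/**
 * Write one crawl result to a timestamped file and return its path.
 */
function saveSnapshot(string $dir, string $html, DateTimeImmutable $now): string
{
    // Create the target directory on first use.
    if (!is_dir($dir) && !mkdir($dir, 0775, true) && !is_dir($dir)) {
        throw new RuntimeException("Cannot create directory: $dir");
    }

    // One file per scheduled run, named after the time the run started.
    $path = sprintf('%s/page-%s.html', rtrim($dir, '/'), $now->format('Ymd-His'));

    if (file_put_contents($path, $html, LOCK_EX) === false) {
        throw new RuntimeException("Cannot write snapshot: $path");
    }

    return $path;
}

// Usage: in spider.php this could be called from handlePage with the downloaded HTML.
$path = saveSnapshot(
    sys_get_temp_dir() . '/spider-snapshots',
    '<html><body>sample</body></html>',
    new DateTimeImmutable()
);
echo "saved to $path", PHP_EOL;
```

Keeping one file per run makes it easy to compare how a page changes between scheduled crawls.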

This article has shown how to use PHP and phpSpider to crawl web content automatically at regular intervals. With a scheduled task, we can crawl and process web content regularly to meet practical needs, and phpSpider lets us parse the page content and carry out the corresponding processing and analysis.

I hope this article is helpful to you, and that you can use phpSpider to build more powerful web crawling applications!

The above is the detailed content of How to use PHP and phpSpider to automatically crawl web content at regular intervals?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

CakePHP Project Configuration

Sep 10, 2024 pm 05:25 PM

CakePHP Project Configuration

Sep 10, 2024 pm 05:25 PM

In this chapter, we will understand the Environment Variables, General Configuration, Database Configuration and Email Configuration in CakePHP.

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 brings several new features, security improvements, and performance improvements with healthy amounts of feature deprecations and removals. This guide explains how to install PHP 8.4 or upgrade to PHP 8.4 on Ubuntu, Debian, or their derivati

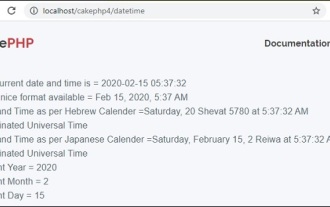

CakePHP Date and Time

Sep 10, 2024 pm 05:27 PM

CakePHP Date and Time

Sep 10, 2024 pm 05:27 PM

To work with date and time in cakephp4, we are going to make use of the available FrozenTime class.

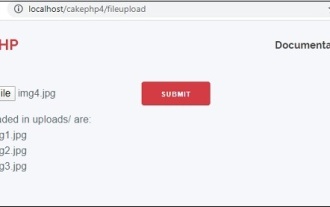

CakePHP File upload

Sep 10, 2024 pm 05:27 PM

CakePHP File upload

Sep 10, 2024 pm 05:27 PM

To work on file upload we are going to use the form helper. Here, is an example for file upload.

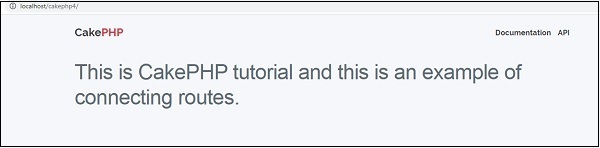

CakePHP Routing

Sep 10, 2024 pm 05:25 PM

CakePHP Routing

Sep 10, 2024 pm 05:25 PM

In this chapter, we are going to learn the following topics related to routing ?

Discuss CakePHP

Sep 10, 2024 pm 05:28 PM

Discuss CakePHP

Sep 10, 2024 pm 05:28 PM

CakePHP is an open-source framework for PHP. It is intended to make developing, deploying and maintaining applications much easier. CakePHP is based on a MVC-like architecture that is both powerful and easy to grasp. Models, Views, and Controllers gu

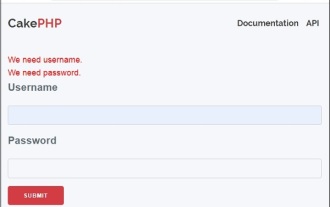

CakePHP Creating Validators

Sep 10, 2024 pm 05:26 PM

CakePHP Creating Validators

Sep 10, 2024 pm 05:26 PM

Validator can be created by adding the following two lines in the controller.

CakePHP Working with Database

Sep 10, 2024 pm 05:25 PM

CakePHP Working with Database

Sep 10, 2024 pm 05:25 PM

Working with database in CakePHP is very easy. We will understand the CRUD (Create, Read, Update, Delete) operations in this chapter.