The source code of Stanford's 'Virtual Town' and its 25 AI agents, reminiscent of 'Westworld', is now public


Audiences familiar with "Westworld" know that the show is set in a huge, high-tech adult theme park in a future world. The robots there behave much like humans and can remember what they see and hear while replaying the core storyline. Every day, the robots are reset and returned to their initial state.

Since the release of the Stanford paper "Generative Agents: Interactive Simulacra of Human Behavior", this scenario is no longer confined to film and television: AI has successfully reproduced it.

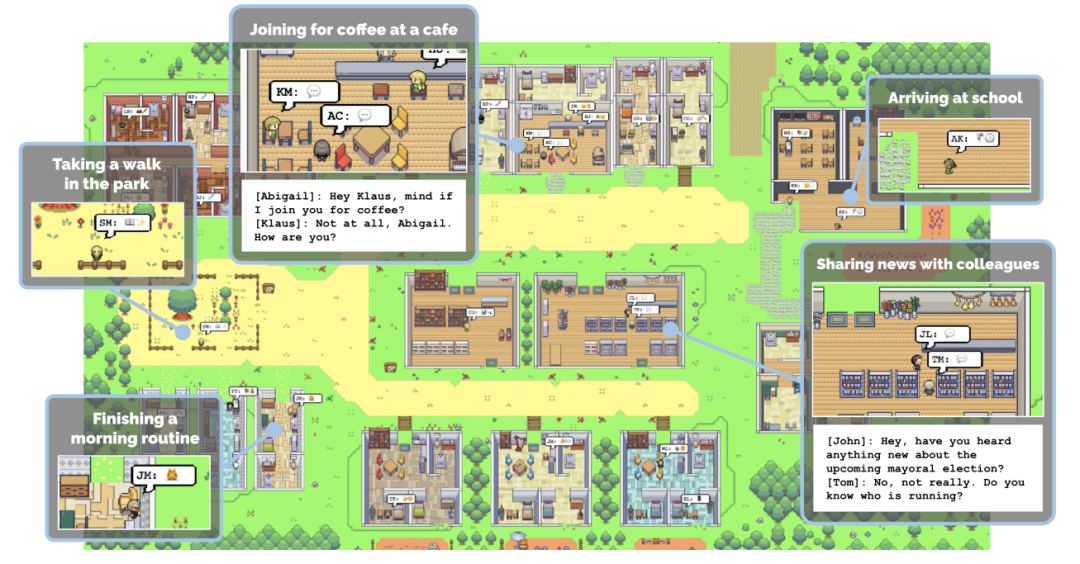

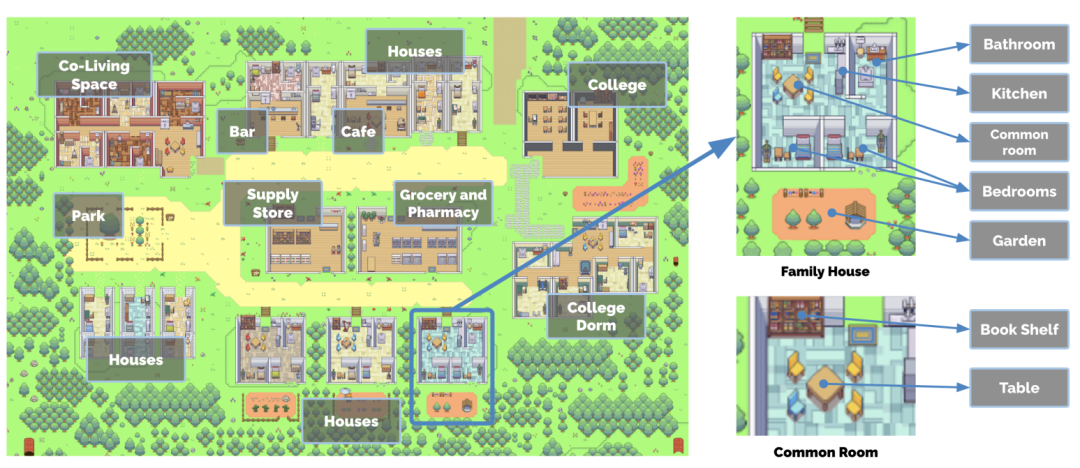

Overview of Smallville’s “virtual town”


- Paper address: https://arxiv.org/pdf/2304.03442v1.pdf

- Project address: https://github.com/joonspk-research/generative_agents

The researchers created a virtual town called Smallville that is home to 25 AI agents. The agents live in the town, hold jobs, exchange gossip, take part in social activities, make new friends, and even host a Valentine's Day party. Each resident has a unique personality and backstory.

To make the "town residents" more realistic, Smallville provides a number of public spaces, such as cafes, bars, parks, schools, dormitories, houses, and shops. Residents can move freely between these locations, interact with other residents, and even greet each other.

Locations that "town residents" can freely enter and leave

In what ways do the town's residents behave like humans? For example, when they see breakfast burning, they go over and turn off the stove; when they find someone in the bathroom, they wait outside; when they meet someone they want to talk to, they stop and chat.

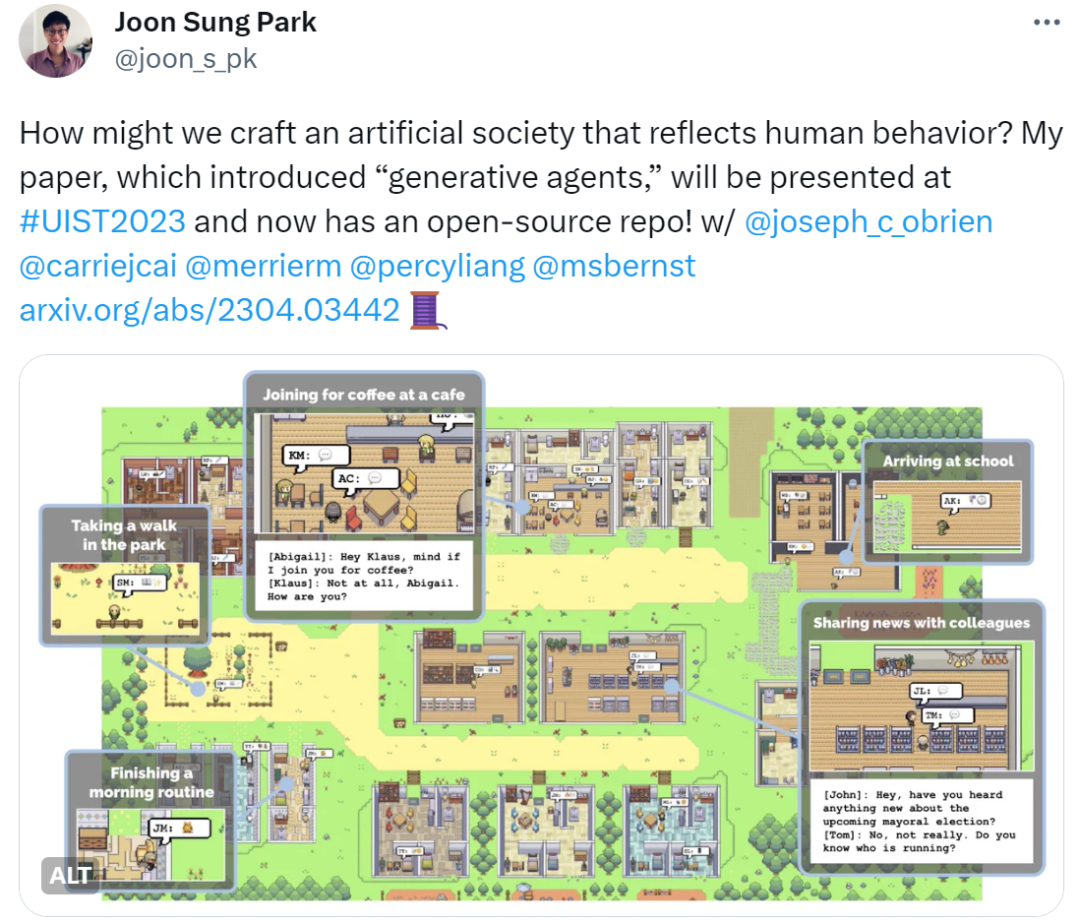

Unfortunately, the project was not open-sourced when the paper came out, and details were available only through the published paper. Now, some time later, the researchers have released the code. The news was confirmed by Joon Sung Park, a Stanford doctoral student and one of the paper's authors.

Now that the project is open source, it is expected to have a broad impact on the game industry, as many netizens have hoped. Future computer games may feature a virtual city in which every resident has an independent life, job, and hobbies, and players can interact with them realistically.

"I believe this research marks the beginning of AGI. Although we still have a lot of work to do, this is the right path. Finally, open source is here!"

Netizens also hope to apply this research to the video game "The Sims"

However, some people have expressed concerns. Building AI agents relies on large models, and LLMs are gradually being "tamed" by humans. As a result, they cannot fully reflect real human emotions and behaviors and tend to show only the behaviors that humans consider good, while anger, crime, inequality, jealousy, violence, and similar behaviors are largely suppressed. It is therefore difficult for AI agents to fully replicate real human life.

In any case, people remain enthusiastic about the open-sourcing of Smallville.

In addition to Stanford’s open-source Smallville “virtual town”, we would also like to list some other AI agents

The startup Fable used AI agents to create a virtual town powered entirely by AI. The agents completed the screenwriting, animation, directing, editing, and other production steps, and successfully produced an episode of "South Park".

NVIDIA's AI agent Voyager, connected to GPT-4, can play Minecraft without human intervention.

Ghost in the Minecraft (GITM), a generalist AI agent jointly developed by SenseTime, Tsinghua University, and other institutions, has outperformed all previous agents in Minecraft while significantly reducing training costs.

There are many more such studies than we can list here. With Stanford's virtual town now open source, we believe more companies and institutions will join in.

The above is the detailed content of The source code of 25 AI agents is now public, inspired by Stanford's 'Virtual Town' and 'Westworld'. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

Ten recommended open source free text annotation tools

Mar 26, 2024 pm 08:20 PM

Ten recommended open source free text annotation tools

Mar 26, 2024 pm 08:20 PM

Text annotation is the work of corresponding labels or tags to specific content in text. Its main purpose is to provide additional information to the text for deeper analysis and processing, especially in the field of artificial intelligence. Text annotation is crucial for supervised machine learning tasks in artificial intelligence applications. It is used to train AI models to help more accurately understand natural language text information and improve the performance of tasks such as text classification, sentiment analysis, and language translation. Through text annotation, we can teach AI models to recognize entities in text, understand context, and make accurate predictions when new similar data appears. This article mainly recommends some better open source text annotation tools. 1.LabelStudiohttps://github.com/Hu

15 recommended open source free image annotation tools

Mar 28, 2024 pm 01:21 PM

15 recommended open source free image annotation tools

Mar 28, 2024 pm 01:21 PM

Image annotation is the process of associating labels or descriptive information with images to give deeper meaning and explanation to the image content. This process is critical to machine learning, which helps train vision models to more accurately identify individual elements in images. By adding annotations to images, the computer can understand the semantics and context behind the images, thereby improving the ability to understand and analyze the image content. Image annotation has a wide range of applications, covering many fields, such as computer vision, natural language processing, and graph vision models. It has a wide range of applications, such as assisting vehicles in identifying obstacles on the road, and helping in the detection and diagnosis of diseases through medical image recognition. . This article mainly recommends some better open source and free image annotation tools. 1.Makesens

Recommended: Excellent JS open source face detection and recognition project

Apr 03, 2024 am 11:55 AM

Recommended: Excellent JS open source face detection and recognition project

Apr 03, 2024 am 11:55 AM

Face detection and recognition technology is already a relatively mature and widely used technology. Currently, the most widely used Internet application language is JS. Implementing face detection and recognition on the Web front-end has advantages and disadvantages compared to back-end face recognition. Advantages include reducing network interaction and real-time recognition, which greatly shortens user waiting time and improves user experience; disadvantages include: being limited by model size, the accuracy is also limited. How to use js to implement face detection on the web? In order to implement face recognition on the Web, you need to be familiar with related programming languages and technologies, such as JavaScript, HTML, CSS, WebRTC, etc. At the same time, you also need to master relevant computer vision and artificial intelligence technologies. It is worth noting that due to the design of the Web side

Alibaba 7B multi-modal document understanding large model wins new SOTA

Apr 02, 2024 am 11:31 AM

Alibaba 7B multi-modal document understanding large model wins new SOTA

Apr 02, 2024 am 11:31 AM

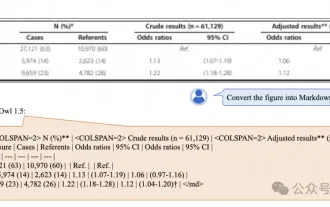

New SOTA for multimodal document understanding capabilities! Alibaba's mPLUG team released the latest open source work mPLUG-DocOwl1.5, which proposed a series of solutions to address the four major challenges of high-resolution image text recognition, general document structure understanding, instruction following, and introduction of external knowledge. Without further ado, let’s look at the effects first. One-click recognition and conversion of charts with complex structures into Markdown format: Charts of different styles are available: More detailed text recognition and positioning can also be easily handled: Detailed explanations of document understanding can also be given: You know, "Document Understanding" is currently An important scenario for the implementation of large language models. There are many products on the market to assist document reading. Some of them mainly use OCR systems for text recognition and cooperate with LLM for text processing.

Just released! An open source model for generating anime-style images with one click

Apr 08, 2024 pm 06:01 PM

Just released! An open source model for generating anime-style images with one click

Apr 08, 2024 pm 06:01 PM

Let me introduce to you the latest AIGC open source project-AnimagineXL3.1. This project is the latest iteration of the anime-themed text-to-image model, aiming to provide users with a more optimized and powerful anime image generation experience. In AnimagineXL3.1, the development team focused on optimizing several key aspects to ensure that the model reaches new heights in performance and functionality. First, they expanded the training data to include not only game character data from previous versions, but also data from many other well-known anime series into the training set. This move enriches the model's knowledge base, allowing it to more fully understand various anime styles and characters. AnimagineXL3.1 introduces a new set of special tags and aesthetics

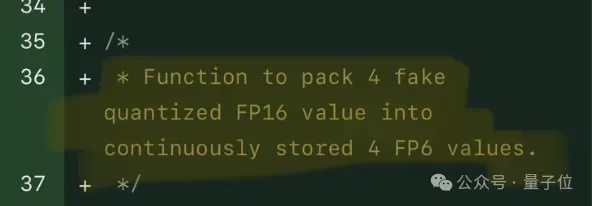

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

FP8 and lower floating point quantification precision are no longer the "patent" of H100! Lao Huang wanted everyone to use INT8/INT4, and the Microsoft DeepSpeed team started running FP6 on A100 without official support from NVIDIA. Test results show that the new method TC-FPx's FP6 quantization on A100 is close to or occasionally faster than INT4, and has higher accuracy than the latter. On top of this, there is also end-to-end large model support, which has been open sourced and integrated into deep learning inference frameworks such as DeepSpeed. This result also has an immediate effect on accelerating large models - under this framework, using a single card to run Llama, the throughput is 2.65 times higher than that of dual cards. one

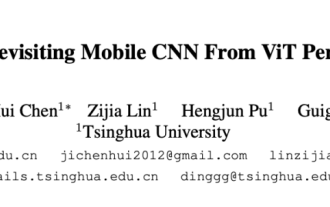

1.3ms takes 1.3ms! Tsinghua's latest open source mobile neural network architecture RepViT

Mar 11, 2024 pm 12:07 PM

1.3ms takes 1.3ms! Tsinghua's latest open source mobile neural network architecture RepViT

Mar 11, 2024 pm 12:07 PM

Paper address: https://arxiv.org/abs/2307.09283 Code address: https://github.com/THU-MIG/RepViTRepViT performs well in the mobile ViT architecture and shows significant advantages. Next, we explore the contributions of this study. It is mentioned in the article that lightweight ViTs generally perform better than lightweight CNNs on visual tasks, mainly due to their multi-head self-attention module (MSHA) that allows the model to learn global representations. However, the architectural differences between lightweight ViTs and lightweight CNNs have not been fully studied. In this study, the authors integrated lightweight ViTs into the effective

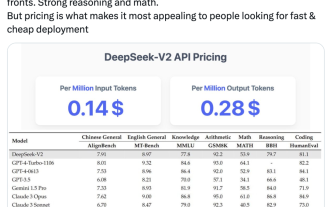

Domestic open source MoE indicators explode: GPT-4 level capabilities, API price is only one percent

May 07, 2024 pm 05:34 PM

Domestic open source MoE indicators explode: GPT-4 level capabilities, API price is only one percent

May 07, 2024 pm 05:34 PM

The latest large-scale domestic open source MoE model has become popular just after its debut. The performance of DeepSeek-V2 reaches GPT-4 level, but it is open source, free for commercial use, and the API price is only one percent of GPT-4-Turbo. Therefore, as soon as it was released, it immediately triggered a lot of discussion. Judging from the published performance indicators, DeepSeekV2's comprehensive Chinese capabilities surpass those of many open source models. At the same time, closed source models such as GPT-4Turbo and Wenkuai 4.0 are also in the first echelon. The comprehensive English ability is also in the same first echelon as LLaMA3-70B, and surpasses Mixtral8x22B, which is also a MoE. It also shows good performance in knowledge, mathematics, reasoning, programming, etc. And supports 128K context. Picture this