VCT: image editing in many styles, guided by a single reference picture


In recent years, image generation technology has made many key breakthroughs. Especially since the release of large models such as DALL·E 2 and Stable Diffusion, text-to-image generation has gradually matured, and high-quality image generation now has broad practical applications. However, fine-grained editing of existing images remains a difficult problem.

On the one hand, text descriptions are limited: existing high-quality text-to-image models can only edit images descriptively through text, and some specific effects are hard to put into words. On the other hand, in real applications, fine-grained image editing tasks often come with only a few reference images. Many solutions that need large amounts of training data therefore struggle when data is scarce, especially when there is only a single reference image.

Recently, researchers from NetEase Interactive Entertainment AI Lab proposed an image-to-image editing method guided by a single image. Given one reference image, it transfers the object or style in that reference image to the source image without changing the source image's overall structure. The paper has been accepted by ICCV 2023, and the code has been open-sourced.

- Paper address: https://arxiv.org/abs/2307.14352

- Code address: https://github.com/CrystalNeuro/visual-concept-translator

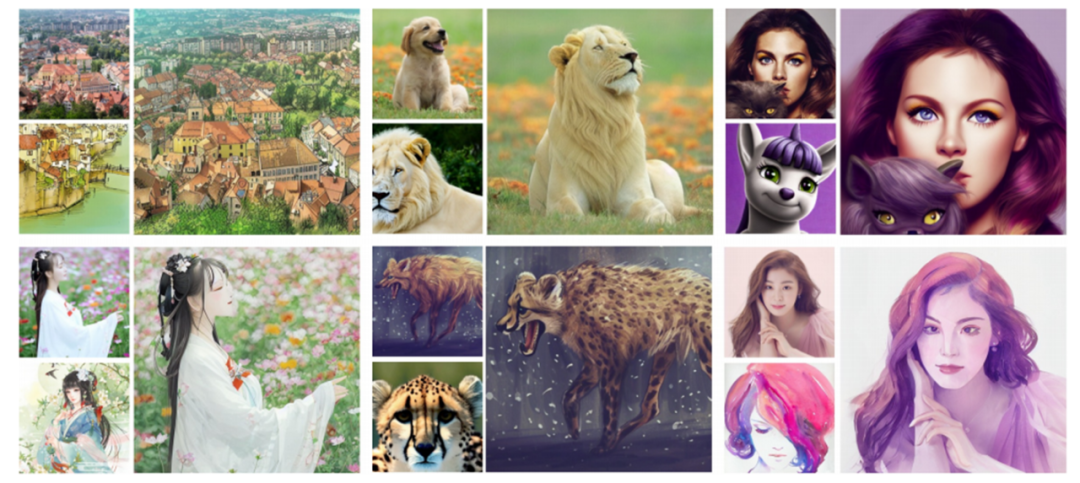

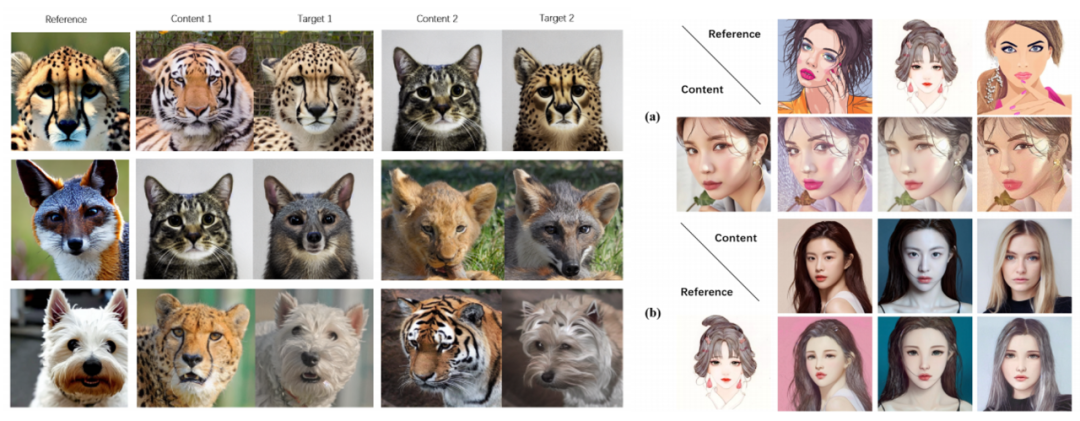

Let us first look at a set of examples to get a feel for its effect.

Results from the paper: in each group, the top-left image is the source image, the bottom-left image is the reference image, and the right side shows the generated result.

## Overall framework

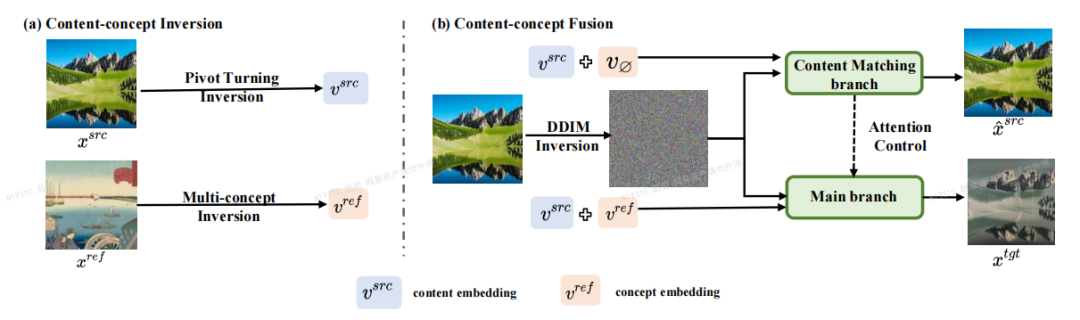

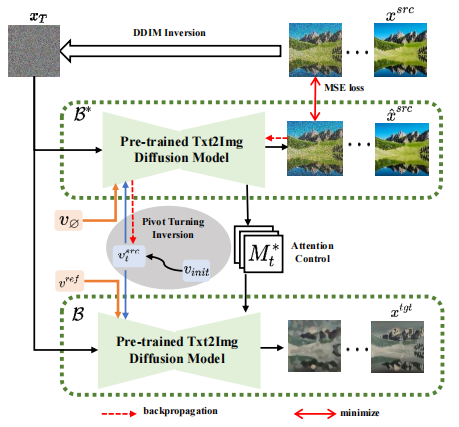

The authors propose an image editing framework based on inversion and fusion (Inversion-Fusion), called VCT (Visual Concept Translator). As shown in the figure below, the overall VCT framework consists of two processes: content-concept inversion (Content-concept Inversion) and content-concept fusion (Content-concept Fusion). The content-concept inversion process uses two different inversion algorithms to learn latent vectors that represent, respectively, the structural information of the source image and the semantic information of the reference image. The content-concept fusion process then fuses these structural and semantic latent vectors to generate the final result.

Main framework of the paper

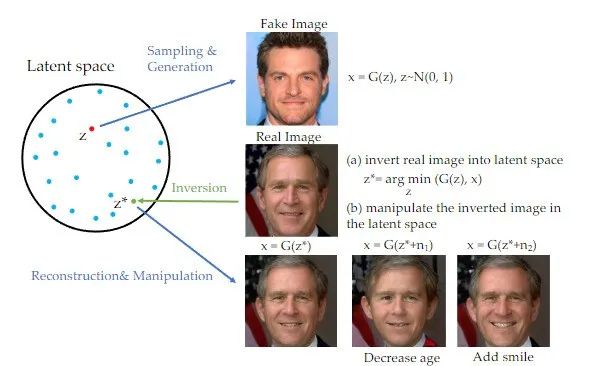

It is worth mentioning that in recent years, inversion methods have been widely used with Generative Adversarial Networks (GANs) and have achieved remarkable results in many image generation tasks [1]. GAN inversion maps an image into the latent space of a trained GAN generator, and editing is achieved by manipulating that latent space. This inversion scheme makes full use of the generative power of pre-trained generative models. This study carries the GAN inversion idea over to image-guided image editing, using a diffusion model as the prior.
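To make the GAN-inversion idea concrete, here is a minimal sketch that assumes a toy *linear* generator G(w) = M·w in place of a real GAN. Gradient descent searches for the latent w whose output matches a target image, and editing then amounts to moving w along some direction. The generator matrix, learning rate, step count, and edit direction are all illustrative assumptions, not anything taken from the paper.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Matrix-vector product for small dense matrices."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def invert_generator(gen: Matrix, target: Vector, lr: float = 0.1,
                     steps: int = 200) -> Vector:
    """Find a latent w with gen @ w close to target by minimizing ||gen @ w - target||^2."""
    w = [0.0] * len(gen[0])
    gen_t = transpose(gen)
    for _ in range(steps):
        residual = [g - t for g, t in zip(matvec(gen, w), target)]
        grad = [2.0 * gi for gi in matvec(gen_t, residual)]  # 2 * M^T (M w - x)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w


if __name__ == "__main__":
    gen = [[2.0, 0.0], [0.0, 1.0]]          # toy stand-in for a trained generator
    target = matvec(gen, [0.5, -1.5])       # "image" produced by a known latent
    w = invert_generator(gen, target)       # inversion: image -> latent
    edit_direction = [0.3, 0.0]             # hypothetical semantic direction
    edited = matvec(gen, [wi + di for wi, di in zip(w, edit_direction)])
    print(w, edited)
```

With a real GAN the generator is non-linear and the gradient comes from autograd, but the structure (optimize the latent, then edit in latent space) is the same.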


## Method introduction

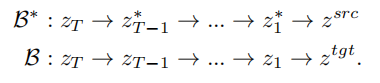

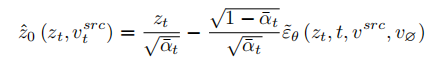

Based on the idea of inversion, VCT designs a two-branch diffusion process consisting of a content reconstruction branch B* and a main editing branch B. Both branches start from the same noise $x_T$ obtained by DDIM Inversion [2], an algorithm that uses a diffusion model to compute the noise corresponding to an image, and they are used for content reconstruction and content editing respectively. The pre-trained model used in the paper is the Latent Diffusion Model (LDM), so the diffusion process takes place in the latent space (z space). The two-branch process can be expressed as:

Double-branch diffusion process

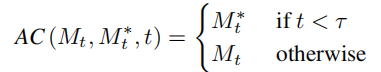
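DDIM Inversion runs the deterministic DDIM update in the opposite direction, carrying a clean latent up to noise $x_T$. The sketch below is a minimal illustration under stated assumptions: `model` is a generic noise-predictor callable standing in for the LDM U-Net, and `alphas` is a toy schedule of cumulative alphas with `alphas[0] = 1` for the clean latent. Neither reflects the paper's actual configuration.

```python
import math
from typing import Callable, List

Vector = List[float]
# Hypothetical noise predictor eps_theta(x, t); in practice a text-conditioned U-Net.
NoiseModel = Callable[[Vector, int], Vector]


def ddim_update(x: Vector, eps: Vector, a_from: float, a_to: float) -> Vector:
    """Deterministic DDIM (eta = 0) move between cumulative-alpha levels a_from -> a_to."""
    # Estimate the clean sample, then re-noise it to the target level with the same eps.
    x0 = [(xi - math.sqrt(1.0 - a_from) * ei) / math.sqrt(a_from) for xi, ei in zip(x, eps)]
    return [math.sqrt(a_to) * x0i + math.sqrt(1.0 - a_to) * ei for x0i, ei in zip(x0, eps)]


def ddim_invert(x0: Vector, model: NoiseModel, alphas: List[float]) -> Vector:
    """Map a clean latent to noise x_T by running DDIM updates forward in time."""
    x = list(x0)
    for t in range(1, len(alphas)):
        # x_t is unknown, so eps is evaluated at the previous latent (the usual approximation).
        eps = model(x, t)
        x = ddim_update(x, eps, alphas[t - 1], alphas[t])
    return x


def ddim_sample(xT: Vector, model: NoiseModel, alphas: List[float]) -> Vector:
    """Standard deterministic DDIM sampling from x_T back to x_0."""
    x = list(xT)
    for t in range(len(alphas) - 1, 0, -1):
        eps = model(x, t)
        x = ddim_update(x, eps, alphas[t], alphas[t - 1])
    return x


def toy_model(x: Vector, t: int) -> Vector:
    # Ignores x, so inversion followed by sampling is exact; a real U-Net is not.
    return [0.01 * t for _ in x]


if __name__ == "__main__":
    alphas = [1.0 - 0.9 * t / 50 for t in range(51)]  # toy schedule, not the LDM one
    xT = ddim_invert([0.5, -1.0], toy_model, alphas)
    print(ddim_sample(xT, toy_model, alphas))  # approximately [0.5, -1.0]
```

Because a real predictor depends on its input, the round trip is only approximate. This is why null-text style optimization (discussed below) is used to pull the reconstruction back onto the source image.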

The content reconstruction branch B* learns T content feature vectors, one per diffusion step, which are used to restore the structural information of the source image. This structural information is passed to the main editing branch B through a soft attention control scheme that draws on Google's prompt-to-prompt [3] work: while the diffusion step lies within a certain range, the attention feature maps of the editing branch are replaced by those of the content reconstruction branch, which gives structural control over the generated image. The editing branch B then combines the content feature vectors learned from the source image with the concept feature vector learned from the reference image to generate the edited image.
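The attention-control rule can be sketched as follows. This is a minimal illustration that assumes plain scaled dot-product attention maps, softmax(QK^T/√d), and a step range `[start, end)`. `start`, `end`, and the function names are hypothetical, and a real implementation would hook this swap into the U-Net's attention layers rather than operate on small lists.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of attention scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_map(queries: Matrix, keys: Matrix) -> Matrix:
    """Scaled dot-product attention weights softmax(Q K^T / sqrt(d)), one row per query."""
    scale = 1.0 / math.sqrt(len(keys[0]))
    return [softmax([scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys])
            for q in queries]


def controlled_attention(edit_map: Matrix, recon_map: Matrix, step: int,
                         start: int, end: int) -> Matrix:
    """Use the reconstruction branch's map while start <= step < end (hypothetical range)."""
    if start <= step < end:
        return recon_map
    return edit_map


if __name__ == "__main__":
    keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    edit = attention_map([[1.0, 0.0], [0.0, 1.0]], keys)   # editing branch B
    recon = attention_map([[0.0, 2.0], [2.0, 0.0]], keys)  # reconstruction branch B*
    for step in (0, 5, 20):
        print(step, controlled_attention(edit, recon, step, start=0, end=10) is recon)
```

Swapping whole attention maps, rather than features, keeps the spatial layout of the source image while still letting the editing branch use its own values.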

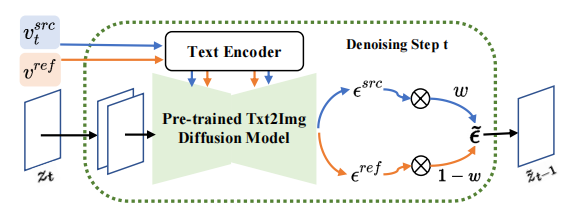

Noise-space fusion

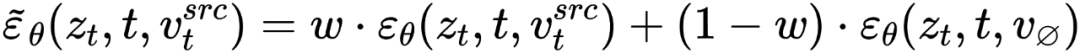

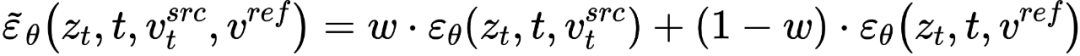

At each step of the diffusion model, the feature vectors are fused in the noise space: each feature vector is fed into the diffusion model, and the predicted noises are combined as a weighted sum. In the content reconstruction branch, the content feature vector is mixed with the empty-text vector, in the same form as classifier-free diffusion guidance [4].

The editing branch instead mixes the content feature vector with the concept feature vector:

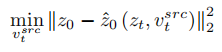
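Both mixtures follow the classifier-free-guidance pattern ε_base + w·(ε_cond − ε_base). The sketch below is an illustration under assumptions: `eps_fn` is a hypothetical noise-predictor signature, the guidance weights are free parameters, and for the editing branch it assumes the content prediction is the base and the concept prediction is the guidance direction. The paper's exact weighting may differ.

```python
from typing import Callable, List, Tuple

Vector = List[float]
# Hypothetical signature: eps_fn(latent, step, conditioning) -> predicted noise.
EpsFn = Callable[[Vector, int, Vector], Vector]


def guided_noise(eps_base: Vector, eps_cond: Vector, w: float) -> Vector:
    """Classifier-free-guidance form: eps_base + w * (eps_cond - eps_base)."""
    return [b + w * (c - b) for b, c in zip(eps_base, eps_cond)]


def branch_noises(eps_fn: EpsFn, z_rec: Vector, z_edit: Vector, step: int,
                  empty: Vector, content: Vector, concept: Vector,
                  w_rec: float, w_edit: float) -> Tuple[Vector, Vector]:
    # Reconstruction branch B*: empty-text prediction pushed toward the content vector.
    eps_rec = guided_noise(eps_fn(z_rec, step, empty), eps_fn(z_rec, step, content), w_rec)
    # Editing branch B: content prediction pushed toward the concept vector
    # (this base/direction ordering is an assumption).
    eps_edit = guided_noise(eps_fn(z_edit, step, content), eps_fn(z_edit, step, concept), w_edit)
    return eps_rec, eps_edit
```

Setting `w = 0` returns the base prediction unchanged and `w = 1` returns the conditional prediction; values above 1 extrapolate toward the condition, as in standard classifier-free guidance.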

The key question is therefore how to obtain the feature vectors of structural information from a single source image, and the feature vector of concept information from a single reference image. The paper achieves this through two different inversion schemes.

To restore the source image, the paper follows the NULL-text [5] optimization scheme and learns feature vectors for all T steps to fit the source image. Unlike NULL-text, which optimizes the empty-text vector to fit the DDIM trajectory, this paper optimizes the source image's content feature vectors so that the estimated clean latent directly fits the source. The fitting objective is:

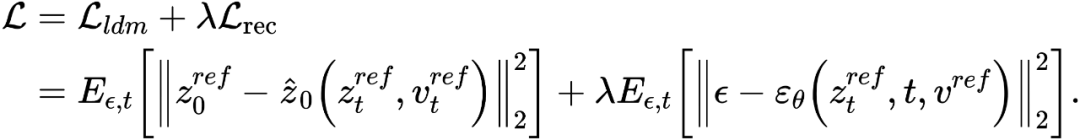
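To show what "fitting the estimated clean latent" means for a single step, here is a minimal sketch under loud assumptions. The noise predictor is a hypothetical *linear* toy `toy_eps(z_t, c) = 0.5·z_t + c`, chosen so the gradient can be written by hand. The content vector `c` lives in the same space as the latent, and only one step is optimized. A real implementation would learn one vector per step along the DDIM trajectory and backpropagate through the U-Net with autograd.

```python
import math
from typing import List, Tuple

Vector = List[float]


def toy_eps(z_t: Vector, c: Vector) -> Vector:
    """Hypothetical linear noise predictor standing in for the LDM U-Net."""
    return [0.5 * zi + ci for zi, ci in zip(z_t, c)]


def estimate_clean(z_t: Vector, eps: Vector, a_t: float) -> Vector:
    """DDIM estimate of the clean latent: (z_t - sqrt(1 - a_t) * eps) / sqrt(a_t)."""
    return [(zi - math.sqrt(1.0 - a_t) * ei) / math.sqrt(a_t) for zi, ei in zip(z_t, eps)]


def fit_content_vector(z_t: Vector, z0: Vector, a_t: float, c_init: Vector,
                       lr: float = 0.1, steps: int = 200) -> Tuple[Vector, List[float]]:
    """Gradient descent on ||estimate_clean(z_t, eps(z_t, c)) - z0||^2 with respect to c."""
    c = list(c_init)
    k = math.sqrt(1.0 - a_t) / math.sqrt(a_t)  # d(z0_hat)/dc = -k for the toy predictor
    history = []
    for _ in range(steps):
        z0_hat = estimate_clean(z_t, toy_eps(z_t, c), a_t)
        residual = [h - t for h, t in zip(z0_hat, z0)]
        history.append(sum(r * r for r in residual))
        # Gradient is 2 * residual * (-k), so stepping against it adds lr * 2k * residual.
        c = [ci + lr * 2.0 * k * ri for ci, ri in zip(c, residual)]
    return c, history


if __name__ == "__main__":
    c, history = fit_content_vector([0.3, -0.2], [1.0, 0.5], 0.5, [0.0, 0.0])
    print(f"loss: {history[0]:.4f} -> {history[-1]:.2e}")
```

Fitting the clean estimate rather than each intermediate DDIM latent gives every step the same target, the source latent itself.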

Unlike structural information, the concept information in the reference image needs to be represented by a single, highly generalized feature vector: all T steps of the diffusion model share one concept feature vector. The paper improves on the existing inversion schemes Textual Inversion [6] and DreamArtist [7] and uses a multi-concept feature vector to represent the content of the reference image. The loss function consists of the diffusion model's noise-estimation term and a reconstruction loss term on the estimated latent vector.
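A two-term loss of this kind can be sketched as below. It is an illustration only: it assumes the standard forward noising z_t = √a_t·z_0 + √(1−a_t)·ε, takes the model's noise prediction (which in VCT would be conditioned on the shared concept vector) as an input, and weights the reconstruction term with a hypothetical `lam`. The paper's exact formulation is not reproduced here.

```python
import math
from typing import List

Vector = List[float]


def add_noise(z0: Vector, eps: Vector, a_t: float) -> Vector:
    """Forward diffusion: z_t = sqrt(a_t) * z0 + sqrt(1 - a_t) * eps."""
    return [math.sqrt(a_t) * zi + math.sqrt(1.0 - a_t) * ei for zi, ei in zip(z0, eps)]


def concept_loss(z0: Vector, eps: Vector, eps_pred: Vector, a_t: float,
                 lam: float = 1.0) -> float:
    """Noise-estimation term plus latent-reconstruction term (lam is an assumed weight)."""
    z_t = add_noise(z0, eps, a_t)
    noise_term = sum((e - p) ** 2 for e, p in zip(eps, eps_pred))
    # Clean latent implied by the predicted noise (DDIM-style estimate).
    z0_hat = [(zi - math.sqrt(1.0 - a_t) * pi) / math.sqrt(a_t) for zi, pi in zip(z_t, eps_pred)]
    recon_term = sum((h - z) ** 2 for h, z in zip(z0_hat, z0))
    return noise_term + lam * recon_term


if __name__ == "__main__":
    print(concept_loss(z0=[1.0], eps=[0.0], eps_pred=[1.0], a_t=0.25))  # about 4.0
```

In this simplified form, the reconstruction term equals (1 − a_t)/a_t times the noise term, so it acts as a timestep-dependent reweighting that emphasizes noisy steps. A formulation that decodes or compares latents differently would not reduce this way.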

## Experimental results

The paper evaluates VCT on subject replacement and stylization tasks, transforming the content into the subject or style of the reference image while preserving the structural information of the source image well.

Experimental results from the paper

Compared with previous solutions, the VCT framework proposed in this article has the following advantages:

(1) Application generalization: Compared with previous image-guided editing methods, VCT does not need large amounts of training data and offers better generation quality and generalization. Built on the idea of inversion, it relies on high-quality text-to-image models pre-trained on open-world data, so in practice only one input image and one reference image are needed to achieve good editing results.

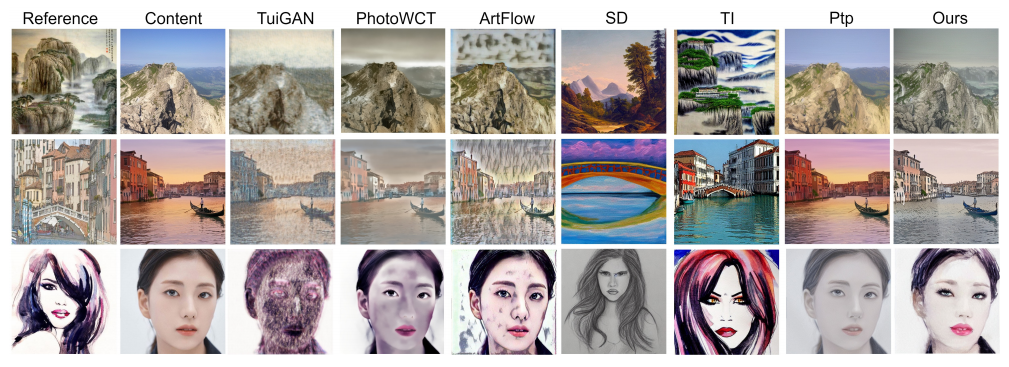

(2) Visual accuracy: Compared with recent text-based image editing methods, VCT uses images as reference guidance. An image reference allows more precise edits than a text description. The following figures compare VCT with other methods:

Comparison on the subject replacement task

Comparison on the style transfer task

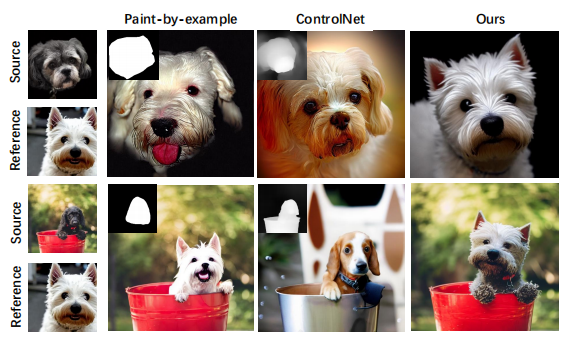

(3) No additional information required: Some recent methods need extra control information (such as masks or depth maps) for guidance. VCT instead learns structural and semantic information directly from the source image and the reference image and fuses them to generate the result. The figure below shows some comparisons. Paint-by-Example replaces an object in the source image with the object from the reference image, given a mask of the source image; ControlNet controls the generated result through line drawings, depth maps, and similar inputs. VCT learns structural and content information directly from the source and reference images and fuses them into the target image without any additional constraints.

Comparison of image-guided image editing methods

NetEase Interactive Entertainment AI Lab

NetEase Interactive Entertainment AI Lab was established in 2017. It belongs to the NetEase Interactive Entertainment Business Group and is the leading artificial intelligence laboratory in the game industry. The lab focuses on research into, and application of, computer vision, speech and natural language processing, and reinforcement learning in game scenarios, aiming to improve the technology behind NetEase Interactive Entertainment's popular games and products through AI. Its technology is already used in many popular games, such as "Fantasy Westward Journey", "Harry Potter: Magic Awakened", "Onmyoji", and "Westward Journey".

The above is the detailed content of Various styles of VCT guidance, all with one picture, allowing you to easily implement it. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1376

1376

52

52

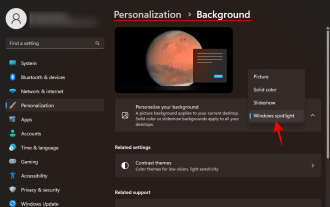

How to Download Windows Spotlight Wallpaper Image on PC

Aug 23, 2023 pm 02:06 PM

How to Download Windows Spotlight Wallpaper Image on PC

Aug 23, 2023 pm 02:06 PM

Windows are never one to neglect aesthetics. From the bucolic green fields of XP to the blue swirling design of Windows 11, default desktop wallpapers have been a source of user delight for years. With Windows Spotlight, you now have direct access to beautiful, awe-inspiring images for your lock screen and desktop wallpaper every day. Unfortunately, these images don't hang out. If you have fallen in love with one of the Windows spotlight images, then you will want to know how to download them so that you can keep them as your background for a while. Here's everything you need to know. What is WindowsSpotlight? Window Spotlight is an automatic wallpaper updater available from Personalization > in the Settings app

A deep dive into models, data, and frameworks: an exhaustive 54-page review of efficient large language models

Jan 14, 2024 pm 07:48 PM

A deep dive into models, data, and frameworks: an exhaustive 54-page review of efficient large language models

Jan 14, 2024 pm 07:48 PM

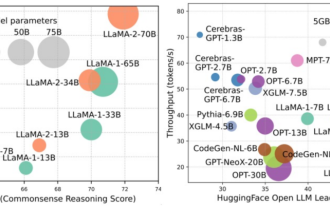

Large-scale language models (LLMs) have demonstrated compelling capabilities in many important tasks, including natural language understanding, language generation, and complex reasoning, and have had a profound impact on society. However, these outstanding capabilities require significant training resources (shown in the left image) and long inference times (shown in the right image). Therefore, researchers need to develop effective technical means to solve their efficiency problems. In addition, as can be seen from the right side of the figure, some efficient LLMs (LanguageModels) such as Mistral-7B have been successfully used in the design and deployment of LLMs. These efficient LLMs can significantly reduce inference memory while maintaining similar accuracy to LLaMA1-33B

How to use image semantic segmentation technology in Python?

Jun 06, 2023 am 08:03 AM

How to use image semantic segmentation technology in Python?

Jun 06, 2023 am 08:03 AM

With the continuous development of artificial intelligence technology, image semantic segmentation technology has become a popular research direction in the field of image analysis. In image semantic segmentation, we segment different areas in an image and classify each area to achieve a comprehensive understanding of the image. Python is a well-known programming language. Its powerful data analysis and data visualization capabilities make it the first choice in the field of artificial intelligence technology research. This article will introduce how to use image semantic segmentation technology in Python. 1. Prerequisite knowledge is deepening

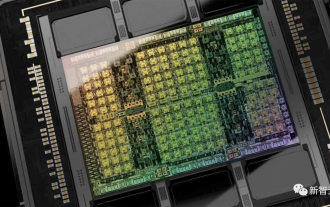

Crushing H100, Nvidia's next-generation GPU is revealed! The first 3nm multi-chip module design, unveiled in 2024

Sep 30, 2023 pm 12:49 PM

Crushing H100, Nvidia's next-generation GPU is revealed! The first 3nm multi-chip module design, unveiled in 2024

Sep 30, 2023 pm 12:49 PM

3nm process, performance surpasses H100! Recently, foreign media DigiTimes broke the news that Nvidia is developing the next-generation GPU, the B100, code-named "Blackwell". It is said that as a product for artificial intelligence (AI) and high-performance computing (HPC) applications, the B100 will use TSMC's 3nm process process, as well as more complex multi-chip module (MCM) design, and will appear in the fourth quarter of 2024. For Nvidia, which monopolizes more than 80% of the artificial intelligence GPU market, it can use the B100 to strike while the iron is hot and further attack challengers such as AMD and Intel in this wave of AI deployment. According to NVIDIA estimates, by 2027, the output value of this field is expected to reach approximately

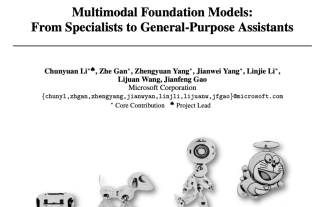

The most comprehensive review of multimodal large models is here! 7 Microsoft researchers cooperated vigorously, 5 major themes, 119 pages of document

Sep 25, 2023 pm 04:49 PM

The most comprehensive review of multimodal large models is here! 7 Microsoft researchers cooperated vigorously, 5 major themes, 119 pages of document

Sep 25, 2023 pm 04:49 PM

The most comprehensive review of multimodal large models is here! Written by 7 Chinese researchers at Microsoft, it has 119 pages. It starts from two types of multi-modal large model research directions that have been completed and are still at the forefront, and comprehensively summarizes five specific research topics: visual understanding and visual generation. The multi-modal large-model multi-modal agent supported by the unified visual model LLM focuses on a phenomenon: the multi-modal basic model has moved from specialized to universal. Ps. This is why the author directly drew an image of Doraemon at the beginning of the paper. Who should read this review (report)? In the original words of Microsoft: As long as you are interested in learning the basic knowledge and latest progress of multi-modal basic models, whether you are a professional researcher or a student, this content is very suitable for you to come together.

iOS 17: How to use one-click cropping in photos

Sep 20, 2023 pm 08:45 PM

iOS 17: How to use one-click cropping in photos

Sep 20, 2023 pm 08:45 PM

With the iOS 17 Photos app, Apple makes it easier to crop photos to your specifications. Read on to learn how. Previously in iOS 16, cropping an image in the Photos app involved several steps: Tap the editing interface, select the crop tool, and then adjust the crop using a pinch-to-zoom gesture or dragging the corners of the crop tool. In iOS 17, Apple has thankfully simplified this process so that when you zoom in on any selected photo in your Photos library, a new Crop button automatically appears in the upper right corner of the screen. Clicking on it will bring up the full cropping interface with the zoom level of your choice, so you can crop to the part of the image you like, rotate the image, invert the image, or apply screen ratio, or use markers

How to batch resize images using PowerToys on Windows

Aug 23, 2023 pm 07:49 PM

How to batch resize images using PowerToys on Windows

Aug 23, 2023 pm 07:49 PM

Those who have to work with image files on a daily basis often have to resize them to fit the needs of their projects and jobs. However, if you have too many images to process, resizing them individually can consume a lot of time and effort. In this case, a tool like PowerToys can come in handy to, among other things, batch resize image files using its image resizer utility. Here's how to set up your Image Resizer settings and start batch resizing images with PowerToys. How to Batch Resize Images with PowerToys PowerToys is an all-in-one program with a variety of utilities and features to help you speed up your daily tasks. One of its utilities is images

I2V-Adapter from the SD community: no configuration required, plug and play, perfectly compatible with Tusheng video plug-in

Jan 15, 2024 pm 07:48 PM

I2V-Adapter from the SD community: no configuration required, plug and play, perfectly compatible with Tusheng video plug-in

Jan 15, 2024 pm 07:48 PM

The image-to-video generation (I2V) task is a challenge in the field of computer vision that aims to convert static images into dynamic videos. The difficulty of this task is to extract and generate dynamic information in the temporal dimension from a single image while maintaining the authenticity and visual coherence of the image content. Existing I2V methods often require complex model architectures and large amounts of training data to achieve this goal. Recently, a new research result "I2V-Adapter: AGeneralImage-to-VideoAdapter for VideoDiffusionModels" led by Kuaishou was released. This research introduces an innovative image-to-video conversion method and proposes a lightweight adapter module, i.e.