UAE and Saudi Arabia accelerate purchases of Nvidia chips to boost artificial intelligence development

On the morning of August 15, Beijing time, it was reported that Saudi Arabia and the United Arab Emirates are rushing to purchase thousands of high-performance Nvidia chips, joining the global artificial intelligence arms race and making Silicon Valley's most sought-after commodity even scarcer.

Both Gulf countries have publicly stated their ambition to become leaders in artificial intelligence as a way to stimulate economic development. But there are also concerns that authoritarian leaders in both countries could misuse the technology.

According to people familiar with the matter, Saudi Arabia has purchased 3,000 Nvidia H100 chips through King Abdullah University of Science and Technology. Nvidia CEO Jensen Huang said that the chip, which sells for $40,000, is "the world's first computer chip specifically designed for generative artificial intelligence."

The UAE has also secured access to thousands of Nvidia chips and has developed its own open-source large language model, Falcon, at the state-owned Technology Innovation Institute in Masdar City, Abu Dhabi.

People familiar with the matter said the UAE has decided to own and control its own computing power and talent and to build its own platform. The key to this decision, they said, is that the UAE has sufficient capital and abundant energy resources and can attract top talent from around the world.

The two Gulf countries are buying large numbers of Nvidia chips through state-owned groups just as the world's top technology companies are scrambling to secure the same scarce artificial intelligence chips.

US companies such as OpenAI and Google currently have the most advanced large language models and are also the main buyers of Nvidia's H100 and A100 chips.

According to multiple sources close to Nvidia and its foundry partner TSMC, Nvidia is expected to ship approximately 550,000 H100 chips globally in 2023. Nvidia declined to comment.

According to people familiar with the matter, King Abdullah University of Science and Technology is expected to obtain 3,000 of these chips by the end of 2023, with a total value of approximately US$120 million. According to estimates, OpenAI trained its advanced GPT-3 model in just over a month using 1,024 A100 chips. The A100 is Nvidia's previous-generation chip and is less powerful than the H100.

According to people familiar with the matter, the Saudi university also has at least 200 A100 chips and is developing a supercomputer called Shaheen III, which will be put into operation this year. The machine will be powered by 700 Nvidia Grace Hopper superchips, a type of chip designed for cutting-edge artificial intelligence applications.

According to people familiar with the matter, King Abdullah University of Science and Technology plans to use these chips to develop its own large language model. This software is similar to OpenAI's GPT-4 and can generate text, images and code comparable to those produced by humans.

In 2017, the UAE became the first country to set up an artificial intelligence ministry. It has also released a "Generative Artificial Intelligence" guide to implement the government's plan to strengthen the country's position as a global pioneer in technology and artificial intelligence and to limit the misuse of artificial intelligence through a regulatory framework.

The UAE's Falcon model is currently available online and free to use. The model was trained on 384 A100 chips over two months. A leading expert on artificial intelligence and large language models said: "Considering the resources it used, this model is really impressive. It has been one of the best open-source models."

Venture capitalists such as Marc Andreessen have taken notice. An Emirati sovereign wealth investor said Andreessen had been in touch with the team. A spokesman for Andreessen declined to comment.

According to people familiar with the matter, the UAE government has purchased a new batch of Nvidia chips in preparation for powering applications and cloud services related to large language models.

Western AI leaders and human rights experts worry that software developed by the two countries may lack the ethical safeguards and safety features built in by big tech companies. Iverna McGowan, director of the European office of the Center for Democracy and Technology, said: "We are concerned about the discriminatory impact of artificial intelligence and its powerful role in illegal surveillance."

After energy prices surged last year, petrodollars brought a windfall to the Saudi and Emirati economies. Both countries have some of the world's largest and most active sovereign wealth funds.

Gulf state-affiliated funds have recently contacted Western artificial intelligence startups, hoping to obtain code and large language model expertise in exchange for computing resources, according to two CEOs of European artificial intelligence companies who asked not to be identified. "In order to tap our talent, they offered high prices and data resources," one executive said.

When OpenAI CEO Sam Altman visited Abu Dhabi in June this year, he spoke highly of the country's importance to artificial intelligence and praised its foresight. He told a question-and-answer event in Abu Dhabi's financial district that the Gulf region could play a central role in the global conversation around artificial intelligence and its regulation, and that Abu Dhabi had started discussing the technology before artificial intelligence attracted widespread attention. He said: "Everyone is on the artificial intelligence wave right now, and we are very excited about it. But I am deeply grateful to the people who were talking about this technology at a time when everyone thought artificial intelligence was unachievable."


Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Bytedance Cutting launches SVIP super membership: 499 yuan for continuous annual subscription, providing a variety of AI functions

Jun 28, 2024 am 03:51 AM

Bytedance Cutting launches SVIP super membership: 499 yuan for continuous annual subscription, providing a variety of AI functions

Jun 28, 2024 am 03:51 AM

This site reported on June 27 that Jianying is a video editing software developed by FaceMeng Technology, a subsidiary of ByteDance. It relies on the Douyin platform and basically produces short video content for users of the platform. It is compatible with iOS, Android, and Windows. , MacOS and other operating systems. Jianying officially announced the upgrade of its membership system and launched a new SVIP, which includes a variety of AI black technologies, such as intelligent translation, intelligent highlighting, intelligent packaging, digital human synthesis, etc. In terms of price, the monthly fee for clipping SVIP is 79 yuan, the annual fee is 599 yuan (note on this site: equivalent to 49.9 yuan per month), the continuous monthly subscription is 59 yuan per month, and the continuous annual subscription is 499 yuan per year (equivalent to 41.6 yuan per month) . In addition, the cut official also stated that in order to improve the user experience, those who have subscribed to the original VIP

Context-augmented AI coding assistant using Rag and Sem-Rag

Jun 10, 2024 am 11:08 AM

Context-augmented AI coding assistant using Rag and Sem-Rag

Jun 10, 2024 am 11:08 AM

Improve developer productivity, efficiency, and accuracy by incorporating retrieval-enhanced generation and semantic memory into AI coding assistants. Translated from EnhancingAICodingAssistantswithContextUsingRAGandSEM-RAG, author JanakiramMSV. While basic AI programming assistants are naturally helpful, they often fail to provide the most relevant and correct code suggestions because they rely on a general understanding of the software language and the most common patterns of writing software. The code generated by these coding assistants is suitable for solving the problems they are responsible for solving, but often does not conform to the coding standards, conventions and styles of the individual teams. This often results in suggestions that need to be modified or refined in order for the code to be accepted into the application

NVIDIA dialogue model ChatQA has evolved to version 2.0, with the context length mentioned at 128K

Jul 26, 2024 am 08:40 AM

NVIDIA dialogue model ChatQA has evolved to version 2.0, with the context length mentioned at 128K

Jul 26, 2024 am 08:40 AM

The open LLM community is an era when a hundred flowers bloom and compete. You can see Llama-3-70B-Instruct, QWen2-72B-Instruct, Nemotron-4-340B-Instruct, Mixtral-8x22BInstruct-v0.1 and many other excellent performers. Model. However, compared with proprietary large models represented by GPT-4-Turbo, open models still have significant gaps in many fields. In addition to general models, some open models that specialize in key areas have been developed, such as DeepSeek-Coder-V2 for programming and mathematics, and InternVL for visual-language tasks.

Can fine-tuning really allow LLM to learn new things: introducing new knowledge may make the model produce more hallucinations

Jun 11, 2024 pm 03:57 PM

Can fine-tuning really allow LLM to learn new things: introducing new knowledge may make the model produce more hallucinations

Jun 11, 2024 pm 03:57 PM

Large Language Models (LLMs) are trained on huge text databases, where they acquire large amounts of real-world knowledge. This knowledge is embedded into their parameters and can then be used when needed. The knowledge of these models is "reified" at the end of training. At the end of pre-training, the model actually stops learning. Align or fine-tune the model to learn how to leverage this knowledge and respond more naturally to user questions. But sometimes model knowledge is not enough, and although the model can access external content through RAG, it is considered beneficial to adapt the model to new domains through fine-tuning. This fine-tuning is performed using input from human annotators or other LLM creations, where the model encounters additional real-world knowledge and integrates it

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

Editor |ScienceAI Question Answering (QA) data set plays a vital role in promoting natural language processing (NLP) research. High-quality QA data sets can not only be used to fine-tune models, but also effectively evaluate the capabilities of large language models (LLM), especially the ability to understand and reason about scientific knowledge. Although there are currently many scientific QA data sets covering medicine, chemistry, biology and other fields, these data sets still have some shortcomings. First, the data form is relatively simple, most of which are multiple-choice questions. They are easy to evaluate, but limit the model's answer selection range and cannot fully test the model's ability to answer scientific questions. In contrast, open-ended Q&A

SOTA performance, Xiamen multi-modal protein-ligand affinity prediction AI method, combines molecular surface information for the first time

Jul 17, 2024 pm 06:37 PM

SOTA performance, Xiamen multi-modal protein-ligand affinity prediction AI method, combines molecular surface information for the first time

Jul 17, 2024 pm 06:37 PM

Editor | KX In the field of drug research and development, accurately and effectively predicting the binding affinity of proteins and ligands is crucial for drug screening and optimization. However, current studies do not take into account the important role of molecular surface information in protein-ligand interactions. Based on this, researchers from Xiamen University proposed a novel multi-modal feature extraction (MFE) framework, which for the first time combines information on protein surface, 3D structure and sequence, and uses a cross-attention mechanism to compare different modalities. feature alignment. Experimental results demonstrate that this method achieves state-of-the-art performance in predicting protein-ligand binding affinities. Furthermore, ablation studies demonstrate the effectiveness and necessity of protein surface information and multimodal feature alignment within this framework. Related research begins with "S

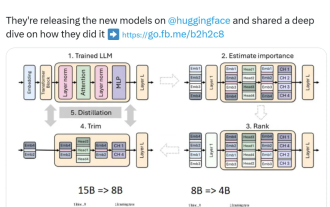

Nvidia plays with pruning and distillation: halving the parameters of Llama 3.1 8B to achieve better performance with the same size

Aug 16, 2024 pm 04:42 PM

Nvidia plays with pruning and distillation: halving the parameters of Llama 3.1 8B to achieve better performance with the same size

Aug 16, 2024 pm 04:42 PM

The rise of small models. Last month, Meta released the Llama3.1 series of models, which includes Meta’s largest model to date, the 405B model, and two smaller models with 70 billion and 8 billion parameters respectively. Llama3.1 is considered to usher in a new era of open source. However, although the new generation models are powerful in performance, they still require a large amount of computing resources when deployed. Therefore, another trend has emerged in the industry, which is to develop small language models (SLM) that perform well enough in many language tasks and are also very cheap to deploy. Recently, NVIDIA research has shown that structured weight pruning combined with knowledge distillation can gradually obtain smaller language models from an initially larger model. Turing Award Winner, Meta Chief A

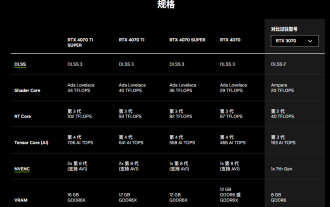

Nvidia releases GDDR6 memory version of GeForce RTX 4070 graphics card, available from September

Aug 21, 2024 am 07:31 AM

Nvidia releases GDDR6 memory version of GeForce RTX 4070 graphics card, available from September

Aug 21, 2024 am 07:31 AM

According to news from this site on August 20, multiple sources reported in July that Nvidia RTX4070 and above graphics cards will be in tight supply in August due to the shortage of GDDR6X video memory. Subsequently, speculation spread on the Internet about launching a GDDR6 memory version of the RTX4070 graphics card. As previously reported by this site, Nvidia today released the GameReady driver for "Black Myth: Wukong" and "Star Wars: Outlaws". At the same time, the press release also mentioned the release of the GDDR6 video memory version of GeForce RTX4070. Nvidia stated that the new RTX4070's specifications other than the video memory will remain unchanged (of course, it will also continue to maintain the price of 4,799 yuan), providing similar performance to the original version in games and applications, and related products will be launched from