Chip startups struggle to raise funding as Nvidia dominates AI chips; number of deals falls 80%


According to news on September 12, a number of investors said that Nvidia's dominance in artificial intelligence (AI) chips is making it harder for its potential competitors to raise funding.

In the second quarter of this year, the number of financing transactions for chip startups in the United States dropped by 80% compared with the same period last year.

Nvidia dominates the market for chips that process massive amounts of language data. Generative AI models gradually become smarter by being exposed to more data, a process called training. As Nvidia has grown more powerful in this field, things have become tougher for the chip companies trying to compete with it. Venture capitalists view these startups as higher-risk bets and are reluctant to invest heavily. Taking a chip design to a working prototype can require more than $500 million in funding, so a pullback by investors can quickly threaten a startup's prospects.

Eclipse Ventures partner Greg Reichow said: "Nvidia has been dominant, which has opened our eyes to the difficulties of entering this market. This has led to less investment in startups in this space, or at least less investment in many of them."

Data from PitchBook shows that as of the end of August this year, US chip startups had raised $881.4 million, compared with $1.79 billion in the first three quarters of 2022. The number of transactions fell from 23 to four by the end of August. Nvidia declined to comment.

According to a report from the technology website The Register, the AI chip startup Mythic raised a total of approximately $160 million, but ran out of cash last year and was almost forced to cease operations. In March of this year, the company managed to secure new investment, albeit only $13 million. Mythic CEO Dave Fick said that Nvidia has "indirectly" worsened the financing dilemma of the AI chip industry as a whole, because investors want "home run investments with huge investments and huge returns." The difficult economic environment has also exacerbated the cyclical downturn in the semiconductor industry.

A secretive startup called Rivos has recently encountered difficulties in raising funds, according to two people familiar with the matter. Rivos' main goal is to design chips for use in data servers. A spokesman for Rivos said that Nvidia's dominance in the market has not hindered its financing efforts and that its hardware and software "continue to make our investments [...]". Rivos is currently in a legal battle with Apple, which accuses Rivos of stealing intellectual property, and the dispute has exacerbated its financing challenges.

Investors are becoming more demanding

According to sources, chip startups seeking financing are facing more demanding requirements from investors, who want products that are already on sale or can be launched within months. About two years ago, new investments in chip startups were typically in the range of $200 million to $300 million. That number has now dropped to about $100 million, according to PitchBook analyst Brendan Burke. At least two AI chip startups have succeeded in convincing investors and allaying their doubts by widely publicizing their relationships with potential customers or high-profile executives.

In August, Canadian AI chip startup Tenstorrent, led by CEO Jim Keller, raised $100 million. Keller is a near-legendary chip designer who has designed chips for Apple, AMD and Tesla.

Silicon Valley AI chip startup D-Matrix expects revenue this year to be less than $10 million, but it successfully raised $110 million in funding last week. The raise was helped by support from Microsoft and the Windows maker's commitment to test D-Matrix's new AI chips when they launch next year.

Although chip companies operating in Nvidia's shadow are having a hard time, startups in AI software and related technologies do not face the same constraints. According to PitchBook data, these startups have received about $24 billion in financing as of August this year.

Despite Nvidia’s dominance in AI computing, the company is not invulnerable in the field. AMD plans to launch a chip this year to compete with Nvidia, while Intel has made rapid progress with a competing product that it obtained through an acquisition. Sources believe that in the long term, these chips have the potential to become a replacement for Nvidia chips.

Adjacent use cases may also provide opportunities for competitors. For example, chips that perform data-intensive calculations for predictive algorithms are an emerging niche market. Nvidia doesn't dominate this space, and it's an area ripe for investment.

Advertising Statement: This article contains external jump links (including but not limited to hyperlinks, QR codes, passwords, etc.), which are designed to provide more information and save screening time. However, the linked results are for reference only, please note that all articles on this site contain this statement

The above is the detailed content of Start-up companies have difficulty financing, Nvidia dominates the field of AI chips, investment volume fell by 80%. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

Bytedance Cutting launches SVIP super membership: 499 yuan for continuous annual subscription, providing a variety of AI functions

Jun 28, 2024 am 03:51 AM

Bytedance Cutting launches SVIP super membership: 499 yuan for continuous annual subscription, providing a variety of AI functions

Jun 28, 2024 am 03:51 AM

This site reported on June 27 that Jianying is a video editing software developed by FaceMeng Technology, a subsidiary of ByteDance. It relies on the Douyin platform and basically produces short video content for users of the platform. It is compatible with iOS, Android, and Windows. , MacOS and other operating systems. Jianying officially announced the upgrade of its membership system and launched a new SVIP, which includes a variety of AI black technologies, such as intelligent translation, intelligent highlighting, intelligent packaging, digital human synthesis, etc. In terms of price, the monthly fee for clipping SVIP is 79 yuan, the annual fee is 599 yuan (note on this site: equivalent to 49.9 yuan per month), the continuous monthly subscription is 59 yuan per month, and the continuous annual subscription is 499 yuan per year (equivalent to 41.6 yuan per month) . In addition, the cut official also stated that in order to improve the user experience, those who have subscribed to the original VIP

Context-augmented AI coding assistant using Rag and Sem-Rag

Jun 10, 2024 am 11:08 AM

Context-augmented AI coding assistant using Rag and Sem-Rag

Jun 10, 2024 am 11:08 AM

Improve developer productivity, efficiency, and accuracy by incorporating retrieval-enhanced generation and semantic memory into AI coding assistants. Translated from EnhancingAICodingAssistantswithContextUsingRAGandSEM-RAG, author JanakiramMSV. While basic AI programming assistants are naturally helpful, they often fail to provide the most relevant and correct code suggestions because they rely on a general understanding of the software language and the most common patterns of writing software. The code generated by these coding assistants is suitable for solving the problems they are responsible for solving, but often does not conform to the coding standards, conventions and styles of the individual teams. This often results in suggestions that need to be modified or refined in order for the code to be accepted into the application

Can fine-tuning really allow LLM to learn new things: introducing new knowledge may make the model produce more hallucinations

Jun 11, 2024 pm 03:57 PM

Can fine-tuning really allow LLM to learn new things: introducing new knowledge may make the model produce more hallucinations

Jun 11, 2024 pm 03:57 PM

Large Language Models (LLMs) are trained on huge text databases, where they acquire large amounts of real-world knowledge. This knowledge is embedded into their parameters and can then be used when needed. The knowledge of these models is "reified" at the end of training. At the end of pre-training, the model actually stops learning. Align or fine-tune the model to learn how to leverage this knowledge and respond more naturally to user questions. But sometimes model knowledge is not enough, and although the model can access external content through RAG, it is considered beneficial to adapt the model to new domains through fine-tuning. This fine-tuning is performed using input from human annotators or other LLM creations, where the model encounters additional real-world knowledge and integrates it

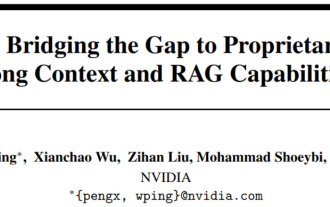

NVIDIA dialogue model ChatQA has evolved to version 2.0, with the context length mentioned at 128K

Jul 26, 2024 am 08:40 AM

NVIDIA dialogue model ChatQA has evolved to version 2.0, with the context length mentioned at 128K

Jul 26, 2024 am 08:40 AM

The open LLM community is an era when a hundred flowers bloom and compete. You can see Llama-3-70B-Instruct, QWen2-72B-Instruct, Nemotron-4-340B-Instruct, Mixtral-8x22BInstruct-v0.1 and many other excellent performers. Model. However, compared with proprietary large models represented by GPT-4-Turbo, open models still have significant gaps in many fields. In addition to general models, some open models that specialize in key areas have been developed, such as DeepSeek-Coder-V2 for programming and mathematics, and InternVL for visual-language tasks.

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

Editor |ScienceAI Question Answering (QA) data set plays a vital role in promoting natural language processing (NLP) research. High-quality QA data sets can not only be used to fine-tune models, but also effectively evaluate the capabilities of large language models (LLM), especially the ability to understand and reason about scientific knowledge. Although there are currently many scientific QA data sets covering medicine, chemistry, biology and other fields, these data sets still have some shortcomings. First, the data form is relatively simple, most of which are multiple-choice questions. They are easy to evaluate, but limit the model's answer selection range and cannot fully test the model's ability to answer scientific questions. In contrast, open-ended Q&A

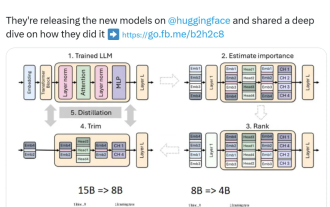

Nvidia plays with pruning and distillation: halving the parameters of Llama 3.1 8B to achieve better performance with the same size

Aug 16, 2024 pm 04:42 PM

Nvidia plays with pruning and distillation: halving the parameters of Llama 3.1 8B to achieve better performance with the same size

Aug 16, 2024 pm 04:42 PM

The rise of small models. Last month, Meta released the Llama3.1 series of models, which includes Meta’s largest model to date, the 405B model, and two smaller models with 70 billion and 8 billion parameters respectively. Llama3.1 is considered to usher in a new era of open source. However, although the new generation models are powerful in performance, they still require a large amount of computing resources when deployed. Therefore, another trend has emerged in the industry, which is to develop small language models (SLM) that perform well enough in many language tasks and are also very cheap to deploy. Recently, NVIDIA research has shown that structured weight pruning combined with knowledge distillation can gradually obtain smaller language models from an initially larger model. Turing Award Winner, Meta Chief A

Compliant with NVIDIA SFF-Ready specification, ASUS launches Prime GeForce RTX 40 series graphics cards

Jun 15, 2024 pm 04:38 PM

Compliant with NVIDIA SFF-Ready specification, ASUS launches Prime GeForce RTX 40 series graphics cards

Jun 15, 2024 pm 04:38 PM

According to news from this site on June 15, Asus has recently launched the Prime series GeForce RTX40 series "Ada" graphics card. Its size complies with Nvidia's latest SFF-Ready specification. This specification requires that the size of the graphics card does not exceed 304 mm x 151 mm x 50 mm (length x height x thickness). ). The Prime series GeForceRTX40 series launched by ASUS this time includes RTX4060Ti, RTX4070 and RTX4070SUPER, but it currently does not include RTX4070TiSUPER or RTX4080SUPER. This series of RTX40 graphics cards adopts a common circuit board design with dimensions of 269 mm x 120 mm x 50 mm. The main differences between the three graphics cards are

SOTA performance, Xiamen multi-modal protein-ligand affinity prediction AI method, combines molecular surface information for the first time

Jul 17, 2024 pm 06:37 PM

SOTA performance, Xiamen multi-modal protein-ligand affinity prediction AI method, combines molecular surface information for the first time

Jul 17, 2024 pm 06:37 PM

Editor | KX In the field of drug research and development, accurately and effectively predicting the binding affinity of proteins and ligands is crucial for drug screening and optimization. However, current studies do not take into account the important role of molecular surface information in protein-ligand interactions. Based on this, researchers from Xiamen University proposed a novel multi-modal feature extraction (MFE) framework, which for the first time combines information on protein surface, 3D structure and sequence, and uses a cross-attention mechanism to compare different modalities. feature alignment. Experimental results demonstrate that this method achieves state-of-the-art performance in predicting protein-ligand binding affinities. Furthermore, ablation studies demonstrate the effectiveness and necessity of protein surface information and multimodal feature alignment within this framework. Related research begins with "S