Technology peripherals

Technology peripherals

AI

AI

More versatile and effective, Ant's self-developed optimizer WSAM was selected into KDD Oral

More versatile and effective, Ant's self-developed optimizer WSAM was selected into KDD Oral

More versatile and effective, Ant's self-developed optimizer WSAM was selected into KDD Oral

The generalization ability of deep neural networks (DNNs) is closely related to the flatness of the extreme points, so the Sharpness-Aware Minimization (SAM) algorithm has emerged to find flatter extreme points to improve the generalization ability. . This paper re-examines the loss function of SAM and proposes a more general and effective method, WSAM, to improve the flatness of training extreme points by using flatness as a regularization term. Experiments on various public datasets show that compared with the original optimizer, SAM and its variants, WSAM achieves better generalization performance in the vast majority of cases. WSAM has also been widely adopted in Ant's internal digital payment, digital finance and other scenarios and has achieved remarkable results. This paper was accepted as an Oral Paper by KDD '23.

- ##Paper address: https: //arxiv.org/pdf/2305.15817.pdf

- Code address: https://github.com/intelligent-machine-learning/dlrover/tree/ master/atorch/atorch/optimizers

#With the development of deep learning technology, highly over-parameterized DNNs are used in various machine learning scenarios such as CV and NLP. It was a huge success. Although over-parameterized models tend to overfit the training data, they usually have good generalization capabilities. The secret of generalization has attracted more and more attention and has become a popular research topic in the field of deep learning.

The latest research shows that generalization ability is closely related to the flatness of extreme points. In other words, the presence of flat extreme points in the "landscape" of the loss function allows for smaller generalization errors. Sharpness-Aware Minimization (SAM) [1] is a technique for finding flatter extreme points and is considered to be one of the most promising technical directions currently. SAM technology is widely used in many fields such as computer vision, natural language processing, and two-layer learning, and significantly outperforms previous state-of-the-art methods in these fields

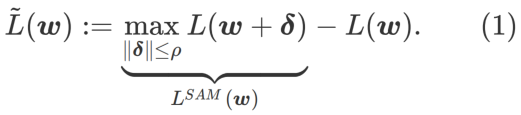

In order to explore a flatter The minimum value of , SAM defines the flatness of the loss function L at w as follows:

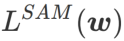

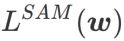

GSAM [2] proved  is an approximation of the maximum eigenvalue of the Hessian matrix at the local extreme point, indicating that is indeed an effective measure of flatness (steepness). However

is an approximation of the maximum eigenvalue of the Hessian matrix at the local extreme point, indicating that is indeed an effective measure of flatness (steepness). However  can only be used to find flatter areas rather than minimum points, which may cause the loss function to converge to a point where the loss value is still large (although the surrounding area is flat). Therefore, SAM uses

can only be used to find flatter areas rather than minimum points, which may cause the loss function to converge to a point where the loss value is still large (although the surrounding area is flat). Therefore, SAM uses

, that is,

, that is,  as the loss function. It can be seen as a compromise between finding a flatter surface and smaller loss value between and

as the loss function. It can be seen as a compromise between finding a flatter surface and smaller loss value between and

, where both are given equal weight.

, where both are given equal weight.

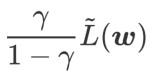

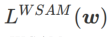

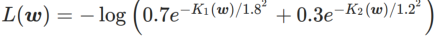

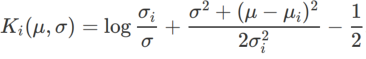

This article rethinks the construction of  and regards

and regards  as a regularization term. We have developed a more general and effective algorithm called WSAM (Weighted Sharpness-Aware Minimization), whose loss function adds a weighted flatness term

as a regularization term. We have developed a more general and effective algorithm called WSAM (Weighted Sharpness-Aware Minimization), whose loss function adds a weighted flatness term  as a regular term, in which the hyperparameter

as a regular term, in which the hyperparameter  Controls the weight of flatness. In the method introduction chapter, we demonstrated how to use

Controls the weight of flatness. In the method introduction chapter, we demonstrated how to use  to guide the loss function to find flatter or smaller extreme points. Our key contributions can be summarized as follows.

to guide the loss function to find flatter or smaller extreme points. Our key contributions can be summarized as follows.

- We propose WSAM, which treats flatness as a regularization term and gives different weights between different tasks. We propose a "weight decoupling" technique to handle the regularization term in the update formula, aiming to accurately reflect the flatness of the current step. When the underlying optimizer is not SGD, such as SGDM and Adam, WSAM differs significantly from SAM in form. Ablation experiments show that this technique improves performance in most cases.

- We verified the effectiveness of WSAM on common tasks on public datasets. Experimental results show that compared with SAM and its variants, WSAM has better generalization performance in most situations.

Preliminary knowledge

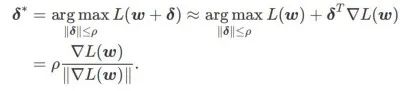

SAM is to solve the minimax optimization problem of  defined by formula (1) a technology.

defined by formula (1) a technology.

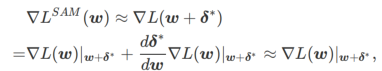

First, SAM uses the first-order Taylor expansion around w to approximate the maximization problem of the inner layer, that is, ,

##Secondly, SAM updates w by adopting the approximate gradient of , i.e.

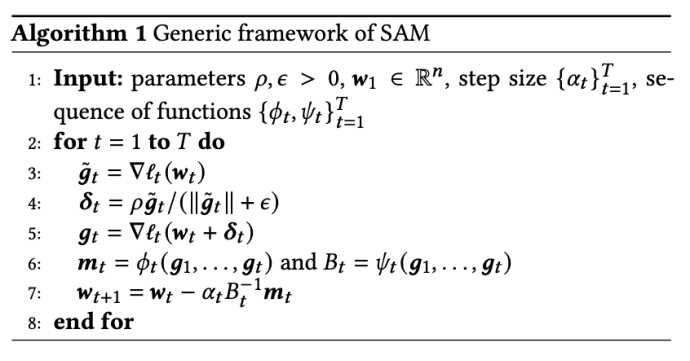

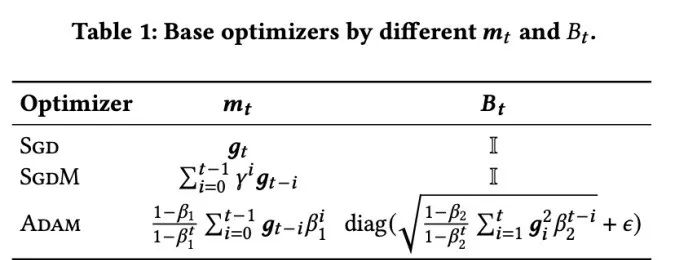

The second approximation is for acceleration calculate. Other gradient-based optimizers (called base optimizers) can be incorporated into the general framework of SAM, see Algorithm 1 for details. By changing  and

and  in Algorithm 1, we can get different basic optimizers, such as SGD, SGDM and Adam, see Tab. 1. Note that Algorithm 1 falls back to the original SAM from the SAM paper [1] when the base optimizer is SGD.

in Algorithm 1, we can get different basic optimizers, such as SGD, SGDM and Adam, see Tab. 1. Note that Algorithm 1 falls back to the original SAM from the SAM paper [1] when the base optimizer is SGD.

Design details of WSAM

Here, we give the formal definition of

, which consists of a regular loss and a flatness term. From formula (1), we have

in  . When

. When  =0,

=0,  degenerates into a regular loss; when

degenerates into a regular loss; when  =1/2,

=1/2,  is equivalent to

is equivalent to  ; When

; When  >1/2,

>1/2,  pays more attention to flatness, so it is easier to find points with smaller curvature rather than smaller loss values compared with SAM; vice versa; Likewise.

pays more attention to flatness, so it is easier to find points with smaller curvature rather than smaller loss values compared with SAM; vice versa; Likewise.

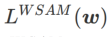

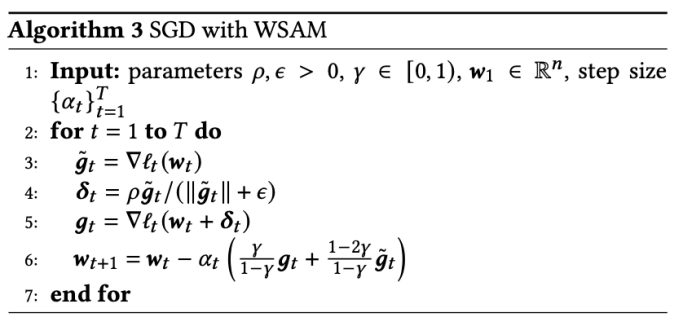

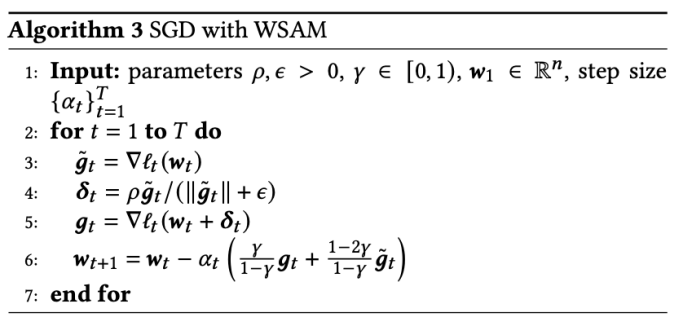

A general framework for WSAM that includes different base optimizers can be implemented by choosing different  and

and  , see Algorithm 2. For example, when

, see Algorithm 2. For example, when  and

and  , we get WSAM whose base optimizer is SGD, see Algorithm 3. Here, we adopt a "weight decoupling" technique, that is,

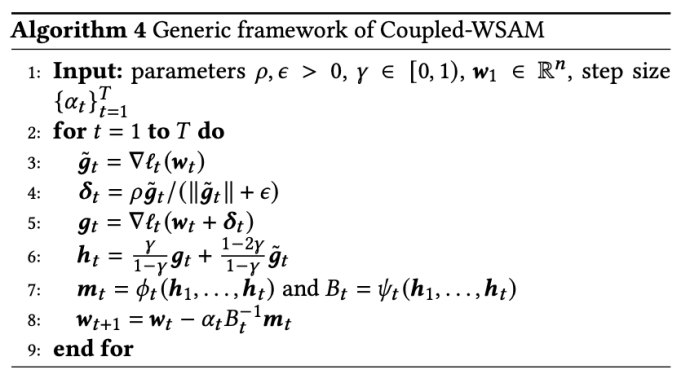

, we get WSAM whose base optimizer is SGD, see Algorithm 3. Here, we adopt a "weight decoupling" technique, that is,  the flatness term is not integrated with the base optimizer for calculating gradients and updating weights, but is calculated independently (the last term on line 7 of Algorithm 2 ). In this way, the effect of regularization only reflects the flatness of the current step without additional information. For comparison, Algorithm 4 gives a WSAM without "weight decoupling" (called Coupled-WSAM). For example, if the underlying optimizer is SGDM, the regularization term of Coupled-WSAM is an exponential moving average of flatness. As shown in the experimental section, "weight decoupling" can improve generalization performance in most cases.

the flatness term is not integrated with the base optimizer for calculating gradients and updating weights, but is calculated independently (the last term on line 7 of Algorithm 2 ). In this way, the effect of regularization only reflects the flatness of the current step without additional information. For comparison, Algorithm 4 gives a WSAM without "weight decoupling" (called Coupled-WSAM). For example, if the underlying optimizer is SGDM, the regularization term of Coupled-WSAM is an exponential moving average of flatness. As shown in the experimental section, "weight decoupling" can improve generalization performance in most cases.

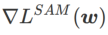

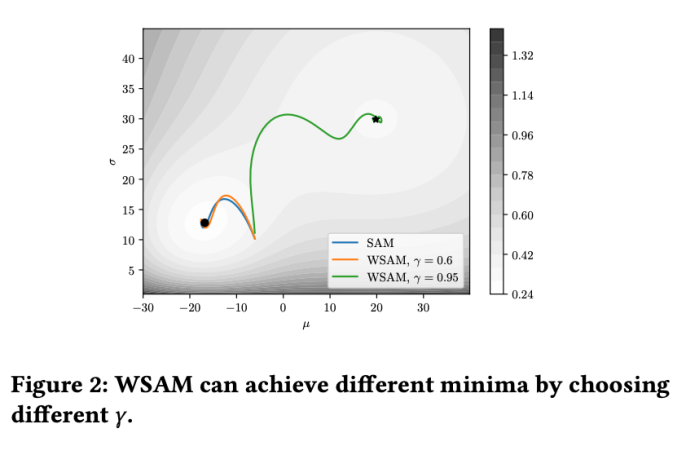

Fig. 1 shows the WSAM update process under different  values. When

values. When  ,

,  is between

is between  and

and  , and As

, and As  increases, it gradually deviates from

increases, it gradually deviates from  .

.

Simple example

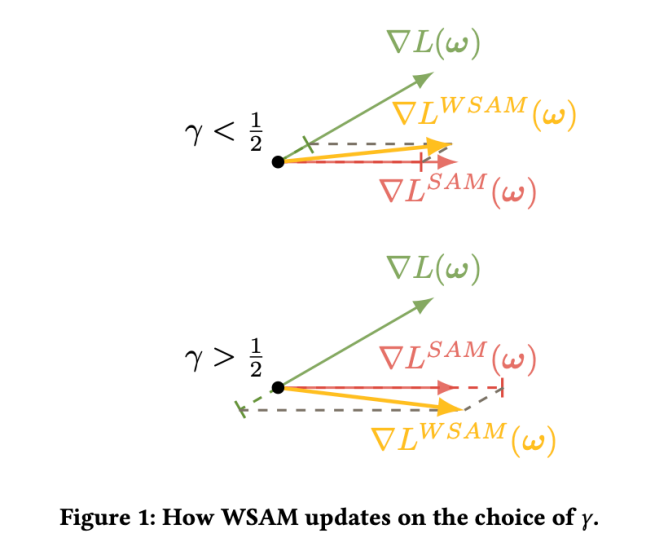

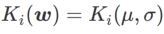

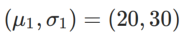

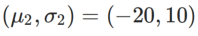

In order to better illustrate the effect and advantages of γ in WSAM, we set Here is a simple two-dimensional example. As shown in Fig. 2, the loss function has a relatively uneven extreme point in the lower left corner (position: (-16.8, 12.8), loss value: 0.28), and a flat extreme point in the upper right corner (position: (19.8, 29.9), loss value: 0.36). The loss function is defined as:  , where

, where  is the KL divergence between the univariate Gaussian model and two normal distributions, that is,

is the KL divergence between the univariate Gaussian model and two normal distributions, that is,  , where

, where  and

and  .

.

We use SGDM with a momentum of 0.9 as the base optimizer and set  =2 for SAM and WSAM. Starting from the initial point (-6, 10), the loss function is optimized in 150 steps using a learning rate of 5. SAM converges to the extreme point where the loss value is lower but more uneven, and the WSAM of

=2 for SAM and WSAM. Starting from the initial point (-6, 10), the loss function is optimized in 150 steps using a learning rate of 5. SAM converges to the extreme point where the loss value is lower but more uneven, and the WSAM of  =0.6 is similar. However,

=0.6 is similar. However,  =0.95 causes the loss function to converge to a flat extreme point, indicating that stronger flatness regularization plays a role.

=0.95 causes the loss function to converge to a flat extreme point, indicating that stronger flatness regularization plays a role.

Experiments

We conducted experiments on various tasks to verify the effectiveness of WSAM .

Image Classification

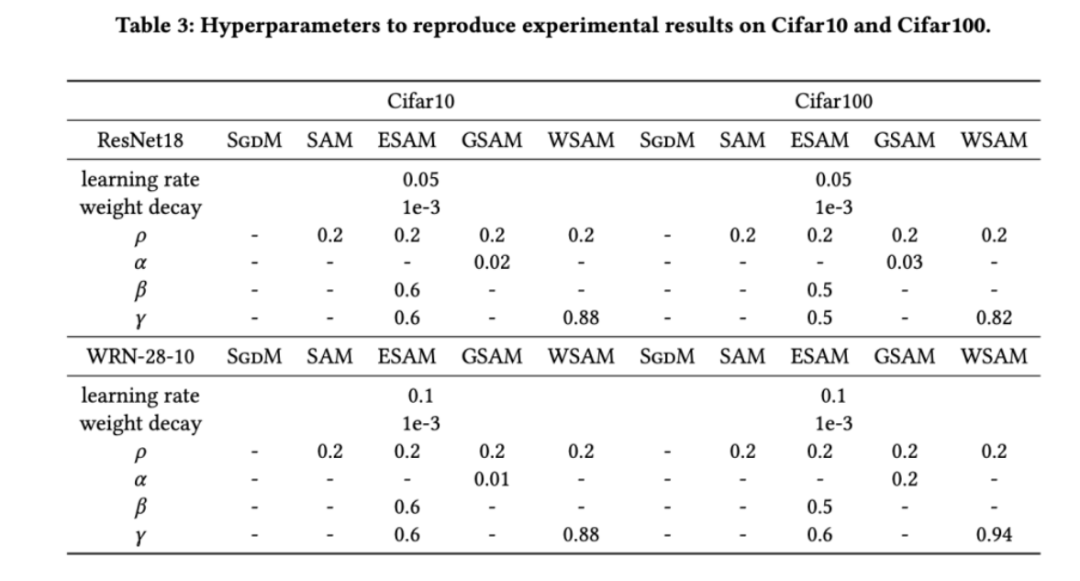

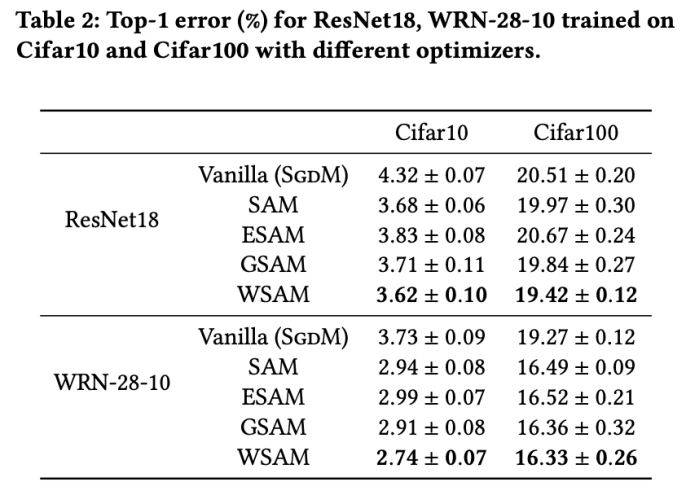

We first studied the effect of WSAM on training models from scratch on the Cifar10 and Cifar100 datasets. The models we selected include ResNet18 and WideResNet-28-10. We train models on Cifar10 and Cifar100 using predefined batch sizes of 128, 256 for ResNet18 and WideResNet-28-10 respectively. The base optimizer used here is SGDM with momentum 0.9. According to the settings of SAM [1], each basic optimizer runs twice the number of epochs as the SAM class optimizer. We trained both models for 400 epochs (200 epochs for the SAM class optimizer) and used a cosine scheduler to decay the learning rate. Here we do not use other advanced data augmentation methods such as cutout and AutoAugment.

For both models, we use joint grid search to determine the learning rate and weight decay coefficients of the base optimizer and keep them constant for the following SAM-like optimizer experiments. The search ranges of learning rate and weight decay coefficient are {0.05, 0.1} and {1e-4, 5e-4, 1e-3} respectively. Since all SAM class optimizers have a hyperparameter  (neighborhood size), we next search for the best

(neighborhood size), we next search for the best  on the SAM optimizer and use the same value for other SAMs Class optimizer. The search range of

on the SAM optimizer and use the same value for other SAMs Class optimizer. The search range of  is {0.01, 0.02, 0.05, 0.1, 0.2, 0.5}. Finally, we searched for the unique hyperparameters of other SAM class optimizers, and the search range came from the recommended range of their respective original articles. For GSAM [2], we search in the range {0.01, 0.02, 0.03, 0.1, 0.2, 0.3}. For ESAM [3], we search for

is {0.01, 0.02, 0.05, 0.1, 0.2, 0.5}. Finally, we searched for the unique hyperparameters of other SAM class optimizers, and the search range came from the recommended range of their respective original articles. For GSAM [2], we search in the range {0.01, 0.02, 0.03, 0.1, 0.2, 0.3}. For ESAM [3], we search for  in the range {0.4, 0.5, 0.6},

in the range {0.4, 0.5, 0.6},  within the range {0.4, 0.5, 0.6}, and Search

within the range {0.4, 0.5, 0.6}, and Search  within the range {0.4, 0.5, 0.6}. For WSAM, we search for

within the range {0.4, 0.5, 0.6}. For WSAM, we search for  in the range {0.5, 0.6, 0.7, 0.8, 0.82, 0.84, 0.86, 0.88, 0.9, 0.92, 0.94, 0.96}. We repeated the experiment 5 times using different random seeds and calculated the mean error and standard deviation. We conduct experiments on a single-card NVIDIA A100 GPU. Optimizer hyperparameters for each model are summarized in Tab. 3.

in the range {0.5, 0.6, 0.7, 0.8, 0.82, 0.84, 0.86, 0.88, 0.9, 0.92, 0.94, 0.96}. We repeated the experiment 5 times using different random seeds and calculated the mean error and standard deviation. We conduct experiments on a single-card NVIDIA A100 GPU. Optimizer hyperparameters for each model are summarized in Tab. 3.

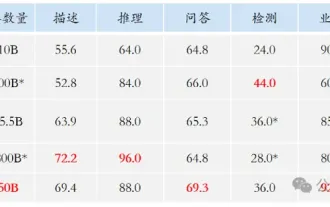

Tab. 2 shows the top-test results of ResNet18 and WRN-28-10 on Cifar10 and Cifar100 under different optimizers. 1 error rate. Compared with the basic optimizer, the SAM class optimizer significantly improves the performance. At the same time, WSAM is significantly better than other SAM class optimizers.

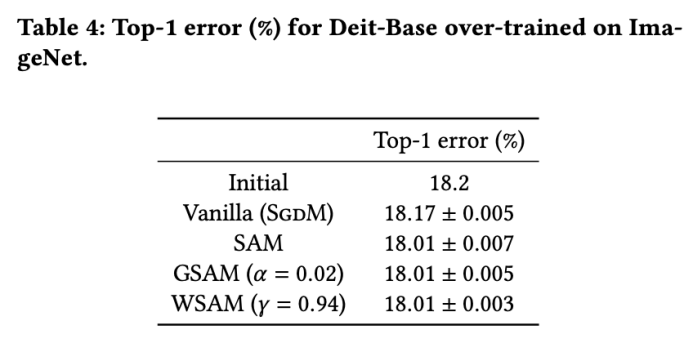

Additional training on ImageNet

We further use Data-Efficient Image on the ImageNet dataset Transformers network structure for experiments. We resume a pre-trained DeiT-base checkpoint and then continue training for three epochs. The model is trained using a batch size of 256, the base optimizer is SGDM with momentum 0.9, the weight decay coefficient is 1e-4, and the learning rate is 1e-5. We repeated the run 5 times on a four-card NVIDIA A100 GPU and calculated the average error and standard deviation

We searched for SAM in {0.05, 0.1, 0.5, 1.0,⋯ , 6.0} the best of . The optimal

. The optimal  =5.5 is used directly for other SAM class optimizers. After that, we search for the best

=5.5 is used directly for other SAM class optimizers. After that, we search for the best  of GSAM in {0.01, 0.02, 0.03, 0.1, 0.2, 0.3} and the best of WSAM between 0.80 and 0.98 with a step size of 0.02

of GSAM in {0.01, 0.02, 0.03, 0.1, 0.2, 0.3} and the best of WSAM between 0.80 and 0.98 with a step size of 0.02  .

.

The initial top-1 error rate of the model is 18.2%, and after three additional epochs, the error rate is shown in Tab. 4. We do not find significant differences between the three SAM-like optimizers, but they all outperform the base optimizer, indicating that they can find flatter extreme points and have better generalization capabilities.

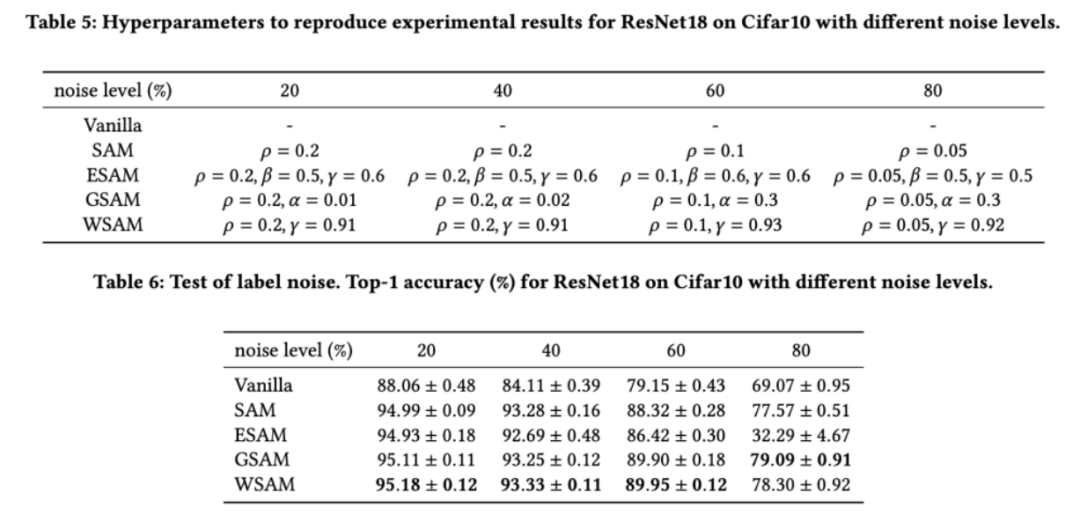

Robustness to label noise

As shown in previous studies [1, 4, 5], SAM class optimizers perform well in the presence of label noise in the training set Produces good robustness. Here, we compare the robustness of WSAM with SAM, ESAM, and GSAM. We train ResNet18 on the Cifar10 dataset for 200 epochs and inject symmetric label noise with noise levels of 20%, 40%, 60% and 80%. We use SGDM with 0.9 momentum as the base optimizer, a batch size of 128, a learning rate of 0.05, a weight decay coefficient of 1e-3, and a cosine scheduler to decay the learning rate. For each label noise level, we performed a grid search on the SAM within the range {0.01, 0.02, 0.05, 0.1, 0.2, 0.5} to determine a common  value. We then individually search for other optimizer-specific hyperparameters to find optimal generalization performance. We list the hyperparameters required to reproduce our results in Tab. 5. We present the results of the robustness test in Tab. 6. WSAM generally has better robustness than SAM, ESAM and GSAM.

value. We then individually search for other optimizer-specific hyperparameters to find optimal generalization performance. We list the hyperparameters required to reproduce our results in Tab. 5. We present the results of the robustness test in Tab. 6. WSAM generally has better robustness than SAM, ESAM and GSAM.

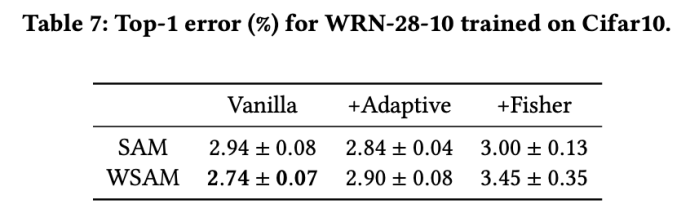

Exploring the impact of geometric structures

The SAM class optimizer can be used with ASAM [4] and Fisher Techniques such as SAM [5] are combined to adaptively adjust the shape of the explored neighborhood. We conduct experiments on WRN-28-10 on Cifar10 to compare the performance of SAM and WSAM when using adaptive and Fisher information methods, respectively, to understand how the geometry of the exploration region affects the generalization performance of SAM-like optimizers.

Except for the parameters except  and

and  , we reuse the configuration in image classification. According to previous studies [4, 5], the

, we reuse the configuration in image classification. According to previous studies [4, 5], the  of ASAM and Fisher SAM are usually larger. We search for the best

of ASAM and Fisher SAM are usually larger. We search for the best  in {0.1, 0.5, 1.0,…, 6.0}, and the best

in {0.1, 0.5, 1.0,…, 6.0}, and the best  for both ASAM and Fisher SAM is 5.0. After that, we searched for the best

for both ASAM and Fisher SAM is 5.0. After that, we searched for the best  of WSAM between 0.80 and 0.94 with a step size of 0.02, and the best

of WSAM between 0.80 and 0.94 with a step size of 0.02, and the best  of both methods was 0.88.

of both methods was 0.88.

Surprisingly, as shown in Tab. 7, the baseline WSAM shows better generalization even among multiple candidates. Therefore, we recommend directly using WSAM with a fixed  baseline.

baseline.

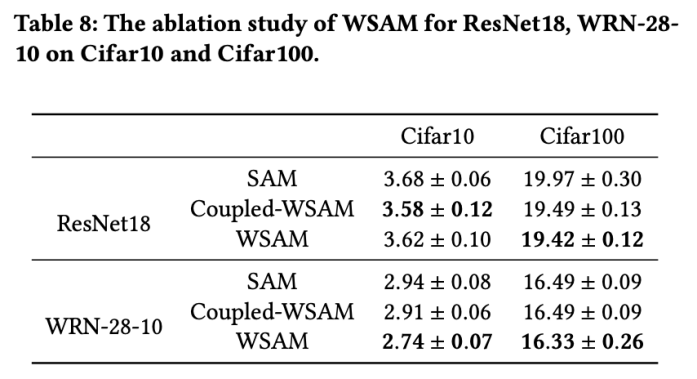

Ablation Experiment

In this section, we conduct ablation experiments to gain a deeper understanding of WSAM The importance of "weight decoupling" technology. As described in the design details of WSAM, we compare the WSAM variant without "weight decoupling" (Algorithm 4) Coupled-WSAM with the original method.

The results are shown in Tab. 8. Coupled-WSAM produces better results than SAM in most cases, and WSAM further improves the results in most cases, demonstrating the effectiveness of the "weight decoupling" technique.

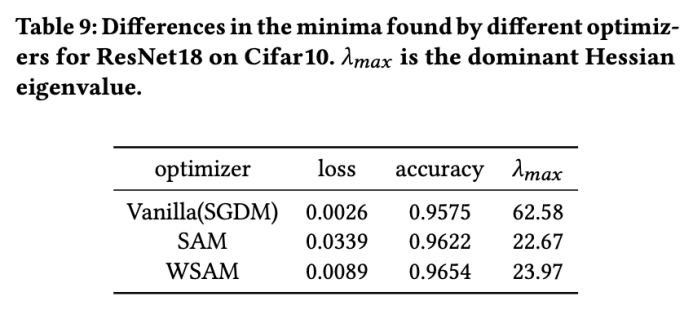

Extreme point analysis

Here, we further deepen our understanding of the WSAM optimizer by comparing the differences between the extreme points found by the WSAM and SAM optimizers. understand. The flatness (steepness) at extreme points can be described by the maximum eigenvalue of the Hessian matrix. The larger the eigenvalue, the less flat it is. We use the Power Iteration algorithm to calculate this maximum eigenvalue.

Tab. 9 shows the difference between the extreme points found by the SAM and WSAM optimizers. We find that the extreme points found by the vanilla optimizer have smaller loss values but are less flat, while the extreme points found by SAM have larger loss values but are flatter, thus improving generalization performance. Interestingly, the extreme points found by WSAM not only have much smaller loss values than SAM, but also have a flatness that is very close to SAM. This shows that in the process of finding extreme points, WSAM prioritizes ensuring smaller loss values while trying to search for flatter areas.

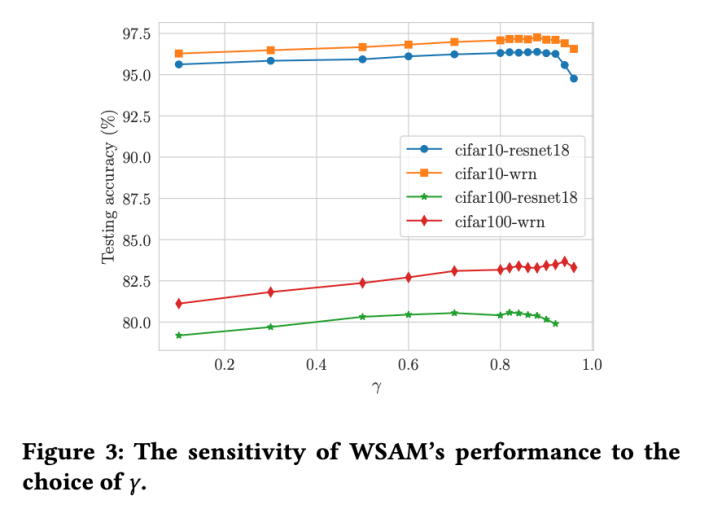

Hyperparameter sensitivity

Compared with SAM, WSAM has an additional hyperparameter , used to scale the size of the flat (steep) degree term. Here, we test the sensitivity of WSAM's generalization performance to this hyperparameter. We trained ResNet18 and WRN-28-10 models using WSAM on Cifar10 and Cifar100, using a wide range of

, used to scale the size of the flat (steep) degree term. Here, we test the sensitivity of WSAM's generalization performance to this hyperparameter. We trained ResNet18 and WRN-28-10 models using WSAM on Cifar10 and Cifar100, using a wide range of  values. As shown in Fig. 3, the results show that WSAM is not sensitive to the choice of hyperparameters. We also found that the optimal generalization performance of WSAM is almost always between 0.8 and 0.95.

values. As shown in Fig. 3, the results show that WSAM is not sensitive to the choice of hyperparameters. We also found that the optimal generalization performance of WSAM is almost always between 0.8 and 0.95.

The above is the detailed content of More versatile and effective, Ant's self-developed optimizer WSAM was selected into KDD Oral. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

Use ddrescue to recover data on Linux

Mar 20, 2024 pm 01:37 PM

Use ddrescue to recover data on Linux

Mar 20, 2024 pm 01:37 PM

DDREASE is a tool for recovering data from file or block devices such as hard drives, SSDs, RAM disks, CDs, DVDs and USB storage devices. It copies data from one block device to another, leaving corrupted data blocks behind and moving only good data blocks. ddreasue is a powerful recovery tool that is fully automated as it does not require any interference during recovery operations. Additionally, thanks to the ddasue map file, it can be stopped and resumed at any time. Other key features of DDREASE are as follows: It does not overwrite recovered data but fills the gaps in case of iterative recovery. However, it can be truncated if the tool is instructed to do so explicitly. Recover data from multiple files or blocks to a single

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

0.What does this article do? We propose DepthFM: a versatile and fast state-of-the-art generative monocular depth estimation model. In addition to traditional depth estimation tasks, DepthFM also demonstrates state-of-the-art capabilities in downstream tasks such as depth inpainting. DepthFM is efficient and can synthesize depth maps within a few inference steps. Let’s read about this work together ~ 1. Paper information title: DepthFM: FastMonocularDepthEstimationwithFlowMatching Author: MingGui, JohannesS.Fischer, UlrichPrestel, PingchuanMa, Dmytr

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

The performance of JAX, promoted by Google, has surpassed that of Pytorch and TensorFlow in recent benchmark tests, ranking first in 7 indicators. And the test was not done on the TPU with the best JAX performance. Although among developers, Pytorch is still more popular than Tensorflow. But in the future, perhaps more large models will be trained and run based on the JAX platform. Models Recently, the Keras team benchmarked three backends (TensorFlow, JAX, PyTorch) with the native PyTorch implementation and Keras2 with TensorFlow. First, they select a set of mainstream

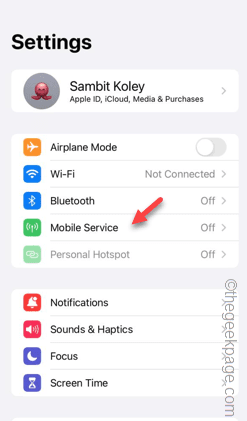

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Facing lag, slow mobile data connection on iPhone? Typically, the strength of cellular internet on your phone depends on several factors such as region, cellular network type, roaming type, etc. There are some things you can do to get a faster, more reliable cellular Internet connection. Fix 1 – Force Restart iPhone Sometimes, force restarting your device just resets a lot of things, including the cellular connection. Step 1 – Just press the volume up key once and release. Next, press the Volume Down key and release it again. Step 2 – The next part of the process is to hold the button on the right side. Let the iPhone finish restarting. Enable cellular data and check network speed. Check again Fix 2 – Change data mode While 5G offers better network speeds, it works better when the signal is weaker

Kuaishou version of Sora 'Ke Ling' is open for testing: generates over 120s video, understands physics better, and can accurately model complex movements

Jun 11, 2024 am 09:51 AM

Kuaishou version of Sora 'Ke Ling' is open for testing: generates over 120s video, understands physics better, and can accurately model complex movements

Jun 11, 2024 am 09:51 AM

What? Is Zootopia brought into reality by domestic AI? Exposed together with the video is a new large-scale domestic video generation model called "Keling". Sora uses a similar technical route and combines a number of self-developed technological innovations to produce videos that not only have large and reasonable movements, but also simulate the characteristics of the physical world and have strong conceptual combination capabilities and imagination. According to the data, Keling supports the generation of ultra-long videos of up to 2 minutes at 30fps, with resolutions up to 1080p, and supports multiple aspect ratios. Another important point is that Keling is not a demo or video result demonstration released by the laboratory, but a product-level application launched by Kuaishou, a leading player in the short video field. Moreover, the main focus is to be pragmatic, not to write blank checks, and to go online as soon as it is released. The large model of Ke Ling is already available in Kuaiying.

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

I cry to death. The world is madly building big models. The data on the Internet is not enough. It is not enough at all. The training model looks like "The Hunger Games", and AI researchers around the world are worrying about how to feed these data voracious eaters. This problem is particularly prominent in multi-modal tasks. At a time when nothing could be done, a start-up team from the Department of Renmin University of China used its own new model to become the first in China to make "model-generated data feed itself" a reality. Moreover, it is a two-pronged approach on the understanding side and the generation side. Both sides can generate high-quality, multi-modal new data and provide data feedback to the model itself. What is a model? Awaker 1.0, a large multi-modal model that just appeared on the Zhongguancun Forum. Who is the team? Sophon engine. Founded by Gao Yizhao, a doctoral student at Renmin University’s Hillhouse School of Artificial Intelligence.

The U.S. Air Force showcases its first AI fighter jet with high profile! The minister personally conducted the test drive without interfering during the whole process, and 100,000 lines of code were tested for 21 times.

May 07, 2024 pm 05:00 PM

The U.S. Air Force showcases its first AI fighter jet with high profile! The minister personally conducted the test drive without interfering during the whole process, and 100,000 lines of code were tested for 21 times.

May 07, 2024 pm 05:00 PM

Recently, the military circle has been overwhelmed by the news: US military fighter jets can now complete fully automatic air combat using AI. Yes, just recently, the US military’s AI fighter jet was made public for the first time and the mystery was unveiled. The full name of this fighter is the Variable Stability Simulator Test Aircraft (VISTA). It was personally flown by the Secretary of the US Air Force to simulate a one-on-one air battle. On May 2, U.S. Air Force Secretary Frank Kendall took off in an X-62AVISTA at Edwards Air Force Base. Note that during the one-hour flight, all flight actions were completed autonomously by AI! Kendall said - "For the past few decades, we have been thinking about the unlimited potential of autonomous air-to-air combat, but it has always seemed out of reach." However now,