How to transform cybersecurity from reactive to proactive: The role of deep learning

Deep learning (DL) is an advanced subset of machine learning (ML) and is behind some of the most innovative and complex technologies today. We can witness the rapid development of artificial intelligence, machine learning and deep learning in almost every industry and experience benefits that were thought to be impossible just a few short years ago.

Deep learning has greatly advanced the complexity that machine learning can handle. Unlike conventional machine learning, which may require human intervention to adjust the output layer if the results are wrong or unsatisfactory, deep learning can keep learning and improving its accuracy without human intervention. Multi-layered deep learning models can achieve surprising levels of accuracy and performance.

The rise of deep learning models

For years, researchers have been developing complex artificial intelligence algorithms to achieve more advanced capabilities. Research that closely mimics biological brains has produced more complex mathematical methods, resulting in artificial neural networks (ANNs). Simply put, an ANN is composed of many nodes (or neurons) that, much like those in the human brain, pass information to one another and process it across the network. In other words, it has the ability to learn and adapt.

Development of this technology has been slow because of its requirements. It depends on three elements: large amounts of data, more advanced algorithms, and vastly increased processing power. That processing power comes in the form of the graphics processing unit (GPU). A GPU is a computer chip that can significantly accelerate deep learning computation and is a core component of artificial intelligence infrastructure. It can perform many computations simultaneously, speed up model training, and handle large amounts of data with ease. Powerful GPUs combined with cloud computing can reduce the time required to train deep models from weeks to hours.

Disadvantages of GPU performance

The power consumption of GPUs used for such high-performance computing is staggering, and it is expensive. Training a single final version of some models may require more power than 80 homes use in a year.

In addition, large-scale data storage centers around the world have a serious impact on the environment through their energy and water consumption and greenhouse gas emissions. Part of the solution is to improve data quality through deep learning rather than relying solely on large amounts of data. As artificial intelligence continues to develop, sustainability plans must become a globally shared platform.

The more layers, the deeper we dive

For humans, the deeper we delve into a topic and the more research data and empirical examples we have, the more practical and comprehensive a knowledge base we can build. Artificial neural networks are composed of three types of layers. The first, the input layer, provides the network with an initial pool of data. The last is the output layer, which generates the results for a given input. Between these two are the all-important hidden layers. These middle layers are where the computation is performed.

A network with at least three layers qualifies as deep learning, but the more layers there are, the deeper the learning that informs the output layer. Each layer performs a different function on the data as it flows through the layers in a specific order. With each additional layer, more details and features can be progressively extracted from the data set. Ultimately, the network's output layer states predictions and conclusions about potential outcomes.
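The flow of data from the input layer through the hidden layers to the output layer can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the layer sizes, the random (untrained) weights, the choice of ReLU and sigmoid activations, and the input values are all hypothetical and do not come from any particular security product.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """Create one fully connected layer: a weight matrix and a bias vector."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def relu(x):
    """Hidden-layer activation: pass positive values, clamp negatives to zero."""
    return max(0.0, x)

def sigmoid(x):
    """Output activation: squash any value into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def forward(layers, inputs):
    """Pass the inputs through each layer in order and return the output activations."""
    activations = inputs
    for index, (weights, biases) in enumerate(layers):
        is_output = index == len(layers) - 1
        activate = sigmoid if is_output else relu
        activations = [
            activate(sum(w * a for w, a in zip(row, activations)) + b)
            for row, b in zip(weights, biases)
        ]
    return activations

rng = random.Random(0)  # fixed seed so the sketch is repeatable
# Assumed shape: 4 input features -> hidden layers of 8 and 4 nodes -> 1 output
sizes = [4, 8, 4, 1]
network = [make_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]

features = [0.2, 0.7, 0.1, 0.9]  # hypothetical, already-normalized input features
score = forward(network, features)[0]
print(f"output score: {score:.3f}")
```

Because the weights are random, the score carries no meaning here. In a real system, training would adjust the weights so that the output reflects, for example, how likely an event is to be malicious.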

The importance of deep learning for accuracy and prevention

AI automation and deep learning models are key elements in the fight against cybercrime, and they provide important protection against the escalation of ransomware. Deep learning models can identify and predict suspicious behavior and understand the characteristics of potential attacks, preventing any payload from executing or any data from being encrypted.

Compared with systems based on conventional machine learning, intrusion detection and prevention systems built on artificial neural networks are smarter, more accurate, and have significantly lower false alarm rates. Rather than relying on attack signatures or memorizing lists of known common attack sequences, artificial neural networks continuously learn and update to identify any system activity that indicates malicious behavior or the presence of malware.

Cybersecurity teams have always viewed external attacks as a primary concern, but malicious internal activity is on the rise. According to Ponemon's 2022 Cost of Insider Threats: Global Report, insider threat incidents have increased by 44% over the past two years, and the cost per incident has increased by more than a third to $15.38 million.

Security teams are increasingly leveraging User and Entity Behavior Analytics (UEBA) to thwart insider threats. Deep learning models can analyze and learn normal employee behavior patterns over time and detect when anomalies arise. For example, they can detect out-of-hours system access or a data breach and send alerts.

There is a huge difference between reactive and proactive approaches. A reactive approach protects against threats after they have entered the network to exploit systems and steal data. Through deep learning, vulnerabilities and malicious activity can be identified and eliminated before they are exploited, achieving the goal of proactively preventing and eliminating threats.
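The idea of learning a user's normal behavior and alerting on deviations can be illustrated with a very small sketch. Note the substitution: this uses a simple statistical baseline (mean and standard deviation of login hours) as a stand-in for a deep learning model, and the login history, the threshold of 3.0, and the function names are all hypothetical.

```python
from statistics import mean, stdev

def build_baseline(login_hours):
    """Summarize a user's historical login hours as (mean, standard deviation)."""
    return mean(login_hours), stdev(login_hours)

def is_anomalous(hour, baseline, threshold=3.0):
    """Flag a login whose hour lies more than `threshold` deviations from the mean."""
    avg, spread = baseline
    if spread == 0:
        # No variation in the history: anything other than the usual hour is unusual
        return hour != avg
    return abs(hour - avg) / spread > threshold

# Hypothetical history: one employee's login hours (24-hour clock) on ten working days
history = [9, 9, 8, 10, 9, 9, 8, 9, 10, 9]
baseline = build_baseline(history)

for hour in (9, 3):
    status = "ALERT" if is_anomalous(hour, baseline) else "ok"
    print(f"login at {hour:02d}:00 -> {status}")
```

A login at 09:00 matches the baseline, while a login at 03:00 falls far outside it and triggers an alert. The sketch treats hours as plain numbers, so it does not handle patterns that wrap around midnight. A production UEBA system would model many more signals (devices, locations, data volumes) and would typically learn them with far richer models.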

While automated, multi-layered deep learning cybersecurity solutions have greatly improved security defenses, the same technology can be exploited by both sides of the fight against cybercrime.

Escalating AI innovations require protective legislation

In the field of cybersecurity, the development of artificial intelligence solutions such as deep learning to combat sophisticated cyber enemies has outpaced the ability of regulatory agencies to limit and control it. At the same time, enterprise defenses may themselves be exploited and manipulated by malicious attackers.

The consequences of uncontrolled artificial intelligence technology in the future may be devastating on a global scale. If our technology gets out of hand, without legislation to maintain order, human rights and international security, this could become an escalating battleground between good and evil.

The ultimate goal of cybersecurity is to move beyond passive detection and response to proactive protection and threat elimination. Automation and multi-layered deep learning are key steps in this direction. Our challenge is to maintain reasonable control and stay one step ahead of our cyber enemies.

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

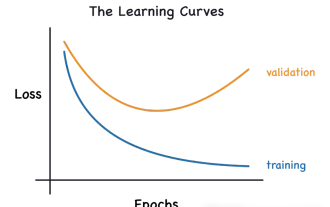

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

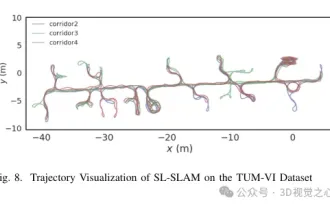

Beyond ORB-SLAM3! SL-SLAM: Low light, severe jitter and weak texture scenes are all handled

May 30, 2024 am 09:35 AM

Beyond ORB-SLAM3! SL-SLAM: Low light, severe jitter and weak texture scenes are all handled

May 30, 2024 am 09:35 AM

Written previously, today we discuss how deep learning technology can improve the performance of vision-based SLAM (simultaneous localization and mapping) in complex environments. By combining deep feature extraction and depth matching methods, here we introduce a versatile hybrid visual SLAM system designed to improve adaptation in challenging scenarios such as low-light conditions, dynamic lighting, weakly textured areas, and severe jitter. sex. Our system supports multiple modes, including extended monocular, stereo, monocular-inertial, and stereo-inertial configurations. In addition, it also analyzes how to combine visual SLAM with deep learning methods to inspire other research. Through extensive experiments on public datasets and self-sampled data, we demonstrate the superiority of SL-SLAM in terms of positioning accuracy and tracking robustness.

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Machine learning is an important branch of artificial intelligence that gives computers the ability to learn from data and improve their capabilities without being explicitly programmed. Machine learning has a wide range of applications in various fields, from image recognition and natural language processing to recommendation systems and fraud detection, and it is changing the way we live. There are many different methods and theories in the field of machine learning, among which the five most influential methods are called the "Five Schools of Machine Learning". The five major schools are the symbolic school, the connectionist school, the evolutionary school, the Bayesian school and the analogy school. 1. Symbolism, also known as symbolism, emphasizes the use of symbols for logical reasoning and expression of knowledge. This school of thought believes that learning is a process of reverse deduction, through existing

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,