Treat the LLM as an operating system with unlimited 'virtual' context: Berkeley's new work has earned 1.7k stars

In recent years, large language models (LLMs) and their underlying transformer architecture have become the cornerstone of conversational AI and have spawned a wide range of consumer and enterprise applications. Despite considerable progress, the fixed-length context window used by LLMs greatly limits their applicability to long conversations and long-document reasoning. Even the most widely used open-source LLMs have a maximum input length that supports only a few dozen message exchanges or reasoning over short documents.

At the same time, because of the self-attention mechanism in the transformer architecture, simply extending the context length causes computation time and memory cost to grow quadratically, which makes the design of new long-context architectures a pressing research topic.

However, even if we can overcome the computational challenges of context scaling, recent research has shown that long-context models struggle to effectively utilize the additional context.

How can this be solved? Given the massive resources required to train SOTA LLMs and the apparent diminishing returns of context scaling, alternative techniques for supporting long contexts are urgently needed. Researchers at the University of California, Berkeley, have made new progress in this direction.

In this paper, the researchers explore how to provide the illusion of infinite context while continuing to use a fixed-context model. Their approach borrows ideas from virtual memory paging, which lets applications process data sets that far exceed available memory.

Based on this idea, the researchers took advantage of recent advances in the function-calling capabilities of LLM agents to design MemGPT, an OS-inspired LLM system for virtual context management.

Paper homepage: https://memgpt.ai/

arXiv address: https://arxiv.org/pdf/2310.08560.pdf

The project has been open sourced and has gained 1.7k stars on GitHub.

GitHub address: https://github.com/cpacker/MemGPT

Method Overview

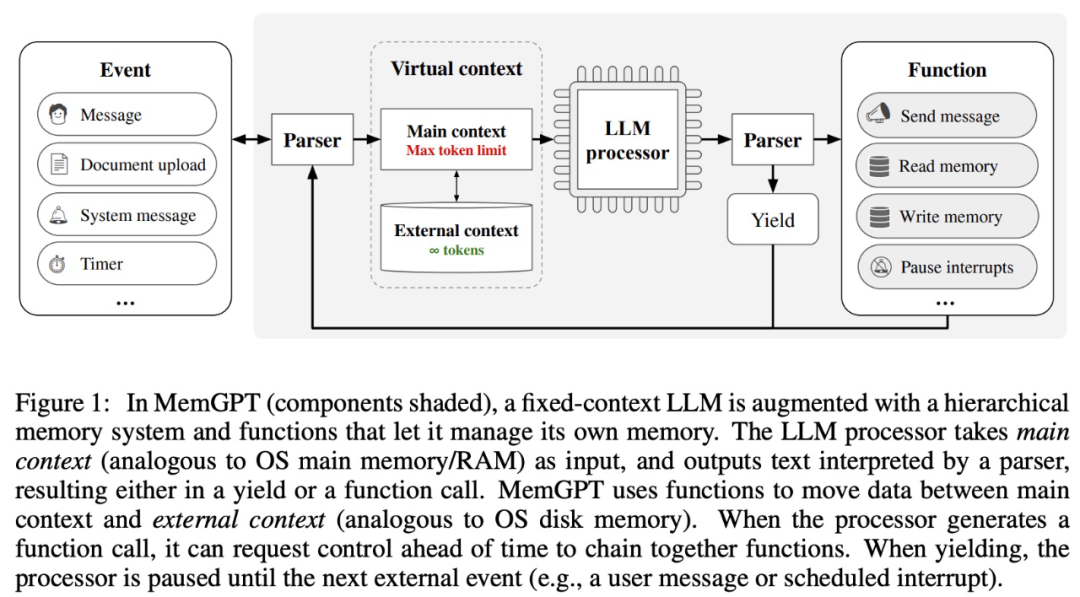

The research draws inspiration from the hierarchical memory management of traditional operating systems to efficiently "page" information in and out between the context window (analogous to "main memory" in an operating system) and external storage. MemGPT manages the control flow between memory management, the LLM processing module, and the user. This design allows the context to be modified repeatedly during a single task, so the agent can use its limited context window more effectively.

MemGPT treats the context window as a constrained memory resource and designs a memory hierarchy for LLMs similar to the one used in traditional operating systems (Patterson et al., 1988). To provide a longer effective context, the approach lets the LLM manage what is placed in its own context window through MemGPT, the "LLM OS". MemGPT enables the LLM to retrieve relevant historical data that is missing from the context, similar to handling a page fault in an operating system. In addition, the agent can iteratively modify the contents of its context window within a single task, just as a process can repeatedly access virtual memory.
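The paging analogy can be made concrete with a small sketch. The Python below is a minimal illustration and not MemGPT's actual implementation: the class name `VirtualContext`, the whitespace word count standing in for a tokenizer, the FIFO eviction policy, and the substring search are all assumptions chosen for brevity.

```python
from collections import deque


class VirtualContext:
    """Toy fixed-budget context window backed by unbounded external storage."""

    def __init__(self, max_tokens):
        self.max_tokens = max_tokens   # size of the "main memory" (context window)
        self.main = deque()            # messages currently in the context window
        self.external = []             # evicted messages (the "disk")

    @staticmethod
    def count_tokens(text):
        # Assumption: whitespace-separated words stand in for real tokens.
        return len(text.split())

    def used(self):
        return sum(self.count_tokens(m) for m in self.main)

    def append(self, message):
        """Add a message, paging the oldest ones out until the budget holds."""
        self.main.append(message)
        while self.used() > self.max_tokens and len(self.main) > 1:
            self.external.append(self.main.popleft())

    def page_in(self, query):
        """Search external storage and bring matching messages back in."""
        hits = [m for m in self.external if query.lower() in m.lower()]
        for m in hits:
            self.external.remove(m)
            self.append(m)
        return hits
```

Here `page_in` plays the role of the page-fault handler: when something the agent needs is no longer in the window, it is fetched from external storage, and older content is evicted to make room.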

MemGPT enables an LLM to handle unbounded context despite a limited context window. The components of MemGPT are shown in Figure 1 below.

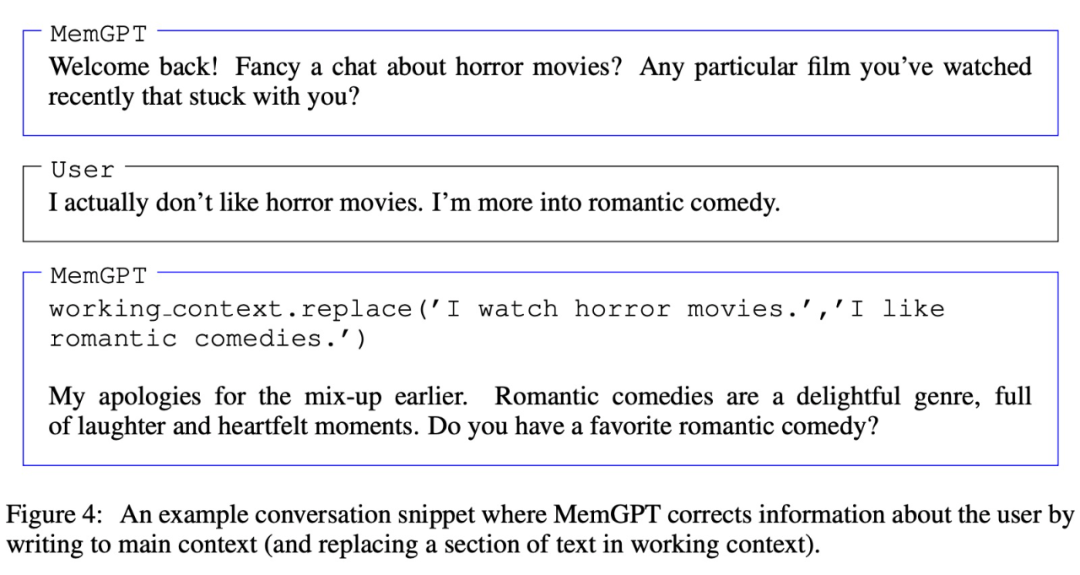

MemGPT coordinates the movement of data between the main context (the content in the context window) and the external context through function calls. It decides autonomously when to update and search its memory based on the current context.
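As a rough sketch of how function calls can drive this data movement, the following Python parses a JSON function call produced by the model and routes it to a memory operation. The function names (`save_external`, `search_external`, `load_into_main`) and the JSON shape are hypothetical and chosen for illustration; they are not taken from the MemGPT codebase.

```python
import json


class MemoryStore:
    def __init__(self):
        self.main_context = []      # text the LLM sees on every step
        self.external_context = []  # text held outside the context window

    def save_external(self, text):
        self.external_context.append(text)
        return "saved"

    def search_external(self, query):
        return [t for t in self.external_context if query.lower() in t.lower()]

    def load_into_main(self, text):
        self.main_context.append(text)
        return "loaded"


def dispatch(store, llm_output):
    """Parse a JSON function call emitted by the model and execute it."""
    call = json.loads(llm_output)
    handlers = {
        "save_external": store.save_external,
        "search_external": store.search_external,
        "load_into_main": store.load_into_main,
    }
    name = call["name"]
    if name not in handlers:
        # Report the error as a result so the model could correct itself.
        return {"status": "error", "result": f"unknown function: {name}"}
    result = handlers[name](**call["arguments"])
    return {"status": "ok", "result": result}
```

In a full agent loop, the returned dictionary would be appended to the context so that the model can see the outcome of its own call on the next step.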

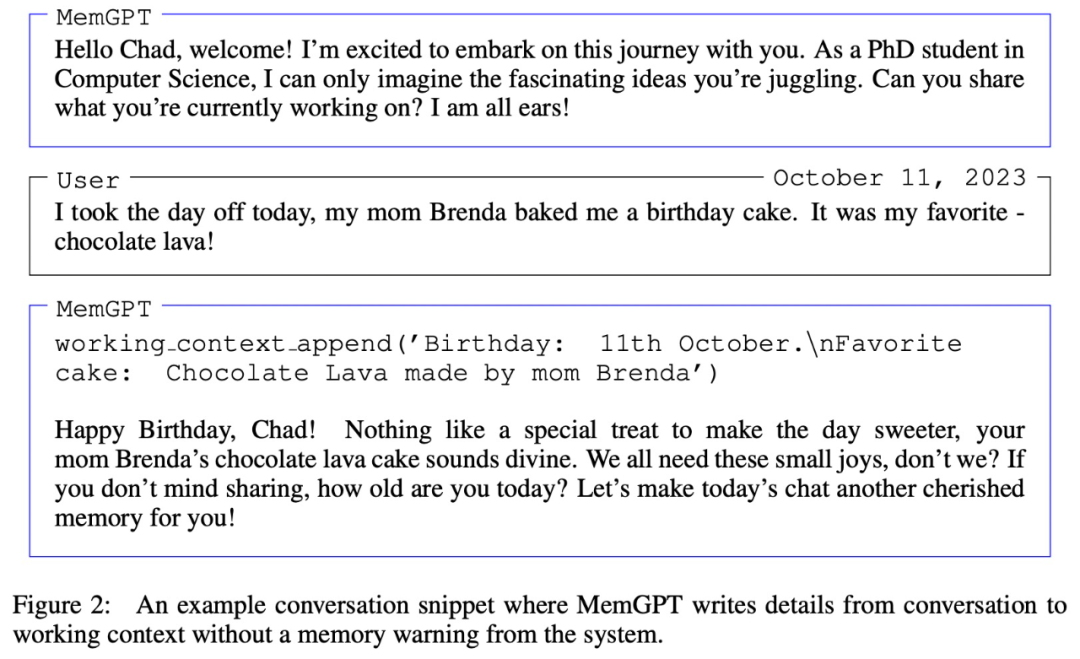

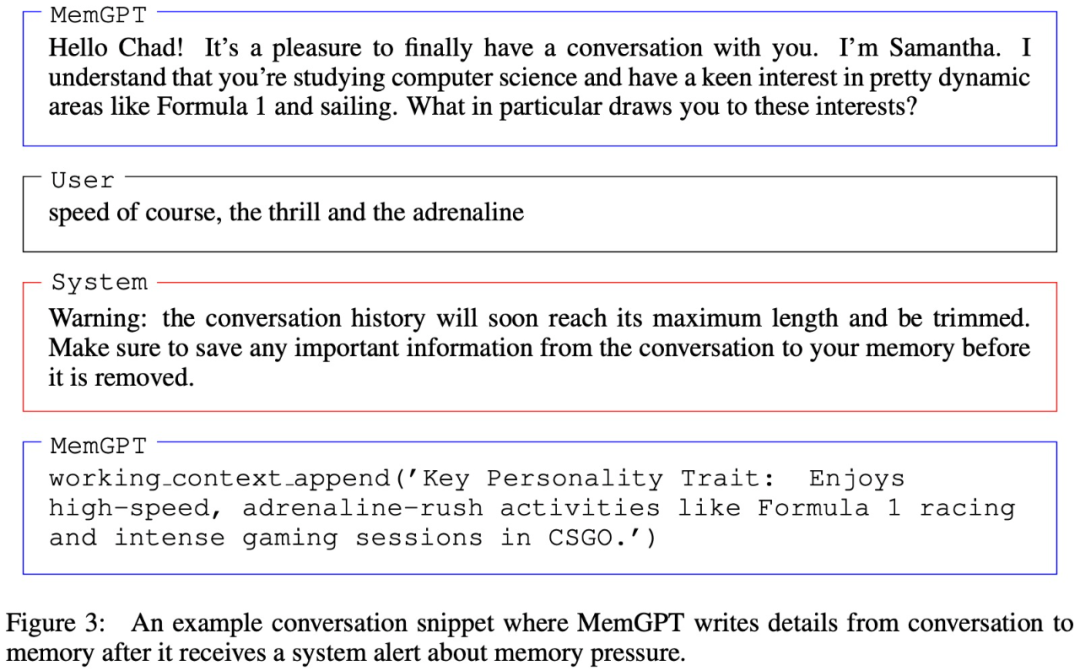

It is worth noting that the system uses a warning message to tell the LLM when its context window is approaching its limit, as shown in Figure 3.
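One way to picture this warning mechanism is a check that runs before each LLM call and injects a system message once token usage crosses a threshold. The sketch below is an assumption-laden illustration: the function name, the message wording, and the default `warn_fraction` of 0.7 are hypothetical and not taken from the article.

```python
def memory_pressure_message(used_tokens, window_tokens, warn_fraction=0.7):
    """Return a system warning once usage crosses the warning threshold.

    warn_fraction is an assumed value chosen for illustration.
    """
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    if used_tokens >= warn_fraction * window_tokens:
        return (
            f"SYSTEM WARNING: context is {used_tokens}/{window_tokens} tokens; "
            "save important information to external storage now."
        )
    return None
```

Returning `None` below the threshold lets the caller skip the injection entirely, so the warning only consumes context space when it is actually needed.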

Experiments and results

In the experiments, the researchers evaluated MemGPT in two long-context domains: conversational agents and document processing. For conversational agents, they extended the existing multi-session chat dataset (Xu et al., 2021) and introduced two new conversation tasks to evaluate the agent's ability to retain knowledge across long conversations. For document analysis, they benchmarked MemGPT on tasks proposed by Liu et al. (2023a), including question answering and key-value retrieval over long documents.

MemGPT for Conversational Agents

When talking to the user, the agent must meet the following two key criteria.

The first is consistency: the agent should maintain the continuity of the conversation, and any new facts, references, and events it mentions should be consistent with earlier statements by both the user and the agent.

The second is engagement: the agent should draw on its long-term knowledge of the user to personalize its responses. Referring to previous conversations makes the dialogue more natural and engaging.

Therefore, the researchers evaluated MemGPT based on these two criteria:

Can MemGPT leverage its memory to improve conversational consistency? Can it remember relevant facts, quotes, and events from past interactions to maintain coherence?

Can MemGPT use its memory to generate more engaging conversations? Does it spontaneously incorporate long-range user information to personalize its messages?

For the dataset, the researchers evaluated and compared MemGPT and fixed-context baseline models on the multi-session chat (MSC) dataset proposed by Xu et al. (2021).

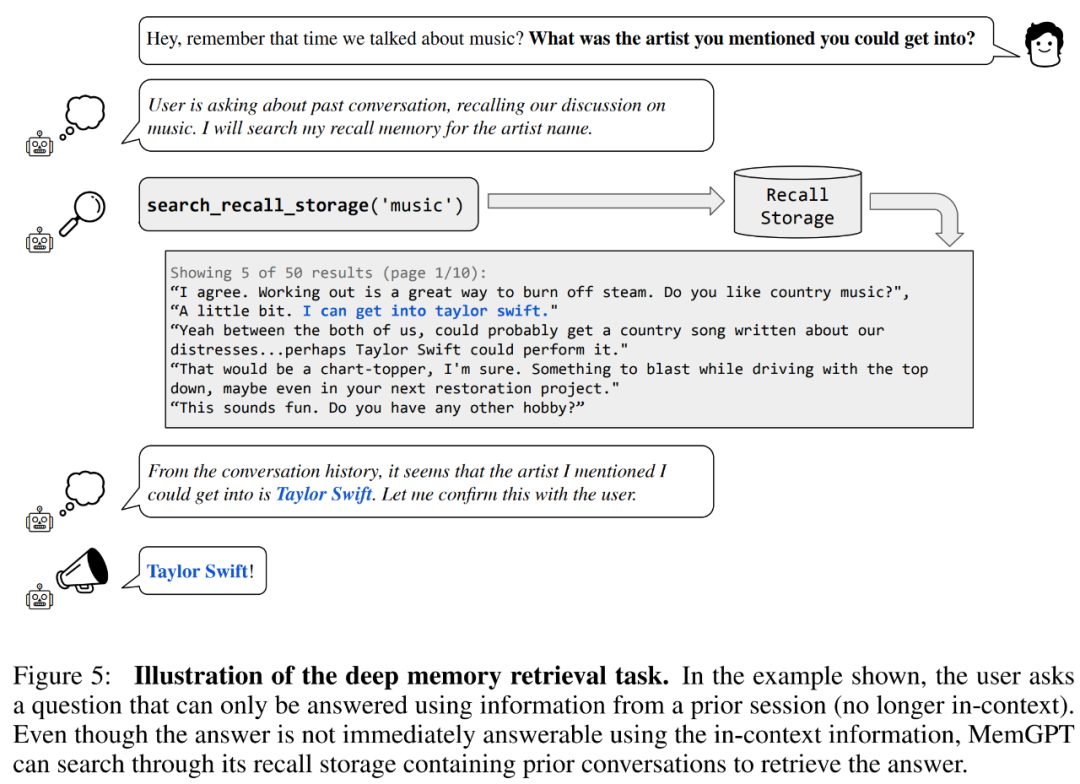

First, the consistency evaluation. The researchers introduced a deep memory retrieval (DMR) task based on the MSC dataset to test the consistency of the conversational agent. In DMR, the user asks the agent a question that explicitly refers to a previous conversation and whose expected answer range is very narrow. For details, see the example in Figure 5 below.

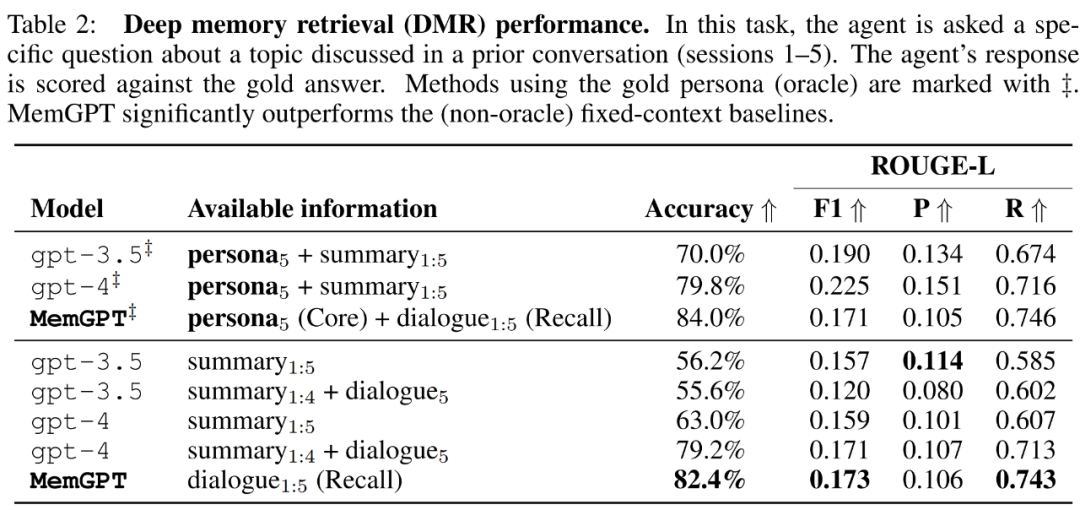

MemGPT utilizes memory to maintain consistency. Table 2 below shows the performance comparison of MemGPT against fixed memory baseline models, including GPT-3.5 and GPT-4.

MemGPT significantly outperforms GPT-3.5 and GPT-4 on both LLM-judged accuracy and ROUGE-L score. Rather than relying on recursive summarization to extend its context, MemGPT can query past conversation history through Recall Memory to answer DMR questions.
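For readers unfamiliar with ROUGE-L, it scores a candidate answer by the longest common subsequence (LCS) it shares with a reference answer. The following is a minimal word-level sketch; the lowercase whitespace tokenization is an assumption, and the exact ROUGE-L variant and preprocessing used in the paper may differ.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(reference, candidate):
    """Return (precision, recall, f1) of ROUGE-L on lowercase word tokens."""
    ref = reference.lower().split()
    cand = candidate.lower().split()
    if not ref or not cand:
        return 0.0, 0.0, 0.0
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0, 0.0, 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1
```

Recall is the natural component to watch for a task like DMR, where a verbose but correct answer still contains the short reference answer in full.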

Next, in the "conversation opener" task, the researchers assessed the agent's ability to craft engaging messages for the user from the knowledge accumulated in previous conversations.

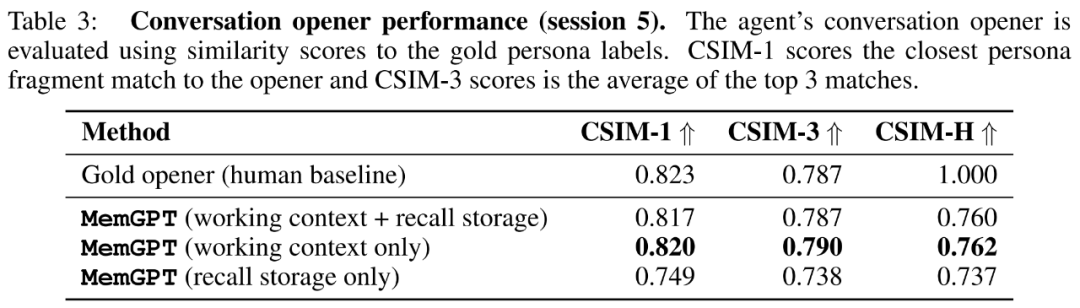

The researchers report the CSIM scores of MemGPT’s openers in Table 3 below. The results show that MemGPT produces engaging openers that perform as well as or better than human-written ones. MemGPT also tends to produce openers that are longer and cover more persona information than the human baseline. Figure 6 below shows an example.

MemGPT for document analysis

To evaluate MemGPT’s ability to analyze documents, the researchers benchmarked MemGPT and fixed-context baseline models on the retriever-reader document QA task from Liu et al. (2023a).

The results show that MemGPT can efficiently make multiple calls to the retriever by querying archival storage, allowing it to scale to larger effective context lengths. MemGPT actively retrieves documents from archival storage and can iteratively page through the results, so the total number of documents available to it is no longer limited by how many documents fit in the LLM processor's context window.
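The iterative paging described above can be sketched as a loop that requests one page of retriever results at a time and stops as soon as a document answers the question. Everything here is illustrative: `archival_search` and `answer_by_paging` are hypothetical names, word overlap stands in for embedding similarity, and the `contains_answer` callback stands in for the LLM's judgment.

```python
def archival_search(documents, query, page, page_size=2):
    """Return one page of documents ranked by word overlap with the query.

    Word overlap is an assumed stand-in for embedding similarity.
    """
    q = set(query.lower().split())
    ranked = sorted(
        documents,
        key=lambda d: len(q & set(d.lower().split())),
        reverse=True,
    )
    start = page * page_size
    return ranked[start:start + page_size]


def answer_by_paging(documents, query, contains_answer, max_pages=10):
    """Page through results until a document satisfies contains_answer.

    Returns (document or None, number of non-empty pages read).
    """
    for page in range(max_pages):
        results = archival_search(documents, query, page)
        if not results:          # retriever database exhausted
            return None, page
        for doc in results:
            if contains_answer(doc):
                return doc, page + 1
    return None, max_pages
```

Because only one page is held at a time, the number of documents the agent can consult depends on how many pages it is willing to read, not on the size of the context window.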

Because of the limitations of embedding-based similarity search, the document QA task is challenging for all methods. The researchers observed that MemGPT often stops paging through retriever results before the retriever database is exhausted.

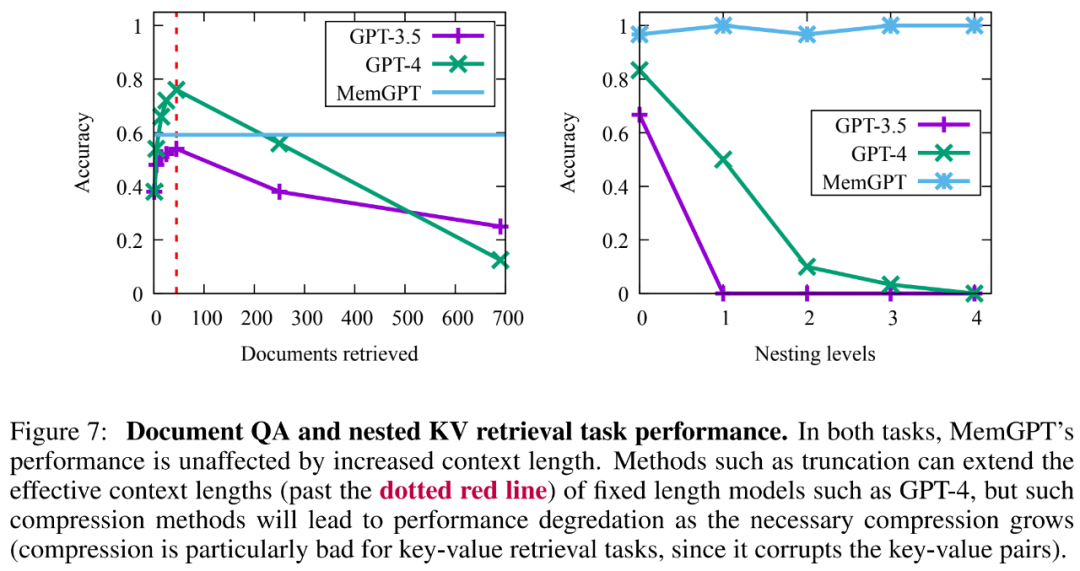

In addition, MemGPT's more complex operations create a trade-off in retrieved-document capacity, as shown in Figure 7 below. Its average accuracy is lower than GPT-4's (but higher than GPT-3.5's), yet it can easily scale to larger document sets.

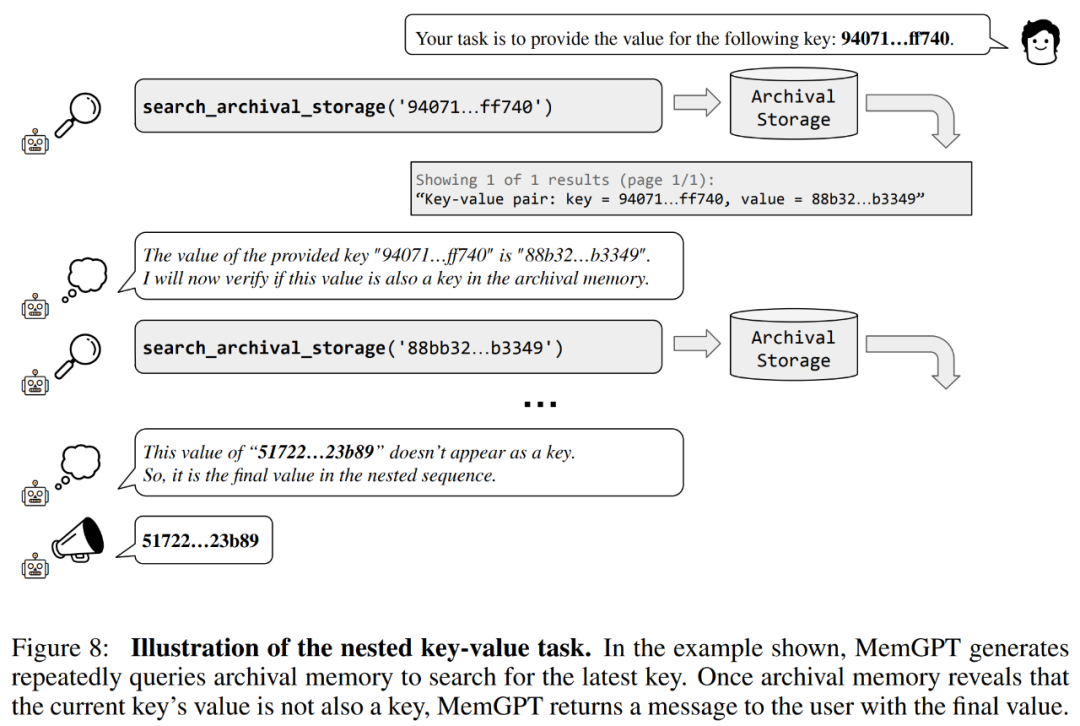

The researchers also introduced a new task based on synthetic key-value retrieval, called nested key-value retrieval, to demonstrate how MemGPT organizes information from multiple data sources.

The results show that although GPT-3.5 and GPT-4 perform well on the original key-value task, they perform poorly on the nested key-value retrieval task. MemGPT is not affected by the number of nesting levels and can perform nested lookups by repeatedly accessing key-value pairs stored in main memory through function queries.

MemGPT's performance on nested key-value retrieval tasks demonstrates its ability to perform multiple lookups using a combination of multiple queries.
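To make the nested task concrete: a value returned by one lookup may itself be a key, so the lookup has to be repeated until the value is no longer a key. The sketch below shows that loop over a plain dictionary; `nested_lookup` and the `max_hops` guard are hypothetical, and in the MemGPT setting each `store[...]` access would correspond to one function query rather than a direct dictionary read.

```python
def nested_lookup(store, key, max_hops=10):
    """Follow key -> value links until the value is no longer a key.

    Returns the final value and the number of extra hops taken.
    max_hops guards against cycles in the data.
    """
    hops = 0
    value = store[key]
    while value in store and hops < max_hops:
        value = store[value]
        hops += 1
    return value, hops
```

A fixed-context model has to resolve every hop within a single pass over the prompt, whereas an agent that can issue repeated queries handles each nesting level as one more iteration of the same loop.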

For more technical details and experimental results, please refer to the original paper.

The above is the detailed content of Think of LLM as an operating system, it has unlimited 'virtual' context, Berkeley's new work has received 1.7k stars. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

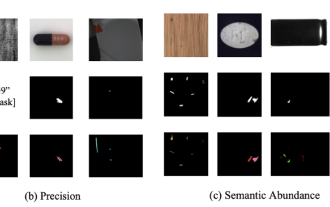

Breaking through the boundaries of traditional defect detection, 'Defect Spectrum' achieves ultra-high-precision and rich semantic industrial defect detection for the first time.

Jul 26, 2024 pm 05:38 PM

Breaking through the boundaries of traditional defect detection, 'Defect Spectrum' achieves ultra-high-precision and rich semantic industrial defect detection for the first time.

Jul 26, 2024 pm 05:38 PM

In modern manufacturing, accurate defect detection is not only the key to ensuring product quality, but also the core of improving production efficiency. However, existing defect detection datasets often lack the accuracy and semantic richness required for practical applications, resulting in models unable to identify specific defect categories or locations. In order to solve this problem, a top research team composed of Hong Kong University of Science and Technology Guangzhou and Simou Technology innovatively developed the "DefectSpectrum" data set, which provides detailed and semantically rich large-scale annotation of industrial defects. As shown in Table 1, compared with other industrial data sets, the "DefectSpectrum" data set provides the most defect annotations (5438 defect samples) and the most detailed defect classification (125 defect categories

NVIDIA dialogue model ChatQA has evolved to version 2.0, with the context length mentioned at 128K

Jul 26, 2024 am 08:40 AM

NVIDIA dialogue model ChatQA has evolved to version 2.0, with the context length mentioned at 128K

Jul 26, 2024 am 08:40 AM

The open LLM community is an era when a hundred flowers bloom and compete. You can see Llama-3-70B-Instruct, QWen2-72B-Instruct, Nemotron-4-340B-Instruct, Mixtral-8x22BInstruct-v0.1 and many other excellent performers. Model. However, compared with proprietary large models represented by GPT-4-Turbo, open models still have significant gaps in many fields. In addition to general models, some open models that specialize in key areas have been developed, such as DeepSeek-Coder-V2 for programming and mathematics, and InternVL for visual-language tasks.

Google AI won the IMO Mathematical Olympiad silver medal, the mathematical reasoning model AlphaProof was launched, and reinforcement learning is so back

Jul 26, 2024 pm 02:40 PM

Google AI won the IMO Mathematical Olympiad silver medal, the mathematical reasoning model AlphaProof was launched, and reinforcement learning is so back

Jul 26, 2024 pm 02:40 PM

For AI, Mathematical Olympiad is no longer a problem. On Thursday, Google DeepMind's artificial intelligence completed a feat: using AI to solve the real question of this year's International Mathematical Olympiad IMO, and it was just one step away from winning the gold medal. The IMO competition that just ended last week had six questions involving algebra, combinatorics, geometry and number theory. The hybrid AI system proposed by Google got four questions right and scored 28 points, reaching the silver medal level. Earlier this month, UCLA tenured professor Terence Tao had just promoted the AI Mathematical Olympiad (AIMO Progress Award) with a million-dollar prize. Unexpectedly, the level of AI problem solving had improved to this level before July. Do the questions simultaneously on IMO. The most difficult thing to do correctly is IMO, which has the longest history, the largest scale, and the most negative

Training with millions of crystal data to solve the crystallographic phase problem, the deep learning method PhAI is published in Science

Aug 08, 2024 pm 09:22 PM

Training with millions of crystal data to solve the crystallographic phase problem, the deep learning method PhAI is published in Science

Aug 08, 2024 pm 09:22 PM

Editor |KX To this day, the structural detail and precision determined by crystallography, from simple metals to large membrane proteins, are unmatched by any other method. However, the biggest challenge, the so-called phase problem, remains retrieving phase information from experimentally determined amplitudes. Researchers at the University of Copenhagen in Denmark have developed a deep learning method called PhAI to solve crystal phase problems. A deep learning neural network trained using millions of artificial crystal structures and their corresponding synthetic diffraction data can generate accurate electron density maps. The study shows that this deep learning-based ab initio structural solution method can solve the phase problem at a resolution of only 2 Angstroms, which is equivalent to only 10% to 20% of the data available at atomic resolution, while traditional ab initio Calculation

Nature's point of view: The testing of artificial intelligence in medicine is in chaos. What should be done?

Aug 22, 2024 pm 04:37 PM

Nature's point of view: The testing of artificial intelligence in medicine is in chaos. What should be done?

Aug 22, 2024 pm 04:37 PM

Editor | ScienceAI Based on limited clinical data, hundreds of medical algorithms have been approved. Scientists are debating who should test the tools and how best to do so. Devin Singh witnessed a pediatric patient in the emergency room suffer cardiac arrest while waiting for treatment for a long time, which prompted him to explore the application of AI to shorten wait times. Using triage data from SickKids emergency rooms, Singh and colleagues built a series of AI models that provide potential diagnoses and recommend tests. One study showed that these models can speed up doctor visits by 22.3%, speeding up the processing of results by nearly 3 hours per patient requiring a medical test. However, the success of artificial intelligence algorithms in research only verifies this

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

Editor |ScienceAI Question Answering (QA) data set plays a vital role in promoting natural language processing (NLP) research. High-quality QA data sets can not only be used to fine-tune models, but also effectively evaluate the capabilities of large language models (LLM), especially the ability to understand and reason about scientific knowledge. Although there are currently many scientific QA data sets covering medicine, chemistry, biology and other fields, these data sets still have some shortcomings. First, the data form is relatively simple, most of which are multiple-choice questions. They are easy to evaluate, but limit the model's answer selection range and cannot fully test the model's ability to answer scientific questions. In contrast, open-ended Q&A

SOTA performance, Xiamen multi-modal protein-ligand affinity prediction AI method, combines molecular surface information for the first time

Jul 17, 2024 pm 06:37 PM

SOTA performance, Xiamen multi-modal protein-ligand affinity prediction AI method, combines molecular surface information for the first time

Jul 17, 2024 pm 06:37 PM

Editor | KX In the field of drug research and development, accurately and effectively predicting the binding affinity of proteins and ligands is crucial for drug screening and optimization. However, current studies do not take into account the important role of molecular surface information in protein-ligand interactions. Based on this, researchers from Xiamen University proposed a novel multi-modal feature extraction (MFE) framework, which for the first time combines information on protein surface, 3D structure and sequence, and uses a cross-attention mechanism to compare different modalities. feature alignment. Experimental results demonstrate that this method achieves state-of-the-art performance in predicting protein-ligand binding affinities. Furthermore, ablation studies demonstrate the effectiveness and necessity of protein surface information and multimodal feature alignment within this framework. Related research begins with "S

Automatically identify the best molecules and reduce synthesis costs. MIT develops a molecular design decision-making algorithm framework

Jun 22, 2024 am 06:43 AM

Automatically identify the best molecules and reduce synthesis costs. MIT develops a molecular design decision-making algorithm framework

Jun 22, 2024 am 06:43 AM

Editor | Ziluo AI’s use in streamlining drug discovery is exploding. Screen billions of candidate molecules for those that may have properties needed to develop new drugs. There are so many variables to consider, from material prices to the risk of error, that weighing the costs of synthesizing the best candidate molecules is no easy task, even if scientists use AI. Here, MIT researchers developed SPARROW, a quantitative decision-making algorithm framework, to automatically identify the best molecular candidates, thereby minimizing synthesis costs while maximizing the likelihood that the candidates have the desired properties. The algorithm also determined the materials and experimental steps needed to synthesize these molecules. SPARROW takes into account the cost of synthesizing a batch of molecules at once, since multiple candidate molecules are often available