LLaMA2 context length soars to 1 million tokens, with only one hyperparameter to adjust


With just a few tweaks, the context size a large model supports can be extended from 16,000 tokens to 1 million?!

And this is on LLaMA 2, which has only 7 billion parameters.

Bear in mind that even the popular Claude 2 and GPT-4 support context lengths of only 100,000 and 32,000 tokens, respectively. Beyond that range, large models start to talk nonsense and fail to remember things.

Now, a new study from Fudan University and Shanghai Artificial Intelligence Laboratory has not only found a way to extend the context window for a range of large models, but also uncovered the rule behind it.

According to this rule, adjusting just one hyperparameter is enough to stably improve a large model's extrapolation performance while preserving output quality.

Extrapolation refers to how output performance changes when the input length exceeds the text length used in pre-training. If extrapolation ability is poor, the model will "talk nonsense" as soon as the input exceeds the pre-training text length.

So what exactly improves the extrapolation ability of large models, and how does it work?

The "mechanism" for improving the extrapolation ability of large models

This method of improving extrapolation is related to the positional encoding module in the Transformer architecture.

On its own, the attention mechanism (Attention) cannot distinguish tokens at different positions. For example, "I eat apples" and "apples eat me" look the same to it. Positional encoding therefore has to be added so that the model can pick up word order and truly understand the meaning of a sentence. Current Transformer positional encoding methods include absolute positional encoding (position information is merged into the input), relative positional encoding (position information is written into the attention score calculation), and rotary positional encoding. The most popular of these is rotary positional encoding, that is, RoPE.

RoPE achieves the effect of relative positional encoding by means of absolute positional encoding, but compared with relative positional encoding it offers better extrapolation potential for large models. How to further unlock the extrapolation ability of large models that use RoPE has become a new direction in many recent studies. These studies fall into two main schools: limiting attention and adjusting the rotation angle.
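To make the "relative effect through absolute encoding" point concrete, here is a minimal pure-Python sketch. It assumes the standard RoPE formulation, in which each consecutive pair of dimensions is rotated by the angle position × base^(-2i/d); the helper names `rope` and `dot` and the toy vectors are illustrative and not taken from the paper's code.

```python
import math

def rope(vec, pos, base=10000.0):
    """Rotate each consecutive pair of `vec` by the angle pos * base**(-2i/d)."""
    d = len(vec)
    out = []
    for i in range(d // 2):
        theta = pos * base ** (-2.0 * i / d)
        x, y = vec[2 * i], vec[2 * i + 1]
        out += [x * math.cos(theta) - y * math.sin(theta),
                x * math.sin(theta) + y * math.cos(theta)]
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

# Toy query and key vectors (hypothetical values, head dimension 8).
q = [0.3, -1.2, 0.7, 0.5, -0.4, 0.9, 1.1, -0.6]
k = [1.0, 0.2, -0.8, 0.4, 0.6, -0.3, 0.5, 0.7]

# Same offset (3) at different absolute positions: the scores match.
score_a = dot(rope(q, 5), rope(k, 2))
score_b = dot(rope(q, 105), rope(k, 102))

# A different offset (1) gives a different score.
score_c = dot(rope(q, 5), rope(k, 4))

print(score_a, score_b, score_c)
```

Each vector is rotated using only its own absolute position, yet the attention score depends only on the distance between the two positions.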

Representative work on limiting attention includes ALiBi, xPos, and BCA. StreamingLLM, recently proposed by MIT, lets large models handle unlimited input length (without increasing the context window length) and also belongs to this line of research. There is more work on adjusting the rotation angle; typical examples include linear interpolation, Giraffe, Code LLaMA, and LLaMA2 Long.

32,000 tokens.

This hyperparameter is exactly the "switch" found by Code LLaMA, LLaMA2 Long, and others: the rotation angle base (base).

Fine-tuning it is enough to improve the extrapolation performance of large models. But both Code LLaMA and LLaMA2 Long were fine-tuned only with one specific base and one continued-training length to enhance their extrapolation ability. Is it possible to find a pattern that ensures all large models using RoPE positional encoding can steadily improve their extrapolation performance?

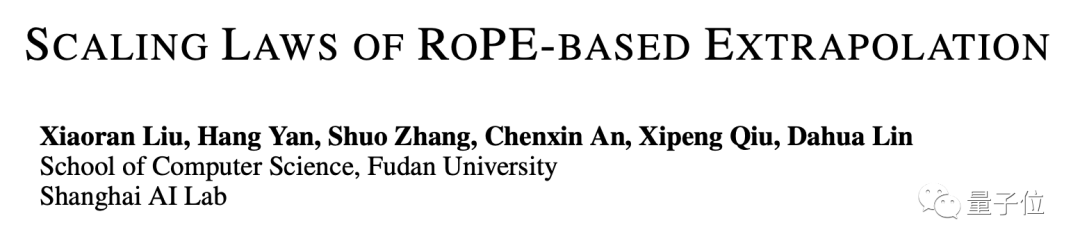

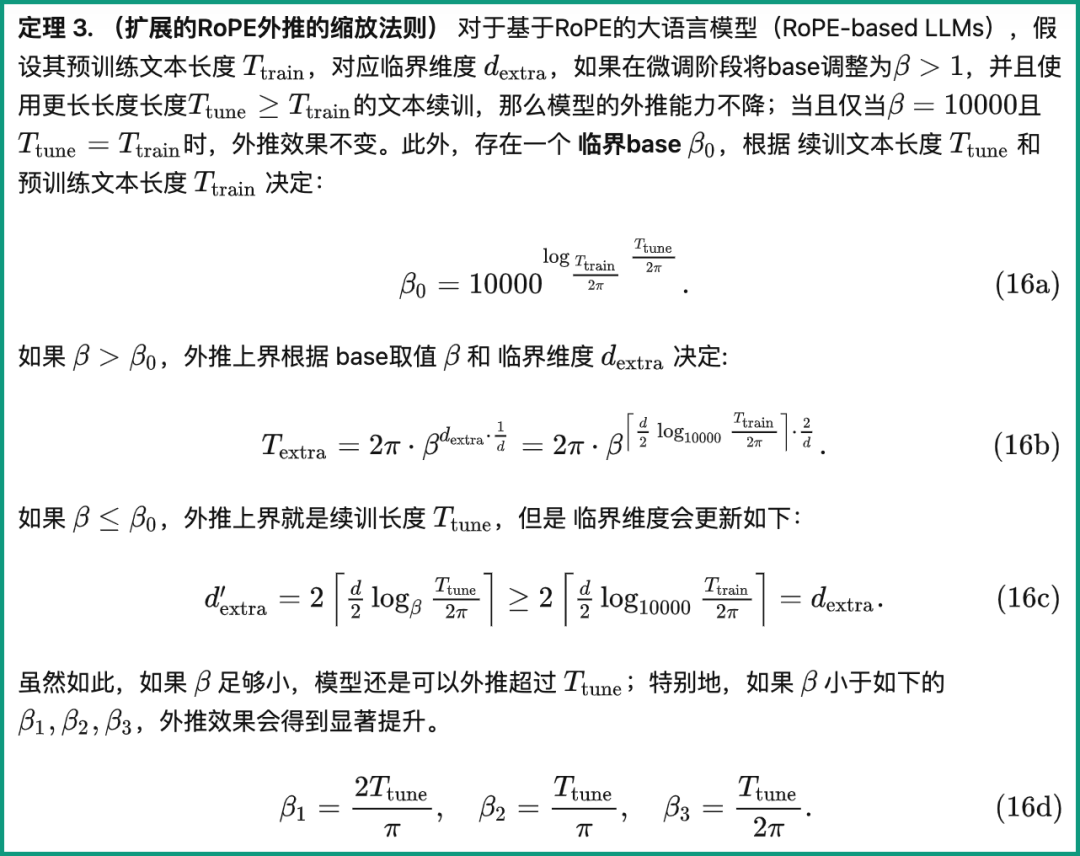

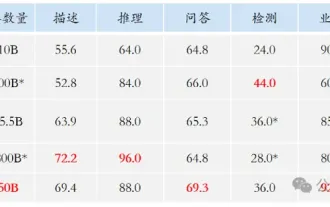

Master this rule and a 1-million-token context comes easily

Researchers from Fudan University and Shanghai AI Laboratory ran experiments on this question. They first analyzed several parameters that affect RoPE extrapolation and proposed a concept called the critical dimension (Critical Dimension). Based on this concept, they then derived a set of scaling laws for RoPE extrapolation (Scaling Laws of RoPE-based Extrapolation).

Applying this rule ensures that any large model based on RoPE positional encoding can improve its extrapolation ability.

Let's first look at what the critical dimension is. By definition, it is related to the pre-training text length T_train, the self-attention head dimension d, and other parameters. The specific calculation method is as follows:

Here, 10000 is the "initial value" of the hyperparameter, the rotation angle base.
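The formula itself did not survive in this copy of the article. As a hedged sketch, the paper's definition is assumed here to be d_extra = 2⌈(d/2) · log_10000(T_train / 2π)⌉; check it against the paper before relying on it. The function name and the LLaMA2-style inputs (pre-training length 4096, head dimension 128) are illustrative assumptions, not values stated in the article.

```python
import math

def critical_dimension(t_train, head_dim, base=10000.0):
    """Assumed form: d_extra = 2 * ceil((d / 2) * log_base(T_train / (2 * pi)))."""
    return 2 * math.ceil(head_dim / 2 * math.log(t_train / (2 * math.pi), base))

# Assumed LLaMA2-style settings: 4096-token pre-training length, 128-dim heads.
d_extra = critical_dimension(t_train=4096, head_dim=128)
print(d_extra)
```

Under these assumptions, only the first d_extra dimensions of each head complete a full rotation period within the pre-training length; the remaining dimensions see only part of a period.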

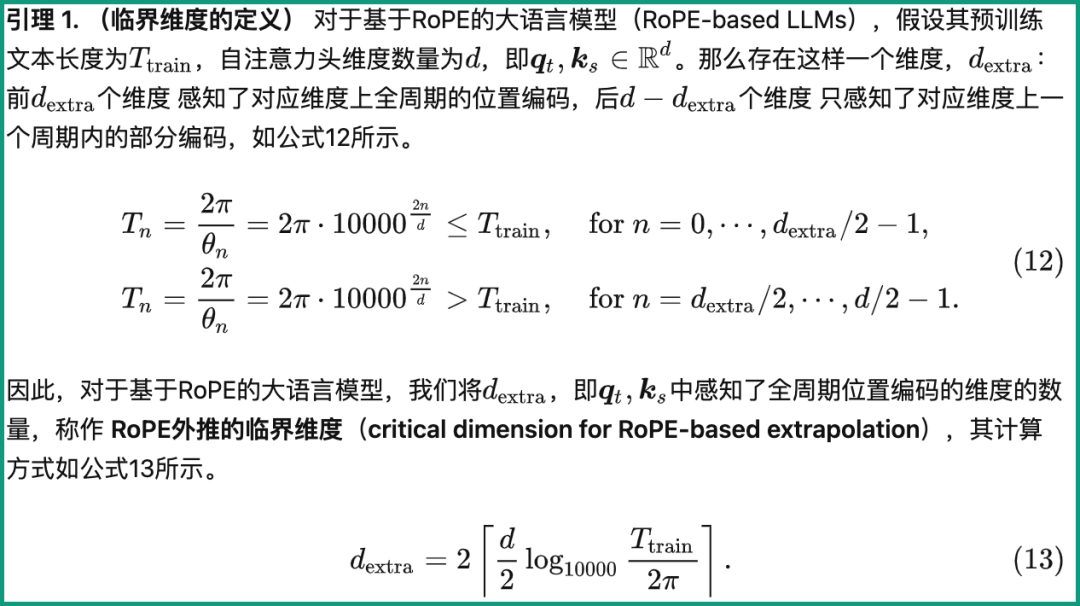

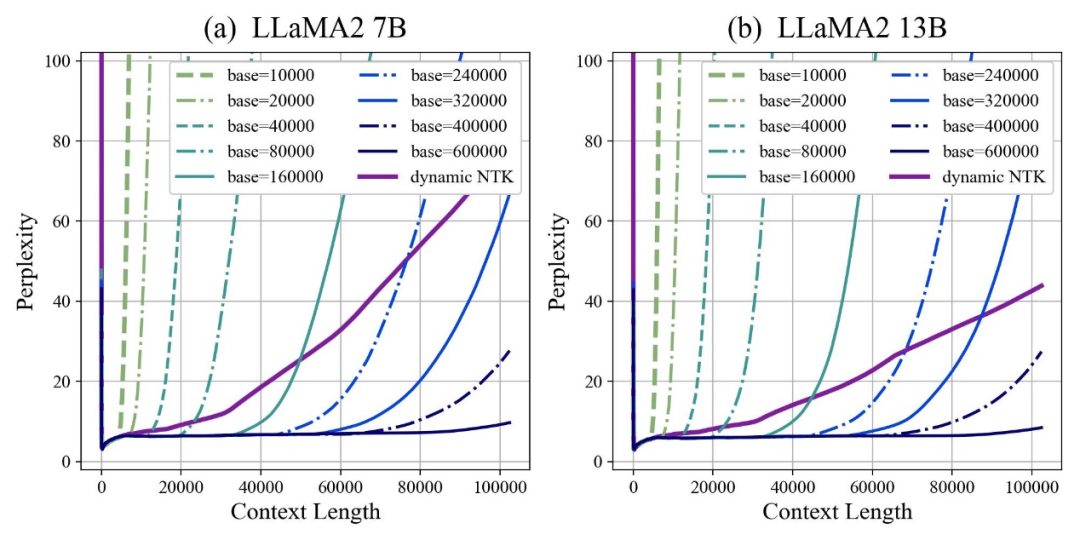

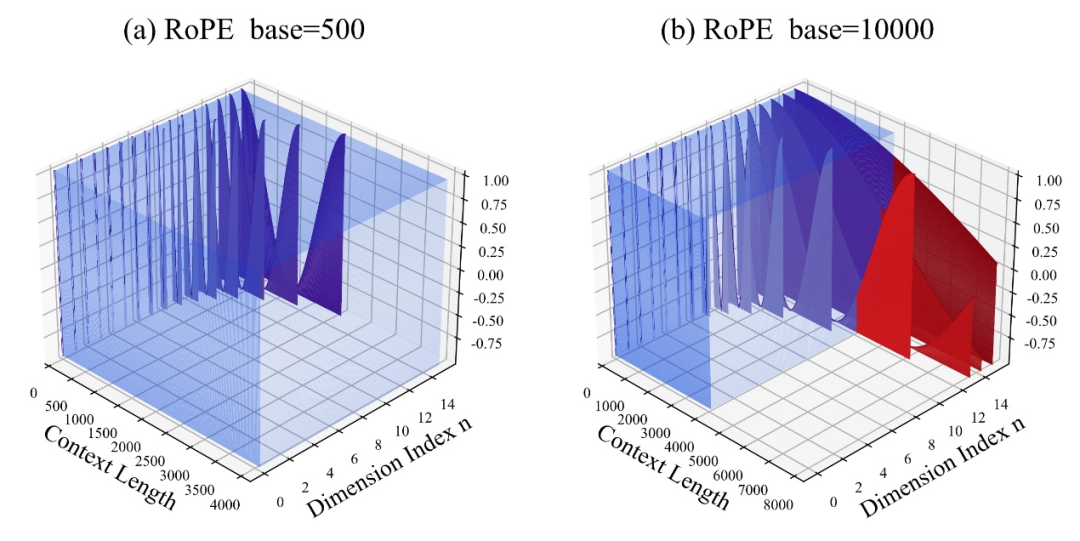

The authors found that whether the base is enlarged or reduced, the extrapolation ability of RoPE-based large models is ultimately enhanced. By contrast, extrapolation is at its worst when the rotation angle base is 10000.

The paper argues that a smaller rotation angle base lets more dimensions perceive position information, while a larger rotation angle base can express longer position information.
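One way to read this trade-off is through the rotation period of each dimension pair. The sketch below assumes the standard RoPE period of 2π · base^(2i/d) for pair i and counts how many dimensions complete at least one full period within the training length. The helper names and the settings (training length 4096, head dimension 128, bases 500, 10000, and 1000000) are illustrative assumptions.

```python
import math

def pair_period(i, head_dim, base):
    """Period in tokens of the i-th rotated pair: 2 * pi * base**(2i / d)."""
    return 2 * math.pi * base ** (2.0 * i / head_dim)

def dims_with_full_period(t_train, head_dim, base):
    """Count dimensions whose rotation period fits inside the training length."""
    pairs = sum(1 for i in range(head_dim // 2)
                if pair_period(i, head_dim, base) <= t_train)
    return 2 * pairs

def longest_period(head_dim, base):
    """Period of the slowest-rotating pair, a rough proxy for the longest range."""
    return pair_period(head_dim // 2 - 1, head_dim, base)

for base in (500.0, 10000.0, 1000000.0):
    print(base,
          dims_with_full_period(4096, 128, base),
          round(longest_period(128, base)))
```

With a smaller base, more dimensions cycle fully during training; with a larger base, the slowest dimensions have much longer periods and can therefore tell apart positions that are much farther away.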

In that case, when facing continued-training corpora of different lengths, by how much should the rotation angle base be reduced or enlarged to maximize the extrapolation ability of the large model?

The paper gives a scaling rule for RoPE extrapolation, which depends on parameters such as the critical dimension, the continued-training text length, and the pre-training text length of the large model:

Based on this rule, the extrapolation performance of a large model can be calculated directly from its pre-training and continued-training text lengths. In other words, the context length the model supports can be predicted.

Conversely, the rule can also be used to quickly work out how best to adjust the rotation angle base, thereby improving the extrapolation performance of large models.
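The rule itself is not reproduced in this copy of the article. As a rough sketch only, the code below assumes the paper's larger-base upper bound has the form T_extra = 2π · base^(d_extra / d), and inverts it algebraically to estimate a base for a target length. The function names and the inputs (d_extra = 92, d = 128) are illustrative assumptions. The paper also treats the smaller-base case and a critical base, neither of which is covered here, so verify against the paper.

```python
import math

def extrapolation_bound(base, d_extra, head_dim):
    """Assumed upper bound on supported length: 2 * pi * base**(d_extra / d)."""
    return 2 * math.pi * base ** (d_extra / head_dim)

def base_for_target(t_target, d_extra, head_dim):
    """Algebraic inverse of the assumed bound: the base whose bound equals t_target."""
    return (t_target / (2 * math.pi)) ** (head_dim / d_extra)

# Forward direction: predicted length for an enlarged base of 1,000,000.
bound = extrapolation_bound(1_000_000, d_extra=92, head_dim=128)

# Inverse direction: base suggested by the same formula for a 1M-token target.
needed = base_for_target(1_000_000, d_extra=92, head_dim=128)

print(round(bound), round(needed))
```

The two functions mirror the two uses described above: predicting the supported context length from a given base, and deriving a base from a desired context length.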

The authors tested this on a series of tasks and found that inputs of 100,000, 500,000, or even 1 million tokens can be extrapolated without additional attention restrictions.

At the same time, existing work on enhancing the extrapolation ability of large models, including Code LLaMA and LLaMA2 Long, confirms that this rule is reasonable and effective.

In this way, you only need to "adjust one parameter" according to this rule to extend the context window of a RoPE-based large model and enhance its extrapolation ability.

Liu Xiaoran, the first author of the paper, said that the team is still working on improving downstream task performance by improving the continued-training corpus. Once that is complete, the code and models will be open-sourced. Stay tuned.

Paper address:

https://arxiv.org/abs/2310.05209

Github repository:

https://github.com/OpenLMLab/scaling-rope

Paper analysis blog:

https://zhuanlan.zhihu.com/p/660073229

The above is the detailed content of The LLaMA2 context length skyrockets to 1 million tokens, with only one hyperparameter need to be adjusted.. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Use ddrescue to recover data on Linux

Mar 20, 2024 pm 01:37 PM

Use ddrescue to recover data on Linux

Mar 20, 2024 pm 01:37 PM

DDREASE is a tool for recovering data from file or block devices such as hard drives, SSDs, RAM disks, CDs, DVDs and USB storage devices. It copies data from one block device to another, leaving corrupted data blocks behind and moving only good data blocks. ddreasue is a powerful recovery tool that is fully automated as it does not require any interference during recovery operations. Additionally, thanks to the ddasue map file, it can be stopped and resumed at any time. Other key features of DDREASE are as follows: It does not overwrite recovered data but fills the gaps in case of iterative recovery. However, it can be truncated if the tool is instructed to do so explicitly. Recover data from multiple files or blocks to a single

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

0.What does this article do? We propose DepthFM: a versatile and fast state-of-the-art generative monocular depth estimation model. In addition to traditional depth estimation tasks, DepthFM also demonstrates state-of-the-art capabilities in downstream tasks such as depth inpainting. DepthFM is efficient and can synthesize depth maps within a few inference steps. Let’s read about this work together ~ 1. Paper information title: DepthFM: FastMonocularDepthEstimationwithFlowMatching Author: MingGui, JohannesS.Fischer, UlrichPrestel, PingchuanMa, Dmytr

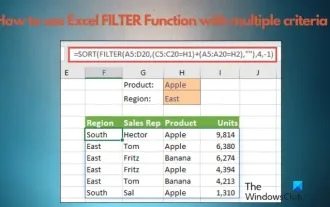

How to use Excel filter function with multiple conditions

Feb 26, 2024 am 10:19 AM

How to use Excel filter function with multiple conditions

Feb 26, 2024 am 10:19 AM

If you need to know how to use filtering with multiple criteria in Excel, the following tutorial will guide you through the steps to ensure you can filter and sort your data effectively. Excel's filtering function is very powerful and can help you extract the information you need from large amounts of data. This function can filter data according to the conditions you set and display only the parts that meet the conditions, making data management more efficient. By using the filter function, you can quickly find target data, saving time in finding and organizing data. This function can not only be applied to simple data lists, but can also be filtered based on multiple conditions to help you locate the information you need more accurately. Overall, Excel’s filtering function is a very practical

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

The performance of JAX, promoted by Google, has surpassed that of Pytorch and TensorFlow in recent benchmark tests, ranking first in 7 indicators. And the test was not done on the TPU with the best JAX performance. Although among developers, Pytorch is still more popular than Tensorflow. But in the future, perhaps more large models will be trained and run based on the JAX platform. Models Recently, the Keras team benchmarked three backends (TensorFlow, JAX, PyTorch) with the native PyTorch implementation and Keras2 with TensorFlow. First, they select a set of mainstream

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

I cry to death. The world is madly building big models. The data on the Internet is not enough. It is not enough at all. The training model looks like "The Hunger Games", and AI researchers around the world are worrying about how to feed these data voracious eaters. This problem is particularly prominent in multi-modal tasks. At a time when nothing could be done, a start-up team from the Department of Renmin University of China used its own new model to become the first in China to make "model-generated data feed itself" a reality. Moreover, it is a two-pronged approach on the understanding side and the generation side. Both sides can generate high-quality, multi-modal new data and provide data feedback to the model itself. What is a model? Awaker 1.0, a large multi-modal model that just appeared on the Zhongguancun Forum. Who is the team? Sophon engine. Founded by Gao Yizhao, a doctoral student at Renmin University’s Hillhouse School of Artificial Intelligence.

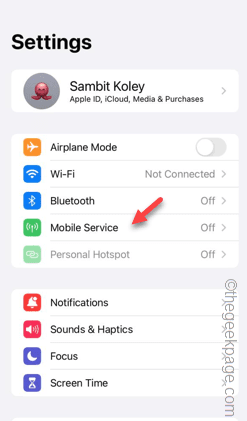

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Facing lag, slow mobile data connection on iPhone? Typically, the strength of cellular internet on your phone depends on several factors such as region, cellular network type, roaming type, etc. There are some things you can do to get a faster, more reliable cellular Internet connection. Fix 1 – Force Restart iPhone Sometimes, force restarting your device just resets a lot of things, including the cellular connection. Step 1 – Just press the volume up key once and release. Next, press the Volume Down key and release it again. Step 2 – The next part of the process is to hold the button on the right side. Let the iPhone finish restarting. Enable cellular data and check network speed. Check again Fix 2 – Change data mode While 5G offers better network speeds, it works better when the signal is weaker

The U.S. Air Force showcases its first AI fighter jet with high profile! The minister personally conducted the test drive without interfering during the whole process, and 100,000 lines of code were tested for 21 times.

May 07, 2024 pm 05:00 PM

The U.S. Air Force showcases its first AI fighter jet with high profile! The minister personally conducted the test drive without interfering during the whole process, and 100,000 lines of code were tested for 21 times.

May 07, 2024 pm 05:00 PM

Recently, the military circle has been overwhelmed by the news: US military fighter jets can now complete fully automatic air combat using AI. Yes, just recently, the US military’s AI fighter jet was made public for the first time and the mystery was unveiled. The full name of this fighter is the Variable Stability Simulator Test Aircraft (VISTA). It was personally flown by the Secretary of the US Air Force to simulate a one-on-one air battle. On May 2, U.S. Air Force Secretary Frank Kendall took off in an X-62AVISTA at Edwards Air Force Base. Note that during the one-hour flight, all flight actions were completed autonomously by AI! Kendall said - "For the past few decades, we have been thinking about the unlimited potential of autonomous air-to-air combat, but it has always seemed out of reach." However now,

The first robot to autonomously complete human tasks appears, with five fingers that are flexible and fast, and large models support virtual space training

Mar 11, 2024 pm 12:10 PM

The first robot to autonomously complete human tasks appears, with five fingers that are flexible and fast, and large models support virtual space training

Mar 11, 2024 pm 12:10 PM

This week, FigureAI, a robotics company invested by OpenAI, Microsoft, Bezos, and Nvidia, announced that it has received nearly $700 million in financing and plans to develop a humanoid robot that can walk independently within the next year. And Tesla’s Optimus Prime has repeatedly received good news. No one doubts that this year will be the year when humanoid robots explode. SanctuaryAI, a Canadian-based robotics company, recently released a new humanoid robot, Phoenix. Officials claim that it can complete many tasks autonomously at the same speed as humans. Pheonix, the world's first robot that can autonomously complete tasks at human speeds, can gently grab, move and elegantly place each object to its left and right sides. It can autonomously identify objects