Using JavaScript functions to implement machine learning prediction and classification

Machine learning has become one of the most active areas of artificial intelligence, and JavaScript, one of the most widely used programming languages, can be used for it as well. This article shows how to use JavaScript functions to implement machine learning prediction and classification.

First, we need an important JavaScript library: TensorFlow.js. It lets us train and run machine learning models in JavaScript for prediction and classification. Before writing any code, install the library with the following command:

npm install @tensorflow/tfjs

After installation, we can start writing JavaScript code.

- Linear regression

Linear regression is one of the most basic machine learning methods. It fits a linear model that describes the relationship between input and output values. In JavaScript, linear regression can be implemented with the TensorFlow.js library. Here is a simple example:

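// Import TensorFlow.js (installed with the npm command above)
import * as tf from '@tensorflow/tfjs';

// Define the input data: four samples with one feature each (here y = 2x - 1)
const xs = tf.tensor([1, 2, 3, 4], [4, 1]);
const ys = tf.tensor([1, 3, 5, 7], [4, 1]);

// Define the model and the training parameters
const model = tf.sequential();
model.add(tf.layers.dense({units: 1, inputShape: [1]}));
model.compile({optimizer: 'sgd', loss: 'meanSquaredError'});

// Train the model
model.fit(xs, ys, {epochs: 100}).then(() => {
  // Predict the output for x = 5
  const output = model.predict(tf.tensor([5], [1, 1]));
  output.print();
});

In this example, we define the input data and a linear model using TensorFlow.js. The model is compiled with the sgd optimizer and a mean squared error loss. After training the model, we call the predict function to make a prediction. Because the training data follow y = 2x - 1, the prediction for x = 5 should move toward 9 as training proceeds; the exact value varies from run to run because the initial weights are random.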
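To make the roles of the sgd optimizer and the mean squared error loss more concrete, the following is a minimal sketch of gradient descent written by hand in plain JavaScript for the same four data points. It is an illustration only, not how TensorFlow.js is implemented internally: `trainLine` is a hypothetical helper, it uses the whole data set in every step, and the learning rate and step count are assumed values chosen for this toy data.

```javascript
// Illustrative sketch only: full-batch gradient descent on mean squared error
// for the model y = w * x + b. This is not TensorFlow.js's internal code.
function trainLine(xs, ys, learningRate, steps) {
  let w = 0;
  let b = 0;
  const n = xs.length;
  for (let step = 0; step < steps; step++) {
    let gradW = 0;
    let gradB = 0;
    for (let i = 0; i < n; i++) {
      const error = w * xs[i] + b - ys[i];
      gradW += (2 / n) * error * xs[i]; // derivative of the MSE with respect to w
      gradB += (2 / n) * error;         // derivative of the MSE with respect to b
    }
    // Move both parameters a small step against the gradient
    w -= learningRate * gradW;
    b -= learningRate * gradB;
  }
  return { w, b };
}

// learningRate (0.05) and steps (5000) are assumed values for this example.
const fitted = trainLine([1, 2, 3, 4], [1, 3, 5, 7], 0.05, 5000);
const predictionAt5 = fitted.w * 5 + fitted.b;
console.log(fitted, predictionAt5); // w approaches 2, b approaches -1
```

Each step measures how far the current line is from the data and nudges `w` and `b` in the direction that reduces the average squared error, which is the same idea the compiled model applies during `fit`.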

- Image classification

In addition to linear regression, we can also use TensorFlow.js for image classification. The following is a simple example:

// Import TensorFlow.js (the top-level await below requires an ES module)
import * as tf from '@tensorflow/tfjs';

// Load the pre-trained model
const model = await tf.loadLayersModel('http://localhost:8000/model.json');

// Load the image and run the prediction (Image is available in the browser)
const img = new Image();
img.onload = async function() {
  const tensor = tf.browser.fromPixels(img)
    .resizeNearestNeighbor([224, 224]) // resize the image to 224 x 224
    .expandDims() // add a batch dimension
    .toFloat() // convert to floating point
    .reverse(-1); // reverse the channel order (RGB to BGR)
  const predictions = await model.predict(tensor).data();
  console.log(predictions);
};
// Set the source after the handler is attached so the load event is not missed
img.src = 'cat.jpg';

In this example, we first load a pre-trained model with the loadLayersModel function. We then load an image and use TensorFlow.js to resize it, add a batch dimension, convert it to floating point, and reverse the channel order. Finally, we call the predict function to classify the image and use console.log to output the prediction results.
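The `predictions` value logged above is a flat array of scores, one per class, so it usually needs one more step before it is readable. The sketch below shows one way to pick the highest-scoring classes in plain JavaScript. The `topK` helper, the scores, and the label names are all hypothetical; a real model defines its own number of classes and label order.

```javascript
// Hypothetical helper: return the k highest-scoring classes with their labels.
function topK(scores, labels, k) {
  return Array.from(scores) // convert a typed array to a regular array first
    .map((score, index) => ({ label: labels[index], score }))
    .sort((a, b) => b.score - a.score) // highest score first
    .slice(0, k);
}

// Made-up scores and labels, for illustration only.
const exampleScores = new Float32Array([0.1, 0.7, 0.2]);
const exampleLabels = ['dog', 'cat', 'bird'];
const best = topK(exampleScores, exampleLabels, 2);
console.log(best);
```

`Array.from` is used because `data()` resolves to a typed array, and mapping a typed array directly to objects would not work.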

These two examples show that implementing machine learning prediction and classification with JavaScript functions is not difficult. They are only an entry-level exercise, though: going further requires studying the underlying machine learning concepts in depth and practicing more.

The above is the detailed content of Using JavaScript functions to implement machine learning prediction and classification. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1387

1387

52

52

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

Quantile regression for time series probabilistic forecasting

May 07, 2024 pm 05:04 PM

Quantile regression for time series probabilistic forecasting

May 07, 2024 pm 05:04 PM

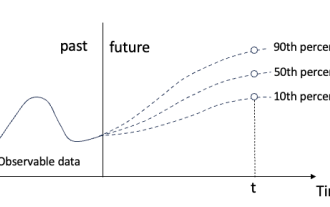

Do not change the meaning of the original content, fine-tune the content, rewrite the content, and do not continue. "Quantile regression meets this need, providing prediction intervals with quantified chances. It is a statistical technique used to model the relationship between a predictor variable and a response variable, especially when the conditional distribution of the response variable is of interest When. Unlike traditional regression methods, quantile regression focuses on estimating the conditional magnitude of the response variable rather than the conditional mean. "Figure (A): Quantile regression Quantile regression is an estimate. A modeling method for the linear relationship between a set of regressors X and the quantiles of the explained variables Y. The existing regression model is actually a method to study the relationship between the explained variable and the explanatory variable. They focus on the relationship between explanatory variables and explained variables

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Machine learning is an important branch of artificial intelligence that gives computers the ability to learn from data and improve their capabilities without being explicitly programmed. Machine learning has a wide range of applications in various fields, from image recognition and natural language processing to recommendation systems and fraud detection, and it is changing the way we live. There are many different methods and theories in the field of machine learning, among which the five most influential methods are called the "Five Schools of Machine Learning". The five major schools are the symbolic school, the connectionist school, the evolutionary school, the Bayesian school and the analogy school. 1. Symbolism, also known as symbolism, emphasizes the use of symbols for logical reasoning and expression of knowledge. This school of thought believes that learning is a process of reverse deduction, through existing

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,

Machine Learning in C++: A Guide to Implementing Common Machine Learning Algorithms in C++

Jun 03, 2024 pm 07:33 PM

Machine Learning in C++: A Guide to Implementing Common Machine Learning Algorithms in C++

Jun 03, 2024 pm 07:33 PM

In C++, the implementation of machine learning algorithms includes: Linear regression: used to predict continuous variables. The steps include loading data, calculating weights and biases, updating parameters and prediction. Logistic regression: used to predict discrete variables. The process is similar to linear regression, but uses the sigmoid function for prediction. Support Vector Machine: A powerful classification and regression algorithm that involves computing support vectors and predicting labels.

Complete collection of excel function formulas

May 07, 2024 pm 12:04 PM

Complete collection of excel function formulas

May 07, 2024 pm 12:04 PM

1. The SUM function is used to sum the numbers in a column or a group of cells, for example: =SUM(A1:J10). 2. The AVERAGE function is used to calculate the average of the numbers in a column or a group of cells, for example: =AVERAGE(A1:A10). 3. COUNT function, used to count the number of numbers or text in a column or a group of cells, for example: =COUNT(A1:A10) 4. IF function, used to make logical judgments based on specified conditions and return the corresponding result.