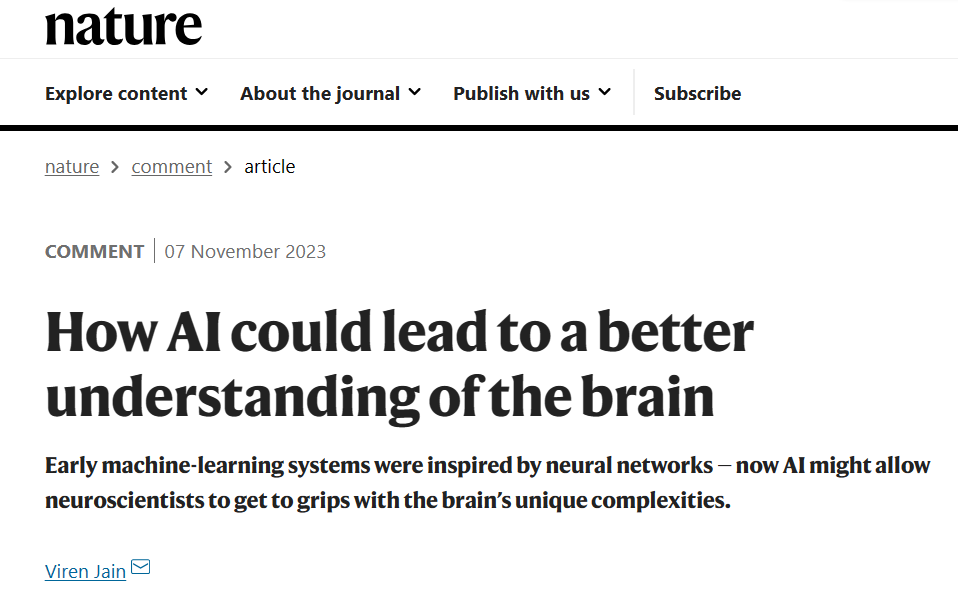

Google Scientist Nature comments: How artificial intelligence can better understand the brain


Compilation | Green Dior

On November 7, 2023, Viren Jain, senior research scientist at Google Research and head of the Google connectomics team, published a commentary in Nature titled "How AI could lead to a better understanding of the brain".

Paper link: https://www.nature.com/articles/d41586-023-03426-3

Can computers be programmed to simulate the brain? It is a question that mathematicians, theorists and experimentalists have long asked, whether out of a desire to create artificial intelligence (AI) or because a complex system such as the brain can be understood only if mathematics or a computer can reproduce its behavior. To try to answer this question, researchers have been developing simplified models of the brain's neural networks since the 1940s. In fact, today's explosion of machine learning can be traced back to early work inspired by biological systems.

However, the results of these efforts now allow researchers to ask a slightly different question: Can machine learning be used to build computational models that simulate brain activity?

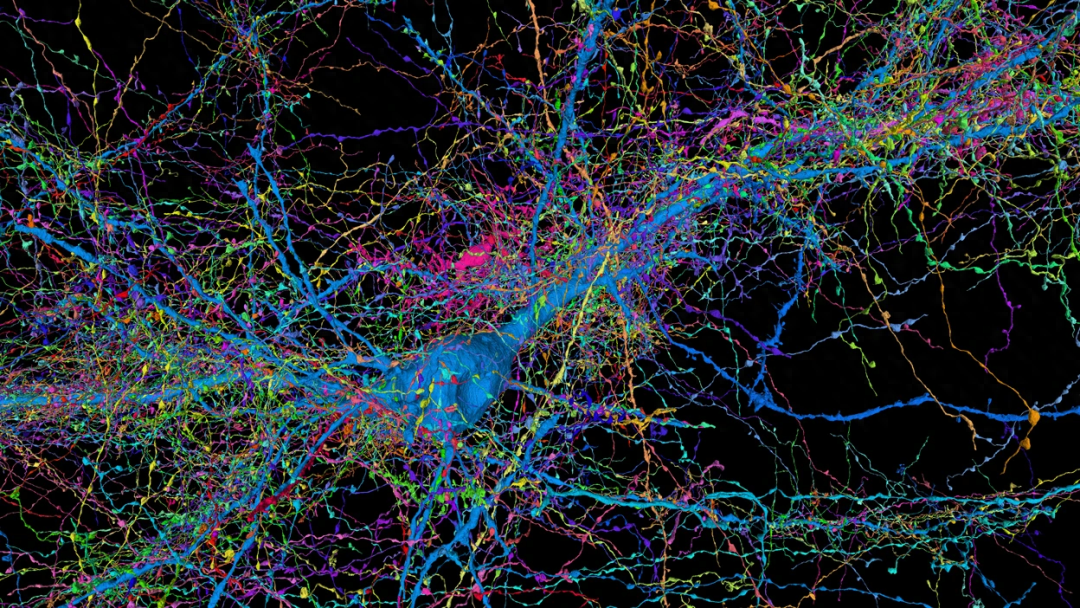

At the heart of these developments are ever-increasing amounts of brain data. Since the 1970s, neuroscientists have been producing connectomes, maps of neuronal connections and morphology that capture a static representation of the brain at a given moment, and this research has since intensified. Alongside these advances, researchers have also improved their ability to make functional recordings that measure changes in neural activity over time at the resolution of single cells. Meanwhile, the field of transcriptomics allows researchers to measure gene activity in tissue samples and even map when and where that activity occurs.

To date, few attempts have been made to connect these different data sources or to collect them simultaneously from the entire brain of the same sample. But as the level of detail, size, and number of data sets increase, especially for the brains of relatively simple model organisms, machine learning systems are making a new approach to brain modeling feasible. This involves training artificial intelligence programs on connectome and other data to reproduce the neural activity you would expect to find in biological systems.

Computational neuroscientists and others need to solve some challenges before they can begin using machine learning to build simulations of the entire brain. However, a hybrid approach that combines information from traditional brain modeling techniques with machine learning systems trained on different data sets can make the entire effort more rigorous and informative.

Brain Mapping

The quest to map the brain began nearly half a century ago with 15 years of painstaking research in the nematode Caenorhabditis elegans. Over the past two decades, developments in automated tissue sectioning and imaging have made anatomical data more accessible to researchers, while advances in computing and automated image analysis have transformed the analysis of these data sets.

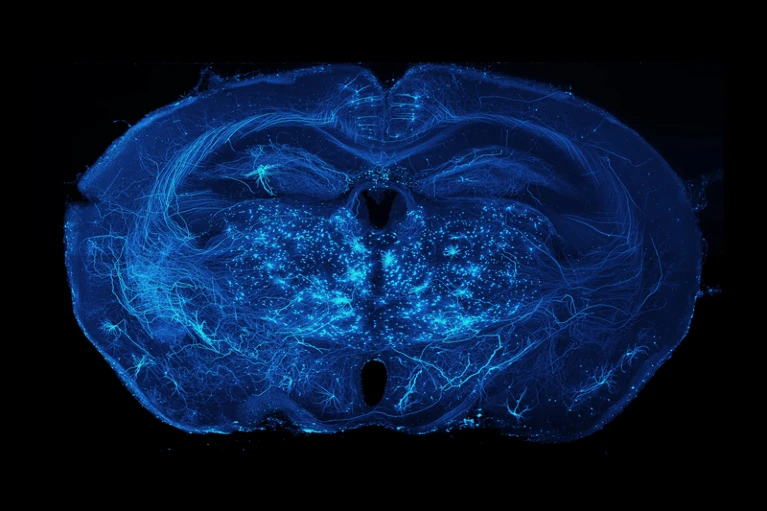

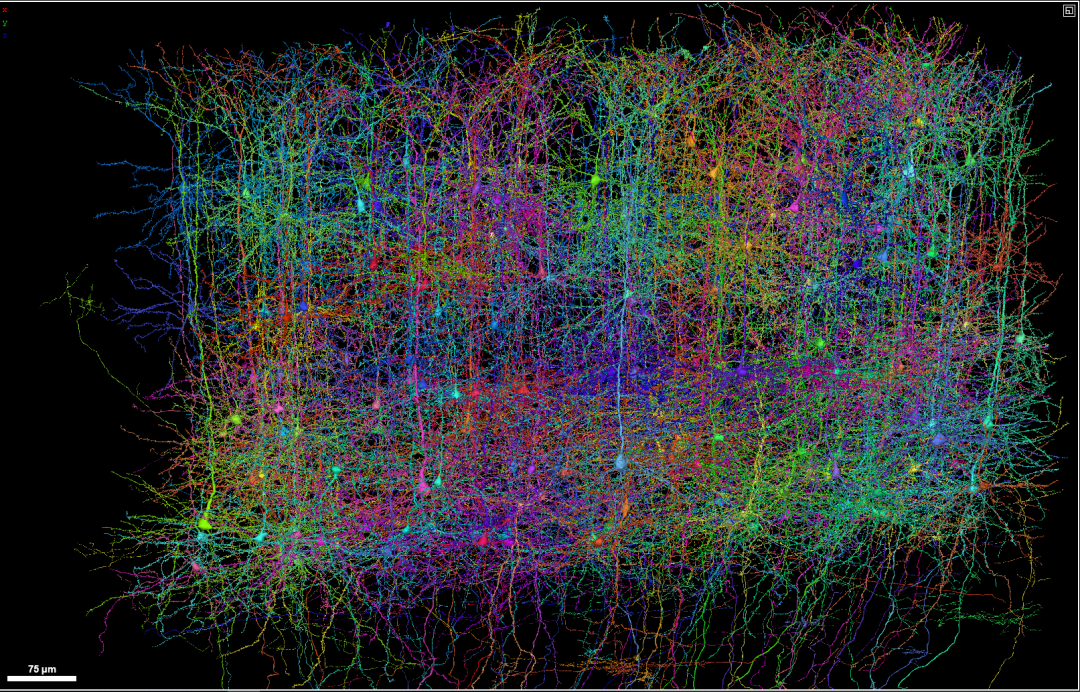

Connectomes have now been generated for the entire brain of C. elegans, larval and adult Drosophila melanogaster, and for small portions (one-thousandth and one-millionth, respectively) of mouse and human brains.

The anatomical maps produced so far have major gaps. Imaging methods have not yet been able to map electrical connections at scale alongside chemical synaptic connections. Researchers have focused primarily on neurons, although the non-neuronal glial cells that support neurons appear to play a crucial role in the flow of information through the nervous system. Much is still unknown about the genes expressed and the proteins present in the neurons and other cells that have been mapped.

Still, such maps have yielded some insights. In Drosophila melanogaster, for example, connectomics allows researchers to identify the mechanisms behind neural circuits responsible for behaviors such as aggression. The brain map also revealed how fruit flies compute information in the circuits responsible for knowing where they are and how to get from one place to another. In zebrafish (Danio rerio) larvae, connectomics helped reveal the workings of synaptic circuits underlying odor classification, control of eye position and movement, and navigation.

Efforts that could eventually generate the entire mouse brain connectome are underway—although with current methods, this could take a decade or more. The mouse brain is nearly 1,000 times larger than the brain of Drosophila melanogaster, which is composed of about 150,000 neurons.

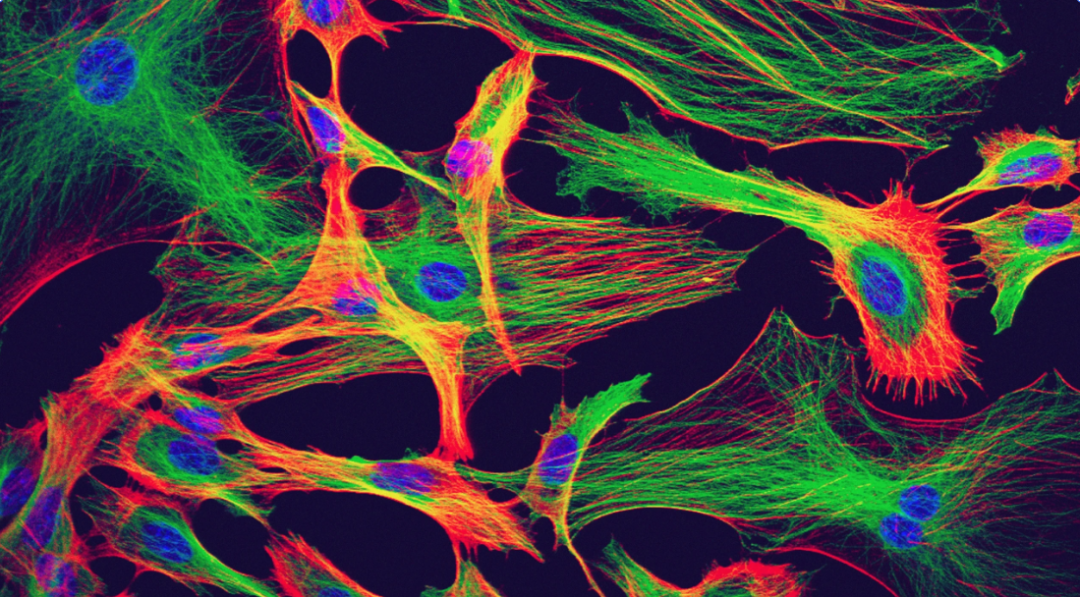

In addition to these advances in connectomics, researchers are using single-cell and spatial transcriptomics to capture gene expression patterns with ever-increasing accuracy and specificity. Various techniques also allow researchers to record neural activity from the entire brain of a vertebrate animal for hours at a time. These include proteins whose fluorescent properties change in response to changes in voltage or calcium levels, and microscopy techniques that enable 3D imaging of living brains at single-cell resolution. In the case of the larval zebrafish brain, this means recording from nearly 100,000 neurons. (Recordings of neural activity made in this way give a less accurate picture than electrophysiological recordings, but a much better one than non-invasive methods such as functional magnetic resonance imaging.)

Mathematics and Physics

When trying to simulate brain activity patterns, scientists mainly use physics-based methods. This requires generating a simulation of a nervous system or parts of a nervous system using a mathematical description of the behavior of real neurons or parts of a real nervous system. It also requires making informed guesses about aspects of the circuit that have not been verified by observation, such as network connectivity.

In some cases, the guesswork is extensive (see "Mystery Model"); in others, anatomical maps at single-cell and single-synapse resolution have helped researchers to refute and generate hypotheses.

Mystery Model

Due to a lack of data, it is difficult to evaluate whether certain neural network models capture what happens in real systems.

The controversial European Human Brain Project, which ended in September 2023, originally aimed to computationally simulate the entire human brain. Although that goal was abandoned, the project did simulate parts of rodent and human brains, including tens of thousands of neurons in a model of the rodent hippocampus, on the basis of limited biological measurements and a variety of synthetic data-generating procedures.

A major problem with this approach is that in the absence of detailed anatomical or functional diagrams, it is difficult to assess how accurately the resulting simulation captures what is happening in the biological system.

For about seventy years, neuroscientists have been refining theoretical descriptions of the circuits that enable Drosophila melanogaster to compute motion. Since its completion in 2013, the connectome of the motion detection circuit, and subsequently the larger fly connectome, has provided detailed circuit diagrams that support some hypotheses about how the circuit works.

However, data collected from real neural networks also highlights the limitations of anatomy-driven approaches.

For example, a neural circuit model completed in the 1990s included a detailed analysis of the connectivity and physiology of the approximately 30 neurons that make up the stomatogastric ganglion of the crab Cancer borealis (a structure that controls the animal's stomach movements). By measuring the activity of the neurons under various conditions, the researchers found that even for a relatively small collection of neurons, seemingly subtle changes, such as the introduction of a neuromodulator (a substance that changes the properties of neurons and synapses), can completely change the behavior of the circuit. This suggests that even with connectomes and other rich data sets to guide and constrain hypotheses about neural circuits, today's data may not be detailed enough for modelers to capture what is happening in biological systems.

This is an area where machine learning can provide a way forward.

By optimizing thousands or even billions of parameters, guided by connectome and other data, machine learning models can be trained to produce neural network behavior that is consistent with the behavior of real neural networks, as measured by functional recordings at cellular resolution.

Such machine learning models could combine information from traditional brain modeling techniques, such as the Hodgkin-Huxley model, which describes how action potentials (changes in voltage across the membrane) in neurons are initiated and propagated, with parameters that are optimized using connectivity maps, functional activity recordings, or other data sets obtained for the whole brain. Alternatively, machine learning models could use "black box" architectures that contain little explicitly specified biological knowledge but have billions or hundreds of billions of parameters, all of which are empirically optimized.
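To make the Hodgkin-Huxley reference concrete, here is a minimal sketch of a single-compartment Hodgkin-Huxley neuron integrated with the forward Euler method. It is an illustration only and does not come from the article: the function name `simulate_hh`, the step size, and the parameter values (the commonly quoted textbook values for the squid giant axon) are assumptions. In the hybrid approach described above, quantities such as the conductances would be among the parameters tuned against recorded data rather than fixed by hand.

```python
import math


def _vtrap(x, y):
    """Evaluate x / (1 - exp(-x / y)), using the limit y when x is close to 0."""
    if abs(x / y) < 1e-6:
        return y
    return x / (1.0 - math.exp(-x / y))


def _rates(v):
    """Voltage-dependent opening/closing rates (1/ms) for the m, h and n gates."""
    a_m = 0.1 * _vtrap(v + 40.0, 10.0)
    b_m = 4.0 * math.exp(-(v + 65.0) / 18.0)
    a_h = 0.07 * math.exp(-(v + 65.0) / 20.0)
    b_h = 1.0 / (1.0 + math.exp(-(v + 35.0) / 10.0))
    a_n = 0.01 * _vtrap(v + 55.0, 10.0)
    b_n = 0.125 * math.exp(-(v + 65.0) / 80.0)
    return a_m, b_m, a_h, b_h, a_n, b_n


def simulate_hh(i_inj, t_max=50.0, dt=0.01):
    """Return the membrane voltage trace (mV) for a constant current i_inj (uA/cm^2).

    Assumed textbook parameters; time is in ms, integrated by forward Euler.
    """
    c_m = 1.0                                # membrane capacitance, uF/cm^2
    g_na, g_k, g_l = 120.0, 36.0, 0.3        # maximal conductances, mS/cm^2
    e_na, e_k, e_l = 50.0, -77.0, -54.387    # reversal potentials, mV

    v = -65.0                                # start at the resting potential
    a_m, b_m, a_h, b_h, a_n, b_n = _rates(v)
    m = a_m / (a_m + b_m)                    # gates start at their steady state
    h = a_h / (a_h + b_h)
    n = a_n / (a_n + b_n)

    trace = [v]
    for _ in range(int(round(t_max / dt))):
        a_m, b_m, a_h, b_h, a_n, b_n = _rates(v)
        i_ion = (g_na * m ** 3 * h * (v - e_na)
                 + g_k * n ** 4 * (v - e_k)
                 + g_l * (v - e_l))
        dv = (i_inj - i_ion) / c_m
        m += dt * (a_m * (1.0 - m) - b_m * m)
        h += dt * (a_h * (1.0 - h) - b_h * h)
        n += dt * (a_n * (1.0 - n) - b_n * n)
        v += dt * dv
        trace.append(v)
    return trace


# With enough injected current the model fires action potentials;
# with no current it stays near rest.
print("peak voltage with current:", max(simulate_hh(10.0)))
print("peak voltage without current:", max(simulate_hh(0.0)))
```

Even this one-neuron model has nine biophysical constants, which hints at why whole-brain versions need data-driven parameter fitting.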

Researchers could evaluate such models by, for example, comparing their predictions of a system's neural activity with recordings from the actual biological system. Crucially, they would assess how the model's predictions compare when the program is given data it was not trained on, as is standard practice when evaluating machine learning systems.
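The train-then-test-on-unseen-data idea can be sketched on toy data as follows. Everything here is hypothetical and chosen for illustration: the "recordings" are simulated from a small ring network, the "connectome" is a boolean mask saying which connections exist, and the model is a plain linear next-step predictor fitted by least squares. A real study would use recorded activity and a far richer model; only the evaluation pattern is the point.

```python
import numpy as np


def simulate_ring(n_neurons=20, n_steps=2000, seed=0):
    """Toy 'recording': each neuron is driven by itself and one neighbour, plus noise."""
    rng = np.random.default_rng(seed)
    w_true = 0.5 * np.eye(n_neurons) + 0.4 * np.roll(np.eye(n_neurons), 1, axis=1)
    x = np.zeros((n_steps, n_neurons))
    for t in range(n_steps - 1):
        x[t + 1] = w_true @ x[t] + rng.standard_normal(n_neurons)
    return x, w_true


def fit_masked_linear_model(activity, mask):
    """Least-squares fit of x[t+1] ~ W @ x[t]; W[i, j] stays 0 where mask[i, j] is False."""
    past, future = activity[:-1], activity[1:]
    n_neurons = activity.shape[1]
    weights = np.zeros((n_neurons, n_neurons))
    for i in range(n_neurons):
        inputs = np.flatnonzero(mask[i])     # presynaptic partners allowed by the mask
        if inputs.size:
            coef, *_ = np.linalg.lstsq(past[:, inputs], future[:, i], rcond=None)
            weights[i, inputs] = coef
    return weights


def heldout_r2(weights, activity):
    """Fraction of variance in next-step activity explained by the model."""
    past, future = activity[:-1], activity[1:]
    pred = past @ weights.T
    ss_res = np.sum((future - pred) ** 2)
    ss_tot = np.sum((future - future.mean(axis=0)) ** 2)
    return 1.0 - ss_res / ss_tot


activity, w_true = simulate_ring()
mask = w_true != 0                           # stand-in for a connectome
train, test = activity[:1500], activity[1500:]
w_fit = fit_masked_linear_model(train, mask)
print("held-out R^2:", round(heldout_r2(w_fit, test), 2))
```

The score is computed only on the final 500 time steps, which the fit never saw, so a model that merely memorized the training segment would not score well.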

Axonal projections of neurons in the mouse brain. (Source: Adam Glaser, Jayaram Chandrashekar, Karel Svoboda, Allen Institute for Neural Dynamics)

This approach will enable more rigorous modeling of brains containing thousands or more neurons. For example, researchers will be able to evaluate whether simpler models that are easier to compute simulate neural networks better than more complex models that provide more detailed biophysical information, and vice versa.

Machine learning is already being used in this way to improve understanding of other extremely complex systems. For example, since the 1950s, weather prediction systems have typically relied on carefully constructed mathematical models of meteorological phenomena, and modern systems are the result of iterative refinement of such models by hundreds of researchers. Over the past five years or so, however, researchers have developed several weather prediction systems that use machine learning. These contain fewer built-in assumptions about, for example, how pressure gradients drive changes in wind speed and how wind moves moisture through the atmosphere. Instead, millions of parameters are optimized through machine learning to produce simulated weather behavior that is consistent with a database of past weather patterns.

This way of doing things does come with some challenges. Even if a model makes accurate predictions, it can be difficult to explain how it does so. In addition, models often fail to predict scenarios that are not covered by their training data. A weather model trained to predict the next few days has difficulty extrapolating to forecasts weeks or months into the future. But in some cases, such as forecasting rainfall several hours ahead, machine learning methods have already outperformed traditional ones. Machine learning models also have practical advantages: they use simpler underlying code and can be used by scientists with less specialized meteorological knowledge.

For brain modeling, on the one hand, this approach could help fill some of the gaps in current datasets and reduce the need for more detailed measurements of individual biological components, such as individual neurons. On the other hand, as more comprehensive data sets become available, incorporating the data into the model will become simple.

Think Big

In order to realize this idea, some challenges need to be solved.

Machine learning programs are only as good as the data used to train and evaluate them. Neuroscientists should therefore aim to obtain data sets from the entire brain of a sample, or even from the entire body if this becomes more feasible. Although it is easier to collect data from only certain parts of the brain, using machine learning to model a highly interconnected system such as a neural network is unlikely to yield useful information if many parts of the system are missing from the underlying data.

Researchers should also work to obtain anatomical maps of neural connections and functional recordings (and perhaps, in the future, gene expression maps) of whole brains from the same sample. Currently, any given group tends to focus on obtaining one or the other, rather than both.

With only 302 neurons, the C. elegans nervous system may be hardwired enough for researchers to assume that a connectivity map obtained from one sample will be the same for any other sample, although some studies suggest otherwise. But for larger nervous systems, such as those of Drosophila melanogaster and zebrafish larvae, the connectome varies significantly between samples, so brain models should be trained on structural and functional data obtained from the same sample.

Currently, this can only be achieved in two common model organisms. The bodies of C. elegans and zebrafish larvae are transparent, meaning researchers can make functional recordings from the organism's entire brain and pinpoint the activity of individual neurons. Following such recordings, the animals can be killed immediately, embedded in resin and sectioned, and anatomical measurements of neural connections made. In the future, however, researchers could expand the range of organisms for which such combined data acquisition is possible, for example, by developing new non-invasive methods, possibly using ultrasound, to record neural activity at high resolution.

Obtaining such multimodal data sets from the same sample requires extensive collaboration among researchers, investment in large team science, and increased support from funding agencies for more comprehensive efforts. But there is precedent for this approach, such as the MICrONS project of the U.S. Intelligence Advanced Research Projects Activity (IARPA), which obtained functional and anatomical data for 1 cubic millimeter of mouse brain between 2016 and 2021.

In addition to obtaining these data, neuroscientists need to agree on key modeling goals and on quantitative metrics for measuring progress. Should the goal of a model be to predict, on the basis of past states, the behavior of individual neurons or of the entire brain? Should the key metric be the activity of a single neuron, or the percentage of active neurons among hundreds of thousands? Likewise, what constitutes an accurate reproduction of the neural activity seen in a biological system? Formal, agreed-upon benchmarks are essential for comparing modeling approaches and tracking progress over time.

Finally, to present brain modeling challenges to diverse communities, including computational neuroscientists and machine learning experts, researchers need to clarify to the broader scientific community which modeling tasks are the highest priority and which metrics should be used to evaluate the performance of the model. WeatherBench, an online platform that provides a framework for evaluating and comparing weather forecast models, provides a useful template.

Complexity of Key Technologies

Some will question, and rightly so, whether machine learning approaches to brain modeling are scientifically useful. Could the problem of trying to understand how the brain works simply be replaced by the problem of trying to understand how a large artificial network works?

It is encouraging, however, that similar approaches have already been used in the branch of neuroscience concerned with how the brain processes and encodes sensory stimuli, such as sight and smell. Researchers are increasingly using classically modeled neural networks, in which some biological details are specified, in combination with machine learning systems. The latter are trained on large visual or audio data sets to reproduce the visual or auditory abilities of the nervous system, such as image recognition. The resulting networks show striking similarities to their biological counterparts, but are easier to analyze and interrogate than real neural networks.

For now, perhaps it is enough to ask whether data from current brain mapping and other efforts can be used to train machine learning models to reproduce neural activity that corresponds to what is seen in biological systems. Here, even failure would be interesting, because it would suggest that mapping research must go deeper.

The above is the detailed content of Google Scientist Nature comments: How artificial intelligence can better understand the brain. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Breaking through the boundaries of traditional defect detection, 'Defect Spectrum' achieves ultra-high-precision and rich semantic industrial defect detection for the first time.

Jul 26, 2024 pm 05:38 PM

Breaking through the boundaries of traditional defect detection, 'Defect Spectrum' achieves ultra-high-precision and rich semantic industrial defect detection for the first time.

Jul 26, 2024 pm 05:38 PM

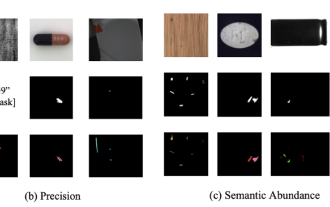

In modern manufacturing, accurate defect detection is not only the key to ensuring product quality, but also the core of improving production efficiency. However, existing defect detection datasets often lack the accuracy and semantic richness required for practical applications, resulting in models unable to identify specific defect categories or locations. In order to solve this problem, a top research team composed of Hong Kong University of Science and Technology Guangzhou and Simou Technology innovatively developed the "DefectSpectrum" data set, which provides detailed and semantically rich large-scale annotation of industrial defects. As shown in Table 1, compared with other industrial data sets, the "DefectSpectrum" data set provides the most defect annotations (5438 defect samples) and the most detailed defect classification (125 defect categories

NVIDIA dialogue model ChatQA has evolved to version 2.0, with the context length mentioned at 128K

Jul 26, 2024 am 08:40 AM

NVIDIA dialogue model ChatQA has evolved to version 2.0, with the context length mentioned at 128K

Jul 26, 2024 am 08:40 AM

The open LLM community is an era when a hundred flowers bloom and compete. You can see Llama-3-70B-Instruct, QWen2-72B-Instruct, Nemotron-4-340B-Instruct, Mixtral-8x22BInstruct-v0.1 and many other excellent performers. Model. However, compared with proprietary large models represented by GPT-4-Turbo, open models still have significant gaps in many fields. In addition to general models, some open models that specialize in key areas have been developed, such as DeepSeek-Coder-V2 for programming and mathematics, and InternVL for visual-language tasks.

Google AI won the IMO Mathematical Olympiad silver medal, the mathematical reasoning model AlphaProof was launched, and reinforcement learning is so back

Jul 26, 2024 pm 02:40 PM

Google AI won the IMO Mathematical Olympiad silver medal, the mathematical reasoning model AlphaProof was launched, and reinforcement learning is so back

Jul 26, 2024 pm 02:40 PM

For AI, Mathematical Olympiad is no longer a problem. On Thursday, Google DeepMind's artificial intelligence completed a feat: using AI to solve the real question of this year's International Mathematical Olympiad IMO, and it was just one step away from winning the gold medal. The IMO competition that just ended last week had six questions involving algebra, combinatorics, geometry and number theory. The hybrid AI system proposed by Google got four questions right and scored 28 points, reaching the silver medal level. Earlier this month, UCLA tenured professor Terence Tao had just promoted the AI Mathematical Olympiad (AIMO Progress Award) with a million-dollar prize. Unexpectedly, the level of AI problem solving had improved to this level before July. Do the questions simultaneously on IMO. The most difficult thing to do correctly is IMO, which has the longest history, the largest scale, and the most negative

Nature's point of view: The testing of artificial intelligence in medicine is in chaos. What should be done?

Aug 22, 2024 pm 04:37 PM

Nature's point of view: The testing of artificial intelligence in medicine is in chaos. What should be done?

Aug 22, 2024 pm 04:37 PM

Editor | ScienceAI Based on limited clinical data, hundreds of medical algorithms have been approved. Scientists are debating who should test the tools and how best to do so. Devin Singh witnessed a pediatric patient in the emergency room suffer cardiac arrest while waiting for treatment for a long time, which prompted him to explore the application of AI to shorten wait times. Using triage data from SickKids emergency rooms, Singh and colleagues built a series of AI models that provide potential diagnoses and recommend tests. One study showed that these models can speed up doctor visits by 22.3%, speeding up the processing of results by nearly 3 hours per patient requiring a medical test. However, the success of artificial intelligence algorithms in research only verifies this

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

Editor |ScienceAI Question Answering (QA) data set plays a vital role in promoting natural language processing (NLP) research. High-quality QA data sets can not only be used to fine-tune models, but also effectively evaluate the capabilities of large language models (LLM), especially the ability to understand and reason about scientific knowledge. Although there are currently many scientific QA data sets covering medicine, chemistry, biology and other fields, these data sets still have some shortcomings. First, the data form is relatively simple, most of which are multiple-choice questions. They are easy to evaluate, but limit the model's answer selection range and cannot fully test the model's ability to answer scientific questions. In contrast, open-ended Q&A

Training with millions of crystal data to solve the crystallographic phase problem, the deep learning method PhAI is published in Science

Aug 08, 2024 pm 09:22 PM

Training with millions of crystal data to solve the crystallographic phase problem, the deep learning method PhAI is published in Science

Aug 08, 2024 pm 09:22 PM

Editor |KX To this day, the structural detail and precision determined by crystallography, from simple metals to large membrane proteins, are unmatched by any other method. However, the biggest challenge, the so-called phase problem, remains retrieving phase information from experimentally determined amplitudes. Researchers at the University of Copenhagen in Denmark have developed a deep learning method called PhAI to solve crystal phase problems. A deep learning neural network trained using millions of artificial crystal structures and their corresponding synthetic diffraction data can generate accurate electron density maps. The study shows that this deep learning-based ab initio structural solution method can solve the phase problem at a resolution of only 2 Angstroms, which is equivalent to only 10% to 20% of the data available at atomic resolution, while traditional ab initio Calculation

SOTA performance, Xiamen multi-modal protein-ligand affinity prediction AI method, combines molecular surface information for the first time

Jul 17, 2024 pm 06:37 PM

SOTA performance, Xiamen multi-modal protein-ligand affinity prediction AI method, combines molecular surface information for the first time

Jul 17, 2024 pm 06:37 PM

Editor | KX In the field of drug research and development, accurately and effectively predicting the binding affinity of proteins and ligands is crucial for drug screening and optimization. However, current studies do not take into account the important role of molecular surface information in protein-ligand interactions. Based on this, researchers from Xiamen University proposed a novel multi-modal feature extraction (MFE) framework, which for the first time combines information on protein surface, 3D structure and sequence, and uses a cross-attention mechanism to compare different modalities. feature alignment. Experimental results demonstrate that this method achieves state-of-the-art performance in predicting protein-ligand binding affinities. Furthermore, ablation studies demonstrate the effectiveness and necessity of protein surface information and multimodal feature alignment within this framework. Related research begins with "S

Automatically identify the best molecules and reduce synthesis costs. MIT develops a molecular design decision-making algorithm framework

Jun 22, 2024 am 06:43 AM

Automatically identify the best molecules and reduce synthesis costs. MIT develops a molecular design decision-making algorithm framework

Jun 22, 2024 am 06:43 AM

Editor | Ziluo AI’s use in streamlining drug discovery is exploding. Screen billions of candidate molecules for those that may have properties needed to develop new drugs. There are so many variables to consider, from material prices to the risk of error, that weighing the costs of synthesizing the best candidate molecules is no easy task, even if scientists use AI. Here, MIT researchers developed SPARROW, a quantitative decision-making algorithm framework, to automatically identify the best molecular candidates, thereby minimizing synthesis costs while maximizing the likelihood that the candidates have the desired properties. The algorithm also determined the materials and experimental steps needed to synthesize these molecules. SPARROW takes into account the cost of synthesizing a batch of molecules at once, since multiple candidate molecules are often available