Google's large model research sparks fierce controversy: its generalization beyond the training data is questioned, and netizens say the AGI singularity may be delayed


A new result from Google DeepMind has stirred up widespread controversy in the Transformer field:

The Transformer's generalization ability does not extend to content beyond its training data.

This conclusion has not yet been independently verified, but it has already alarmed many big names. Francois Chollet, the father of Keras, for example, said that if it holds up, it would be a big deal in the world of large models.

The Transformer, proposed by Google, is the infrastructure behind today's large models; it is the "T" in the familiar name GPT.

Large models built on it show strong in-context learning capabilities: they can quickly learn from examples and complete new tasks.

But now researchers, also from Google, appear to have pointed out a fatal flaw: it is powerless beyond its training data, that is, beyond the knowledge humans already have.

For a while, many practitioners felt that AGI had slipped out of reach once again.

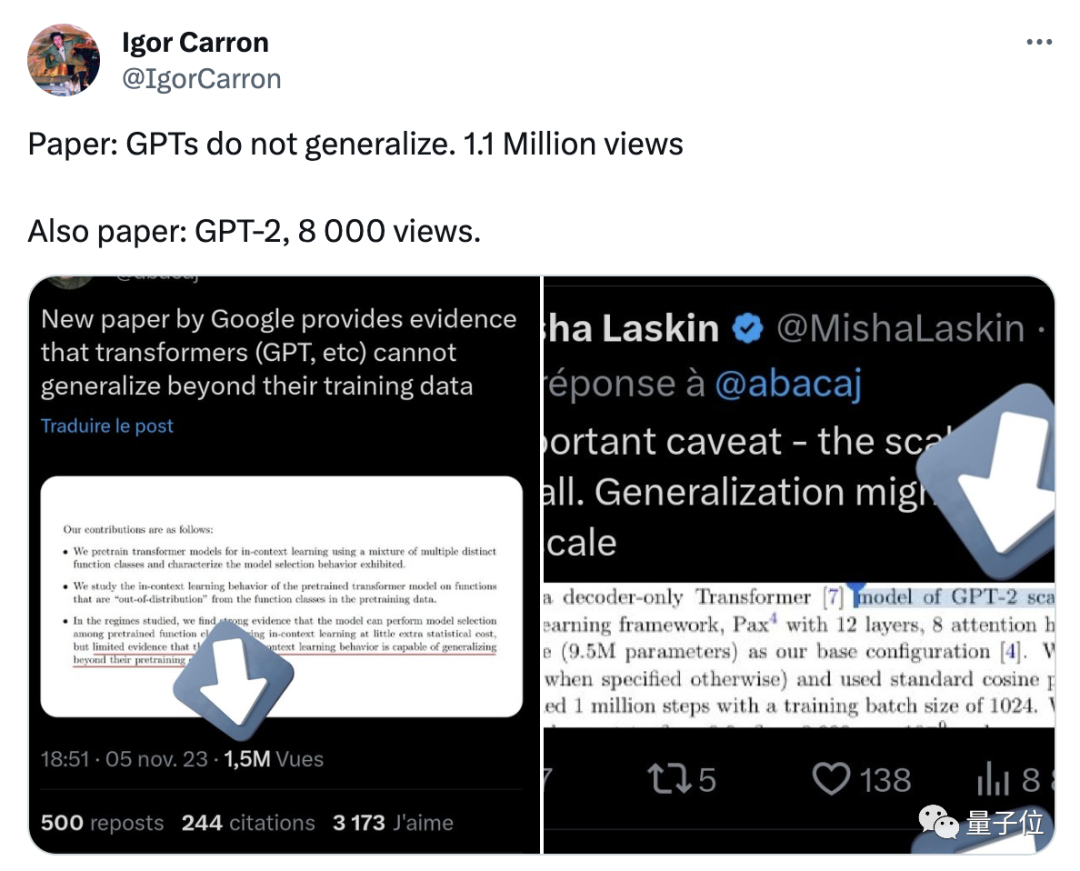

Some netizens pointed out key details of the paper that had been overlooked. For example, the experiments only involve a model at GPT-2 scale, and the training data is not very rich.

As time went on, more netizens who had studied the paper carefully pointed out that there is nothing wrong with the research conclusion itself; rather, people had over-interpreted it.

After the paper sparked heated discussion among netizens, one of the authors publicly made two clarifications:

First, the experiments used a simple Transformer that is neither a "large" model nor a language model;

Second, the model can learn new tasks, but it cannot generalize to new types of tasks.

Afterwards, another netizen reproduced the experiment in Colab but got completely different results.

So let's first take a look at the paper, and then at what Samuel, who reported the different results, had to say.

New functions are almost impossible to predict

In the experiments, the authors used a JAX-based machine learning framework to train a decoder-only Transformer close in size to GPT-2.

The model has 12 layers, 8 attention heads, and an embedding dimension of 256, for about 9.5 million parameters.
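As a rough sanity check on the 9.5 million figure, the sketch below counts the weights in a stack of decoder blocks. It assumes a standard GPT-2-style block (Q, K, V and output projections of size d×d, plus an MLP with hidden width 4d) and ignores the input read-in, output read-out and positional embeddings, whose exact form the article does not give. The number of attention heads does not enter the count: 8 heads simply split the 256 dimensions into 8 slices of 32.

```python
def approx_decoder_params(n_layers: int, d_model: int, mlp_ratio: int = 4,
                          include_biases: bool = True) -> int:
    """Approximate parameter count of a stack of GPT-2-style decoder blocks.

    Assumption: each block has Q/K/V/output projections (4 * d^2 weights),
    a two-layer MLP with hidden width mlp_ratio * d (2 * mlp_ratio * d^2 weights)
    and two LayerNorms. Embeddings and read-in/read-out layers are ignored.
    """
    d = d_model
    attn_weights = 4 * d * d
    mlp_weights = 2 * mlp_ratio * d * d
    per_layer = attn_weights + mlp_weights
    if include_biases:
        attn_biases = 4 * d                 # biases of the Q, K, V and output projections
        mlp_biases = mlp_ratio * d + d      # hidden layer + output layer
        layernorm_params = 2 * (2 * d)      # two LayerNorms, each with scale and shift
        per_layer += attn_biases + mlp_biases + layernorm_params
    return n_layers * per_layer


if __name__ == "__main__":
    # 12 layers and embedding dimension 256, as reported for the paper's model
    print(approx_decoder_params(12, 256, include_biases=False))
    print(approx_decoder_params(12, 256))
```

Both estimates (9,437,184 without biases, 9,477,120 with them) land near the reported 9.5 million, which suggests most of the parameters sit in the 12 blocks, roughly 12·L·d² in total; the exact figure depends on embedding and read-out details not given here.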

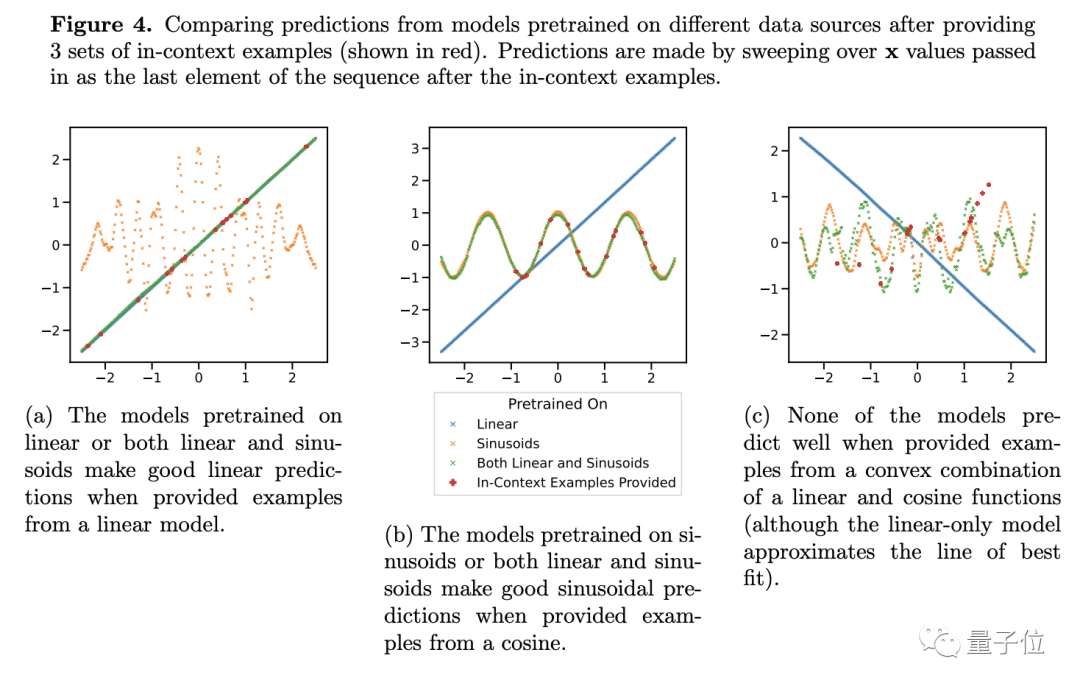

To test its generalization ability, the authors chose functions as the test objects, feeding linear functions and sine functions to the model as training data.
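The article does not spell out the data format. A common setup for this kind of experiment, and the assumption behind the sketch below, is in-context regression: each training sequence interleaves inputs x_i with outputs f(x_i) for one randomly drawn function, and the model learns to predict the next output. The slope distribution, the frequency range [0.5, 2.0] and the input range [-π, π] are illustrative values, not the paper's.

```python
import numpy as np

# Illustrative assumptions, not values from the paper.
FREQ_RANGE = (0.5, 2.0)
X_RANGE = (-np.pi, np.pi)


def sample_function(rng: np.random.Generator, family: str):
    """Draw one random function from a pretraining family ("linear" or "sine")."""
    if family == "linear":
        k = rng.normal()                    # random slope
        return lambda x: k * x
    if family == "sine":
        w = rng.uniform(*FREQ_RANGE)        # random frequency
        return lambda x: np.sin(w * x)
    raise ValueError(f"unknown family: {family}")


def make_sequence(rng: np.random.Generator, family: str, n_points: int = 16) -> np.ndarray:
    """Build one in-context sequence [x1, f(x1), x2, f(x2), ...] for a single function."""
    f = sample_function(rng, family)
    xs = rng.uniform(*X_RANGE, size=n_points)
    ys = f(xs)
    return np.stack([xs, ys], axis=1).reshape(-1)   # interleave inputs and outputs


def pretraining_batch(rng: np.random.Generator, batch_size: int = 8,
                      families=("linear", "sine")) -> np.ndarray:
    """Each sequence comes from one randomly chosen family: a mixture of function classes."""
    picks = rng.integers(len(families), size=batch_size)
    return np.stack([make_sequence(rng, families[i]) for i in picks])


if __name__ == "__main__":
    batch = pretraining_batch(np.random.default_rng(0))
    print(batch.shape)
```

Mixing the two families inside every batch is what lets the model see both kinds of functions during training; whether the paper weights the families equally is not stated here.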

The model has seen both kinds of functions, so its predictions on them are naturally very good. Problems arise, however, when the researchers take convex combinations of linear and sine functions.

A convex combination is nothing mysterious. The authors constructed functions of the form $f(x) = a \cdot kx + (1-a)\sin(x)$, which to us is simply the two functions added together in fixed proportions.
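Written out, the mixed function and a test sequence built from it might look like the sketch below. The weight a and slope k are free parameters, and the input range [-π, π] is an assumption carried over from a typical in-context regression setup; the point is that every single point comes from a function the model never saw as a whole during training.

```python
import numpy as np


def convex_combination(a: float, k: float):
    """Return f(x) = a*k*x + (1 - a)*sin(x); a convex combination needs 0 <= a <= 1."""
    if not 0.0 <= a <= 1.0:
        raise ValueError("mixing weight a must lie in [0, 1]")
    return lambda x: a * k * x + (1 - a) * np.sin(x)


def make_test_sequence(a: float, k: float, n_points: int = 16, seed: int = 0) -> np.ndarray:
    """Interleave inputs and outputs of the mixed function: [x1, f(x1), x2, f(x2), ...]."""
    rng = np.random.default_rng(seed)
    f = convex_combination(a, k)
    xs = rng.uniform(-np.pi, np.pi, size=n_points)   # assumed input range
    return np.stack([xs, f(xs)], axis=1).reshape(-1)


if __name__ == "__main__":
    f = convex_combination(0.5, 2.0)
    print(f(np.pi / 2))   # 0.5*2*(pi/2) + 0.5*1 = pi/2 + 0.5 ≈ 2.0708
```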

We see it that way because our brains have this kind of generalization ability, but large models are different.

To a model that has only learned linear and sine functions, this simple sum looks novel.

On these new functions, the Transformer's predictions are almost entirely inaccurate (see Figure 4c), so the authors conclude that the model lacks generalization ability on functions.

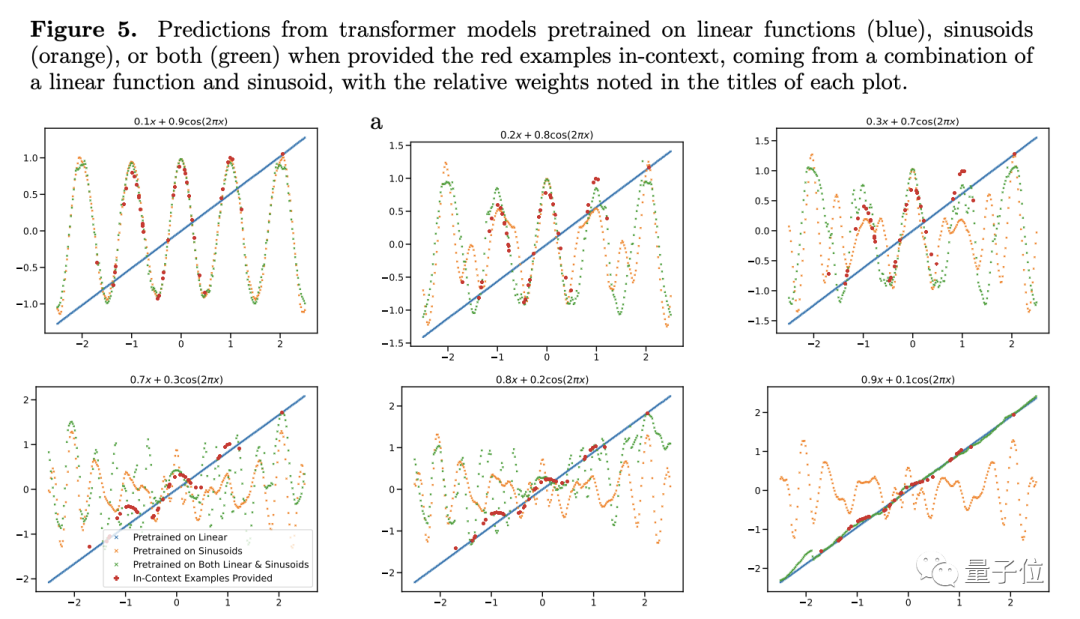

To further verify the conclusion, the authors adjusted the weight of the linear or sine component, but even so the Transformer's prediction performance did not change significantly.

There is only one exception: when the weight of one of the terms is close to 1, the model's predictions match the ground truth more closely.

A weight of 1 means the unfamiliar new function simply becomes a function seen during training, so such data obviously says nothing about the model's generalization ability.
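Why a weight near 1 (or 0) does not test generalization can be made concrete. Over a fixed input range, the gap between the mixture and its linear part is $(1-a)^2 C$ and the gap to its sine part is $a^2 C$, with the same constant $C = \operatorname{mean}\big((\sin x - kx)^2\big)$, so the distance to the nearer component is $\min(a, 1-a)^2 \, C$: zero at the endpoints and largest at $a = 0.5$. The sketch below checks this numerically. It is an illustration only, under an assumed range of [-π, π], and it measures distance to the two specific components, not to the whole linear or sine family.

```python
import numpy as np


def mixture(x: np.ndarray, a: float, k: float) -> np.ndarray:
    """The convex combination a*k*x + (1 - a)*sin(x)."""
    return a * k * x + (1 - a) * np.sin(x)


def distance_to_nearest_component(a: float, k: float = 1.0, n: int = 201) -> float:
    """Mean squared gap between the mixture and the closer of its pure parts (k*x or sin x)."""
    x = np.linspace(-np.pi, np.pi, n)        # assumed evaluation range
    f = mixture(x, a, k)
    d_linear = np.mean((f - k * x) ** 2)      # equals (1 - a)^2 * C
    d_sine = np.mean((f - np.sin(x)) ** 2)    # equals a^2 * C
    return float(min(d_linear, d_sine))


if __name__ == "__main__":
    for a in (0.0, 0.1, 0.3, 0.5, 0.7, 0.9, 1.0):
        print(f"a={a:.1f}  distance={distance_to_nearest_component(a):.4f}")
```

Test tasks with a close to 0 or 1 sit almost on top of the pretraining functions, which is consistent with the model doing well only there.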

Further experiments showed that the Transformer is very sensitive not only to the type of function: even a function of a familiar type can become unfamiliar under different conditions.

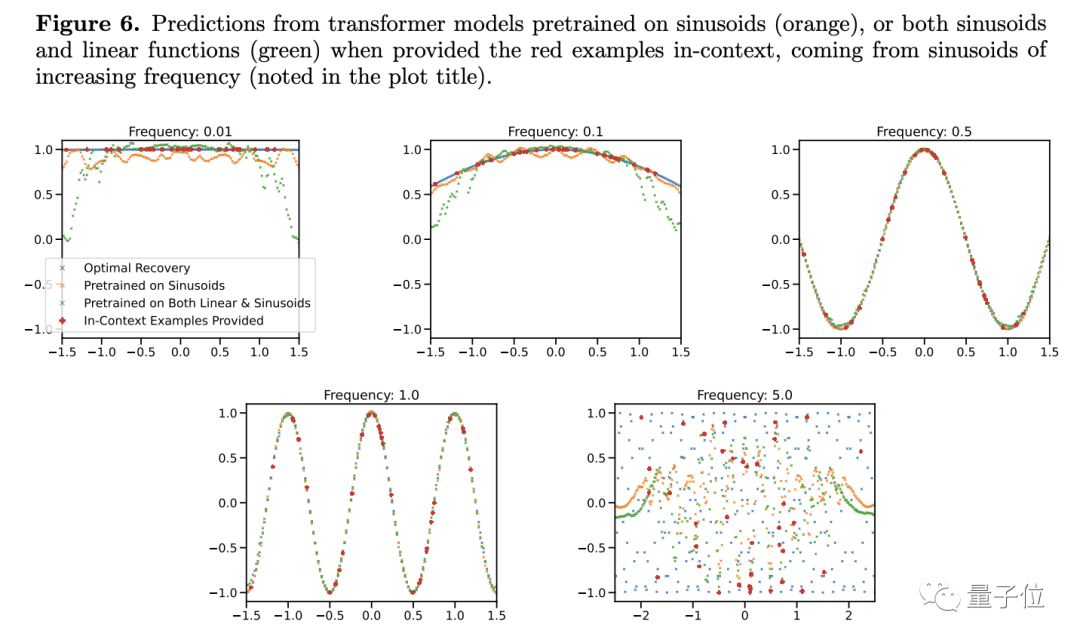

The researchers found that changing the frequency of a sine function, even for such a simple function, changes the model's predictions.

Only when the frequency is close to those in the training data does the model give fairly accurate predictions; when the frequency is too high or too low, the predictions deviate seriously...
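The paper's title speaks of "narrow model selection capabilities": the model behaves as if it picks the best-fitting function among those it was pretrained on. The toy below is not a Transformer and not the authors' code; it is a hypothetical stand-in that does only that kind of selection. It knows sines on an assumed grid of frequencies from 0.5 to 2.0, fits the best one to the in-context examples by least squares, and predicts new query points with it.

```python
import numpy as np

# Hypothetical pretraining frequencies: the toy predictor only "knows" sines with these values.
TRAIN_FREQS = np.linspace(0.5, 2.0, 16)


def select_known_sine(xs: np.ndarray, ys: np.ndarray):
    """Pick the pretraining frequency, with a least-squares amplitude, that best fits the context."""
    best_err, best_w, best_amp = np.inf, None, None
    for w in TRAIN_FREQS:
        basis = np.sin(w * xs)
        amp = basis @ ys / (basis @ basis)        # least-squares amplitude for this frequency
        err = np.mean((amp * basis - ys) ** 2)
        if err < best_err:
            best_err, best_w, best_amp = err, w, amp
    return lambda x: best_amp * np.sin(best_w * x)


def query_error(true_freq: float, n_context: int = 20, seed: int = 0) -> float:
    """Mean squared error on fresh query points for a sine of frequency true_freq."""
    rng = np.random.default_rng(seed)
    xs = rng.uniform(-np.pi, np.pi, size=n_context)   # in-context examples
    predict = select_known_sine(xs, np.sin(true_freq * xs))
    xq = rng.uniform(-np.pi, np.pi, size=200)          # query points
    return float(np.mean((predict(xq) - np.sin(true_freq * xq)) ** 2))


if __name__ == "__main__":
    for freq in (float(TRAIN_FREQS[5]), 1.9, 3.0, 5.0):
        print(f"frequency={freq:.2f}  query MSE={query_error(freq):.4f}")
```

A frequency on the grid is predicted essentially perfectly, while one well above the range is not. The specific numbers depend on the assumed grid and ranges; what the toy shows is the shape of the behaviour, where a predictor restricted to choosing among pretraining functions does well only near them.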

Accordingly, the authors argue: if a large model is at a loss as soon as conditions differ even slightly, doesn't that mean its generalization ability is poor?

The authors also describe some limitations of the study, including how to apply observations on function data to tokenized natural-language problems.

The team tried similar experiments on language models but ran into obstacles: how to properly define task families (the equivalent of the function types here), convex combinations, and so on remain open problems.

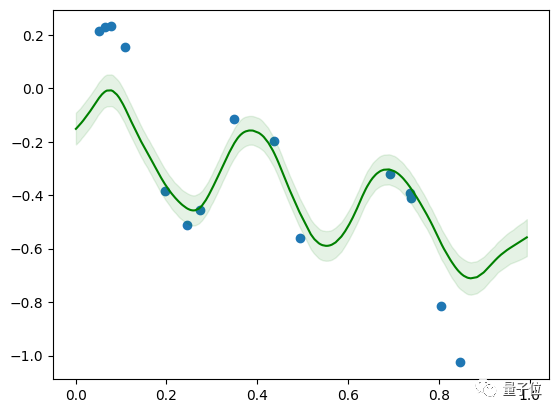

Samuel's model, by contrast, is small, with only 4 layers, yet after just 5 minutes of training on Colab it could handle combinations of linear and sine functions.

So what if it cannot generalize?

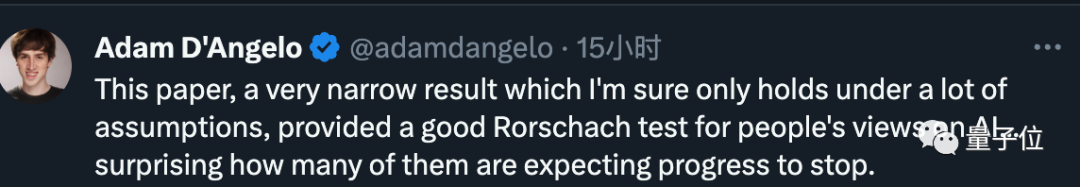

Considering the article as a whole, Quora's CEO said the paper's conclusion is very narrow and holds only if many assumptions are true.

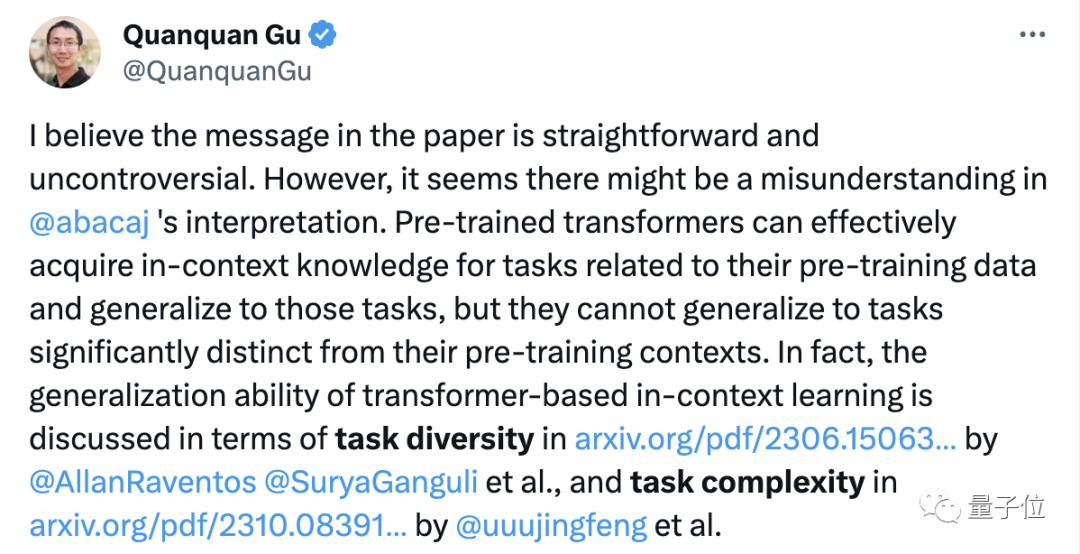

Sloan Research Fellow and UCLA professor Gu Quanquan said that the paper's conclusion itself is not controversial, but it should not be over-interpreted.

According to previous research, Transformer models fail to generalize only when faced with content significantly different from their pretraining data. In fact, the generalization ability of large models is usually evaluated by the diversity and complexity of the tasks they handle.

As for a careful investigation of the Transformer's generalization ability, it may take a while longer before the dust settles.

But even if it really does lack generalization ability, so what?

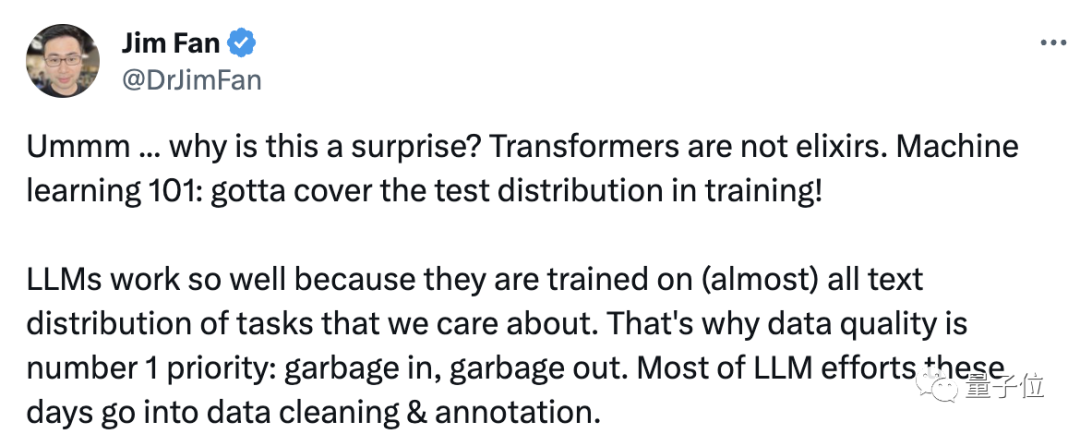

NVIDIA AI scientist Jim Fan said this phenomenon is actually not surprising, because the Transformer was never a panacea. Large models perform well because their training data happens to be exactly the content we care about.

Jim added that this is like training a vision model on 100 billion photos of cats and dogs, asking it to identify airplanes, and then discovering, wow, it really doesn't recognize them.

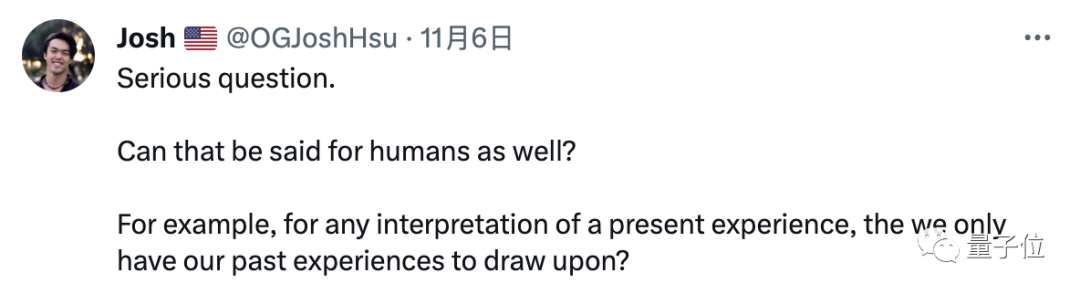

When facing unknown tasks, it is not only large models that may fail to find solutions; humans may fail too. Does that mean humans also lack generalization ability?

So in a goal-oriented process, whether for a large model or a human, the ultimate goal is to solve the problem, and generalization is only a means.

Put another way: if generalization ability is insufficient, then keep training until there is no data left outside the training samples.

So, what do you think of this research?

Paper address: https://arxiv.org/abs/2311.00871
