NVIDIA H200 released: HBM capacity up 76%, the most powerful AI chip boosts large-model performance by up to 90%

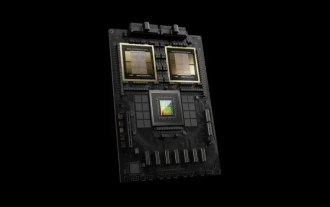

According to news on November 14, Nvidia officially released the new H200 GPU at the Supercomputing 23 (SC23) conference on the morning of the 13th, local time, and also updated its GH200 product line.

The H200 is still built on the existing Hopper architecture used by the H100, but adds more high-bandwidth memory (HBM3e) to better handle the large data sets required to develop and deploy artificial intelligence. As a result, overall performance when running large models improves by 60% to 90% compared with the previous-generation H100. The updated GH200 will also power the next generation of AI supercomputers, with more than 200 exaflops of AI computing power expected to come online in 2024.

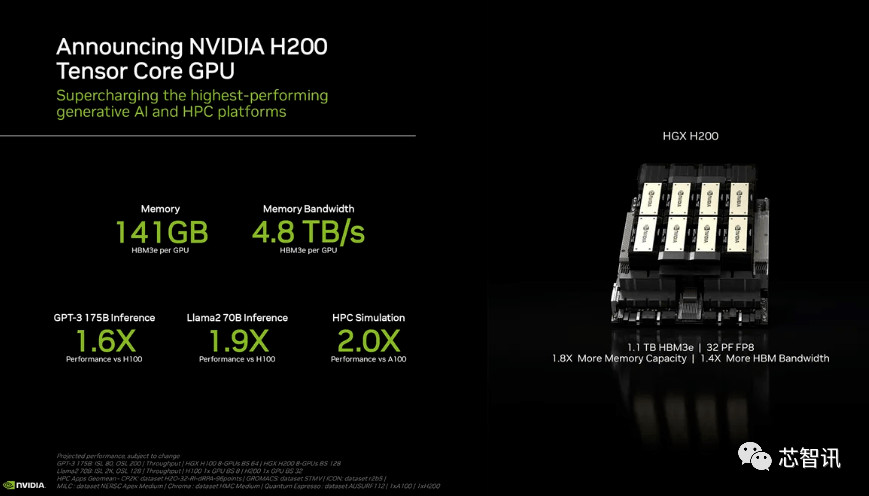

H200: HBM capacity increased by 76%, performance of large models improved by 90%

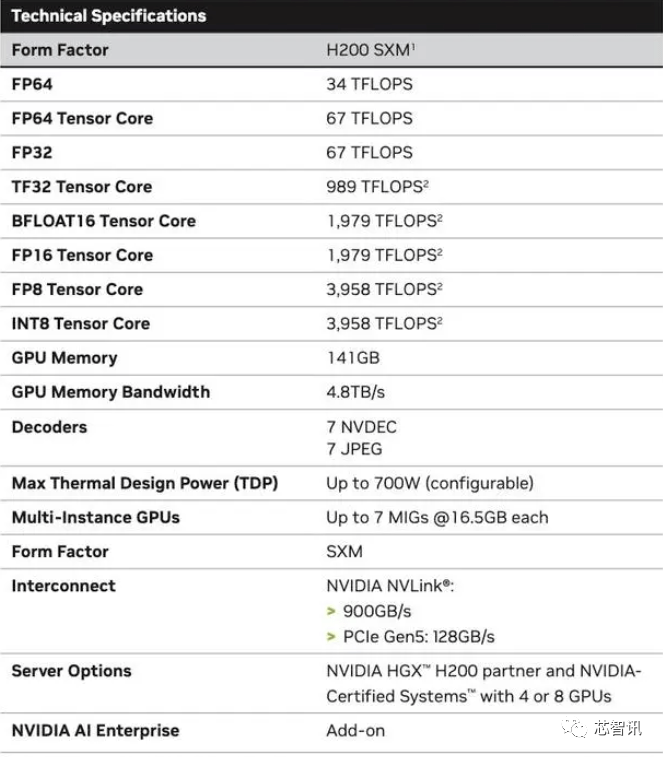

Specifically, the new H200 offers up to 141GB of HBM3e memory running at an effective data rate of about 6.25 Gbps, for a total bandwidth of 4.8 TB/s per GPU across six HBM3e stacks. This is a major improvement over the previous-generation H100 (80GB of HBM3 and 3.35 TB/s of bandwidth), with HBM capacity up by about 76%. According to official data, when running large models the H200 delivers an improvement of 60% (GPT-3 175B) to 90% (Llama 2 70B) over the H100.
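The 4.8 TB/s figure can be reproduced from the stack count and per-pin data rate. The sketch below assumes each HBM3e stack exposes the standard 1024-bit HBM interface; the article itself only gives the stack count and the ~6.25 Gbps rate, so the bus width is an assumption.

```python
# Sketch: aggregate HBM bandwidth = stacks * bus width * per-pin data rate.
# ASSUMPTION: 1024-bit interface per HBM3e stack (not stated in the article).

BITS_PER_BYTE = 8


def hbm_bandwidth_tbps(stacks: int, gbps_per_pin: float, bus_bits: int = 1024) -> float:
    """Return aggregate bandwidth in TB/s (decimal units)."""
    total_gbit_per_s = stacks * bus_bits * gbps_per_pin  # Gbit/s across all data pins
    return total_gbit_per_s / BITS_PER_BYTE / 1000        # Gbit/s -> GB/s -> TB/s


h200_bw = hbm_bandwidth_tbps(stacks=6, gbps_per_pin=6.25)
print(f"H200: {h200_bw:.2f} TB/s")  # 6 * 1024 * 6.25 / 8 / 1000 = 4.80
```

Under that assumption the numbers quoted by Nvidia are internally consistent: six stacks at 6.25 Gbps land exactly on 4.8 TB/s.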

Some H100 configurations do offer more memory, such as the H100 NVL, which pairs two boards for a total of 188GB (94GB per GPU). Compared with the H100 SXM variant, however, the new H200 SXM offers 76% more memory capacity and 43% more bandwidth.
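The percentage gains quoted above follow directly from the spec figures in the article. This minimal sketch recomputes them, and also shows the smaller gap against the H100 NVL's 94GB per GPU:

```python
def pct_increase(new: float, old: float) -> float:
    """Percentage increase of `new` over `old`."""
    return (new / old - 1) * 100


# Figures as quoted in the article (H200 SXM vs H100 SXM).
capacity_gain = pct_increase(141, 80)     # GB of HBM
bandwidth_gain = pct_increase(4.8, 3.35)  # TB/s
# Against the H100 NVL's 94GB per GPU, the capacity gap is smaller.
nvl_gain = pct_increase(141, 94)

print(f"capacity +{capacity_gain:.1f}%, bandwidth +{bandwidth_gain:.1f}%, vs NVL +{nvl_gain:.1f}%")
```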

It should be noted that the H200's raw compute performance does not appear to have changed much. The only slide Nvidia showed that reflected compute performance was based on an HGX H200 configuration with eight GPUs, for a total of "32 PFLOPS FP8". The original H100 already provides 3,958 teraflops of FP8 compute, so eight of them also deliver roughly 32 PFLOPS of FP8.
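The arithmetic behind that comparison is a single multiplication; this sketch uses only the per-GPU FP8 figure quoted in the article:

```python
H100_FP8_TFLOPS = 3958  # per-GPU FP8 figure quoted in the article
GPUS_PER_HGX = 8        # GPUs in the HGX configuration Nvidia showed

hgx_pflops = H100_FP8_TFLOPS * GPUS_PER_HGX / 1000  # TFLOPS -> PFLOPS
print(f"8 x H100: {hgx_pflops:.1f} PFLOPS FP8")     # 31.7, which rounds to Nvidia's "32"
```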

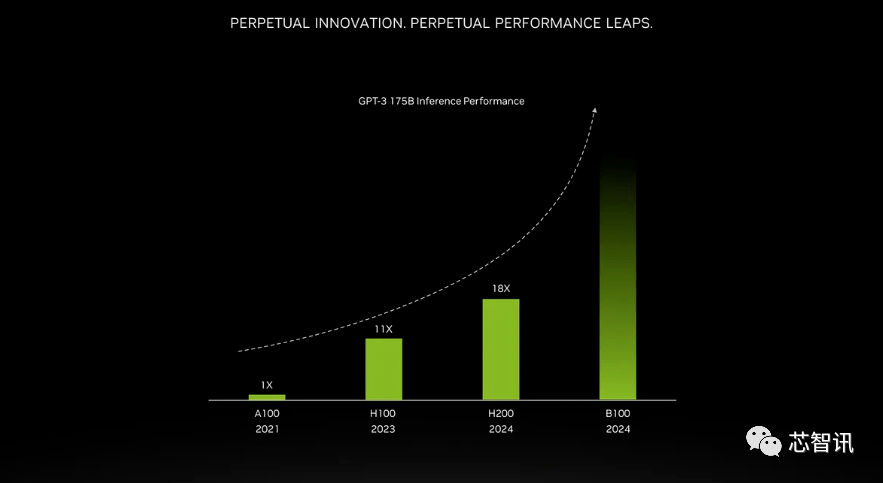

How much higher-bandwidth memory helps depends on the workload. Large models such as GPT-3 benefit greatly from the increase in HBM capacity. According to Nvidia, the H200 is up to 18 times faster than the original A100 when running GPT-3, while the H100 is about 11 times faster than the A100. In addition, a teaser for the upcoming Blackwell B100 shows a taller bar that fades to black, roughly twice the height of the H200's.
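If both GPT-3 multipliers (18x and about 11x) are read against the same A100 baseline, the implied H200-over-H100 speedup can be cross-checked against the 60% GPT-3 175B figure quoted earlier. This is a sketch under that reading of Nvidia's chart, which is an assumption since the chart is not reproduced here:

```python
# ASSUMPTION: both multipliers are relative to the original A100.
H200_VS_A100 = 18.0
H100_VS_A100 = 11.0

h200_vs_h100 = H200_VS_A100 / H100_VS_A100
print(f"implied H200/H100 speedup: {h200_vs_h100:.2f}x")  # about 1.64x
```

A ratio of about 1.64x lines up with the roughly 60% GPT-3 improvement Nvidia quotes for the H200 over the H100.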

Nvidia said that with the new products it hopes to keep up with the growing size of the data sets used to create AI models and services. The enhanced memory will let the H200 feed data to software faster, a process that helps train AI to perform tasks such as recognizing images and speech.

"Integrating faster and larger HBM memory can help improve performance for computationally demanding tasks, including generative AI models and high-performance computing applications, while optimizing GPU utilization and efficiency," said Ian Buck, NVIDIA's vice president of high-performance computing products.

Dion Harris, head of data center products at Nvidia, said: "When you look at the trends in the market, model sizes are increasing rapidly. This is another example of us continuing to introduce the latest and greatest technology."

Computer makers and cloud service providers are expected to begin using the H200 in the second quarter of 2024. NVIDIA's server manufacturing partners (including ASRock Rack, ASUS, Dell, Eviden, GIGABYTE, HPE, Ingrasys, Lenovo, QCT, Supermicro, Wistron and Wiwynn) can use the H200 to update existing systems, while Amazon, Google, Microsoft and Oracle will be among the first cloud service providers to adopt it.

Given the strong market demand for Nvidia's AI chips and the H200's more expensive HBM3e memory, the H200 is likely to cost more than its predecessor. Nvidia has not listed a price, but the previous-generation H100 sells for an estimated $25,000 to $40,000.

Nvidia spokesperson Kristin Uchiyama said final pricing will be set by Nvidia's manufacturing partners.

Asked whether the H200 launch would affect H100 production, Uchiyama said: "We expect total supply to increase throughout the year."

Nvidia's high-end AI chips have long been regarded as the best choice for processing large amounts of data and for training large language models and generative AI tools. However, when the H200 was launched, AI companies were still scrambling for A100 and H100 chips, and the market's focus remains on whether Nvidia can supply enough to meet demand. NVIDIA has not said whether the H200 will be in short supply like the H100.

Next year may nevertheless be more favorable for GPU buyers. According to a Financial Times report in August, NVIDIA plans to triple H100 production in 2024, raising output from roughly 500,000 units in 2023 to about 1.5 million to 2 million in 2024. But generative AI is still booming, and demand is likely to grow even further.

For example, GPT-4 was reportedly trained on approximately 10,000 to 25,000 A100s. Meta's large AI model required approximately 21,000 A100s for training, Stability AI uses approximately 5,000 A100s, and Falcon-40B was trained on 384 A100s.

According to Musk, GPT-5 may require 30,000 to 50,000 H100s; Morgan Stanley's estimate is 25,000 GPUs.

Sam Altman has denied that OpenAI is training GPT-5, but said that "OpenAI is severely short of GPUs, and the fewer people use our products, the better."

Besides NVIDIA, AMD and Intel are also moving aggressively into the AI market. AMD's previously announced MI300X carries 192GB of HBM3 and 5.2 TB/s of memory bandwidth, exceeding the H200 in both capacity (by about 36%) and bandwidth (by about 8%).
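The size of the MI300X's lead is easy to quantify from the spec figures given in this article. A minimal sketch:

```python
# Spec figures as quoted in the article.
specs = {
    "H200": {"hbm_gb": 141, "bw_tbps": 4.8},
    "MI300X": {"hbm_gb": 192, "bw_tbps": 5.2},
}

cap_ratio = specs["MI300X"]["hbm_gb"] / specs["H200"]["hbm_gb"]
bw_ratio = specs["MI300X"]["bw_tbps"] / specs["H200"]["bw_tbps"]
print(f"MI300X vs H200: {cap_ratio:.2f}x capacity, {bw_ratio:.2f}x bandwidth")
```

The capacity lead is substantial (about 1.36x), while the bandwidth lead is modest (about 1.08x).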

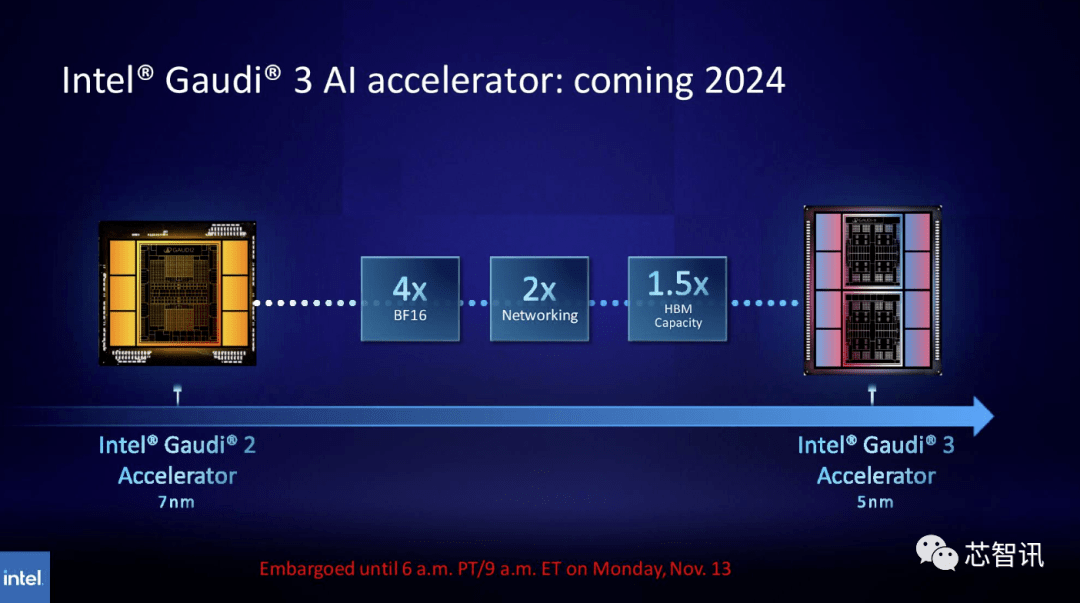

Similarly, Intel plans to increase the HBM capacity of its Gaudi AI chips. According to the latest information, Gaudi 3 uses a 5nm process, delivers four times the BF16 performance of Gaudi 2, and doubles its network performance (Gaudi 2 has 24 integrated 100 GbE RoCE NICs). Gaudi 3 also has 1.5 times the HBM capacity of Gaudi 2 (which has 96GB of HBM2E). As the figure below shows, Gaudi 3 uses a chiplet-based design with two compute clusters, unlike Gaudi 2, which uses a single-die design.
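The multipliers above imply concrete Gaudi 3 figures. This sketch assumes "twice the network performance" means twice Gaudi 2's aggregate Ethernet bandwidth; the article does not say how the doubling is achieved.

```python
GAUDI2_HBM_GB = 96  # HBM2E capacity quoted in the article
GAUDI2_NICS = 24    # integrated 100 GbE RoCE ports
NIC_GBPS = 100      # line rate per port

gaudi3_hbm_gb = 1.5 * GAUDI2_HBM_GB          # "1.5x the HBM capacity"
gaudi2_net_gbps = GAUDI2_NICS * NIC_GBPS     # aggregate Ethernet bandwidth
gaudi3_net_gbps = 2 * gaudi2_net_gbps        # ASSUMPTION: "2x network" = 2x aggregate
print(gaudi3_hbm_gb, gaudi2_net_gbps, gaudi3_net_gbps)  # 144.0 2400 4800
```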

New GH200 super chip: Powering the next generation of AI supercomputers

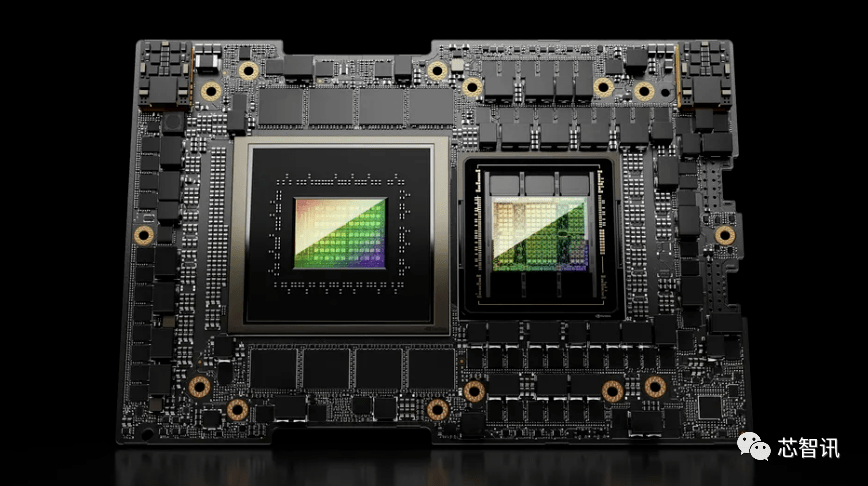

In addition to the new H200 GPU, Nvidia also launched an upgraded version of the GH200 superchip. It uses NVIDIA NVLink-C2C chip-to-chip interconnect technology to combine the latest H200 GPU with a Grace CPU (it is unclear whether the CPU has also been upgraded). Each GH200 superchip will carry a total of 624GB of memory.

For comparison, the previous generation GH200 is based on the H100 GPU and the 72-core Grace CPU, providing 96GB of HBM3 and 512GB of LPDDR5X integrated in the same package.
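The article does not give the HBM/LPDDR5X split for the new GH200, so only the totals can be compared. A minimal sketch using the figures above:

```python
previous_gh200 = {"HBM3": 96, "LPDDR5X": 512}  # GB, per the article
previous_total = sum(previous_gh200.values())  # 608 GB
new_total = 624                                # GB, H200-based GH200

print(f"previous: {previous_total} GB, new: {new_total} GB, delta: {new_total - previous_total} GB")
```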

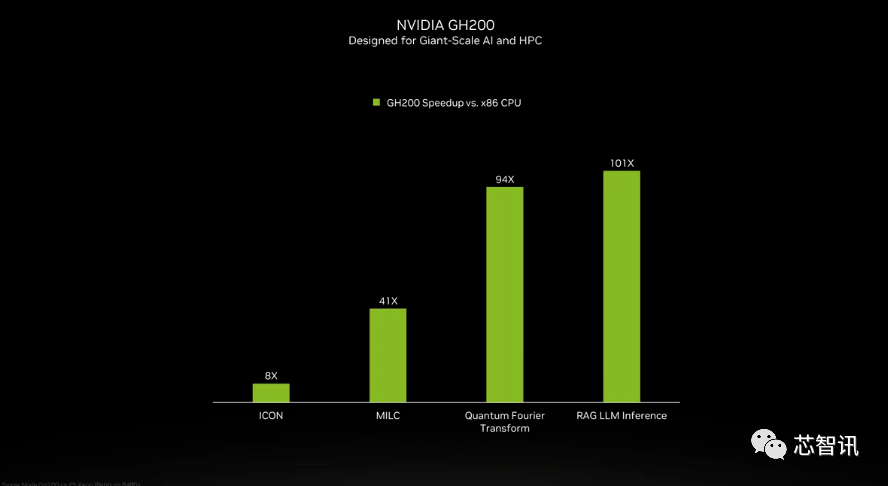

Although NVIDIA did not detail the Grace CPU in the GH200 superchip, it did provide some comparisons between the GH200 and "modern dual-socket x86 CPUs". According to these, the GH200 delivers an 8x improvement on ICON, and improvements of tens or even hundreds of times on MILC, Quantum Fourier Transform, RAG LLM inference and other workloads.

It should be noted, however, that the comparison refers to accelerated versus "non-accelerated systems". What does that mean? We can only assume that the x86 servers were running code that is not fully optimized, especially given how quickly the field of artificial intelligence is evolving and how regularly new optimizations seem to appear.

The H200 will also be used in the new HGX H200 systems. These are said to be "seamlessly compatible" with existing HGX H100 systems, meaning HGX H200 can be dropped into the same installations to increase performance and memory capacity without redesigning the infrastructure.

According to reports, the Alps supercomputer at the Swiss National Supercomputing Centre may be among the first GH200-based Grace Hopper supercomputers to go into service next year. The first GH200 system to enter service in the United States will be the Venado supercomputer at Los Alamos National Laboratory. The Texas Advanced Computing Center (TACC) Vista system will also use the just-announced Grace CPU and Grace Hopper superchips, but it is unclear whether they will be based on the H100 or the H200.

Currently, the largest of these systems is the JUPITER supercomputer at the Jülich Supercomputing Centre. It will house "nearly" 24,000 GH200 superchips, for a total of 93 exaflops of AI compute (presumably measured in FP8, although most AI workloads still use BF16 or FP16), plus 1 exaflop of traditional FP64 compute. It will use a "Quad GH200" board carrying four GH200 superchips.
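Dividing JUPITER's totals by its chip count gives a rough check on the FP8 presumption. The sketch uses 24,000 as the chip count; since the real figure is "nearly" 24,000, the per-chip values it prints are slight underestimates.

```python
JUPITER_SUPERCHIPS = 24_000  # "nearly" 24,000, so per-chip results are slight underestimates
JUPITER_AI_EXAFLOPS = 93
JUPITER_FP64_EXAFLOPS = 1
H100_FP8_TFLOPS = 3958       # per-GPU FP8 figure quoted earlier in the article

TFLOPS_PER_EXAFLOP = 1e6
per_chip_ai_tflops = JUPITER_AI_EXAFLOPS * TFLOPS_PER_EXAFLOP / JUPITER_SUPERCHIPS
per_chip_fp64_tflops = JUPITER_FP64_EXAFLOPS * TFLOPS_PER_EXAFLOP / JUPITER_SUPERCHIPS

print(f"AI: {per_chip_ai_tflops:.0f} TFLOPS/chip "
      f"({per_chip_ai_tflops / H100_FP8_TFLOPS:.0%} of the quoted H100 FP8 figure)")
print(f"FP64: {per_chip_fp64_tflops:.1f} TFLOPS/chip")
```

About 3,875 TFLOPS per chip sits within a few percent of the 3,958 TFLOPS FP8 figure, which supports the guess that the 93-exaflop total is an FP8 number.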

Nvidia expects these new supercomputers, to be installed over the next year or so, to collectively deliver more than 200 exaflops of AI computing power.
