This article focuses primarily on the concept and theory of RAG. It then shows how to use LangChain, an OpenAI language model, and the Weaviate vector database to implement a simple RAG system, with LangChain handling orchestration.

Retrieval-Augmented Generation (RAG) is the concept of supplying an LLM with additional information from an external knowledge source. This lets the LLM generate more accurate, context-aware answers while reducing hallucinations.


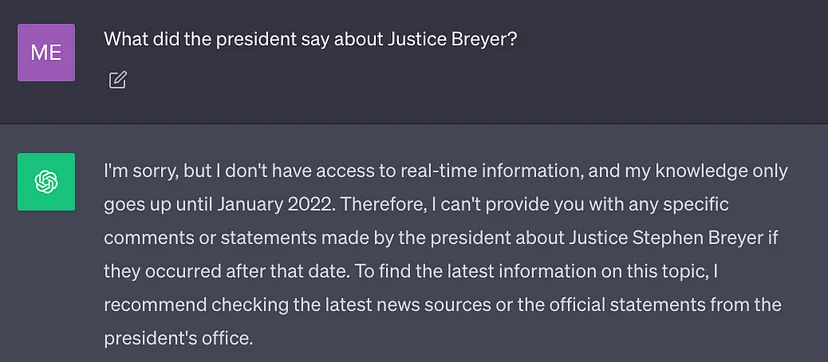

Today's best LLMs are trained on large amounts of data, so a great deal of general knowledge (parametric memory) is stored in their neural network weights. However, if a prompt asks the LLM for a result that requires knowledge beyond its training data (such as recent information, proprietary data, or domain-specific information), the output may contain factual inaccuracies, known as hallucinations, as shown in the screenshot.

It is therefore important to combine the LLM's general knowledge with additional context so that it produces more accurate, context-aware results with fewer hallucinations.

Solution

Traditionally, a neural network is adapted to a specific domain or to proprietary information by fine-tuning the model. Although this technique is effective, it is compute-intensive, expensive, and requires technical expertise, which makes it hard to adapt quickly to changing information.

In 2020, Lewis et al. proposed a more flexible technique called Retrieval-Augmented Generation (RAG) in their paper "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks". The researchers combined a generative model with a retrieval module that supplies additional information from an external knowledge source, which is easier to update than the model itself.

Put simply, RAG is to an LLM what an open-book exam is to a student. In an open-book exam, students may bring reference materials such as textbooks and notes and look up the information they need to answer the questions. The idea is that the exam tests the students' ability to reason rather than their ability to memorize specific facts.

Similarly, factual knowledge can be separated from the LLM's reasoning capability and stored in an external knowledge source that is easy to access and update.

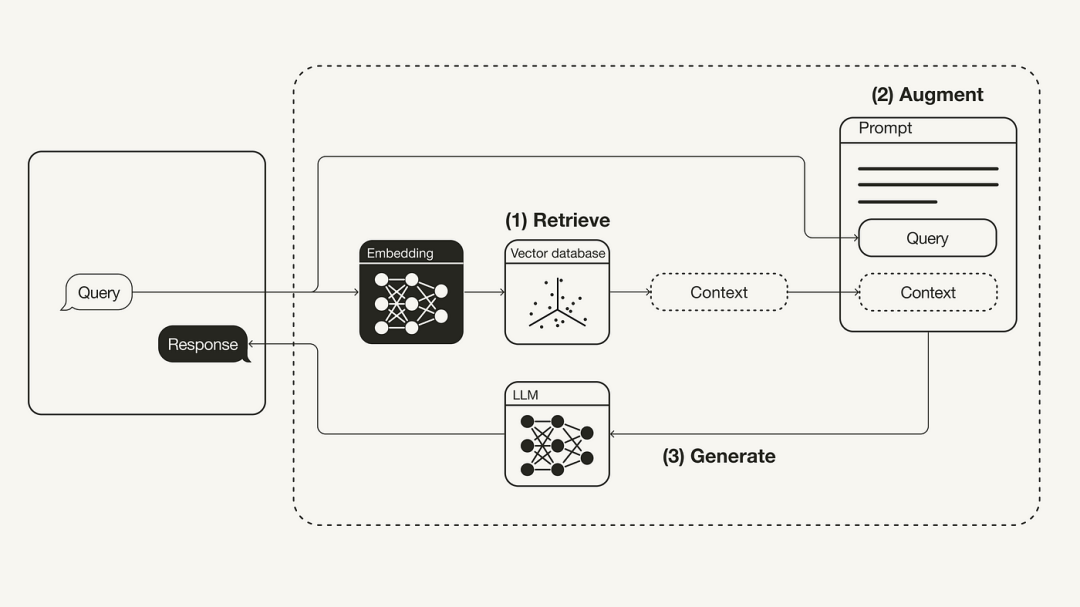

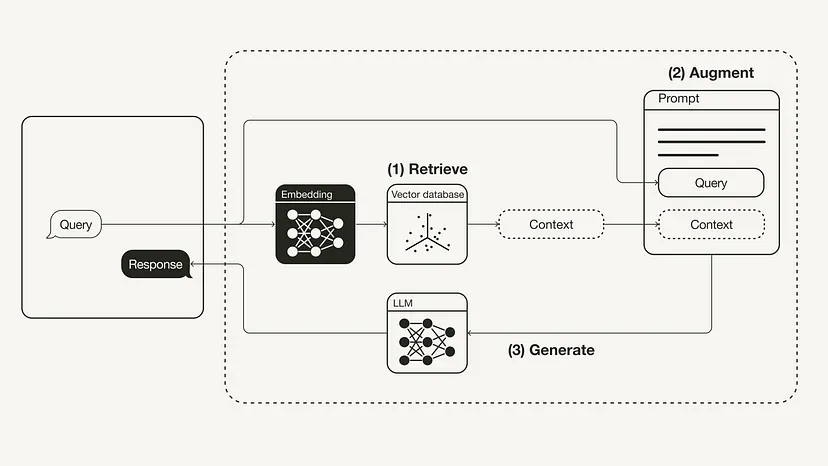

The diagram shows the most basic RAG workflow, which consists of three steps: retrieve relevant context, augment the prompt with it, and generate an answer.
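The basic workflow has three steps: retrieve, augment, generate. The following minimal, dependency-free sketch makes them concrete. It is not the LangChain implementation used later: the knowledge base is a hypothetical list of strings, retrieval uses simple word overlap instead of embeddings, and `fake_llm` is a stand-in that returns the context it was given rather than calling a real model.

```python
import re

# Hypothetical external knowledge source: a few short passages.
KNOWLEDGE_BASE = [
    "Weaviate is an open-source vector database.",
    "LangChain is a framework for orchestrating LLM applications.",
    "RAG was proposed by Lewis et al. in 2020.",
]

def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, k: int = 1) -> list[str]:
    """Step 1 (retrieve): rank passages by word overlap with the query, keep the top k."""
    query_words = tokenize(query)
    ranked = sorted(
        KNOWLEDGE_BASE,
        key=lambda passage: len(query_words & tokenize(passage)),
        reverse=True,
    )
    return ranked[:k]

def augment(query: str, context: list[str]) -> str:
    """Step 2 (augment): put the retrieved context and the question into one prompt."""
    return f"Question: {query}\nContext: {' '.join(context)}\nAnswer:"

def fake_llm(prompt: str) -> str:
    """Step 3 (generate) stand-in: a real system would send the prompt to an LLM."""
    context_line = prompt.splitlines()[1]
    return context_line.removeprefix("Context: ")

def rag(query: str) -> str:
    return fake_llm(augment(query, retrieve(query)))

print(rag("Who proposed RAG?"))  # prints: RAG was proposed by Lewis et al. in 2020.
```

Replacing `retrieve` with embedding-based similarity search and `fake_llm` with a real model call gives the same structure as the LangChain pipeline built in this article.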

The rest of this article shows how to implement the RAG workflow in Python using an OpenAI LLM, the Weaviate vector database, and an OpenAI embedding model, with LangChain handling orchestration.

Prerequisites

Please make sure you have the required Python packages installed:

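```bash
# python-dotenv and requests are used below to load .env and download the data
pip install langchain openai weaviate-client python-dotenv requests
```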

In addition, define the relevant environment variables in a .env file in the root directory. To get an OpenAI API key you need an OpenAI account; then create a new key on the API keys page (https://platform.openai.com/account/api-keys).

```
OPENAI_API_KEY="<your_openai_api_key>"
```

Then run the following code to load the environment variables:

```python
import dotenv

# Load the variables defined in .env (e.g. OPENAI_API_KEY) into os.environ
dotenv.load_dotenv()
```
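If the key is missing, the problem otherwise only shows up later, when the first OpenAI component is created or called. A small guard like the following fails fast right after `dotenv.load_dotenv()`. The helper name `require_env` is hypothetical and not part of python-dotenv.

```python
import os

def require_env(name: str) -> str:
    """Hypothetical helper: return an environment variable or raise a clear error."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"{name} is not set; add it to your .env file")
    return value

# After dotenv.load_dotenv(), check that the OpenAI key was actually loaded:
# api_key = require_env("OPENAI_API_KEY")
```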

Preparation work

In the preparation phase, you set up a vector database as the external knowledge source that holds all the additional information. Building this vector database involves the following steps:

First, collect and load the data. In this example, President Biden's 2022 State of the Union address serves as the additional context; its raw text is available in LangChain's GitHub repository. To load the data, you can use one of LangChain's many built-in document loaders. A Document holds the text together with its metadata. To load plain text, use LangChain's TextLoader.
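Conceptually, a LangChain `Document` pairs the text (`page_content`) with a metadata dictionary, and `TextLoader` records the file path under the `source` key. The dataclass and the `load_text` helper below are hypothetical stand-ins that mimic this shape without LangChain, to show what `loader.load()` hands to the next step.

```python
from dataclasses import dataclass, field
from pathlib import Path

@dataclass
class Document:
    """Hypothetical stand-in for LangChain's Document: text plus metadata."""
    page_content: str
    metadata: dict = field(default_factory=dict)

def load_text(path: str) -> list[Document]:
    """Hypothetical loader: read a text file into one Document and record its source."""
    text = Path(path).read_text(encoding="utf-8")
    return [Document(page_content=text, metadata={"source": path})]
```

Like `TextLoader.load()`, the helper returns a list, so documents from several files could be collected into one list before splitting.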

Original document: https://raw.githubusercontent.com/langchain-ai/langchain/master/docs/docs/modules/state_of_the_union.txt

```python
import requests
from langchain.document_loaders import TextLoader

url = "https://raw.githubusercontent.com/langchain-ai/langchain/master/docs/docs/modules/state_of_the_union.txt"
res = requests.get(url)
res.raise_for_status()  # stop early if the download failed

# Save the speech locally so that TextLoader can read it
with open("state_of_the_union.txt", "w", encoding="utf-8") as f:
    f.write(res.text)

loader = TextLoader("./state_of_the_union.txt", encoding="utf-8")
documents = loader.load()
```

Next, split the document into chunks. In its original form the document is too long to fit into the LLM's context window, so it has to be split into smaller chunks of text. LangChain also provides many built-in text splitters. For this simple example, use a CharacterTextSplitter with chunk_size=500 and chunk_overlap=50; the overlap preserves continuity between consecutive chunks.

```python
from langchain.text_splitter import CharacterTextSplitter

# Chunks of up to 500 characters; neighboring chunks may share up to 50 characters
text_splitter = CharacterTextSplitter(chunk_size=500, chunk_overlap=50)
chunks = text_splitter.split_documents(documents)
```
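`CharacterTextSplitter` first splits on a separator (by default a blank line, `"\n\n"`) and then merges the pieces into chunks of up to `chunk_size` characters. The sketch below leaves out the separator logic and uses a plain sliding window instead, which is simpler but shows how the two parameters interact: each new chunk starts `chunk_size - chunk_overlap` characters after the previous one, so consecutive chunks share `chunk_overlap` characters. The function name `sliding_window_chunks` is hypothetical.

```python
def sliding_window_chunks(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Hypothetical splitter: fixed-size character windows overlapping by chunk_overlap."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks

demo_chunks = sliding_window_chunks("abcdefghij", chunk_size=4, chunk_overlap=1)
print(demo_chunks)  # ['abcd', 'defg', 'ghij']
```

The overlap means that a sentence cut at a chunk boundary still appears in full in at least one chunk as long as it is shorter than the overlap, which is the continuity the text above refers to.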

Finally, embed the chunks and store them. To enable semantic search across the chunks, a vector embedding must be generated for each chunk and stored together with it. The embeddings are generated with the OpenAI embedding model and stored in the Weaviate vector database. Calling .from_documents() populates the vector database with the chunks automatically.

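```python
import weaviate
from weaviate.embedded import EmbeddedOptions
from langchain.embeddings import OpenAIEmbeddings
from langchain.vectorstores import Weaviate

# Start an embedded Weaviate instance from within the Python client
client = weaviate.Client(embedded_options=EmbeddedOptions())

# Embed every chunk with OpenAI and store each chunk together with its vector
vectorstore = Weaviate.from_documents(
    client=client,
    documents=chunks,
    embedding=OpenAIEmbeddings(),
    by_text=False,  # query with our own embeddings instead of Weaviate's text search
)
```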
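Behind `from_documents()`, each chunk is embedded and stored next to its vector. The sketch below imitates that indexing step without any external service. The bag-of-words `embed` function over a fixed vocabulary is a deliberately crude, hypothetical stand-in for `OpenAIEmbeddings`, the list of records stands in for Weaviate, and the two example chunks are made up.

```python
import re

# Hypothetical fixed vocabulary; a real embedding model produces learned dense vectors.
VOCAB = ["justice", "breyer", "court", "economy", "jobs", "inflation"]

def embed(text: str) -> list[float]:
    """Toy embedding: count how often each vocabulary word occurs in the text."""
    words = re.findall(r"[a-z]+", text.lower())
    return [float(words.count(term)) for term in VOCAB]

def build_index(chunks: list[str]) -> list[dict]:
    """Store every chunk together with its embedding, as a vector database would."""
    return [{"text": chunk, "vector": embed(chunk)} for chunk in chunks]

# Made-up example chunks
index = build_index([
    "Justice Breyer served on the court.",
    "The economy added jobs while inflation fell.",
])
print(index[0]["vector"])  # [1.0, 1.0, 1.0, 0.0, 0.0, 0.0]
```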

Step 1: Retrieve

Once the vector database is populated, you can define it as the retriever component, which fetches additional context based on the semantic similarity between the user query and the embedded chunks.

```python
retriever = vectorstore.as_retriever()
```
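Ranking by semantic similarity typically means comparing the query embedding with the stored vectors, for example with cosine similarity, and keeping the best `k` chunks. The sketch below shows that ranking step on small hand-written vectors. The function names and vectors are hypothetical, and a vector database such as Weaviate uses an approximate nearest-neighbor index rather than this brute-force loop.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as unrelated to everything
    return dot / (norm_a * norm_b)

def top_k(query_vector: list[float], stored: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k stored vectors most similar to the query (brute force)."""
    ranked = sorted(
        stored,
        key=lambda chunk_id: cosine_similarity(query_vector, stored[chunk_id]),
        reverse=True,
    )
    return ranked[:k]

# Hypothetical chunk embeddings
stored = {
    "chunk-breyer": [0.9, 0.1, 0.0],
    "chunk-economy": [0.0, 0.2, 0.9],
    "chunk-court": [0.7, 0.3, 0.1],
}
print(top_k([1.0, 0.0, 0.0], stored))  # ['chunk-breyer', 'chunk-court']
```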

Step 2: Augment

Next, to augment the prompt with the additional context, prepare a prompt template. As shown below, a prompt template makes it easy to customize the prompt.

```python
from langchain.prompts import ChatPromptTemplate

template = """You are an assistant for question-answering tasks.
Use the following pieces of retrieved context to answer the question.
If you don't know the answer, just say that you don't know.
Use three sentences maximum and keep the answer concise.
Question: {question}
Context: {context}
Answer:
"""
prompt = ChatPromptTemplate.from_template(template)
print(prompt)
```
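Filling the template amounts to substituting the `{question}` and `{context}` placeholders. The sketch below reproduces that with Python's `str.format`, joining the retrieved chunks with blank lines first. The joining step and the `build_prompt` name are assumptions for illustration: the chain in Step 3 passes the retriever output to the template without an explicit formatting step.

```python
TEMPLATE = """You are an assistant for question-answering tasks.
Use the following pieces of retrieved context to answer the question.
If you don't know the answer, just say that you don't know.
Use three sentences maximum and keep the answer concise.
Question: {question}
Context: {context}
Answer:
"""

def build_prompt(question: str, retrieved_chunks: list[str]) -> str:
    """Hypothetical augment step: join the chunks and substitute them into the template."""
    context = "\n\n".join(retrieved_chunks)
    return TEMPLATE.format(question=question, context=context)

prompt_text = build_prompt(
    "What did the president say about Justice Breyer",
    ["First retrieved chunk.", "Second retrieved chunk."],
)
print(prompt_text)
```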

Step 3: Generate

Finally, build a chain that links the retriever, the prompt template, and the LLM together. Once the RAG chain is defined, you can invoke it.

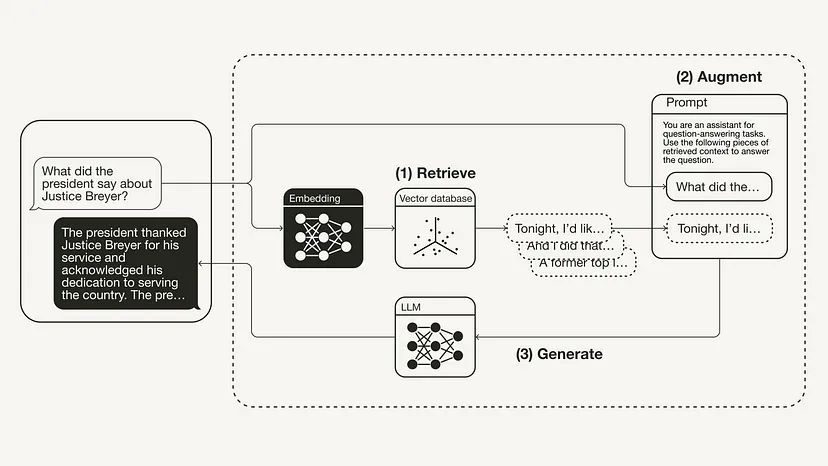

```python
from langchain.chat_models import ChatOpenAI
from langchain.schema.runnable import RunnablePassthrough
from langchain.schema.output_parser import StrOutputParser

llm = ChatOpenAI(model_name="gpt-3.5-turbo", temperature=0)

# The retriever fills {context}; the raw query is passed through to {question}
rag_chain = (
    {"context": retriever, "question": RunnablePassthrough()}
    | prompt
    | llm
    | StrOutputParser()
)

query = "What did the president say about Justice Breyer"
rag_chain.invoke(query)
```

Output:

```
"The president thanked Justice Breyer for his service and acknowledged his dedication to serving the country. The president also mentioned that he nominated Judge Ketanji Brown Jackson as a successor to continue Justice Breyer's legacy of excellence."
```

The figure shows the RAG process for this specific example.
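The `|` operator in the chain is LangChain Expression Language (LCEL) syntax: each component's output becomes the next component's input, and the leading dictionary runs each of its values on the same input (the query) to build the dictionary that fills the prompt. The sketch below imitates that data flow with a tiny hypothetical `Step` class and stub components, so it runs without API keys; it is not LangChain's `Runnable` implementation.

```python
from typing import Any, Callable

class Step:
    """Hypothetical minimal pipeline step that supports `a | b` composition."""

    def __init__(self, func: Callable[[Any], Any]):
        self.func = func

    def __or__(self, other: "Step") -> "Step":
        # Composition: run self first, then feed its output into other.
        return Step(lambda value: other.func(self.func(value)))

    def invoke(self, value: Any) -> Any:
        return self.func(value)

def parallel(**steps: Step) -> Step:
    """Run every step on the same input and collect the results in a dict."""
    return Step(lambda value: {name: step.invoke(value) for name, step in steps.items()})

# Stub components standing in for the retriever, prompt template, and LLM.
fake_retriever = Step(lambda query: ["Justice Breyer chunk"])
passthrough = Step(lambda value: value)
fill_prompt = Step(lambda d: f"Question: {d['question']}\nContext: {' '.join(d['context'])}")
fake_llm = Step(lambda prompt: f"[answer based on]\n{prompt}")

chain = parallel(context=fake_retriever, question=passthrough) | fill_prompt | fake_llm
print(chain.invoke("What did the president say about Justice Breyer"))
```

In the real chain, `StrOutputParser()` adds one more step that turns the chat model's message into a plain string.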

This article introduced the concept of RAG, first presented in the 2020 paper "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks". After covering the theory behind RAG, including its motivation and the solution it offers, the article showed how to implement a RAG workflow in Python using an OpenAI LLM, the Weaviate vector database, and an OpenAI embedding model, with LangChain handling orchestration.
