GPT-4 plus a diffusion model, combined with a physics engine, generates realistic, coherent and physically plausible videos

The introduction of diffusion models has advanced text-to-video generation. However, these methods are often computationally expensive and struggle to produce videos with smooth object motion.

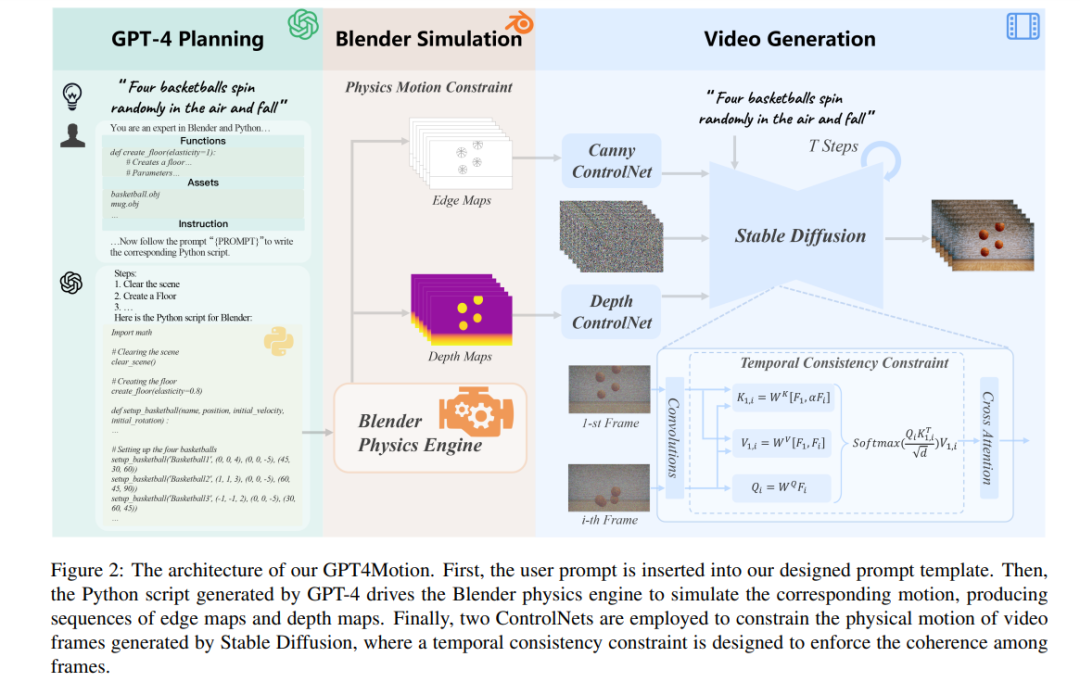

To address these issues, researchers from the Shenzhen Institute of Advanced Technology (Chinese Academy of Sciences), the University of Chinese Academy of Sciences and the vivo Artificial Intelligence Lab jointly proposed GPT4Motion, a training-free framework for text-to-video generation. GPT4Motion combines the planning capabilities of large language models such as GPT-4, the physics simulation capabilities of Blender, and the text-to-image generation capabilities of diffusion models, with the aim of substantially improving the quality of video synthesis.

- Project link: https://gpt4motion.github.io/

- Paper link: https://arxiv.org/pdf/2311.12631.pdf

- Code link: https://github.com/jiaxilv/GPT4Motion

GPT4Motion uses GPT-4 to generate Blender scripts from the user's text prompt. These scripts drive Blender's physics engine to create the basic scene components, which encode continuous motion across frames. The components are then fed into a diffusion model to generate a video that matches the text prompt.

Experimental results show that GPT4Motion can efficiently generate high-quality videos while maintaining motion coherence and entity consistency. Notably, because it relies on a physics engine, GPT4Motion makes the generated videos more physically realistic, which offers a new perspective on text-to-video generation.

Let us first look at some results from GPT4Motion. Given the text prompts "A white T-shirt is fluttering in the breeze", "A white T-shirt is fluttering in the wind" and "A white T-shirt is fluttering in the strong wind", the T-shirt in the generated videos flutters with a different amplitude in each case, reflecting the different wind strengths:

The generated videos also capture the shape of flowing liquid well:

A basketball spinning as it falls through the air:

Method Introduction

The goal of this research is to generate videos that obey physical laws from user prompts describing basic physical motion scenes. Physical behavior is closely tied to an object's material, so the researchers focus on simulating three materials common in daily life: 1) rigid objects, which keep their shape when subjected to forces; 2) cloth, which is soft and flutters easily; and 3) liquids, which exhibit continuous, deformable motion.

In addition, the researchers paid special attention to several typical motion modes of these materials, including collision (direct impact between objects), wind effects (motion driven by air currents) and flow (continuous, unidirectional movement). Simulating these scenarios typically requires knowledge of classical mechanics, fluid mechanics and other areas of physics. Current text-to-video diffusion models struggle to acquire such complex physical knowledge through training, and therefore cannot produce videos that respect these physical properties.

The advantage of GPT4Motion is that the generated videos are not only consistent with the user's prompt but also physically correct. GPT-4's semantic understanding and code generation capabilities convert the user prompt into a Blender Python script, which drives Blender's built-in physics engine to simulate the corresponding physical scene. The study then uses ControlNet, taking the dynamic results of the Blender simulation as input, to guide the diffusion model in generating the video frame by frame.

## Using GPT-4 to drive Blender simulations

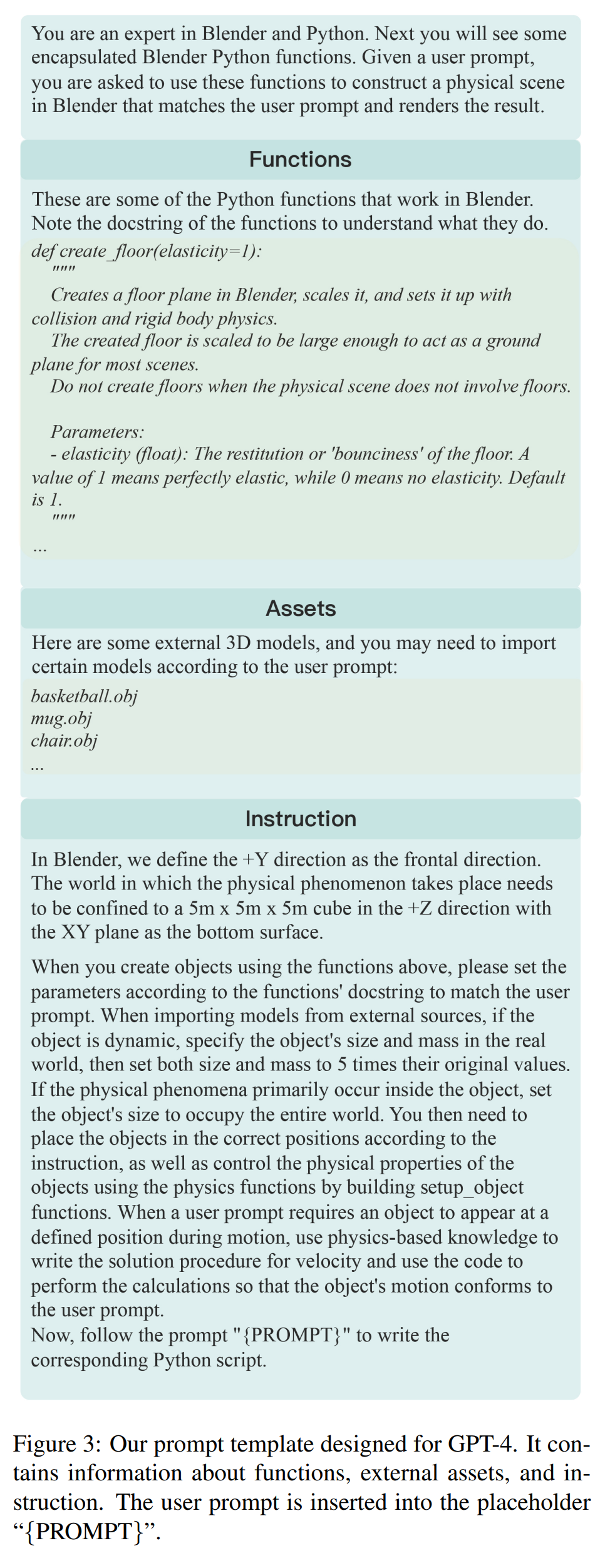

The researchers observed that although GPT-4 has some understanding of Blender's Python API, its ability to generate Blender Python scripts from user prompts is still limited. On the one hand, asking GPT-4 to build even a simple 3D model (such as a basketball) directly in Blender is a daunting task. On the other hand, because Blender's Python API has relatively few learning resources and its versions change quickly, GPT-4 easily misuses certain functions or makes errors caused by version differences. To solve these problems, the study proposes the following solutions:

- Use external 3D models

- Encapsulate Blender functions

- Convert user prompts into physical properties
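As a rough illustration of the third point, the sketch below turns the wind wording of prompts such as the T-shirt examples above into a numeric wind strength that a simulation could consume. In GPT4Motion this conversion is performed by GPT-4 itself through the prompt template; the keyword table, the strength values and the function name `wind_strength_from_prompt` are hypothetical and only illustrate the idea of mapping language to physical parameters.

```python
import re

# Hypothetical mapping from wind descriptors to a relative wind strength.
# The values are illustrative assumptions, not numbers from the paper.
WIND_LEVELS = {
    "strong wind": 5.0,
    "breeze": 1.0,
    "wind": 2.5,
}


def wind_strength_from_prompt(prompt: str, default: float = 0.0) -> float:
    """Return a wind strength for the first descriptor found in the prompt.

    Longer phrases are checked first so that "strong wind" is not
    mistaken for plain "wind". Returns `default` if nothing matches.
    """
    text = prompt.lower()
    for phrase in sorted(WIND_LEVELS, key=len, reverse=True):
        # Word boundaries keep "wind" from matching inside e.g. "windows".
        if re.search(r"\b" + re.escape(phrase) + r"\b", text):
            return WIND_LEVELS[phrase]
    return default


if __name__ == "__main__":
    for p in [
        "A white T-shirt is fluttering in the breeze",
        "A white T-shirt is fluttering in the wind",
        "A white T-shirt is fluttering in the strong wind",
    ]:
        print(p, "->", wind_strength_from_prompt(p))
```

A fixed table is deliberately simplistic; the point of using an LLM is that it can infer such parameters from open-ended wording that no lookup table anticipates.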

Figure 3 shows the general prompt template the study designed for GPT-4. It includes the encapsulated Blender functions, external tools and the user instruction. In the template, the researchers define size standards for the virtual world and provide the camera's position and viewing angle, which helps GPT-4 better understand the layout of the 3D space. The user's prompt is then turned into a corresponding instruction that guides GPT-4 to generate the matching Blender Python script. Finally, by running this script, Blender renders the objects' edge and depth maps and outputs them as image sequences.
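To make the structure of such a template concrete, the sketch below assembles a prompt from the parts listed for Figure 3: function signatures, external assets, a world-size standard, camera information and the user instruction. It is a minimal sketch under stated assumptions: the class name `MotionPromptTemplate`, every helper signature (e.g. `add_wind`), the asset names and the numeric scene values are hypothetical, not the paper's actual template text.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MotionPromptTemplate:
    """Hypothetical stand-in for the prompt template described for Figure 3.

    Every default below is an illustrative assumption, not the paper's text.
    """
    # Signatures of encapsulated Blender helpers GPT-4 may call (hypothetical).
    functions: List[str] = field(default_factory=lambda: [
        "import_model(path, location)",
        "set_rigid_body(obj, mass, bounciness)",
        "add_wind(strength, direction)",
        "render_edges_and_depth(output_dir, frame_count)",
    ])
    # External assets the script may import instead of modelling from scratch.
    tools: List[str] = field(default_factory=lambda: ["basketball.obj", "flag.obj"])
    # Size standard of the virtual world (assumed values).
    world: str = "1 Blender unit = 1 metre; the floor is the plane z = 0."
    # Camera placement so the LLM can reason about directions (assumed values).
    camera: str = "The camera is at (0, -10, 2) and looks toward the origin."

    def render(self, user_prompt: str) -> str:
        """Assemble the full prompt sent to the LLM for one user request."""
        sections = [
            "Write a Blender Python script for the scene below.",
            "Available functions:\n" + "\n".join(f"- {f}" for f in self.functions),
            "External assets:\n" + "\n".join(f"- {t}" for t in self.tools),
            "World scale: " + self.world,
            "Camera: " + self.camera,
            "User instruction: " + user_prompt,
        ]
        return "\n\n".join(sections)


if __name__ == "__main__":
    print(MotionPromptTemplate().render("A basketball is thrown towards the camera."))
```

Exposing only a few high-level helpers is the design choice the article motivates: it keeps GPT-4 away from version-specific parts of Blender's raw Python API.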

## Generating videos that follow the laws of physics

This part of the research aims to generate videos that are consistent with the text and visually realistic, based on the user's prompt and the corresponding physical motion conditions provided by Blender. To this end, the study adopts the diffusion model Stable Diffusion XL (SDXL) for generation and modifies it with the following:

- Physical motion constraints

- Temporal consistency constraints
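The article does not describe how the temporal consistency constraint is implemented. A common training-free technique (used, for example, by Text2Video-Zero) is cross-frame attention, in which each frame's self-attention reads keys and values from other frames instead of only its own, so that appearance stays anchored across the video. The pure-Python sketch below assumes each frame attends to the first frame and the previous frame; that choice and all function names are assumptions for illustration, not GPT4Motion's confirmed implementation.

```python
import math
from typing import List

Matrix = List[List[float]]  # rows are tokens, columns are channels


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attend(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Scaled dot-product attention: softmax(q k^T / sqrt(d)) v."""
    d = len(q[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        weights = softmax(scores)
        out.append([sum(w * vj[c] for w, vj in zip(weights, v))
                    for c in range(len(v[0]))])
    return out


def cross_frame_attention(q: List[Matrix], k: List[Matrix],
                          v: List[Matrix]) -> List[Matrix]:
    """Frame i's queries attend to the keys/values of frame 0 and frame i-1.

    Assumption: the anchor set (first frame + previous frame) is illustrative.
    For frame 0 the context is frame 0 repeated twice, which gives the same
    result as ordinary self-attention on that frame.
    """
    out = []
    for i in range(len(q)):
        prev = max(i - 1, 0)
        # Concatenate token lists from the first frame and the previous frame.
        out.append(attend(q[i], k[0] + k[prev], v[0] + v[prev]))
    return out
```

Because every frame shares the first frame's keys and values, textures and colors established there tend to persist, which is the intuition behind using such attention for temporal consistency.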

Experimental results

## Controlling physical properties

Figure 4 shows basketball videos generated by GPT4Motion from three prompts, covering the basketball's fall and collisions. On the left of Figure 4, the basketball keeps a highly realistic texture as it spins and accurately reproduces its bouncing behavior after hitting the ground. The middle of Figure 4 shows that the method can precisely control the number of basketballs and effectively generate the collisions and bounces that occur when several basketballs land. Surprisingly, as shown on the right of Figure 4, when the user asks for the basketball to be thrown toward the camera, GPT-4 computes the required initial velocity from the basketball's fall time in the generated script, producing a realistic visual effect. This shows that GPT4Motion can draw on GPT-4's knowledge of physics to control the content of the generated video.

Figures 5 and 6 demonstrate GPT4Motion's ability to generate cloth moving under the influence of wind. By leveraging an existing physics engine for simulation, GPT4Motion can generate cloth fluttering and rippling under different wind strengths. Figure 5 shows the generated results for a waving flag: under varying wind conditions, the flag displays complex patterns of ripples and waves. Figure 6 shows the motion of an irregularly shaped cloth object, a T-shirt, under different wind strengths. Governed by the fabric's physical properties, such as elasticity and weight, the T-shirt shakes and twists, with clearly visible changes in its wrinkles.
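For the throw-toward-the-camera case in Figure 4, the article says only that GPT-4 computes the initial velocity from the fall time; it does not give the formula. The sketch below shows the standard constant-gravity kinematics such a calculation could use, solving $p(T) = p_0 + v_0 T + \tfrac{1}{2} g T^2$ for $v_0$ while ignoring air drag. The ball and camera positions and the one-second flight time are hypothetical, and this is not the script GPT-4 actually wrote.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]

# Constant gravity with z pointing up; (0, 0, -9.81) m/s^2 is Blender's
# default scene gravity.
GRAVITY: Vec3 = (0.0, 0.0, -9.81)


def initial_velocity(start: Vec3, target: Vec3, flight_time: float,
                     g: Vec3 = GRAVITY) -> Vec3:
    """Velocity needed to travel from start to target in flight_time seconds.

    Solves target = start + v0 * T + 0.5 * g * T**2 for v0 (no air drag).
    """
    if flight_time <= 0:
        raise ValueError("flight_time must be positive")
    T = flight_time
    vx, vy, vz = ((p1 - p0 - 0.5 * gi * T * T) / T
                  for p0, p1, gi in zip(start, target, g))
    return (vx, vy, vz)


def position_at(start: Vec3, v0: Vec3, t: float, g: Vec3 = GRAVITY) -> Vec3:
    """Ballistic position after t seconds, used to check the solution."""
    x, y, z = (p0 + v * t + 0.5 * gi * t * t for p0, v, gi in zip(start, v0, g))
    return (x, y, z)


if __name__ == "__main__":
    ball = (0.0, 0.0, 3.0)      # hypothetical release point
    camera = (0.0, -10.0, 1.5)  # hypothetical camera position
    v0 = initial_velocity(ball, camera, flight_time=1.0)
    print(v0)                          # roughly (0.0, -10.0, 3.405)
    print(position_at(ball, v0, 1.0))  # approximately the camera position
```

The upward z component (about 3.4 m/s here) is what compensates for gravity over the flight, which is the kind of reasoning the article credits GPT-4 with embedding in the generated script.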

Figure 7 shows three videos of water with different viscosities being poured into a mug. At low viscosity, the falling water collides and merges with the water already in the cup, producing complex turbulence. As viscosity increases, the flow becomes slower and the liquid begins to stick together.

## Comparison with baseline methods

Figure 1 visually compares GPT4Motion with the baseline methods. The baseline results clearly fail to match the user prompts. DirecT2V and Text2Video-Zero show flaws in texture fidelity and motion consistency, while AnimateDiff and ModelScope produce smoother videos but still leave room for improvement in texture consistency and motion fidelity. Compared with these methods, GPT4Motion generates smooth texture changes as the basketball falls and bounces off the floor, which looks more realistic.

As shown in the first row of Figure 8, the videos generated by AnimateDiff and Text2Video-Zero contain artifacts and distortions on the flag, while ModelScope and DirecT2V cannot smoothly render the gradual motion of the flag fluttering in the wind. In contrast, as shown in the middle of Figure 5, the video generated by GPT4Motion shows continuous changes in the flag's wrinkles and ripples under the influence of gravity and wind.

As shown in the second row of Figure 8, none of the baseline results are consistent with the user prompt. Although the videos from AnimateDiff and ModelScope reflect changes in the water flow, they fail to capture the physical effect of water pouring into a cup. The videos generated by Text2Video-Zero and DirecT2V, meanwhile, show a cup that shakes constantly. In contrast, as shown on the left of Figure 7, the video generated by GPT4Motion accurately depicts the churning as the water stream collides with the mug, and the effect is more realistic.

Interested readers can consult the original paper for more details about the research.

The above is the detailed content of Combined with the physics engine, the GPT-4+ diffusion model generates realistic, coherent and reasonable videos. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1387

1387

52

52

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

0.What does this article do? We propose DepthFM: a versatile and fast state-of-the-art generative monocular depth estimation model. In addition to traditional depth estimation tasks, DepthFM also demonstrates state-of-the-art capabilities in downstream tasks such as depth inpainting. DepthFM is efficient and can synthesize depth maps within a few inference steps. Let’s read about this work together ~ 1. Paper information title: DepthFM: FastMonocularDepthEstimationwithFlowMatching Author: MingGui, JohannesS.Fischer, UlrichPrestel, PingchuanMa, Dmytr

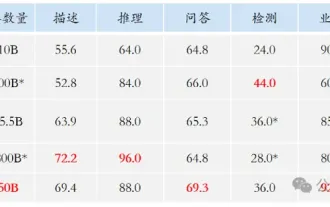

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI is indeed changing mathematics. Recently, Tao Zhexuan, who has been paying close attention to this issue, forwarded the latest issue of "Bulletin of the American Mathematical Society" (Bulletin of the American Mathematical Society). Focusing on the topic "Will machines change mathematics?", many mathematicians expressed their opinions. The whole process was full of sparks, hardcore and exciting. The author has a strong lineup, including Fields Medal winner Akshay Venkatesh, Chinese mathematician Zheng Lejun, NYU computer scientist Ernest Davis and many other well-known scholars in the industry. The world of AI has changed dramatically. You know, many of these articles were submitted a year ago.

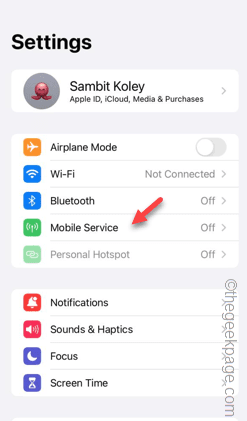

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Facing lag, slow mobile data connection on iPhone? Typically, the strength of cellular internet on your phone depends on several factors such as region, cellular network type, roaming type, etc. There are some things you can do to get a faster, more reliable cellular Internet connection. Fix 1 – Force Restart iPhone Sometimes, force restarting your device just resets a lot of things, including the cellular connection. Step 1 – Just press the volume up key once and release. Next, press the Volume Down key and release it again. Step 2 – The next part of the process is to hold the button on the right side. Let the iPhone finish restarting. Enable cellular data and check network speed. Check again Fix 2 – Change data mode While 5G offers better network speeds, it works better when the signal is weaker

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

I cry to death. The world is madly building big models. The data on the Internet is not enough. It is not enough at all. The training model looks like "The Hunger Games", and AI researchers around the world are worrying about how to feed these data voracious eaters. This problem is particularly prominent in multi-modal tasks. At a time when nothing could be done, a start-up team from the Department of Renmin University of China used its own new model to become the first in China to make "model-generated data feed itself" a reality. Moreover, it is a two-pronged approach on the understanding side and the generation side. Both sides can generate high-quality, multi-modal new data and provide data feedback to the model itself. What is a model? Awaker 1.0, a large multi-modal model that just appeared on the Zhongguancun Forum. Who is the team? Sophon engine. Founded by Gao Yizhao, a doctoral student at Renmin University’s Hillhouse School of Artificial Intelligence.

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile