Specific pre-trained models for the biomedical NLP domain: PubMedBERT

The rapid development of large language models this year has led to models like BERT now being called "small" models. In Kaggle's LLM Science Exam competition, a team using DeBERTa placed fourth, which is an excellent result. So for a specific domain or requirement, a large language model is not necessarily the best solution, and small models still have their place. Today we introduce PubMedBERT, from a paper that Microsoft Research published with ACM in 2022. The model pre-trains BERT from scratch on a domain-specific corpus.

Here are the main takeaways from the paper:

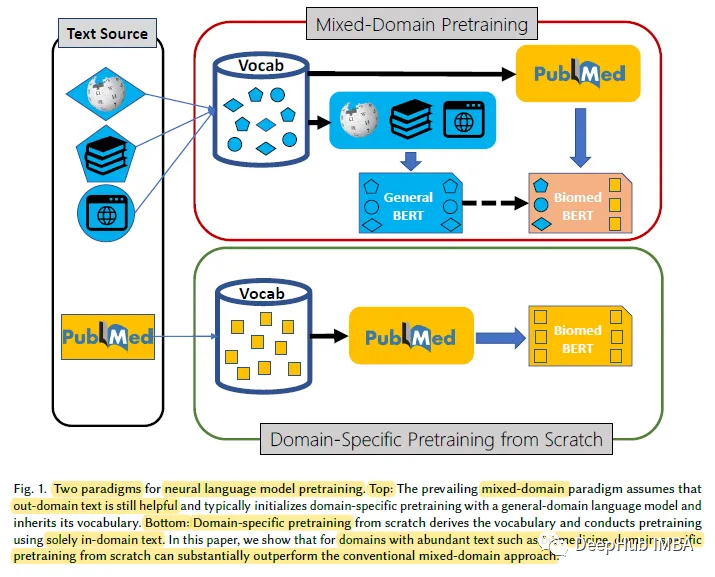

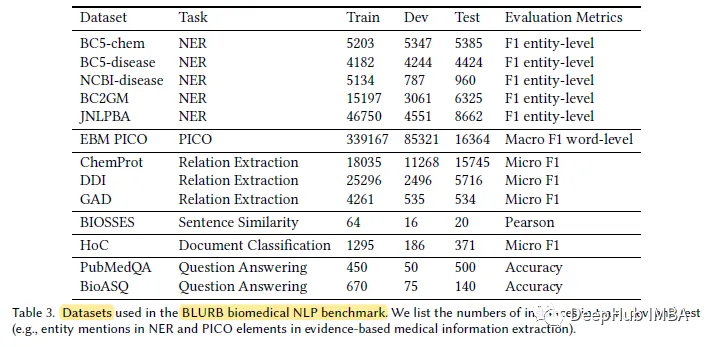

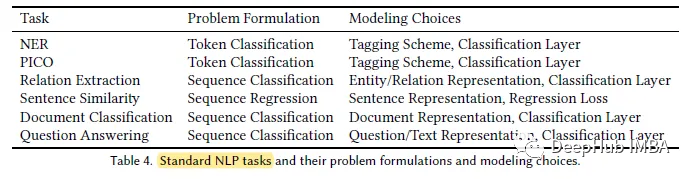

For specific domains with large amounts of unlabeled text, such as biomedicine, pre-training a language model from scratch is more effective than continual pre-training of a general-domain language model. To support this, the authors propose the Biomedical Language Understanding and Reasoning Benchmark (BLURB) for evaluating domain-specific pre-training.

PubMedBERT

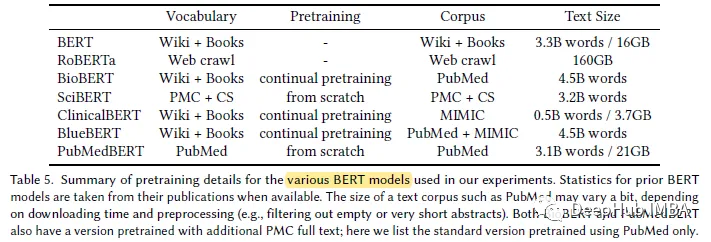

1. Domain-specific Pretraining

Research shows that domain-specific pretraining from scratch substantially outperforms continual pretraining of general-domain language models, demonstrating that the prevailing assumption in support of mixed-domain pretraining does not always hold.

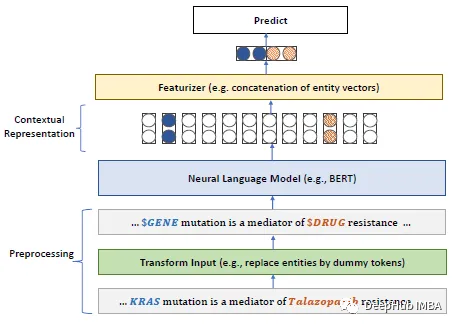

2. Model

The model uses the BERT architecture. For the masked language model (MLM) objective, whole-word masking (WWM) is applied, with the constraint that an entire word must be masked at once rather than only some of its subword pieces.

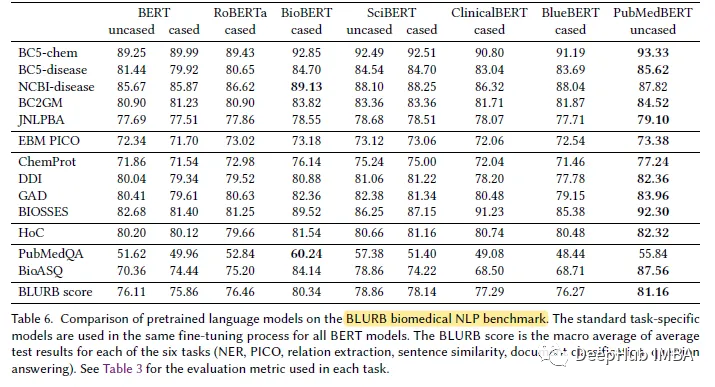
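The whole-word masking constraint can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `whole_word_mask`, the `##` continuation prefix (the usual WordPiece convention), and the per-word sampling are assumptions made for illustration. Real BERT pre-training also replaces some selected tokens with random or unchanged tokens instead of always using `[MASK]`, which this sketch omits.

```python
import random


def whole_word_mask(tokens, mask_prob=0.15, mask_token="[MASK]", seed=None):
    """Mask whole words in a list of WordPiece tokens (illustrative sketch).

    Pieces that start with "##" continue the previous word, so they are
    grouped with it and masked (or kept) together.
    """
    rng = random.Random(seed)

    # Group token indices into words: a new word starts at every token
    # that does not carry the "##" continuation prefix.
    words = []
    for i, tok in enumerate(tokens):
        if tok.startswith("##") and words:
            words[-1].append(i)
        else:
            words.append([i])

    masked = list(tokens)
    # labels[i] holds the original token at masked positions, else None.
    labels = [None] * len(tokens)
    for word in words:
        # Simplification: each word is selected independently.
        if rng.random() < mask_prob:
            for i in word:
                labels[i] = tokens[i]
                masked[i] = mask_token
    return masked, labels


if __name__ == "__main__":
    # Hypothetical tokenization of a sentence containing a drug name.
    tokens = ["the", "patient", "received", "acet", "##amino", "##phen", "daily"]
    masked, labels = whole_word_mask(tokens, mask_prob=0.5, seed=0)
    print(masked)
    print(labels)
```

Whatever the random draw, the three pieces `acet`, `##amino`, `##phen` are either all masked or all left intact, so the model can never recover a masked piece just by looking at the other pieces of the same word.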

3. BLURB dataset

According to the authors, BLUE [45] was the first attempt to create an NLP benchmark in the biomedical field, but its coverage is limited. For biomedical applications based on PubMed, the authors propose the Biomedical Language Understanding and Reasoning Benchmark (BLURB).

PubMedBERT uses a larger domain-specific corpus (21GB).

Results

On most biomedical natural language processing (NLP) tasks, PubMedBERT consistently outperforms all other BERT models, often by a clear margin.
