Tencent Cloud releases HAI, a new high-performance application service, and claims users can develop customized AI applications in 10 minutes

Tencent Cloud's official public account recently announced the launch of its high-performance application service "HAI" (Hyper Application Inventor). According to the announcement, the service lets users develop their own AI applications with ready-to-use GPU computing power and one-click deployment, and development takes only about 10 minutes.

According to the official introduction, "HAI" automatically allocates cost-effective GPU computing power and sets up the required dependency environment through "one-click deployment". Users complete the configuration by selecting a model, a region, a computing power type and a hard disk size.
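To make the four configuration choices concrete, here is a minimal sketch of how such a request could be modeled in Python. It is an illustration only: the class name `InstanceRequest`, its field names and the placeholder values are assumptions made for this example and are not taken from the HAI console or any Tencent Cloud API.

```python
from dataclasses import dataclass


# Hypothetical structure for illustration; NOT the actual HAI API.
@dataclass(frozen=True)
class InstanceRequest:
    model: str         # pre-installed model environment, e.g. "ChatGLM"
    region: str        # deployment region (placeholder value below)
    compute_type: str  # GPU computing power type (placeholder value below)
    disk_gb: int       # hard disk size in GB

    def validate(self) -> None:
        """Reject empty text fields and non-positive disk sizes."""
        for name in ("model", "region", "compute_type"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")
        if self.disk_gb <= 0:
            raise ValueError("disk_gb must be positive")


# The four choices the article lists, filled with placeholder values.
request = InstanceRequest(
    model="ChatGLM",
    region="example-region",
    compute_type="example-tier",
    disk_gb=100,
)
request.validate()
```

The point of the sketch is only that the whole setup reduces to four values; the real service presents them as selections in its own interface.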

In addition, "HAI" comes pre-installed with a variety of popular models such as Stable Diffusion and ChatGLM. Users can set up their own application environments for large language models, AI image generation and similar uses in a few minutes. If the pre-installed models are not enough, users can also deploy their own open source models on the "HAI" platform.

"HAI" provides a visual, interactive interface and supports several ways of connecting to computing power, such as JupyterLab and WebUI. Tencent Cloud claims the graphical interface is simple enough for anyone to use. "HAI" also supports academic acceleration: by automatically selecting the best network route, it is said to greatly improve access and download speeds for mainstream academic resource platforms.

According to previous reports from IT House, at the Industry Large Model and Intelligent Application Technology Summit held in June this year, Tang Daosheng, Senior Executive Vice President of Tencent Group and CEO of the Cloud and Smart Industry Group, announced that Tencent Cloud MaaS would offer a one-stop service built around a store of selected industry models.

Tencent Cloud stated that, relying on Tencent HCC high-performance computing clusters and its large model capabilities, it can provide more than 50 large model industry solutions for ten major industries, including cultural tourism, government affairs, finance, media and education. Users only need to add their own scenario data to a model from the model store to quickly generate an exclusive model.

Hot Topics

Recognition from the first prize of Science and Technology Progress Award: Tencent solved the problem of training large models with trillions of parameters

Mar 27, 2024 pm 09:41 PM

Recognition from the first prize of Science and Technology Progress Award: Tencent solved the problem of training large models with trillions of parameters

Mar 27, 2024 pm 09:41 PM

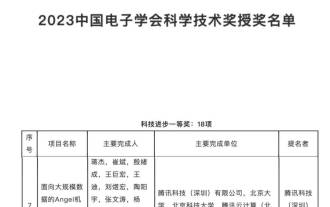

The list of recipients of the China Electronics Society’s 2023 Science and Technology Awards has been announced. This time, we discovered a familiar figure—Tencent’s Angel machine learning platform. In the current era of rapid development of large models, the Science and Technology Award is awarded to machine learning platform research and application projects, fully affirming the value and importance of model training platforms. The Science and Technology Award recognizes the research and application of machine learning platform projects, and fully recognizes the value and importance of model training platforms, especially in the context of the rapid development of large-scale models. With the rise of deep learning, major companies have begun to realize the importance of machine learning platforms in the development of artificial intelligence technology. Google, Microsoft, Nvidia and other companies have launched their own machine learning platforms to accelerate

How to make a WeChat link? Sharing how to create WeChat links

Mar 09, 2024 pm 09:37 PM

How to make a WeChat link? Sharing how to create WeChat links

Mar 09, 2024 pm 09:37 PM

WeChat, as a popular social software, not only provides people with the convenience of instant messaging, but also integrates a variety of functions to enrich users' social experience. Among them, the creation and sharing of WeChat links is an important part of WeChat functions. The production of WeChat links mainly relies on the WeChat public platform and its related functions, as well as third-party tools. The following are several common methods of making WeChat links. How to make a WeChat link? The first way to create WeChat links is to use the image and text editor of the WeChat public platform. 1. Log in to the WeChat public platform and enter the image and text editing interface. 2. Add text or images in the editor, and then use the link button to add the required link. This method is suitable for simple text or image links. The second method is to use HTML code

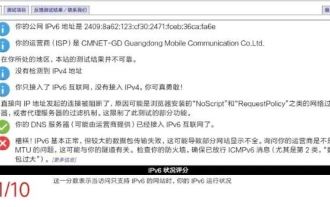

Should I enable IPv6 on my home router? 'Must-see: Advantages of enabling IPV6 on your home router'

Feb 07, 2024 am 09:03 AM

Should I enable IPv6 on my home router? 'Must-see: Advantages of enabling IPV6 on your home router'

Feb 07, 2024 am 09:03 AM

IPv4 is exhausted and IPv6 is urgently needed, but is this upgrade just a passive change? What does IPv6 mean to the general public? How much change can the comprehensive upgrade of IPv6 bring to our network? 01 Large-scale IPv6 transformation is about to be realized. Recently, the General Office of the Ministry of Industry and Information Technology and the General Office of the State Administration of Radio and Television issued a notice proposing requirements to promote the IPv6 transformation of Internet TV services. China Mobile, Alibaba Cloud, Tencent Cloud, Baidu Cloud, JD Cloud, Huawei Cloud and Wangsu Technology need to carry out IPv6 transformation of the content distribution network (CDN) related to Internet TV business. By the end of 2020, Internet TV service capabilities based on IPv6 protocol will reach 85% of IPv4

Tencent Hunyuan large model has been fully reduced in price! Hunyuan-lite is free from now on

Jun 02, 2024 pm 08:07 PM

Tencent Hunyuan large model has been fully reduced in price! Hunyuan-lite is free from now on

Jun 02, 2024 pm 08:07 PM

On May 22, Tencent Cloud announced a new large model upgrade plan. One of the main models, Hunyuan-lite model, the total API input and output length is planned to be upgraded from the current 4k to 256k, and the price is adjusted from 0.008 yuan/thousand tokens to fully free. The Hunyuan-standardAPI input price dropped from 0.01 yuan/thousand tokens to 0.0045 yuan/thousand tokens, a decrease of 55%, and the API output price dropped from 0.01 yuan/thousand tokens to 0.005 yuan/thousand tokens, a decrease of 50%. The newly launched Hunyuan-standard-256k has the ability to process ultra-long text of more than 380,000 characters, and the API input price has been reduced to 0.015 yuan/thousand toke.

GPT Store can't even open its doors. How dare this domestic platform take this path? ?

Apr 19, 2024 pm 09:30 PM

GPT Store can't even open its doors. How dare this domestic platform take this path? ?

Apr 19, 2024 pm 09:30 PM

Pay attention, this man has connected more than 1,000 large models, allowing you to plug in and switch seamlessly. Recently, a visual AI workflow has been launched: giving you an intuitive drag-and-drop interface, you can drag, pull, and drag to arrange your own workflow on an infinite canvas. As the saying goes, war costs speed, and Qubit heard that within 48 hours of this AIWorkflow going online, users had already configured personal workflows with more than 100 nodes. Without further ado, what I want to talk about today is Dify, an LLMOps company, and its CEO Zhang Luyu. Zhang Luyu is also the founder of Dify. Before joining the business, he had 11 years of experience in the Internet industry. I am engaged in product design, understand project management, and have some unique insights into SaaS. Later he

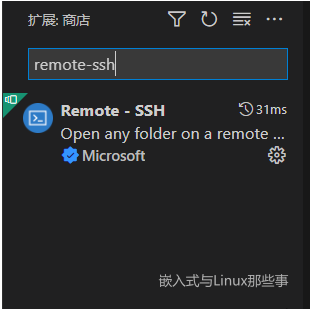

Use vscode to remotely debug the Linux kernel

Feb 05, 2024 pm 12:30 PM

Use vscode to remotely debug the Linux kernel

Feb 05, 2024 pm 12:30 PM

Preface The previous article introduced the use of QEMU+GDB to debug the Linux kernel. However, sometimes it is not very convenient to directly use GDB to debug and view the code. Therefore, on such an important occasion, how can the artifact of vscode be missing? This article introduces how to use vscode to remotely debug the kernel. Environment for this article: Windows 10 vs Code Ubuntu 20.04. I personally use Tencent Cloud Server, so I save the process of installing a virtual machine. Start directly from vscode configuration. Install the vscode plug-in remote-ssh. Find the Remote-SSH plug-in in the plug-in library and install it. After the installation is complete, there will be an additional function on the right toolbar. Press F1 to call out the pair.

Tencent Hunyuan upgrades model matrix, launching 256k long text model on the cloud

Jun 01, 2024 pm 01:46 PM

Tencent Hunyuan upgrades model matrix, launching 256k long text model on the cloud

Jun 01, 2024 pm 01:46 PM

The implementation of large models is accelerating, and "industrial practicality" has become a development consensus. On May 17, 2024, the Tencent Cloud Generative AI Industry Application Summit was held in Beijing, announcing a series of progress in large model development and application products. Tencent's Hunyuan large model capabilities continue to upgrade. Multiple versions of models hunyuan-pro, hunyuan-standard, and hunyuan-lite are open to the public through Tencent Cloud to meet the model needs of enterprise customers and developers in different scenarios, and to implement the most cost-effective model solutions. . Tencent Cloud releases three major tools: knowledge engine for large models, image creation engine, and video creation engine, creating a native tool chain for the era of large models, simplifying data access, model fine-tuning, and application development processes through PaaS services to help enterprises

C++ High-Performance Programming Tips: Optimizing Code for Large-Scale Data Processing

Nov 27, 2023 am 08:29 AM

C++ High-Performance Programming Tips: Optimizing Code for Large-Scale Data Processing

Nov 27, 2023 am 08:29 AM

C++ is a high-performance programming language that provides developers with flexibility and scalability. Especially in large-scale data processing scenarios, the efficiency and fast computing speed of C++ are very important. This article will introduce some techniques for optimizing C++ code to cope with large-scale data processing needs. Using STL containers instead of traditional arrays In C++ programming, arrays are one of the commonly used data structures. However, in large-scale data processing, using STL containers, such as vector, deque, list, set, etc., can be more