Combining diffusion models with NeRF, Tsinghua proposes a new text-to-3D method that achieves SOTA


AI models that synthesize 3D content from text have a new SOTA!

Recently, the research group of Professor Liu Yongjin at Tsinghua University proposed a new diffusion-based method for generating 3D content from text.

Both the consistency across viewpoints and the match with the text prompt are markedly improved over previous methods.


Text-to-3D generation is an active research topic in 3D AIGC and has received widespread attention from academia and industry.

The new model proposed by Professor Liu Yongjin's research team is called TICD (Text-Image Conditioned Diffusion), and it reaches the SOTA level on the T3Bench benchmark.

The paper has been released, and the code will be open-sourced soon.

Evaluation results reach SOTA

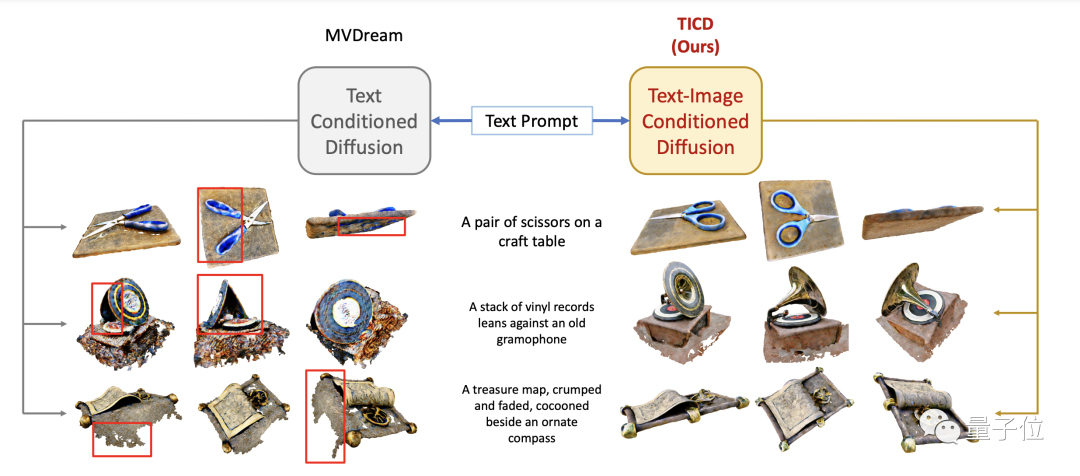

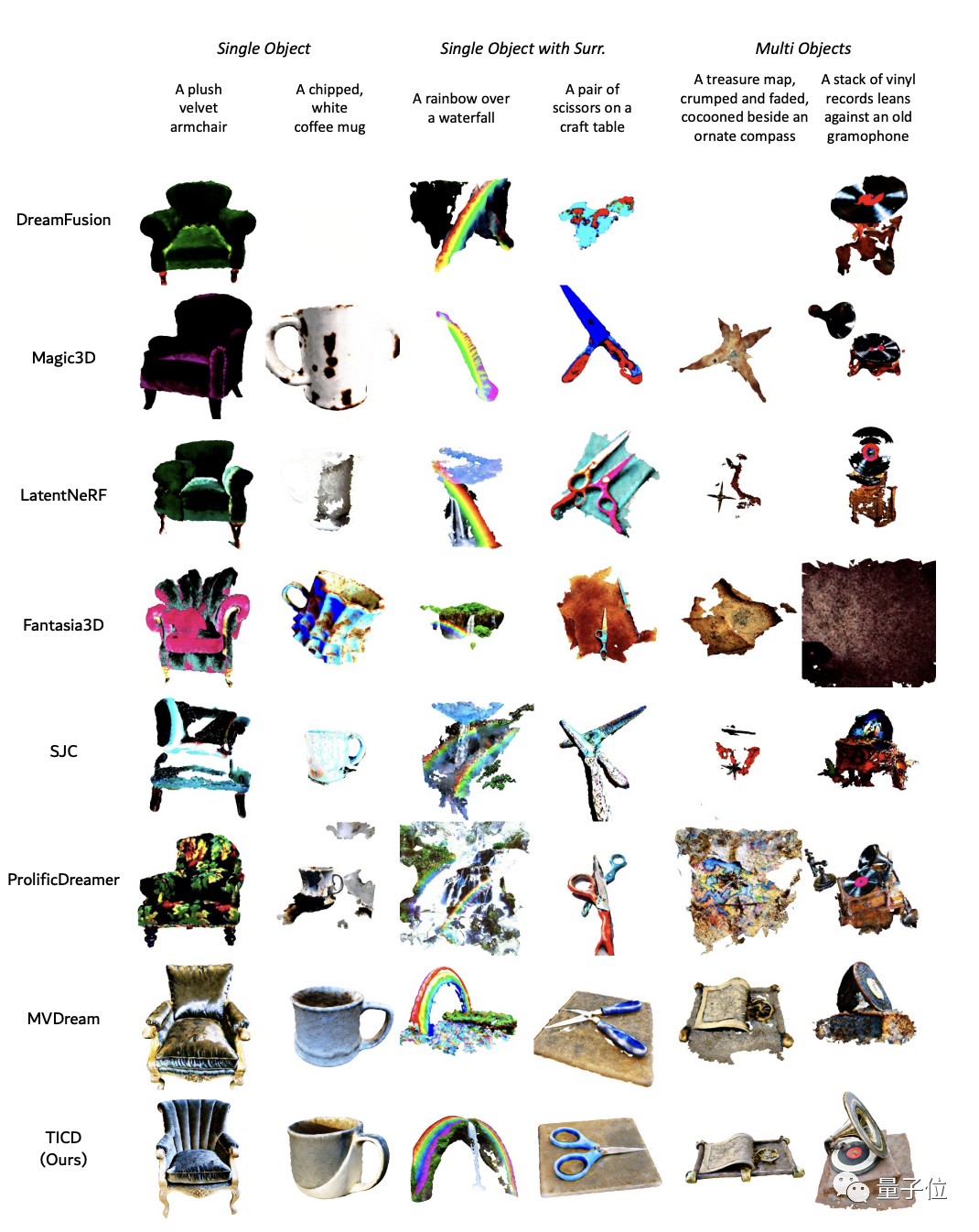

To evaluate the TICD method, the research team first ran qualitative experiments comparing it against several strong earlier methods.

The results show that the 3D content generated by TICD is of higher quality, is visually sharper, and matches the text prompt more closely.


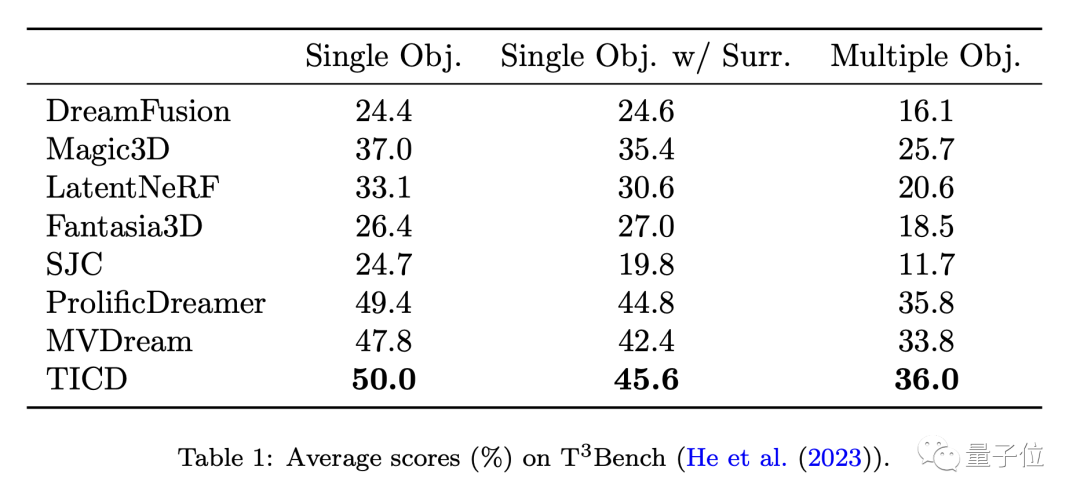

To further evaluate these models, the team quantitatively compared TICD against the same methods on the T3Bench benchmark.

The results show that TICD achieved the best results on all three prompt sets (single object, single object with background, and multiple objects), indicating an overall advantage in both generation quality and text alignment.


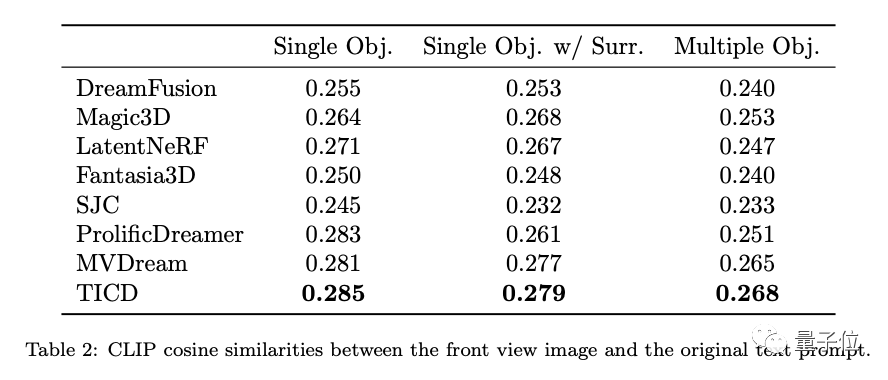

In addition, to further evaluate text alignment, the research team measured the CLIP cosine similarity between images rendered from the generated 3D objects and the original text prompts. TICD again performed best.
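As a rough illustration of the metric, the sketch below computes the cosine similarity between embedding vectors and averages it over several rendered views. It assumes the CLIP image and text embeddings have already been computed elsewhere. The function names `cosine_similarity` and `mean_text_alignment` are hypothetical and not taken from the paper, and averaging over views is an assumption about how the per-object score might be aggregated.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine similarity between two 1-D embedding vectors."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def mean_text_alignment(image_embeddings, text_embedding):
    """Average similarity of several rendered-view embeddings to one prompt embedding.

    Averaging over views is an assumption made for this sketch.
    """
    scores = [cosine_similarity(e, text_embedding) for e in image_embeddings]
    return float(np.mean(scores))
```

A higher value means the rendered views lie closer to the prompt in the embedding space.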

So, how does the TICD method achieve such an effect?

Incorporating multi-view consistency prior into NeRF supervision

Current mainstream text-to-3D methods mostly rely on a pre-trained 2D diffusion model and optimize a Neural Radiance Field (NeRF) through Score Distillation Sampling (SDS) to generate entirely new 3D models.

However, the supervision provided by such a pre-trained diffusion model is limited to the input text itself. It does not constrain consistency across multiple views, which can lead to problems such as poor geometry in the generated results.
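For readers unfamiliar with SDS, here is a minimal sketch of the per-image gradient in its commonly cited form: noise a rendered view, ask a frozen diffusion model to predict that noise, and use the weighted difference as the gradient with respect to the rendering. This is not TICD's implementation. The callable `predict_noise` is a hypothetical stand-in for a text-conditioned diffusion model, and the default weight `1 - alpha_bar_t` is one common choice assumed here.

```python
import numpy as np

def sds_gradient(x, predict_noise, alpha_bar_t, rng, weight=None):
    """One-sample SDS gradient with respect to a rendered image x.

    predict_noise(x_t) is a hypothetical stand-in for a frozen,
    text-conditioned diffusion model's noise prediction.
    """
    eps = rng.standard_normal(x.shape)  # noise injected at this timestep
    x_t = np.sqrt(alpha_bar_t) * x + np.sqrt(1.0 - alpha_bar_t) * eps
    eps_hat = predict_noise(x_t)        # model's estimate of that noise
    w = (1.0 - alpha_bar_t) if weight is None else weight  # assumed weighting
    return w * (eps_hat - eps)
```

In a full pipeline this gradient would be backpropagated through the renderer to the NeRF parameters. Note that the only conditioning here is the text, which is exactly the limitation the paragraph above describes.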

To introduce multi-view consistency into the diffusion prior, some recent studies fine-tune 2D diffusion models on multi-view data, but they still lack fine-grained continuity between views.

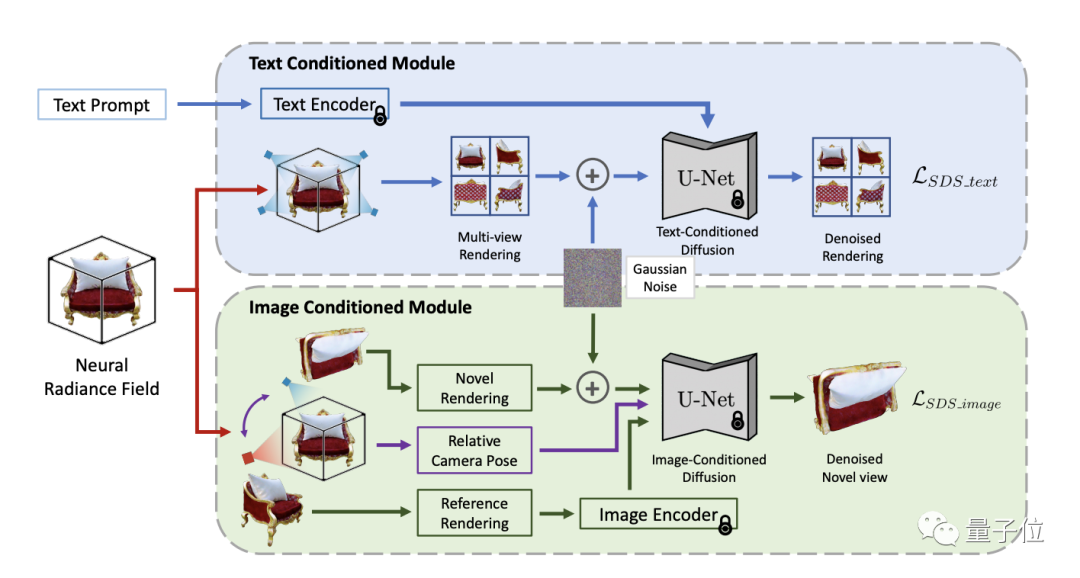

To address this challenge, the TICD method incorporates both text-conditioned and image-conditioned multi-view images into the supervision signal for NeRF optimization. These ensure, respectively, that the 3D information aligns with the text prompt and that different views of the 3D object are strongly consistent, which effectively improves the quality of the generated 3D models.


In terms of workflow, TICD first samples several sets of orthogonal reference camera viewpoints and uses NeRF to render the corresponding reference views. A text-conditioned diffusion model is then applied to these reference views to constrain the overall consistency between content and text.
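The article does not say how the orthogonal viewpoints are sampled, so the following is only one plausible sketch: four cameras on a sphere around the origin whose azimuths are 90 degrees apart, starting from an arbitrary base azimuth. The function name, the choice of four views, and the fixed elevation are all assumptions made for illustration.

```python
import numpy as np

def orthogonal_camera_positions(base_azimuth_deg, elevation_deg=0.0, radius=1.0):
    """Four camera positions whose azimuths are 90 degrees apart.

    All cameras are assumed to look at the origin. Returns an array of
    shape (4, 3) holding (x, y, z) positions.
    """
    az = np.deg2rad(base_azimuth_deg + np.arange(4) * 90.0)
    el = np.deg2rad(elevation_deg)
    x = radius * np.cos(el) * np.cos(az)
    y = radius * np.cos(el) * np.sin(az)
    z = np.full(4, radius * np.sin(el))
    return np.stack([x, y, z], axis=1)
```

Drawing `base_azimuth_deg` at random on each iteration would yield a different orthogonal set each time, which is one way to read "several sets" in the description above.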

On this basis, several sets of reference camera viewpoints are selected, and for each one an additional view is rendered from a new viewpoint. The two views, together with the pose relationship between their viewpoints, then serve as a new condition, and an image-conditioned diffusion model constrains the consistency of details across viewpoints.
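The "pose relationship" between a reference viewpoint and a new viewpoint can be expressed as a relative rigid transform. The sketch below assumes each camera pose is given as a 4x4 camera-to-world matrix. How TICD actually encodes the pose condition is not stated in the article, so this is only the standard geometric definition, with a hypothetical function name.

```python
import numpy as np

def relative_pose(ref_cam_to_world, new_cam_to_world):
    """4x4 transform taking points from the reference camera frame
    to the new camera frame.

    Both inputs are assumed to be 4x4 camera-to-world matrices.
    """
    return np.linalg.inv(new_cam_to_world) @ ref_cam_to_world
```

If the two poses are identical, the result is the identity matrix, meaning there is no change of viewpoint to condition on.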

Combining the supervision signals from the two diffusion models, TICD updates the parameters of the NeRF network and iterates until the final NeRF model renders high-quality, geometrically clear, and text-consistent 3D content.

In addition, the TICD method can effectively eliminate problems that existing methods may exhibit on certain text inputs, such as vanishing geometry, excessive incorrect geometry, and color confusion.
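A highly simplified view of this combined update is a gradient step on the sum of the two supervision gradients. The weights `w_text` and `w_image`, the learning rate, and plain gradient descent are all assumptions made for this sketch. The article does not specify how the two signals are balanced or which optimizer is used.

```python
import numpy as np

def combined_update(params, grad_text, grad_image, lr=0.01, w_text=1.0, w_image=1.0):
    """One gradient-descent step on NeRF parameters using both signals.

    grad_text and grad_image stand for the gradients from the text-conditioned
    and image-conditioned diffusion models (hypothetical inputs).
    """
    grad = w_text * grad_text + w_image * grad_image
    return params - lr * grad
```

Repeating this step with freshly sampled viewpoints at each iteration corresponds to the iterative optimization described above.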

Paper address: https://www.php.cn/link/8553adf92deaf5279bcc6f9813c8fdcc

The above is the detailed content of Combining the diffusion model with NeRF, Tsinghua Wensheng proposed a new 3D method to achieve SOTA. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1387

1387

52

52

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI is indeed changing mathematics. Recently, Tao Zhexuan, who has been paying close attention to this issue, forwarded the latest issue of "Bulletin of the American Mathematical Society" (Bulletin of the American Mathematical Society). Focusing on the topic "Will machines change mathematics?", many mathematicians expressed their opinions. The whole process was full of sparks, hardcore and exciting. The author has a strong lineup, including Fields Medal winner Akshay Venkatesh, Chinese mathematician Zheng Lejun, NYU computer scientist Ernest Davis and many other well-known scholars in the industry. The world of AI has changed dramatically. You know, many of these articles were submitted a year ago.

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

The performance of JAX, promoted by Google, has surpassed that of Pytorch and TensorFlow in recent benchmark tests, ranking first in 7 indicators. And the test was not done on the TPU with the best JAX performance. Although among developers, Pytorch is still more popular than Tensorflow. But in the future, perhaps more large models will be trained and run based on the JAX platform. Models Recently, the Keras team benchmarked three backends (TensorFlow, JAX, PyTorch) with the native PyTorch implementation and Keras2 with TensorFlow. First, they select a set of mainstream

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

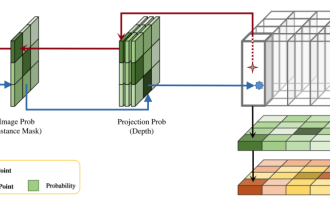

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving

DualBEV: significantly surpassing BEVFormer and BEVDet4D, open the book!

Mar 21, 2024 pm 05:21 PM

DualBEV: significantly surpassing BEVFormer and BEVDet4D, open the book!

Mar 21, 2024 pm 05:21 PM

This paper explores the problem of accurately detecting objects from different viewing angles (such as perspective and bird's-eye view) in autonomous driving, especially how to effectively transform features from perspective (PV) to bird's-eye view (BEV) space. Transformation is implemented via the Visual Transformation (VT) module. Existing methods are broadly divided into two strategies: 2D to 3D and 3D to 2D conversion. 2D-to-3D methods improve dense 2D features by predicting depth probabilities, but the inherent uncertainty of depth predictions, especially in distant regions, may introduce inaccuracies. While 3D to 2D methods usually use 3D queries to sample 2D features and learn the attention weights of the correspondence between 3D and 2D features through a Transformer, which increases the computational and deployment time.