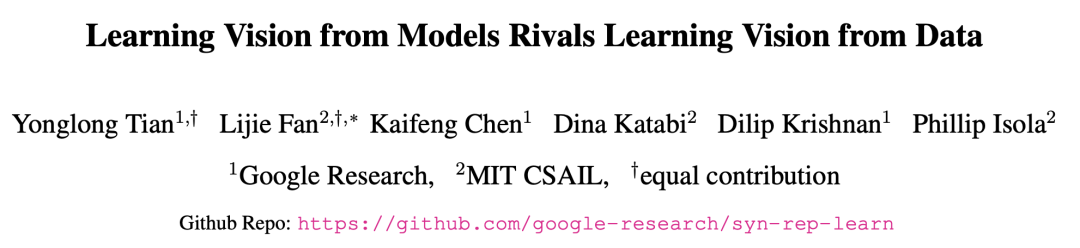

Latest research from Google and MIT: obtaining high-quality data is not difficult, and large models are the solution

Obtaining high-quality data has become a major bottleneck in training today's large models.

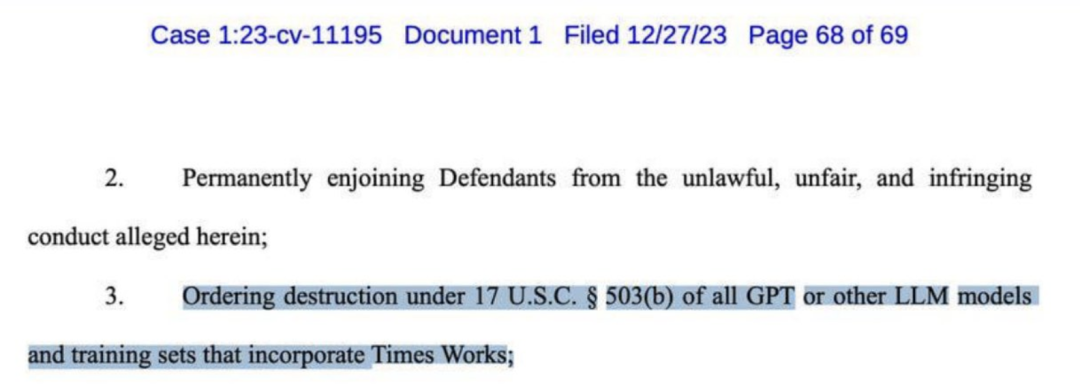

A few days ago, the New York Times sued OpenAI, seeking billions of dollars in damages. The complaint lists numerous pieces of evidence of plagiarism by GPT-4.

The New York Times even called for the destruction of nearly all large models such as GPT.

Many prominent figures in the AI industry have long believed that "synthetic data" may be the best solution to this problem.

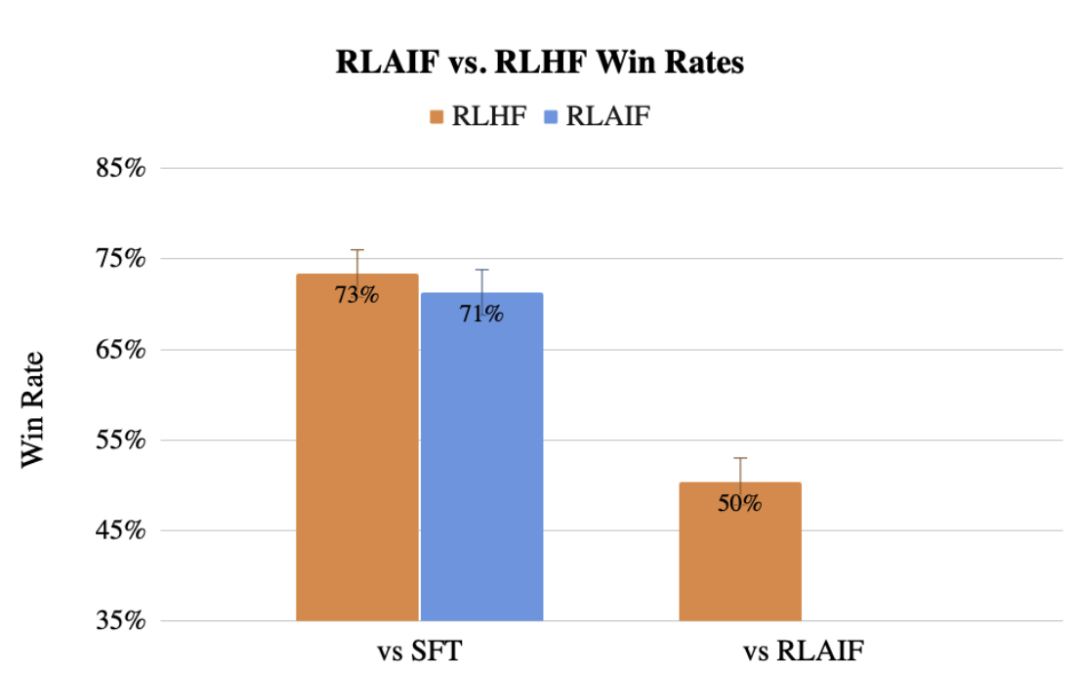

Earlier, a Google team also proposed RLAIF, a method that uses an LLM in place of humans for preference labeling, with results that are no worse than those obtained from human labels.

Now researchers from Google and MIT have found that learning from large models can yield representations that rival those of the best models trained on real data.

This latest method is called SynCLR. It learns visual representations entirely from synthetic images and synthetic descriptions, without any real data.

Paper: https://arxiv.org/abs/2312.17742

Experimental results show that representations learned with SynCLR can transfer as well as those of OpenAI's CLIP on ImageNet.

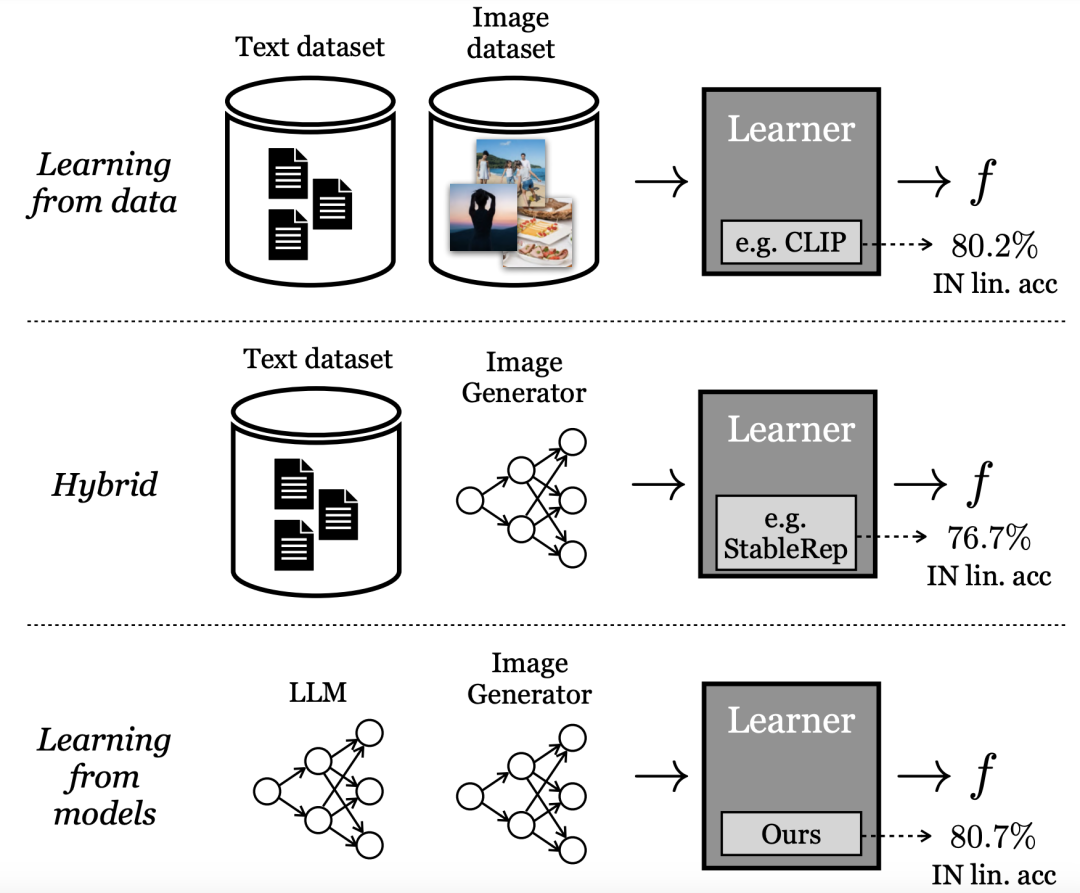

Learning from generative models

The most effective methods for learning "visual representations" currently rely on large-scale real datasets. However, collecting real data comes with many difficulties.

To reduce the cost of data collection, the researchers in this paper ask:

Is synthetic data sampled from off-the-shelf generative models a viable path toward building large-scale datasets for training state-of-the-art visual representations?

In contrast to learning directly from data, the Google researchers call this paradigm "learning from models". As a data source for building large-scale training sets, models have several advantages:

- They provide new ways to curate data through latent variables, conditioning variables, and hyperparameters.

- Models are also easier to share and store (because models are easier to compress than data), and they can produce an unlimited number of data samples.

A growing body of literature explores these properties, along with other advantages and disadvantages of generative models as a data source for training downstream models.

Some of these methods take a hybrid approach, i.e. they mix real and synthetic datasets, or require a real dataset in order to generate another synthetic dataset.

Other methods try to learn representations from purely "synthetic data", but lag far behind the best-performing models.

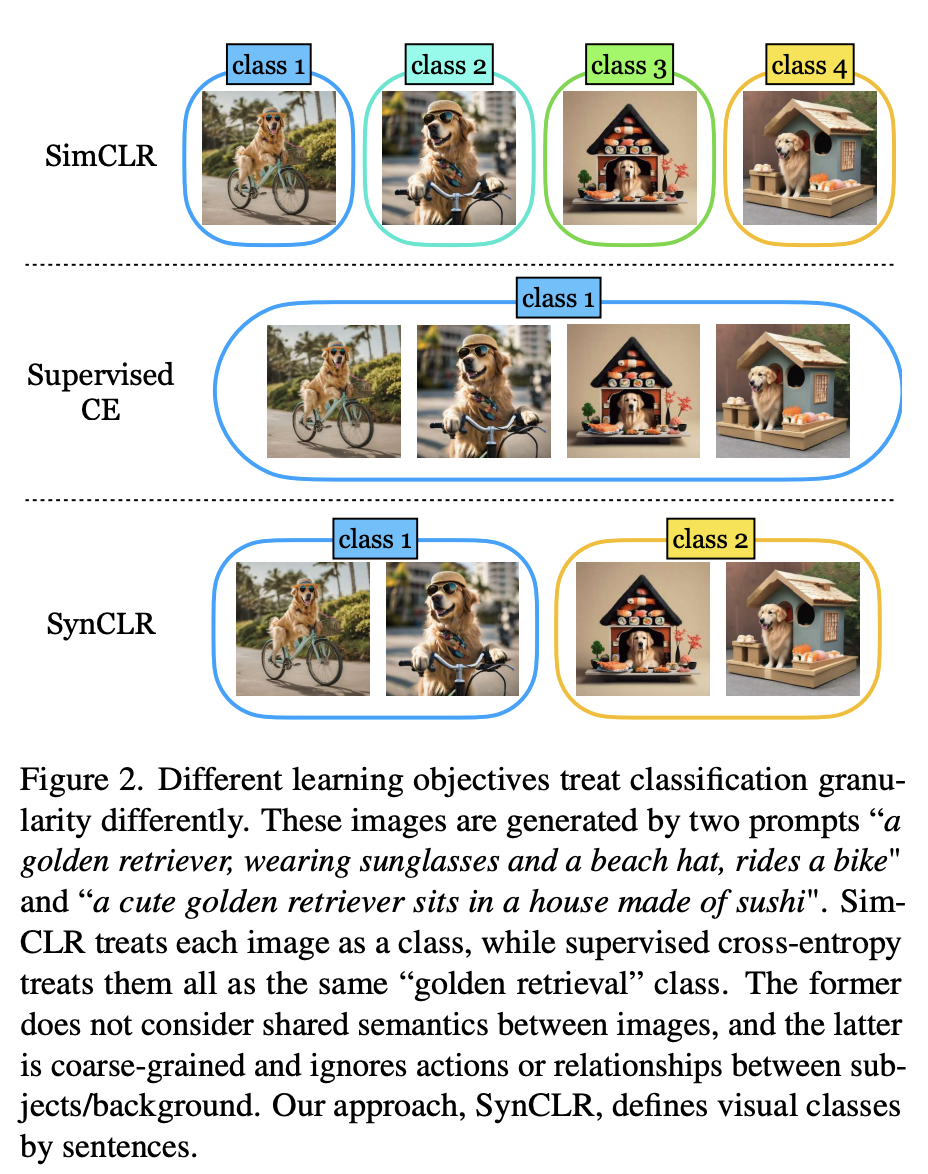

In the paper, the method proposed by the researchers uses generative models to redefine the granularity of visual classes.

As shown in Figure 2, four images were generated using two prompts: "A golden retriever wearing sunglasses and a beach hat rides a bicycle" and "A cute golden retriever sits in a house made of sushi".

Traditional self-supervised methods (such as SimCLR) treat each of these images as a different class, so the embeddings of different images are pushed apart without explicitly considering the semantics the images share.

At the other extreme, supervised learning methods (i.e. SupCE) treat all these images as a single class (such as "golden retriever"). This ignores semantic nuances in the images, such as a dog riding a bicycle in one pair of images and a dog sitting in a house made of sushi in the other.

In contrast, the SynCLR approach treats descriptions as classes, i.e. one visual class per description.

In this way, the pictures can be grouped according to the two concepts of "riding a bicycle" and "sitting in a house made of sushi".

This kind of granularity is difficult to mine in real data because collecting multiple images by a given description is not trivial, especially when the number of descriptions increases.

However, the text-to-image diffusion model fundamentally has this capability.

By simply conditioning on the same description and using different noise inputs, a text-to-image diffusion model can generate different images that match the same description.
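The three notions of "class" described above can be made concrete with a small sketch. The image ids, captions, and the `group_by` helper below are hypothetical toy data for illustration, not anything taken from the paper's code.

```python
from collections import defaultdict

# Hypothetical toy data: (image_id, description, coarse_category) triples.
images = [
    ("img0", "A golden retriever wearing sunglasses and a beach hat rides a bicycle", "golden retriever"),
    ("img1", "A golden retriever wearing sunglasses and a beach hat rides a bicycle", "golden retriever"),
    ("img2", "A cute golden retriever sits in a house made of sushi", "golden retriever"),
    ("img3", "A cute golden retriever sits in a house made of sushi", "golden retriever"),
]


def group_by(key_fn):
    """Group image ids by the class key that a given method would assign."""
    groups = defaultdict(list)
    for image_id, description, category in images:
        groups[key_fn(image_id, description, category)].append(image_id)
    return sorted(groups.values())


# SimCLR-style: every image is its own class.
simclr_groups = group_by(lambda image_id, description, category: image_id)
# SupCE-style: one class per coarse category.
supce_groups = group_by(lambda image_id, description, category: category)
# SynCLR-style: one class per description.
synclr_groups = group_by(lambda image_id, description, category: description)

print(simclr_groups)  # four singleton groups
print(supce_groups)   # one group with all four images
print(synclr_groups)  # two groups of two images each
```

Grouping by description sits between the two extremes: images that share a prompt are positives for each other, while images from the other prompt remain a separate class.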

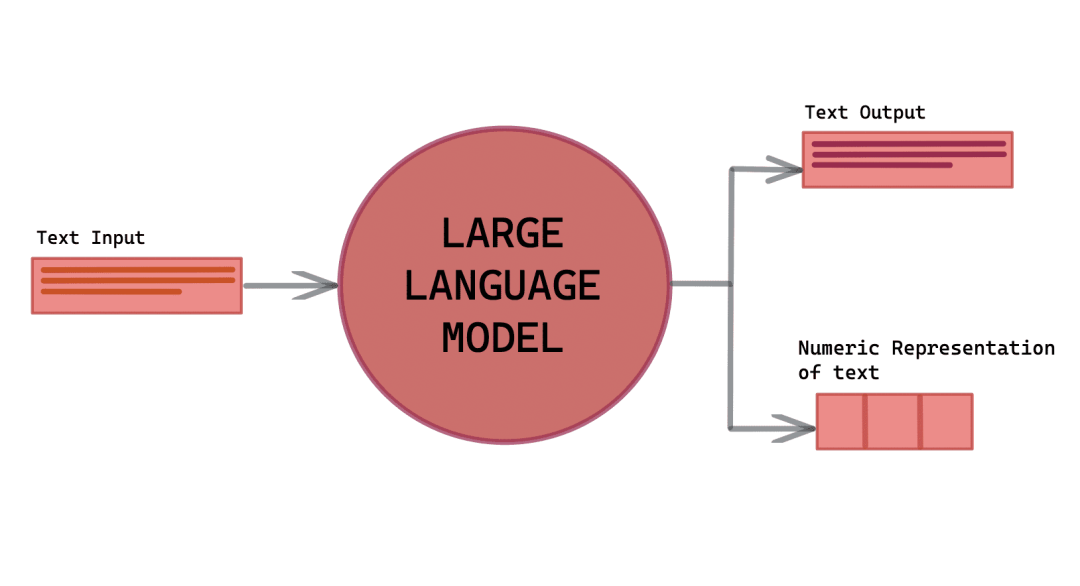

Specifically, the authors study the problem of learning visual encoders without real image or text data.

The new method relies on three key resources: a language generative model (g1), a text-to-image generative model (g2), and a curated list of visual concepts (C).

The pipeline consists of three steps:

(1) Use (g1) to synthesize a comprehensive set of image descriptions T that covers the various visual concepts in C;

(2) For each description in T, use (g2) to generate multiple images, ultimately producing an extensive synthetic image dataset X;

(3) Train on X to obtain the visual representation encoder f.

Llama-2 7B and Stable Diffusion 1.5 are used as (g1) and (g2), respectively, because of their fast inference speed.
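The data flow of steps (1) and (2) can be sketched as follows. This is only an illustration under stated assumptions: `synthesize_descriptions` and `generate_images` are hypothetical stand-ins for g1 and g2 that return placeholder strings and noise seeds rather than calling a real language model or diffusion model.

```python
import random


def synthesize_descriptions(concepts, per_concept, rng):
    """Hypothetical stand-in for g1: returns description strings for each concept."""
    templates = ["a photo of a {}", "a {} in a park", "a close-up of a {}"]
    return [
        rng.choice(templates).format(concept)
        for concept in concepts
        for _ in range(per_concept)
    ]


def generate_images(description, per_description, rng):
    """Hypothetical stand-in for g2: each fake 'image' is just a noise seed."""
    return [
        {"description": description, "seed": rng.randrange(2**31)}
        for _ in range(per_description)
    ]


def build_dataset(concepts, per_concept=2, per_description=4, seed=0):
    rng = random.Random(seed)
    # Step (1): description set T covering the concepts in C.
    descriptions = synthesize_descriptions(concepts, per_concept, rng)
    # Step (2): image set X, each image labeled with the index of its description.
    dataset = []
    for description_id, description in enumerate(descriptions):
        for image in generate_images(description, per_description, rng):
            dataset.append((image, description_id))
    return descriptions, dataset


descriptions, dataset = build_dataset(["golden retriever", "bicycle"])
print(len(descriptions), len(dataset))  # 4 descriptions, 16 images
```

Step (3) would then train the encoder f on `dataset`, using the description index as the grouping signal for positives.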

Synthetic description

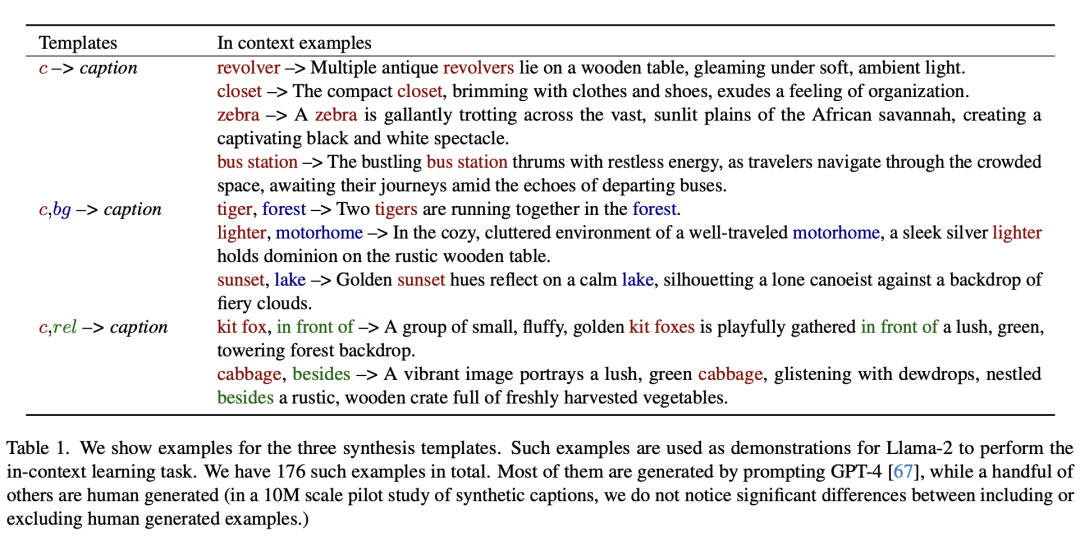

To harness powerful text-to-image models for generating large training image datasets, one first needs a set of descriptions that not only describe images accurately but are also diverse enough to cover a wide range of visual concepts.

In response, the authors developed a scalable method for creating such a large set of descriptions, leveraging the in-context learning capabilities of large language models.

The following shows three examples of synthesis templates.

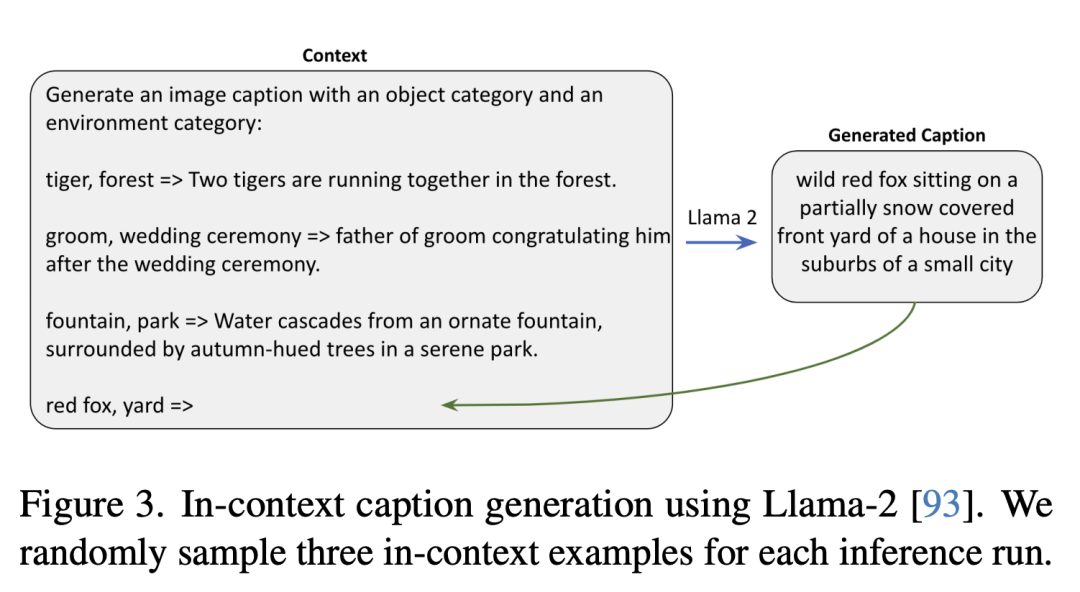

The following shows in-context description generation using Llama-2. The researchers randomly sample three in-context examples for each inference run.
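A minimal sketch of this sampling step is shown below. The example pool, the `concept => description` line format, and the `build_prompt` helper are assumptions made for illustration; the paper's actual templates appear in the figure above.

```python
import random

# Hypothetical pool of (concept, description) in-context examples.
EXAMPLE_POOL = [
    ("tiger", "a tiger resting in tall grass at sunset"),
    ("kettle", "a steel kettle steaming on a gas stove"),
    ("sailboat", "a small sailboat crossing a calm bay"),
    ("violin", "a violin lying on a wooden table"),
    ("lighthouse", "a lighthouse on a rocky coast in fog"),
]


def build_prompt(example_pool, concept, rng, num_examples=3):
    """Sample in-context examples at random and append the query concept."""
    examples = rng.sample(example_pool, num_examples)
    lines = [f"{c} => {d}" for c, d in examples]
    lines.append(f"{concept} =>")  # the language model would complete this line
    return "\n".join(lines)


rng = random.Random(0)
prompt = build_prompt(EXAMPLE_POOL, "golden retriever", rng)
print(prompt)
```

Because the examples are resampled on every call, repeated runs for the same concept give the language model different contexts, which is one way to encourage varied descriptions.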

Synthetic Image

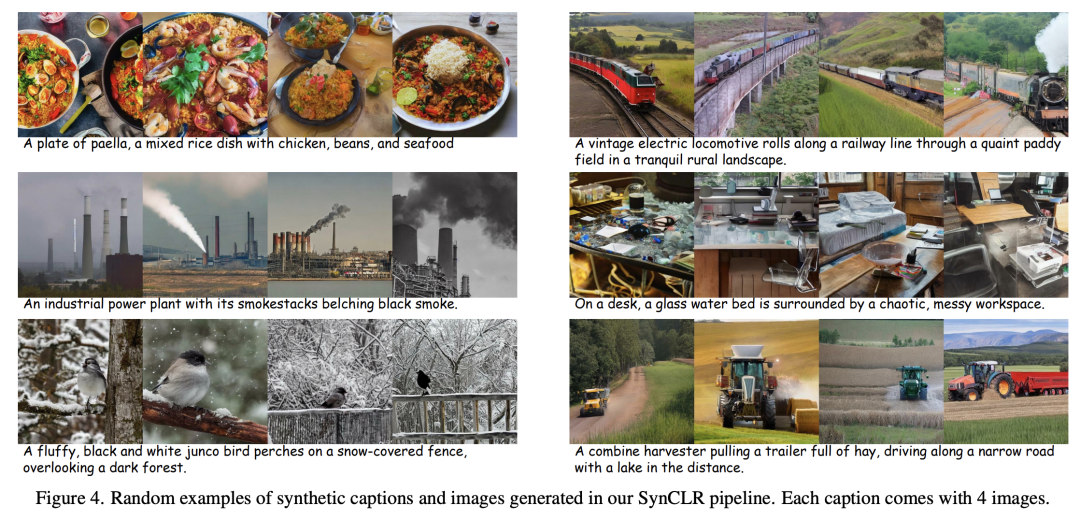

For each text description, the researchers start the reverse diffusion process from different random noise, resulting in a variety of images.

In this process, the classifier-free guidance (CFG) scale is a key factor.

The higher the CFG scale, the better the sample quality and the text-image consistency. The lower the scale, the greater the diversity of the samples, that is, the closer they are to the original conditional distribution of images given the text.
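The role of the CFG scale can be illustrated with the standard guidance rule, which extrapolates from the unconditional noise prediction toward the conditional one. The sketch below uses plain Python lists as stand-ins for noise tensors, and `cfg_combine` is a hypothetical helper name; it follows the convention commonly used with Stable Diffusion, where a scale of 1 recovers the purely conditional prediction.

```python
def cfg_combine(eps_uncond, eps_cond, scale):
    """Classifier-free guidance: eps = eps_uncond + scale * (eps_cond - eps_uncond)."""
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Toy noise predictions (stand-ins for the denoiser's outputs).
eps_uncond = [0.0, 1.0]
eps_cond = [1.0, 3.0]

unconditional = cfg_combine(eps_uncond, eps_cond, scale=0.0)  # ignores the text
conditional = cfg_combine(eps_uncond, eps_cond, scale=1.0)    # plain conditional model
guided = cfg_combine(eps_uncond, eps_cond, scale=2.0)         # pushed further toward the text

print(unconditional, conditional, guided)
```

Scales above 1 exaggerate the difference between the conditional and unconditional predictions, which is why they trade diversity for stronger agreement with the text.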

Representation learning

In the paper, the representation learning method is based on StableRep.

The key component of the method proposed by the authors is the multi-positive contrastive learning loss, which works by aligning (in embedding space) images generated from the same description.
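A minimal sketch of a multi-positive contrastive loss for a single anchor is given below, in the form usually attributed to StableRep: a softmax over anchor-candidate similarities is compared, via cross-entropy, with a target distribution that is uniform over the positives. The function name, the temperature value, and the toy embeddings are assumptions for illustration; the paper's training code is not reproduced here.

```python
import math


def multi_positive_loss(anchor, candidates, is_positive, temperature=0.1):
    """Cross-entropy between a uniform-over-positives target and softmax similarities."""
    logits = [
        sum(a * b for a, b in zip(anchor, cand)) / temperature
        for cand in candidates
    ]
    # Numerically stable log-softmax over the candidates.
    max_logit = max(logits)
    log_norm = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
    log_q = [l - log_norm for l in logits]
    num_pos = sum(is_positive)
    if num_pos == 0:
        raise ValueError("the anchor needs at least one positive candidate")
    # Target distribution: equal mass on every positive, zero elsewhere.
    p = [flag / num_pos for flag in is_positive]
    return -sum(pi * lq for pi, lq in zip(p, log_q))


anchor = [1.0, 0.0]
candidates = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]

# Positives are the two candidates generated from the anchor's description.
aligned = multi_positive_loss(anchor, candidates, [1, 1, 0, 0])
# Same embeddings, but the positives are the dissimilar candidates.
misaligned = multi_positive_loss(anchor, candidates, [0, 0, 1, 1])
print(aligned < misaligned)
```

The loss is small when all images from the same description sit close to the anchor and large when they do not, which is the alignment behavior described above.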

In addition, various techniques from other self-supervised learning methods were also combined in the study.

Comparable to OpenAI’s CLIP

In the experimental evaluation, the researchers first conducted an ablation study to evaluate the effectiveness of the various designs and modules within the pipeline, and then scaled up the amount of synthetic data.

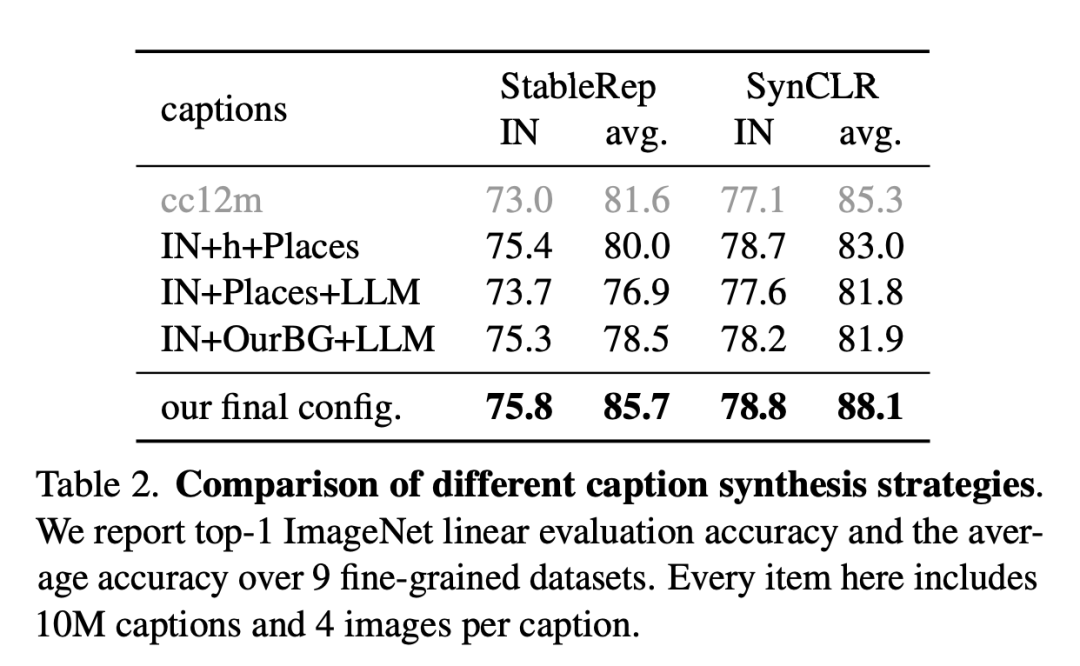

The following figure is a comparison of different description synthesis strategies.

The researchers report the ImageNet linear evaluation accuracy and average accuracy on 9 fine-grained datasets. Each item here includes 10 million descriptions and 4 pictures per description.

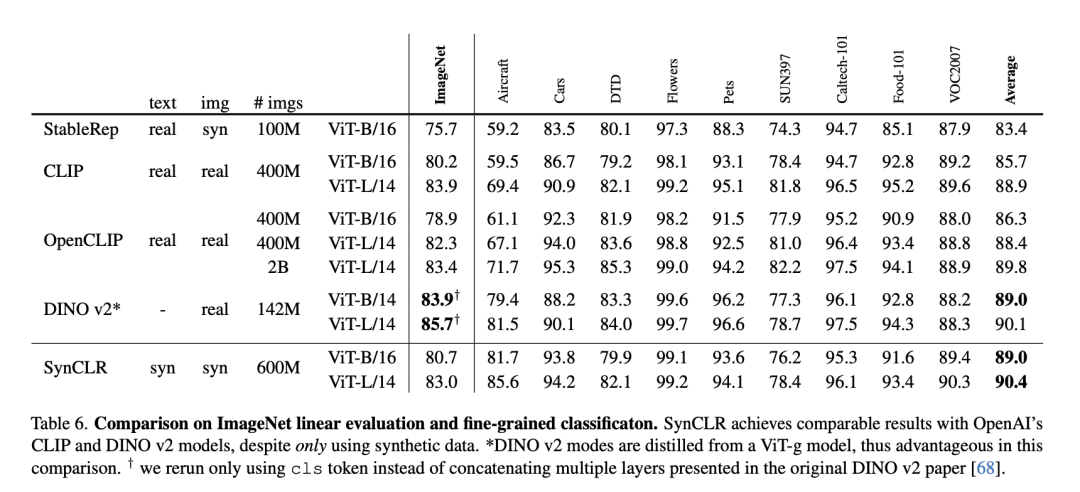

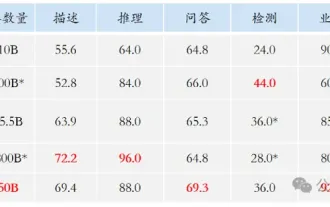

The following table is a comparison of ImageNet linear evaluation and fine-grained classification.

Despite using only synthetic data, SynCLR achieved comparable results to OpenAI’s CLIP and DINO v2 models.

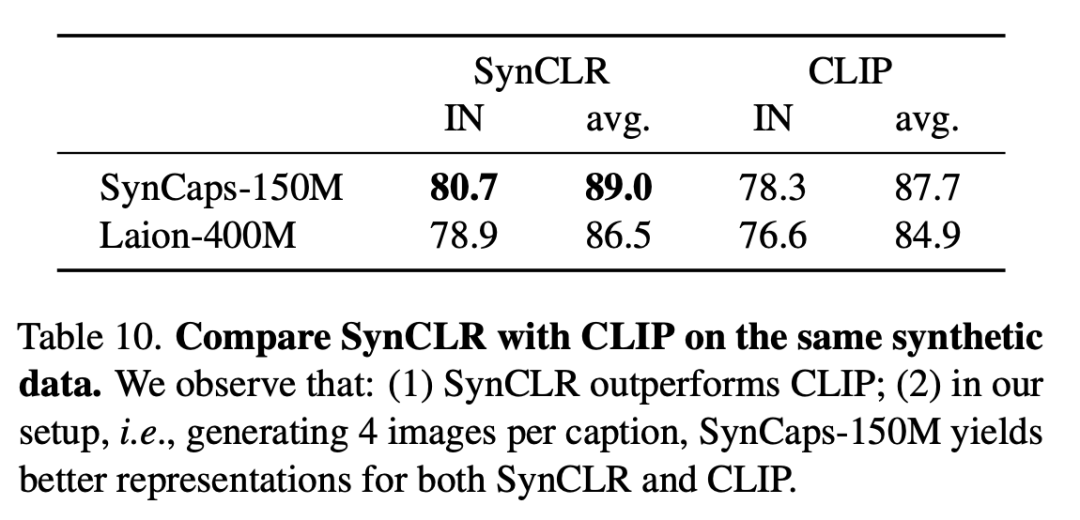

The following table compares SynCLR and CLIP on the same synthetic data. It can be seen that SynCLR is significantly better than CLIP.

In this setting, 4 images are generated per description. SynCaps-150M provides better representations for both SynCLR and CLIP.

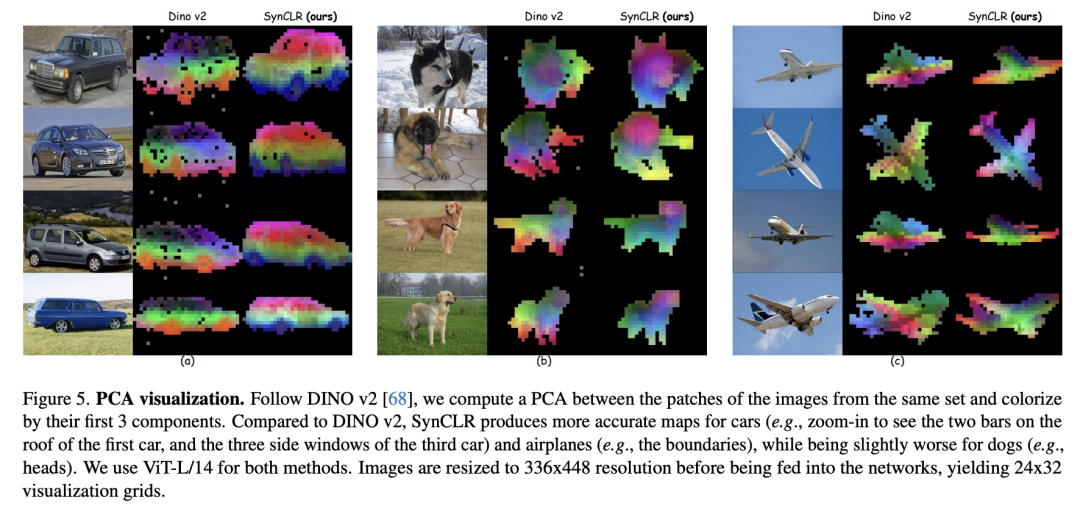

PCA visualization is as follows. Following DINO v2, the researchers calculated PCA between patches of the same set of images and colored them based on their first 3 components.

Compared with DINO v2, SynCLR produces more accurate maps for cars and airplanes, but is slightly worse in some other cases.

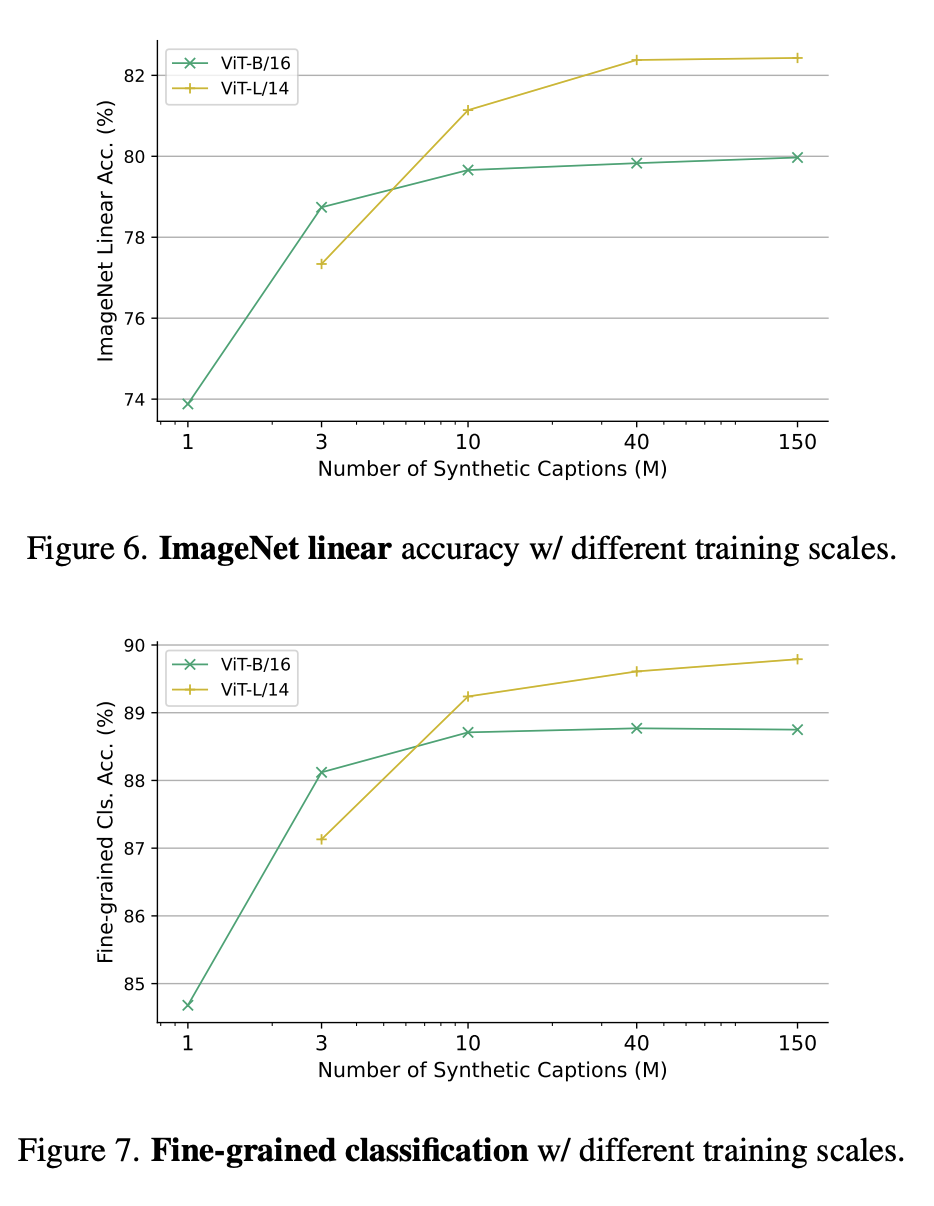

Figure 6 and Figure 7 show, respectively, ImageNet linear accuracy at different training scales and fine-grained classification at different training parameter scales.

Why learn from generative models?

One compelling reason is that a generative model can act like hundreds of datasets simultaneously, providing a convenient and efficient way to curate training data.

In summary, the latest paper investigates a new paradigm of visual representation learning - learning from generative models.

Without using any actual data, SynCLR learns visual representations that are comparable to those learned by state-of-the-art general-purpose visual representation learners.
