FATE 2.0 released: realizing interconnection of heterogeneous federated learning systems


FATE 2.0: a comprehensive upgrade to promote large-scale application of privacy computing and federated learning

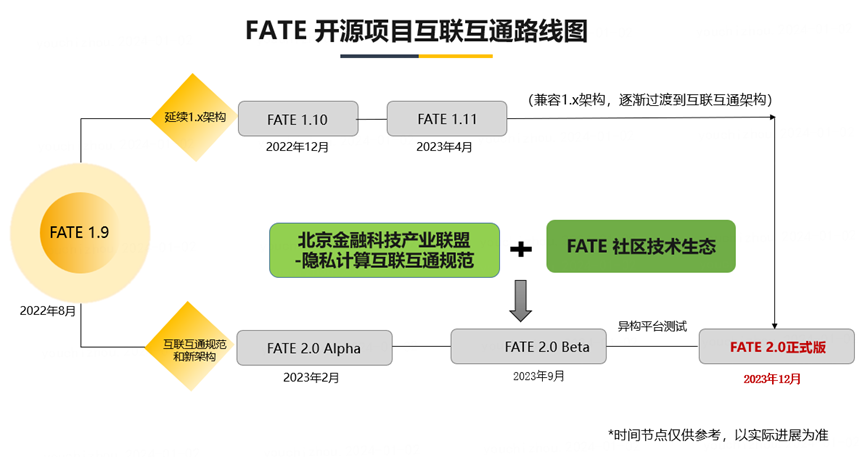

The FATE open source platform, a leading industrial-grade open source framework for federated learning, has announced the release of FATE 2.0. This update enables interconnection between heterogeneous federated learning systems and continues to strengthen the interconnection capabilities of privacy computing platforms, further promoting large-scale application of federated learning and privacy computing.

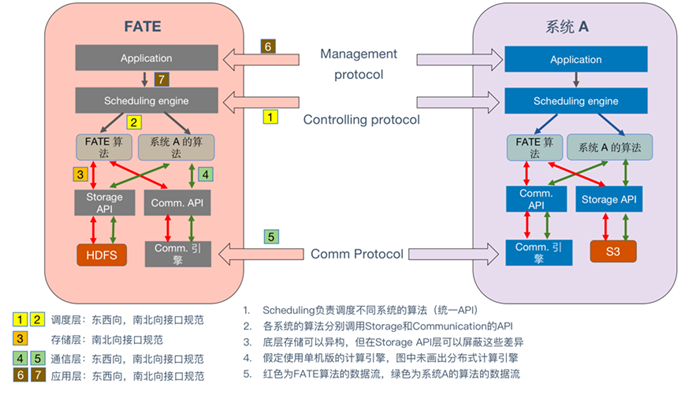

FATE 2.0 takes comprehensive interoperability as its design concept. Using an open source approach, it reworks four layers (application, scheduling, communication, and heterogeneous computing (algorithms)) to achieve heterogeneous interoperability between systems, between systems and algorithms, and between algorithms.

The design of FATE 2.0 is compatible with the "Financial Industry Privacy Computing Interoperability API Technical Document" [3] and other industry standards. Before release, FATE 2.0 completed interconnection and interoperability verification with multiple heterogeneous privacy computing platforms. The Beijing Financial Technology Industry Alliance recently stated in a published document that "the research team joined forces with the FATE open source community and leading technology companies to complete five-party, cross-platform, cross-algorithm interoperability joint debugging, which verified the feasibility and security of the interface documents in supporting the interoperability of multi-party heterogeneous platforms".

Visit the following URL to get version 2.0 of FATE:

https://www.php.cn/link/99113167f3b816bdeb56ff1af6cec7af

FATE 2.0 Highlight Overview

- Application layer interconnection: Build a standard, extensible federated DSL that supports application layer interconnection; a unified DSL adapts to the task descriptions of multiple heterogeneous privacy computing platforms

- Scheduling layer interconnection: Decouple system modules at multiple levels to build an open, standardized interconnection and interoperability scheduling platform that supports task scheduling between multiple heterogeneous privacy computing platforms

- Transmission layer cross-site interconnection: Build open cross-site interconnection communication components that support multiple transmission modes and multiple communication protocols, adapt to data transmission between multiple heterogeneous privacy computing platforms, and improve transmission efficiency and system stability

- Federated heterogeneous computing interconnection: Build distributed plaintext and ciphertext Tensor/DataFrame structures and decouple HE, MPC, and other security protocols from federated algorithm protocols, facilitating the interconnection of federated heterogeneous computing engines

- Core algorithm migration and expansion, with significantly improved algorithm development experience and performance: The distributed plaintext and ciphertext Tensor/DataFrame programming model is used to migrate and extend the core algorithms. Core algorithm performance improves as well: the PSI (private set intersection) algorithm is 1.8 times faster, the vertical federated SSHE-LR algorithm is 4.3 times faster, the vertical federated neural network algorithm is 143 times faster, and so on.

Schematic diagram of the overall FATE 2.0 interconnection architecture

FATE 2.0 Feature List

FATE-Client 2.0: Building a scalable federated DSL to support application layer interconnection

1. Introduce a new scalable and standardized federated DSL IR, that is, a standardized intermediate representation of the federated modeling process DSL

2. Support compiling Python client federated modeling process code into DSL IR

3. DSL IR protocol extension enhancement: support multi-party asymmetric scheduling

4. Support conversion between FATE's standardized federated DSL IR and other protocols, such as mutual conversion with the Beijing Financial Technology Industry Alliance interoperability (BFIA) protocol

5. Complete the migration of Flow CLI and Flow SDK functions
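To make the idea of compiling client code into a DSL IR more concrete, here is a minimal sketch. The `Task` and `Pipeline` classes, the `to_ir` method, and the dictionary layout are all hypothetical illustrations invented for this example; they are not FATE-Client's actual API or the real DSL IR schema.

```python
from dataclasses import dataclass, field
import json


@dataclass
class Task:
    """One step of a federated modeling flow (hypothetical structure)."""
    name: str
    component: str
    parties: list                      # roles that run this task
    depends_on: list = field(default_factory=list)


@dataclass
class Pipeline:
    """Collects tasks in Python and compiles them to a plain-dict IR."""
    tasks: list = field(default_factory=list)

    def add(self, task):
        self.tasks.append(task)
        return self                    # allow chained calls

    def to_ir(self):
        # The IR is plain data, so a scheduler or a protocol converter
        # can consume it without importing any client code.
        return {
            "tasks": {
                t.name: {
                    "component": t.component,
                    "parties": list(t.parties),
                    "dependencies": list(t.depends_on),
                }
                for t in self.tasks
            }
        }


pipeline = (
    Pipeline()
    .add(Task("psi_0", "psi", ["guest", "host"]))
    # Asymmetric scheduling: in this example only the guest runs evaluation.
    .add(Task("eval_0", "evaluation", ["guest"], depends_on=["psi_0"]))
)
ir = pipeline.to_ir()
print(json.dumps(ir, indent=2))
```

Because the IR in this sketch is ordinary serializable data, converting it to another protocol's job description would amount to a dictionary-to-dictionary mapping, which is one plausible reason to standardize on an intermediate layer.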

FATE-Flow 2.0: Building an open and standardized interconnection scheduling platform

1. Adapt to the scalable and standardized FATE 2.0 federated DSL IR

2. Build an interconnection scheduling layer framework and support other protocols through adapters, such as the control layer interface defined in the "Privacy Computing Interoperability API Technical Document"

3. Optimize process scheduling, the scheduling logic is decoupled and customizable, and priority scheduling is added

4. Optimize algorithm component scheduling, support container-level algorithm loading, and improve support for cross-platform heterogeneous scenarios

5. Optimize multi-version algorithm component registration and support registration of component running modes

6. Federated DSL IR extension enhancement: supports multi-party asymmetric scheduling

7. Optimize client authentication logic and support permission management of multiple clients

8. Optimize the RESTful interface so that input parameter fields and types, return fields, and status codes are clearer

9. Added OFX (Open Flow Exchange) module: encapsulates the scheduling client, allowing cross-platform scheduling

10. Support the new communication engine OSX while remaining compatible with all engines in FATE Flow 1.x

11. The system layer and algorithm layer are decoupled, and the system configuration is moved from the FATE repository to the Flow repository

12. Released the FATE Flow package in PyPI, and added a service-level CLI for service management

13. Complete the migration of the main 1.x functions
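Two items in the list above, priority scheduling and protocol support through adapters, can be sketched in a few lines. This is an illustrative sketch only: `PriorityScheduler`, `ADAPTERS`, and the job field names are hypothetical and do not reflect FATE-Flow's internal classes or any real protocol's field names.

```python
import heapq
import itertools


class PriorityScheduler:
    """Serves higher-priority jobs first; ties are served in submission order."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()   # tie-breaker that keeps FIFO order

    def submit(self, job_id, priority=0):
        # heapq is a min-heap, so the priority is negated to pop the largest first.
        heapq.heappush(self._heap, (-priority, next(self._counter), job_id))

    def next_job(self):
        if not self._heap:
            return None
        _, _, job_id = heapq.heappop(self._heap)
        return job_id


def to_native(job):
    """Native jobs pass through unchanged."""
    return dict(job)


def to_external(job):
    """Hypothetical field renaming for an external platform's job format."""
    return {"jobId": job["job_id"], "level": job["priority"]}


# Adapter registry: the scheduling core stays the same, only the adapter differs.
ADAPTERS = {"native": to_native, "external": to_external}

scheduler = PriorityScheduler()
scheduler.submit("job_a", priority=1)
scheduler.submit("job_b", priority=5)
scheduler.submit("job_c", priority=5)
order = [scheduler.next_job() for _ in range(3)]
print(order)
print(ADAPTERS["external"]({"job_id": "job_b", "priority": 5}))
```

Keeping protocol translation in small adapter functions means the scheduling logic does not need to know which platform a job is destined for.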

OSX (Open Site Exchange) 1.0: Building open cross-site interconnection and communication components

- Implements the interconnection transmission interface with reference to the "Financial Industry Privacy Computing Interoperability API Technical Document" released by the Beijing Financial Technology Industry Alliance; the transmission interface is compatible with FATE 1.X and FATE 2.X

- Supports gRPC synchronous and streaming transmission and the TLS secure transmission protocol; compatible with the FATE 1.X rollsite component

- Supports HTTP 1.X transmission with the TLS secure transmission protocol

- Supports message queue mode transmission, replacing the rabbitmq and pulsar components in FATE 1.X

- Supports the eggroll and spark computing engines

- Supports networking as an Exchange component, with access from both FATE 1.X and FATE 2.X

- Compared with the rollsite component, improves exception handling logic during transmission and provides more accurate log output for quickly locating exceptions

- Routing configuration is basically the same as the original rollsite, reducing migration effort

- Supports modifying the routing table through an HTTP interface, with simple permission verification

- Improves network connection management logic, reducing the risk of connection leaks and improving transmission efficiency

- Uses different ports to handle access requests from inside and outside the cluster, so that different security policies can be applied to different ports
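The routing-table items above (lookup by party, updates guarded by simple permission verification) can be illustrated with a small sketch. The table layout, the `resolve` and `update_route` functions, and the shared-token check are assumptions made for this example; they are not OSX's actual configuration format or HTTP interface.

```python
import hmac

# Hypothetical routing table: party id -> endpoint, with a default
# entry that forwards unknown parties to an exchange node.
ROUTE_TABLE = {
    "10000": {"host": "192.0.2.10", "port": 9370},
    "9999": {"host": "192.0.2.20", "port": 9370},
    "default": {"host": "192.0.2.1", "port": 9370},
}


def resolve(party_id, table=ROUTE_TABLE):
    """Return (host, port) for a party, falling back to the default route."""
    entry = table.get(str(party_id), table.get("default"))
    if entry is None:
        raise KeyError(f"no route for party {party_id}")
    return entry["host"], entry["port"]


def update_route(table, party_id, host, port, token, expected_token):
    """Modify the table only when the caller presents the expected token."""
    # compare_digest avoids leaking how many characters matched.
    if not hmac.compare_digest(token, expected_token):
        raise PermissionError("invalid token")
    table[str(party_id)] = {"host": host, "port": int(port)}


table = dict(ROUTE_TABLE)
update_route(table, 8888, "192.0.2.30", 9370, token="s3cret", expected_token="s3cret")
print(resolve("10000", table))
print(resolve(8888, table))
print(resolve(12345, table))   # unknown party falls back to the default route
```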

FATE-Arch 2.0: Building a unified and standardized API to facilitate the interconnection of federated heterogeneous computing engines

- Context: Introduces "Context" to manage developer-friendly APIs such as "distributed computing", "federated learning", "encryption algorithms", "tensor operations", "metrics", and "input and output management"

- Tensor: Introduces the Tensor data structure to handle local and distributed matrix operations, with built-in support for heterogeneous acceleration; the optimized PHETensor abstraction layer allows free switching among multiple underlying PHE implementations through a standard interface

- DataFrame: Introduces "DataFrame", a two-dimensional tabular data structure used for data input and output and basic feature engineering. The new data block manager supports multi-type column management and feature anonymization logic; 30 new operator interfaces cover statistics, comparison, indexing, data binning, transformation, and more

- Refactored Federation: Provides a unified federation communication interface, including unified serialization/deserialization control and a friendlier API

- Config: Provides unified configuration settings for FATE, including security configuration, system configuration, and algorithm configuration

- Refactored "logger": Customizes logging details according to different usage patterns and needs

- Launcher: A simplified federated program execution tool, especially suitable for standalone operation and local debugging

- Protocol layer: Supports SSHE (a hybrid protocol combining secure multi-party computation and homomorphic encryption), ECDH, and the secure aggregation protocol

- Integration of DeepSpeed: Supports training scheduling on distributed GPU clusters through Eggroll

- Experimental integration of CrypTen: Supports SMPC; more protocols and functions will be added in the future
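The idea of reaching different PHE implementations through one standard interface can be sketched as follows. The `PHEBackend` interface and `encrypted_sum` helper are hypothetical names chosen for this example, not FATE-Arch classes. `ToyPaillier` is a textbook Paillier scheme with tiny fixed primes, included only to show additive homomorphism; it is not secure and is not how FATE implements PHE.

```python
import math
import secrets
from abc import ABC, abstractmethod


class PHEBackend(ABC):
    """Standard interface that algorithm code programs against."""

    @abstractmethod
    def encrypt(self, m): ...

    @abstractmethod
    def decrypt(self, c): ...

    @abstractmethod
    def add(self, c1, c2): ...


class ToyPaillier(PHEBackend):
    """Textbook Paillier with g = n + 1 and small fixed primes (demo only)."""

    def __init__(self, p=2**31 - 1, q=2**61 - 1):
        self.n = p * q
        self.n2 = self.n * self.n
        # lam = lcm(p - 1, q - 1); mu is its inverse modulo n.
        self.lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)
        self.mu = pow(self.lam, -1, self.n)

    def encrypt(self, m):
        while True:
            r = secrets.randbelow(self.n - 1) + 1
            if math.gcd(r, self.n) == 1:
                break
        # (n + 1)^m mod n^2 equals 1 + m*n, which avoids one exponentiation.
        return (1 + m * self.n) * pow(r, self.n, self.n2) % self.n2

    def decrypt(self, c):
        x = pow(c, self.lam, self.n2)
        return (x - 1) // self.n * self.mu % self.n

    def add(self, c1, c2):
        # Multiplying ciphertexts adds the underlying plaintexts.
        return c1 * c2 % self.n2


def encrypted_sum(backend, values):
    """Uses only the interface, so any conforming backend can be swapped in."""
    total = backend.encrypt(0)
    for v in values:
        total = backend.add(total, backend.encrypt(v))
    return backend.decrypt(total)


print(encrypted_sum(ToyPaillier(), [3, 4, 35]))
```

Because `encrypted_sum` touches only `encrypt`, `decrypt`, and `add`, replacing `ToyPaillier` with a hardware-accelerated or differently parameterized backend would not require changing the algorithm code.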

FATE-Component 2.0: Building standardized algorithm components adapted to different scheduling engines

- Introduces the component toolbox: encapsulates machine learning modules as standard executable programs

- Provides a clear API through spec and loader to facilitate internal extension and integration with external systems

- Input and output: further decoupled from FATE-Flow, providing a standardized black-box calling process

- Component definition: supports type-based definitions, automatically checks component parameters, and supports multiple data and model input and output types as well as multiple inputs

FATE-ML 2.0: Core algorithm migration and extension, with significantly improved algorithm development experience and performance

- The distributed plaintext and ciphertext Tensor/DataFrame programming model is adopted to migrate and extend the core algorithms:

- Data preprocessing: adds DataFrame Transformer and completes the migration of Reader, PSI, Union, and DataSplit

- Feature engineering: completes the migration of HeteroFederatedBinning, HeteroFeatureSelection, DataStatistics, Sampling, FeatureScale, and Pearson Correlation

- Federated training algorithm migration: includes HeteroSecureBoost, HomoNN, HeteroCoordinatedLogisticRegression, HeteroCoordinatedLinearRegression, SSHE-LogisticRegression, and SSHE-LinearRegression

- New federated training algorithms: SSHE-HeteroNN, based on a hybrid MPC and homomorphic encryption protocol, and FedPASS-HeteroNN, based on the FedPASS protocol

- Performance is significantly improved:

- PSI (private set intersection): tested on a data set of 100 million IDs with an intersection result of 100 million, the performance is 1.8 times that of FATE-1.11

- Vertical federated binning algorithm: tested on data with 100,000 rows × 30 features on the guest and 100,000 rows × 300 features on the host, the performance is 1.5 times that of FATE-1.11

- Vertical federated SSHE-LR algorithm: tested on data with 100,000 rows × 30 features on the guest and 100,000 rows × 300 features on the host, the performance is 4.3 times that of FATE-1.11

- Vertical federated LR algorithm with coordinator: tested on data with 100,000 rows × 30 features on the guest and 100,000 rows × 300 features on the host, the performance is 1.2 times that of FATE-1.11

- Vertical federated neural network (based on the FedPASS protocol): tested on data with 100,000 rows × 30 features on the guest and 100,000 rows × 300 features on the host, model quality is basically consistent with plaintext training, and the performance is 143 times that of FATE-1.11
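For readers unfamiliar with what the PSI benchmark computes, the following sketch shows the classic Diffie-Hellman-style approach to private set intersection. It is a toy under stated assumptions: it uses modular exponentiation over a Mersenne prime instead of elliptic curves, it simulates both parties inside one function, and the names `hash_to_group`, `blind`, and `dh_psi` are hypothetical. It is not FATE's PSI implementation and is not secure for real use.

```python
import hashlib
import secrets

P = 2**127 - 1   # a Mersenne prime used as a toy modulus


def hash_to_group(item):
    """Map an identifier to a nonzero value modulo P."""
    digest = hashlib.sha256(item.encode("utf-8")).digest()
    return int.from_bytes(digest, "big") % (P - 1) + 1


def blind(values, secret):
    """Raise every value to a party's secret exponent."""
    return [pow(v, secret, P) for v in values]


def dh_psi(guest_ids, host_ids):
    """Return the guest's ids that also appear on the host side."""
    a = secrets.randbelow(P - 3) + 2   # guest's secret exponent
    b = secrets.randbelow(P - 3) + 2   # host's secret exponent

    # Each party blinds its own hashed ids and sends them to the other.
    guest_once = blind([hash_to_group(i) for i in guest_ids], a)
    host_once = blind([hash_to_group(i) for i in host_ids], b)

    # Each party applies its secret to what it received. Exponentiation
    # commutes, so a shared id yields the same value H(id)^(a*b) on both sides.
    guest_twice = blind(guest_once, b)
    host_twice = set(blind(host_once, a))

    return [i for i, v in zip(guest_ids, guest_twice) if v in host_twice]


print(dh_psi(["alice", "bob", "carol"], ["carol", "dave", "alice"]))
```

Neither side ever sees the other's raw identifiers in this scheme, only blinded values, which is why the cost is dominated by exponentiations and why PSI throughput is a meaningful benchmark.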

Eggroll 3.0: Comprehensive enhancements to system performance, availability and reliability

1. JVM enhancement

- Core component reconstruction: the cluster-manager and node-manager components are fully rebuilt in Java to ensure uniformity and improve performance

- Transmission component modification: the rollsite transmission component is removed and replaced with the more efficient osx component

- Process management improvement: more advanced process management logic is implemented to reduce the risk of process leakage

- Data storage logic enhancement: the data storage mechanism is optimized to improve performance and reliability

- Concurrency control improvement: the existing concurrency control logic in the components is upgraded to improve performance

- Visual component: a new visual component is added to facilitate monitoring of calculation information

- Log improvement: the log system is enhanced so that output is more accurate, helping to locate exceptions quickly

2. Python upgrade

- roll_pair and egg_pair refactoring: supports caller-controlled serialization and partitioning methods; serialization security is managed uniformly by the caller

- Automatic cleaning of intermediate tables: intermediate tables produced between federation and computation are cleaned up automatically, with no additional operations required from the caller

- Unified configuration control: introduces a flexible configuration system that supports direct passing, configuration files, and environment variables to meet diverse needs

- Client PyPI installation: the Eggroll 3.0.0 client can be installed through PyPI

- Log configuration optimization: the caller can customize the log format as needed

- Code structure adjustment: the code base is streamlined, its structure and logic are clearer, and a large amount of redundant code is removed
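A configuration system that accepts direct passing, configuration files, and environment variables needs a rule for which source wins. The sketch below shows one common arrangement. The `load_config` function, the `APP_` prefix, the JSON file format, and the precedence order (defaults, then file, then environment, then direct arguments) are all assumptions for illustration; the source does not state how Eggroll resolves conflicts.

```python
import json
import os


def load_config(defaults, file_path=None, env_prefix="APP_", overrides=None, environ=None):
    """Merge settings; later sources win: defaults < file < environment < overrides."""
    environ = os.environ if environ is None else environ
    config = dict(defaults)

    # Layer 2: values from an optional JSON configuration file.
    if file_path is not None:
        with open(file_path, "r", encoding="utf-8") as fh:
            config.update(json.load(fh))

    # Layer 3: environment variables named <prefix><KEY>.
    for key in list(config):
        env_name = env_prefix + key.upper()
        if env_name not in environ:
            continue
        raw = environ[env_name]
        current = config[key]
        # Environment values are strings, so cast them to the existing type.
        if isinstance(current, bool):
            config[key] = raw.strip().lower() in ("1", "true", "yes")
        elif isinstance(current, (int, float)):
            config[key] = type(current)(raw)
        else:
            config[key] = raw

    # Layer 4: values passed directly by the caller.
    config.update(overrides or {})
    return config


cfg = load_config(
    {"port": 4670, "log_level": "INFO", "tls": False},
    environ={"APP_PORT": "9000", "APP_TLS": "true"},
    overrides={"log_level": "DEBUG"},
)
print(cfg)
```

Passing `environ` explicitly, as done here, keeps the example deterministic; omitting it makes the function read the real process environment.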

Gathering the power of open source to help the development of the privacy computing industry

Cross-industry and cross-institutional data integration is widely needed in finance, telecommunications, healthcare, government affairs, advertising and marketing, smart cities, and many other scenarios. Privacy computing has become a powerful tool for breaking down data barriers between industries, and interconnection is the whetstone that lets this tool reach its full potential. FATE 2.0 provides an open source framework for interconnection and interoperability, addressing a major pain point in the industry. Most privacy computing platforms can interact and integrate with heterogeneous systems by implementing the open interoperability interfaces.

The launch of FATE 2.0 provides strong support for interconnection between heterogeneous platforms, and its continuous iteration shows a commitment to ongoing technical improvement. It concerns not only data privacy protection but also the development of the entire industry. In this process, privacy computing industry users and technology partners have more opportunities to participate. Through the joint efforts of the community, we can better address the challenges of data security and privacy protection and lay a solid foundation for building a more secure and reliable digital society. The release of FATE 2.0 opens a new chapter of industry cooperation and mutual benefit. We look forward to more innovators and practitioners joining in to jointly promote the vigorous development of privacy computing technology.

The above is the detailed content of FATE 2.0 released: realizing interconnection of heterogeneous federated learning systems. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1387

1387

52

52

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

It is also a Tusheng video, but PaintsUndo has taken a different route. ControlNet author LvminZhang started to live again! This time I aim at the field of painting. The new project PaintsUndo has received 1.4kstar (still rising crazily) not long after it was launched. Project address: https://github.com/lllyasviel/Paints-UNDO Through this project, the user inputs a static image, and PaintsUndo can automatically help you generate a video of the entire painting process, from line draft to finished product. follow. During the drawing process, the line changes are amazing. The final video result is very similar to the original image: Let’s take a look at a complete drawing.

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com The authors of this paper are all from the team of teacher Zhang Lingming at the University of Illinois at Urbana-Champaign (UIUC), including: Steven Code repair; Deng Yinlin, fourth-year doctoral student, researcher

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com In the development process of artificial intelligence, the control and guidance of large language models (LLM) has always been one of the core challenges, aiming to ensure that these models are both powerful and safe serve human society. Early efforts focused on reinforcement learning methods through human feedback (RL

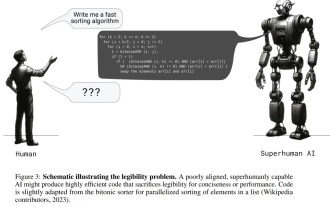

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

If the answer given by the AI model is incomprehensible at all, would you dare to use it? As machine learning systems are used in more important areas, it becomes increasingly important to demonstrate why we can trust their output, and when not to trust them. One possible way to gain trust in the output of a complex system is to require the system to produce an interpretation of its output that is readable to a human or another trusted system, that is, fully understandable to the point that any possible errors can be found. For example, to build trust in the judicial system, we require courts to provide clear and readable written opinions that explain and support their decisions. For large language models, we can also adopt a similar approach. However, when taking this approach, ensure that the language model generates

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

Recently, the Riemann Hypothesis, known as one of the seven major problems of the millennium, has achieved a new breakthrough. The Riemann Hypothesis is a very important unsolved problem in mathematics, related to the precise properties of the distribution of prime numbers (primes are those numbers that are only divisible by 1 and themselves, and they play a fundamental role in number theory). In today's mathematical literature, there are more than a thousand mathematical propositions based on the establishment of the Riemann Hypothesis (or its generalized form). In other words, once the Riemann Hypothesis and its generalized form are proven, these more than a thousand propositions will be established as theorems, which will have a profound impact on the field of mathematics; and if the Riemann Hypothesis is proven wrong, then among these propositions part of it will also lose its effectiveness. New breakthrough comes from MIT mathematics professor Larry Guth and Oxford University

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

cheers! What is it like when a paper discussion is down to words? Recently, students at Stanford University created alphaXiv, an open discussion forum for arXiv papers that allows questions and comments to be posted directly on any arXiv paper. Website link: https://alphaxiv.org/ In fact, there is no need to visit this website specifically. Just change arXiv in any URL to alphaXiv to directly open the corresponding paper on the alphaXiv forum: you can accurately locate the paragraphs in the paper, Sentence: In the discussion area on the right, users can post questions to ask the author about the ideas and details of the paper. For example, they can also comment on the content of the paper, such as: "Given to

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com. Introduction In recent years, the application of multimodal large language models (MLLM) in various fields has achieved remarkable success. However, as the basic model for many downstream tasks, current MLLM consists of the well-known Transformer network, which

Axiomatic training allows LLM to learn causal reasoning: the 67 million parameter model is comparable to the trillion parameter level GPT-4

Jul 17, 2024 am 10:14 AM

Axiomatic training allows LLM to learn causal reasoning: the 67 million parameter model is comparable to the trillion parameter level GPT-4

Jul 17, 2024 am 10:14 AM

Show the causal chain to LLM and it learns the axioms. AI is already helping mathematicians and scientists conduct research. For example, the famous mathematician Terence Tao has repeatedly shared his research and exploration experience with the help of AI tools such as GPT. For AI to compete in these fields, strong and reliable causal reasoning capabilities are essential. The research to be introduced in this article found that a Transformer model trained on the demonstration of the causal transitivity axiom on small graphs can generalize to the transitive axiom on large graphs. In other words, if the Transformer learns to perform simple causal reasoning, it may be used for more complex causal reasoning. The axiomatic training framework proposed by the team is a new paradigm for learning causal reasoning based on passive data, with only demonstrations

The launch of FATE 2.0

The launch of FATE 2.0