XVERSE-Long-256K, the world's longest-context open-source model, is free for commercial use without conditions

Yuanxiang has released XVERSE-Long-256K, the world's first open-source large model with a 256K context window. The model accepts inputs of up to 250,000 Chinese characters, bringing large-model applications into the "long-text era." It is fully open source and free for commercial use without any conditions, and it comes with detailed step-by-step training tutorials, so that large numbers of small and medium-sized enterprises, researchers, and developers can achieve "large-model freedom" sooner.

Map of mainstream long-context large models worldwide

Parameter count and the volume of high-quality data determine a large model's computational complexity, while long-context technology (Long Context) is the "trump card" for developing large-model applications. Because the technology is new and difficult to research and develop, most long-context models are currently closed-source and paid.

XVERSE-Long-256K supports ultra-long text input and can be used for large-scale data analysis, multi-document reading comprehension, and cross-domain knowledge integration, extending both the depth and the breadth of large-model applications:

1. Lawyers, financial analysts, consultants, prompt engineers, researchers, and others can analyze and process much longer texts.

2. In role-playing or chat applications, it alleviates the model "forgetting" earlier dialogue, as well as "hallucination" problems such as talking nonsense.

3. It better supports AI agents that plan and make decisions based on historical information.

4. It helps AI-native applications maintain a coherent, personalized user experience.
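Item 2 above comes down to how much earlier dialogue still fits in the context window. As a hedged illustration (not Yuanxiang's code), the sketch below keeps only the most recent turns that fit a token budget, so anything older is "forgotten". The whitespace-based `count_tokens` is a hypothetical stand-in for a real tokenizer, and the 4,000 and 256,000 budgets are illustrative assumptions.

```python
from typing import List


def count_tokens(text: str) -> int:
    """Hypothetical token counter: one token per whitespace-separated word.

    A real deployment would use the model's own tokenizer instead.
    """
    return len(text.split())


def fit_history(turns: List[str], budget: int) -> List[str]:
    """Keep the most recent dialogue turns whose total token count fits `budget`.

    Turns are scanned from newest to oldest; the first turn that would
    overflow the budget stops the scan, so all older turns are dropped.
    """
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):
        cost = count_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


if __name__ == "__main__":
    # 1,000 turns of 50 words each: 50,000 "tokens" of history in total.
    history = [" ".join(["word"] * 50) for _ in range(1000)]
    short_window = fit_history(history, budget=4_000)
    long_window = fit_history(history, budget=256_000)
    print(len(short_window), len(long_window))  # 80 1000
```

With the small budget only the last 80 turns survive, while the long budget keeps the entire conversation; real systems often add summarization or retrieval on top, which this sketch omits.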

With this release, XVERSE-Long-256K fills a gap in the open-source ecosystem. Together with Yuanxiang's earlier 7-billion-, 13-billion-, and 65-billion-parameter models, it forms a "high-performance family bucket" (a full model lineup), bringing domestic open-source models up to an internationally first-class level.

Yuanxiang large model series

Free download of Yuanxiang large models

- GitHub: https://github.com/xverse-ai/XVERSE-13B-256K

- Send inquiries to: opensource@xverse.cn

- Users can log in to the large model's official website (chat.xverse.cn) or its mini program to try XVERSE-Long-256K right away.

High-performance positioning

Excellent evaluation performance

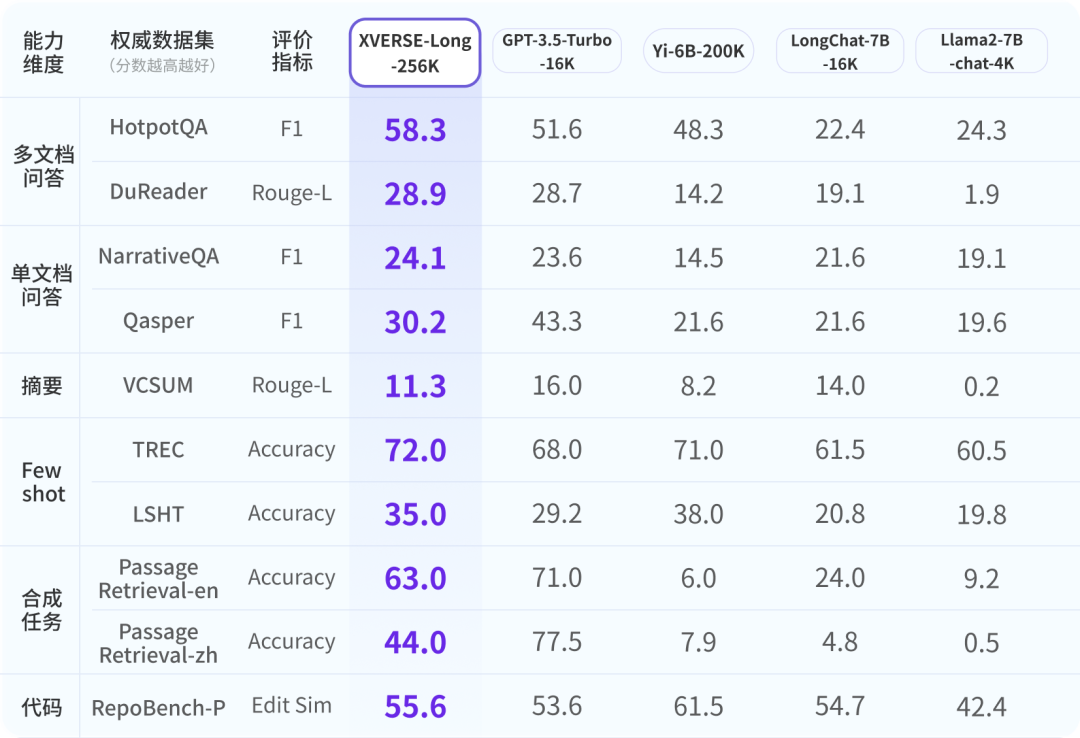

To give the industry a comprehensive, objective, and long-term picture of Yuanxiang's large models, the researchers drew on authoritative industry benchmarks and built a comprehensive evaluation system of nine tests across six dimensions. XVERSE-Long-256K performs well on all of them, outperforming other long-context models.

Evaluation results of mainstream open-source long-context models worldwide

XVERSE-Long-256K also passed "needle in a haystack", a common stress test for long-context models. The test hides a sentence unrelated to the surrounding content somewhere in a long text corpus, then asks the model a natural-language question that requires it to extract that sentence accurately.
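The needle-in-a-haystack procedure described above can be sketched as a small harness. This is a hypothetical illustration, not Yuanxiang's evaluation code: the filler sentences, the needle, the depth grid, and the `ask_model` callable (a stand-in for a real model call) are all assumptions, and scoring is a simple case-insensitive substring check.

```python
from typing import Callable, Dict, List


def build_haystack(filler: List[str], needle: str, depth: float) -> str:
    """Insert `needle` into the filler sentences at a relative depth in [0, 1].

    depth=0.0 places it at the very start, depth=1.0 at the very end.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    pos = round(depth * len(filler))
    sentences = filler[:pos] + [needle] + filler[pos:]
    return " ".join(sentences)


def run_needle_test(
    ask_model: Callable[[str, str], str],
    filler: List[str],
    needle: str,
    question: str,
    expected: str,
    depths: List[float],
) -> Dict[float, bool]:
    """For each depth, report whether the model's answer contains `expected`."""
    results: Dict[float, bool] = {}
    for depth in depths:
        context = build_haystack(filler, needle, depth)
        answer = ask_model(context, question)
        results[depth] = expected.lower() in answer.lower()
    return results


if __name__ == "__main__":
    filler = [f"This is unrelated filler sentence number {i}." for i in range(1000)]
    needle = "The secret ingredient of the cake is cardamom."
    question = "What is the secret ingredient of the cake?"

    # Hypothetical toy "model": it only finds the needle in the first half
    # of the context, mimicking recall that degrades deep into long inputs.
    def toy_model(context: str, q: str) -> str:
        idx = context.find("secret ingredient")
        return "cardamom" if 0 <= idx < len(context) // 2 else "I don't know"

    print(run_needle_test(toy_model, filler, needle, question, "cardamom",
                          [0.0, 0.25, 0.5, 0.75, 1.0]))
```

In practice the test is usually repeated over a grid of context lengths as well as depths, and the pass/fail results are plotted as a heat map.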

Novel

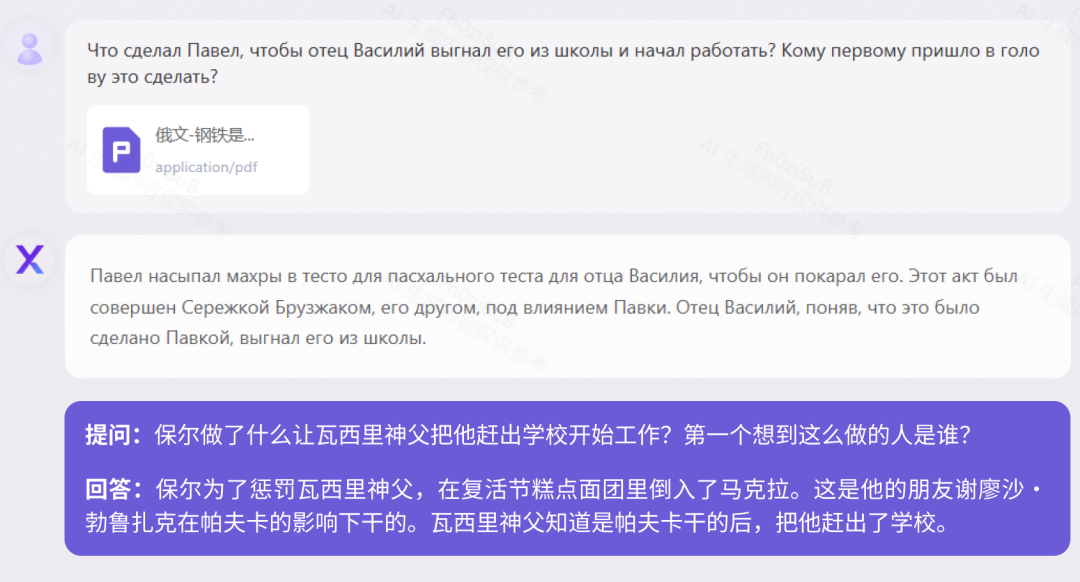

Reading ComprehensionAt present, most large-scale models in China only support Chinese and English bilingual, but Yuanxiang supports it More than 40 languages. We tested the 160,000-character Russian classic novel "How the Steel Was Tempered" and the 410,000-character German historical biography "When the Stars of Mankind Shine." "How Steel Was Tempered (Russian Version)" Test

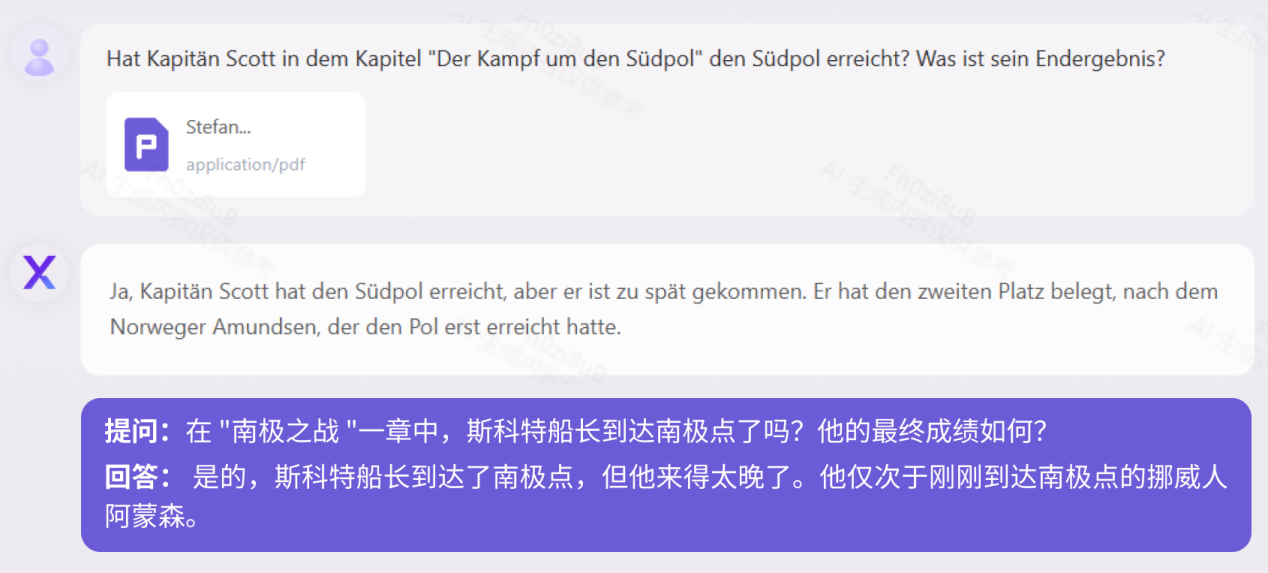

"When the Human Stars Shine (German Version)" Test

Legal Articles

Legal Articles

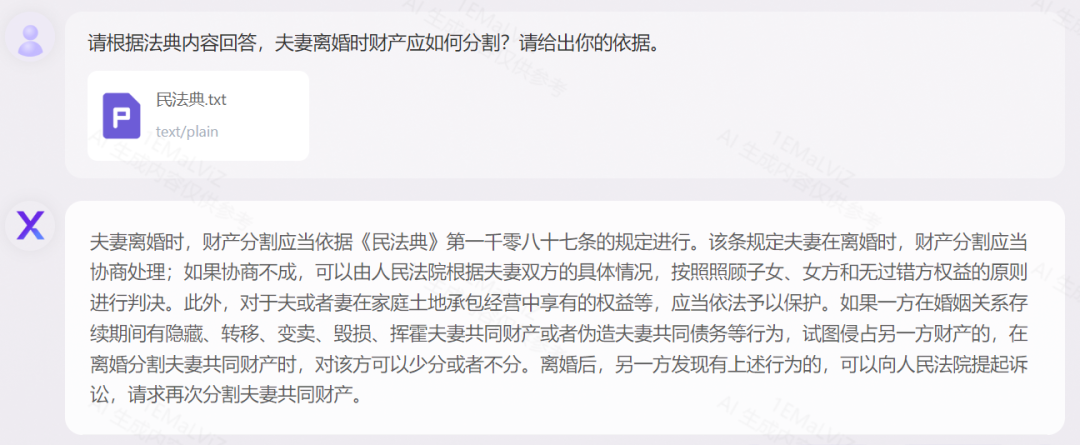

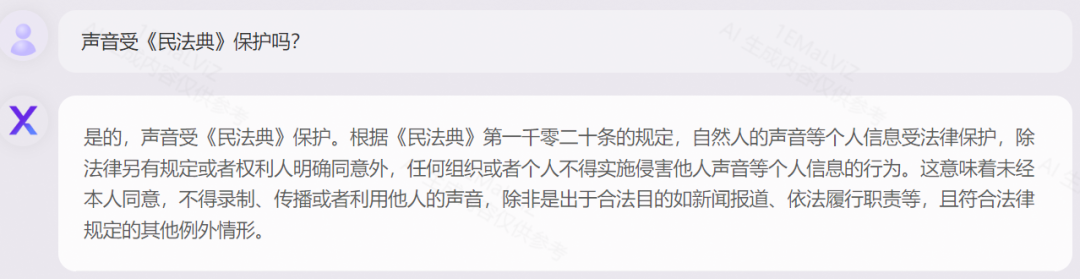

Accurate Application Take the "Civil Code of the People's Republic of China" as an example to show the explanation of legal terms and cases Carry out logical analysis and combine it with practical and flexible applications:

"Civil Code" test

## Step by step: how to train a long-context large model

1. Technical challenges

- Model training: GPU memory usage grows with the square of the sequence length, so the training workload rises sharply.

- Model structure: the longer the sequence, the more dispersed the model's attention becomes and the more easily the model forgets earlier content.

- Inference speed: the longer the sequence, the slower the model's inference.
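The three challenges above can be made concrete with rough arithmetic. This sketch assumes naive self-attention that materializes the full L×L score matrix for every head; memory-efficient kernels such as FlashAttention avoid storing that matrix, so the memory figure is an upper-bound illustration. The 40 heads, 5,120 hidden size, 2-byte (fp16/bf16) elements, and taking 256K as 262,144 tokens are illustrative assumptions, not a published XVERSE configuration. The uniform-softmax function is a deliberately simplified picture of "dispersed" attention: with equal logits, each position receives weight 1/L.

```python
import math


def attention_score_bytes(seq_len: int, num_heads: int, bytes_per_elem: int = 2) -> int:
    """Bytes for one layer's full attention-score matrices (batch size 1).

    Naive attention materializes a seq_len x seq_len matrix per head, so this
    grows with the square of the sequence length.
    """
    return num_heads * seq_len * seq_len * bytes_per_elem


def decode_attention_flops(context_len: int, hidden_dim: int) -> int:
    """Approximate multiply-adds for one new token attending over a KV cache.

    Scoring against context_len cached keys and mixing context_len cached
    values costs roughly 2 * context_len * hidden_dim per layer, so every
    generated token gets slower as the context grows.
    """
    return 2 * context_len * hidden_dim


def uniform_attention_weight(seq_len: int) -> float:
    """Softmax weight of each position when all attention logits are equal."""
    logits = [0.0] * seq_len
    normalizer = sum(math.exp(x) for x in logits)
    return math.exp(logits[0]) / normalizer


if __name__ == "__main__":
    heads, hidden = 40, 5120  # illustrative sizes, not a published config
    short, long_ = 4_096, 262_144
    for n in (short, long_):
        gib = attention_score_bytes(n, heads) / 2**30
        print(f"L={n:>7}: scores ~{gib:,.2f} GiB/layer, "
              f"uniform weight per token {uniform_attention_weight(n):.2e}")
    print(attention_score_bytes(long_, heads) // attention_score_bytes(short, heads))  # 4096
    print(decode_attention_flops(long_, hidden) // decode_attention_flops(short, hidden))  # 64
```

Going from 4K to 256K tokens multiplies the naive score-matrix memory by 64² = 4,096, while the attention cost of generating each new token grows 64-fold.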

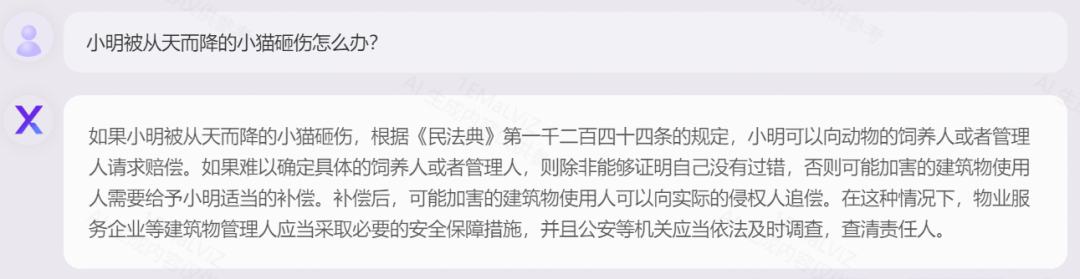

2. Yuanxiang's technical route

Long-context large-model technology has emerged only within the past year. The main existing approaches are:

- Pre-train directly on long sequences, which makes the training workload grow quadratically.

- Extend the sequence length by interpolating or extrapolating the positional encoding. This lowers the resolution of the positional encoding and thereby degrades the model's output quality.
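To see why interpolation "reduces the resolution" of positional encoding, the sketch below uses rotary position embedding (RoPE) with linear position interpolation: positions in a long target window are scaled by train_len / target_len so they fall back inside the trained range, which leaves neighbouring tokens only a fraction of a position apart. RoPE, the base of 10,000, the head dimension of 128, and the 4K/256K lengths are illustrative assumptions; the source does not say which encoding or lengths these methods use, and extrapolation is not shown.

```python
from typing import List


def rope_inv_freq(dim: int, base: float = 10_000.0) -> List[float]:
    """RoPE inverse frequencies theta_i = base^(-2i/dim) for i in [0, dim/2)."""
    return [base ** (-2 * i / dim) for i in range(dim // 2)]


def rope_angles(position: float, inv_freq: List[float]) -> List[float]:
    """Rotation angle applied to each RoPE frequency pair at `position`."""
    return [position * f for f in inv_freq]


def interpolate_position(position: int, train_len: int, target_len: int) -> float:
    """Linear position interpolation: map [0, target_len) onto [0, train_len)."""
    return position * train_len / target_len


if __name__ == "__main__":
    train_len, target_len = 4_096, 262_144  # illustrative lengths
    inv_freq = rope_inv_freq(dim=128)

    last = interpolate_position(target_len - 1, train_len, target_len)
    step = interpolate_position(1, train_len, target_len)
    print(f"last position maps to {last:.3f}, inside the trained range")
    print(f"adjacent tokens are now {step} positions apart")  # 0.015625

    # Angle gap between neighbouring tokens on the fastest-rotating pair:
    # 1.0 radian originally, 64x smaller after interpolation.
    print(rope_angles(1, inv_freq)[0] - rope_angles(0, inv_freq)[0],
          rope_angles(step, inv_freq)[0] - rope_angles(0, inv_freq)[0])
```

The model never sees a position outside its training range, but it must now tell apart tokens whose rotation angles differ 64 times less than before, which is the loss of resolution the text refers to.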

Yuanxiang's long-context model training process

First stage: continued pre-training with ABF
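ABF (Adjusted Base Frequency) generally refers to raising the base of the rotary position embedding (RoPE) and then continuing pre-training, which stretches the wavelengths of the rotary components instead of compressing positions as interpolation does. We assume Yuanxiang's ABF follows this general idea; the source does not give its details. The sketch compares the longest wavelength, 2π/θ_min, for the conventional base of 10,000 and a larger base; the value 1,000,000, the head dimension of 128, and the 262,144-token window are illustrative assumptions.

```python
import math
from typing import List


def rope_inv_freq(dim: int, base: float) -> List[float]:
    """RoPE inverse frequencies theta_i = base^(-2i/dim), i = 0 .. dim/2 - 1."""
    return [base ** (-2 * i / dim) for i in range(dim // 2)]


def wavelengths(dim: int, base: float) -> List[float]:
    """Number of positions each rotary pair needs to complete one full turn."""
    return [2 * math.pi / f for f in rope_inv_freq(dim, base)]


def pairs_covering(dim: int, base: float, length: int) -> int:
    """Count rotary pairs whose wavelength exceeds `length`.

    Such pairs never complete a full turn inside the window, so every
    position in the window gets a distinct angle on them.
    """
    return sum(1 for w in wavelengths(dim, base) if w > length)


if __name__ == "__main__":
    dim, window = 128, 262_144  # illustrative head dimension and 256K window
    for base in (10_000.0, 1_000_000.0):  # 1e6 is an illustrative ABF base
        longest = max(wavelengths(dim, base))
        covering = pairs_covering(dim, base, window)
        print(f"base={base:>11,.0f}: longest wavelength ~{longest:,.0f} positions, "
              f"{covering} of {dim // 2} pairs do not wrap within the window")
```

With the default base no rotary pair spans a 256K window, whereas the larger base gives several low-frequency pairs that do; continued pre-training then lets the model adapt to the new frequencies.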

- GitHub: https://github.com/xverse-ai/XVERSE-13B

- Hugging Face: https://huggingface.co/xverse/XVERSE-13B-256K

- ModelScope: https://modelscope.cn/models/xverse/XVERSE-13B-256K

- For inquiries, please send: opensource@xverse.cn

The above is the detailed content of The world's longest open source model XVERSE-Long-256K, which is unconditionally free for commercial use. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1392

1392

52

52

37

37

110

110

DeepMind robot plays table tennis, and its forehand and backhand slip into the air, completely defeating human beginners

Aug 09, 2024 pm 04:01 PM

DeepMind robot plays table tennis, and its forehand and backhand slip into the air, completely defeating human beginners

Aug 09, 2024 pm 04:01 PM

But maybe he can’t defeat the old man in the park? The Paris Olympic Games are in full swing, and table tennis has attracted much attention. At the same time, robots have also made new breakthroughs in playing table tennis. Just now, DeepMind proposed the first learning robot agent that can reach the level of human amateur players in competitive table tennis. Paper address: https://arxiv.org/pdf/2408.03906 How good is the DeepMind robot at playing table tennis? Probably on par with human amateur players: both forehand and backhand: the opponent uses a variety of playing styles, and the robot can also withstand: receiving serves with different spins: However, the intensity of the game does not seem to be as intense as the old man in the park. For robots, table tennis

The first mechanical claw! Yuanluobao appeared at the 2024 World Robot Conference and released the first chess robot that can enter the home

Aug 21, 2024 pm 07:33 PM

The first mechanical claw! Yuanluobao appeared at the 2024 World Robot Conference and released the first chess robot that can enter the home

Aug 21, 2024 pm 07:33 PM

On August 21, the 2024 World Robot Conference was grandly held in Beijing. SenseTime's home robot brand "Yuanluobot SenseRobot" has unveiled its entire family of products, and recently released the Yuanluobot AI chess-playing robot - Chess Professional Edition (hereinafter referred to as "Yuanluobot SenseRobot"), becoming the world's first A chess robot for the home. As the third chess-playing robot product of Yuanluobo, the new Guoxiang robot has undergone a large number of special technical upgrades and innovations in AI and engineering machinery. For the first time, it has realized the ability to pick up three-dimensional chess pieces through mechanical claws on a home robot, and perform human-machine Functions such as chess playing, everyone playing chess, notation review, etc.

Claude has become lazy too! Netizen: Learn to give yourself a holiday

Sep 02, 2024 pm 01:56 PM

Claude has become lazy too! Netizen: Learn to give yourself a holiday

Sep 02, 2024 pm 01:56 PM

The start of school is about to begin, and it’s not just the students who are about to start the new semester who should take care of themselves, but also the large AI models. Some time ago, Reddit was filled with netizens complaining that Claude was getting lazy. "Its level has dropped a lot, it often pauses, and even the output becomes very short. In the first week of release, it could translate a full 4-page document at once, but now it can't even output half a page!" https:// www.reddit.com/r/ClaudeAI/comments/1by8rw8/something_just_feels_wrong_with_claude_in_the/ in a post titled "Totally disappointed with Claude", full of

At the World Robot Conference, this domestic robot carrying 'the hope of future elderly care' was surrounded

Aug 22, 2024 pm 10:35 PM

At the World Robot Conference, this domestic robot carrying 'the hope of future elderly care' was surrounded

Aug 22, 2024 pm 10:35 PM

At the World Robot Conference being held in Beijing, the display of humanoid robots has become the absolute focus of the scene. At the Stardust Intelligent booth, the AI robot assistant S1 performed three major performances of dulcimer, martial arts, and calligraphy in one exhibition area, capable of both literary and martial arts. , attracted a large number of professional audiences and media. The elegant playing on the elastic strings allows the S1 to demonstrate fine operation and absolute control with speed, strength and precision. CCTV News conducted a special report on the imitation learning and intelligent control behind "Calligraphy". Company founder Lai Jie explained that behind the silky movements, the hardware side pursues the best force control and the most human-like body indicators (speed, load) etc.), but on the AI side, the real movement data of people is collected, allowing the robot to become stronger when it encounters a strong situation and learn to evolve quickly. And agile

ACL 2024 Awards Announced: One of the Best Papers on Oracle Deciphering by HuaTech, GloVe Time Test Award

Aug 15, 2024 pm 04:37 PM

ACL 2024 Awards Announced: One of the Best Papers on Oracle Deciphering by HuaTech, GloVe Time Test Award

Aug 15, 2024 pm 04:37 PM

At this ACL conference, contributors have gained a lot. The six-day ACL2024 is being held in Bangkok, Thailand. ACL is the top international conference in the field of computational linguistics and natural language processing. It is organized by the International Association for Computational Linguistics and is held annually. ACL has always ranked first in academic influence in the field of NLP, and it is also a CCF-A recommended conference. This year's ACL conference is the 62nd and has received more than 400 cutting-edge works in the field of NLP. Yesterday afternoon, the conference announced the best paper and other awards. This time, there are 7 Best Paper Awards (two unpublished), 1 Best Theme Paper Award, and 35 Outstanding Paper Awards. The conference also awarded 3 Resource Paper Awards (ResourceAward) and Social Impact Award (

Hongmeng Smart Travel S9 and full-scenario new product launch conference, a number of blockbuster new products were released together

Aug 08, 2024 am 07:02 AM

Hongmeng Smart Travel S9 and full-scenario new product launch conference, a number of blockbuster new products were released together

Aug 08, 2024 am 07:02 AM

This afternoon, Hongmeng Zhixing officially welcomed new brands and new cars. On August 6, Huawei held the Hongmeng Smart Xingxing S9 and Huawei full-scenario new product launch conference, bringing the panoramic smart flagship sedan Xiangjie S9, the new M7Pro and Huawei novaFlip, MatePad Pro 12.2 inches, the new MatePad Air, Huawei Bisheng With many new all-scenario smart products including the laser printer X1 series, FreeBuds6i, WATCHFIT3 and smart screen S5Pro, from smart travel, smart office to smart wear, Huawei continues to build a full-scenario smart ecosystem to bring consumers a smart experience of the Internet of Everything. Hongmeng Zhixing: In-depth empowerment to promote the upgrading of the smart car industry Huawei joins hands with Chinese automotive industry partners to provide

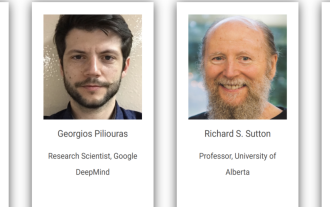

Distributed Artificial Intelligence Conference DAI 2024 Call for Papers: Agent Day, Richard Sutton, the father of reinforcement learning, will attend! Yan Shuicheng, Sergey Levine and DeepMind scientists will give keynote speeches

Aug 22, 2024 pm 08:02 PM

Distributed Artificial Intelligence Conference DAI 2024 Call for Papers: Agent Day, Richard Sutton, the father of reinforcement learning, will attend! Yan Shuicheng, Sergey Levine and DeepMind scientists will give keynote speeches

Aug 22, 2024 pm 08:02 PM

Conference Introduction With the rapid development of science and technology, artificial intelligence has become an important force in promoting social progress. In this era, we are fortunate to witness and participate in the innovation and application of Distributed Artificial Intelligence (DAI). Distributed artificial intelligence is an important branch of the field of artificial intelligence, which has attracted more and more attention in recent years. Agents based on large language models (LLM) have suddenly emerged. By combining the powerful language understanding and generation capabilities of large models, they have shown great potential in natural language interaction, knowledge reasoning, task planning, etc. AIAgent is taking over the big language model and has become a hot topic in the current AI circle. Au

Li Feifei's team proposed ReKep to give robots spatial intelligence and integrate GPT-4o

Sep 03, 2024 pm 05:18 PM

Li Feifei's team proposed ReKep to give robots spatial intelligence and integrate GPT-4o

Sep 03, 2024 pm 05:18 PM

Deep integration of vision and robot learning. When two robot hands work together smoothly to fold clothes, pour tea, and pack shoes, coupled with the 1X humanoid robot NEO that has been making headlines recently, you may have a feeling: we seem to be entering the age of robots. In fact, these silky movements are the product of advanced robotic technology + exquisite frame design + multi-modal large models. We know that useful robots often require complex and exquisite interactions with the environment, and the environment can be represented as constraints in the spatial and temporal domains. For example, if you want a robot to pour tea, the robot first needs to grasp the handle of the teapot and keep it upright without spilling the tea, then move it smoothly until the mouth of the pot is aligned with the mouth of the cup, and then tilt the teapot at a certain angle. . this