Technology peripherals

Technology peripherals

AI

AI

Yang Mi and Taylor's mixed styles: Xiaohongshu AI launches SD and ControlNet suitable styles

Yang Mi and Taylor's mixed styles: Xiaohongshu AI launches SD and ControlNet suitable styles

Yang Mi and Taylor's mixed styles: Xiaohongshu AI launches SD and ControlNet suitable styles

I have to say that taking photos now is really"so easy to be ridiculous".

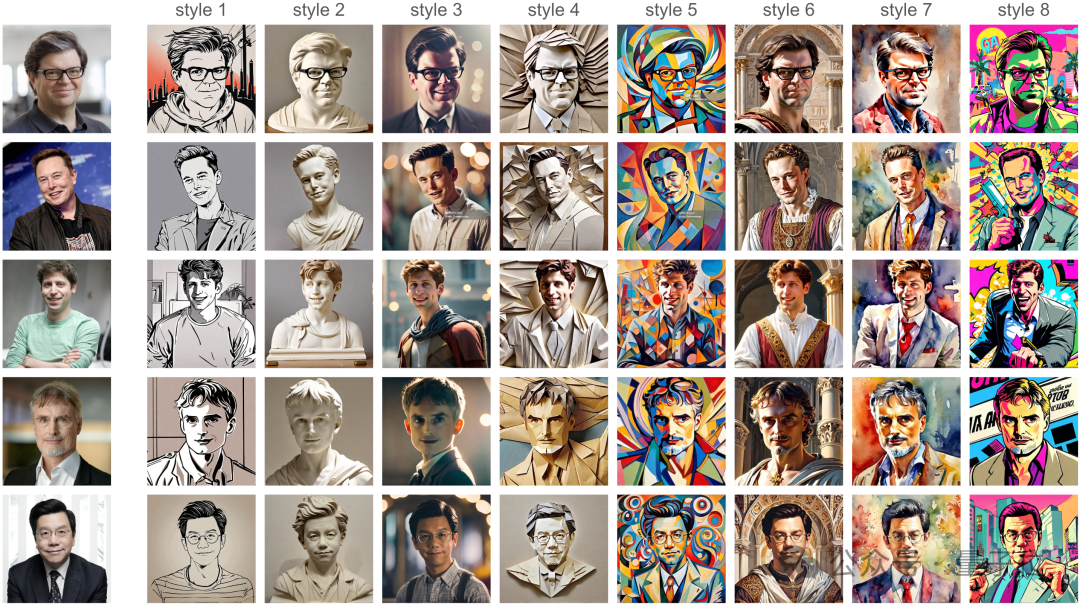

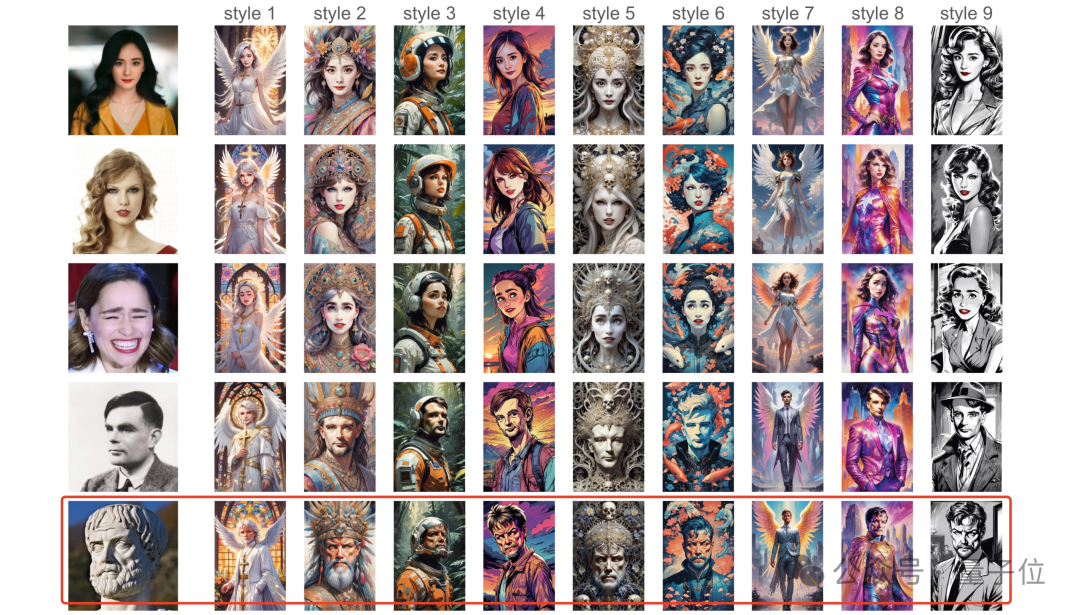

A real person doesn’t need to appear on camera, and doesn’t need to worry about posing or hairstyle. You only need an image of yourself and wait a few seconds to get 7 completely different styles. :

Look carefully, the shape/pose is all clearly done for you, and the original image comes out straight without any need for editing.

Before this, we must not spend at least a whole day in the photo studio, which will make us, the photographer, and the makeup artist almost exhausted.

The above is the power of an AI called InstantID.

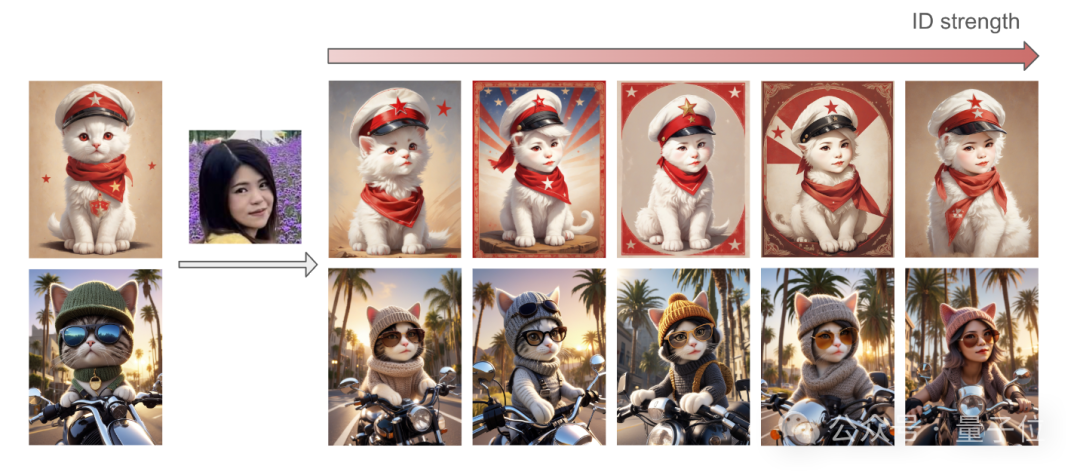

In addition to realistic photos, it can also be "non-human":

For example, it has a cat head and a cat body, but if you look closely, it has your facial features.

Not to mention the various virtual styles:

Like style 2, a real person directly turns into a stone statue.

Of course, inputting a stone statue can also directly change it:

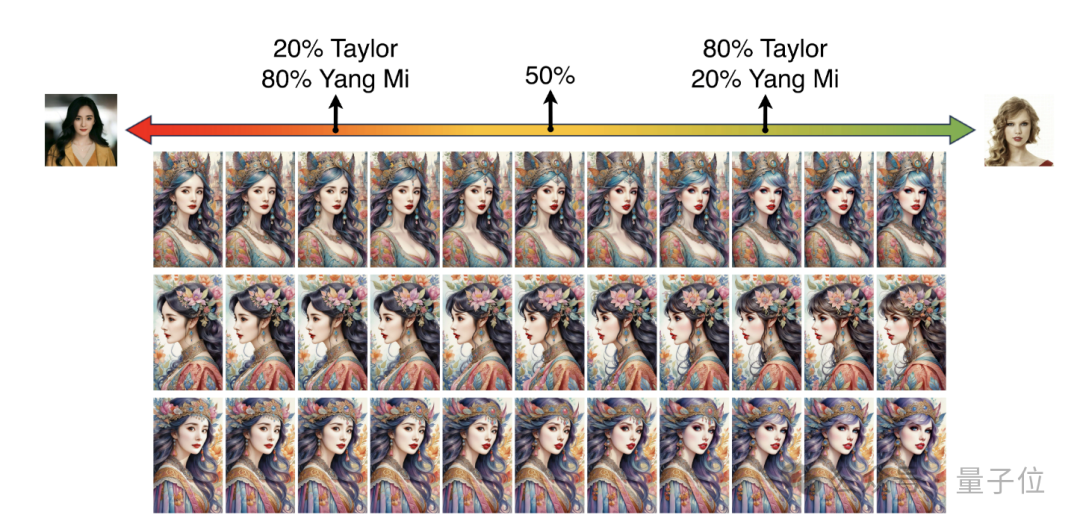

By the way, you can also perform two human face fusion high-power operations, Let’s look at what 20% of Yang Mi and 80% of Taylor look like:

One picture has unlimited high-quality transformations, but you have to figure it out.

So, how is this done?

Based on the diffusion model, it can be seamlessly integrated with SD

The author introduces that the current image stylization technology can already complete the task with only one forward inference (i.e. Based on ID embedding).

But this technology also has problems: it either requires extensive fine-tuning of numerous model parameters, lacks compatibility with community-developed pre-trained models, or cannot maintain high-fidelity facial features.

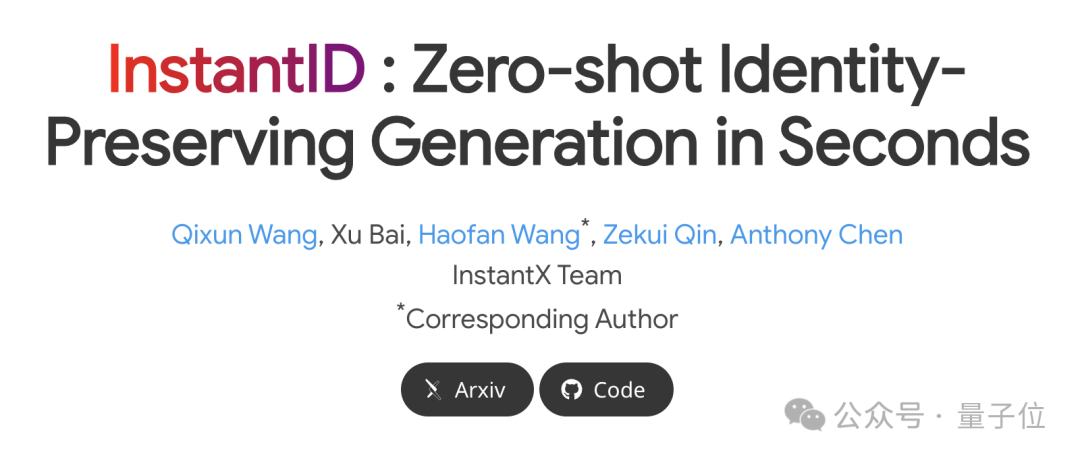

To solve these challenges, they developed InstantID.

InstantID is based on the diffusion model, and its plug-and-play (plug-and-play) module can skillfully handle various stylized transformations using only a single facial image. High fidelity indeed.

The most noteworthy thing is that it can be seamlessly integrated with popular text-to-image pre-trained diffusion models (such as SD1.5, SDXL) and can be used as a plug-in.

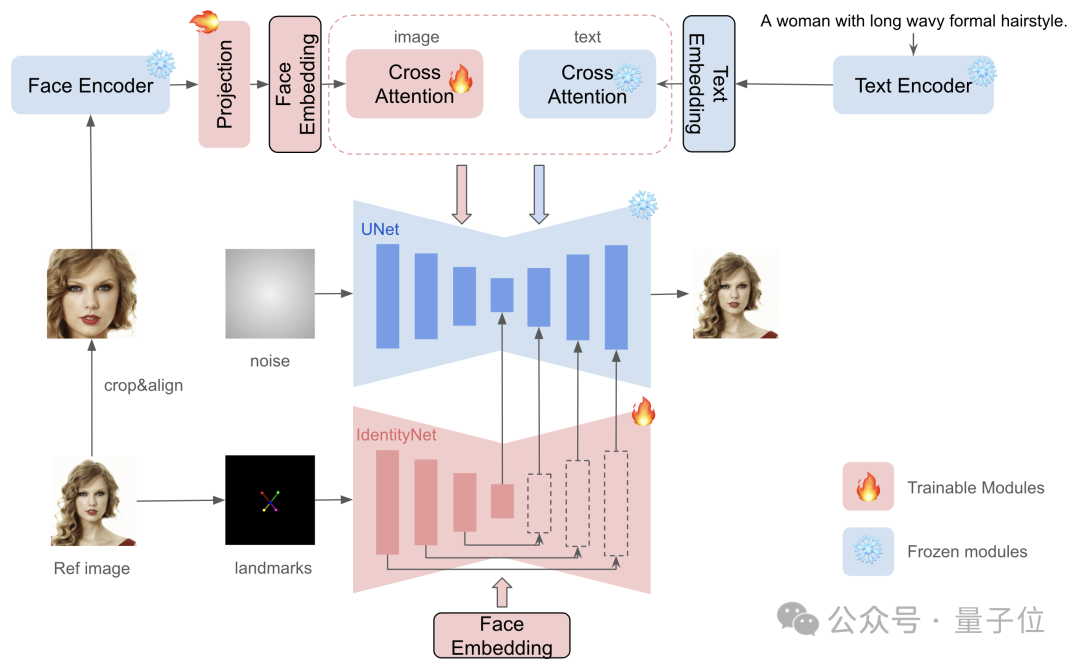

Specifically, InstantID consists of three key components:

(1) ID embedding that captures robust semantic face information;

(2) It has decoupling A lightweight adaptation module of cross-attention to facilitate images as visual cues;

(3) IdentityNet network, which encodes detailed features of reference images through additional spatial control, and ultimately completes image generation.

Compared with previous work in the industry, InstantID has several differences:

First, there is no need to train UNet, so the original text can be retained in the image model generation capabilities and is compatible with existing pre-trained models and ControlNet in the community.

The second is that test-time adjustment is not required, so for a specific style, there is no need to collect multiple images for fine-tuning, and only need to make an inference on a single image.

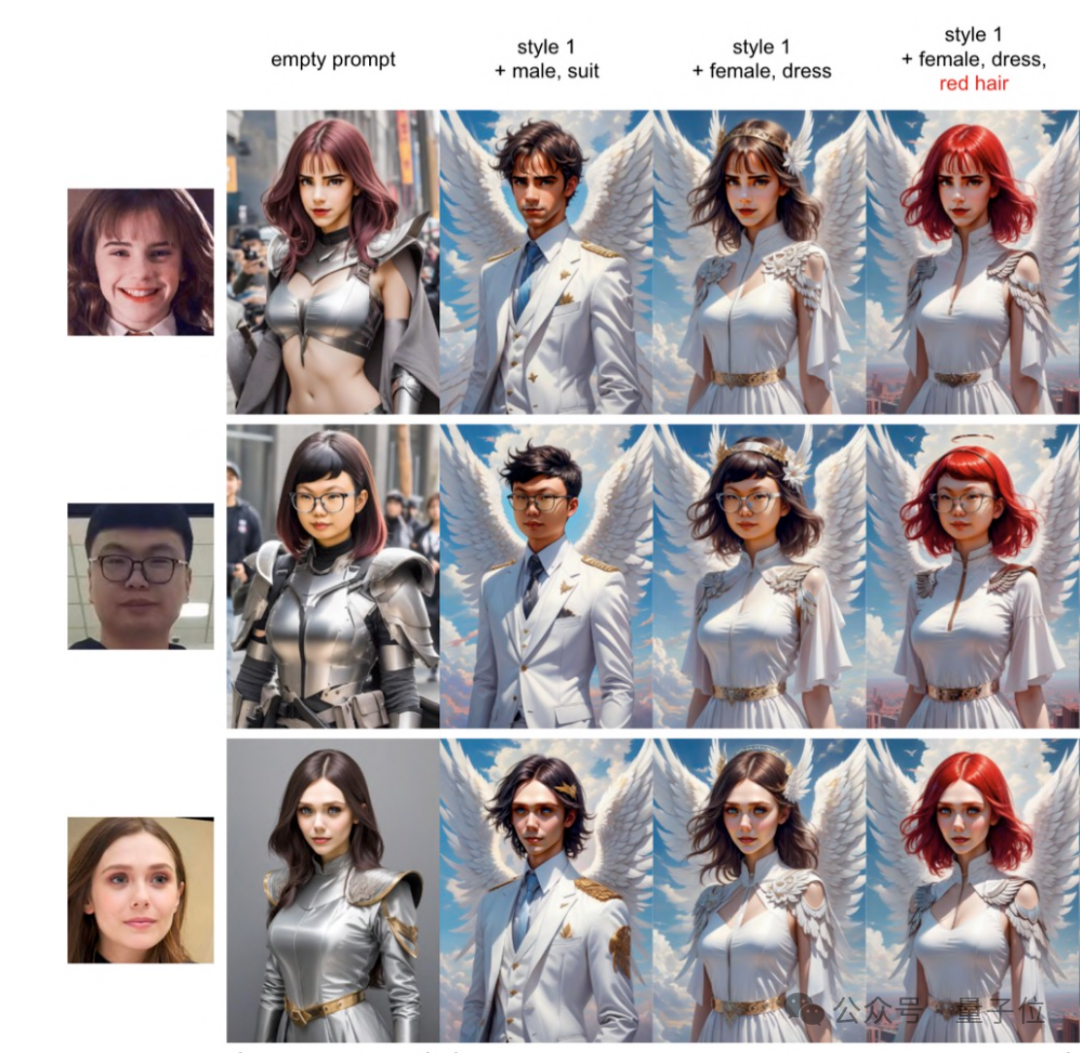

Third, in addition to achieving better facial fidelity, text editability is also retained. As shown in the picture below, with just a few words, you can change the gender of the image, change the suit, change the hairstyle and hair color.

#Again, all the above effects can be completed in a few seconds with just 1 reference image.

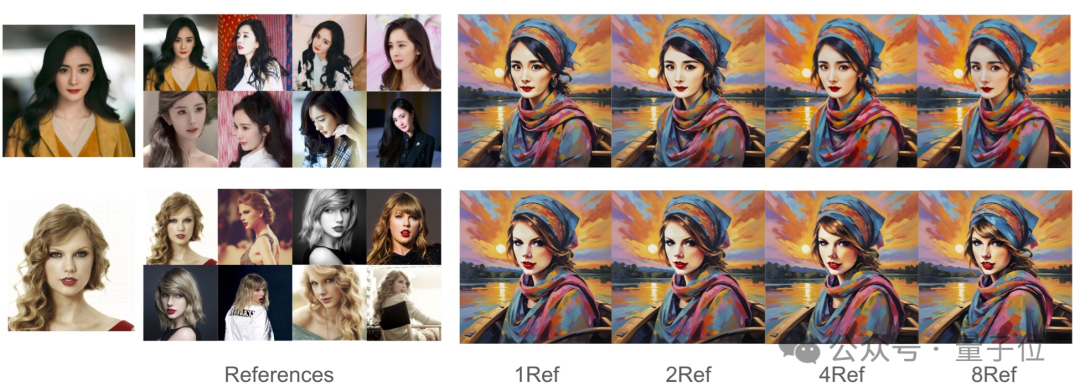

The experiment shown below proves that A few more reference pictures are of little use, and one picture can do a good job.

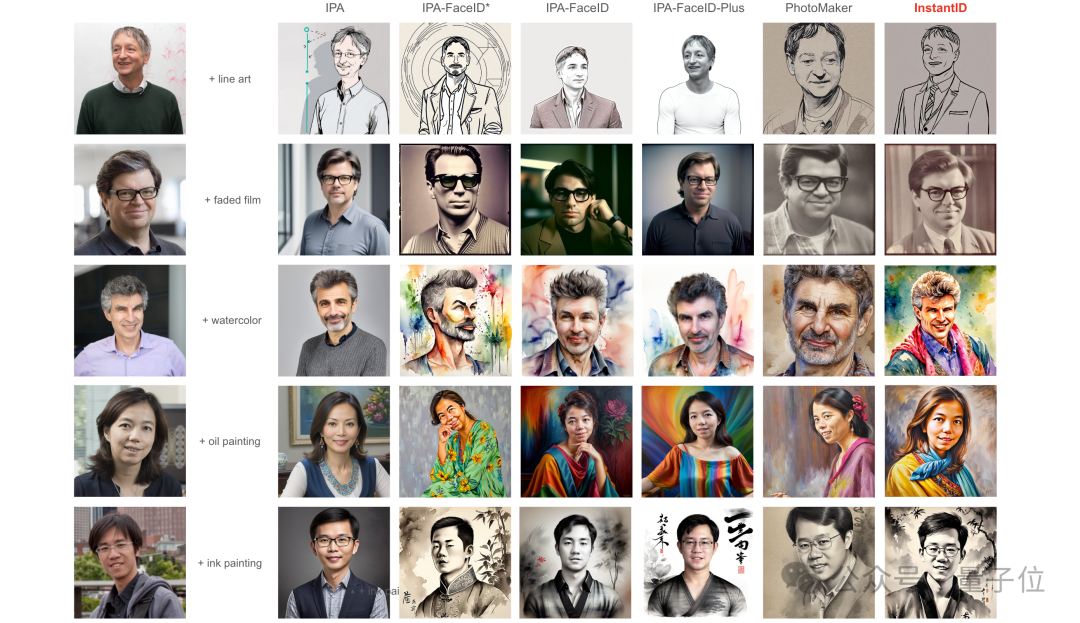

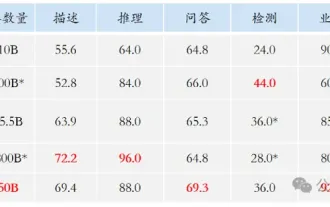

The following are some specific comparisons.

The comparison objects are the existing tuning-free SOTA methods: IP-Adapter (IPA), IP-Adapter-FaceID, and PhotoMaker just produced by Tencent two days ago.

It can be seen that everyone is quite "volume" and the effect is not bad - but if you compare them carefully, PhotoMaker and IP-Adapter-FaceID both have good fidelity, but their text control capabilities are obviously worse.

In contrast, InstantID’s faces and styles blend better, achieving better fidelity while retaining good text editability sex.

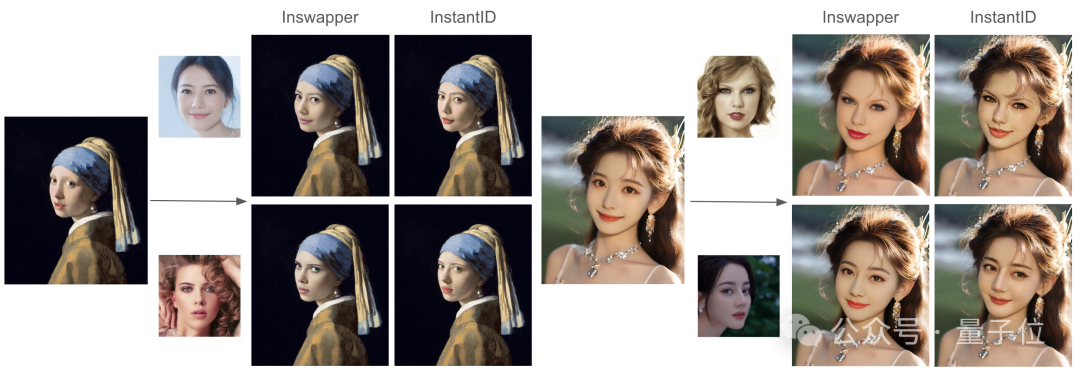

In addition, there is also a comparison with the InsightFace Swapper model. Which one do you think is better?

Introduction to the author

There are 5 authors in this article, from the mysterious InstantX team (not much information can be found online).

But the first one is Qixun Wang from 小红书.

The corresponding author Wang Haofan is also an engineer at Xiaohongshu. He is engaged in research on controllable and conditional content generation (AIGC) and is a CMU’20 alumnus.

The above is the detailed content of Yang Mi and Taylor's mixed styles: Xiaohongshu AI launches SD and ControlNet suitable styles. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

0.What does this article do? We propose DepthFM: a versatile and fast state-of-the-art generative monocular depth estimation model. In addition to traditional depth estimation tasks, DepthFM also demonstrates state-of-the-art capabilities in downstream tasks such as depth inpainting. DepthFM is efficient and can synthesize depth maps within a few inference steps. Let’s read about this work together ~ 1. Paper information title: DepthFM: FastMonocularDepthEstimationwithFlowMatching Author: MingGui, JohannesS.Fischer, UlrichPrestel, PingchuanMa, Dmytr

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI is indeed changing mathematics. Recently, Tao Zhexuan, who has been paying close attention to this issue, forwarded the latest issue of "Bulletin of the American Mathematical Society" (Bulletin of the American Mathematical Society). Focusing on the topic "Will machines change mathematics?", many mathematicians expressed their opinions. The whole process was full of sparks, hardcore and exciting. The author has a strong lineup, including Fields Medal winner Akshay Venkatesh, Chinese mathematician Zheng Lejun, NYU computer scientist Ernest Davis and many other well-known scholars in the industry. The world of AI has changed dramatically. You know, many of these articles were submitted a year ago.

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

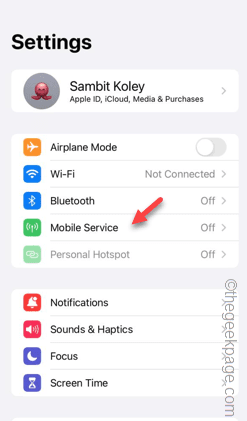

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Facing lag, slow mobile data connection on iPhone? Typically, the strength of cellular internet on your phone depends on several factors such as region, cellular network type, roaming type, etc. There are some things you can do to get a faster, more reliable cellular Internet connection. Fix 1 – Force Restart iPhone Sometimes, force restarting your device just resets a lot of things, including the cellular connection. Step 1 – Just press the volume up key once and release. Next, press the Volume Down key and release it again. Step 2 – The next part of the process is to hold the button on the right side. Let the iPhone finish restarting. Enable cellular data and check network speed. Check again Fix 2 – Change data mode While 5G offers better network speeds, it works better when the signal is weaker

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

I cry to death. The world is madly building big models. The data on the Internet is not enough. It is not enough at all. The training model looks like "The Hunger Games", and AI researchers around the world are worrying about how to feed these data voracious eaters. This problem is particularly prominent in multi-modal tasks. At a time when nothing could be done, a start-up team from the Department of Renmin University of China used its own new model to become the first in China to make "model-generated data feed itself" a reality. Moreover, it is a two-pronged approach on the understanding side and the generation side. Both sides can generate high-quality, multi-modal new data and provide data feedback to the model itself. What is a model? Awaker 1.0, a large multi-modal model that just appeared on the Zhongguancun Forum. Who is the team? Sophon engine. Founded by Gao Yizhao, a doctoral student at Renmin University’s Hillhouse School of Artificial Intelligence.

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving