Using vector embeddings and knowledge graphs to improve the accuracy of LLMs


Language models play a key role in natural language processing (NLP), where they are used to understand and generate text. However, traditional language models have several limitations: they struggle with long, complex sentences, make limited use of context, and have limited access to knowledge beyond their training text. One way to address these limitations is to combine vector embeddings with knowledge graphs. Vector embeddings map words or phrases to points in a high-dimensional vector space, so that semantic similarity is reflected in geometric closeness. A knowledge graph encodes entities and the relationships between them, which can supply the language model with background knowledge. Combining the two with a language model can improve its handling of complex sentences, its use of context, and the breadth of its knowledge, and thereby its accuracy on NLP tasks.

1. Vector embedding

Vector embedding is a technique that converts text into vectors. It represents semantic units such as words and phrases as vectors in a high-dimensional vector space. These vectors capture the semantic and contextual information of the text and help improve an LLM's ability to understand natural language.
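To make the idea concrete, here is a minimal sketch of comparing embeddings by cosine similarity, a common way to measure how semantically close two vectors are. The three 4-dimensional vectors in `EMBEDDINGS` are hypothetical, hand-picked values, not the output of any real model; real embeddings are learned from data and usually have hundreds of dimensions.

```python
import math

# Hypothetical hand-picked 4-dimensional embeddings, for illustration only.
EMBEDDINGS = {
    "king":  [0.80, 0.65, 0.10, 0.05],
    "queen": [0.78, 0.70, 0.12, 0.90],
    "apple": [0.05, 0.10, 0.95, 0.20],
}

def cosine_similarity(u, v):
    """Cosine of the angle between u and v: 1.0 means same direction, 0.0 orthogonal."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

if __name__ == "__main__":
    for a, b in [("king", "queen"), ("king", "apple")]:
        sim = cosine_similarity(EMBEDDINGS[a], EMBEDDINGS[b])
        print(f"{a} vs {b}: {sim:.3f}")
```

With these toy values, "king" is closer to "queen" than to "apple", which is the property a trained embedding model is meant to learn from data.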

In traditional NLP models, pre-trained word vector models such as Word2Vec and GloVe are commonly used as input features. These models are trained on large corpora to learn semantic relationships between words. However, they assign each word a single, fixed vector regardless of the sentence it appears in, so they cannot take the surrounding context into account: "bank" receives the same vector in "river bank" and in "bank account". One improvement is to use contextual word vector models such as BERT (Bidirectional Encoder Representations from Transformers). Because BERT is trained bidirectionally, it conditions on the context on both sides of each word at the same time and therefore captures global semantic relationships better. Besides word vector models, sentence vector models can also be used as input features. A sentence vector model captures the meaning of a whole sentence by mapping the entire sentence into a fixed-dimensional vector space.

To capture global context more fully, the self-attention mechanism of the Transformer can be used. Multiple layers of self-attention compute the interactions between words and produce richer semantic representations. Using bidirectional context also improves the quality of word vectors: the representation of each word is computed from both the preceding and the following text. This effectively improves the model's semantic understanding.
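The mechanism described above can be sketched in plain Python as unmasked scaled dot-product self-attention, followed by mean pooling into a sentence vector. This is a simplified sketch, not a real Transformer: the learned query, key, and value projections, the multiple heads, and the stacked layers are all omitted (queries, keys, and values are just the input vectors), mean pooling is only one simple way to build a sentence vector, and the `STATIC` word vectors are hypothetical.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Unmasked scaled dot-product self-attention with queries = keys = values = x.

    Each output row is a weighted average over *all* positions, both before
    and after the current token, so the result is bidirectional and
    context-dependent. Learned Q/K/V projections are omitted for brevity.
    """
    d = len(x[0])
    scale = math.sqrt(d)
    out = []
    for q in x:
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in x]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, x)) for j in range(d)])
    return out

def mean_pool(vectors):
    """Average token vectors into one fixed-size sentence vector."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

# Hypothetical static (context-independent) vectors for three words.
STATIC = {
    "river": [1.0, 0.0, 0.0],
    "money": [0.0, 1.0, 0.0],
    "bank":  [0.5, 0.5, 0.0],
}

if __name__ == "__main__":
    sent_a = [STATIC["river"], STATIC["bank"]]
    sent_b = [STATIC["money"], STATIC["bank"]]
    ctx_a, ctx_b = self_attention(sent_a), self_attention(sent_b)
    # Same static input for "bank", different contextual output vectors.
    print("bank in sentence A:", ctx_a[1])
    print("bank in sentence B:", ctx_b[1])
    # A fixed-size sentence vector, whatever the sentence length.
    print("sentence A vector:", mean_pool(ctx_a))
```

With these toy vectors, "bank" comes out as [0.75, 0.25, 0.0] next to "river" and [0.25, 0.75, 0.0] next to "money": the static vector is identical, but the contextual vectors differ.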

2. Knowledge graph

A knowledge graph is a graph structure used to represent and organize knowledge. It consists of nodes, which represent entities or concepts, and edges, which represent the relationships between them. Embedding a knowledge graph into a language model introduces external knowledge into the model's training, which can improve its ability to understand complex questions and generate answers to them.
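A knowledge graph of this kind is often stored as a list of (head, relation, tail) triples. The sketch below uses a tiny, hypothetical set of triples and a hypothetical `KnowledgeGraph` class to show nodes, labeled edges, and a multi-hop lookup; a production system would more likely use a graph database or an RDF store.

```python
from collections import defaultdict, deque

# Hypothetical example triples: (head entity, relation, tail entity).
TRIPLES = [
    ("Eiffel Tower", "located_in", "Paris"),
    ("Paris", "capital_of", "France"),
    ("France", "located_in", "Europe"),
]

class KnowledgeGraph:
    """Directed, labeled graph: nodes are entities, edges are relations."""

    def __init__(self, triples):
        self.edges = defaultdict(list)  # head -> [(relation, tail), ...]
        for head, relation, tail in triples:
            self.edges[head].append((relation, tail))

    def neighbors(self, entity):
        """Direct (relation, tail) pairs leaving `entity`."""
        return list(self.edges.get(entity, []))

    def reachable(self, entity, max_hops):
        """Entities reachable from `entity` in at most `max_hops` edges (breadth-first)."""
        seen = {entity}
        frontier = deque([(entity, 0)])
        found = []
        while frontier:
            node, depth = frontier.popleft()
            if depth == max_hops:
                continue
            for _, tail in self.edges.get(node, []):
                if tail not in seen:
                    seen.add(tail)
                    found.append(tail)
                    frontier.append((tail, depth + 1))
        return found

if __name__ == "__main__":
    kg = KnowledgeGraph(TRIPLES)
    print(kg.neighbors("Eiffel Tower"))
    print(kg.reachable("Eiffel Tower", max_hops=2))
```

The two-hop query connects the Eiffel Tower to France even though no single triple states that link, which is the kind of background knowledge a plain text model may not have.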

Traditional LLMs usually consider only the linguistic information in the text and ignore the semantic relationships between the entities and concepts it mentions. As a result, they may perform poorly on text whose meaning depends on knowledge about those entities and concepts.

To address this, the concept and entity information in a knowledge graph can be integrated into the LLM. Entity and concept information can be added to the model's input, so that the model better understands the semantics and background knowledge of the text. The semantic relationships in the knowledge graph can also be incorporated into the model's computations, so that it better captures the relationships between concepts and entities.
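One simple way to add entity information to the model's input is to link entity mentions in the text to knowledge-graph nodes and prepend their facts as extra text. The sketch below assumes naive exact-substring entity linking and a hypothetical in-memory graph `KG`; real entity linkers handle aliases, ambiguity, and tokenization, and other methods inject entity embeddings instead of text.

```python
# Hypothetical knowledge graph: entity -> list of (relation, tail) facts.
KG = {
    "Marie Curie": [("researched", "radioactivity"), ("born_in", "Warsaw")],
    "Warsaw": [("is_the_capital_of", "Poland")],
}

def link_entities(text, kg):
    """Naive entity linking: exact, case-sensitive substring match on node names."""
    return [entity for entity in kg if entity in text]

def augment_input(text, kg):
    """Prepend the facts of every linked entity to the text as plain sentences."""
    facts = []
    for entity in link_entities(text, kg):
        for relation, tail in kg[entity]:
            facts.append(f"{entity} {relation.replace('_', ' ')} {tail}.")
    if not facts:
        return text  # nothing linked: leave the input unchanged
    return "Background: " + " ".join(facts) + "\nText: " + text

if __name__ == "__main__":
    print(augment_input("Marie Curie moved to Paris.", KG))
```

The augmented string would then be passed to the model in place of the raw text.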

3. Strategies for combining vector embeddings and knowledge graphs

In practice, vector embeddings and knowledge graphs can be combined to further improve the accuracy of an LLM. The following strategies can be used:

1. Fuse word vectors with the concept vectors from the knowledge graph. For example, a word vector and the vector of its linked concept can be concatenated to obtain a richer semantic representation. This lets the model take into account both the linguistic information in the text and the semantic relationships between entities and concepts.

2. Include entity and concept information in self-attention. When self-attention is computed, entity and concept vectors can be added to the computation, so that the model better captures the semantic relationships between entities and concepts.

3. Integrate the knowledge graph's semantic relationships into the model's computation of context. Taking these relationships into account when computing context information yields richer context, which helps the model understand the semantics and background knowledge of the text.

4. Use the knowledge graph as a supervision signal during training. The semantic relationships in the knowledge graph can be added to the loss function as an additional supervision signal, so that the model learns the relationships between entities and concepts.
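Strategies 1 and 2 can be sketched as follows. The code assumes one possible reading of each: strategy 1 as plain concatenation of a word vector with its linked concept vector, and strategy 2 as appending entity vectors to the keys and values a token attends over. All vectors and function names are hypothetical, and learned projections are omitted.

```python
import math

def concat_fusion(word_vec, concept_vec):
    """Strategy 1: concatenate ("splice") a word vector with its concept vector."""
    return list(word_vec) + list(concept_vec)

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend_with_entities(query, token_vecs, entity_vecs):
    """Strategy 2: one attention step whose keys/values also include entity vectors.

    Entity vectors are appended to the token vectors, so the query can draw
    information from KG entities as well as from the other tokens.
    Returns (output vector, attention weights over tokens + entities).
    """
    memory = list(token_vecs) + list(entity_vecs)
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in memory]
    weights = softmax(scores)
    output = [sum(w * v[j] for w, v in zip(weights, memory)) for j in range(d)]
    return output, weights

if __name__ == "__main__":
    word = [0.2, 0.9]     # hypothetical word vector
    concept = [0.7, 0.1]  # hypothetical vector of the linked KG concept
    print(concat_fusion(word, concept))  # 4-dimensional fused vector

    tokens = [[1.0, 0.0], [0.0, 1.0]]
    entities = [[0.5, 0.5]]
    out, weights = attend_with_entities([1.0, 0.0], tokens, entities)
    print(out, weights)
```

In a real model the concatenated vector is wider than the hidden size, so a learned projection would normally map it back; that layer is left out of this sketch.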
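Strategy 4 can be illustrated with a TransE-style margin loss, used here as a stand-in for "semantic relationships as a supervision signal"; the text does not name a specific loss. A true triple (h, r, t) should satisfy h + r ≈ t, while a corrupted triple should not. `kg_weight` is a hypothetical hyperparameter, and the code computes loss values only; actual training would obtain gradients from an autodiff framework.

```python
import math

def transe_distance(h, r, t):
    """TransE-style score ||h + r - t||_2: small when the triple is plausible."""
    return math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))

def margin_loss(pos, neg, margin=1.0):
    """Hinge loss: the true triple should be at least `margin` closer than the corrupted one."""
    return max(0.0, margin + transe_distance(*pos) - transe_distance(*neg))

def total_loss(task_loss, kg_pairs, kg_weight=0.1):
    """Language-modeling loss plus a weighted KG auxiliary loss.

    kg_pairs: list of (true_triple, corrupted_triple), each triple being
    (head_vec, relation_vec, tail_vec). kg_weight is a hypothetical setting.
    """
    kg = sum(margin_loss(p, n) for p, n in kg_pairs) / len(kg_pairs)
    return task_loss + kg_weight * kg

if __name__ == "__main__":
    h, r = [1.0, 0.0], [0.0, 1.0]
    true_triple = (h, r, [1.0, 1.0])     # h + r equals t exactly
    bad_triple = (h, r, [-1.0, -1.0])    # corrupted tail, far from h + r
    print(margin_loss(true_triple, bad_triple))
    print(total_loss(2.0, [(true_triple, bad_triple)]))
```

When the true triple already fits much better than the corrupted one, the hinge term is zero and the total loss reduces to the task loss.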

Combining these strategies can further improve the accuracy of the LLM. In practice, choose the strategies that fit the specific requirements and scenario, and tune them accordingly.

The above is the detailed content of Utilize vector embeddings and knowledge graphs to improve the accuracy of LLM models. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1386

1386

52

52

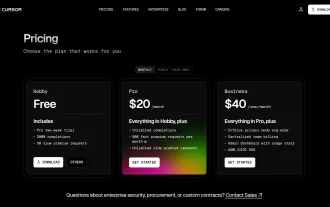

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

Vibe coding is reshaping the world of software development by letting us create applications using natural language instead of endless lines of code. Inspired by visionaries like Andrej Karpathy, this innovative approach lets dev

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

February 2025 has been yet another game-changing month for generative AI, bringing us some of the most anticipated model upgrades and groundbreaking new features. From xAI’s Grok 3 and Anthropic’s Claude 3.7 Sonnet, to OpenAI’s G

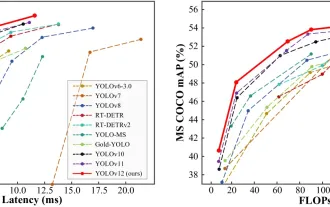

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

YOLO (You Only Look Once) has been a leading real-time object detection framework, with each iteration improving upon the previous versions. The latest version YOLO v12 introduces advancements that significantly enhance accuracy

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

The article compares top AI chatbots like ChatGPT, Gemini, and Claude, focusing on their unique features, customization options, and performance in natural language processing and reliability.

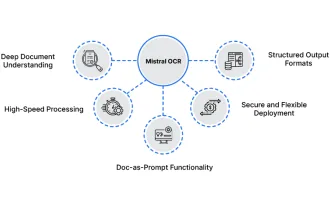

How to Use Mistral OCR for Your Next RAG Model

Mar 21, 2025 am 11:11 AM

How to Use Mistral OCR for Your Next RAG Model

Mar 21, 2025 am 11:11 AM

Mistral OCR: Revolutionizing Retrieval-Augmented Generation with Multimodal Document Understanding Retrieval-Augmented Generation (RAG) systems have significantly advanced AI capabilities, enabling access to vast data stores for more informed respons

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

The article discusses top AI writing assistants like Grammarly, Jasper, Copy.ai, Writesonic, and Rytr, focusing on their unique features for content creation. It argues that Jasper excels in SEO optimization, while AI tools help maintain tone consist