What is the difference between TTE and traditional embedding?

TTE is a text encoding technology built on the Transformer model, and it differs significantly from traditional embedding methods. This article compares the two in terms of model structure, training method, and application scope, and closes with a worked example.

1. Model structure

Traditional embedding methods usually use bag-of-words models or N-gram models to encode text. However, these methods usually ignore the relationship between words and only encode each word as an independent feature. In addition, for the same word, its encoding representation is the same in different contexts. This encoding method ignores the semantic and syntactic relationships between words in the text, and is therefore less effective for certain tasks, such as semantic similarity calculation and sentiment analysis. Therefore, more advanced methods are needed to solve these problems.
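To make the point about ignored word relationships concrete, here is a minimal bag-of-words sketch. The vocabulary and the two sentences are hypothetical, chosen only for illustration; the function name `bag_of_words` is not from any particular library.

```python
from collections import Counter


def bag_of_words(text, vocabulary):
    """Count how often each vocabulary word occurs in the text, ignoring word order."""
    counts = Counter(text.lower().split())
    return [counts[word] for word in vocabulary]


# Hypothetical toy vocabulary and sentences (assumptions for illustration only).
vocabulary = ["dog", "bites", "man"]
vec_a = bag_of_words("dog bites man", vocabulary)
vec_b = bag_of_words("man bites dog", vocabulary)

print(vec_a)           # [1, 1, 1]
print(vec_b)           # [1, 1, 1]
print(vec_a == vec_b)  # True
```

The two sentences mean different things, yet they receive the same vector, because the encoding only records which words occur and how often.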

TTE adopts the Transformer model, a deep neural network structure based on the self-attention mechanism, which is widely used in the field of natural language processing. The Transformer model can automatically learn the semantic and syntactic relationships between words in the text, providing a better foundation for text encoding. Compared with traditional embedding methods, TTE can better characterize the semantic information of text and improve the accuracy and efficiency of text encoding.
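The self-attention idea can be sketched in a few lines of plain Python. This is a simplified illustration, not TTE's actual implementation: it uses scaled dot-product attention with identity projections (a real Transformer learns separate query, key, and value matrices), and the 2-dimensional token vectors are made up.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with identity projections.

    Learned query/key/value matrices are omitted to keep the sketch short.
    """
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        # Score this token against every token in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The output is a weighted mix of all token vectors.
        mixed = [
            sum(w * value[i] for w, value in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(mixed)
    return outputs


# Hypothetical input vectors (assumptions for illustration only).
river, money, bank = [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]

bank_near_river = self_attention([river, bank])[1]
bank_near_money = self_attention([money, bank])[1]
print(bank_near_river)
print(bank_near_money)
```

The input vector for "bank" is identical in both calls, but its output differs because each output mixes in the surrounding tokens. That context dependence is what static word-level encodings lack.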

2. Training method

Traditional embedding methods usually use pre-trained word vectors as the text encoding. These word vectors, such as Word2Vec and GloVe, are obtained by training on large-scale corpora. This training method can effectively extract semantic features from text, but for some special words or contexts, the accuracy may not be as good as manually annotated labels. Therefore, when applying pre-trained word vectors, pay attention to their limitations, especially when dealing with special vocabulary or context. To improve the accuracy of text encoding, you can combine them with other methods, such as context-based word vector generation models or deep learning models, to further optimize the semantic representation of the text. This can compensate for the shortcomings of traditional embedding methods to a certain extent and make the text encoding more accurate.
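The limitations of pre-trained word vectors can be sketched with a toy lookup table. The 3-dimensional vectors below are invented for illustration (real Word2Vec or GloVe tables are learned from large corpora and have far more dimensions), and the helper names `lookup` and `encode` are hypothetical. Treating a "special word" as an out-of-vocabulary word is one possible reading of the limitation described above.

```python
# Hypothetical toy embedding table (assumption for illustration only).
EMBEDDINGS = {
    "river": [0.9, 0.1, 0.0],
    "bank": [0.5, 0.5, 0.2],
    "loan": [0.0, 0.8, 0.3],
}


def lookup(word):
    """Return the stored vector for a word, or None if it is out of vocabulary."""
    return EMBEDDINGS.get(word.lower())


def encode(text):
    """Encode a text as the average of the vectors of its known words."""
    vectors = [v for v in (lookup(w) for w in text.split()) if v is not None]
    if not vectors:
        return None
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


# "bank" gets the same vector whether the context is a river or a loan.
print(lookup("bank"))
# A word that was not in the training vocabulary has no vector at all.
print(lookup("fintech"))
print(encode("river bank"))
```

Because the table is fixed after training, the same word always maps to the same vector, and unseen words contribute nothing to the encoding.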

TTE is trained with self-supervised learning. Specifically, TTE is pre-trained on two tasks: masked language modeling (MLM) and next sentence prediction (NSP). In the MLM task, some words in the input text are randomly masked and the model must predict the masked words; in the NSP task, the model must determine whether two input texts are adjacent sentences. In this way, TTE can automatically learn the semantic and syntactic information in the text, improving the accuracy and generalization of text encoding.
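The two pre-training tasks differ mainly in how training examples are built from raw text, which can be sketched as follows. This only constructs the examples; no model is trained. The function names, the `[MASK]` placeholder, and the default masking rate of 0.15 (the rate commonly used in BERT-style pre-training) are assumptions, since the article does not give these details for TTE.

```python
import random

MASK = "[MASK]"  # placeholder token; the exact symbol is an assumption


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Build one MLM example: masked input plus the original words to predict."""
    rng = random.Random(seed)
    masked, targets = [], {}
    for position, token in enumerate(tokens):
        if rng.random() < mask_prob:
            masked.append(MASK)
            targets[position] = token  # the model must recover this word
        else:
            masked.append(token)
    return masked, targets


def make_nsp_pairs(sentences, seed=0):
    """Build NSP examples (sentence_a, sentence_b, is_next) from ordered sentences."""
    if len(sentences) < 3:
        raise ValueError("need at least three sentences to sample negatives")
    rng = random.Random(seed)
    pairs = []
    for i in range(len(sentences) - 1):
        if rng.random() < 0.5:
            # Positive example: the sentence that really follows.
            pairs.append((sentences[i], sentences[i + 1], True))
        else:
            # Negative example: any sentence other than the current or next one.
            candidates = [s for j, s in enumerate(sentences) if j not in (i, i + 1)]
            pairs.append((sentences[i], rng.choice(candidates), False))
    return pairs


tokens = "the dog chased the ball across the yard".split()
masked, targets = mask_tokens(tokens, mask_prob=0.3, seed=1)
print(masked, targets)

# Hypothetical example sentences (assumptions for illustration only).
document = ["Dogs are mammals.", "They have fur.", "Birds can fly.", "Fish can swim."]
for pair in make_nsp_pairs(document, seed=1):
    print(pair)
```

In both tasks the labels come from the text itself, which is why no manual annotation is needed.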

3. Application scope

Traditional embedding methods are usually suitable for some simple text processing tasks, such as text classification, sentiment analysis, etc. However, for some complex tasks, such as natural language reasoning, question answering systems, etc., the effect may be poor.

TTE is suitable for a wide range of text processing tasks, especially those that require understanding the relationships between sentences in a text. For example, in natural language reasoning, TTE can capture the logical relationships in the text and help the model reason more accurately; in question answering systems, TTE can capture the semantic relationship between questions and answers, improving the accuracy and efficiency of question answering.

4. Example explanation

The following example from a natural language reasoning task illustrates the difference between TTE and traditional embedding. A natural language reasoning task requires judging the logical relationship between two sentences. For example, take the premise "dogs are mammals" and the hypothesis "dogs can fly". We can judge that the hypothesis is wrong because dogs cannot fly.

Traditional embedding methods usually use bag-of-words models or N-gram models to encode premises and hypotheses. This encoding method ignores the semantic and syntactic relationships between words in the text, resulting in poor results for tasks such as natural language reasoning. For example, for the premise "dogs are mammals" and the hypothesis "dogs can fly", traditional embedding methods may encode them into two vectors and then use a simple similarity calculation to determine the logical relationship between them. However, due to the limitations of the encoding method, this approach may not accurately determine that the hypothesis is wrong.
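The weakness of the similarity approach can be checked with a small sketch: bag-of-words vectors compared by cosine similarity. The third sentence, "dogs cannot fly", is not part of the original example; it is added here as a hypothetical contrast, and the helper names are illustrative.

```python
import math
from collections import Counter


def bow(text):
    """Encode a text as word counts, ignoring word order."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


premise = bow("dogs are mammals")
hypothesis = bow("dogs can fly")
negation = bow("dogs cannot fly")  # hypothetical contrast sentence

score_hypothesis = cosine(premise, hypothesis)
score_negation = cosine(premise, negation)
print(score_hypothesis, score_negation)
print(score_hypothesis == score_negation)  # True
```

Both sentences share exactly one word with the premise, so they receive the same score of about 0.33. The similarity value alone cannot separate a hypothesis from its negation, which is why this approach struggles with reasoning tasks.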

TTE uses the Transformer model to encode premises and hypotheses. The Transformer model can automatically learn the semantic and syntactic relationships between words in the text while avoiding the limitations of traditional embedding methods. For example, for the premise "dogs are mammals" and the hypothesis "dogs can fly", TTE can encode them into two vectors and then use a similarity calculation to determine the logical relationship between them. Since TTE better characterizes the semantic information of the text, it can more accurately determine whether the hypothesis is correct.

In short, the difference between TTE and traditional embedding methods lies in the model structure and the training method. In natural language reasoning tasks, TTE can better capture the logical relationship between premises and hypotheses, improving the accuracy and efficiency of the model.


Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1386

1386

52

52

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

Vibe coding is reshaping the world of software development by letting us create applications using natural language instead of endless lines of code. Inspired by visionaries like Andrej Karpathy, this innovative approach lets dev

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

February 2025 has been yet another game-changing month for generative AI, bringing us some of the most anticipated model upgrades and groundbreaking new features. From xAI’s Grok 3 and Anthropic’s Claude 3.7 Sonnet, to OpenAI’s G

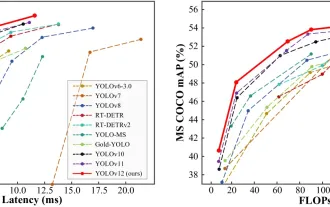

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

YOLO (You Only Look Once) has been a leading real-time object detection framework, with each iteration improving upon the previous versions. The latest version YOLO v12 introduces advancements that significantly enhance accuracy

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

Best AI Chatbots Compared (ChatGPT, Gemini, Claude & More)

Apr 02, 2025 pm 06:09 PM

The article compares top AI chatbots like ChatGPT, Gemini, and Claude, focusing on their unique features, customization options, and performance in natural language processing and reliability.

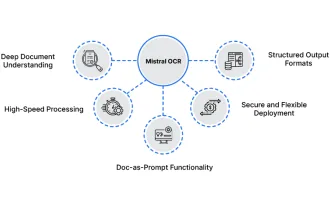

How to Use Mistral OCR for Your Next RAG Model

Mar 21, 2025 am 11:11 AM

How to Use Mistral OCR for Your Next RAG Model

Mar 21, 2025 am 11:11 AM

Mistral OCR: Revolutionizing Retrieval-Augmented Generation with Multimodal Document Understanding Retrieval-Augmented Generation (RAG) systems have significantly advanced AI capabilities, enabling access to vast data stores for more informed respons

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

Top AI Writing Assistants to Boost Your Content Creation

Apr 02, 2025 pm 06:11 PM

The article discusses top AI writing assistants like Grammarly, Jasper, Copy.ai, Writesonic, and Rytr, focusing on their unique features for content creation. It argues that Jasper excels in SEO optimization, while AI tools help maintain tone consist