A deep dive into the concept of dimensionality reduction in machine learning: What is dimensionality reduction?


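Dimensionality reduction is a technique that lowers the cost of training a machine learning model by reducing the number of input variables in its training data. High-dimensional data can contain a very large number of input variables, and the goal of dimensionality reduction is to shrink that number while preserving as much of the variability of the original data as possible. Doing so reduces the computing resources needed for training and can, to some extent, improve the accuracy of the model.

In machine learning, data with fewer input variables (lower dimensionality) can be handled by models with a simpler structure and fewer parameters. This matters especially for neural networks: a simple model trained on lower-dimensional data tends to generalize well, which makes it the more desirable choice.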

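The importance of dimensionality reduction

- Training on high-dimensional data has a very high computational cost. Models trained on high-dimensional data also tend to perform quite well on the training data but poorly at test time.

- Dimensionality reduction removes irrelevant features or variables from the data, which helps the model avoid the curse of dimensionality while retaining the relevant features and improving accuracy.

- Reducing the dimensionality of the data also makes it easier to visualize and saves training time and storage space.

- Dimensionality reduction can make the parameters of a machine learning model easier to interpret by eliminating multicollinearity.

- Dimensionality reduction can be applied to mitigate overfitting.

- Dimensionality reduction can be used for factor analysis.

- Dimensionality reduction can be used for image compression.

- Dimensionality reduction can transform nonlinear data into a linearly separable form.

- Dimensionality reduction can be used to compress neural network architectures.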
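One commonly cited aspect of the curse of dimensionality mentioned in the list above is that distances between random points become nearly indistinguishable as the number of dimensions grows. The following is a minimal sketch of that effect, assuming uniformly random points and a hypothetical helper named `distance_contrast`; it is an illustration, not a formal definition of the curse of dimensionality.

```python
import numpy as np

def distance_contrast(n_dims, n_points=200, seed=0):
    """Relative gap between the farthest and nearest point from a random query.

    A large value means "near" and "far" neighbours are easy to tell apart;
    a value close to zero means all points are almost equally far away.
    """
    rng = np.random.default_rng(seed)
    points = rng.uniform(size=(n_points, n_dims))
    query = rng.uniform(size=n_dims)
    distances = np.linalg.norm(points - query, axis=1)
    return float((distances.max() - distances.min()) / distances.min())

# The contrast shrinks as the dimensionality of the data grows.
for n_dims in (2, 10, 100, 1000):
    print(n_dims, round(distance_contrast(n_dims), 3))
```

When the contrast is small, distance-based reasoning carries less information, which is one reason to reduce the number of input variables before training.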

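Components of dimensionality reduction

Feature selection

This involves identifying a subset of the original features that is smaller yet still sufficient to model the problem.

Types of feature selection

- Recursive feature elimination

In recursive feature elimination (RFE), a separate machine learning algorithm sits at the core of the method: RFE wraps that algorithm and uses it to help select features.

Technically, RFE is a wrapper-type feature selection algorithm that also uses filter-based feature selection internally. It searches for a subset of features by starting with all features in the training dataset and eliminating features until the desired number remains.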
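The elimination loop described above can be sketched in a few lines. This is a minimal illustration, not a library implementation: the wrapped model is assumed to be ordinary least squares, feature importance is assumed to be the absolute coefficient (which only makes sense when features are on comparable scales), and the function name `recursive_feature_elimination` and the synthetic data are made up for the example.

```python
import numpy as np

def recursive_feature_elimination(X, y, n_features_to_keep):
    """Repeatedly fit the wrapped model and drop the weakest remaining feature."""
    remaining = list(range(X.shape[1]))      # start from all features
    while len(remaining) > n_features_to_keep:
        # Wrapped model (an assumption): least squares on the remaining columns.
        coef, *_ = np.linalg.lstsq(X[:, remaining], y, rcond=None)
        weakest = int(np.argmin(np.abs(coef)))   # smallest |coefficient|
        remaining.pop(weakest)
    return remaining

# Synthetic data: only features 0 and 3 influence the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 6))
y = 3.0 * X[:, 0] - 2.0 * X[:, 3] + 0.1 * rng.normal(size=300)

selected = recursive_feature_elimination(X, y, n_features_to_keep=2)
print(selected)
```

Any model that exposes a per-feature importance could take the place of least squares here; the loop itself stays the same.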

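- Genetic feature selection

Genetic algorithms (GA) are inspired by Darwin's theory of natural selection, in which only the fittest individuals are preserved. They imitate the force of natural selection to find the optimal value of a function.

Because variables act in groups, a genetic algorithm treats the selected variables as a whole. The algorithm does not rank variables individually against the target.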
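As a rough sketch of how this can look in practice, the code below evolves a population of boolean feature masks and scores each mask as a whole. Several choices are assumptions made for illustration and do not come from the text: the fitness is the negative validation error of a least-squares fit with a small per-feature penalty, the fitter half of the population survives each generation, and children are built by uniform crossover plus random bit flips. The names `fitness` and `genetic_feature_selection` are hypothetical.

```python
import numpy as np

def fitness(mask, X_train, y_train, X_val, y_val):
    """Score a whole subset of features at once (higher is better)."""
    if not mask.any():
        return -np.inf                      # an empty subset cannot predict anything
    coef, *_ = np.linalg.lstsq(X_train[:, mask], y_train, rcond=None)
    mse = np.mean((X_val[:, mask] @ coef - y_val) ** 2)
    return -(mse + 0.01 * mask.sum())       # assumed small penalty per feature

def genetic_feature_selection(X_train, y_train, X_val, y_val,
                              population_size=20, generations=30,
                              mutation_rate=0.1, seed=0):
    rng = np.random.default_rng(seed)
    n_features = X_train.shape[1]
    population = rng.random((population_size, n_features)) < 0.5

    def score_all(pop):
        return np.array([fitness(m, X_train, y_train, X_val, y_val) for m in pop])

    for _ in range(generations):
        order = np.argsort(score_all(population))[::-1]
        parents = population[order[: population_size // 2]]   # fittest half survives
        children = []
        while len(children) < population_size - len(parents):
            a, b = parents[rng.integers(len(parents), size=2)]
            child = np.where(rng.random(n_features) < 0.5, a, b)   # uniform crossover
            flips = rng.random(n_features) < mutation_rate         # random mutation
            children.append(np.logical_xor(child, flips))
        population = np.vstack([parents, np.array(children)])

    return population[int(np.argmax(score_all(population)))]

# Synthetic data: only features 0 and 3 influence the target.
rng = np.random.default_rng(1)
X = rng.normal(size=(400, 8))
y = 3.0 * X[:, 0] - 2.0 * X[:, 3] + 0.1 * rng.normal(size=400)

best_mask = genetic_feature_selection(X[:300], y[:300], X[300:], y[300:])
print(np.flatnonzero(best_mask))
```

Note that no feature is ever scored on its own: a mask is only ever evaluated as a complete subset, which matches the point made above.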

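- Sequential forward selection

In sequential forward selection, the best single feature is selected first. Pairs are then formed from that feature and each of the remaining features, and the best pair is selected. Next, triplets are formed from the best pair and each of the remaining features. This continues until a predefined number of features has been selected.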
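The greedy procedure above can be sketched as follows. What counts as "best" is not specified in the text, so this example assumes it means the lowest validation error of a least-squares fit; the function names and the synthetic data are likewise made up for illustration.

```python
import numpy as np

def validation_error(X_train, y_train, X_val, y_val, columns):
    """Mean squared validation error of a least-squares fit on the given columns."""
    coef, *_ = np.linalg.lstsq(X_train[:, columns], y_train, rcond=None)
    return float(np.mean((X_val[:, columns] @ coef - y_val) ** 2))

def sequential_forward_selection(X_train, y_train, X_val, y_val, n_features):
    selected = []                                  # grows by one feature per round
    remaining = list(range(X_train.shape[1]))
    while len(selected) < n_features:
        # Try extending the current best set with each remaining feature.
        scores = {
            candidate: validation_error(X_train, y_train, X_val, y_val,
                                        selected + [candidate])
            for candidate in remaining
        }
        best = min(scores, key=scores.get)
        selected.append(best)
        remaining.remove(best)
    return selected

# Synthetic data: features 1, 4 and 2 matter, in decreasing order of strength.
rng = np.random.default_rng(0)
X = rng.normal(size=(600, 6))
y = 5.0 * X[:, 1] - 3.0 * X[:, 4] + 1.5 * X[:, 2] + 0.1 * rng.normal(size=600)

selected = sequential_forward_selection(X[:400], y[:400], X[400:], y[400:],
                                        n_features=3)
print(selected)
```

Because each round keeps the features chosen earlier, the method never revisits a decision, which keeps it cheap but means it may miss combinations that only work well together.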

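Feature extraction

Feature extraction involves reducing the raw dataset into manageable groups for processing. It is most often applied to text and image data: the most important features are extracted and processed instead of the entire dataset.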

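Methods of dimensionality reduction

Principal component analysis (PCA)

This is a linear dimensionality reduction technique that transforms a set of "p" correlated features into a smaller number "k" of uncorrelated features (k < p).

Linear discriminant analysis (LDA)

This technique separates training instances by class. It identifies a linear combination of the input variables that optimizes class separability. It is a supervised machine learning algorithm.

Generalized discriminant analysis (GDA)

This method uses a kernel function operator. It maps the input vectors into a high-dimensional feature space, then looks for a projection of the variables onto a lower-dimensional space by maximizing the ratio of between-class scatter to within-class scatter.

There are other dimensionality reduction methods as well, such as t-distributed stochastic neighbor embedding (t-SNE), factor analysis (FA), truncated singular value decomposition (SVD), multidimensional scaling (MDS), isometric mapping (Isomap), backward elimination, and forward selection.

Is dimensionality reduction reversible?

Dimensionality reduction can be reversed, at least approximately, in autoencoders. These are essentially regular neural networks with a bottleneck layer in the middle. For example, the first layer might have 20 inputs, the middle layer 10 neurons, and the last layer another 20 neurons. Training such a network essentially forces it to compress the information into 10 neurons and then decompress it again, so as to minimize the error at the last layer.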
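To make two of the ideas above concrete, the sketch below runs both on the same synthetic data: PCA computed through a singular value decomposition, turning p = 20 correlated features into k = 10 uncorrelated ones, and a 20-10-20 autoencoder that compresses and then decompresses its input. Everything specific here is an assumption for illustration: the data are generated from 5 hidden factors, the autoencoder is purely linear (no activation functions or biases), and it is trained with plain gradient descent using a hand-picked learning rate and step count.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (an assumption): p = 20 correlated features generated from
# 5 hidden factors plus a little noise, then standardized.
n_samples, p, k = 500, 20, 10
factors = rng.normal(size=(n_samples, 5))
mixing = rng.normal(size=(5, p))
X = factors @ mixing + 0.05 * rng.normal(size=(n_samples, p))
X = (X - X.mean(axis=0)) / X.std(axis=0)

def mean_squared_error(X_hat, X):
    return float(np.mean((X_hat - X) ** 2))

# --- PCA via SVD: p correlated features -> k uncorrelated components ---
_, _, Vt = np.linalg.svd(X, full_matrices=False)
components = Vt[:k]                                  # shape (k, p)
scores = X @ components.T                            # reduced data, shape (n_samples, k)
score_covariance = scores.T @ scores / n_samples     # diagonal up to rounding
pca_error = mean_squared_error(scores @ components, X)

# --- Linear autoencoder 20 -> 10 -> 20, trained by gradient descent ---
W_enc = 0.1 * rng.normal(size=(p, k))                # encoder weights
W_dec = 0.1 * rng.normal(size=(k, p))                # decoder weights
initial_error = mean_squared_error(X @ W_enc @ W_dec, X)

learning_rate = 0.01
for _ in range(2000):
    code = X @ W_enc                                 # compress to the bottleneck
    residual = code @ W_dec - X                      # decompress, compare with input
    # Gradients of the squared reconstruction error (up to a constant factor).
    grad_dec = code.T @ residual / n_samples
    grad_enc = X.T @ (residual @ W_dec.T) / n_samples
    W_dec -= learning_rate * grad_dec
    W_enc -= learning_rate * grad_enc

final_error = mean_squared_error(X @ W_enc @ W_dec, X)
print(f"PCA reconstruction error:          {pca_error:.4f}")
print(f"autoencoder error before training: {initial_error:.4f}")
print(f"autoencoder error after training:  {final_error:.4f}")
```

Both reconstructions are lossy in general, which is why "reversible" is best read as "approximately reversible". For a purely linear bottleneck like this one, the PCA reconstruction error is a lower bound on what the autoencoder can reach; nonlinear autoencoders are not limited in that way.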

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

15 recommended open source free image annotation tools

Mar 28, 2024 pm 01:21 PM

15 recommended open source free image annotation tools

Mar 28, 2024 pm 01:21 PM

Image annotation is the process of associating labels or descriptive information with images to give deeper meaning and explanation to the image content. This process is critical to machine learning, which helps train vision models to more accurately identify individual elements in images. By adding annotations to images, the computer can understand the semantics and context behind the images, thereby improving the ability to understand and analyze the image content. Image annotation has a wide range of applications, covering many fields, such as computer vision, natural language processing, and graph vision models. It has a wide range of applications, such as assisting vehicles in identifying obstacles on the road, and helping in the detection and diagnosis of diseases through medical image recognition. . This article mainly recommends some better open source and free image annotation tools. 1.Makesens

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

In layman’s terms, a machine learning model is a mathematical function that maps input data to a predicted output. More specifically, a machine learning model is a mathematical function that adjusts model parameters by learning from training data to minimize the error between the predicted output and the true label. There are many models in machine learning, such as logistic regression models, decision tree models, support vector machine models, etc. Each model has its applicable data types and problem types. At the same time, there are many commonalities between different models, or there is a hidden path for model evolution. Taking the connectionist perceptron as an example, by increasing the number of hidden layers of the perceptron, we can transform it into a deep neural network. If a kernel function is added to the perceptron, it can be converted into an SVM. this one

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

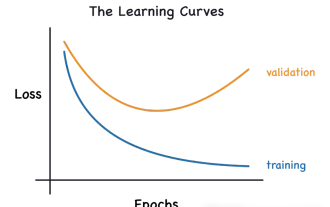

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,