Using a decision tree classifier to select key features in a dataset


The decision tree classifier is a supervised learning algorithm based on a tree structure. It partitions the dataset into decision units, each defined by a set of feature conditions and associated with a predicted output value. In a classification task, the classifier builds a tree model by learning the relationship between features and labels in the training set, and then assigns new samples to the corresponding predicted output. Selecting important features is a crucial part of this process. This article explains how to use a decision tree classifier to select important features from a dataset.

1. The significance of feature selection

Feature selection means choosing the most representative features from the original dataset so that the target variable can be predicted more accurately. In practice, a dataset may contain many redundant or irrelevant features, which interfere with the model's learning process and reduce its generalization ability. Selecting a set of the most representative features can therefore improve model performance and reduce the risk of overfitting.

2. Use the decision tree classifier for feature selection

The decision tree classifier described here uses information gain to evaluate feature importance. The greater the information gain of a feature, the greater its impact on the classification result, so features with larger information gain are chosen for splitting. The steps for feature selection are as follows:

1. Calculate the information gain of each feature

Information gain measures how much splitting on a feature reduces the entropy of the dataset. The smaller the entropy, the higher the purity of a sample set, so a feature whose split produces low-entropy subsets has a large impact on classification. In the decision tree classifier, the information gain of each feature can be calculated using the formula:

$$\operatorname{Gain}(F)=\operatorname{Ent}(S)-\sum_{v\in\operatorname{Values}(F)}\frac{\left|S_{v}\right|}{|S|}\operatorname{Ent}\left(S_{v}\right)$$

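Here, $\operatorname{Ent}(S)$ is the entropy of the dataset $S$, $S_{v}$ is the subset of samples whose value of feature $F$ is $v$, $\left|S_{v}\right|$ is the number of samples in that subset, and $\operatorname{Ent}\left(S_{v}\right)$ is the entropy of that subset. Entropy is defined as $\operatorname{Ent}(S)=-\sum_{k}p_{k}\log_{2}p_{k}$, where $p_{k}$ is the proportion of samples in $S$ that belong to class $k$. The greater the information gain, the greater the impact of the feature on the classification result.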
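To make the formula concrete, here is a minimal sketch in Python using only the standard library. It assumes categorical features stored as dictionaries. The toy data and the names `entropy`, `information_gain`, `rows` and `labels` are illustrative and do not come from any particular library.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def information_gain(rows, labels, feature):
    """Gain(F) = Ent(S) - sum over v of |S_v|/|S| * Ent(S_v)."""
    subsets = {}
    for row, label in zip(rows, labels):
        # Group the labels by the value this row takes for the feature.
        subsets.setdefault(row[feature], []).append(label)
    weighted = sum(len(s) / len(labels) * entropy(s) for s in subsets.values())
    return entropy(labels) - weighted


# Hypothetical toy data: each row maps a feature name to a categorical value.
rows = [
    {"outlook": "sunny", "windy": "no"},
    {"outlook": "sunny", "windy": "yes"},
    {"outlook": "rainy", "windy": "no"},
    {"outlook": "rainy", "windy": "yes"},
]
labels = ["play", "play", "stay", "stay"]

gains = {f: information_gain(rows, labels, f) for f in ("outlook", "windy")}
```

In this toy data, `outlook` separates the two classes perfectly, so its gain equals the full entropy of the label set (1 bit), while `windy` leaves both subsets evenly mixed and has a gain of 0.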

2. Select the feature with the largest information gain

After calculating the information gain of each feature, select the feature with the largest information gain as the split feature for the current node. The dataset is then divided into subsets according to the values of this feature, and the above steps are applied recursively to each subset until a stop condition is met.

3. Stop conditions

The recursive construction of the decision tree must terminate once a stop condition is met. The stop conditions usually include the following cases:

- The sample set is empty or contains samples of only one category; the node becomes a leaf node.

- The information gain of all features is less than a certain threshold; the node becomes a leaf node.

- The depth of the tree reaches the preset maximum value; the node becomes a leaf node.
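The recursive procedure and its stop conditions can be sketched as follows. This is a simplified ID3-style illustration under stated assumptions, not a production implementation: features are categorical, the tree is a nested dictionary, and `build`, `used_features`, `max_depth` and `min_gain` are hypothetical names chosen for this example.

```python
from collections import Counter
from math import log2


def entropy(labels):
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def gain(rows, labels, feature):
    subsets = {}
    for row, label in zip(rows, labels):
        subsets.setdefault(row[feature], []).append(label)
    return entropy(labels) - sum(
        len(s) / len(labels) * entropy(s) for s in subsets.values()
    )


def build(rows, labels, features, depth=0, max_depth=3, min_gain=1e-6, default=None):
    # Stop: empty sample set (defensive; splitting on observed values
    # never produces an empty subset in this sketch).
    if not labels:
        return default
    majority = Counter(labels).most_common(1)[0][0]
    # Stop: only one category, depth limit reached, or no features left.
    if len(set(labels)) == 1 or depth >= max_depth or not features:
        return majority
    gains = {f: gain(rows, labels, f) for f in features}
    best = max(gains, key=gains.get)
    # Stop: even the best feature's gain is below the threshold.
    if gains[best] < min_gain:
        return majority
    node = {"feature": best, "children": {}}
    remaining = [f for f in features if f != best]
    for value in sorted({row[best] for row in rows}):
        idx = [i for i, row in enumerate(rows) if row[best] == value]
        node["children"][value] = build(
            [rows[i] for i in idx], [labels[i] for i in idx],
            remaining, depth + 1, max_depth, min_gain, majority,
        )
    return node


def used_features(tree):
    """Features that appear in at least one split: the 'selected' features."""
    if not isinstance(tree, dict):
        return set()
    found = {tree["feature"]}
    for child in tree["children"].values():
        found |= used_features(child)
    return found


# Hypothetical toy data.
rows = [
    {"outlook": "sunny", "windy": "no"},
    {"outlook": "sunny", "windy": "yes"},
    {"outlook": "rainy", "windy": "no"},
    {"outlook": "rainy", "windy": "yes"},
]
labels = ["play", "play", "stay", "stay"]

tree = build(rows, labels, ["outlook", "windy"])
selected = used_features(tree)
```

Features that never appear in a split, such as `windy` in this toy data, contribute nothing to the tree's decisions and are natural candidates for removal.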

4. Avoid overfitting

To avoid overfitting when building a decision tree, pruning can be used. Pruning removes unnecessary branches from the tree in order to reduce model complexity and improve generalization ability. Commonly used pruning methods include pre-pruning and post-pruning.

Pre-pruning evaluates each node while the decision tree is being generated. If splitting the current node does not improve model performance, the split is abandoned and the node is set as a leaf node. The advantage of pre-pruning is that it is cheap to compute; the disadvantage is that it can easily lead to underfitting.

Post-pruning is applied after the decision tree has been fully generated. Some internal nodes of the tree are replaced with leaf nodes, and the performance of the pruned model is evaluated. If performance does not decrease after pruning, the pruned model is retained. The advantage of post-pruning is that it can reduce overfitting; the disadvantage is its high computational cost.
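One simple way to realize the post-pruning idea above is reduced-error pruning on a held-out validation set. The sketch below is an illustration under stated assumptions: the tree is a hand-built nested dictionary rather than the output of a real training run, each internal node stores a hypothetical `majority` label (the most common training label at that node), and `predict`, `accuracy` and `prune` are names chosen for this example.

```python
def predict(tree, row):
    """Follow branches until a leaf (a plain label) is reached."""
    while isinstance(tree, dict):
        # Unseen feature values fall back to the node's majority label.
        tree = tree["children"].get(row[tree["feature"]], tree["majority"])
    return tree


def accuracy(tree, rows, labels):
    return sum(predict(tree, r) == y for r, y in zip(rows, labels)) / len(labels)


def prune(tree, rows, labels):
    """Bottom-up pruning using the validation samples that reach each node."""
    if not isinstance(tree, dict) or not labels:
        return tree  # leaves, and nodes with no validation data, are left as-is
    for value, child in list(tree["children"].items()):
        idx = [i for i, r in enumerate(rows) if r[tree["feature"]] == value]
        tree["children"][value] = prune(
            child, [rows[i] for i in idx], [labels[i] for i in idx]
        )
    leaf = tree["majority"]
    # Replace the subtree with a leaf if validation accuracy does not drop.
    if accuracy(leaf, rows, labels) >= accuracy(tree, rows, labels):
        return leaf
    return tree


# Hypothetical overgrown tree: the "windy" split under "rainy" fits noise.
tree = {
    "feature": "outlook",
    "majority": "play",
    "children": {
        "sunny": "play",
        "rainy": {
            "feature": "windy",
            "majority": "stay",
            "children": {"yes": "stay", "no": "play"},
        },
    },
}

# Hypothetical validation data.
val_rows = [
    {"outlook": "sunny", "windy": "no"},
    {"outlook": "rainy", "windy": "no"},
    {"outlook": "rainy", "windy": "yes"},
    {"outlook": "sunny", "windy": "yes"},
]
val_labels = ["play", "stay", "stay", "play"]

pruned = prune(tree, val_rows, val_labels)
```

Here the `windy` split is collapsed into a single `stay` leaf because it lowers validation accuracy, while the `outlook` split at the root is kept because removing it would make predictions worse. Each candidate replacement requires re-scoring the validation samples, which reflects the higher computational cost of post-pruning.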


Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

15 recommended open source free image annotation tools

Mar 28, 2024 pm 01:21 PM

15 recommended open source free image annotation tools

Mar 28, 2024 pm 01:21 PM

Image annotation is the process of associating labels or descriptive information with images to give deeper meaning and explanation to the image content. This process is critical to machine learning, which helps train vision models to more accurately identify individual elements in images. By adding annotations to images, the computer can understand the semantics and context behind the images, thereby improving the ability to understand and analyze the image content. Image annotation has a wide range of applications, covering many fields, such as computer vision, natural language processing, and graph vision models. It has a wide range of applications, such as assisting vehicles in identifying obstacles on the road, and helping in the detection and diagnosis of diseases through medical image recognition. . This article mainly recommends some better open source and free image annotation tools. 1.Makesens

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

In layman’s terms, a machine learning model is a mathematical function that maps input data to a predicted output. More specifically, a machine learning model is a mathematical function that adjusts model parameters by learning from training data to minimize the error between the predicted output and the true label. There are many models in machine learning, such as logistic regression models, decision tree models, support vector machine models, etc. Each model has its applicable data types and problem types. At the same time, there are many commonalities between different models, or there is a hidden path for model evolution. Taking the connectionist perceptron as an example, by increasing the number of hidden layers of the perceptron, we can transform it into a deep neural network. If a kernel function is added to the perceptron, it can be converted into an SVM. this one

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

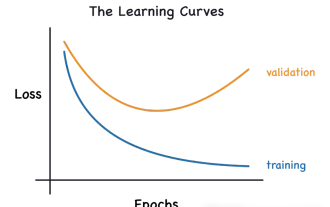

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Outlook on future trends of Golang technology in machine learning

May 08, 2024 am 10:15 AM

Outlook on future trends of Golang technology in machine learning

May 08, 2024 am 10:15 AM

The application potential of Go language in the field of machine learning is huge. Its advantages are: Concurrency: It supports parallel programming and is suitable for computationally intensive operations in machine learning tasks. Efficiency: The garbage collector and language features ensure that the code is efficient, even when processing large data sets. Ease of use: The syntax is concise, making it easy to learn and write machine learning applications.