
The difference between single-stage and dual-stage target detection algorithms

Jan 23, 2024, 01:48 PM

Object detection is an important task in computer vision: it identifies the objects in an image or video and determines their locations. Object detection algorithms are usually divided into two categories, single-stage and two-stage, which differ in accuracy, robustness, and speed.

Single-stage target detection algorithm

A single-stage object detection algorithm turns object detection into a dense classification and bounding-box regression problem. Its advantage is speed: detection is completed in a single step. However, because of this simpler design, its accuracy is usually lower than that of two-stage object detection algorithms.

Common single-stage object detection algorithms include YOLO and SSD. These algorithms take the entire image as input and, in one network pass, predict the category and bounding box of each target object. Unlike two-stage algorithms, they do not generate candidate regions in advance; they predict bounding boxes and categories directly. Because this approach is simple and efficient, single-stage algorithms are more popular in real-time vision applications.
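To make the "one step" idea concrete, here is a minimal Python sketch of how a single-stage detector's output might be decoded. It assumes a simplified, YOLO-like layout that is hypothetical and not the exact format of YOLO or SSD: the network has already produced, in one forward pass, an S x S grid in which each cell predicts one box, an objectness score, and class probabilities. Decoding is a single loop over all cells followed by greedy non-maximum suppression (NMS) to remove duplicate boxes; there is no proposal stage. The names `decode_grid`, `iou`, and `nms` are illustrative.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels
Detection = Tuple[Box, float, int]       # (box, score, class index)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(dets: List[Detection], iou_thresh: float) -> List[Detection]:
    """Greedy per-class NMS: keep the best box, drop same-class boxes overlapping it."""
    kept: List[Detection] = []
    for d in sorted(dets, key=lambda x: x[1], reverse=True):
        if all(k[2] != d[2] or iou(k[0], d[0]) < iou_thresh for k in kept):
            kept.append(d)
    return kept


def decode_grid(grid, img_size: float, score_thresh: float = 0.5,
                iou_thresh: float = 0.5) -> List[Detection]:
    """Decode a hypothetical S x S grid of per-cell predictions in a single pass.

    grid[row][col] = (tx, ty, w, h, objectness, class_probs), where tx, ty are the
    box centre's offset inside the cell (0..1) and w, h are fractions of the image.
    """
    s = len(grid)
    cell = img_size / s
    dets: List[Detection] = []
    for row in range(s):
        for col in range(s):
            tx, ty, w, h, obj, probs = grid[row][col]
            label = max(range(len(probs)), key=lambda c: probs[c])
            score = obj * probs[label]  # class-specific confidence
            if score < score_thresh:
                continue  # most cells see only background
            cx, cy = (col + tx) * cell, (row + ty) * cell
            bw, bh = w * img_size, h * img_size
            dets.append(((cx - bw / 2, cy - bh / 2, cx + bw / 2, cy + bh / 2), score, label))
    return nms(dets, iou_thresh)


# Toy 2 x 2 grid for a 100 x 100 image: two neighbouring cells fire on one object.
empty = (0.5, 0.5, 0.1, 0.1, 0.0, [0.5, 0.5])
grid = [
    [(0.9, 0.5, 0.4, 0.4, 0.9, [0.8, 0.2]), (0.0, 0.5, 0.4, 0.4, 0.7, [0.9, 0.1])],
    [empty, empty],
]
print(decode_grid(grid, img_size=100))
```

In the toy grid, both top cells predict a box around the same object; NMS keeps the higher-scoring box and suppresses the overlapping one, so a single detection remains.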

Two-stage target detection algorithm

A two-stage object detection algorithm consists of two steps: it first generates candidate regions and then runs a classifier on these regions. This approach is more accurate than the single-stage approach, but slower.

Representative two-stage object detection algorithms include R-CNN, Fast R-CNN, Faster R-CNN, and Mask R-CNN. These algorithms first generate a set of candidate regions (R-CNN and Fast R-CNN rely on an external method such as selective search, while Faster R-CNN and Mask R-CNN use a learned region proposal network), and then use convolutional neural network features to classify each candidate region and refine its bounding box. This approach is more accurate than the single-stage approach, but requires more computing resources and time.
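The propose-then-classify structure can be sketched as a generic loop. This is a hypothetical interface, not the API of any R-CNN implementation: `propose` stands in for stage one (for example selective search or a region proposal network) and `classify_region` for the second-stage network. Real Fast R-CNN and Faster R-CNN implementations compute convolutional features once for the whole image and pool them per region instead of re-running a network on image crops; the sketch hides that detail inside `classify_region`. The defaults `top_k=300` and `score_thresh=0.5` are assumptions.

```python
from typing import Callable, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def two_stage_detect(
    image: object,
    propose: Callable[[object], List[Tuple[Box, float]]],
    classify_region: Callable[[object, Box], Tuple[int, float]],
    top_k: int = 300,
    score_thresh: float = 0.5,
    background: int = 0,
) -> List[Tuple[Box, int, float]]:
    """Generic propose-then-classify loop (hypothetical interface)."""
    # Stage 1: rank candidate regions by objectness and keep only the top_k,
    # which discards most background before the more expensive second stage.
    proposals = sorted(propose(image), key=lambda p: p[1], reverse=True)[:top_k]

    # Stage 2: classify each surviving region; drop background and low scores.
    detections = []
    for box, _objectness in proposals:
        label, score = classify_region(image, box)
        if label != background and score >= score_thresh:
            detections.append((box, label, score))
    return detections


# Toy stand-ins for a proposal method and a region classifier (hypothetical).
def toy_propose(image):
    return [((0, 0, 10, 10), 0.9), ((5, 5, 15, 15), 0.4), ((20, 20, 30, 30), 0.8)]


def toy_classify(image, box):
    # Pretend boxes starting left of x=10 are class 1; everything else is background (0).
    return (1, 0.95) if box[0] < 10 else (0, 0.99)


print(two_stage_detect(None, toy_propose, toy_classify, top_k=2))
# [((0, 0, 10, 10), 1, 0.95)]
```

With `top_k=2`, the low-objectness proposal `(5, 5, 15, 15)` is discarded in stage one and never reaches the classifier, and the second stage rejects `(20, 20, 30, 30)` as background. The classifier runs once per surviving proposal, which is where the extra time of two-stage detectors comes from.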

The difference between single-stage and two-stage target detection algorithms

Let’s compare single-stage and two-stage object detection algorithms in detail:

1. Accuracy and Robustness

Single-stage object detection algorithms usually run faster and use less memory, but their accuracy is usually slightly lower than that of two-stage algorithms. Because single-stage algorithms predict bounding boxes directly from the input image or video frame, they can struggle to localize objects with complex shapes or partial occlusions precisely. In addition, they lack the candidate-region extraction step of two-stage detection, which filters out most background before classification, so single-stage algorithms can be more affected by background noise and by the diversity of object appearance.

Two-stage object detection algorithms perform better in terms of accuracy, especially for objects that are partially occluded, have complex shapes, or vary in size. Because the first stage discards most background regions and the second stage classifies and refines only the remaining candidates, two-stage algorithms can better filter out background noise and improve prediction accuracy.

2. Speed

Single-stage object detection algorithms are generally faster than two-stage object detection algorithms, because a single-stage algorithm produces all of its predictions in one step, while a two-stage algorithm must first generate candidate regions and then process each of them. In real-time vision applications such as autonomous driving, speed is a very important factor.

3. Adaptability to different scales and rotations

Two-stage object detection algorithms usually adapt better to objects of different scales and rotations. This is because the first stage generates candidate regions that can cover the target object at various scales and orientations, and the second stage then performs classification and bounding-box adjustment on these regions. This enables two-stage algorithms to adapt better to a variety of scenarios and tasks.
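Candidate regions of different sizes are commonly generated from anchor boxes at several scales and aspect ratios, and the second stage then adjusts each box with predicted offsets. The sketch below assumes the bounding-box regression parameterization introduced with R-CNN (centre shifts relative to the box size, width and height changes in log space); the function names, scales, and ratios are illustrative. These anchors are axis-aligned, so the sketch covers scale and aspect ratio only; it does not model rotation, which would need extra parameters such as an angle.

```python
import math
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def make_anchors(cx: float, cy: float, scales: List[float],
                 ratios: List[float]) -> List[Box]:
    """Axis-aligned candidate boxes of several sizes and shapes centred on (cx, cy).

    Each ratio is height / width, and every anchor keeps an area of scale ** 2.
    """
    anchors: List[Box] = []
    for s in scales:
        for r in ratios:
            w, h = s / math.sqrt(r), s * math.sqrt(r)
            anchors.append((cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2))
    return anchors


def apply_deltas(box: Box, dx: float, dy: float, dw: float, dh: float) -> Box:
    """Adjust a candidate box with R-CNN-style regression offsets.

    dx, dy shift the centre by a fraction of the box's width/height;
    dw, dh scale the width/height in log space.
    """
    pw, ph = box[2] - box[0], box[3] - box[1]
    px, py = box[0] + pw / 2, box[1] + ph / 2
    x, y = px + pw * dx, py + ph * dy
    w, h = pw * math.exp(dw), ph * math.exp(dh)
    return (x - w / 2, y - h / 2, x + w / 2, y + h / 2)


# 2 scales x 3 aspect ratios = 6 candidate shapes at one image location.
for a in make_anchors(50, 50, scales=[32, 64], ratios=[0.5, 1.0, 2.0]):
    print([round(v, 1) for v in a])

# Second stage: move a 10 x 10 proposal right by 10% of its width and double its height.
print(apply_deltas((0.0, 0.0, 10.0, 10.0), dx=0.1, dy=0.0, dw=0.0, dh=math.log(2)))
```

Predicting offsets relative to each candidate, rather than absolute coordinates, lets one regressor handle small and large regions in the same way.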

4. Computing resource consumption

Two-stage object detection algorithms usually require more computing resources to run. This is because they process each image in two steps, and the second step is repeated for every candidate region. In contrast, single-stage algorithms handle the object detection task in a single step and therefore typically require fewer computational resources.
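A back-of-the-envelope cost model shows why the second stage adds computation: its per-region head runs once for every candidate region, so total work grows with the number of proposals. All costs below are hypothetical, in arbitrary units rather than measurements of any real detector, and the model assumes the backbone features are shared between the two stages, as in Faster R-CNN.

```python
def single_stage_cost(backbone: float, dense_head: float) -> float:
    """One forward pass: shared features plus one dense prediction head."""
    return backbone + dense_head


def two_stage_cost(backbone: float, proposal_head: float,
                   region_head: float, num_regions: int) -> float:
    """Shared features, a proposal stage, then a region head run once per candidate."""
    return backbone + proposal_head + num_regions * region_head


# Hypothetical costs in arbitrary units; not measurements of any real detector.
print("single-stage:", single_stage_cost(backbone=10.0, dense_head=2.0))
for n in (100, 300, 1000):
    print(f"two-stage, {n} regions:", two_stage_cost(10.0, 1.0, 0.25, n))
```

Under these made-up numbers the single-stage pass costs 12 units, while the two-stage pipeline costs 36, 86, and 261 units for 100, 300, and 1,000 regions. The point is the linear growth with the number of regions, not the specific values.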

In short, single-stage and two-stage object detection algorithms each have their own advantages and disadvantages, and the choice depends on the specific application scenario and its requirements. In scenarios where detection accuracy is the priority, a two-stage algorithm is usually selected. In scenarios that require high speed for real-time processing, such as face recognition, a single-stage algorithm can be selected.

The above is the detailed content of The difference between single-stage and dual-stage target detection algorithms. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1389

1389

52

52

The difference between single-stage and dual-stage target detection algorithms

Jan 23, 2024 pm 01:48 PM

The difference between single-stage and dual-stage target detection algorithms

Jan 23, 2024 pm 01:48 PM

Object detection is an important task in the field of computer vision, used to identify objects in images or videos and locate their locations. This task is usually divided into two categories of algorithms, single-stage and two-stage, which differ in terms of accuracy and robustness. Single-stage target detection algorithm The single-stage target detection algorithm converts target detection into a classification problem. Its advantage is that it is fast and can complete the detection in just one step. However, due to oversimplification, the accuracy is usually not as good as the two-stage object detection algorithm. Common single-stage target detection algorithms include YOLO, SSD and FasterR-CNN. These algorithms generally take the entire image as input and run a classifier to identify the target object. Unlike traditional two-stage target detection algorithms, they do not need to define areas in advance, but directly predict

Application of AI technology in image super-resolution reconstruction

Jan 23, 2024 am 08:06 AM

Application of AI technology in image super-resolution reconstruction

Jan 23, 2024 am 08:06 AM

Super-resolution image reconstruction is the process of generating high-resolution images from low-resolution images using deep learning techniques, such as convolutional neural networks (CNN) and generative adversarial networks (GAN). The goal of this method is to improve the quality and detail of images by converting low-resolution images into high-resolution images. This technology has wide applications in many fields, such as medical imaging, surveillance cameras, satellite images, etc. Through super-resolution image reconstruction, we can obtain clearer and more detailed images, which helps to more accurately analyze and identify targets and features in images. Reconstruction methods Super-resolution image reconstruction methods can generally be divided into two categories: interpolation-based methods and deep learning-based methods. 1) Interpolation-based method Super-resolution image reconstruction based on interpolation

How to use AI technology to restore old photos (with examples and code analysis)

Jan 24, 2024 pm 09:57 PM

How to use AI technology to restore old photos (with examples and code analysis)

Jan 24, 2024 pm 09:57 PM

Old photo restoration is a method of using artificial intelligence technology to repair, enhance and improve old photos. Using computer vision and machine learning algorithms, the technology can automatically identify and repair damage and flaws in old photos, making them look clearer, more natural and more realistic. The technical principles of old photo restoration mainly include the following aspects: 1. Image denoising and enhancement. When restoring old photos, they need to be denoised and enhanced first. Image processing algorithms and filters, such as mean filtering, Gaussian filtering, bilateral filtering, etc., can be used to solve noise and color spots problems, thereby improving the quality of photos. 2. Image restoration and repair In old photos, there may be some defects and damage, such as scratches, cracks, fading, etc. These problems can be solved by image restoration and repair algorithms

Scale Invariant Features (SIFT) algorithm

Jan 22, 2024 pm 05:09 PM

Scale Invariant Features (SIFT) algorithm

Jan 22, 2024 pm 05:09 PM

The Scale Invariant Feature Transform (SIFT) algorithm is a feature extraction algorithm used in the fields of image processing and computer vision. This algorithm was proposed in 1999 to improve object recognition and matching performance in computer vision systems. The SIFT algorithm is robust and accurate and is widely used in image recognition, three-dimensional reconstruction, target detection, video tracking and other fields. It achieves scale invariance by detecting key points in multiple scale spaces and extracting local feature descriptors around the key points. The main steps of the SIFT algorithm include scale space construction, key point detection, key point positioning, direction assignment and feature descriptor generation. Through these steps, the SIFT algorithm can extract robust and unique features, thereby achieving efficient image processing.

An introduction to image annotation methods and common application scenarios

Jan 22, 2024 pm 07:57 PM

An introduction to image annotation methods and common application scenarios

Jan 22, 2024 pm 07:57 PM

In the fields of machine learning and computer vision, image annotation is the process of applying human annotations to image data sets. Image annotation methods can be mainly divided into two categories: manual annotation and automatic annotation. Manual annotation means that human annotators annotate images through manual operations. This method requires human annotators to have professional knowledge and experience and be able to accurately identify and annotate target objects, scenes, or features in images. The advantage of manual annotation is that the annotation results are reliable and accurate, but the disadvantage is that it is time-consuming and costly. Automatic annotation refers to the method of using computer programs to automatically annotate images. This method uses machine learning and computer vision technology to achieve automatic annotation by training models. The advantages of automatic labeling are fast speed and low cost, but the disadvantage is that the labeling results may not be accurate.

Interpretation of the concept of target tracking in computer vision

Jan 24, 2024 pm 03:18 PM

Interpretation of the concept of target tracking in computer vision

Jan 24, 2024 pm 03:18 PM

Object tracking is an important task in computer vision and is widely used in traffic monitoring, robotics, medical imaging, automatic vehicle tracking and other fields. It uses deep learning methods to predict or estimate the position of the target object in each consecutive frame in the video after determining the initial position of the target object. Object tracking has a wide range of applications in real life and is of great significance in the field of computer vision. Object tracking usually involves the process of object detection. The following is a brief overview of the object tracking steps: 1. Object detection, where the algorithm classifies and detects objects by creating bounding boxes around them. 2. Assign a unique identification (ID) to each object. 3. Track the movement of detected objects in frames while storing relevant information. Types of Target Tracking Targets

Examples of practical applications of the combination of shallow features and deep features

Jan 22, 2024 pm 05:00 PM

Examples of practical applications of the combination of shallow features and deep features

Jan 22, 2024 pm 05:00 PM

Deep learning has achieved great success in the field of computer vision, and one of the important advances is the use of deep convolutional neural networks (CNN) for image classification. However, deep CNNs usually require large amounts of labeled data and computing resources. In order to reduce the demand for computational resources and labeled data, researchers began to study how to fuse shallow features and deep features to improve image classification performance. This fusion method can take advantage of the high computational efficiency of shallow features and the strong representation ability of deep features. By combining the two, computational costs and data labeling requirements can be reduced while maintaining high classification accuracy. This method is particularly important for application scenarios where the amount of data is small or computing resources are limited. By in-depth study of the fusion methods of shallow features and deep features, we can further

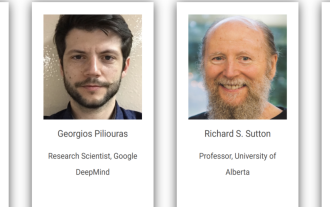

Distributed Artificial Intelligence Conference DAI 2024 Call for Papers: Agent Day, Richard Sutton, the father of reinforcement learning, will attend! Yan Shuicheng, Sergey Levine and DeepMind scientists will give keynote speeches

Aug 22, 2024 pm 08:02 PM

Distributed Artificial Intelligence Conference DAI 2024 Call for Papers: Agent Day, Richard Sutton, the father of reinforcement learning, will attend! Yan Shuicheng, Sergey Levine and DeepMind scientists will give keynote speeches

Aug 22, 2024 pm 08:02 PM

Conference Introduction With the rapid development of science and technology, artificial intelligence has become an important force in promoting social progress. In this era, we are fortunate to witness and participate in the innovation and application of Distributed Artificial Intelligence (DAI). Distributed artificial intelligence is an important branch of the field of artificial intelligence, which has attracted more and more attention in recent years. Agents based on large language models (LLM) have suddenly emerged. By combining the powerful language understanding and generation capabilities of large models, they have shown great potential in natural language interaction, knowledge reasoning, task planning, etc. AIAgent is taking over the big language model and has become a hot topic in the current AI circle. Au