Why a single-layer neural network cannot solve the XOR problem


Neural networks are an important class of machine learning models and perform well on many tasks. Some tasks, however, are beyond the reach of a single-layer neural network, and the XOR problem is the classic example. In the XOR problem, the input is two binary values and the output is 1 if and only if the two inputs differ. This article explains why a single-layer neural network cannot solve the XOR problem from three angles: the structure of the single-layer network, the nature of the XOR problem itself, and the training process.

First, the structure of a single-layer neural network rules out a solution. A single-layer network consists of an input layer, an output layer, and an activation function, with no other layers in between. As a result, it can only perform linear classification, that is, it can only separate data points into two classes with a straight line (a hyperplane in higher dimensions). The XOR problem is a nonlinear classification problem, because its data points cannot be perfectly divided by a straight line, so a single-layer network cannot solve it.

Solving the XOR problem requires a multi-layer neural network, meaning a network with at least one hidden layer; networks with many hidden layers are called deep neural networks. Each hidden layer can learn and extract different features. With nonlinear activation functions, the hidden layers let the network learn more complex feature combinations and approximate the decision boundary of the XOR problem through successive nonlinear transformations. This is how multi-layer networks handle nonlinear classification problems, including XOR.

Second, the nature of the XOR problem itself is an important cause of the difficulty. Take the representation of the data points on a plane as an example, where blue points represent inputs with an output of 0 and red points represent inputs with an output of 1. The blue points, (0, 0) and (1, 1), lie on one diagonal of the unit square and the red points, (0, 1) and (1, 0), lie on the other. No straight line puts both red points on one side and both blue points on the other, so the data cannot be classified by a single-layer neural network.
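The claim that no straight line separates the XOR points can be checked with a short derivation. The notation here is an assumption introduced for illustration: a single unit with weights $w_1, w_2$ and bias $b$ that outputs 1 exactly when $w_1 x_1 + w_2 x_2 + b > 0$. Classifying all four points correctly would require:

$$
\begin{aligned}
(0,0) \mapsto 0 &: \quad b \le 0 \\
(1,0) \mapsto 1 &: \quad w_1 + b > 0 \\
(0,1) \mapsto 1 &: \quad w_2 + b > 0 \\
(1,1) \mapsto 0 &: \quad w_1 + w_2 + b \le 0
\end{aligned}
$$

Adding the second and third conditions gives $w_1 + w_2 + 2b > 0$, so $w_1 + w_2 + b > -b \ge 0$ by the first condition. This contradicts the fourth condition, so no choice of $w_1, w_2, b$ satisfies all four at once.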

Finally, the training process cannot compensate for this limitation. Neural networks are usually trained with the backpropagation algorithm, which supplies the gradients for a gradient descent optimizer. For a single-layer network, the obstacle is not that gradient descent gets trapped in a poor local optimum. With a sigmoid output and cross-entropy loss, for example, the loss function is convex in the weights, so gradient descent does reach the global optimum. That optimum still fails: because no linear decision boundary classifies all four XOR points correctly, even the best possible weights misclassify at least one point. On the XOR data, the optimum for this setup is all-zero weights and bias, which gives an output of 0.5 for every input.

In summary, there are three reasons why a single-layer neural network cannot solve the XOR problem. First, the structure of a single-layer network means it can only perform linear classification. Second, the XOR data is not linearly separable, which means the two classes cannot be completely separated by a straight line, so no linear transformation of the inputs classifies them correctly. Third, training only searches among the linear decision boundaries the model can represent, so no optimization algorithm, however good, can find weights that solve the problem.

Therefore, solving the XOR problem requires a multi-layer neural network or another model capable of nonlinear classification. A network with a hidden layer and nonlinear activation functions can represent the XOR decision boundary. The loss function of such a network is generally non-convex, so gradient descent is not guaranteed to find the global optimum, but for a problem as small as XOR it typically finds a working solution in practice.
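The contrast between the two kinds of network can be sketched in a few lines of Python with NumPy. This is an illustrative sketch, not a reference implementation: the function names `train_single_layer` and `two_layer_xor`, the learning rate, the step count, and the hand-picked hidden-layer weights (one hidden unit acting as OR, the other as AND, with step activations) are all assumptions chosen for the example.

```python
import numpy as np

# The four XOR inputs and their targets.
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y = np.array([0, 1, 1, 0], dtype=float)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_single_layer(X, y, lr=0.5, steps=5000, seed=0):
    """Fit one sigmoid unit with batch gradient descent on cross-entropy loss."""
    rng = np.random.default_rng(seed)
    w = rng.normal(size=X.shape[1])
    b = 0.0
    for _ in range(steps):
        p = sigmoid(X @ w + b)
        error = p - y  # gradient of the loss w.r.t. the pre-activation
        w -= lr * (X.T @ error) / len(y)
        b -= lr * error.mean()
    return w, b


def two_layer_xor(X):
    """Two-layer network with hand-picked (not learned) weights and step activations."""
    step = lambda z: (z > 0).astype(float)
    W1 = np.array([[1.0, 1.0], [1.0, 1.0]])  # columns: hidden unit 1, hidden unit 2
    b1 = np.array([-0.5, -1.5])              # unit 1 fires for OR, unit 2 for AND
    h = step(X @ W1 + b1)
    w2 = np.array([1.0, -1.0])               # output fires for "OR and not AND"
    b2 = -0.5
    return step(h @ w2 + b2)


w, b = train_single_layer(X, y)
single_probs = sigmoid(X @ w + b)
single_preds = (single_probs > 0.5).astype(float)

print("single-layer probabilities:", np.round(single_probs, 3))
print("single-layer accuracy:", (single_preds == y).mean())
print("two-layer predictions:", two_layer_xor(X))
```

Under these assumptions, the single-layer unit's outputs settle near 0.5 for all four inputs and its accuracy can never exceed 3 out of 4, while the two-layer network reproduces the XOR targets exactly. The hidden layer works because it first maps the inputs to the features OR and AND, and in that feature space the two classes are linearly separable.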

The above is the detailed content of Single layer neural network cannot solve the root cause of the XOR problem. For more information, please follow other related articles on the PHP Chinese website!

Explore the concepts, differences, advantages and disadvantages of RNN, LSTM and GRU

Jan 22, 2024 pm 07:51 PM

Explore the concepts, differences, advantages and disadvantages of RNN, LSTM and GRU

Jan 22, 2024 pm 07:51 PM

In time series data, there are dependencies between observations, so they are not independent of each other. However, traditional neural networks treat each observation as independent, which limits the model's ability to model time series data. To solve this problem, Recurrent Neural Network (RNN) was introduced, which introduced the concept of memory to capture the dynamic characteristics of time series data by establishing dependencies between data points in the network. Through recurrent connections, RNN can pass previous information into the current observation to better predict future values. This makes RNN a powerful tool for tasks involving time series data. But how does RNN achieve this kind of memory? RNN realizes memory through the feedback loop in the neural network. This is the difference between RNN and traditional neural network.

A case study of using bidirectional LSTM model for text classification

Jan 24, 2024 am 10:36 AM

A case study of using bidirectional LSTM model for text classification

Jan 24, 2024 am 10:36 AM

The bidirectional LSTM model is a neural network used for text classification. Below is a simple example demonstrating how to use bidirectional LSTM for text classification tasks. First, we need to import the required libraries and modules: importosimportnumpyasnpfromkeras.preprocessing.textimportTokenizerfromkeras.preprocessing.sequenceimportpad_sequencesfromkeras.modelsimportSequentialfromkeras.layersimportDense,Em

Calculating floating point operands (FLOPS) for neural networks

Jan 22, 2024 pm 07:21 PM

Calculating floating point operands (FLOPS) for neural networks

Jan 22, 2024 pm 07:21 PM

FLOPS is one of the standards for computer performance evaluation, used to measure the number of floating point operations per second. In neural networks, FLOPS is often used to evaluate the computational complexity of the model and the utilization of computing resources. It is an important indicator used to measure the computing power and efficiency of a computer. A neural network is a complex model composed of multiple layers of neurons used for tasks such as data classification, regression, and clustering. Training and inference of neural networks requires a large number of matrix multiplications, convolutions and other calculation operations, so the computational complexity is very high. FLOPS (FloatingPointOperationsperSecond) can be used to measure the computational complexity of neural networks to evaluate the computational resource usage efficiency of the model. FLOP

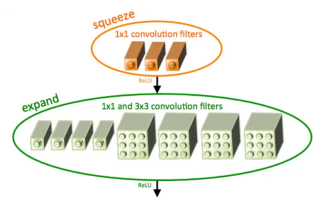

Introduction to SqueezeNet and its characteristics

Jan 22, 2024 pm 07:15 PM

Introduction to SqueezeNet and its characteristics

Jan 22, 2024 pm 07:15 PM

SqueezeNet is a small and precise algorithm that strikes a good balance between high accuracy and low complexity, making it ideal for mobile and embedded systems with limited resources. In 2016, researchers from DeepScale, University of California, Berkeley, and Stanford University proposed SqueezeNet, a compact and efficient convolutional neural network (CNN). In recent years, researchers have made several improvements to SqueezeNet, including SqueezeNetv1.1 and SqueezeNetv2.0. Improvements in both versions not only increase accuracy but also reduce computational costs. Accuracy of SqueezeNetv1.1 on ImageNet dataset

Definition and structural analysis of fuzzy neural network

Jan 22, 2024 pm 09:09 PM

Definition and structural analysis of fuzzy neural network

Jan 22, 2024 pm 09:09 PM

Fuzzy neural network is a hybrid model that combines fuzzy logic and neural networks to solve fuzzy or uncertain problems that are difficult to handle with traditional neural networks. Its design is inspired by the fuzziness and uncertainty in human cognition, so it is widely used in control systems, pattern recognition, data mining and other fields. The basic architecture of fuzzy neural network consists of fuzzy subsystem and neural subsystem. The fuzzy subsystem uses fuzzy logic to process input data and convert it into fuzzy sets to express the fuzziness and uncertainty of the input data. The neural subsystem uses neural networks to process fuzzy sets for tasks such as classification, regression or clustering. The interaction between the fuzzy subsystem and the neural subsystem makes the fuzzy neural network have more powerful processing capabilities and can

Image denoising using convolutional neural networks

Jan 23, 2024 pm 11:48 PM

Image denoising using convolutional neural networks

Jan 23, 2024 pm 11:48 PM

Convolutional neural networks perform well in image denoising tasks. It utilizes the learned filters to filter the noise and thereby restore the original image. This article introduces in detail the image denoising method based on convolutional neural network. 1. Overview of Convolutional Neural Network Convolutional neural network is a deep learning algorithm that uses a combination of multiple convolutional layers, pooling layers and fully connected layers to learn and classify image features. In the convolutional layer, the local features of the image are extracted through convolution operations, thereby capturing the spatial correlation in the image. The pooling layer reduces the amount of calculation by reducing the feature dimension and retains the main features. The fully connected layer is responsible for mapping learned features and labels to implement image classification or other tasks. The design of this network structure makes convolutional neural networks useful in image processing and recognition.

Compare the similarities, differences and relationships between dilated convolution and atrous convolution

Jan 22, 2024 pm 10:27 PM

Compare the similarities, differences and relationships between dilated convolution and atrous convolution

Jan 22, 2024 pm 10:27 PM

Dilated convolution and dilated convolution are commonly used operations in convolutional neural networks. This article will introduce their differences and relationships in detail. 1. Dilated convolution Dilated convolution, also known as dilated convolution or dilated convolution, is an operation in a convolutional neural network. It is an extension based on the traditional convolution operation and increases the receptive field of the convolution kernel by inserting holes in the convolution kernel. This way, the network can better capture a wider range of features. Dilated convolution is widely used in the field of image processing and can improve the performance of the network without increasing the number of parameters and the amount of calculation. By expanding the receptive field of the convolution kernel, dilated convolution can better process the global information in the image, thereby improving the effect of feature extraction. The main idea of dilated convolution is to introduce some

Steps to write a simple neural network using Rust

Jan 23, 2024 am 10:45 AM

Steps to write a simple neural network using Rust

Jan 23, 2024 am 10:45 AM

Rust is a systems-level programming language focused on safety, performance, and concurrency. It aims to provide a safe and reliable programming language suitable for scenarios such as operating systems, network applications, and embedded systems. Rust's security comes primarily from two aspects: the ownership system and the borrow checker. The ownership system enables the compiler to check code for memory errors at compile time, thus avoiding common memory safety issues. By forcing checking of variable ownership transfers at compile time, Rust ensures that memory resources are properly managed and released. The borrow checker analyzes the life cycle of the variable to ensure that the same variable will not be accessed by multiple threads at the same time, thereby avoiding common concurrency security issues. By combining these two mechanisms, Rust is able to provide