Technology peripherals

Technology peripherals

AI

AI

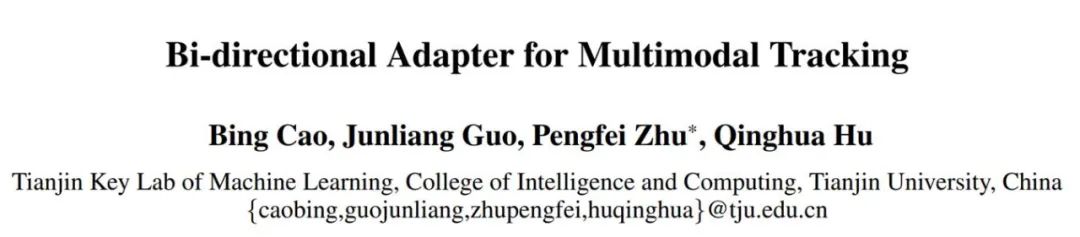

BAT method: AAAI 2024's first multi-modal target tracking universal bidirectional adapter

BAT method: AAAI 2024's first multi-modal target tracking universal bidirectional adapter

BAT method: AAAI 2024's first multi-modal target tracking universal bidirectional adapter

Object tracking is one of the basic tasks of computer vision. In recent years, single-modality (RGB) object tracking has made significant progress. However, due to the limitations of a single imaging sensor, we need to introduce multi-modal images (such as RGB, infrared, etc.) to make up for this shortcoming to achieve all-weather target tracking in complex environments. The application of such multi-modal images can provide more comprehensive information and enhance the accuracy and robustness of target detection and tracking. The development of multimodal target tracking is of great significance for realizing higher-level computer vision applications.

However, existing multi-modal tracking tasks also face two main problems:

- Due to multi-modal target tracking The cost of data annotation is high, and most existing data sets are limited in size and insufficient to support the construction of effective multi-modal trackers;

- Because different imaging methods have different effects on Objects have different sensitivities, the dominant mode in the open world changes dynamically, and the dominant correlation between multi-modal data is not fixed.

Many multi-modal tracking efforts that pre-train on RGB sequences and then fully fine-tune to multi-modal scenes have time and efficiency issues, as well as limited performance.

In addition to the complete fine-tuning method, it is also inspired by the efficient fine-tuning method of parameters in the field of natural language processing (NLP). Some recent methods have introduced parameter-efficient prompt fine-tuning in multi-modal tracking. These methods do this by freezing the backbone network parameters and adding an additional set of learnable parameters.

Typically, these methods focus on one modality (usually RGB) as the primary modality and the other modality as the auxiliary modality. However, this method ignores the dynamic correlation between multi-modal data and therefore cannot fully utilize the complementary effects of multi-modal information in complex scenes, thus limiting the tracking performance.

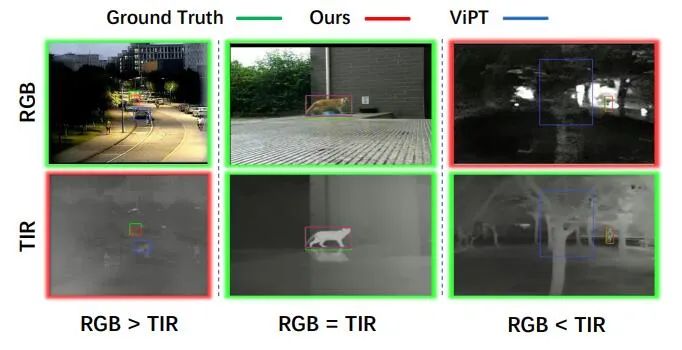

Figure 1: Different dominant modes in complex scenarios.

To solve the above problems, researchers from Tianjin University proposed a solution called Bidirectional Adapter for Multimodal Tracking (BAT). Different from traditional methods, the BAT method does not rely on fixed dominant mode and auxiliary mode, but obtains better performance in the change of auxiliary mode to dominant mode through the process of dynamically extracting effective information. The innovation of this method is that it can adapt to different data characteristics and task requirements, thereby improving the representation ability of the basic model in downstream tasks. By using the BAT method, researchers hope to provide a more flexible and efficient multi-modal tracking solution, bringing better results to research and applications in related fields.

BAT consists of two base model encoders with shared parameters specific to the modal branches and a general bidirectional adapter. During the training process, BAT did not fully fine-tune the basic model, but adopted a step-by-step training method. Each specific modality branch is initialized by using the base model with fixed parameters, and only the newly added bidirectional adapters are trained. Each modal branch learns cue information from other modalities and combines it with the feature information of the current modality to enhance representation capabilities. Two modality-specific branches interact through a universal bidirectional adapter to dynamically fuse dominant and auxiliary information with each other to adapt to the paradigm of multi-modal non-fixed association. This design enables BAT to fine-tune the content without changing the meaning of the original content, improving the model's representation ability and adaptability.

The universal bidirectional adapter adopts a lightweight hourglass structure and can be embedded into each layer of the transformer encoder of the basic model to avoid introducing a large number of learnable parameters. By adding only a small number of training parameters (0.32M), the universal bidirectional adapter has lower training cost and achieves better tracking performance compared with fully fine-tuned methods and cue learning-based methods.

The paper "Bi-directional Adapter for Multi-modal Tracking":

Paper link: https ://arxiv.org/abs/2312.10611

Code link: https://github.com/SparkTempest/BAT

Main Contributions

- We first propose an adapter-based multi-modal tracking visual cue framework. Our model is able to perceive the dynamic changes of dominant modalities in open scenes and effectively fuse multi-modal information in an adaptive manner.

- To the best of our knowledge, we propose a universal bidirectional adapter for the base model for the first time. It has a simple and efficient structure and can effectively realize multi-modal cross-cue tracking. By adding only 0.32M learnable parameters, our model is robust to multi-modal tracking in open scenarios.

- We conducted an in-depth analysis of the impact of our universal adapter at different levels. We also explore a more efficient adapter architecture in experiments and verify our advantages on multiple RGBT tracking related datasets.

Core method

As shown in Figure 2, we propose a multi-modal tracking visual cue framework based on a bidirectional Adapter (BAT), the framework has a dual-stream encoder structure with RGB modality and thermal infrared modality, and each stream uses the same basic model parameters. The bidirectional Adapter is set up in parallel with the dual-stream encoder layer to cross-cue multimodal data from the two modalities.

The method does not completely fine-tune the basic model. It only efficiently transfers the pre-trained RGB tracker to multi-modal scenes by learning a lightweight bidirectional Adapter. It achieves excellent multi-modal complementarity and excellent tracking accuracy.

Figure 2: Overall architecture of BAT.

First, the  template frame of each modality (the initial frame of the target object in the first frame

template frame of each modality (the initial frame of the target object in the first frame ) and

) and  search frames (subsequent tracking images) are converted into

search frames (subsequent tracking images) are converted into  , and they are spliced together and passed to the N-layer dual-stream transformer encoder respectively.

, and they are spliced together and passed to the N-layer dual-stream transformer encoder respectively.

Bidirectional adapter is set up in parallel with the dual-stream encoder layer to learn feature cues from one modality to another. For this purpose, the output features of the two branches are added and input into the prediction head H to obtain the final tracking result box B.

The bidirectional adapter adopts a modular design and is embedded in the multi-head self-attention stage and MLP stage respectively, as shown on the right side of Figure 1. Detailed structures designed to transfer feature cues from one modality to another. It consists of three linear projection layers, tn represents the number of tokens in each modality, the input token is first dimensionally reduced to de through down projection and passes through a linear projection layer, and then projected upward to the original dimension dt and fed back as a feature prompt Transformer encoder layers to other modalities.

Through this simple structure, the bidirectional adapter can effectively perform feature prompts between  modalities to achieve multi-modal tracking.

modalities to achieve multi-modal tracking.

Since the transformer encoder and prediction head are frozen, only the parameters of the newly added adapter need to be optimized. Notably, unlike most traditional adapters, our bidirectional adapter functions as a cross-modal feature cue for dynamically changing dominant modalities, ensuring good tracking performance in the open world.

Experimental results

As shown in Table 1, the comparison on the two data sets of RGBT234 and LasHeR shows that our method has both accuracy and success rate. Outperforms state-of-the-art methods. As shown in Figure 3, the performance comparison with state-of-the-art methods under different scene properties of the LasHeR dataset also demonstrates the superiority of the proposed method.

These experiments fully prove that our dual-stream tracking framework and bidirectional Adapter successfully track targets in most complex environments and adaptively switch from dynamically changing dominant-auxiliary modes Extract effective information from the system and achieve state-of-the-art performance.

Table 1 Overall performance on RGBT234 and LasHeR datasets.

Figure 3 Comparison of BAT and competing methods under different attributes in the LasHeR dataset.

Experiments demonstrate our effectiveness in dynamically prompting effective information from changing dominant-auxiliary patterns in complex scenarios. As shown in Figure 4, compared with related methods that fix the dominant mode, our method can effectively track the target even when RGB is completely unavailable, when both RGB and TIR can provide effective information in subsequent scenes. , the tracking effect is much better. Our bidirectional Adapter dynamically extracts effective features of the target from both RGB and IR modalities, captures more accurate target response locations, and eliminates interference from the RGB modality.

# Figure 4 Visualization of tracking results.

# We also evaluate our method on the RGBE trace dataset. As shown in Figure 5, compared with other methods on the VisEvent test set, our method has the most accurate tracking results in different complex scenarios, proving the effectiveness and generalization of our BAT model.

Figure 5 Tracking results under the VisEvent data set.

Figure 6 Attention weight visualization.

We visualize the attention weights of different layers tracking targets in Figure 6. Compared with the baseline-dual (dual-stream framework for basic model parameter initialization) method, our BAT effectively drives the auxiliary mode to learn more complementary information from the dominant mode, while maintaining the effectiveness of the dominant mode as the network depth increases. performance, thereby improving overall tracking performance.

Experiments show that BAT successfully captures multi-modal complementary information and achieves sample adaptive dynamic tracking.

The above is the detailed content of BAT method: AAAI 2024's first multi-modal target tracking universal bidirectional adapter. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1375

1375

52

52

What method is used to convert strings into objects in Vue.js?

Apr 07, 2025 pm 09:39 PM

What method is used to convert strings into objects in Vue.js?

Apr 07, 2025 pm 09:39 PM

When converting strings to objects in Vue.js, JSON.parse() is preferred for standard JSON strings. For non-standard JSON strings, the string can be processed by using regular expressions and reduce methods according to the format or decoded URL-encoded. Select the appropriate method according to the string format and pay attention to security and encoding issues to avoid bugs.

How to use mysql after installation

Apr 08, 2025 am 11:48 AM

How to use mysql after installation

Apr 08, 2025 am 11:48 AM

The article introduces the operation of MySQL database. First, you need to install a MySQL client, such as MySQLWorkbench or command line client. 1. Use the mysql-uroot-p command to connect to the server and log in with the root account password; 2. Use CREATEDATABASE to create a database, and USE select a database; 3. Use CREATETABLE to create a table, define fields and data types; 4. Use INSERTINTO to insert data, query data, update data by UPDATE, and delete data by DELETE. Only by mastering these steps, learning to deal with common problems and optimizing database performance can you use MySQL efficiently.

How to solve mysql cannot be started

Apr 08, 2025 pm 02:21 PM

How to solve mysql cannot be started

Apr 08, 2025 pm 02:21 PM

There are many reasons why MySQL startup fails, and it can be diagnosed by checking the error log. Common causes include port conflicts (check port occupancy and modify configuration), permission issues (check service running user permissions), configuration file errors (check parameter settings), data directory corruption (restore data or rebuild table space), InnoDB table space issues (check ibdata1 files), plug-in loading failure (check error log). When solving problems, you should analyze them based on the error log, find the root cause of the problem, and develop the habit of backing up data regularly to prevent and solve problems.

Laravel's geospatial: Optimization of interactive maps and large amounts of data

Apr 08, 2025 pm 12:24 PM

Laravel's geospatial: Optimization of interactive maps and large amounts of data

Apr 08, 2025 pm 12:24 PM

Efficiently process 7 million records and create interactive maps with geospatial technology. This article explores how to efficiently process over 7 million records using Laravel and MySQL and convert them into interactive map visualizations. Initial challenge project requirements: Extract valuable insights using 7 million records in MySQL database. Many people first consider programming languages, but ignore the database itself: Can it meet the needs? Is data migration or structural adjustment required? Can MySQL withstand such a large data load? Preliminary analysis: Key filters and properties need to be identified. After analysis, it was found that only a few attributes were related to the solution. We verified the feasibility of the filter and set some restrictions to optimize the search. Map search based on city

Vue.js How to convert an array of string type into an array of objects?

Apr 07, 2025 pm 09:36 PM

Vue.js How to convert an array of string type into an array of objects?

Apr 07, 2025 pm 09:36 PM

Summary: There are the following methods to convert Vue.js string arrays into object arrays: Basic method: Use map function to suit regular formatted data. Advanced gameplay: Using regular expressions can handle complex formats, but they need to be carefully written and considered. Performance optimization: Considering the large amount of data, asynchronous operations or efficient data processing libraries can be used. Best practice: Clear code style, use meaningful variable names and comments to keep the code concise.

How to set the timeout of Vue Axios

Apr 07, 2025 pm 10:03 PM

How to set the timeout of Vue Axios

Apr 07, 2025 pm 10:03 PM

In order to set the timeout for Vue Axios, we can create an Axios instance and specify the timeout option: In global settings: Vue.prototype.$axios = axios.create({ timeout: 5000 }); in a single request: this.$axios.get('/api/users', { timeout: 10000 }).

How to optimize database performance after mysql installation

Apr 08, 2025 am 11:36 AM

How to optimize database performance after mysql installation

Apr 08, 2025 am 11:36 AM

MySQL performance optimization needs to start from three aspects: installation configuration, indexing and query optimization, monitoring and tuning. 1. After installation, you need to adjust the my.cnf file according to the server configuration, such as the innodb_buffer_pool_size parameter, and close query_cache_size; 2. Create a suitable index to avoid excessive indexes, and optimize query statements, such as using the EXPLAIN command to analyze the execution plan; 3. Use MySQL's own monitoring tool (SHOWPROCESSLIST, SHOWSTATUS) to monitor the database health, and regularly back up and organize the database. Only by continuously optimizing these steps can the performance of MySQL database be improved.

Remote senior backend engineers (platforms) need circles

Apr 08, 2025 pm 12:27 PM

Remote senior backend engineers (platforms) need circles

Apr 08, 2025 pm 12:27 PM

Remote Senior Backend Engineer Job Vacant Company: Circle Location: Remote Office Job Type: Full-time Salary: $130,000-$140,000 Job Description Participate in the research and development of Circle mobile applications and public API-related features covering the entire software development lifecycle. Main responsibilities independently complete development work based on RubyonRails and collaborate with the React/Redux/Relay front-end team. Build core functionality and improvements for web applications and work closely with designers and leadership throughout the functional design process. Promote positive development processes and prioritize iteration speed. Requires more than 6 years of complex web application backend