Large model inference cost leaderboard released, with Jia Yangqing's high-efficiency service in the lead

"Is the API of large models a loss-making business?"

As large language model technology moves into practical use, many technology companies have launched large model APIs for developers. However, it is hard not to wonder whether a business built on large models can be sustained, especially given that OpenAI is reportedly burning through $700,000 a day.

This Thursday, AI startup Martian worked through the numbers for us.

Leaderboard link: https://leaderboard.withmartian.com/

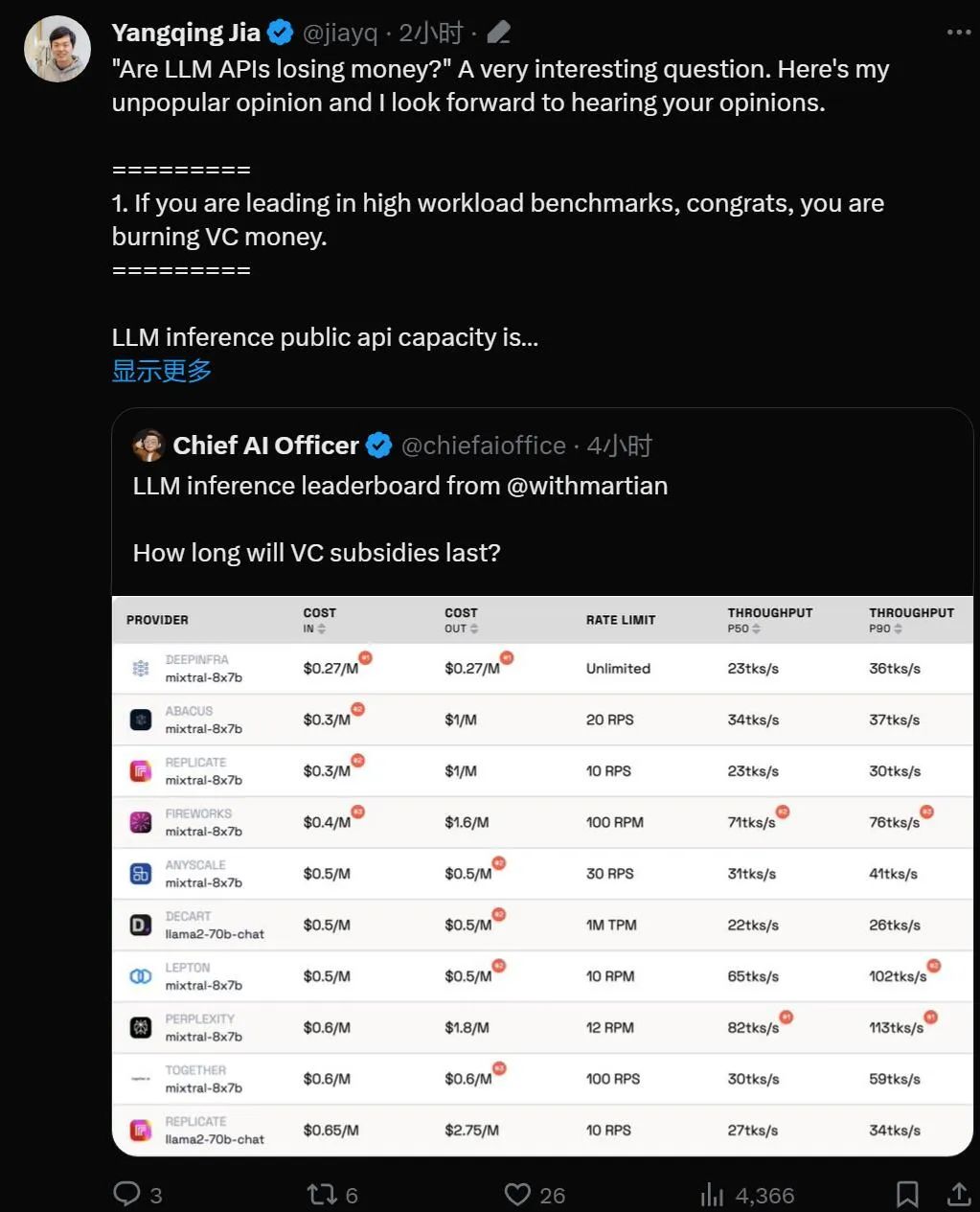

The LLM Inference Provider Leaderboard is an open-source ranking of API inference products for large models. It benchmarks the cost, rate limits, throughput, and P50 and P90 time to first token (TTFT) for each vendor's public Mixtral-8x7B and Llama-2-70B-Chat endpoints.
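As a rough illustration of the metrics named above, the sketch below computes P50 and P90 TTFT and an output throughput figure from a handful of timing samples. The sample values, the function names, and the choice to measure throughput over the generation phase only are all assumptions made for illustration; the leaderboard's actual methodology may differ.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def throughput_tokens_per_s(output_tokens, total_seconds, ttft_seconds):
    """Output tokens per second, measured after the first token arrives (an assumed definition)."""
    generation_seconds = total_seconds - ttft_seconds
    if generation_seconds <= 0:
        raise ValueError("total time must exceed TTFT")
    return output_tokens / generation_seconds


# Hypothetical TTFT measurements in seconds for one endpoint.
ttft_samples = [0.21, 0.25, 0.19, 0.40, 0.22, 0.30, 0.95, 0.24, 0.27, 0.23]

p50 = percentile(ttft_samples, 50)
p90 = percentile(ttft_samples, 90)
rate = throughput_tokens_per_s(output_tokens=260, total_seconds=2.25, ttft_seconds=0.25)

print(f"P50 TTFT: {p50} s, P90 TTFT: {p90} s, throughput: {rate} tokens/s")
```

Reporting P90 next to P50 matters because one slow outlier (the 0.95 s sample here) barely moves the median but is exactly what a user sees on a bad request.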

Although these providers compete with one another, Martian found significant differences in the cost, throughput, and rate limits of their large model services: more than 5x in cost, more than 6x in throughput, and even larger gaps in rate limits. Choosing the right API is critical to getting the best performance, even though it is only one part of running a business.

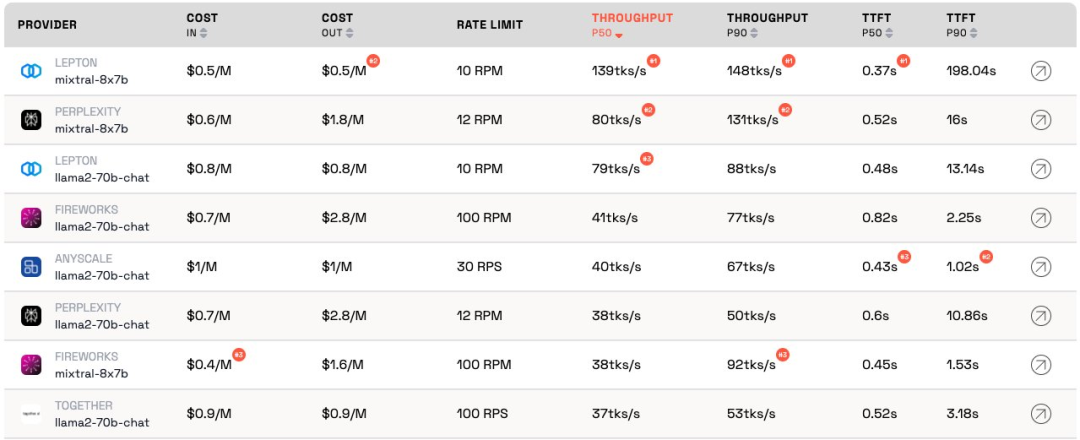

According to the current ranking, Anyscale delivers the best throughput on Llama-2-70B under medium service load. Under heavy service load, Together AI posts the best P50 and P90 throughput on both Llama-2-70B and Mixtral-8x7B.

In addition, Jia Yangqing's Lepton AI showed the best throughput on small workloads with short input prompts and long outputs. Its P50 throughput of 130 tokens/s is the fastest currently offered by any provider on the market.

Well-known AI scholar and Lepton AI founder Jia Yangqing commented immediately after the rankings were released. Let’s see what he said.

Jia Yangqing first described the current state of the AI industry, then affirmed the value of benchmarking, and finally noted that Lepton AI will help users find the best basic AI strategy.

1. Large model APIs are "burning money"

If a model leads the high-workload benchmark, then congratulations: it is "burning money."

Planning capacity for LLM inference on a public API is like running a restaurant: you have chefs, and you need to estimate customer traffic. Hiring chefs costs money. Latency and throughput can be understood as "how fast you can cook for customers." A reasonable business needs a "reasonable" number of chefs. In other words, you want enough capacity to handle normal traffic, not sudden bursts that arrive within a few seconds. A surge in traffic means customers wait; the alternative is chefs with nothing to do most of the time.

In the world of AI, GPUs play the role of the chefs. Benchmark loads are bursty. Under low workloads, the benchmark load blends into normal traffic, and the measurements accurately represent how the service performs under its current workload.

The high service load scenario is more interesting because the benchmark itself is disruptive. It runs only a few times per day or week, so it is not the regular traffic a provider should expect. Imagine 100 people flocking to your local restaurant at once to check how quickly the chef cooks. The results would be great. To borrow a term from quantum physics, this is the "observer effect": the stronger the disturbance (that is, the larger the burst load), the lower the accuracy. In other words, if you put a sudden high load on a service and it still responds very quickly, you know the service has quite a bit of idle capacity. As an investor, when you see this, you should ask: is this way of burning money responsible?
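The restaurant argument can be sketched as a toy queueing model. The code below is a deliberately simplified illustration, not a model of any real provider: it assumes each GPU serves one request at a time with a fixed service time (real LLM serving batches requests), and every number in it is made up.

```python
import heapq


def simulate_latencies(arrival_times, num_gpus, service_seconds):
    """FIFO multi-server queue: return each request's latency (waiting plus service)."""
    # Min-heap holding the time at which each GPU next becomes free.
    free_at = [0.0] * num_gpus
    heapq.heapify(free_at)
    latencies = []
    for arrival in sorted(arrival_times):
        gpu_free = heapq.heappop(free_at)
        start = max(arrival, gpu_free)  # wait if every GPU is busy
        finish = start + service_seconds
        heapq.heappush(free_at, finish)
        latencies.append(finish - arrival)
    return latencies


def utilization(requests_per_second, num_gpus, service_seconds):
    """Fraction of GPU time kept busy by steady, non-burst traffic."""
    return requests_per_second * service_seconds / num_gpus


# A benchmark burst: 100 requests arriving at the same instant.
burst = [0.0] * 100

lean = simulate_latencies(burst, num_gpus=10, service_seconds=1.0)
padded = simulate_latencies(burst, num_gpus=100, service_seconds=1.0)

print("worst burst latency, 10 GPUs:", max(lean))
print("worst burst latency, 100 GPUs:", max(padded))
print("utilization at 8 req/s, 10 GPUs:", utilization(8, 10, 1.0))
print("utilization at 8 req/s, 100 GPUs:", utilization(8, 100, 1.0))
```

In this toy setup the over-provisioned service wins the burst benchmark by a wide margin, yet under the assumed normal traffic most of its GPUs sit idle, which is the "burning money" trade-off the text describes.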

2. Models will eventually reach similar performance

The AI field is very fond of competitions, which is indeed interesting. Everyone quickly converges on the same solutions, and Nvidia always wins in the end because of its GPUs. This is thanks to great open source projects, of which vLLM is a great example. It means that, as a provider, if your model performs much worse than others, you can easily catch up by studying open source solutions and applying good engineering.

3. "As a customer, I don't care about the provider's cost"

As developers building AI applications, we are lucky: there are always API providers willing to "burn money". The AI industry is burning money to win traffic and will worry about profits later.

Benchmarking is tedious and error-prone. For better or worse, winners usually praise you and losers blame you, as happened in the last round of convolutional neural network benchmarks. It is not an easy task, but benchmarking will help us achieve the next 10x in AI infrastructure.

Drawing on AI frameworks and cloud infrastructure, Lepton AI will help users find the best basic AI strategy.

