He Kaiming and Xie Saining's team deconstructs the diffusion model step by step and arrives at a highly praised denoising autoencoder

Denoising Diffusion Model (DDM) is a method currently widely used in image generation. Recently, a four-person team of Xinlei Chen, Zhuang Liu, Xie Saining and He Kaiming conducted a deconstruction study on DDM. By gradually stripping off its components, they found that the generation ability of DDM gradually decreased, but the representation learning ability still maintained a certain level. This shows that some components in DDM may not be important for representation learning.

Denoising is considered a core method for current generative models in fields such as computer vision. This type of method is often called a denoising diffusion model (DDM). By learning a denoising autoencoder (DAE), it can effectively eliminate multiple levels of noise through the diffusion process.

These methods achieve excellent image generation quality and are particularly suited to generating high-resolution, photorealistic images. Their generative performance is so good that the models appear to have strong recognition representations for understanding visual content.

Although the DAE is at the core of today's generative models, it was originally proposed, in the paper "Extracting and composing robust features with denoising autoencoders", as a way to learn representations from data in a self-supervised manner. That paper trains a denoising autoencoder to extract and compose robust features, with the aim of learning useful representations of the input that improve downstream supervised tasks. The DAE thus began as a representation learning method before it became a building block of generative models.

In today's representation learning community, the most successful DAE variants are arguably those based on "masking noise", such as predicting missing text in language (e.g., BERT) or missing tiles in images.

Mask-based variants explicitly specify what is unknown and what is known, which makes them significantly different from the task of removing additive noise. When removing additive noise, no explicit information is available about which content is unknown and which is known. Yet today's DDMs for generation are mainly based on additive noise, which means they may learn representations without the unknown and known content being explicitly marked. Results obtained with mask-based variants therefore do not necessarily carry over to additive noise.

Recently, there has been growing interest in the representation learning capability of DDMs (denoising diffusion models). These studies directly take pre-trained DDMs (originally built for generation) and evaluate the quality of their representations on recognition tasks. Applying these generation-oriented models in this way has produced encouraging results.

However, these pioneering studies also leave open questions: the existing models were designed for generation rather than recognition, so it remains unclear whether their representation capability comes from the denoising-driven process or from the diffusion-driven process.

This study by Xinlei Chen et al. takes a big step in this research direction.

Paper title: Deconstructing Denoising Diffusion Models for Self-Supervised Learning

Paper address: https://arxiv.org/pdf/2401.14404.pdf

Instead of using existing generation-oriented DDM, they trained a recognition-oriented model. The core idea of this research is to deconstruct the DDM and modify it step by step until it is turned into a classic DAE.

Through this deconstruction, they carefully examined every aspect of a modern DDM with respect to the goal of learning representations. The process gives the AI community a new understanding of which key components a DAE needs in order to learn good representations.

Surprisingly, they found that the one critical component is the tokenizer, whose function is to create a low-dimensional latent space. Interestingly, this observation is largely independent of the specific tokenizer: they explored a standard VAE, a tile-level VAE, a tile-level AE, and a tile-level PCA encoder. What gives a DAE good representations is the low-dimensional latent space, not the particular tokenizer.

Thanks to the effectiveness of PCA, the team deconstructed it all the way and finally got a simple architecture that is highly similar to the classic DAE (see Figure 1).

They use tile-level PCA to project the image into a latent space, add noise, and project it back through inverse PCA. An autoencoder is then trained to predict the denoised image.
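The input side of this pipeline can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names, the 4×4 tile size, the latent dimension of 16, and fitting PCA on the tiles of a single random image are all assumptions made to keep the example small (in practice the PCA basis would be fit on tiles from a training set).

```python
import numpy as np

def patchify(img, p):
    """Split an (H, W, C) image into non-overlapping p x p tiles, one flattened tile per row."""
    h, w, c = img.shape
    tiles = img.reshape(h // p, p, w // p, p, c).transpose(0, 2, 1, 3, 4)
    return tiles.reshape(-1, p * p * c)

def unpatchify(tiles, h, w, c, p):
    """Inverse of patchify: reassemble flattened tiles into an (H, W, C) image."""
    grid = tiles.reshape(h // p, w // p, p, p, c).transpose(0, 2, 1, 3, 4)
    return grid.reshape(h, w, c)

def fit_pca(tiles, d):
    """Return the tile mean and the top-d principal directions as a (D, d) matrix."""
    mean = tiles.mean(axis=0)
    _, _, vt = np.linalg.svd(tiles - mean, full_matrices=False)
    return mean, vt[:d].T

def noisy_input(img, mean, basis, sigma, p, rng):
    """Project tiles to the latent space, add Gaussian noise there, and project back."""
    h, w, c = img.shape
    z = (patchify(img, p) - mean) @ basis                # PCA: D -> d
    z_noisy = z + sigma * rng.standard_normal(z.shape)   # noise lives in the latent space
    tiles = z_noisy @ basis.T + mean                     # inverse PCA: d -> D
    return unpatchify(tiles, h, w, c, p)

rng = np.random.default_rng(0)
img = rng.random((32, 32, 3))                            # stand-in for a real image
mean, basis = fit_pca(patchify(img, 4), d=16)
x_noisy = noisy_input(img, mean, basis, sigma=0.5, p=4, rng=rng)
```

An autoencoder would then take `x_noisy` as input and be trained to predict the clean image; that network is omitted here.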

They call this architecture the latent Denoising Autoencoder (l-DAE).

The team's deconstruction process also revealed many other interesting properties between DDM and classic DAE.

For example, they found that l-DAE achieves good results even with a single noise level (i.e., without the noise schedule of a DDM). Using multiple noise levels acts like a form of data augmentation: it can be beneficial, but it is not an enabling factor.

Based on these observations, the team believes that the representation capability of DDMs is gained primarily through the denoising-driven process rather than the diffusion-driven process.

Finally, the team compared its results with previous baselines. On the one hand, the new results are better than those of off-the-shelf DDMs; this is expected, since those models were the starting point of the deconstruction. On the other hand, the new architecture still falls short of the contrastive learning and mask-based baselines, although the gap has narrowed. This suggests there is room for further research on DAEs and DDMs.

Background: Denoising Diffusion Model

The starting point for this deconstruction study is the denoising diffusion model (DDM).

For background on DDMs, see the papers "Diffusion models beat GANs on image synthesis" and "Scalable Diffusion Models with Transformers", as well as this site's related report "The U-Net that dominates diffusion models is about to be replaced: Xie Saining et al. introduce Transformers and propose DiT".

Deconstructing the denoising diffusion model

The focus here is the deconstruction process, which is divided into three stages. First, the team changes the generation-centric settings in DiT to ones more geared toward self-supervised learning. Next, they gradually deconstruct and simplify the tokenizer. Finally, they try to reverse as many DDM-motivated designs as possible to bring the model closer to a classic DAE.

Let DDM return to self-supervised learning

Although a DDM is conceptually a form of DAE, it was originally developed for image generation. Many designs in a DDM are geared toward generative tasks. Some are not inherently suitable for self-supervised learning (e.g., those involving category labels); others are unnecessary when visual quality is not a concern.

In this stage, the team reorients the DDM toward self-supervised learning. Table 1 shows the progression of this stage.

Remove category conditioning

The first step is to remove the category conditioning process in the baseline model.

Unexpectedly, removing category conditioning significantly improves linear probe accuracy (from 57.5% to 62.1%), while the generation quality drops significantly, as expected (FID from 11.6 to 34.2).

The team hypothesized that conditioning the model directly on category labels may reduce its need to encode information related to those labels. Removing category conditioning forces the model to learn more semantics.

Deconstructing VQGAN

The VQGAN tokenizer that DiT inherits from LDM is trained with multiple loss terms: an autoencoding reconstruction loss, a KL-divergence regularization loss, a perceptual loss based on a supervised VGG network trained for ImageNet classification, and an adversarial loss using a discriminator. The team ran ablations on the latter two losses; see Table 1.

As expected, removing these two losses hurts generation quality. In terms of linear probe accuracy, removing the perceptual loss reduces it from 62.5% to 58.4%, while removing the adversarial loss raises it from 58.4% to 59.0%. After the adversarial loss is removed, the tokenizer is essentially a VAE.

Replace Noise Scheduling

The team studied a simpler noise scheduling scheme to support self-supervised learning.

Specifically, they let the signal scaling factor γ^2_t decay linearly over the range 1>γ^2_t≥0. This lets the model spend more capacity on cleaner images, and it significantly increases linear probe accuracy from 59.0% to 63.4%.
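A linearly decaying γ^2_t can be sketched as follows. The article does not spell out the noise scale, so this sketch assumes the common variance-preserving convention γ^2_t + σ^2_t = 1 and a noised latent of the form z_t = γ_t z_0 + σ_t ε; the step count of 1000 and the function names are illustrative choices, not values taken from the article.

```python
import numpy as np

def linear_signal_schedule(num_steps):
    """Return (gamma, sigma) where gamma_t^2 decays linearly from just under 1 to 0."""
    t = np.arange(1, num_steps + 1)
    gamma_sq = 1.0 - t / num_steps      # 1 > gamma_t^2 >= 0
    sigma_sq = 1.0 - gamma_sq           # assumption: gamma_t^2 + sigma_t^2 = 1
    return np.sqrt(gamma_sq), np.sqrt(sigma_sq)

def diffuse(z0, t, gamma, sigma, rng):
    """Sample z_t = gamma_t * z0 + sigma_t * eps for a step index t."""
    eps = rng.standard_normal(z0.shape)
    return gamma[t] * z0 + sigma[t] * eps, eps

rng = np.random.default_rng(0)
gamma, sigma = linear_signal_schedule(1000)
z0 = rng.standard_normal((4, 16))       # stand-in for clean latents
zt, eps = diffuse(z0, 250, gamma, sigma, rng)
```

Under this schedule, every step removes the same amount of signal power, so steps are spread evenly over signal levels from nearly clean to pure noise.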

Deconstructing the tokenizer

Next, the team deconstructs the VAE tokenizer through a series of simplifications. They compared four autoencoder variants as tokenizers, each a simplified version of the previous one:

Convolutional VAE: This is the result of the previous deconstruction step. As is common, the encoder and decoder of this VAE are deep convolutional neural networks.

Tile-level VAE: The input is split into tiles, and the tokenizer operates on each tile.

Tile-level AE: The regularization term of the VAE is removed, so the VAE essentially becomes an AE whose encoder and decoder are both linear projections.

Tile-level PCA: An even simpler variant that performs principal component analysis (PCA) on the tile space. It is easy to show that PCA is equivalent to a special case of an AE.
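The claim that PCA is a special case of an AE can be checked numerically: the top-d principal directions act as a linear encoder, and their transpose acts as the decoder. The sketch below uses synthetic data in place of real image tiles, and the sizes (D = 48, d = 8, 500 samples) are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
D, d, n = 48, 8, 500

# Synthetic "tiles" with correlated dimensions (stand-in for real image tiles).
tiles = rng.standard_normal((n, D)) @ rng.standard_normal((D, D))
centered = tiles - tiles.mean(axis=0)

# PCA via eigen-decomposition of the covariance matrix.
cov = centered.T @ centered / n
eigvals, eigvecs = np.linalg.eigh(cov)           # eigenvalues in ascending order
order = np.argsort(eigvals)[::-1]                # re-sort to descending
eigvals, eigvecs = eigvals[order], eigvecs[:, order]
V = eigvecs[:, :d]                               # (D, d) top-d principal directions

def encode(x):
    """Linear encoder: project onto the top-d principal directions."""
    return x @ V

def decode(z):
    """Linear decoder with tied weights: map latents back to tile space."""
    return z @ V.T

recon = decode(encode(centered))
mean_sq_error = np.sum((centered - recon) ** 2) / n
discarded_variance = eigvals[d:].sum()
```

Here `mean_sq_error` matches `discarded_variance`: the reconstruction error of this linear autoencoder is exactly the variance in the D − d directions that PCA drops, which is also why PCA is a lossy encoder whenever d < D.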

Because working with tiles is simple, the team visualized the filters of three tile-level tokenizers in tile space, see Figure 4.

Table 2 summarizes the linear probe accuracy of DiT when using these four tokenizer variants.

They observed the following results:

For a DDM to perform well in self-supervised learning, the latent dimension of the tokenizer is crucial.

High-resolution, pixel-based DDMs perform poorly for self-supervised learning (see Figure 5).

Become a classic denoising autoencoder

The next step of the deconstruction is to bring the model as close as possible to a classic DAE, that is, to remove every aspect in which the current PCA-based DDM still differs from a classic DAE. The results are shown in Table 3.

Predict clear data (not noise)

Modern DDMs usually predict the noise, while a classic DAE predicts the clean data. The team switches to predicting clean data and adjusts the loss function so that the loss terms for cleaner data receive more weight.

This modification reduces linear probe accuracy from 65.1% to 62.4%, which shows that the choice of prediction target affects the quality of the representation.
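The reason the loss weighting matters when switching targets can be seen from a small numerical check. Assuming the noised latent has the form z_t = γ_t z_0 + σ_t ε (an assumption, since the article does not write out the formula), any clean-data prediction implies a noise prediction, and the two squared errors differ only by a factor that depends on the noise level. The values of γ and σ below are illustrative, and the "prediction" is a random perturbation standing in for a network output.

```python
import numpy as np

rng = np.random.default_rng(0)
gamma, sigma = 0.8, 0.6                  # one illustrative noise level (gamma^2 + sigma^2 = 1)

z0 = rng.standard_normal((4, 16))        # clean latents
eps = rng.standard_normal(z0.shape)      # Gaussian noise
zt = gamma * z0 + sigma * eps            # noised latents

# Stand-in for a network's clean-data prediction (hypothetical, not a trained model).
z0_hat = z0 + 0.1 * rng.standard_normal(z0.shape)
# The noise prediction implied by that clean-data prediction.
eps_hat = (zt - gamma * z0_hat) / sigma

loss_eps = np.sum((eps - eps_hat) ** 2)  # noise-prediction loss
loss_z0 = np.sum((z0 - z0_hat) ** 2)     # clean-data-prediction loss
ratio = (gamma / sigma) ** 2             # loss_eps equals ratio * loss_z0
```

Since `loss_eps` equals `(gamma / sigma) ** 2 * loss_z0`, changing the prediction target without touching the loss silently changes how much each noise level contributes, which is why a per-level loss weight has to be chosen explicitly.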

Removing input scaling

In modern DDMs, the input is scaled by a factor γ_t. This is not common practice in classic DAEs.

By setting γ_t ≡ 1, the team obtained an accuracy of 63.6% (see Table 3), which is better than the model with variable γ_t (62.4%). This shows that, in the current scenario, scaling the input is completely unnecessary.

Using inverse PCA to operate on the image space

So far, for all entries explored (except Figure 5), the model has operated on the latent space produced by the tokenizer (Figure 2 (b)). Ideally, the DAE should operate directly on the image space while still achieving good accuracy. The team found that, because the tokenizer is PCA, this can be achieved with inverse PCA. See Figure 1.

Making this change on the input side (while still predicting the output in the latent space) gives an accuracy of 63.6% (Table 3). Applying it to the output side as well (that is, using inverse PCA so that the output is predicted in the image space) gives an accuracy of 63.9%. Both results show that operating on the image space through inverse PCA gives results similar to operating on the latent space.

Predict the original image

Although inverse PCA can get the predicted target in the image space, the target is not the original image. This is because PCA is a lossy encoder for any reduced dimension d. In contrast, a more natural solution is to predict the original image directly.

When the network is asked to predict the original image, the "noise" it has to remove consists of two parts: the additive Gaussian noise (whose intrinsic dimension is d) and the PCA reconstruction error (whose intrinsic dimension is D − d, where D is 768). The team's approach is to weight these two parts separately.

With this design, predicting the original image achieves a linear probe accuracy of 64.5%.
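One way to weight the two parts separately is to express the prediction error in the full PCA basis and apply different weights to the first d coordinates and the remaining D − d. The sketch below is an assumption about how such a loss could look, not the authors' code: the function name, the weight values `w_latent=1.0` and `w_residual=0.1`, the toy sizes, and the random orthonormal basis standing in for a fitted PCA basis are all hypothetical.

```python
import numpy as np

def weighted_pca_loss(pred, target, basis_full, d, w_latent=1.0, w_residual=0.1):
    """Squared error in PCA coordinates with separate weights for two subspaces.

    The first d components are where the Gaussian noise was added; the remaining
    D - d components carry the PCA reconstruction error. Weights are hypothetical.
    """
    err = (pred - target) @ basis_full               # (n, D) error in PCA coordinates
    weights = np.full(basis_full.shape[1], w_residual)
    weights[:d] = w_latent
    return np.mean(np.sum(weights * err ** 2, axis=1))

rng = np.random.default_rng(0)
D, d = 48, 16                                        # toy sizes for illustration
# Random orthonormal basis standing in for the full set of D principal directions.
basis_full, _ = np.linalg.qr(rng.standard_normal((D, D)))

target = rng.standard_normal((10, D))                # stand-in for original tiles
pred = target + 0.1 * rng.standard_normal((10, D))   # stand-in for network output

loss = weighted_pca_loss(pred, target, basis_full, d)
plain = weighted_pca_loss(pred, target, basis_full, d, w_latent=1.0, w_residual=1.0)
```

With both weights set to 1 the loss reduces to the ordinary squared error in tile space, because an orthonormal change of basis preserves the norm; lowering `w_residual` makes errors in the D − d discarded directions count less.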

This variant is conceptually very simple: its input is a noisy image, where the noise is added in the PCA latent space, and its prediction is the original clean image (Figure 1).

Single Noise Level

Finally, driven by curiosity, the team also looked at variants with a single noise level. They pointed out that multi-level noise achieved through noise scheduling is a property of the diffusion process of DDM. Classical DAEs conceptually do not necessarily require multi-level noise.

They fixed the noise level σ to a constant √(1/3). With this single noise level, the model reaches a respectable accuracy of 61.5%, only three percentage points lower than the 64.5% achieved with multi-level noise.

Using multi-level noise is similar to a form of data augmentation in DAEs: it is beneficial, but it is not an enabling factor. This also means that the representation capability of DDMs comes primarily from the denoising-driven process rather than from the diffusion-driven process.

Summary

In summary, the team deconstructed the modern DDM and turned it into a classic DAE.

They removed many modern designs and conceptually retained only two designs inherited from modern DDMs: the low-dimensional latent space (which is where the noise is added) and multi-level noise.

They use the last entry in Table 3 as the final DAE instance (shown in Figure 1). They call this method the latent Denoising Autoencoder, abbreviated as l-DAE.

Analysis and comparison

Visualizing latent noise

Conceptually, l-DAE is a form of DAE that learns to remove noise added to the latent space. Because PCA is simple, the latent noise can easily be visualized by passing it through inverse PCA.

Figure 7 compares noise added to pixels with noise added to the latent space. Unlike pixel noise, latent noise is largely independent of the image resolution. When tile-level PCA is used as the tokenizer, the pattern of the latent noise is mainly determined by the tile size.

Denoising results

Figure 8 shows more examples of denoising results from l-DAE. The method produces reasonable predictions even when the noise is strong.

Data augmentation

Note that none of the models presented so far use data augmentation: only the center region of the image is cropped, with no random resizing or color jittering. The team did further research and tested mild data augmentation for the final l-DAE:

The results improved slightly. This indicates that the representation learning capability of l-DAE is largely independent of data augmentation. Similar behavior has been observed in MAE (see the paper "Masked autoencoders are scalable vision learners" by He Kaiming et al.), and it is quite different from the behavior of contrastive learning methods.

Training epoch

All previous experiments were trained for 400 epochs. Following the design of MAE, the team also studied training for 800 and 1600 epochs.

For reference, when the number of epochs increases from 400 to 800, MAE has a significant gain (4%), whereas MoCo v3 has almost no gain (0.2%) when the number of epochs increases from 300 to 600.

Model size

All previous models were based on the DiT-L variant, whose encoder and decoder are both ViT-1/2L (half the depth of ViT-L). The team further trained models of different sizes, with the encoder being ViT-B or ViT-L (the decoder is always the same size as the encoder).

Scaling the model from ViT-B to ViT-L yields a large gain of 10.6%.

Comparison with previous baselines

Finally, in order to better understand the effects of different types of self-supervised learning methods, the team conducted a comparison, and the results are shown in Table 4.

Interestingly, l-DAE performs respectably compared with MAE, with a drop of only 1.4% (ViT-B) or 0.8% (ViT-L). On the other hand, the team notes that MAE is more efficient to train because it only processes unmasked tiles. Nonetheless, the accuracy gap between MAE and DAE-driven methods has been closed to a large extent.

Finally, they also observed that autoencoder-based methods (MAE and l-DAE) still have shortcomings compared to contrastive learning methods under this protocol, especially when the model is small. They finally said: "We hope that our research will attract more attention to the research of self-supervised learning using autoencoder-based methods."

The above is the detailed content of The team of He Kaiming and Xie Saining successfully followed the deconstruction diffusion model exploration and finally created the highly praised denoising autoencoder.. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

It is also a Tusheng video, but PaintsUndo has taken a different route. ControlNet author LvminZhang started to live again! This time I aim at the field of painting. The new project PaintsUndo has received 1.4kstar (still rising crazily) not long after it was launched. Project address: https://github.com/lllyasviel/Paints-UNDO Through this project, the user inputs a static image, and PaintsUndo can automatically help you generate a video of the entire painting process, from line draft to finished product. follow. During the drawing process, the line changes are amazing. The final video result is very similar to the original image: Let’s take a look at a complete drawing.

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com The authors of this paper are all from the team of teacher Zhang Lingming at the University of Illinois at Urbana-Champaign (UIUC), including: Steven Code repair; Deng Yinlin, fourth-year doctoral student, researcher

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com In the development process of artificial intelligence, the control and guidance of large language models (LLM) has always been one of the core challenges, aiming to ensure that these models are both powerful and safe serve human society. Early efforts focused on reinforcement learning methods through human feedback (RL

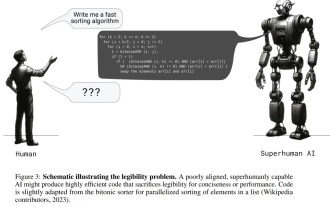

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

If the answer given by the AI model is incomprehensible at all, would you dare to use it? As machine learning systems are used in more important areas, it becomes increasingly important to demonstrate why we can trust their output, and when not to trust them. One possible way to gain trust in the output of a complex system is to require the system to produce an interpretation of its output that is readable to a human or another trusted system, that is, fully understandable to the point that any possible errors can be found. For example, to build trust in the judicial system, we require courts to provide clear and readable written opinions that explain and support their decisions. For large language models, we can also adopt a similar approach. However, when taking this approach, ensure that the language model generates

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

Recently, the Riemann Hypothesis, known as one of the seven major problems of the millennium, has achieved a new breakthrough. The Riemann Hypothesis is a very important unsolved problem in mathematics, related to the precise properties of the distribution of prime numbers (primes are those numbers that are only divisible by 1 and themselves, and they play a fundamental role in number theory). In today's mathematical literature, there are more than a thousand mathematical propositions based on the establishment of the Riemann Hypothesis (or its generalized form). In other words, once the Riemann Hypothesis and its generalized form are proven, these more than a thousand propositions will be established as theorems, which will have a profound impact on the field of mathematics; and if the Riemann Hypothesis is proven wrong, then among these propositions part of it will also lose its effectiveness. New breakthrough comes from MIT mathematics professor Larry Guth and Oxford University

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com. Introduction In recent years, the application of multimodal large language models (MLLM) in various fields has achieved remarkable success. However, as the basic model for many downstream tasks, current MLLM consists of the well-known Transformer network, which

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

cheers! What is it like when a paper discussion is down to words? Recently, students at Stanford University created alphaXiv, an open discussion forum for arXiv papers that allows questions and comments to be posted directly on any arXiv paper. Website link: https://alphaxiv.org/ In fact, there is no need to visit this website specifically. Just change arXiv in any URL to alphaXiv to directly open the corresponding paper on the alphaXiv forum: you can accurately locate the paragraphs in the paper, Sentence: In the discussion area on the right, users can post questions to ask the author about the ideas and details of the paper. For example, they can also comment on the content of the paper, such as: "Given to

Axiomatic training allows LLM to learn causal reasoning: the 67 million parameter model is comparable to the trillion parameter level GPT-4

Jul 17, 2024 am 10:14 AM

Axiomatic training allows LLM to learn causal reasoning: the 67 million parameter model is comparable to the trillion parameter level GPT-4

Jul 17, 2024 am 10:14 AM

Show the causal chain to LLM and it learns the axioms. AI is already helping mathematicians and scientists conduct research. For example, the famous mathematician Terence Tao has repeatedly shared his research and exploration experience with the help of AI tools such as GPT. For AI to compete in these fields, strong and reliable causal reasoning capabilities are essential. The research to be introduced in this article found that a Transformer model trained on the demonstration of the causal transitivity axiom on small graphs can generalize to the transitive axiom on large graphs. In other words, if the Transformer learns to perform simple causal reasoning, it may be used for more complex causal reasoning. The axiomatic training framework proposed by the team is a new paradigm for learning causal reasoning based on passive data, with only demonstrations