Are there thieves among large models too? To protect your parameters, give your large model a 'human-readable fingerprint'

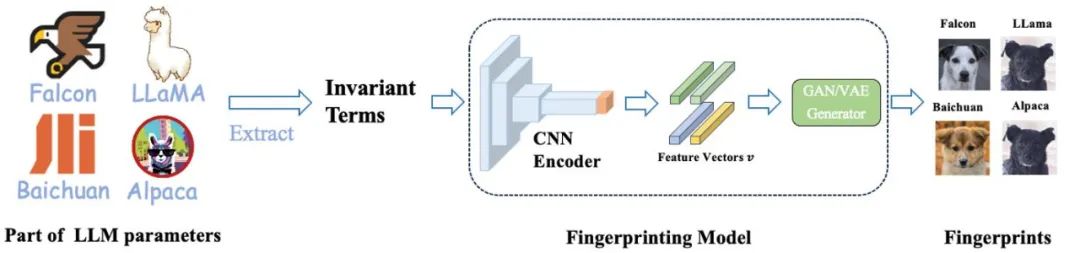

Different base models are symbolized as different breeds of dogs, and the same "dog-shaped fingerprint" indicates that they are derived from the same base model.

Pre-training of large models requires a large amount of computing resources and data. Therefore, the parameters of pre-trained models have become the core competitiveness and assets that major institutions focus on protecting. However, unlike traditional software intellectual property protection, there are two new problems in judging the misappropriation of pre-trained model parameters:

1) The parameters of pre-trained models, especially models with hundreds of billions of parameters, are usually not open-sourced.

2) The output and parameters of a pre-trained model are affected by subsequent processing steps (such as SFT, RLHF, continued pretraining, etc.), which makes it difficult to judge whether a model was fine-tuned from another existing model. Judging by model output and judging by model parameters both pose challenges.

Therefore, the protection of large model parameters is a new problem that lacks effective solutions.

The Lumia research team of Professor Lin Zhouhan of Shanghai Jiao Tong University has developed an innovative technology that can identify ancestry relationships between large models. This approach employs a human-readable fingerprint of a large model without exposing model parameters. The research and development of this technology is of great significance to the development and application of large models.

The approach offers two ways to tell models apart. The first is quantitative: it determines whether a pre-trained base model has been stolen by comparing the similarity between the model under test and a series of base models. The second is qualitative: it generates human-readable "dog pictures" so that inheritance relationships between models can be spotted quickly.

Fingerprints for 6 different base models (first row) and their corresponding descendant models (bottom two rows).

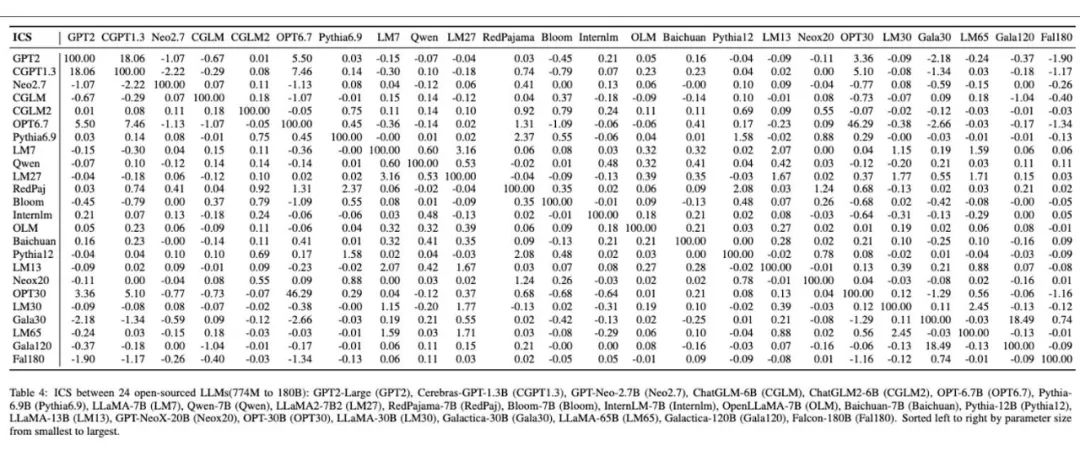

Human-readable large model fingerprints produced for 24 different large models.

Motivation and overall approach

The rapid development of large models has opened up a wide range of applications, but it has also brought a series of new challenges. Two problems stand out:

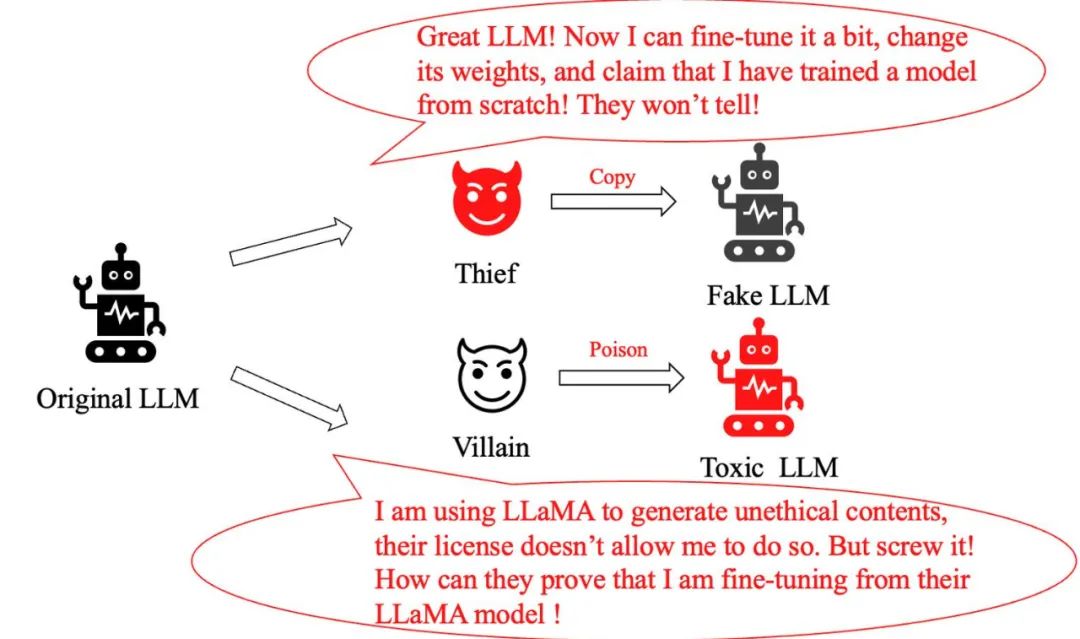

Model theft: a clever "thief" makes only minor adjustments to the original large model, then claims to have created a brand new model and exaggerates his own contribution. How do we identify a pirated model?

Model abuse: when a criminal maliciously modifies the LLaMA model and uses it to generate harmful information, even though Meta's policy clearly prohibits this, how do we prove that the LLaMA model was used?

Previously, conventional approaches to this type of problem included adding watermarks during model training and inference, or classifying the text generated by large models. However, these methods either impair the performance of large models or are easily circumvented by simple fine-tuning or further pretraining.

This raises a key question: is there a method that does not interfere with the output distribution of a large model, is robust to fine-tuning and further pretraining, and can still accurately trace the base model of a large model, so as to effectively protect model copyright?

A team from Shanghai Jiao Tong University drew inspiration from the unique characteristics of human fingerprints and developed a method to create "human-readable fingerprints" for large models. They symbolized different base models as different breeds of dogs, with the same "dog-shaped fingerprint" indicating that they were derived from the same base model.

This intuitive method allows the public to easily identify the connections between different large models, and trace the base model of the model through these fingerprints, effectively preventing model piracy and abuse. It is worth noting that manufacturers of large models do not need to publish their parameters, only the invariants used to generate fingerprints.

The "fingerprints" of Alpaca and LLaMA are very similar, because the Alpaca model was obtained by fine-tuning LLaMA. The fingerprints of the other models differ visibly, which reflects that they originate from different base models.

The paper "HUREF: HUMAN-READABLE FINGERPRINT FOR LARGE LANGUAGE MODELS":

Paper download address: https://arxiv.org/pdf/2312.04828.pdf

Invariant terms observed from experiments

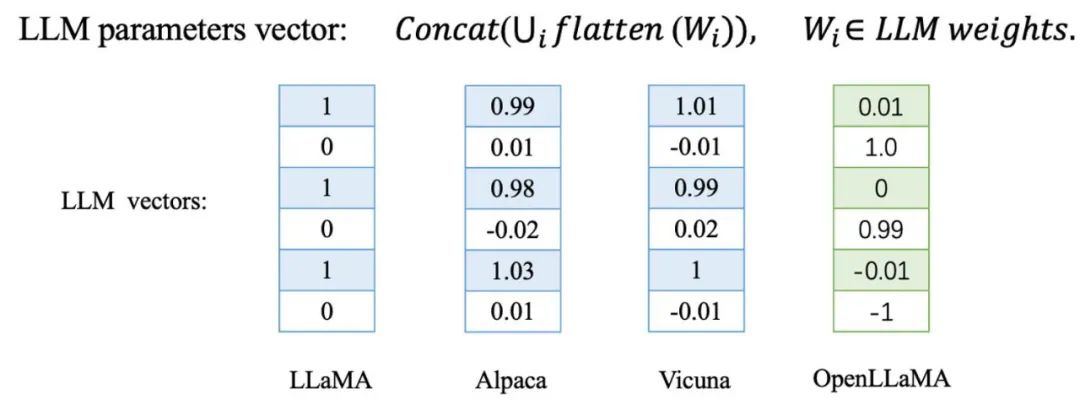

The Jiaotong University team found that when fine-tuning or further pretraining large models, the direction of the parameter vectors of these models changes very slightly. In contrast, for a large model trained from scratch, its parameter direction will be completely different from other base models.

They verified this on a series of models derived from LLaMA, including Alpaca and Vicuna, obtained by fine-tuning LLaMA, and Chinese LLaMA and Chinese Alpaca, obtained by further pretraining LLaMA. They also tested independently trained base models such as Baichuan and Shusheng.

The LLaMA-derived models marked in blue in the table show extremely high cosine similarity with the LLaMA-7B base model in their parameter vectors, which means these derived models stay very close to the base model in parameter vector direction. In contrast, the independently trained base models marked in red present a completely different picture: their parameter vector directions are essentially unrelated to LLaMA's.
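The comparison behind that table can be sketched in a few lines. This is only a minimal illustration, assuming each model's weights are flattened into one long vector; the three toy "models" and their values are hypothetical stand-ins, not real checkpoints.

```python
import math


def flatten(params):
    """Concatenate all weight matrices (lists of rows) into one flat vector.

    Keys are visited in sorted order so two models are flattened consistently.
    """
    return [w for name in sorted(params) for row in params[name] for w in row]


def cosine_similarity(u, v):
    """Cosine of the angle between two equally long vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical toy weights standing in for a base model.
base = {"w1": [[0.5, -1.0], [2.0, 0.1]], "w2": [[1.5, -0.3]]}
# A "fine-tuned" copy: every weight is nudged slightly.
finetuned = {"w1": [[0.52, -0.98], [1.97, 0.12]], "w2": [[1.49, -0.31]]}
# An "independently trained" model: unrelated weights.
independent = {"w1": [[-1.2, 0.4], [0.3, -0.9]], "w2": [[0.1, 1.1]]}

sim_finetuned = cosine_similarity(flatten(base), flatten(finetuned))
sim_independent = cosine_similarity(flatten(base), flatten(independent))
print(f"base vs fine-tuned:  {sim_finetuned:.4f}")
print(f"base vs independent: {sim_independent:.4f}")
```

With these toy numbers the fine-tuned copy stays almost parallel to the base, while the unrelated model does not. Real models have billions of parameters, but the quantity being compared is the same.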

Based on these observations, they considered whether they could create a fingerprint of the model based on this empirical regularity. However, a key question remains: is this approach robust enough against malicious attacks?

To verify this, the research team added the parameter similarity between models as a penalty loss when fine-tuning LLaMA, so that the model's parameter direction would move as far as possible from the base model during fine-tuning. This tests whether a model can leave the original parameter direction while maintaining its performance:

They tested the original model and the model obtained by adding penalty loss fine-tuning on 8 benchmarks such as BoolQ and MMLU. As you can see from the chart below, the model's performance deteriorates rapidly as the cosine similarity decreases. This shows that it is quite difficult to deviate from the original parameter direction without damaging the ability of the base model!
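The article does not give the exact form of the penalty, so the following is only a sketch under assumptions: the similarity term is taken to be the cosine similarity between the current and base parameter vectors, and `penalty_weight` is a hypothetical coefficient. Minimizing such a loss rewards the optimizer for moving away from the base model's direction.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equally long vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def penalized_loss(task_loss, params, base_params, penalty_weight):
    """Task loss plus a penalty that grows with similarity to the base model.

    Assumed form (not taken from the source):
        task_loss + penalty_weight * cos(params, base_params)
    """
    return task_loss + penalty_weight * cosine_similarity(params, base_params)


base = [0.5, -1.0, 2.0]
same_direction = [1.0, -2.0, 4.0]  # rescaled copy of base: similarity close to 1
orthogonal = [2.0, 1.0, 0.0]       # orthogonal to base: similarity 0

loss_same = penalized_loss(1.0, same_direction, base, penalty_weight=0.5)
loss_orth = penalized_loss(1.0, orthogonal, base, penalty_weight=0.5)
print(loss_same, loss_orth)
```

For the same task loss, parameters that still point in the base model's direction are charged the full penalty, while parameters orthogonal to it are charged nothing. The experiment above suggests that, in practice, reaching low similarity this way costs the model its capabilities.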

At this point, the parameter vector direction of a large model looks like an extremely effective and robust indicator for identifying its base model. However, using the parameter vector direction directly as an identification tool has two problems. First, it requires revealing the model's parameters, which may not be acceptable for many large models. Second, an attacker can simply permute the hidden units to change the direction of the parameter vector without sacrificing model performance.

Take the feedforward network (FFN) in a Transformer as an example: by simply permuting the hidden units and rearranging their weights accordingly, the weight direction can be modified without changing the network's output.
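One way to read this attack is as a reordering (permutation) of the hidden units. The sketch below assumes a bias-free two-layer FFN with ReLU and made-up toy weights. It reorders the hidden units, rearranges both weight matrices to match, and then checks that the output is unchanged while the flattened weight direction is not.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def ffn(w_in, w_out, x):
    """Bias-free two-layer feed-forward block: w_out @ relu(w_in @ x)."""
    hidden = [max(0.0, h) for h in matvec(w_in, x)]
    return matvec(w_out, hidden)


def cosine_similarity(u, v):
    """Cosine of the angle between two equally long vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def flatten(*matrices):
    """Concatenate several matrices into one flat parameter vector."""
    return [w for m in matrices for row in m for w in row]


# Hypothetical toy weights: 2 inputs, 3 hidden units, 2 outputs.
w_in = [[1.0, -2.0], [0.5, 0.3], [-1.5, 2.0]]   # shape: hidden x input
w_out = [[0.2, -1.0, 0.7], [1.1, 0.4, -0.6]]    # shape: output x hidden

# perm[i] is the old index of the hidden unit placed at new position i.
perm = [2, 0, 1]
w_in_attacked = [w_in[j] for j in perm]                      # reorder rows
w_out_attacked = [[row[j] for j in perm] for row in w_out]   # reorder columns

x = [0.8, 1.2]
original_output = ffn(w_in, w_out, x)
attacked_output = ffn(w_in_attacked, w_out_attacked, x)
direction_similarity = cosine_similarity(
    flatten(w_in, w_out), flatten(w_in_attacked, w_out_attacked)
)
print(original_output, attacked_output, direction_similarity)
```

The two outputs agree up to floating-point rounding, yet the cosine similarity between the original and attacked weight vectors drops far below 1. A naive comparison of parameter directions would therefore miss the relationship.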

In addition, the team analyzed in depth linear mapping attacks and permutation attacks on the word embeddings of large models. These findings raise a question: how can such diverse attacks be countered effectively?

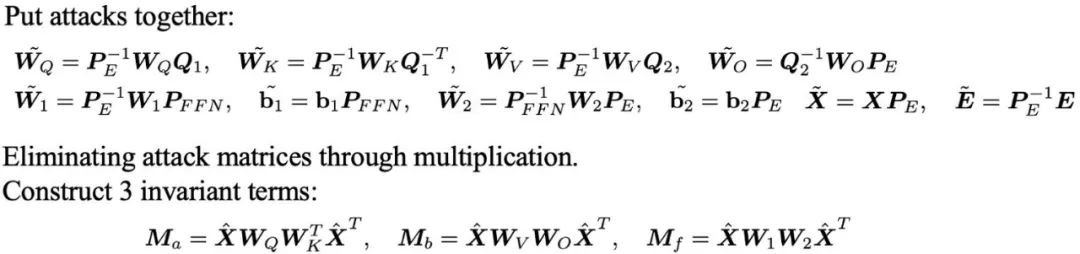

They derived three sets of invariants that are robust to these attacks by eliminating the attack matrices through multiplication between parameter matrices.
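The article does not spell out the three invariants, so the following only illustrates the cancellation idea on a single hypothetical case. If an attacker reorders the hidden units of an FFN with a permutation matrix P, the two weight matrices become P·W_in and W_out·Pᵀ, and their product (W_out·Pᵀ)(P·W_in) = W_out·W_in no longer contains P. The toy weights below are made up for the illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    inner, cols = len(b), len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for row in a
    ]


# Hypothetical toy FFN weights: 2 inputs, 3 hidden units, 2 outputs.
w_in = [[1.0, -2.0], [0.5, 0.3], [-1.5, 2.0]]   # shape: hidden x input
w_out = [[0.2, -1.0, 0.7], [1.1, 0.4, -0.6]]    # shape: output x hidden

# Permutation attack: perm[i] is the old index of the unit at new position i.
perm = [2, 0, 1]
w_in_attacked = [w_in[j] for j in perm]                      # P @ w_in
w_out_attacked = [[row[j] for j in perm] for row in w_out]   # w_out @ P^T

# The product of the two matrices cancels the permutation.
invariant_original = matmul(w_out, w_in)
invariant_attacked = matmul(w_out_attacked, w_in_attacked)

max_gap = max(
    abs(a - b)
    for row_a, row_b in zip(invariant_original, invariant_attacked)
    for a, b in zip(row_a, row_b)
)
print(invariant_original)
print(invariant_attacked)
print(max_gap)
```

The individual matrices change under the attack, but their product agrees up to floating-point rounding. The same reasoning applies to any attack matrix whose inverse appears in the neighbouring parameter matrix.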

From invariants to human-readable fingerprints

Although the invariants derived above are sufficient as identity markers for large models, they usually take the form of huge matrices. These are unintuitive, and they require additional similarity calculations to determine the relationship between different large models. Is there a more intuitive and understandable way to present this information?

In order to solve this problem, the Shanghai Jiao Tong University team developed a method for generating human-readable fingerprints from model parameters—HUREF.

They first extract invariants from some of the parameters of the large model. A CNN encoder then encodes the invariant matrix, while preserving locality, into a feature vector that follows a Gaussian distribution. Finally, a smooth GAN or VAE serves as an image generator that decodes the feature vector into a visual image (i.e., a dog picture). These images are not only human-readable but also show the similarities between different models at a glance, effectively serving as a "visual fingerprint" for large models. The following is the detailed training and inference process.

In this framework, the CNN Encoder is the only part that needs to be trained. They use contrastive learning to ensure the local preservation of the Encoder, while using generative adversarial learning to ensure that the feature vector obeys a Gaussian distribution, consistent with the input space of the GAN or VAE generator.

Importantly, during the training process, they do not need to use any real model parameters, all data are obtained through normal distribution sampling. In practical applications, the trained CNN Encoder and the off-the-shelf StyleGAN2 generator trained on the AFHQ dog data set are directly used for inference.

Generating fingerprints for different large models

To verify the effectiveness of this method, the team conducted experiments on a variety of widely used large models. They selected several well-known open source large models, such as Falcon, MPT, LLaMA2, Qwen, Baichuan and InternLM, together with their derivative models, computed the invariants of these models, and generated the fingerprint images shown in the figure below.

The fingerprints of the derived models are very similar to their original models, and we can intuitively identify from the images which prototype model they are based on. In addition, these derived models also maintain a high cosine similarity with the original model in terms of invariants.

Subsequently, they conducted extensive testing on the LLaMA family of models, including Alpaca and Vicuna obtained by SFT, models with extended Chinese vocabulary, Chinese LLaMA and BiLLa obtained by further pretraining, Beaver obtained by RLHF, and the multimodal model MiniGPT-4.

The table shows the cosine similarity of invariants between LLaMA family models, and the picture shows the fingerprint images generated for these 14 models. Their similarity is still very high, and the fingerprint images make it clear that they come from the same base model. It is worth noting that these models cover a variety of training methods, such as SFT, further pretraining, RLHF and multimodality, which further validates the robustness of the team's method under the different training paradigms a large model may go through afterwards.

In addition, the figure below shows the results of their experiments on 24 independently trained open source base models. With their method, each independent base model receives a unique fingerprint image, which vividly demonstrates how much fingerprints differ between different large models. In the table, the similarity calculations between these models are consistent with the differences seen in their fingerprint images.

The team also verified, at a smaller scale, the uniqueness and stability of the parameter direction of independently trained language models. They pre-trained four GPT-NeoX-350M models from scratch using one-tenth of the Pile dataset.

These models are identical in setup, the only difference is the use of different random number seeds. It is obvious from the chart below that only the difference in random number seeds leads to significantly different model parameter directions and fingerprints, which fully illustrates the uniqueness of the independently trained language model parameter directions.

Finally, by comparing the similarity of adjacent checkpoints, they found that during the pre-training process, the parameters of the model gradually tended to be stable. They believe that this trend will be more obvious in longer training steps and larger models, which also partly explains the effectiveness of their method.

The above is the detailed content of There are also thieves in large models? To protect your parameters, submit the large model to make a 'human-readable fingerprint'. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1382

1382

52

52

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

The author of ControlNet has another hit! The whole process of generating a painting from a picture, earning 1.4k stars in two days

Jul 17, 2024 am 01:56 AM

It is also a Tusheng video, but PaintsUndo has taken a different route. ControlNet author LvminZhang started to live again! This time I aim at the field of painting. The new project PaintsUndo has received 1.4kstar (still rising crazily) not long after it was launched. Project address: https://github.com/lllyasviel/Paints-UNDO Through this project, the user inputs a static image, and PaintsUndo can automatically help you generate a video of the entire painting process, from line draft to finished product. follow. During the drawing process, the line changes are amazing. The final video result is very similar to the original image: Let’s take a look at a complete drawing.

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

Topping the list of open source AI software engineers, UIUC's agent-less solution easily solves SWE-bench real programming problems

Jul 17, 2024 pm 10:02 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com The authors of this paper are all from the team of teacher Zhang Lingming at the University of Illinois at Urbana-Champaign (UIUC), including: Steven Code repair; Deng Yinlin, fourth-year doctoral student, researcher

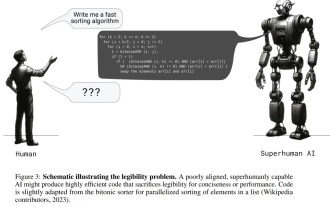

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

Posthumous work of the OpenAI Super Alignment Team: Two large models play a game, and the output becomes more understandable

Jul 19, 2024 am 01:29 AM

If the answer given by the AI model is incomprehensible at all, would you dare to use it? As machine learning systems are used in more important areas, it becomes increasingly important to demonstrate why we can trust their output, and when not to trust them. One possible way to gain trust in the output of a complex system is to require the system to produce an interpretation of its output that is readable to a human or another trusted system, that is, fully understandable to the point that any possible errors can be found. For example, to build trust in the judicial system, we require courts to provide clear and readable written opinions that explain and support their decisions. For large language models, we can also adopt a similar approach. However, when taking this approach, ensure that the language model generates

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

From RLHF to DPO to TDPO, large model alignment algorithms are already 'token-level'

Jun 24, 2024 pm 03:04 PM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com In the development process of artificial intelligence, the control and guidance of large language models (LLM) has always been one of the core challenges, aiming to ensure that these models are both powerful and safe serve human society. Early efforts focused on reinforcement learning methods through human feedback (RL

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

arXiv papers can be posted as 'barrage', Stanford alphaXiv discussion platform is online, LeCun likes it

Aug 01, 2024 pm 05:18 PM

cheers! What is it like when a paper discussion is down to words? Recently, students at Stanford University created alphaXiv, an open discussion forum for arXiv papers that allows questions and comments to be posted directly on any arXiv paper. Website link: https://alphaxiv.org/ In fact, there is no need to visit this website specifically. Just change arXiv in any URL to alphaXiv to directly open the corresponding paper on the alphaXiv forum: you can accurately locate the paragraphs in the paper, Sentence: In the discussion area on the right, users can post questions to ask the author about the ideas and details of the paper. For example, they can also comment on the content of the paper, such as: "Given to

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

A significant breakthrough in the Riemann Hypothesis! Tao Zhexuan strongly recommends new papers from MIT and Oxford, and the 37-year-old Fields Medal winner participated

Aug 05, 2024 pm 03:32 PM

Recently, the Riemann Hypothesis, known as one of the seven major problems of the millennium, has achieved a new breakthrough. The Riemann Hypothesis is a very important unsolved problem in mathematics, related to the precise properties of the distribution of prime numbers (primes are those numbers that are only divisible by 1 and themselves, and they play a fundamental role in number theory). In today's mathematical literature, there are more than a thousand mathematical propositions based on the establishment of the Riemann Hypothesis (or its generalized form). In other words, once the Riemann Hypothesis and its generalized form are proven, these more than a thousand propositions will be established as theorems, which will have a profound impact on the field of mathematics; and if the Riemann Hypothesis is proven wrong, then among these propositions part of it will also lose its effectiveness. New breakthrough comes from MIT mathematics professor Larry Guth and Oxford University

Axiomatic training allows LLM to learn causal reasoning: the 67 million parameter model is comparable to the trillion parameter level GPT-4

Jul 17, 2024 am 10:14 AM

Axiomatic training allows LLM to learn causal reasoning: the 67 million parameter model is comparable to the trillion parameter level GPT-4

Jul 17, 2024 am 10:14 AM

Show the causal chain to LLM and it learns the axioms. AI is already helping mathematicians and scientists conduct research. For example, the famous mathematician Terence Tao has repeatedly shared his research and exploration experience with the help of AI tools such as GPT. For AI to compete in these fields, strong and reliable causal reasoning capabilities are essential. The research to be introduced in this article found that a Transformer model trained on the demonstration of the causal transitivity axiom on small graphs can generalize to the transitive axiom on large graphs. In other words, if the Transformer learns to perform simple causal reasoning, it may be used for more complex causal reasoning. The axiomatic training framework proposed by the team is a new paradigm for learning causal reasoning based on passive data, with only demonstrations

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The first Mamba-based MLLM is here! Model weights, training code, etc. have all been open source

Jul 17, 2024 am 02:46 AM

The AIxiv column is a column where this site publishes academic and technical content. In the past few years, the AIxiv column of this site has received more than 2,000 reports, covering top laboratories from major universities and companies around the world, effectively promoting academic exchanges and dissemination. If you have excellent work that you want to share, please feel free to contribute or contact us for reporting. Submission email: liyazhou@jiqizhixin.com; zhaoyunfeng@jiqizhixin.com. Introduction In recent years, the application of multimodal large language models (MLLM) in various fields has achieved remarkable success. However, as the basic model for many downstream tasks, current MLLM consists of the well-known Transformer network, which