Tongyi Qianwen goes open source again: Qwen1.5 brings models in six sizes, with performance exceeding GPT-3.5

Ahead of the Spring Festival, version 1.5 of the Tongyi Qianwen model (Qwen) has gone online. News of the new version, released this morning, drew wide attention in the AI community.

The new release includes models in six sizes: 0.5B, 1.8B, 4B, 7B, 14B, and 72B. The strongest of them outperforms GPT-3.5 and Mistral-Medium. The release covers both Base and Chat models and offers multilingual support.

The Alibaba Tongyi Qianwen team stated that the underlying technology is also live on the Tongyi Qianwen official website and the Tongyi Qianwen app.

Today's Qwen1.5 release also has the following highlights:

- Supports a context length of 32K tokens;

- Open-sourced checkpoints for both the Base and Chat models;

- Can be run locally with Transformers;

- Released GPTQ Int4/Int8, AWQ, and GGUF quantized weights at the same time.

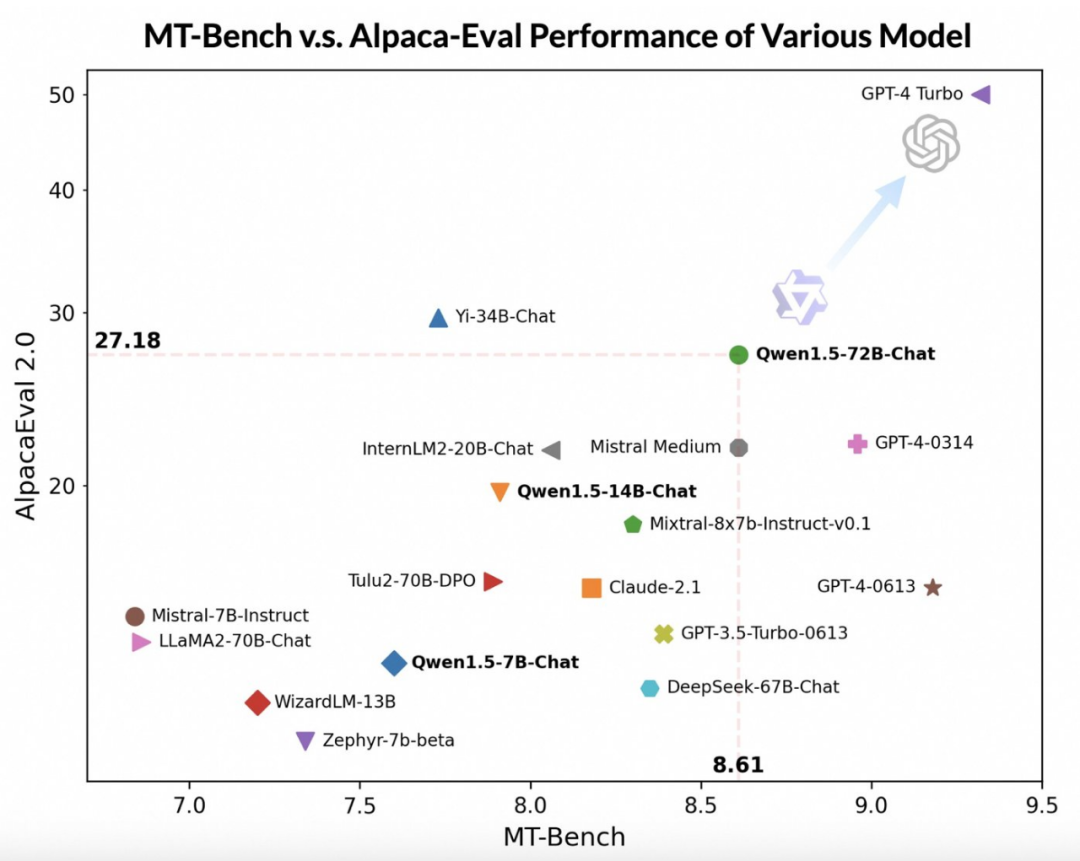

Using more advanced large models as judges, the Tongyi Qianwen team carried out a preliminary assessment of Qwen1.5 on two widely used benchmarks, MT-Bench and Alpaca-Eval. The results are as follows:

Although Qwen1.5-72B-Chat still lags behind GPT-4-Turbo, it performed impressively in tests on both MT-Bench and Alpaca-Eval v2. It outperforms Claude-2.1, GPT-3.5-Turbo-0613, Mixtral-8x7b-instruct, and TULU 2 DPO 70B, and is comparable to Mistral Medium, a model that has attracted much attention recently. This indicates that Qwen1.5-72B-Chat has considerable strength in natural language processing.

The Tongyi Qianwen team pointed out that although judge scores can be influenced by answer length, human inspection indicates that Qwen1.5's ratings are not inflated by overly long answers. According to AlpacaEval 2.0 data, the average length of Qwen1.5-Chat responses is 1618, on par with GPT-4 and shorter than GPT-4-Turbo.
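Judge-based benchmarks such as Alpaca-Eval report how often a stronger model prefers a candidate's answer over a reference answer, and the length caveat above is why answer length is worth tracking next to that score. The sketch below is a simplified illustration, not the benchmark's official scorer: it assumes each judgment is a discrete "win", "tie", or "loss" with ties counted as half, and it measures length in characters, whereas the article does not state the unit behind the 1618 figure. The `JudgedPair` type and helper names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

# Assumed scoring: a tie counts as half a win (a simplification, not Alpaca-Eval's official rule).
VERDICT_SCORE = {"win": 1.0, "tie": 0.5, "loss": 0.0}


@dataclass
class JudgedPair:
    """One benchmark prompt judged by a stronger model (hypothetical record type)."""
    candidate_answer: str
    reference_answer: str
    verdict: str  # "win", "tie" or "loss", from the candidate's point of view


def win_rate(pairs: list[JudgedPair]) -> float:
    """Percentage of comparisons won by the candidate, with ties counted as half."""
    return 100.0 * mean(VERDICT_SCORE[p.verdict] for p in pairs)


def average_length(pairs: list[JudgedPair]) -> float:
    """Mean candidate answer length in characters, used to spot length bias."""
    return mean(len(p.candidate_answer) for p in pairs)


if __name__ == "__main__":
    pairs = [
        JudgedPair("short answer", "reference", "win"),
        JudgedPair("a somewhat longer answer", "reference", "tie"),
        JudgedPair("wrong", "reference", "loss"),
        JudgedPair("another good answer", "reference", "win"),
    ]
    print(f"win rate: {win_rate(pairs):.1f}%")  # (1 + 0.5 + 0 + 1) / 4 -> 62.5%
    print(f"average length: {average_length(pairs):.1f} chars")
```

Reporting both numbers side by side makes it easy to see whether a high win rate coincides with unusually long answers.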

The Tongyi Qianwen developers said that over recent months they have focused on building excellent models while continuously improving the developer experience.

Compared with previous versions, this update focuses on better aligning the Chat models with human preferences and significantly strengthens multilingual capabilities. On sequence length, models of every size now support a context length of 32,768 tokens. The quality of the pre-trained Base models has also been substantially optimized, which should make for a better fine-tuning experience.

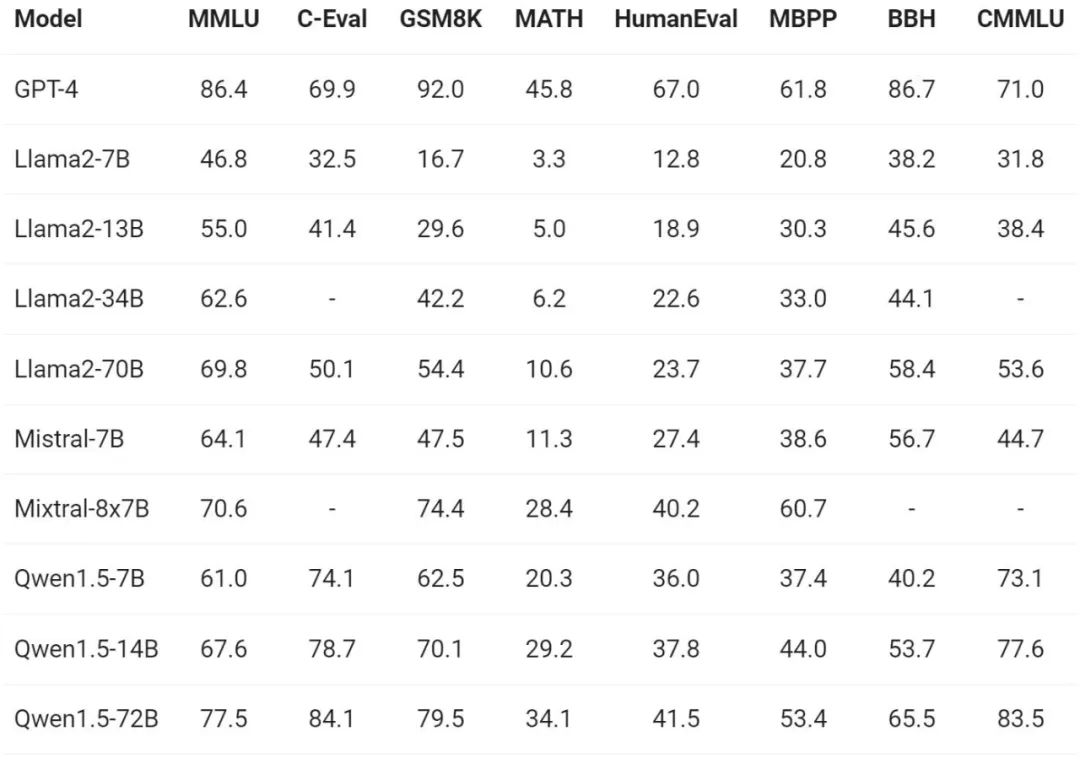

Basic capabilities

To evaluate the models' basic capabilities, the Tongyi Qianwen team tested Qwen1.5 on benchmark datasets including MMLU (5-shot), C-Eval, HumanEval, GSM8K, and BBH.

Across model sizes, Qwen1.5 performed strongly on these benchmarks, and the 72B version outperformed Llama2-70B on all of them, demonstrating its capabilities in language understanding, reasoning, and mathematics.

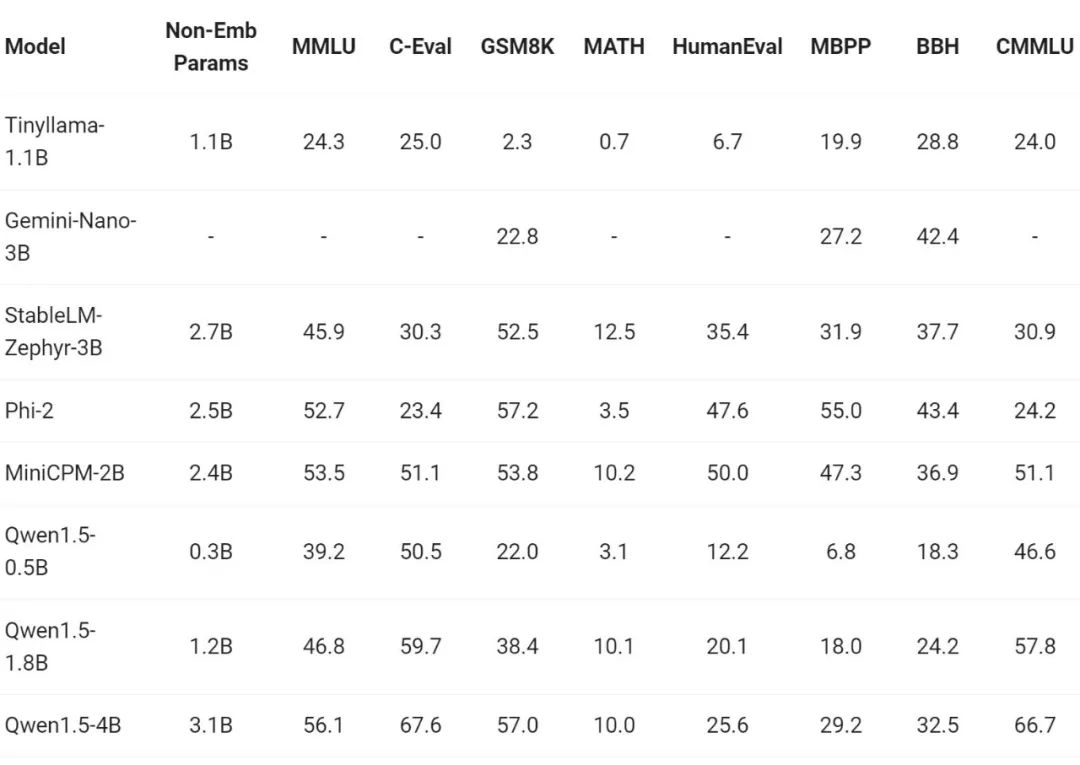

Building small models has recently been a hot topic in the industry. The Tongyi Qianwen team compared the Qwen1.5 models with fewer than 7 billion parameters against prominent small models from the community:

In the sub-7-billion-parameter range, Qwen1.5 is highly competitive with the industry's leading small models.

Multilingual capabilities

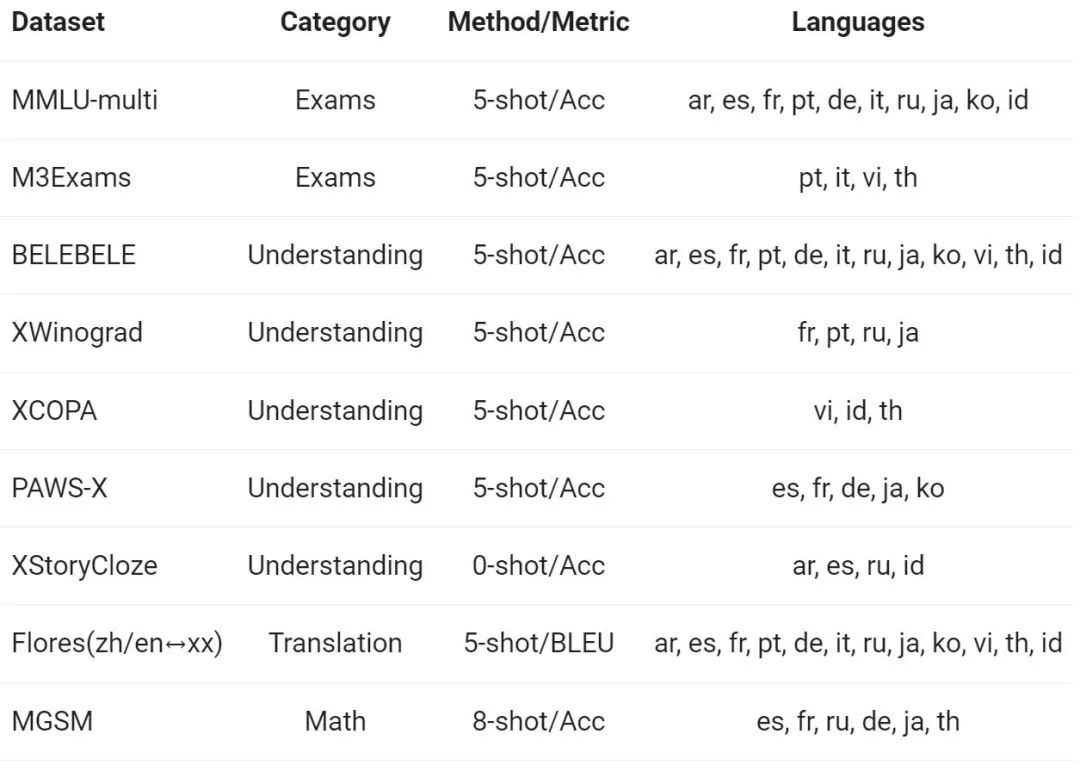

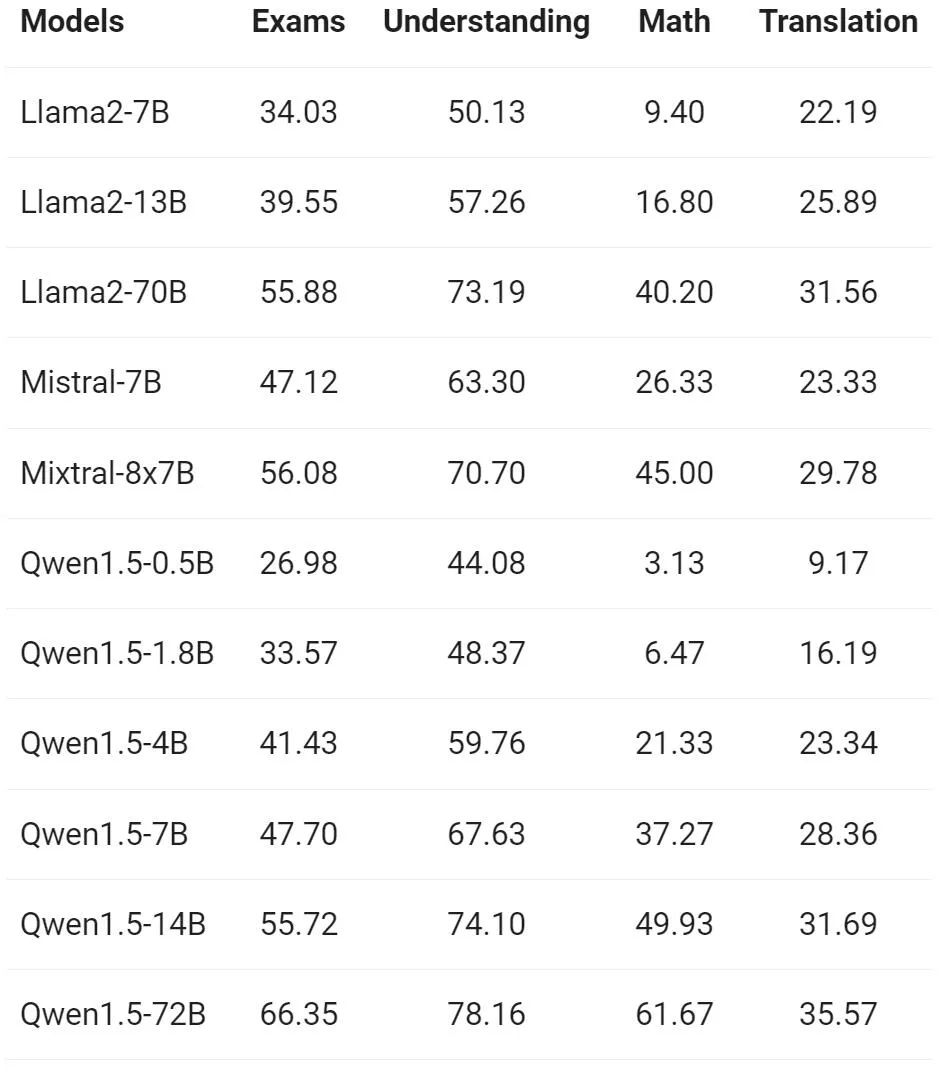

The Tongyi Qianwen team evaluated the multilingual capabilities of the Base models on 12 languages from Europe, East Asia, and Southeast Asia. Using public datasets from the open-source community, Alibaba researchers built the evaluation sets shown in the table below, covering four dimensions: exams, understanding, translation, and mathematics. The table gives details of each test set, including its evaluation configuration, evaluation metrics, and the languages involved.

The detailed results are as follows:

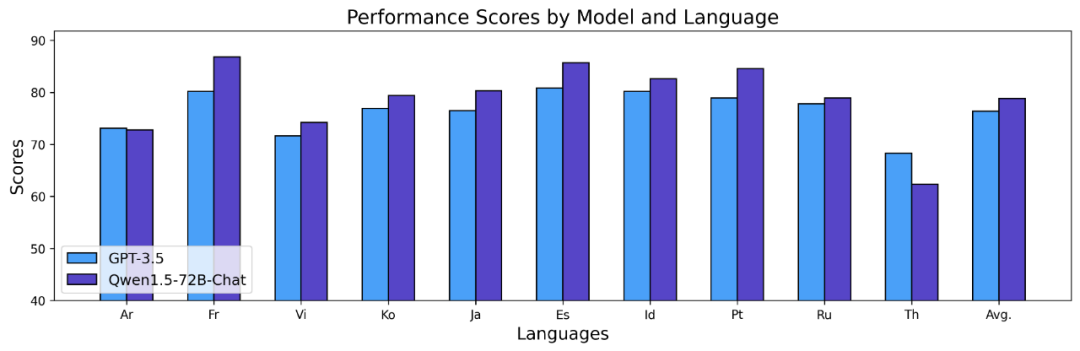

These results show that the Qwen1.5 Base models perform well across all 12 languages, with good results in every evaluated dimension: subject knowledge, language understanding, translation, and mathematics. For the multilingual capabilities of the Chat models, the following results can be observed:

Long sequences

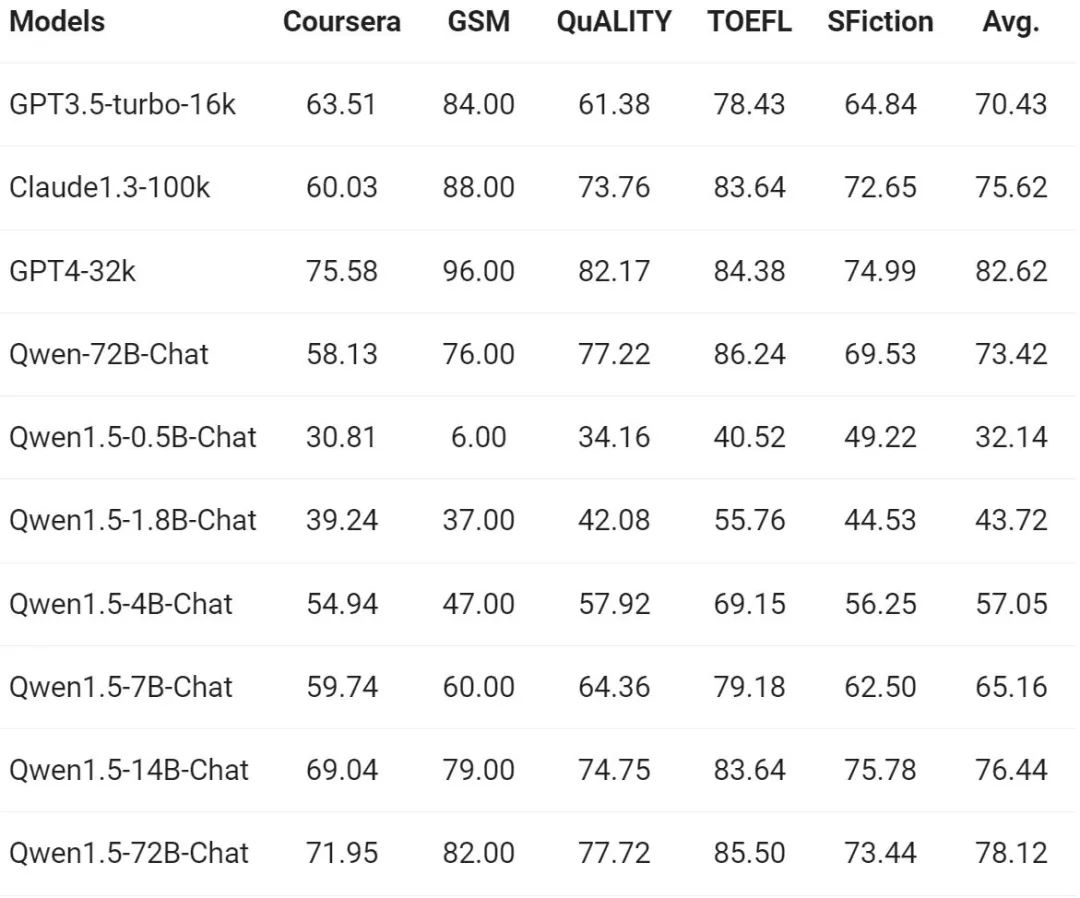

As demand for long-sequence understanding keeps growing, Alibaba has improved these capabilities in the new version: every Qwen1.5 model supports a context of 32K tokens. The Tongyi Qianwen team evaluated Qwen1.5 on the L-Eval benchmark, which measures a model's ability to generate responses grounded in a long context. The results are as follows:

The results show that even a small model such as Qwen1.5-7B-Chat performs comparably to GPT-3.5, while the largest model, Qwen1.5-72B-Chat, trails GPT-4-32k only slightly.

Note that these results only reflect Qwen1.5's performance at a length of 32K tokens; they do not mean the model's maximum supported length is 32K. Developers can try setting max_position_embeddings in config.json to a larger value and check whether the model gives satisfactory results in longer-context scenarios.
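Because config.json is plain JSON, the suggested edit can be scripted. A minimal sketch, assuming the checkpoint has already been downloaded to a local directory; in Hugging Face configs for Qwen1.5 the field is spelled max_position_embeddings. The helper name and any target value you pass are illustrative choices, not recommendations from the Qwen team.

```python
import json
from pathlib import Path
from typing import Optional


def extend_context_window(config_path: str, new_max_positions: int) -> Optional[int]:
    """Raise max_position_embeddings in a locally downloaded config.json.

    Returns the previous value so the edit can be reverted if long-context
    quality turns out to be unsatisfactory. Raising the limit does not by itself
    guarantee good results beyond the lengths the model was evaluated on.
    """
    path = Path(config_path)
    config = json.loads(path.read_text(encoding="utf-8"))
    old_value = config.get("max_position_embeddings")
    if old_value is not None and new_max_positions <= old_value:
        raise ValueError(
            f"new value {new_max_positions} must exceed the current {old_value}"
        )
    config["max_position_embeddings"] = new_max_positions
    # Rewrite the file, keeping every other setting unchanged.
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return old_value
```

For example, `extend_context_window("Qwen1.5-7B-Chat/config.json", 65536)` (a hypothetical local path) would return 32768 for a stock Qwen1.5 config and write the new limit; keeping the returned value makes it easy to restore the original setting after testing.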

Linking external systems

Today, much of the appeal of general-purpose language models lies in their potential to interface with external systems. Retrieval-augmented generation (RAG), a rapidly emerging task in the community, effectively addresses some typical challenges facing large language models, such as hallucination and the inability to access real-time updates or private data. Language models also show strong ability to use APIs and write code from instructions and examples, so they can use code interpreters or act as AI agents to deliver broader value.
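The RAG idea described above reduces to two steps: retrieve passages relevant to the question, then place them in the prompt so the model answers from supplied context rather than from memory alone. The toy sketch below uses bag-of-words cosine similarity as a stand-in for the embedding-based retrieval a real system would use; the function names and prompt wording are hypothetical, and this is not how the RGB benchmark or Qwen1.5 itself implements retrieval.

```python
import math
import re
from collections import Counter


def _tokens(text: str) -> list[str]:
    # Lowercased word tokens; a production system would use an embedding model instead.
    return re.findall(r"\w+", text.lower())


def _cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (ties keep their original order)."""
    q = Counter(_tokens(query))
    ranked = sorted(documents, key=lambda d: _cosine(q, Counter(_tokens(d))), reverse=True)
    return ranked[:k]


def build_rag_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Prepend the retrieved passages so the model answers from the supplied context."""
    passages = retrieve(query, documents, k)
    context = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(passages))
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )
```

The resulting string would then be sent to a chat model such as Qwen1.5-Chat as the user message; instructing the model to admit when the context is insufficient is one common way to discourage hallucinated answers.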

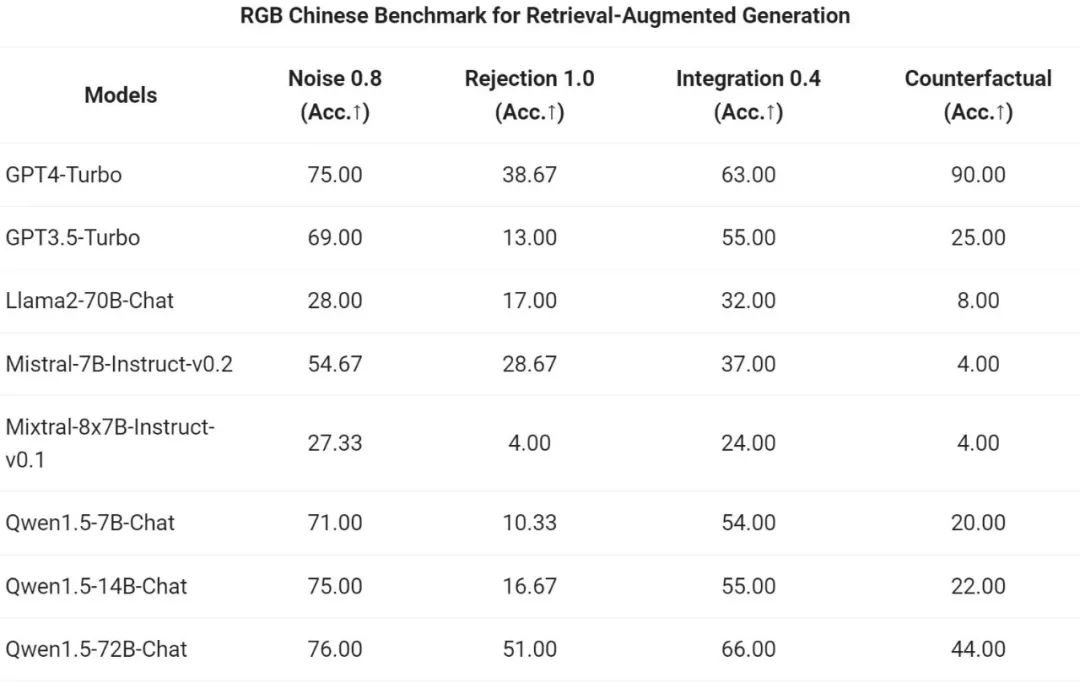

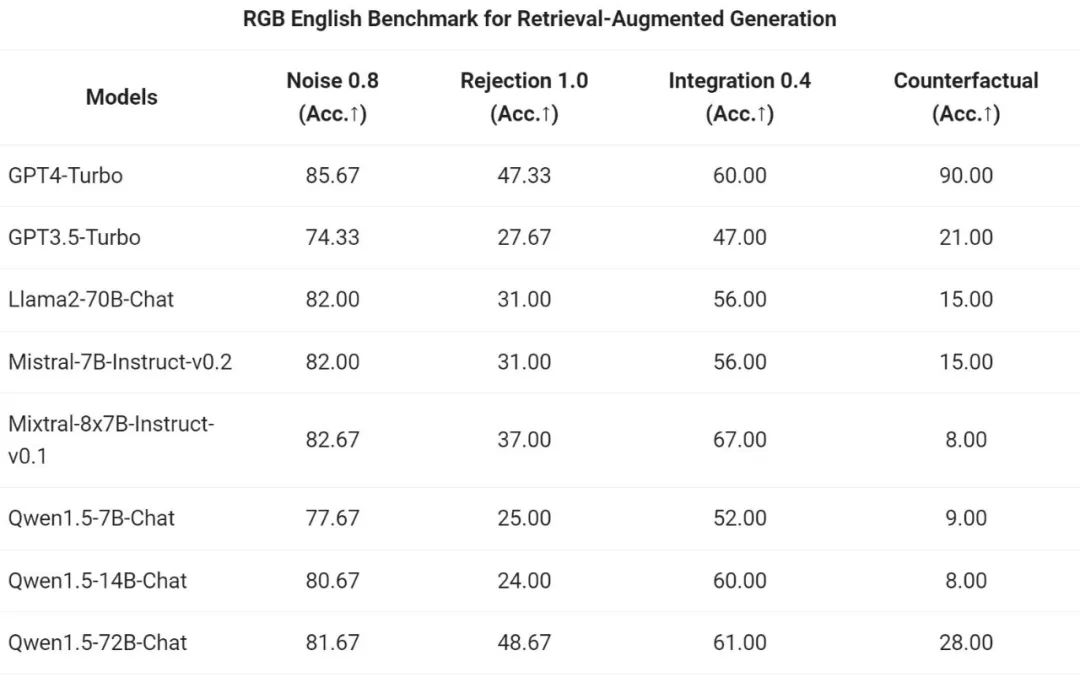

The Tongyi Qianwen team evaluated the end-to-end RAG performance of the Qwen1.5 Chat models on RGB, a test set for Chinese and English RAG evaluation:

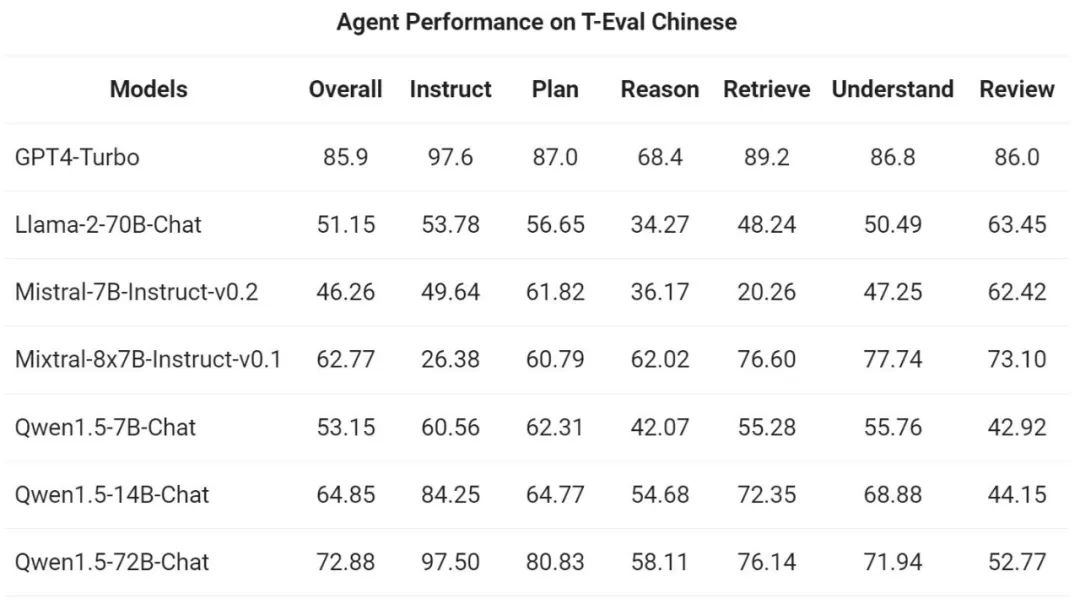

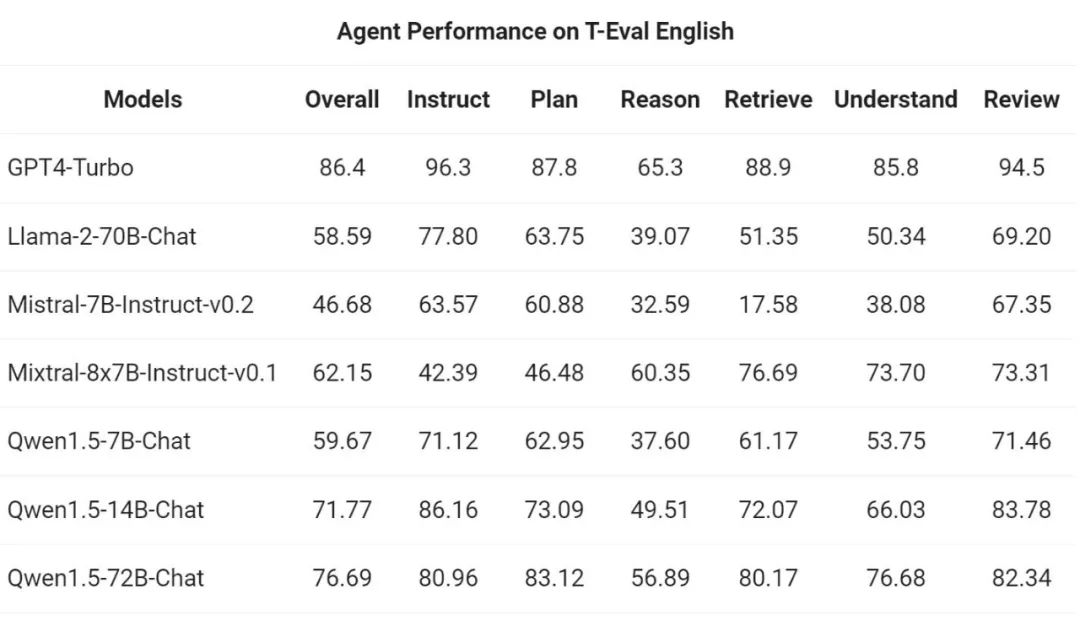

Next, the Tongyi Qianwen team evaluated Qwen1.5's ability to act as a general-purpose agent on the T-Eval benchmark. None of the Qwen1.5 models were specifically optimized for this benchmark:

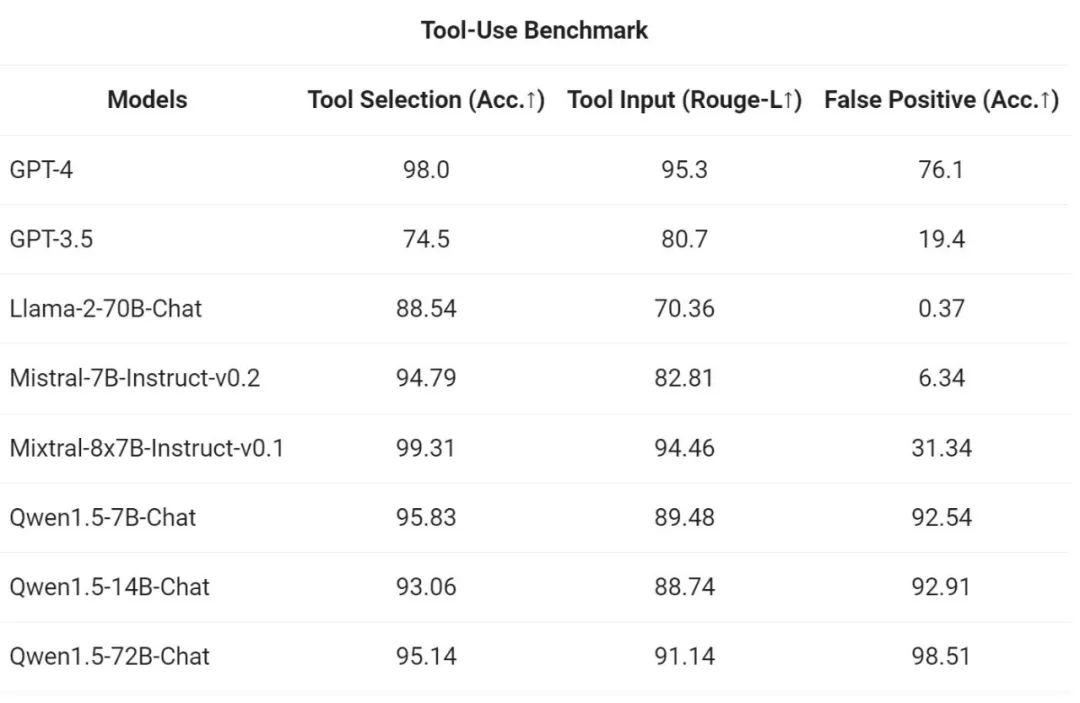

To test tool-calling ability, Alibaba used its own open-source evaluation benchmark to measure how well the models select and call the correct tools. The results are as follows:

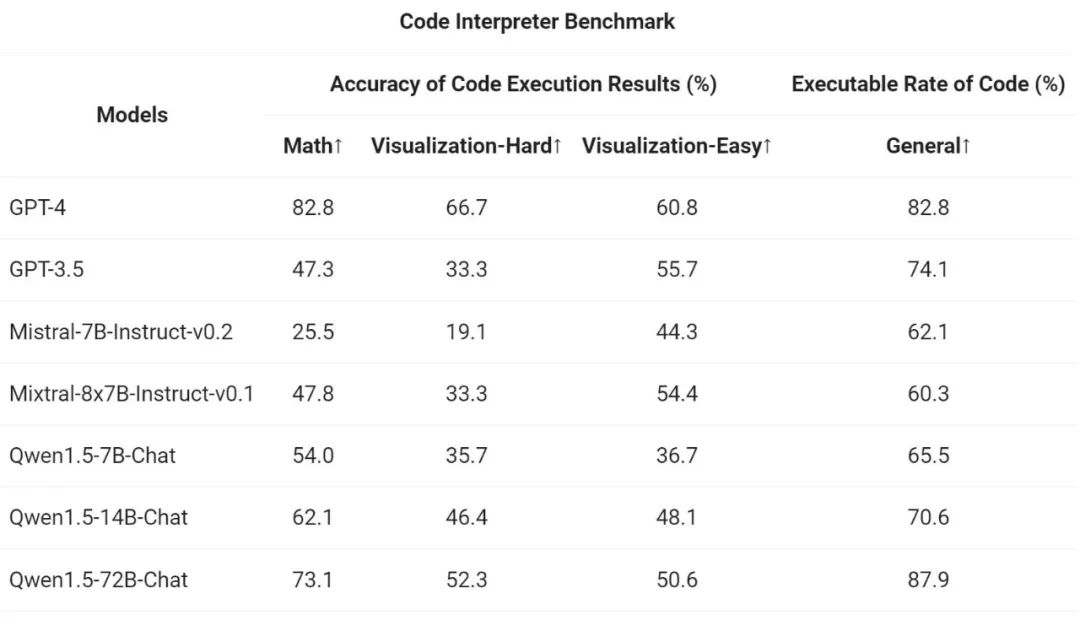
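Tool calling, as benchmarked above, comes down to the model choosing a tool and supplying well-formed arguments, after which the application executes the call. A minimal dispatcher sketch, assuming the model has been prompted to reply with a JSON object of the form {"name": ..., "arguments": {...}}; that format, the two example tools, and all function names are hypothetical and are not Qwen1.5's actual tool-calling protocol.

```python
import json
from typing import Any, Callable, Dict


def get_weather(city: str) -> str:
    """Hypothetical tool: a real one would query a weather service."""
    return f"Sunny in {city}"


def add(a: float, b: float) -> float:
    """Hypothetical tool: adds two numbers."""
    return a + b


TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather, "add": add}


def dispatch_tool_call(model_output: str, tools: Dict[str, Callable[..., Any]] = TOOLS) -> Any:
    """Parse a JSON tool call emitted by the model and run the matching function.

    Raises ValueError for malformed JSON, an unknown tool name, or arguments that
    the tool rejects, i.e. the "wrong tool" and "wrong call" errors that a
    tool-selection evaluation is concerned with.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    name = call.get("name")
    if not isinstance(name, str) or name not in tools:
        raise ValueError(f"unknown tool: {name!r}")
    arguments = call.get("arguments", {})
    if not isinstance(arguments, dict):
        raise ValueError("arguments must be a JSON object")
    try:
        return tools[name](**arguments)
    except TypeError as exc:
        # Missing or unexpected keyword arguments surface as TypeError.
        raise ValueError(f"bad arguments for {name}: {exc}") from exc
```

In an agent loop, the returned value (or the ValueError message) would be passed back to the model as the tool's observation so it can continue or correct itself.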

Finally, because the Python code interpreter has become an increasingly powerful tool for advanced LLMs, the Tongyi Qianwen team also evaluated the new models' ability to use it, based on its previously open-sourced evaluation benchmark:

The results show that larger Qwen1.5-Chat models generally outperform smaller ones, with Qwen1.5-72B-Chat approaching GPT-4 in tool use. However, on code interpreter tasks such as mathematical problem solving and visualization, even the largest model, Qwen1.5-72B-Chat, lags significantly behind GPT-4 in coding ability. Alibaba stated that future versions will improve the coding capabilities of all Qwen models during both pre-training and alignment.
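In the code-interpreter setting, the model writes Python, the host executes it, and the output is fed back to the model. A minimal sketch of the execution step, assuming the generated code arrives as a plain string; it runs in a separate interpreter process with a timeout, which prevents hangs but is not a security sandbox, so untrusted code would need stronger isolation. The function name and the result dictionary's keys are hypothetical.

```python
import subprocess
import sys


def run_generated_code(code: str, timeout_s: float = 10.0) -> dict:
    """Run model-generated Python in a fresh interpreter process and capture its output.

    A subprocess with a timeout only limits runaway execution; it is not a security sandbox.
    """
    try:
        result = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child process before raising TimeoutExpired.
        return {"ok": False, "stdout": "", "stderr": f"timed out after {timeout_s}s"}
    return {"ok": result.returncode == 0, "stdout": result.stdout, "stderr": result.stderr}
```

Returning stderr alongside stdout matters for the loop: a traceback such as a ZeroDivisionError gives the model something concrete to fix in its next attempt.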

Qwen1.5 has been integrated into the Hugging Face transformers codebase. Starting with version 4.37.0, developers can use Qwen1.5 with the library's native code, without loading any custom code (that is, without specifying the trust_remote_code option).
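Because the integration is native, loading Qwen1.5 needs only the standard transformers Auto classes. A minimal sketch, assuming PyTorch is installed and that the checkpoint is published under the Hugging Face repository name Qwen/Qwen1.5-7B-Chat (the article does not name repositories); the version check mirrors the 4.37.0 requirement above, and the helper names are hypothetical.

```python
import re
from importlib.metadata import version

# Per the article, native Qwen1.5 support arrived in transformers 4.37.0.
MIN_TRANSFORMERS = (4, 37, 0)


def parse_version(text: str) -> tuple:
    """Turn "4.37.0" or "4.40.0.dev0" into a comparable (major, minor, patch) tuple."""
    numbers = []
    for piece in text.split(".")[:3]:
        match = re.match(r"\d+", piece)
        if match is None:
            break
        numbers.append(int(match.group()))
    while len(numbers) < 3:
        numbers.append(0)  # treat "4.37" as "4.37.0"
    return tuple(numbers)


def chat(prompt: str, model_id: str = "Qwen/Qwen1.5-7B-Chat", max_new_tokens: int = 256) -> str:
    """Generate one reply using plain transformers code (no trust_remote_code)."""
    installed = version("transformers")
    if parse_version(installed) < MIN_TRANSFORMERS:
        raise RuntimeError(f"transformers {installed} is too old; 4.37.0 or later is needed")

    # Imported lazily so parse_version works even without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    # device_map="auto" additionally requires the accelerate package.
    model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype="auto", device_map="auto")

    messages = [{"role": "user", "content": prompt}]
    text = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
    inputs = tokenizer([text], return_tensors="pt").to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Drop the prompt tokens so only the newly generated reply is decoded.
    reply_ids = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(reply_ids, skip_special_tokens=True)
```

Calling `chat("Introduce large language models in one sentence.")` downloads the weights on first use, so the first run can take a while; the smaller sizes in the family are a cheaper way to try the same code.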

In the open-source ecosystem, Alibaba has worked with frameworks including vLLM and SGLang (for deployment), AutoAWQ and AutoGPTQ (for quantization), Axolotl and LLaMA-Factory (for fine-tuning), and llama.cpp (for local LLM inference), all of which now support Qwen1.5. The Qwen1.5 series is also available on platforms such as Ollama and LMStudio.
