Evaluate the performance effect of MyBatis first-level cache in a concurrent environment


Title: Analysis of the application effect of the MyBatis first-level cache in a concurrent environment

Introduction:

When MyBatis is used for database access, the first-level cache is enabled by default. It caches query results within a session, which reduces the number of database accesses and can improve system performance. In a concurrent environment, however, the first-level cache can cause problems. This article analyzes how the MyBatis first-level cache behaves in a concurrent environment and gives specific code examples.

1. Overview of the first-level cache

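The first-level cache of MyBatis is a session-level cache: each SqlSession holds its own local cache, and it is enabled by default. It is not thread-safe; the MyBatis documentation states that SqlSession instances are not thread-safe and should not be shared between threads. The core idea of the first-level cache is to cache the results of each query in the session. If a later query in the same session uses the same statement and the same parameters, the results are obtained directly from the cache without querying the database again, which reduces the number of database accesses. The cache is cleared when the session executes an insert, update, or delete, and when the session is committed, rolled back, or closed.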
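The lookup described above can be illustrated with a toy model. This is a minimal sketch in plain Java, not MyBatis code: `ToySession`, its `selectOne` method, and the string cache key are hypothetical stand-ins, and the real cache key in MyBatis contains more than the statement ID and parameter.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.IntFunction;

/** Toy model of a session-scoped query cache; not MyBatis code. */
public class ToySessionCacheDemo {

    /** Hypothetical stand-in for one session that owns a private cache. */
    static final class ToySession {
        private final Map<String, String> localCache = new HashMap<>();
        private final IntFunction<String> database; // assumed "database" lookup
        private int databaseHits = 0;

        ToySession(IntFunction<String> database) {
            this.database = database;
        }

        /** Returns the cached row when the same statement and parameter were seen before. */
        String selectOne(String statementId, int id) {
            String key = statementId + ":" + id;
            String cached = localCache.get(key);
            if (cached != null) {
                return cached; // cache hit: the database is not touched
            }
            databaseHits++;    // cache miss: query the database and remember the row
            String row = database.apply(id);
            localCache.put(key, row);
            return row;
        }

        int databaseHits() {
            return databaseHits;
        }
    }

    /** Runs the same query twice in one session and returns the number of database hits. */
    public static int hitsForRepeatedQuery() {
        ToySession session = new ToySession(id -> "user-" + id);
        session.selectOne("getUserById", 1);
        session.selectOne("getUserById", 1);
        return session.databaseHits();
    }

    public static void main(String[] args) {
        System.out.println("database hits: " + hitsForRepeatedQuery()); // prints "database hits: 1"
    }
}
```

The second call finds the key already present, so only one database access is counted.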

2. The application effect of the first-level cache

- Reduce the number of database accesses: A query repeated within the same session is served from the first-level cache, which reduces the number of database accesses and improves system performance. In a concurrent environment the benefit is per session: because a SqlSession should not be shared between threads, each thread's session caches its own results, and a query repeated in a different session still goes to the database.

- Improve system response speed: A cache hit returns the result from memory without a round trip to the database, which shortens the response time for queries repeated within a session and improves the user experience.

3. Problems with the first-level cache in a concurrent environment

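- Data inconsistency: In a concurrent environment, if one session modifies data in the database, another session that has already cached that data keeps returning the old data until its cache is cleared, which leads to data inconsistency. The same happens when multiple threads are made to share one session, which is also unsafe because the session is not thread-safe. The solution to this problem is to refresh the cache manually (sqlSession.clearCache()), to set localCacheScope to STATEMENT so that results are not reused between statements, or to use a second-level cache.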

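- Excessive memory usage: The first-level cache holds every result queried in a session until it is cleared. Under high concurrency, many long-lived sessions that each cache large result sets may occupy too much memory and degrade system performance. The first-level cache has no configurable size, so the solution is to keep sessions short, clear the cache manually, set localCacheScope to STATEMENT, or use a second-level cache, which supports eviction policies.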
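The data inconsistency problem can be reproduced with a toy model of two sessions. This is a minimal sketch in plain Java rather than MyBatis code: `ToySession`, `USER_TABLE`, and the sample names are hypothetical, and `clearCache()` here only mimics the effect of calling `SqlSession.clearCache()`.

```java
import java.util.HashMap;
import java.util.Map;

/** Toy model of two sessions reading the same row; not MyBatis code. */
public class StaleReadDemo {

    /** Assumed shared "database": one table mapping user id to name. */
    static final Map<Integer, String> USER_TABLE = new HashMap<>();

    /** Hypothetical stand-in for a session with its own private cache. */
    static final class ToySession {
        private final Map<Integer, String> localCache = new HashMap<>();

        String getUserById(int id) {
            // The table is read only on a cache miss.
            return localCache.computeIfAbsent(id, USER_TABLE::get);
        }

        void updateUser(int id, String name) {
            USER_TABLE.put(id, name);
            localCache.clear(); // a write clears only this session's own cache
        }

        void clearCache() {
            localCache.clear();
        }
    }

    /** Returns what the reader session sees: first read, read after a foreign update, read after clearing. */
    public static String[] run() {
        USER_TABLE.put(1, "alice");
        ToySession reader = new ToySession();
        ToySession writer = new ToySession();

        String first = reader.getUserById(1); // miss: loads "alice"
        writer.updateUser(1, "bob");          // another session changes the row
        String stale = reader.getUserById(1); // hit: still "alice"
        reader.clearCache();                  // manual refresh
        String fresh = reader.getUserById(1); // miss again: loads "bob"
        return new String[] {first, stale, fresh};
    }

    public static void main(String[] args) {
        System.out.println(String.join(", ", run())); // prints "alice, alice, bob"
    }
}
```

The reader keeps returning the old value until its own cache is cleared, because the writer's update only clears the writer's cache.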

Sample code:

Assume there is a UserDao interface and UserMapper.xml file. UserDao defines a getUserById method for querying user information based on user ID. The code example is as follows:

UserDao interface definition

    public interface UserDao {
        User getUserById(int id);
    }

UserMapper.xml configuration file

    <mapper namespace="com.example.UserDao">
        <select id="getUserById" resultType="com.example.User">
            SELECT * FROM user WHERE id = #{id}
        </select>
    </mapper>

Code using the first-level cache

    import org.apache.ibatis.session.SqlSession;
    import org.apache.ibatis.session.SqlSessionFactory;

    public class Main {
        public static void main(String[] args) {
            // Obtain the SqlSessionFactory
            SqlSessionFactory sqlSessionFactory = MyBatisUtil.getSqlSessionFactory();
            // Open a session; try-with-resources closes it even if a query fails
            try (SqlSession sqlSession = sqlSessionFactory.openSession()) {
                UserDao userDao = sqlSession.getMapper(UserDao.class); // get a UserDao instance
                User user1 = userDao.getUserById(1); // first query: the result is stored in the first-level cache
                User user2 = userDao.getUserById(1); // second query: the result comes from the cache
                System.out.println(user1);
                System.out.println(user2);
            }
        }
    }

In the above code, the first query stores its result in the first-level cache, and the second query, which uses the same statement and the same parameter in the same session, gets the result directly from the cache; the database is not queried again. This reduces the number of database accesses and improves system performance.
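In a concurrent program, a common arrangement is for each task to open and close its own session. The effect on the hit count can be sketched with a toy model. This is plain Java under stated assumptions, not MyBatis code: `ToySession`, `DATABASE_HITS`, the pool size of 4, and the task count are all hypothetical.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicInteger;

/** Toy model of one session per concurrent task; not MyBatis code. */
public class SessionPerTaskDemo {

    /** Counts simulated database accesses across all sessions. */
    static final AtomicInteger DATABASE_HITS = new AtomicInteger();

    /** Hypothetical session: private cache, used by one thread only. */
    static final class ToySession implements AutoCloseable {
        private final Map<Integer, String> localCache = new HashMap<>();

        String getUserById(int id) {
            return localCache.computeIfAbsent(id, key -> {
                DATABASE_HITS.incrementAndGet(); // cache miss: simulated database access
                return "user-" + key;
            });
        }

        @Override
        public void close() {
            localCache.clear(); // the cache does not outlive the session
        }
    }

    /** Runs the given number of tasks, each querying twice in its own session. */
    public static int run(int tasks) {
        DATABASE_HITS.set(0);
        ExecutorService pool = Executors.newFixedThreadPool(4);
        try {
            List<Future<?>> futures = new ArrayList<>();
            for (int i = 0; i < tasks; i++) {
                futures.add(pool.submit(() -> {
                    try (ToySession session = new ToySession()) {
                        session.getUserById(1); // miss in this session
                        session.getUserById(1); // hit in this session
                    }
                }));
            }
            for (Future<?> future : futures) {
                future.get(); // wait for every task to finish
            }
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
        return DATABASE_HITS.get();
    }

    public static void main(String[] args) {
        System.out.println("database hits for 8 tasks: " + run(8)); // prints "database hits for 8 tasks: 8"
    }
}
```

Each task pays one database access and saves one, so the cache halves the accesses within a task but does not share results between tasks. Sharing across sessions is what a second-level cache is for.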

Conclusion:

The MyBatis first-level cache can reduce the number of database accesses and improve system performance for queries repeated within a session. In a concurrent environment, however, a session may return stale data after another session modifies the database, and sharing one session between threads is unsafe. Therefore, in actual applications, it is necessary to decide how to use the first-level cache according to specific business needs and to adopt corresponding strategies for these problems, such as keeping sessions short, manually refreshing the cache, or using a second-level cache.

The above is the detailed content of Evaluate the performance effect of MyBatis first-level cache in a concurrent environment. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Detailed steps for cleaning memory in Xiaohongshu

Apr 26, 2024 am 10:43 AM

Detailed steps for cleaning memory in Xiaohongshu

Apr 26, 2024 am 10:43 AM

1. Open Xiaohongshu, click Me in the lower right corner 2. Click the settings icon, click General 3. Click Clear Cache

What to do if your Huawei phone has insufficient memory (Practical methods to solve the problem of insufficient memory)

Apr 29, 2024 pm 06:34 PM

What to do if your Huawei phone has insufficient memory (Practical methods to solve the problem of insufficient memory)

Apr 29, 2024 pm 06:34 PM

Insufficient memory on Huawei mobile phones has become a common problem faced by many users, with the increase in mobile applications and media files. To help users make full use of the storage space of their mobile phones, this article will introduce some practical methods to solve the problem of insufficient memory on Huawei mobile phones. 1. Clean cache: history records and invalid data to free up memory space and clear temporary files generated by applications. Find "Storage" in the settings of your Huawei phone, click "Clear Cache" and select the "Clear Cache" button to delete the application's cache files. 2. Uninstall infrequently used applications: To free up memory space, delete some infrequently used applications. Drag it to the top of the phone screen, long press the "Uninstall" icon of the application you want to delete, and then click the confirmation button to complete the uninstallation. 3.Mobile application to

How to fine-tune deepseek locally

Feb 19, 2025 pm 05:21 PM

How to fine-tune deepseek locally

Feb 19, 2025 pm 05:21 PM

Local fine-tuning of DeepSeek class models faces the challenge of insufficient computing resources and expertise. To address these challenges, the following strategies can be adopted: Model quantization: convert model parameters into low-precision integers, reducing memory footprint. Use smaller models: Select a pretrained model with smaller parameters for easier local fine-tuning. Data selection and preprocessing: Select high-quality data and perform appropriate preprocessing to avoid poor data quality affecting model effectiveness. Batch training: For large data sets, load data in batches for training to avoid memory overflow. Acceleration with GPU: Use independent graphics cards to accelerate the training process and shorten the training time.

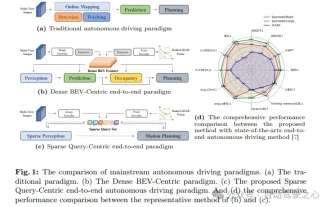

nuScenes' latest SOTA | SparseAD: Sparse query helps efficient end-to-end autonomous driving!

Apr 17, 2024 pm 06:22 PM

nuScenes' latest SOTA | SparseAD: Sparse query helps efficient end-to-end autonomous driving!

Apr 17, 2024 pm 06:22 PM

Written in front & starting point The end-to-end paradigm uses a unified framework to achieve multi-tasking in autonomous driving systems. Despite the simplicity and clarity of this paradigm, the performance of end-to-end autonomous driving methods on subtasks still lags far behind single-task methods. At the same time, the dense bird's-eye view (BEV) features widely used in previous end-to-end methods make it difficult to scale to more modalities or tasks. A sparse search-centric end-to-end autonomous driving paradigm (SparseAD) is proposed here, in which sparse search fully represents the entire driving scenario, including space, time, and tasks, without any dense BEV representation. Specifically, a unified sparse architecture is designed for task awareness including detection, tracking, and online mapping. In addition, heavy

What to do if the Edge browser takes up too much memory What to do if the Edge browser takes up too much memory

May 09, 2024 am 11:10 AM

What to do if the Edge browser takes up too much memory What to do if the Edge browser takes up too much memory

May 09, 2024 am 11:10 AM

1. First, enter the Edge browser and click the three dots in the upper right corner. 2. Then, select [Extensions] in the taskbar. 3. Next, close or uninstall the plug-ins you do not need.

For only $250, Hugging Face's technical director teaches you how to fine-tune Llama 3 step by step

May 06, 2024 pm 03:52 PM

For only $250, Hugging Face's technical director teaches you how to fine-tune Llama 3 step by step

May 06, 2024 pm 03:52 PM

The familiar open source large language models such as Llama3 launched by Meta, Mistral and Mixtral models launched by MistralAI, and Jamba launched by AI21 Lab have become competitors of OpenAI. In most cases, users need to fine-tune these open source models based on their own data to fully unleash the model's potential. It is not difficult to fine-tune a large language model (such as Mistral) compared to a small one using Q-Learning on a single GPU, but efficient fine-tuning of a large model like Llama370b or Mixtral has remained a challenge until now. Therefore, Philipp Sch, technical director of HuggingFace

Does win11 take up less memory than win10?

Apr 18, 2024 am 12:57 AM

Does win11 take up less memory than win10?

Apr 18, 2024 am 12:57 AM

Yes, overall, Win11 takes up less memory than Win10. Optimizations include a lighter system kernel, better memory management, new hibernation options and fewer background processes. Testing shows that Win11's memory footprint is typically 5-10% lower than Win10's in similar configurations. But memory usage is also affected by hardware configuration, applications, and system settings.

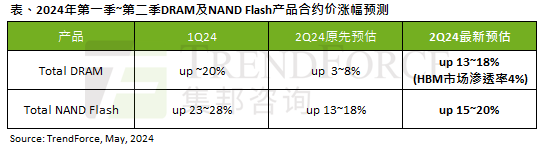

The impact of the AI wave is obvious. TrendForce has revised up its forecast for DRAM memory and NAND flash memory contract price increases this quarter.

May 07, 2024 pm 09:58 PM

The impact of the AI wave is obvious. TrendForce has revised up its forecast for DRAM memory and NAND flash memory contract price increases this quarter.

May 07, 2024 pm 09:58 PM

According to a TrendForce survey report, the AI wave has a significant impact on the DRAM memory and NAND flash memory markets. In this site’s news on May 7, TrendForce said in its latest research report today that the agency has increased the contract price increases for two types of storage products this quarter. Specifically, TrendForce originally estimated that the DRAM memory contract price in the second quarter of 2024 will increase by 3~8%, and now estimates it at 13~18%; in terms of NAND flash memory, the original estimate will increase by 13~18%, and the new estimate is 15%. ~20%, only eMMC/UFS has a lower increase of 10%. ▲Image source TrendForce TrendForce stated that the agency originally expected to continue to