LLaMa 3 may be postponed to July, targeting GPT-4 and learning lessons from Gemini

Past image generation models have often been criticized for depicting predominantly white people, but Google's Gemini model got into trouble for overcorrecting to the opposite extreme. Its generated images were overly cautious and deviated significantly from historical facts, which surprised users. Google said the model turned out to be more cautious than its developers intended. This caution showed up not only in generated images but also in the model often treating certain prompts as sensitive and refusing to answer them.

As this issue continues to draw attention, striking a balance between safety and usability has become a major challenge for Meta. LLaMA 2 is regarded as a "strong player" in the open-source field and has become Meta's star model; it reshaped the large-model landscape as soon as it launched. Meta is now preparing to launch LLaMa 3, but it first needs to solve a problem left over from LLaMA 2: the model appeared too conservative when answering controversial questions.

Finding a balance between safety and usability

Meta added safeguards to Llama 2 that prevent the LLM from answering a range of controversial questions. While this conservatism is necessary for extreme cases, such as queries about violence or illegal activity, it also limits the model's ability to answer more common but mildly controversial questions. According to The Information, when LLaMA 2 was asked how employees could avoid going into the office on days they are required to be there, it refused to give advice or replied that "it is important to respect and abide by the company's policies and guidelines." LLaMA 2 also refuses to explain how to prank a friend, win a war, or kill a car engine. These conservative answers are intended to avoid a PR disaster.

However, Meta's senior leadership and some researchers working on the model reportedly believe LLaMA 2's answers are too "safe." Meta is working to make the upcoming LLaMA 3 more flexible, so that it provides more context in its answers instead of refusing outright. Researchers are trying to make LLaMA 3 engage more with users and better understand what they might mean. The new version is reportedly better at distinguishing the multiple meanings of a word. For example, LLaMA 3 might understand that a question about how to "kill" a car's engine is asking how to shut it off, not how to destroy it. The Information also reports that Meta plans to appoint someone internally in the coming weeks to oversee tone and safety training, as part of the company's effort to make the model's responses more nuanced.

Finding this balance is not a challenge for Meta and Google alone; many technology giants have been affected to varying degrees. They need to build products that everyone likes, can use, and that run smoothly, while also ensuring those products are safe and reliable. This is a problem technology companies must confront head-on as they race to keep up with AI.

More information on LLaMa 3

The release of LLaMa 3 is highly anticipated. Meta plans to release it in July, but the timing is still subject to change. Meta CEO Mark Zuckerberg is ambitious and once said, "Although Llama 2 is not the industry-leading model, it is the best open source model. For LLaMa 3 and subsequent models, our goal is to build SOTA models and eventually become the industry-leading model."

Original address: https://www.reuters.com/technology/meta-plans-launch-new-ai-language-model-llama-3-july-information-reports-2024-02-28/

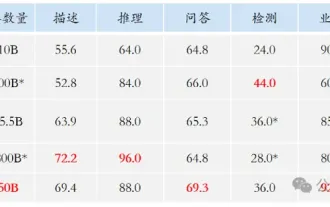

Meta hopes LLaMa 3 can catch up with OpenAI's GPT-4. According to Meta staff, it has not yet been decided whether LLaMa 3 will be multimodal, that is, able to understand and generate both text and images, because researchers have not yet begun fine-tuning the model. However, LLaMa 3 is expected to have more than 140 billion parameters, far more than LLaMa 2, which suggests a significant improvement in its ability to handle complex queries.

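Beyond ample resources, namely 350,000 H100 GPUs and tens of billions of dollars, talent is also a "necessity" for training LLaMa 3. Meta develops LLaMa through its generative AI group, which is separate from its fundamental AI research team. Louis Martin, the researcher responsible for the safety of LLaMa 2 and 3, left the company in February, and Kevin Stone, who led reinforcement learning, also left this month. It is unclear whether these departures will affect LLaMa 3's training. It remains to be seen whether LLaMa 3 can strike a good balance between safety and usability, and whether it will bring new surprises in coding ability.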
