Technology peripherals

Technology peripherals

AI

AI

3D reconstruction of two pictures in 2 seconds! This AI tool is popular on GitHub, netizens: Forget about Sora

3D reconstruction of two pictures in 2 seconds! This AI tool is popular on GitHub, netizens: Forget about Sora

3D reconstruction of two pictures in 2 seconds! This AI tool is popular on GitHub, netizens: Forget about Sora

Only 2 pictures, no need to measure any additional data——

Dangdang, a complete 3D bear is there:

This new tool called DUSt3R is so popular that it ranked second on the GitHub Hot List not long after it was launched. ##.

Netizen actually tested, took two photos, and really reconstructed his kitchen, the whole processIt takes less than 2 seconds!

(In addition to 3D images, it can also provide depth maps, confidence maps and point cloud images)

EveryoneForget about sora first, this is what we can really see and touch.

(from the European branch of NAVER LABS Institute of Artificial Intelligence, Aalto University, Finland) ’s “manifesto” is also full of momentum:

We It is to make the world have no difficult 3D visual tasks.So, how is it done? “all-in-one”For the multi-view stereo reconstruction

(MVS) task, the first step is to estimate the camera parameters, including internal and external parameters.

This operation is boring and troublesome, but it is indispensable for subsequent triangulation of pixels in three-dimensional space, and this is an inseparable part of almost all MVS algorithms with better performance. In the study of this article, the DUSt3R introduced by the author's team adopted a completely different approach. Itdoes not require any prior information about camera calibration or viewpoint pose, and can complete dense or unconstrained 3D reconstruction of arbitrary images.

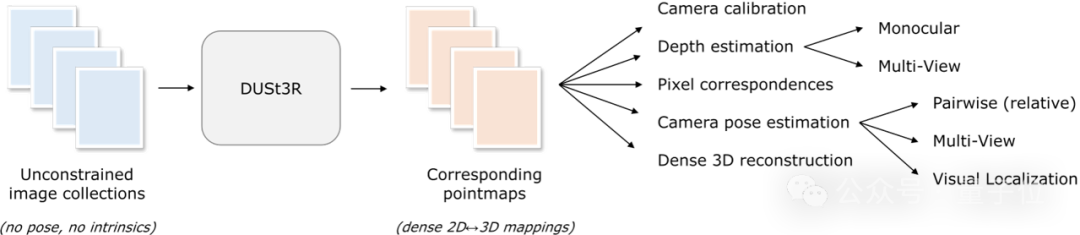

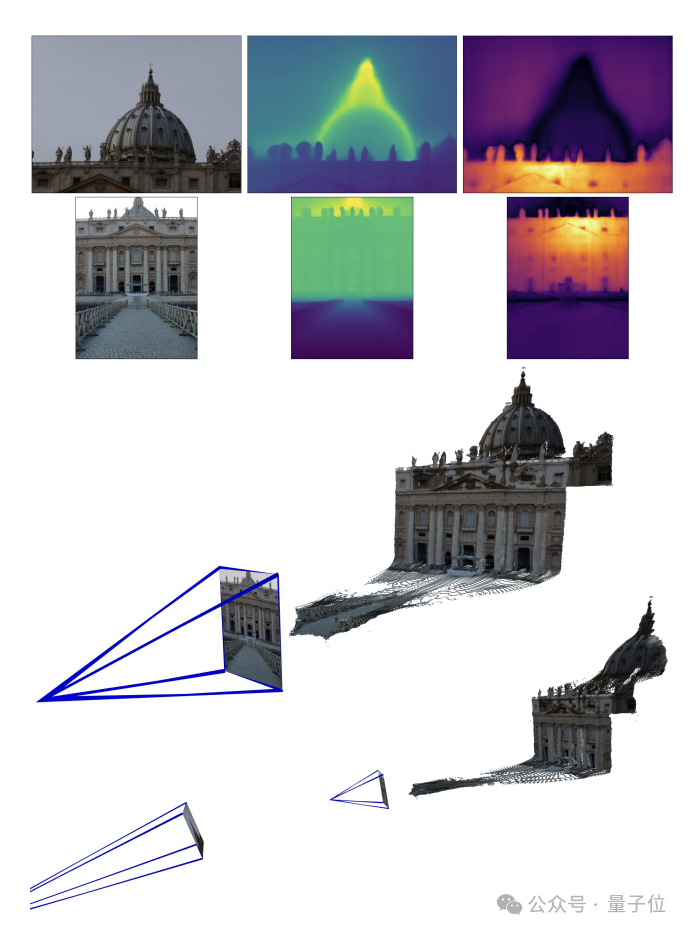

Here, the team formulates the pairwise reconstruction problem as point-plot regression, unifying the monocular and binocular reconstruction situations. When more than two input images are provided, all pairs of point images are represented into a common reference frame through a simple and effective global alignment strategy. As shown in the figure below, given a set of photos with unknown camera poses and intrinsic features, DUSt3R outputs a corresponding set of point maps, from which we can directly recover various geometric quantities that are usually difficult to estimate simultaneously. Such as camera parameters, pixel correspondence, depth map, and completely consistent 3D reconstruction effect.

(The author reminds that DUSt3R is also applicable to a single input image)

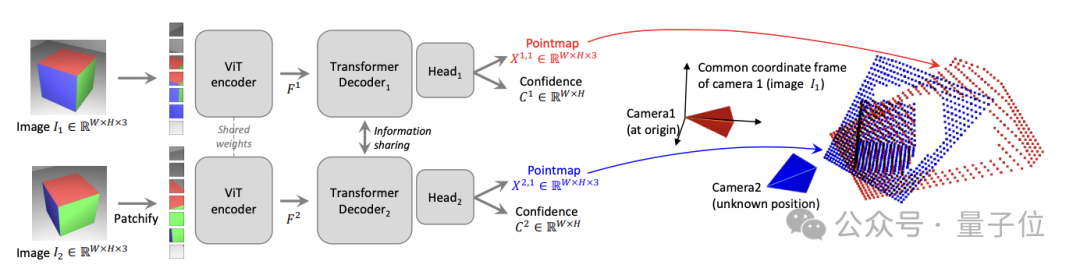

In terms of specific network architecture, DUSt3R is based onStandard Transformer encoder and decoder, inspired by CroCo (a study on self-supervised pre-training of 3D vision tasks across views), and adopted Simple regression loss training is completed.

As shown in the figure below, the two views of the scene(I1, I2) are first encoded in Siamese (Siamese) mode using the shared ViT encoder.

The resulting token representation(F1 and F2) is then passed to two Transformer decoders, which pass the cross attention Information is constantly exchanged.

Многозадачность SOTA

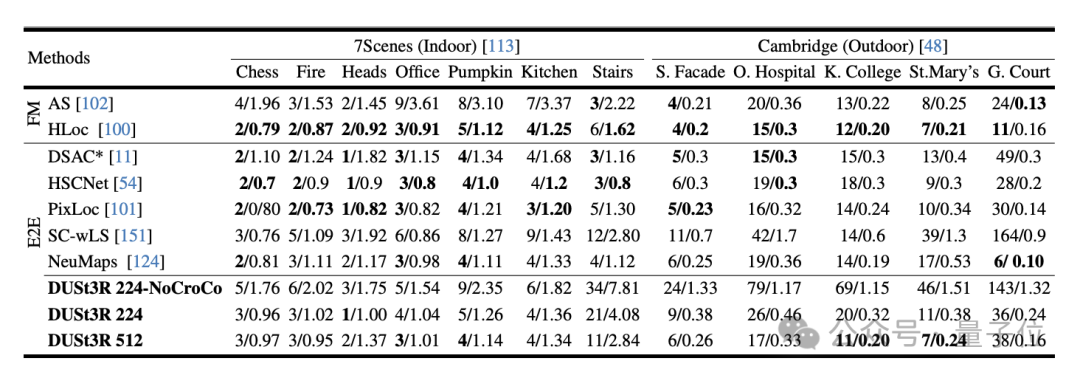

В ходе эксперимента сначала оценивается DUST3R на 7 сценах (7 сцен в помещении) и Cambridge Landmarks (8 сцен на открытом воздухе) Наборы данных Производительность на Задача абсолютной оценки позы, индикаторами являются ошибка перевода и ошибка вращения (чем меньше значение, тем лучше) .

Автор заявил, что по сравнению с другими существующими методами сопоставления функций и сквозными методами производительность DUSt3R замечательна.

Потому что он никогда не проходил никакого обучения визуальному позиционированию, и, во-вторых, он не сталкивался с изображениями запросов и изображениями базы данных в процессе обучения.

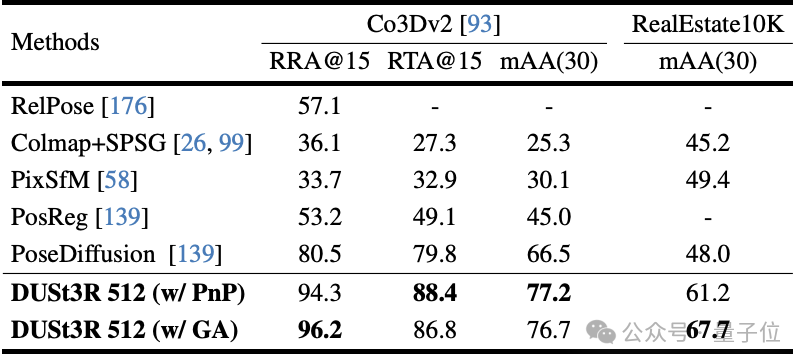

Во-вторых, это задача регрессии позы с несколькими изображениями, выполняемая на 10 случайных кадрах. Результаты. DUST3R добился лучших результатов на обоих наборах данных.

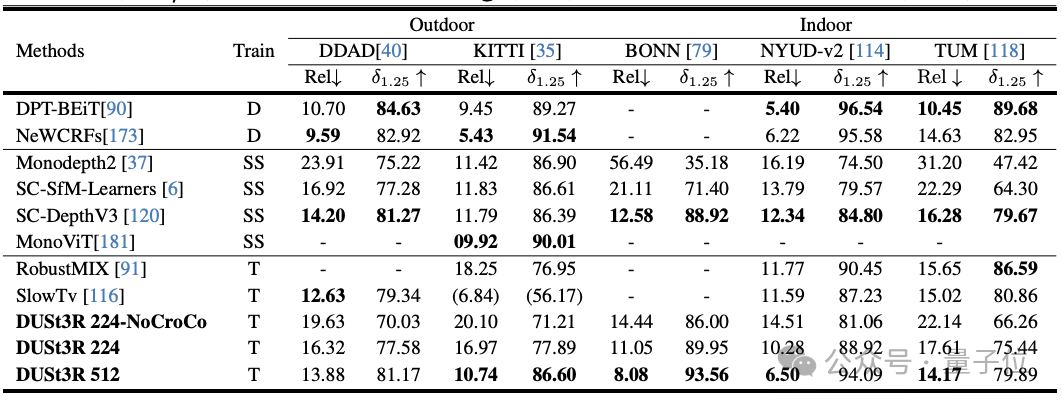

В задаче монокулярной оценки глубины DUSt3R также может хорошо обрабатывать сцены в помещении и на открытом воздухе, с производительностью лучше, чем у базовых линий с самоконтролем, и отличается от самых продвинутых базовых линий с контролируемым контролем. . Вверх и вниз.

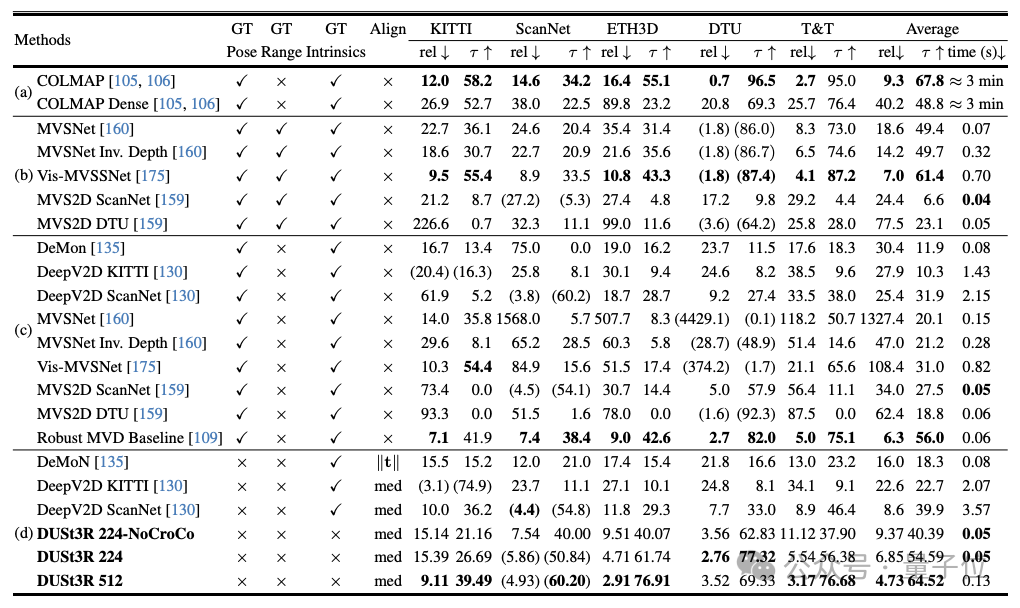

С точки зрения оценки глубины в нескольких ракурсах производительность DUSt3R также выдающаяся.

Ниже приведены эффекты 3D-реконструкции, предоставленные двумя группами чиновников. Чтобы вы почувствовали, они ввели только два изображения:

(1 )

(2)

Реальные измерения пользователей сети: ничего страшного, если два изображения не перекрываются

Да Пользователь сети предоставил DUST3R два изображения без перекрывающегося содержимого, а также за несколько секунд выдал точное 3D-изображение:

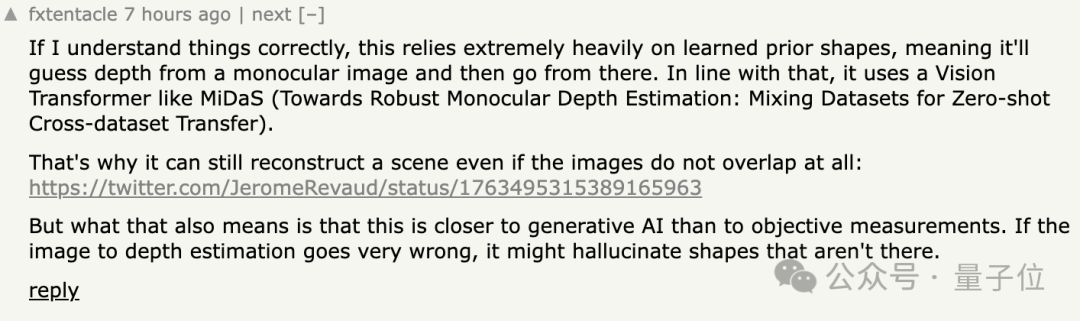

##В ответ некоторые пользователи сети сказали, что это означает, что метода нет. Делайте «объективные измерения» и вместо этого ведите себя как ИИ.

Кроме того, некоторым людям интересно

Кроме того, некоторым людям интересно

? Некоторые пользователи сети действительно попробовали это, и ответ

да!

## Портал:

The above is the detailed content of 3D reconstruction of two pictures in 2 seconds! This AI tool is popular on GitHub, netizens: Forget about Sora. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1387

1387

52

52

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

Open source! Beyond ZoeDepth! DepthFM: Fast and accurate monocular depth estimation!

Apr 03, 2024 pm 12:04 PM

0.What does this article do? We propose DepthFM: a versatile and fast state-of-the-art generative monocular depth estimation model. In addition to traditional depth estimation tasks, DepthFM also demonstrates state-of-the-art capabilities in downstream tasks such as depth inpainting. DepthFM is efficient and can synthesize depth maps within a few inference steps. Let’s read about this work together ~ 1. Paper information title: DepthFM: FastMonocularDepthEstimationwithFlowMatching Author: MingGui, JohannesS.Fischer, UlrichPrestel, PingchuanMa, Dmytr

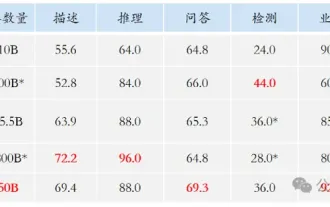

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI is indeed changing mathematics. Recently, Tao Zhexuan, who has been paying close attention to this issue, forwarded the latest issue of "Bulletin of the American Mathematical Society" (Bulletin of the American Mathematical Society). Focusing on the topic "Will machines change mathematics?", many mathematicians expressed their opinions. The whole process was full of sparks, hardcore and exciting. The author has a strong lineup, including Fields Medal winner Akshay Venkatesh, Chinese mathematician Zheng Lejun, NYU computer scientist Ernest Davis and many other well-known scholars in the industry. The world of AI has changed dramatically. You know, many of these articles were submitted a year ago.

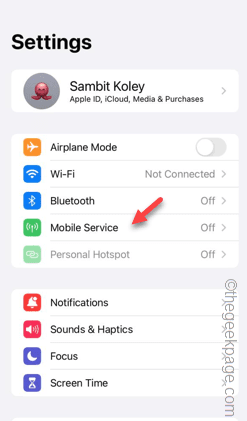

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Slow Cellular Data Internet Speeds on iPhone: Fixes

May 03, 2024 pm 09:01 PM

Facing lag, slow mobile data connection on iPhone? Typically, the strength of cellular internet on your phone depends on several factors such as region, cellular network type, roaming type, etc. There are some things you can do to get a faster, more reliable cellular Internet connection. Fix 1 – Force Restart iPhone Sometimes, force restarting your device just resets a lot of things, including the cellular connection. Step 1 – Just press the volume up key once and release. Next, press the Volume Down key and release it again. Step 2 – The next part of the process is to hold the button on the right side. Let the iPhone finish restarting. Enable cellular data and check network speed. Check again Fix 2 – Change data mode While 5G offers better network speeds, it works better when the signal is weaker

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

The vitality of super intelligence awakens! But with the arrival of self-updating AI, mothers no longer have to worry about data bottlenecks

Apr 29, 2024 pm 06:55 PM

I cry to death. The world is madly building big models. The data on the Internet is not enough. It is not enough at all. The training model looks like "The Hunger Games", and AI researchers around the world are worrying about how to feed these data voracious eaters. This problem is particularly prominent in multi-modal tasks. At a time when nothing could be done, a start-up team from the Department of Renmin University of China used its own new model to become the first in China to make "model-generated data feed itself" a reality. Moreover, it is a two-pronged approach on the understanding side and the generation side. Both sides can generate high-quality, multi-modal new data and provide data feedback to the model itself. What is a model? Awaker 1.0, a large multi-modal model that just appeared on the Zhongguancun Forum. Who is the team? Sophon engine. Founded by Gao Yizhao, a doctoral student at Renmin University’s Hillhouse School of Artificial Intelligence.

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving