LimSim++: A new stage for multi-modal large models in autonomous driving

Paper title: LimSim++: A Closed-Loop Platform for Deploying Multimodal LLMs in Autonomous Driving

Project homepage: https://pjlab-adg.github.io/limsim_plus/

Introduction to the simulator

As (multimodal) large language models ((M)LLMs) set off a research boom in artificial intelligence, their application to autonomous driving has increasingly become a focus of attention. With strong generalization, understanding, and logical reasoning capabilities, these models offer solid support for building safe and reliable autonomous driving systems. Although existing closed-loop simulation platforms such as HighwayEnv, CARLA, and nuPlan can be used to verify how LLMs perform in autonomous driving, users usually have to adapt these platforms themselves. This raises the barrier to entry and limits in-depth exploration of LLM capabilities.

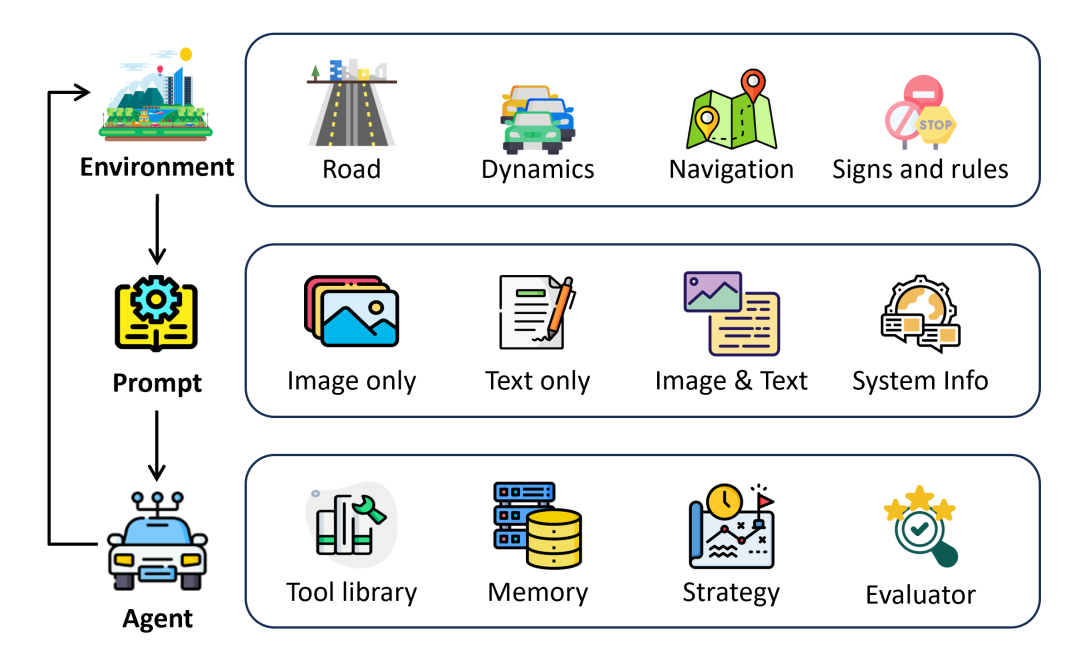

To address this challenge, the Intelligent Transportation Platform Group of the Shanghai Artificial Intelligence Laboratory launched **LimSim**, a closed-loop autonomous driving simulation platform designed specifically for (M)LLMs. LimSim aims to give autonomous driving researchers a better-suited environment for comprehensively exploring the potential of LLMs in autonomous driving. The platform extracts and processes scene information from simulation environments such as SUMO and CARLA and converts it into the input forms that LLMs require, including image information, scene cognition, and task descriptions. LimSim also provides a motion primitive conversion function that quickly generates suitable driving trajectories from the LLM's decisions, which closes the simulation loop. More importantly, LimSim creates a continuous learning environment: by evaluating decision results and providing feedback, it helps the LLM keep refining its driving strategy and improves the driver agent's driving performance.
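The motion primitive step described above, turning a discrete (M)LLM decision into a drivable trajectory, can be illustrated with a minimal sketch. It is not LimSim's implementation: the action set in `Decision`, the `VehicleState` fields, `decision_to_trajectory`, the 3.5 m lane width, the 2 m/s² acceleration, and the 3 s horizon are all hypothetical assumptions chosen for illustration, and the model is a simple constant-acceleration, straight-road one.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple

LANE_WIDTH = 3.5  # assumed lane width (m), hypothetical
ACCEL = 2.0       # assumed acceleration magnitude (m/s^2), hypothetical


class Decision(Enum):
    """Hypothetical discrete decisions an (M)LLM might return."""
    ACCELERATE = "accelerate"
    DECELERATE = "decelerate"
    IDLE = "idle"
    CHANGE_LANE_LEFT = "change_lane_left"
    CHANGE_LANE_RIGHT = "change_lane_right"


@dataclass
class VehicleState:
    x: float  # longitudinal position along the lane (m)
    y: float  # lateral position (m)
    v: float  # speed (m/s)


def decision_to_trajectory(state: VehicleState, decision: Decision,
                           horizon: float = 3.0,
                           dt: float = 0.5) -> List[Tuple[float, float, float]]:
    """Expand one discrete decision into (x, y, v) waypoints every dt seconds."""
    a = {Decision.ACCELERATE: ACCEL, Decision.DECELERATE: -ACCEL}.get(decision, 0.0)
    dy = {Decision.CHANGE_LANE_LEFT: LANE_WIDTH,
          Decision.CHANGE_LANE_RIGHT: -LANE_WIDTH}.get(decision, 0.0)
    steps = int(round(horizon / dt))
    x, v = state.x, state.v
    waypoints = []
    for k in range(1, steps + 1):
        v_next = max(0.0, v + a * dt)    # constant acceleration, never reverse
        x += 0.5 * (v + v_next) * dt     # trapezoidal integration of speed
        v = v_next
        s = k / steps                    # maneuver progress in (0, 1]
        blend = 3 * s ** 2 - 2 * s ** 3  # smoothstep lateral offset profile
        waypoints.append((x, state.y + dy * blend, v))
    return waypoints
```

For example, `decision_to_trajectory(VehicleState(0.0, 0.0, 10.0), Decision.CHANGE_LANE_LEFT)` yields six waypoints ending at `(30.0, 3.5, 10.0)`. The smoothstep profile is one simple choice that keeps lateral velocity at zero at the start and end of the lane change; a real planner would also check collisions and vehicle dynamics.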

Simulator Features

LimSim stands out among autonomous driving simulators: it provides (M)LLM-driven driver agents with an ideal environment for closed-loop simulation and continuous learning.

- LimSim supports simulating a variety of driving scenarios, such as intersections, ramps, and roundabouts, so the driver agent can be challenged under many complex road conditions. This diversity of scenarios helps the LLM gain richer driving experience and improves its adaptability to real-world environments.

- LimSim supports LLMs with multimodal inputs. In addition to rule-based generation of scene information, LimSim can be co-simulated with CARLA to provide rich visual input, meeting the visual perception needs of (M)LLMs in autonomous driving.

- LimSim emphasizes continuous learning. It integrates evaluation, reflection, and memory modules that help the (M)LLM keep accumulating experience and refining its decision-making strategy throughout the simulation.
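The evaluation, reflection, and memory loop in the last item can be sketched as follows. This is a hypothetical illustration, not LimSim's module: `Experience`, `DecisionMemory`, the 0.6 score threshold, and the `reflect` callback (a stand-in for an LLM call that explains a poor decision) are all assumptions. Retrieval here uses plain word-overlap (Jaccard) similarity as a simple substitute for whatever similarity measure LimSim actually uses, such as text embeddings.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Experience:
    scene: str       # text scene description given to the LLM
    decision: str    # decision the LLM took
    score: float     # evaluation score in [0, 1]
    reflection: str  # lesson written after evaluation ("" if none)


def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity between two scene descriptions."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


@dataclass
class DecisionMemory:
    threshold: float = 0.6  # assumed: below this score, store a reflection
    items: List[Experience] = field(default_factory=list)

    def add(self, scene: str, decision: str, score: float,
            reflect: Callable[[str, str], str]) -> None:
        # Only poorly scored decisions trigger the (stubbed) reflection step
        note = reflect(scene, decision) if score < self.threshold else ""
        self.items.append(Experience(scene, decision, score, note))

    def retrieve(self, scene: str, k: int = 2) -> List[Experience]:
        # Most similar past scenes first
        ranked = sorted(self.items, key=lambda e: jaccard(scene, e.scene), reverse=True)
        return ranked[:k]

    def as_prompt(self, scene: str, k: int = 2) -> str:
        # Format retrieved experiences as few-shot context for the next prompt
        lines = []
        for e in self.retrieve(scene, k):
            lines.append(f"Past scene: {e.scene}\nDecision: {e.decision} (score {e.score:.2f})")
            if e.reflection:
                lines.append(f"Lesson: {e.reflection}")
        return "\n".join(lines)
```

In use, each simulation step would score the decision, call `add`, and prepend `as_prompt(current_scene)` to the next query, so lessons from earlier mistakes resurface when a similar scene comes up again.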

Create your own Driver Agent

LimSim provides a rich set of interfaces for customizing the driver agent, which makes development with LimSim more flexible and lowers the barrier to entry.

- Prompt construction
  - LimSim supports user-defined prompts that change the text fed to the (M)LLM, such as the role setting, task requirements, and scene description.
  - LimSim provides JSON-based scene description templates, so users can modify prompts without writing code and without having to deal with how the scene information is extracted.
- Decision evaluation module
  - LimSim provides a baseline for evaluating the (M)LLM's decisions, and users can adjust evaluation preferences by changing the weight parameters.
- Framework flexibility
  - LimSim lets users add custom tool libraries for the (M)LLM, such as perception tools and numerical processing tools.
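Two of the customization points above, JSON prompt templates and weighted decision evaluation, can be made concrete with a small sketch. It does not reproduce LimSim's template format or scoring formula: the template keys (`role`, `task`, `scene`), the `$placeholder` syntax, the metric names (`safety`, `comfort`, `efficiency`), and the helpers `build_prompt` and `weighted_score` are hypothetical assumptions.

```python
import json
from string import Template

# Hypothetical JSON template: editing it changes the prompt with no code changes
TEMPLATE_JSON = """
{
  "role": "You are a careful driver assistant.",
  "task": "Choose one action from: $actions.",
  "scene": "Ego speed: $speed m/s. Lead vehicle gap: $gap m."
}
"""


def build_prompt(template_json: str, values: dict) -> str:
    """Fill $placeholders in each field, then join role, scene and task."""
    parts = json.loads(template_json)
    return "\n".join(Template(parts[k]).substitute(values)
                     for k in ("role", "scene", "task"))


def weighted_score(metrics: dict, weights: dict) -> float:
    """Weighted average of per-metric scores, each assumed to lie in [0, 1]."""
    total = sum(weights.values())
    return sum(weights[m] * metrics[m] for m in weights) / total


prompt = build_prompt(TEMPLATE_JSON, {"actions": "accelerate, decelerate, idle",
                                      "speed": 12, "gap": 25})
cautious = {"safety": 1.0, "comfort": 0.5, "efficiency": 0.0}
print(weighted_score(cautious, {"safety": 2, "comfort": 1, "efficiency": 1}))  # 0.625
```

Raising the `safety` weight relative to `efficiency` makes cautious decisions score higher, which mirrors the idea of adjusting evaluation preferences through weight parameters.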

Get started quickly

- Step 0: Install SUMO (version ≥ 1.15.0, Ubuntu)

sudo add-apt-repository ppa:sumo/stable
sudo apt-get update
sudo apt-get install sumo sumo-tools sumo-doc

- Step 1: Clone the LimSim source code and check out the LimSim_plus branch

git clone -b LimSim_plus https://github.com/PJLab-ADG/LimSim.git

- Step 2: Install the dependencies (requires conda)

cd LimSim
conda env create -f environment.yml

- Step 3: Run the simulation

- Run the simulation on its own

python ExampleModel.py

- Use an LLM for autonomous driving

export OPENAI_API_KEY='your openai key'
python ExampleLLMAgentCloseLoop.py

- Use a VLM for autonomous driving

Terminal 1:
cd path-to-carla/
./CarlaUE4.sh
Terminal 2:
cd path-to-carla/
cd PythonAPI/util/
python3 config.py --map Town06
Then, in Terminal 2:
export OPENAI_API_KEY='your openai key'
cd path-to-LimSim++/
python ExampleVLMAgentCloseLoop.py
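Before running the examples, it can help to check the prerequisites from Step 0 and Step 3. The helper below is a hypothetical convenience script, not part of LimSim. It assumes `sumo` is on `PATH` and that `sumo --version` prints a banner containing something like `Version 1.15.0` (or `Version v1_15_0` for development builds); `parse_sumo_version` and `check_prerequisites` are illustrative names.

```python
import os
import re
import shutil
import subprocess
from typing import List, Optional, Tuple

MIN_SUMO = (1, 15, 0)  # Step 0 requires SUMO >= v1.15.0


def parse_sumo_version(banner: str) -> Optional[Tuple[int, ...]]:
    """Extract (major, minor, patch) from a SUMO version banner, if present."""
    m = re.search(r"Version\s+v?(\d+)[._](\d+)[._](\d+)", banner)
    return tuple(int(g) for g in m.groups()) if m else None


def check_prerequisites(need_openai: bool = True) -> List[str]:
    """Return human-readable problems; an empty list means all checks passed."""
    problems = []
    if shutil.which("sumo") is None:
        problems.append("sumo not found on PATH (see Step 0)")
    else:
        out = subprocess.run(["sumo", "--version"],
                             capture_output=True, text=True).stdout
        version = parse_sumo_version(out)
        if version is None or version < MIN_SUMO:
            problems.append(f"SUMO >= 1.15.0 required, found {version}")
    if need_openai and not os.environ.get("OPENAI_API_KEY"):
        problems.append("OPENAI_API_KEY is not set (see Step 3)")
    return problems


if __name__ == "__main__":
    for problem in check_prerequisites():
        print("WARNING:", problem)
```

Pass `need_openai=False` when only running `ExampleModel.py`, which does not call an LLM.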

For more information, see LimSim's GitHub repository: https://github.com/PJLab-ADG/LimSim/tree/LimSim_plus. If you have other questions, please open an issue on GitHub or contact us directly by email!

We welcome partners from academia and industry to jointly develop LimSim and build an open source ecosystem!

The above is the detailed content of LimSim++: A new stage for multi-modal large models in autonomous driving. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Smart App Control on Windows 11: How to turn it on or off

Jun 06, 2023 pm 11:10 PM

Smart App Control on Windows 11: How to turn it on or off

Jun 06, 2023 pm 11:10 PM

Intelligent App Control is a very useful tool in Windows 11 that helps protect your PC from unauthorized apps that can damage your data, such as ransomware or spyware. This article explains what Smart App Control is, how it works, and how to turn it on or off in Windows 11. What is Smart App Control in Windows 11? Smart App Control (SAC) is a new security feature introduced in the Windows 1122H2 update. It works with Microsoft Defender or third-party antivirus software to block potentially unnecessary apps that can slow down your device, display unexpected ads, or perform other unexpected actions. Smart application

The facial features are flying around, opening the mouth, staring, and raising eyebrows, AI can imitate them perfectly, making it impossible to prevent video scams

Dec 14, 2023 pm 11:30 PM

The facial features are flying around, opening the mouth, staring, and raising eyebrows, AI can imitate them perfectly, making it impossible to prevent video scams

Dec 14, 2023 pm 11:30 PM

With such a powerful AI imitation ability, it is really impossible to prevent it. It is completely impossible to prevent it. Has the development of AI reached this level now? Your front foot makes your facial features fly, and on your back foot, the exact same expression is reproduced. Staring, raising eyebrows, pouting, no matter how exaggerated the expression is, it is all imitated perfectly. Increase the difficulty, raise the eyebrows higher, open the eyes wider, and even the mouth shape is crooked, and the virtual character avatar can perfectly reproduce the expression. When you adjust the parameters on the left, the virtual avatar on the right will also change its movements accordingly to give a close-up of the mouth and eyes. The imitation cannot be said to be exactly the same, but the expression is exactly the same (far right). The research comes from institutions such as the Technical University of Munich, which proposes GaussianAvatars, which

MotionLM: Language modeling technology for multi-agent motion prediction

Oct 13, 2023 pm 12:09 PM

MotionLM: Language modeling technology for multi-agent motion prediction

Oct 13, 2023 pm 12:09 PM

This article is reprinted with permission from the Autonomous Driving Heart public account. Please contact the source for reprinting. Original title: MotionLM: Multi-Agent Motion Forecasting as Language Modeling Paper link: https://arxiv.org/pdf/2309.16534.pdf Author affiliation: Waymo Conference: ICCV2023 Paper idea: For autonomous vehicle safety planning, reliably predict the future behavior of road agents is crucial. This study represents continuous trajectories as sequences of discrete motion tokens and treats multi-agent motion prediction as a language modeling task. The model we propose, MotionLM, has the following advantages: First

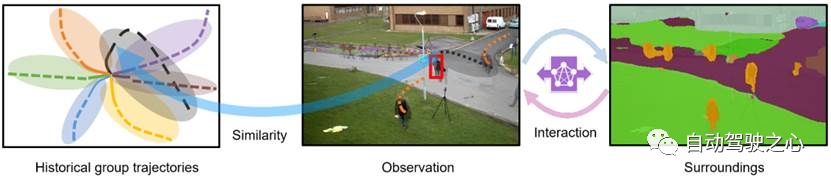

What are the effective methods and common Base methods for pedestrian trajectory prediction? Top conference papers sharing!

Oct 17, 2023 am 11:13 AM

What are the effective methods and common Base methods for pedestrian trajectory prediction? Top conference papers sharing!

Oct 17, 2023 am 11:13 AM

Trajectory prediction has been gaining momentum in the past two years, but most of it focuses on the direction of vehicle trajectory prediction. Today, Autonomous Driving Heart will share with you the algorithm for pedestrian trajectory prediction on NeurIPS - SHENet. In restricted scenes, human movement patterns are usually To a certain extent, it conforms to limited rules. Based on this assumption, SHENet predicts a person's future trajectory by learning implicit scene rules. The article has been authorized to be original by Autonomous Driving Heart! The author's personal understanding is that currently predicting a person's future trajectory is still a challenging problem due to the randomness and subjectivity of human movement. However, human movement patterns in constrained scenes often vary due to scene constraints (such as floor plans, roads, and obstacles) and human-to-human or human-to-object interactivity.

Do you know that programmers will be in decline in a few years?

Nov 08, 2023 am 11:17 AM

Do you know that programmers will be in decline in a few years?

Nov 08, 2023 am 11:17 AM

"ComputerWorld" magazine once wrote an article saying that "programming will disappear by 1960" because IBM developed a new language FORTRAN, which allows engineers to write the mathematical formulas they need and then submit them. Give the computer a run, so programming ends. A few years later, we heard a new saying: any business person can use business terms to describe their problems and tell the computer what to do. Using this programming language called COBOL, companies no longer need programmers. . Later, it is said that IBM developed a new programming language called RPG that allows employees to fill in forms and generate reports, so most of the company's programming needs can be completed through it.

GR-1 Fourier Intelligent Universal Humanoid Robot is about to start pre-sale!

Sep 27, 2023 pm 08:41 PM

GR-1 Fourier Intelligent Universal Humanoid Robot is about to start pre-sale!

Sep 27, 2023 pm 08:41 PM

The humanoid robot is 1.65 meters tall, weighs 55 kilograms, and has 44 degrees of freedom in its body. It can walk quickly, avoid obstacles quickly, climb steadily up and down slopes, and resist impact interference. You can now take it home! Fourier Intelligence's universal humanoid robot GR-1 has started pre-sale. Robot Lecture Hall Fourier Intelligence's Fourier GR-1 universal humanoid robot has now opened for pre-sale. GR-1 has a highly bionic trunk configuration and anthropomorphic motion control. The whole body has 44 degrees of freedom. It has the ability to walk, avoid obstacles, cross obstacles, go up and down slopes, resist interference, and adapt to different road surfaces. It is a general artificial intelligence system. Ideal carrier. Official website pre-sale page: www.fftai.cn/order#FourierGR-1# Fourier Intelligence needs to be rewritten.

Huawei will launch the Xuanji sensing system in the field of smart wearables, which can assess the user's emotional state based on heart rate

Aug 29, 2024 pm 03:30 PM

Huawei will launch the Xuanji sensing system in the field of smart wearables, which can assess the user's emotional state based on heart rate

Aug 29, 2024 pm 03:30 PM

Recently, Huawei announced that it will launch a new smart wearable product equipped with Xuanji sensing system in September, which is expected to be Huawei's latest smart watch. This new product will integrate advanced emotional health monitoring functions. The Xuanji Perception System provides users with a comprehensive health assessment with its six characteristics - accuracy, comprehensiveness, speed, flexibility, openness and scalability. The system uses a super-sensing module and optimizes the multi-channel optical path architecture technology, which greatly improves the monitoring accuracy of basic indicators such as heart rate, blood oxygen and respiration rate. In addition, the Xuanji Sensing System has also expanded the research on emotional states based on heart rate data. It is not limited to physiological indicators, but can also evaluate the user's emotional state and stress level. It supports the monitoring of more than 60 sports health indicators, covering cardiovascular, respiratory, neurological, endocrine,

Read the smart car skateboard chassis in one article

May 24, 2023 pm 12:01 PM

Read the smart car skateboard chassis in one article

May 24, 2023 pm 12:01 PM

01 What is a skateboard chassis? The so-called skateboard chassis integrates the battery, electric transmission system, suspension, brakes and other components on the chassis in advance to achieve separation of the body and chassis and decoupling the design. Based on this type of platform, car companies can significantly reduce early R&D and testing costs, while quickly responding to market demand to create different models. Especially in the era of driverless driving, the layout of the car is no longer centered on driving, but will focus on space attributes. The skateboard-type chassis can provide more possibilities for the development of the upper cabin. As shown in the picture above, of course when we look at the skateboard chassis, we should not be framed by the first impression of "Oh, it is a non-load-bearing body" when we come up. There were no electric cars back then, so there were no battery packs worth hundreds of kilograms, no steering-by-wire system that could eliminate the steering column, and no brake-by-wire system.