How to check memory usage on the Xiaomi 14 Pro?

PHP editor Xinyi shows you how to check memory usage on the Xiaomi 14 Pro. Knowing how memory is being used helps you keep the phone running efficiently and avoid lag and app crashes. With a few simple taps you can see which apps occupy the most memory, free up that memory in time, and keep your phone running smoothly. Let's master this practical tip together!

How to check the memory usage of the Xiaomi 14 Pro

Open [Settings] on the Xiaomi 14 Pro and tap [Application Management].

A list of all installed apps appears. Scroll to the app you want to inspect and tap it to open its details page.

The details page shows information about the app, such as its size and the storage space it occupies. Note that these figures describe storage, not memory (RAM).

To check how much memory the app uses, tap [Memory], [Memory Usage], or a similarly named option (the exact label may vary) to open the memory management screen.

The memory management screen shows, as a chart or a list, how much memory the app currently occupies along with its memory usage details.
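If you prefer to see per-app memory figures on a computer, Android's standard `adb shell dumpsys meminfo` command lists running processes by memory use. The Python snippet below is a minimal sketch, assuming adb is installed and USB debugging is enabled on the phone. The line layout it parses (the "Total PSS by process" section, values in KB) is an assumption based on typical Android builds and may differ on some HyperOS versions; the helper names `parse_pss_by_process` and `top_apps` are hypothetical.

```python
import re
import subprocess

# Assumed layout of one line in the "Total PSS by process" section, e.g.
#     198,765K: com.android.systemui (pid 2345)
#      88,120K: com.miui.home (pid 3456 / activities)
_LINE = re.compile(r"^\s*([\d,]+)K: (\S+) \(pid (\d+)")


def parse_pss_by_process(text):
    """Return (process_name, pss_kb) pairs, largest memory users first."""
    results = []
    in_section = False
    for line in text.splitlines():
        if line.strip().startswith("Total PSS by process"):
            in_section = True
            continue
        if in_section:
            if not line.strip():
                break  # a blank line ends the section
            match = _LINE.match(line)
            if match:
                kb = int(match.group(1).replace(",", ""))
                results.append((match.group(2), kb))
    return sorted(results, key=lambda item: item[1], reverse=True)


def top_apps(n=5):
    """Query a connected phone over adb and return the n largest processes."""
    output = subprocess.run(
        ["adb", "shell", "dumpsys", "meminfo"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_pss_by_process(output)[:n]


if __name__ == "__main__":
    try:
        for name, kb in top_apps():
            print(f"{name}: {kb / 1024:.1f} MB")
    except (FileNotFoundError, subprocess.CalledProcessError) as err:
        # adb missing, or no authorized device connected
        print(f"Could not read memory info via adb: {err}")
```

Sorting again is redundant if the phone already lists processes in descending order, but it keeps the result correct if a build prints them differently.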

After reading the above, you should now know how to check memory usage on the Xiaomi 14 Pro. Repeat the same steps to check other apps as needed, or clear memory to free up space.




Hot Topics

Large memory optimization, what should I do if the computer upgrades to 16g/32g memory speed and there is no change?

Jun 18, 2024 pm 06:51 PM

Large memory optimization, what should I do if the computer upgrades to 16g/32g memory speed and there is no change?

Jun 18, 2024 pm 06:51 PM

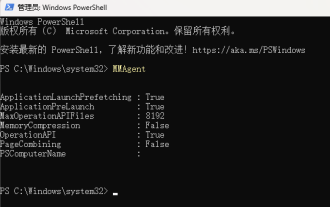

For mechanical hard drives or SATA solid-state drives, you will feel the increase in software running speed. If it is an NVME hard drive, you may not feel it. 1. Import the registry into the desktop and create a new text document, copy and paste the following content, save it as 1.reg, then right-click to merge and restart the computer. WindowsRegistryEditorVersion5.00[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\SessionManager\MemoryManagement]"DisablePagingExecutive"=d

Sources say Samsung Electronics and SK Hynix will commercialize stacked mobile memory after 2026

Sep 03, 2024 pm 02:15 PM

Sources say Samsung Electronics and SK Hynix will commercialize stacked mobile memory after 2026

Sep 03, 2024 pm 02:15 PM

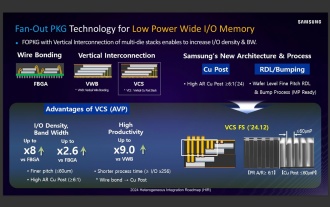

According to news from this website on September 3, Korean media etnews reported yesterday (local time) that Samsung Electronics and SK Hynix’s “HBM-like” stacked structure mobile memory products will be commercialized after 2026. Sources said that the two Korean memory giants regard stacked mobile memory as an important source of future revenue and plan to expand "HBM-like memory" to smartphones, tablets and laptops to provide power for end-side AI. According to previous reports on this site, Samsung Electronics’ product is called LPWide I/O memory, and SK Hynix calls this technology VFO. The two companies have used roughly the same technical route, which is to combine fan-out packaging and vertical channels. Samsung Electronics’ LPWide I/O memory has a bit width of 512

How to fine-tune deepseek locally

Feb 19, 2025 pm 05:21 PM

How to fine-tune deepseek locally

Feb 19, 2025 pm 05:21 PM

Local fine-tuning of DeepSeek class models faces the challenge of insufficient computing resources and expertise. To address these challenges, the following strategies can be adopted: Model quantization: convert model parameters into low-precision integers, reducing memory footprint. Use smaller models: Select a pretrained model with smaller parameters for easier local fine-tuning. Data selection and preprocessing: Select high-quality data and perform appropriate preprocessing to avoid poor data quality affecting model effectiveness. Batch training: For large data sets, load data in batches for training to avoid memory overflow. Acceleration with GPU: Use independent graphics cards to accelerate the training process and shorten the training time.

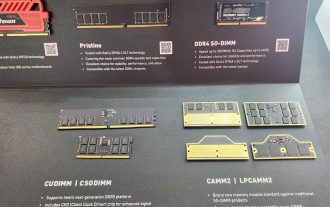

Kingbang launches new DDR5 8600 memory, offering CAMM2, LPCAMM2 and regular models to choose from

Jun 08, 2024 pm 01:35 PM

Kingbang launches new DDR5 8600 memory, offering CAMM2, LPCAMM2 and regular models to choose from

Jun 08, 2024 pm 01:35 PM

According to news from this site on June 7, GEIL launched its latest DDR5 solution at the 2024 Taipei International Computer Show, and provided SO-DIMM, CUDIMM, CSODIMM, CAMM2 and LPCAMM2 versions to choose from. ▲Picture source: Wccftech As shown in the picture, the CAMM2/LPCAMM2 memory exhibited by Jinbang adopts a very compact design, can provide a maximum capacity of 128GB, and a speed of up to 8533MT/s. Some of these products can even be stable on the AMDAM5 platform Overclocked to 9000MT/s without any auxiliary cooling. According to reports, Jinbang’s 2024 Polaris RGBDDR5 series memory can provide up to 8400

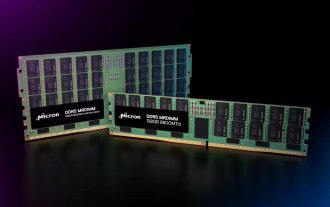

DDR5 MRDIMM and LPDDR6 CAMM memory specifications are ready for launch, JEDEC releases key technical details

Jul 23, 2024 pm 02:25 PM

DDR5 MRDIMM and LPDDR6 CAMM memory specifications are ready for launch, JEDEC releases key technical details

Jul 23, 2024 pm 02:25 PM

According to news from this website on July 23, the JEDEC Solid State Technology Association, the microelectronics standard setter, announced on the 22nd local time that the DDR5MRDIMM and LPDDR6CAMM memory technical specifications will be officially launched soon, and introduced the key details of these two memories. The "MR" in DDR5MRDIMM stands for MultiplexedRank, which means that the memory supports two or more Ranks and can combine and transmit multiple data signals on a single channel without additional physical The connection can effectively increase the bandwidth. JEDEC has planned multiple generations of DDR5MRDIMM memory, with the goal of eventually increasing its bandwidth to 12.8Gbps, compared with the current 6.4Gbps of DDR5RDIMM memory.

Lexar God of War Wings ARES RGB DDR5 8000 Memory Picture Gallery: Colorful White Wings supports RGB

Jun 25, 2024 pm 01:51 PM

Lexar God of War Wings ARES RGB DDR5 8000 Memory Picture Gallery: Colorful White Wings supports RGB

Jun 25, 2024 pm 01:51 PM

When the prices of ultra-high-frequency flagship memories such as 7600MT/s and 8000MT/s are generally high, Lexar has taken action. They have launched a new memory series called Ares Wings ARES RGB DDR5, with 7600 C36 and 8000 C38 is available in two specifications. The 16GB*2 sets are priced at 1,299 yuan and 1,499 yuan respectively, which is very cost-effective. This site has obtained the 8000 C38 version of Wings of War, and will bring you its unboxing pictures. The packaging of Lexar Wings ARES RGB DDR5 memory is well designed, using eye-catching black and red color schemes with colorful printing. There is an exclusive &quo in the upper left corner of the packaging.

Xiaomi 14, Redmi K70 and other models will launch Thermal OS full AI functions: no need to apply for qualifications

Aug 07, 2024 pm 08:02 PM

Xiaomi 14, Redmi K70 and other models will launch Thermal OS full AI functions: no need to apply for qualifications

Aug 07, 2024 pm 08:02 PM

According to news on August 6, Xiaomi community announced that after multiple rounds of testing and adjustments, Xiaomi will launch full AI functions on some models of mobile phones. That is to say, you do not need to apply for internal testing qualifications for AI functions in the community. As long as you meet the mobile phone model and system version requirements, you can start the function experience. According to reports, the full range of AI functions include: Xiaoai input assistant, AI photo taking, AI image search, real-time subtitles, on-device Xiaoai classmate pictures, and on-device photo album AI editing. The official said that this project will complete the function push and grayscale on a model-by-model basis within this month. If you cannot experience it yet, please wait patiently. The specific push time will be subject to the follow-up push time. Supported models: Xiaomi 14 Xiaomi 14Pro Xiaomi 14Pro titanium Xiaomi 14Ultra Xiaomi Civi4Pro Xiaomi MIX Flip Xiaomi M

Longsys displays FORESEE LPCAMM2 notebook memory: up to 64GB, 7500MT/s

Jun 05, 2024 pm 02:22 PM

Longsys displays FORESEE LPCAMM2 notebook memory: up to 64GB, 7500MT/s

Jun 05, 2024 pm 02:22 PM

According to news from this website on May 16, Longsys, the parent company of the Lexar brand, announced that it will demonstrate a new form of memory - FORESEELPCAMM2 at CFMS2024. FORESEELPCAMM2 is equipped with LPDDR5/5x particles, is compatible with 315ball and 496ball designs, supports frequencies of 7500MT/s and above, and has product capacity options of 16GB, 32GB, and 64GB. In terms of product technology, FORESEELPCAMM2 adopts a new design architecture to directly package 4 x32LPDDR5/5x memory particles on the compression connector, realizing a 128-bit memory bus on a single memory module, providing a more efficient packaging than standard memory modules.