15 recommended free and open source image annotation tools

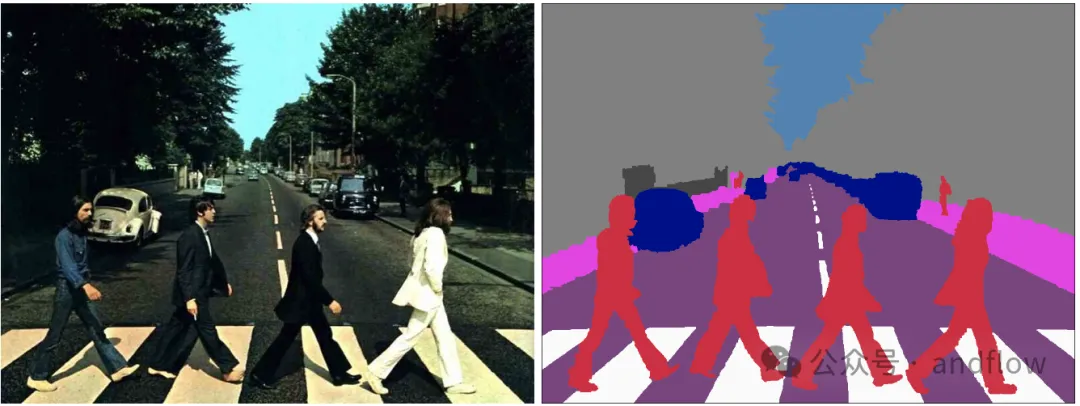

Image annotation is the process of attaching labels or descriptive information to images so that their content carries explicit meaning. It is critical to machine learning because annotated images are what vision models are trained on to identify individual elements in images accurately. Annotations let a computer learn the semantics and context behind images, which improves its ability to understand and analyze image content. Image annotation is used in many fields, such as computer vision, natural language processing and graphics. Vision models trained on annotated data have many applications, such as helping vehicles identify obstacles on the road and supporting disease detection and diagnosis through medical image recognition.

This article recommends some of the better free and open source image annotation tools.

1.Makesense.ai

https://www.php.cn/link/9e411b2d0cbcc1d9cd8775e89e96774f

https://www.php.cn/link/47af10edfc4c96329531345635a4baa9

Makesense.ai is a free, online, cross-platform tool for labeling photos, well suited to small computer vision deep learning projects. It simplifies dataset preparation, and labels can be downloaded in multiple formats. The application is written in TypeScript and built on the React/Redux framework. To automate image annotation, it integrates AI models such as YOLO, SSD pre-trained on the COCO dataset, and PoseNet. The AI features run on TensorFlow.js, so photos never have to be sent to a server, which helps keep data private and secure.

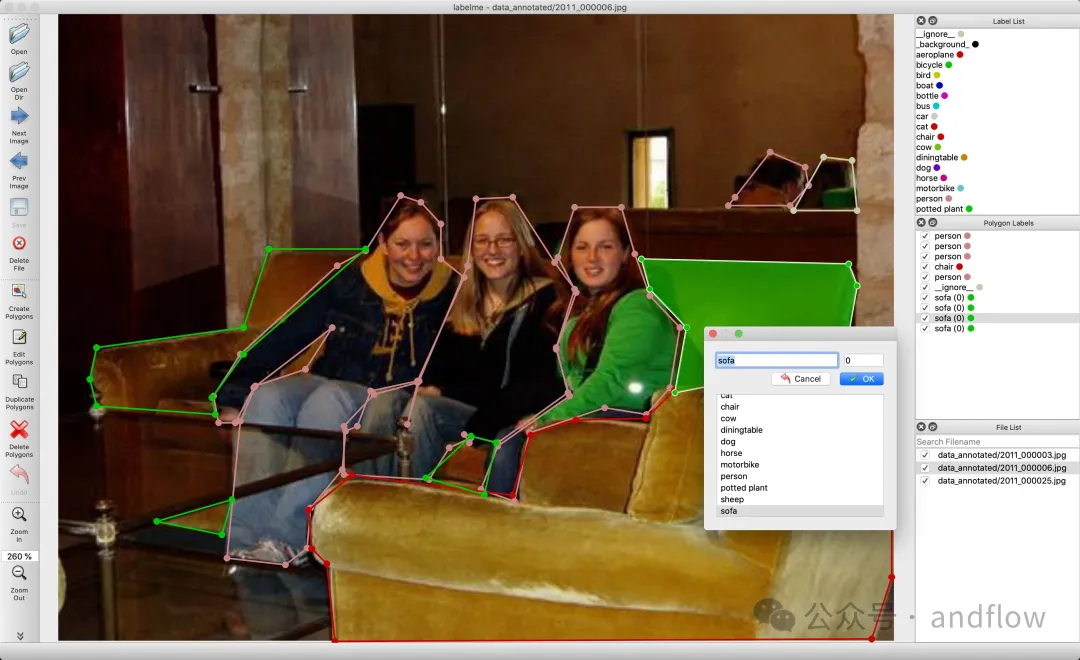

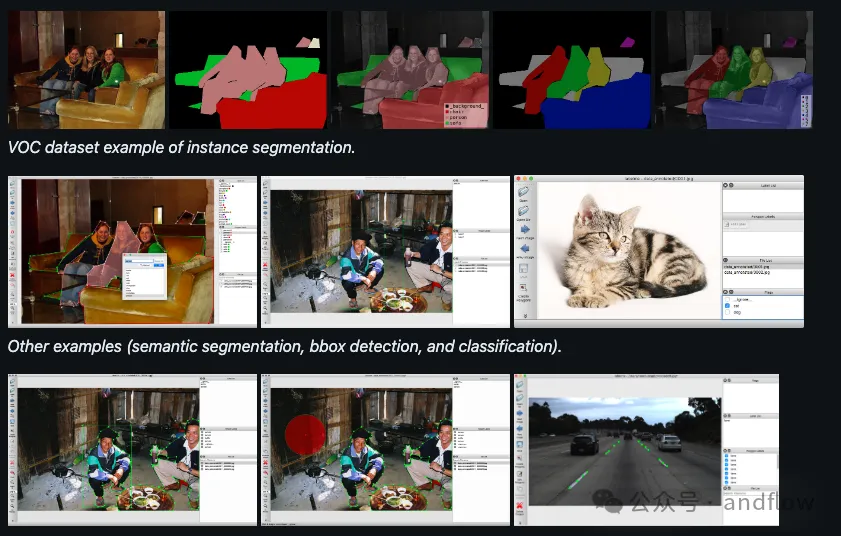

2.Labelme

Functional features:

- Supports polygon, rectangle, circle, line, point and image-level flag annotation

- Runs on Ubuntu, macOS and Windows

- Annotation information is saved as a JSON file

- Advanced usage examples

- Assign flags to the entire image

- Assign labels to individual faces
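Because Labelme saves annotations as plain JSON, they can be consumed with the standard library alone. The sketch below assumes the commonly seen Labelme layout (a top-level `shapes` list whose entries carry `label`, `points` and `shape_type`) and that a circle is stored as two points, its center and a point on the circle; check both assumptions against the files your Labelme version writes. `shapes_to_boxes` is a hypothetical helper name, not part of Labelme.

```python
import json
import math
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax) in pixels


def shapes_to_boxes(labelme_json: str) -> List[Tuple[str, Box]]:
    """Return (label, axis-aligned bounding box) for each shape in a Labelme JSON document."""
    data = json.loads(labelme_json)
    results: List[Tuple[str, Box]] = []
    for shape in data.get("shapes", []):
        points = shape["points"]
        if shape.get("shape_type") == "circle":
            # Assumption: a circle is stored as [center, point on the circle].
            (cx, cy), (px, py) = points[0], points[1]
            r = math.hypot(px - cx, py - cy)
            box = (cx - r, cy - r, cx + r, cy + r)
        else:
            # Polygons, rectangles, lines and points: take the extent of the points.
            xs = [p[0] for p in points]
            ys = [p[1] for p in points]
            box = (min(xs), min(ys), max(xs), max(ys))
        results.append((shape["label"], box))
    return results


if __name__ == "__main__":
    sample = json.dumps({
        "shapes": [
            {"label": "cat", "shape_type": "polygon",
             "points": [[10, 20], [50, 15], [40, 60]]},
            {"label": "ball", "shape_type": "circle",
             "points": [[100, 100], [103, 104]]},
        ]
    })
    print(shapes_to_boxes(sample))
    # [('cat', (10, 15, 50, 60)), ('ball', (95.0, 95.0, 105.0, 105.0))]
```

Converting every shape to an axis-aligned box is a lossy choice that suits detection training; for segmentation you would keep the `points` as they are.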

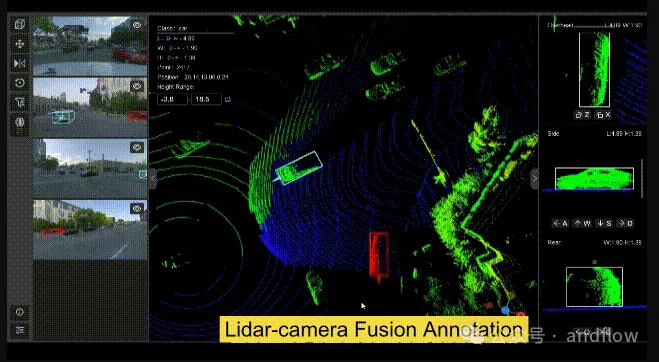

3.Xtreme1

https://www.php.cn/link/ae9ed3423e5d1c1fe8769d705207f040

- Supports data annotation of images, 3D LiDAR and 2D/3D sensor fusion datasets

- Built-in pre-labeling and interactive models supporting 2D/3D object detection, segmentation and classification

- Configurable ontology center for general classes (with hierarchy) and attributes for model training

- Data management and quality monitoring

- Tools for finding and fixing label errors

- Visualization of model results to assist model evaluation

- RLHF for large language models (beta)

- Easy installation with Docker or from source code

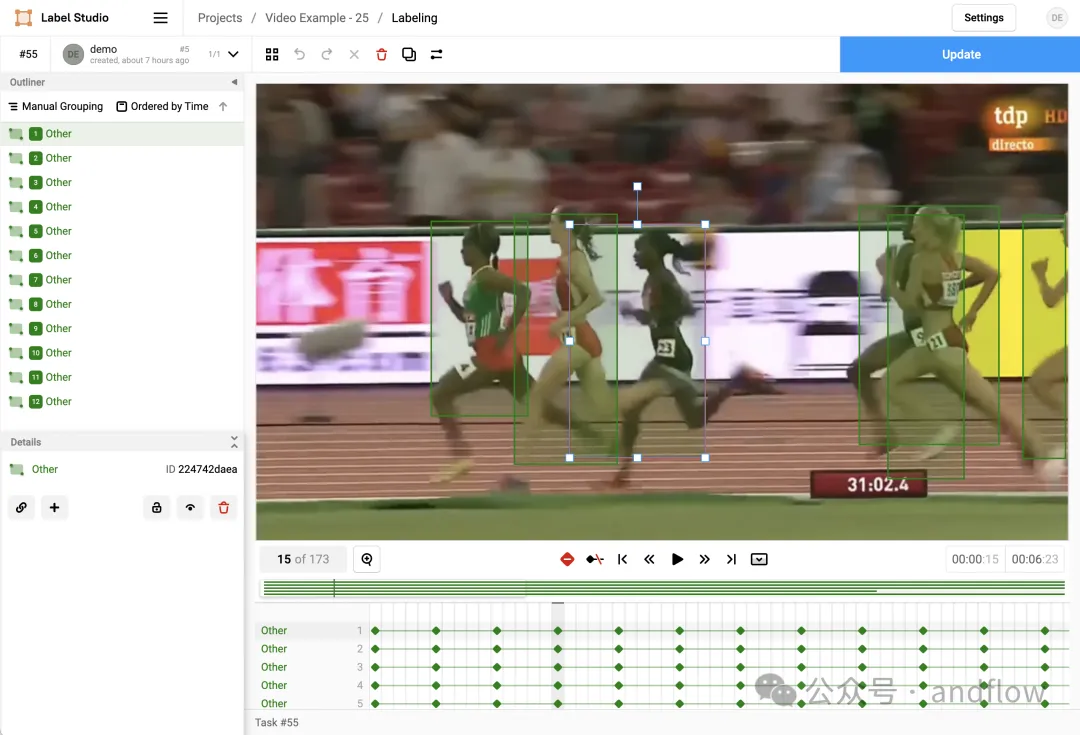

4.Label Studio

https://www.php.cn/link/359f449e012b58f30cbc80ea8b9e794a

- It has a user-friendly interface, can export data in standardized formats, supports integration with machine learning models, and can be customized for specific projects.

- It is released under the Apache-2.0 open source license.
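When Label Studio exports rectangle annotations as JSON, each result stores `x`, `y`, `width` and `height` as percentages of the image size, alongside `original_width` and `original_height`. The minimal sketch below, assuming that export layout and unrotated boxes, converts one such result to pixel corners; `result_to_pixels` is a hypothetical helper, not part of Label Studio's API.

```python
from typing import Dict, Tuple


def result_to_pixels(result: Dict) -> Tuple[str, float, float, float, float]:
    """Convert one rectangle result from a Label Studio JSON export to
    (label, xmin, ymin, xmax, ymax) in pixels.

    Assumes value["x"], ["y"], ["width"], ["height"] are percentages (0-100)
    and that the box is not rotated; rotated boxes would need extra handling.
    """
    value = result["value"]
    img_w = result["original_width"]
    img_h = result["original_height"]
    xmin = value["x"] / 100.0 * img_w
    ymin = value["y"] / 100.0 * img_h
    xmax = xmin + value["width"] / 100.0 * img_w
    ymax = ymin + value["height"] / 100.0 * img_h
    # A rectangle result may carry several labels; take the first for simplicity.
    label = value["rectanglelabels"][0]
    return label, xmin, ymin, xmax, ymax


if __name__ == "__main__":
    example = {
        "value": {"x": 25, "y": 10, "width": 50, "height": 20,
                  "rotation": 0, "rectanglelabels": ["car"]},
        "original_width": 800,
        "original_height": 600,
    }
    print(result_to_pixels(example))
```

Storing percentages keeps annotations valid if the image is displayed at a different size; the trade-off is that every consumer must know the original image dimensions.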

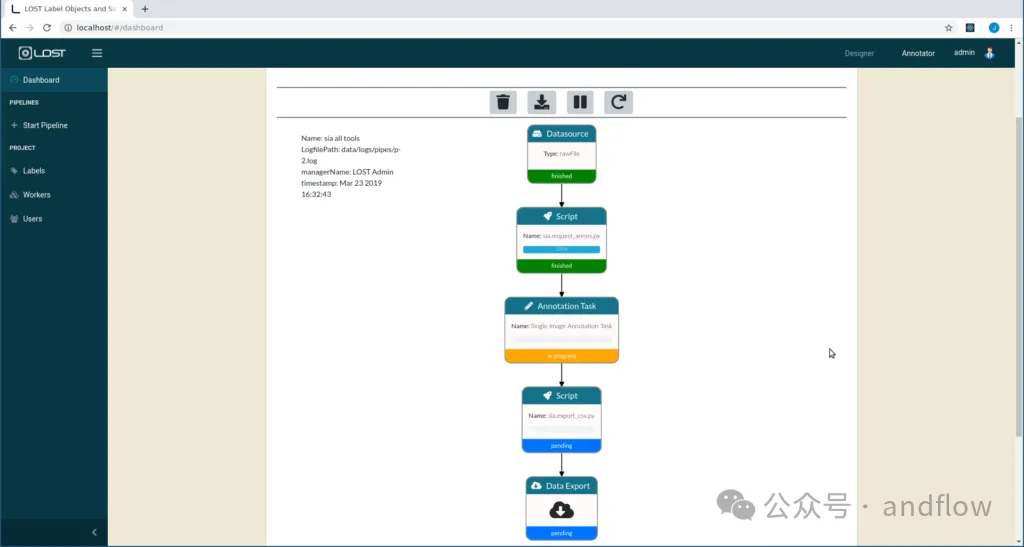

5.LOST

https://www.php.cn/link/254b6cccc84a3b7e5c696e67c9ef656e

- Web-based collaborative image annotation framework

- Pre-built annotation pipelines for instant image annotation

- Custom annotation pipelines

- Extensible application

- Easy connection to external file systems such as S3 buckets or Azure Blob Storage

- Visualization of the annotation process in the browser

- Can be deployed locally or on a web server

- Support for organizing label trees

- Monitoring of the annotation process

- In-browser annotation

- Ability to model semi-automatic annotation pipelines

- Annotation suggestion generation

- Single Image Annotation tool (SIA) for annotating bounding boxes, polygons, points or lines

- Multi Image Annotation tool (MIA) for annotating whole clusters of images

- Export labeling functions

- Personal and project-based labeling statistics

- Colored label trees for organizing labels

- View labeling functions

- Import and export of pipeline projects

- Pipeline project sharing

- JupyterLab integration for easy pipeline development

- LDAP integration

- Email notifications

- Scalable design that distributes compute-intensive processes across multiple machines

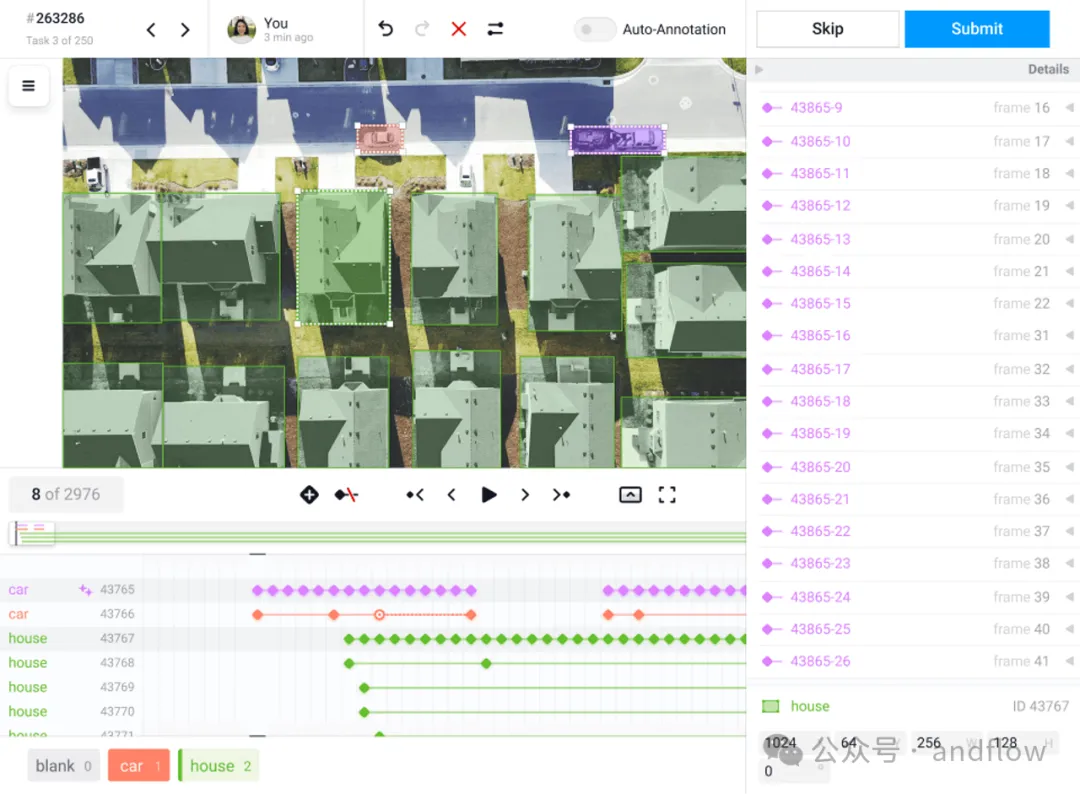

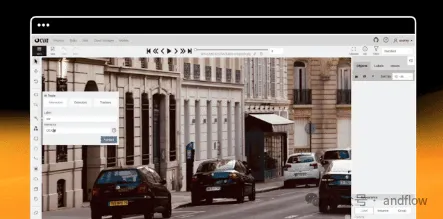

6.CVAT

https://www.php.cn/link/4d91e93c7905243a769485162b66e3dc

CVAT (Computer Vision Annotation Tool) is an interactive video and image annotation tool widely used in computer vision. It supports a data-centric approach to artificial intelligence and is available online for free, with a paid subscription for additional features. CVAT can also be self-hosted, and enterprise support is available for advanced features.

7.Gromit-MPX

https://www.php.cn/link/388ac20c845a327f97edece8acba6237

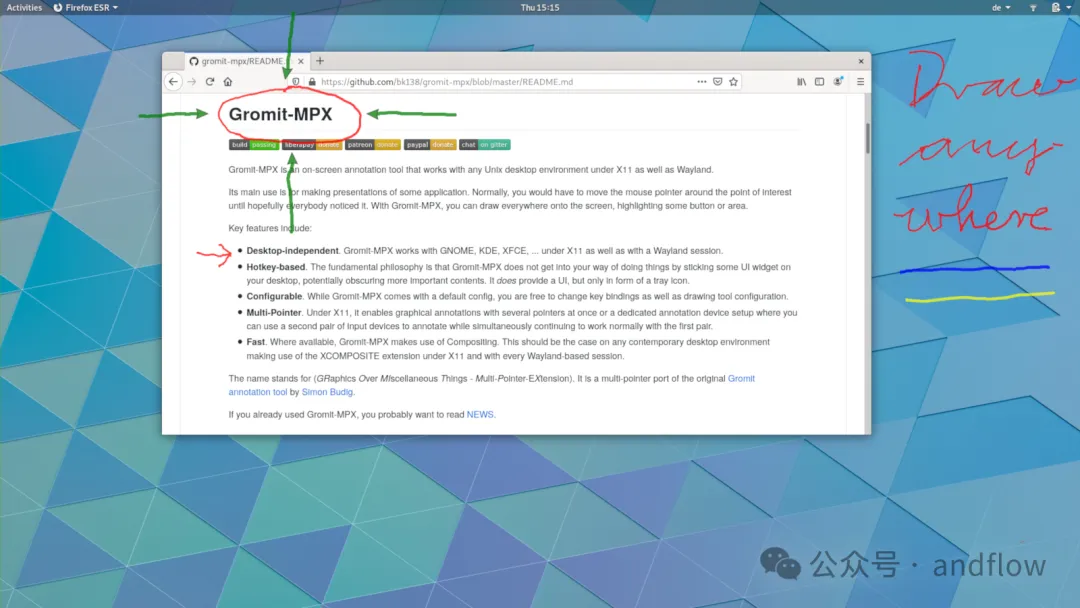

Gromit-MPX is an annotation tool for Unix desktop environments that allows users to draw directly on the screen and highlight points of interest to enhance presentations.

8.MyVision

https://www.php.cn/link/6afea581e2d33bf935e94036b41979b2

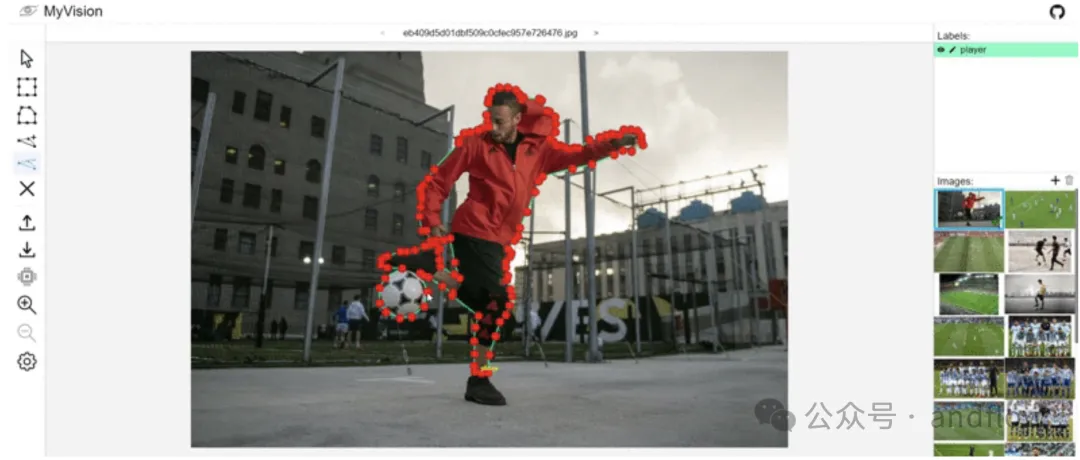

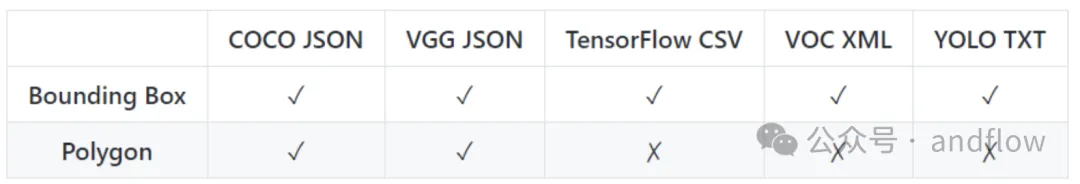

MyVision is a free online image annotation tool for generating machine learning training data for computer vision. It supports drawing bounding boxes and polygons for object annotation, editing polygons, and working with various dataset formats. It can also annotate automatically with the "COCO-SSD" model, which runs locally to keep data private and secure.

Functional features:

- Draw bounding boxes and polygons for object annotations

- Polygon manipulation: edit, remove and add points

- Supports various dataset formats

- Supports automatic annotation using the "COCO-SSD" model

- Runs locally to maintain data privacy

- Import existing annotation projects and continue working on them

- Convert datasets from one format to another
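Converting a dataset between formats is largely a matter of converting box conventions. As a minimal sketch (not MyVision's own code), the three common conventions are: COCO `[x, y, width, height]` in pixels from the top-left corner, Pascal VOC `(xmin, ymin, xmax, ymax)` in pixels, and YOLO `(x_center, y_center, width, height)` normalized by the image size. The function names are illustrative, and the sketch ignores the 1-based pixel convention some VOC tools use.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]


def coco_to_voc(b: Box) -> Box:
    """COCO [x, y, w, h] -> VOC (xmin, ymin, xmax, ymax)."""
    x, y, w, h = b
    return (x, y, x + w, y + h)


def voc_to_coco(b: Box) -> Box:
    """VOC (xmin, ymin, xmax, ymax) -> COCO [x, y, w, h]."""
    xmin, ymin, xmax, ymax = b
    return (xmin, ymin, xmax - xmin, ymax - ymin)


def voc_to_yolo(b: Box, img_w: int, img_h: int) -> Box:
    """VOC pixel corners -> YOLO normalized (x_center, y_center, w, h)."""
    xmin, ymin, xmax, ymax = b
    return ((xmin + xmax) / 2 / img_w, (ymin + ymax) / 2 / img_h,
            (xmax - xmin) / img_w, (ymax - ymin) / img_h)


def yolo_to_voc(b: Box, img_w: int, img_h: int) -> Box:
    """YOLO normalized (x_center, y_center, w, h) -> VOC pixel corners."""
    xc, yc, w, h = b
    return ((xc - w / 2) * img_w, (yc - h / 2) * img_h,
            (xc + w / 2) * img_w, (yc + h / 2) * img_h)


if __name__ == "__main__":
    coco_box = (50, 25, 100, 50)  # in a 200 x 100 image
    voc_box = coco_to_voc(coco_box)
    print(voc_box)                         # (50, 25, 150, 75)
    print(voc_to_yolo(voc_box, 200, 100))  # (0.5, 0.5, 0.5, 0.5)
```

Routing every conversion through one intermediate convention (here VOC corners) keeps the number of converter functions linear in the number of formats.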

9.LabelImg

https://www.php.cn/link/112a8e92dcedcda4237de18e9126b2d2

LabelImg is a popular image annotation tool that has joined the Label Studio community and is no longer actively developed. Label Studio is a flexible open source data labeling tool for various types of data, including images, text, audio, video and time series data.

LabelImg saves annotations as XML files in PASCAL VOC format. In addition, it supports the YOLO and CreateML formats.
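A PASCAL VOC file keeps the image size under `<size>` and one `<object>` per box, each with a `<name>` and a `<bndbox>` holding `xmin`, `ymin`, `xmax` and `ymax`. The minimal sketch below reads such a file with Python's standard `xml.etree.ElementTree`; `read_voc` is a hypothetical helper, and the sample XML is illustrative rather than taken from LabelImg.

```python
import xml.etree.ElementTree as ET
from typing import List, Tuple

VocObject = Tuple[str, int, int, int, int]  # (name, xmin, ymin, xmax, ymax)

SAMPLE = """<annotation>
  <filename>dog.jpg</filename>
  <size><width>200</width><height>100</height><depth>3</depth></size>
  <object>
    <name>dog</name>
    <bndbox><xmin>50</xmin><ymin>25</ymin><xmax>150</xmax><ymax>75</ymax></bndbox>
  </object>
</annotation>"""


def read_voc(xml_text: str) -> Tuple[int, int, List[VocObject]]:
    """Parse a PASCAL VOC annotation and return (width, height, objects)."""
    root = ET.fromstring(xml_text)
    size = root.find("size")
    width = int(size.findtext("width"))
    height = int(size.findtext("height"))
    objects: List[VocObject] = []
    for obj in root.iter("object"):
        box = obj.find("bndbox")
        # Some tools write fractional coordinates, so parse via float first.
        coords = [int(float(box.findtext(tag)))
                  for tag in ("xmin", "ymin", "xmax", "ymax")]
        objects.append((obj.findtext("name"), *coords))
    return width, height, objects


if __name__ == "__main__":
    print(read_voc(SAMPLE))  # (200, 100, [('dog', 50, 25, 150, 75)])
```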

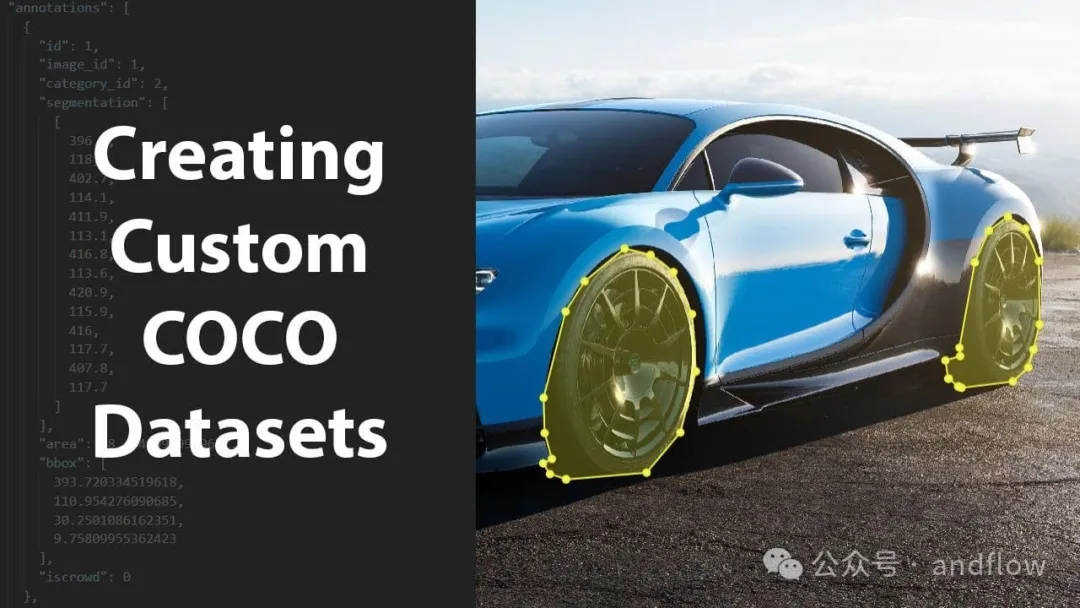

10.Coco Annotator

https://www.php.cn/link/e3743b463beb38a2a24eebe5ecbad410

COCO Annotator is an efficient and versatile web-based image labeling tool designed to create datasets for training image localization and object detection.

It provides segmentation labeling, object instance tracking, and labeling of objects whose visible parts are disconnected. It stores and exports annotations in COCO format through an intuitive, customizable interface.

Functional features:

- Web-based tool

- Efficient and versatile image labeling

- Designed for the creation of training data for image localization and object detection

- Segment labeling

- Object Instance Tracking

- Label objects with disconnected visible parts

- Store and export annotations in COCO format

- Intuitive and customizable interface

- Allows the user to manually define areas in the image

- Create text descriptions

- Object labeling via bounding boxes, masking tools, or marker points

- Freeform curve or polygon annotations

- Direct export to COCO format

- Segmentation of objects

- Ability to add keypoints

- Useful API endpoints for data analysis

- Import a dataset in COCO format

- Label disconnected objects as single instances

- Label image fragments with any number of labels simultaneously

- Custom metadata for each instance or object

- Advanced selection tools such as DEXTR, MaskRCNN, and Magic Wand

- Annotate images with semi-trained models

- Generate dataset using Google Images

- User Authentication System
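In COCO format, an object whose visible parts are disconnected can still be a single annotation: its `segmentation` holds several polygons, each a flat `[x1, y1, x2, y2, ...]` list, and `bbox` is `[x, y, width, height]`. The minimal sketch below builds such an annotation and derives `bbox` and `area` from the polygons using the shoelace formula. `make_annotation` is a hypothetical helper, not part of COCO Annotator, and real tools may compute `area` from the rasterized mask, which gives slightly different values.

```python
from typing import Dict, List


def polygon_area(flat: List[float]) -> float:
    """Shoelace formula for a polygon given as [x1, y1, x2, y2, ...]."""
    xs, ys = flat[0::2], flat[1::2]
    n = len(xs)
    s = 0.0
    for i in range(n):
        j = (i + 1) % n  # wrap around to close the polygon
        s += xs[i] * ys[j] - xs[j] * ys[i]
    return abs(s) / 2.0


def make_annotation(ann_id: int, image_id: int, category_id: int,
                    polygons: List[List[float]]) -> Dict:
    """Build one COCO-style annotation from one or more polygons of the same object."""
    xs = [x for poly in polygons for x in poly[0::2]]
    ys = [y for poly in polygons for y in poly[1::2]]
    xmin, ymin = min(xs), min(ys)
    return {
        "id": ann_id,
        "image_id": image_id,
        "category_id": category_id,
        "segmentation": polygons,  # several polygons = one instance, disconnected parts
        "area": sum(polygon_area(p) for p in polygons),
        "bbox": [xmin, ymin, max(xs) - xmin, max(ys) - ymin],
        "iscrowd": 0,
    }


if __name__ == "__main__":
    # One object split in two by an occluder, e.g. a car behind a pole.
    left = [0, 0, 10, 0, 10, 10, 0, 10]
    right = [20, 0, 30, 0, 30, 10, 20, 10]
    ann = make_annotation(1, 1, 3, [left, right])
    print(ann["bbox"], ann["area"])  # [0, 0, 30, 10] 200.0
```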

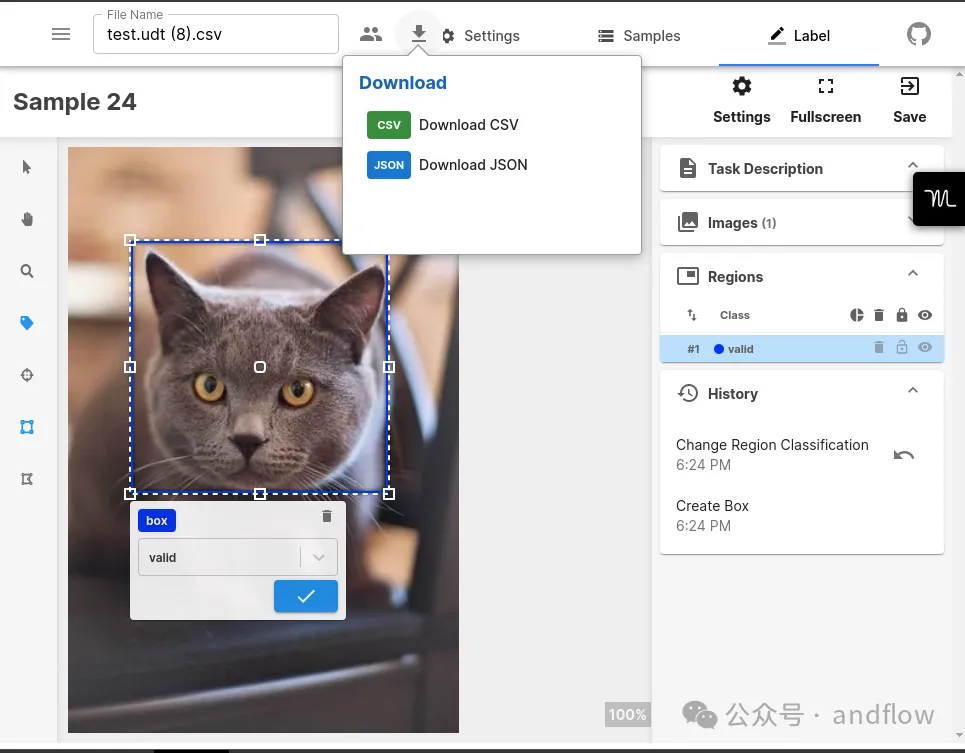

11.Universal Data Tool

https://www.php.cn/link/c4dc035d67bc669546c560622ac4bdd4

Universal Data Tool is a versatile application for editing and annotating data types such as images, text, audio, and documents. It supports tasks such as image segmentation, text classification, and audio transcription. The tool enables real-time collaboration, runs on a variety of platforms, and supports multiple data formats.

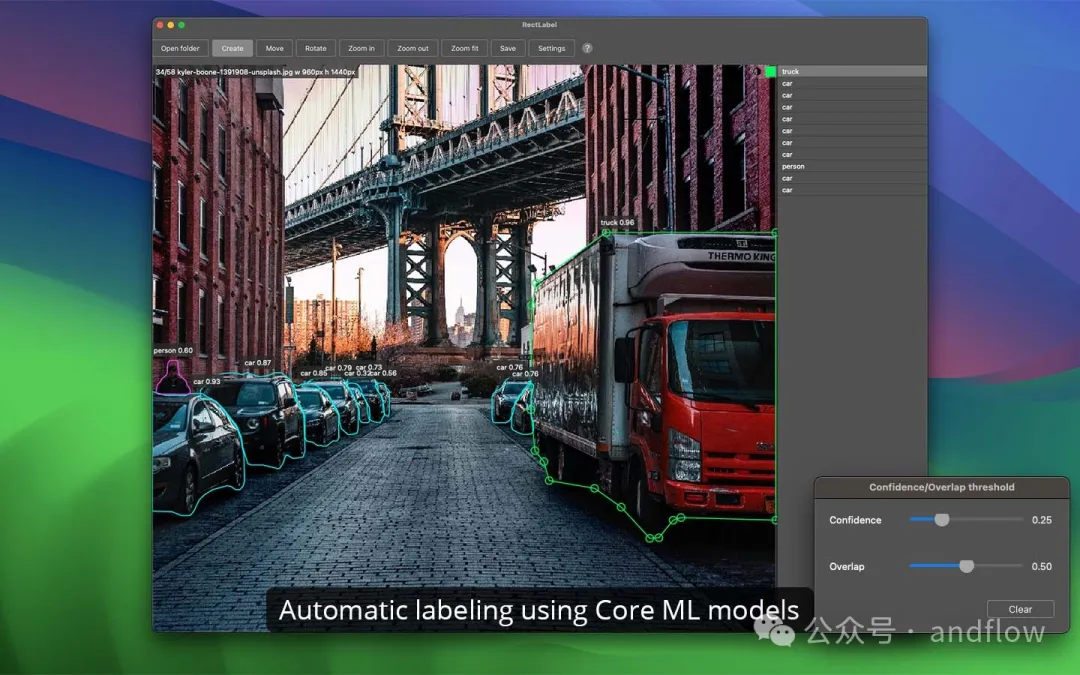

12.RectLabel

https://www.php.cn/link/1b31a4f23c784d5b162a3066fa9aaf4f

RectLabel is an offline image annotation tool for object detection and segmentation.

Key features:

- Label polygons and pixels using the Segment Anything model

- Automatic labeling using Core ML models

- Automatic text recognition of lines and words

- Label polygons with holes

- Label cubic Bezier curves, line segments and points

- Label oriented bounding boxes in aerial imagery

- Label keypoints with skeletons

- Label pixels with brushes and superpixels

- Quickly set up objects, attributes, hotkeys and labels

- Search for objects, attributes and image names in the gallery view

- Export to COCO, Labelme, Create ML, YOLO, DOTA and CSV formats

- Export indexed color mask images and grayscale mask images

- Convert videos to image frames, augment images, etc.
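Oriented bounding boxes, such as those labeled in aerial imagery and exported to DOTA, are stored as four corner points rather than as an axis-aligned rectangle; a DOTA label line reads `x1 y1 x2 y2 x3 y3 x4 y4 category difficult`. The minimal sketch below assumes a box described by center, size and rotation angle (a parameterization chosen for illustration, not necessarily RectLabel's internal one) and computes its corners with a 2D rotation. Both function names are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def oriented_box_corners(cx: float, cy: float, w: float, h: float,
                         angle_deg: float) -> List[Point]:
    """Corners of a w x h box centered at (cx, cy), rotated by angle_deg.

    Uses the standard rotation matrix; because image y points down,
    a positive angle appears clockwise on screen.
    """
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    corners = []
    # Corner offsets relative to the center, in order around the box.
    for dx, dy in ((-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)):
        corners.append((cx + dx * cos_a - dy * sin_a,
                        cy + dx * sin_a + dy * cos_a))
    return corners


def to_dota_line(corners: List[Point], category: str, difficult: int = 0) -> str:
    """Format four corners as one DOTA-style label line."""
    coords = " ".join(f"{x:.1f} {y:.1f}" for x, y in corners)
    return f"{coords} {category} {difficult}"


if __name__ == "__main__":
    print(to_dota_line(oriented_box_corners(50, 50, 20, 10, 0), "plane"))
    # 40.0 45.0 60.0 45.0 60.0 55.0 40.0 55.0 plane 0
    print(to_dota_line(oriented_box_corners(50, 50, 20, 10, 30), "plane"))
```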

13.OpenLabeling

https://www.php.cn/link/03c4207fa67ee3ea4f42c748980eda86

OpenLabeling is an open source tool for labeling images and videos. It supports multiple formats such as PASCAL VOC and YOLO Darknet.

To speed up labeling, the tool uses deep learning object detection models to propose labels, and Distractor-aware Siamese Networks for visual object tracking as well as OpenCV trackers to propagate bounding boxes across video frames.

14.bbox-visualizer

https://www.php.cn/link/ed71773d43d53fa70ecf593c6582d9cc

bbox-visualizer helps users draw bounding boxes around objects and place labels on them without manually calculating label positions. It provides various visualization styles for labeling objects after recognition. Bounding box points use the format (xmin, ymin, xmax, ymax).
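To show what the `(xmin, ymin, xmax, ymax)` convention means in practice, here is a minimal NumPy sketch that draws a box outline into an image array, clipping the box to the image bounds. It is not bbox-visualizer's implementation, and `draw_box` is a hypothetical name; it treats `xmax` and `ymax` as inclusive pixel indices, which is an assumption some tools do not share.

```python
import numpy as np


def draw_box(img: np.ndarray, bbox, color, thickness: int = 1) -> np.ndarray:
    """Return a copy of img (H x W x C) with the outline of
    bbox = (xmin, ymin, xmax, ymax) drawn in color.

    The box is clipped to the image, so a box that extends past the
    border is drawn along the border.
    """
    out = img.copy()
    h, w = out.shape[:2]
    xmin, ymin, xmax, ymax = (int(round(v)) for v in bbox)
    xmin, xmax = max(0, xmin), min(w - 1, xmax)
    ymin, ymax = max(0, ymin), min(h - 1, ymax)
    if xmin > xmax or ymin > ymax:
        return out  # box lies entirely outside the image
    t = thickness
    out[ymin:min(ymin + t, ymax + 1), xmin:xmax + 1] = color       # top edge
    out[max(ymax - t + 1, ymin):ymax + 1, xmin:xmax + 1] = color   # bottom edge
    out[ymin:ymax + 1, xmin:min(xmin + t, xmax + 1)] = color       # left edge
    out[ymin:ymax + 1, max(xmax - t + 1, xmin):xmax + 1] = color   # right edge
    return out


if __name__ == "__main__":
    canvas = np.zeros((10, 10, 3), dtype=np.uint8)
    boxed = draw_box(canvas, (2, 3, 6, 7), (255, 0, 0))
    print(int((boxed[..., 0] == 255).sum()))  # 16 outline pixels for a 5 x 5 box
```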

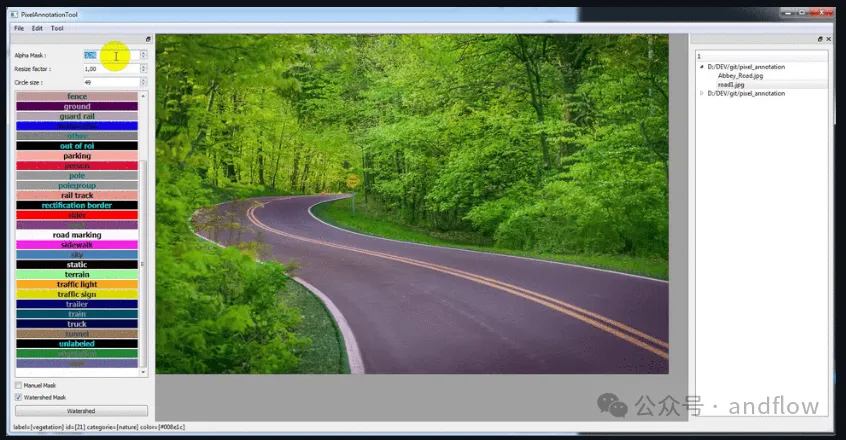

15.PixelAnnotationTool

https://www.php.cn/link/2e3e809d4082093c8bbf499ae9966cfc

PixelAnnotationTool lets users quickly annotate the images in a directory by hand, with the help of OpenCV's watershed algorithm.

Users first mark regions with a brush and then run the algorithm. If the resulting segmentation needs correction, they can paint new markers over the wrong regions and run it again.
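The idea behind marker-based watershed can be sketched as a priority flood: the brush strokes are seeds with region labels, and unlabeled pixels are claimed in order of increasing intensity (or gradient) by whichever neighboring region reaches them first. The pure-Python sketch below illustrates only that idea; unlike OpenCV's `cv2.watershed`, it does not mark boundary pixels with -1, and `flood_from_markers` is a hypothetical name.

```python
import heapq
from typing import List

Grid = List[List[int]]
NEIGHBORS = ((-1, 0), (1, 0), (0, -1), (0, 1))


def flood_from_markers(image: Grid, markers: Grid) -> Grid:
    """Grow labeled markers (> 0) into unlabeled pixels (0), lowest intensity first."""
    h, w = len(image), len(image[0])
    labels = [row[:] for row in markers]
    heap = []
    counter = 0  # tie-breaker so equal intensities are processed in push order

    def push_neighbors(y: int, x: int, label: int) -> None:
        nonlocal counter
        for dy, dx in NEIGHBORS:
            ny, nx = y + dy, x + dx
            if 0 <= ny < h and 0 <= nx < w and labels[ny][nx] == 0:
                heapq.heappush(heap, (image[ny][nx], counter, ny, nx, label))
                counter += 1

    # Seed the queue with the neighbors of every user-painted marker pixel.
    for y in range(h):
        for x in range(w):
            if labels[y][x] > 0:
                push_neighbors(y, x, labels[y][x])

    while heap:
        _, _, y, x, label = heapq.heappop(heap)
        if labels[y][x] != 0:
            continue  # already claimed by an earlier, lower-intensity path
        labels[y][x] = label
        push_neighbors(y, x, label)
    return labels


if __name__ == "__main__":
    # A bright ridge (9) separates two dark regions.
    image = [[0, 0, 9, 0, 0],
             [0, 0, 9, 0, 0]]
    markers = [[1, 0, 0, 0, 2],
               [0, 0, 0, 0, 0]]
    for row in flood_from_markers(image, markers):
        print(row)
```

Correcting a result, as described above, amounts to adding more marker pixels and running the flood again.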

The above is the detailed content of 15 recommended open source free image annotation tools. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1386

1386

52

52

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

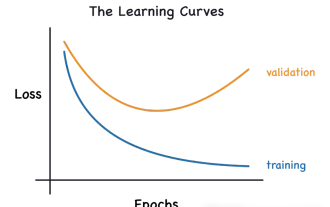

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

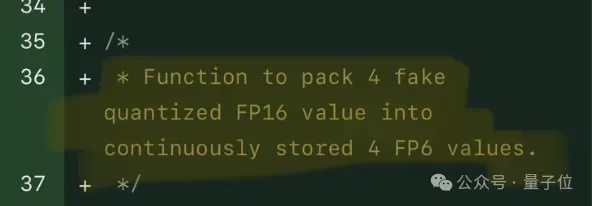

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

FP8 and lower floating point quantification precision are no longer the "patent" of H100! Lao Huang wanted everyone to use INT8/INT4, and the Microsoft DeepSpeed team started running FP6 on A100 without official support from NVIDIA. Test results show that the new method TC-FPx's FP6 quantization on A100 is close to or occasionally faster than INT4, and has higher accuracy than the latter. On top of this, there is also end-to-end large model support, which has been open sourced and integrated into deep learning inference frameworks such as DeepSpeed. This result also has an immediate effect on accelerating large models - under this framework, using a single card to run Llama, the throughput is 2.65 times higher than that of dual cards. one

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Machine learning is an important branch of artificial intelligence that gives computers the ability to learn from data and improve their capabilities without being explicitly programmed. Machine learning has a wide range of applications in various fields, from image recognition and natural language processing to recommendation systems and fraud detection, and it is changing the way we live. There are many different methods and theories in the field of machine learning, among which the five most influential methods are called the "Five Schools of Machine Learning". The five major schools are the symbolic school, the connectionist school, the evolutionary school, the Bayesian school and the analogy school. 1. Symbolism, also known as symbolism, emphasizes the use of symbols for logical reasoning and expression of knowledge. This school of thought believes that learning is a process of reverse deduction, through existing

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,