ICLR 2024 | Model critical layers for federated learning backdoor attacks

Federated learning lets multiple parties train a model jointly while keeping their data private. However, because the server cannot monitor the training that participants run locally, a participant can tamper with its local model and introduce security risks, such as backdoor attacks, into the overall federated learning model.

This article focuses on how to launch a backdoor attack on federated learning when the training framework is protected by defenses. The paper finds that backdoor implantation is closely tied to a subset of neural network layers, which it calls backdoor-critical layers. In federated learning, the clients participating in training are distributed across different devices. Each client trains its own model and then uploads the updated model parameters to the server for aggregation. Because the participating clients are not trusted and carry a certain risk, the server applies a defense algorithm to the uploaded updates.

Based on the discovery of backdoor-critical layers, this article proposes to bypass defense detection by attacking only those layers, so that an attacker who controls a small number of participants can carry out an efficient backdoor attack.
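The train-locally, aggregate-on-server loop described above can be illustrated with a minimal sketch. It assumes plain, unweighted parameter averaging in the style of FedAvg, which the article does not specify. The `Params` type, the layer names, and the `fed_avg` function are hypothetical and chosen only for illustration.

```python
from typing import Dict, List

# Assumption: a model is represented as layer name -> flattened weights.
Params = Dict[str, List[float]]


def fed_avg(client_params: List[Params]) -> Params:
    """Average each layer's parameters element-wise over all clients."""
    if not client_params:
        raise ValueError("need at least one client update")
    n = len(client_params)
    averaged: Params = {}
    for layer in client_params[0]:
        # zip(*) groups the i-th weight of this layer across all clients.
        columns = zip(*(params[layer] for params in client_params))
        averaged[layer] = [sum(column) / n for column in columns]
    return averaged


# Two toy client updates with hypothetical layer names.
clients = [
    {"conv1": [1.0, 2.0], "fc": [0.0]},
    {"conv1": [3.0, 4.0], "fc": [2.0]},
]
global_params = fed_avg(clients)
```

Because the server only sees the uploaded parameters, a defense has to judge each update from the parameters alone, which is the setting the attack targets.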

Paper title: Backdoor Federated Learning By Poisoning Backdoor-Critical Layers

Paper link: https://openreview.net/pdf?id=AJBGSVSTT2

Code link: https://github.com/zhmzm/Poisoning_Backdoor-critical_Layers_Attack

Method

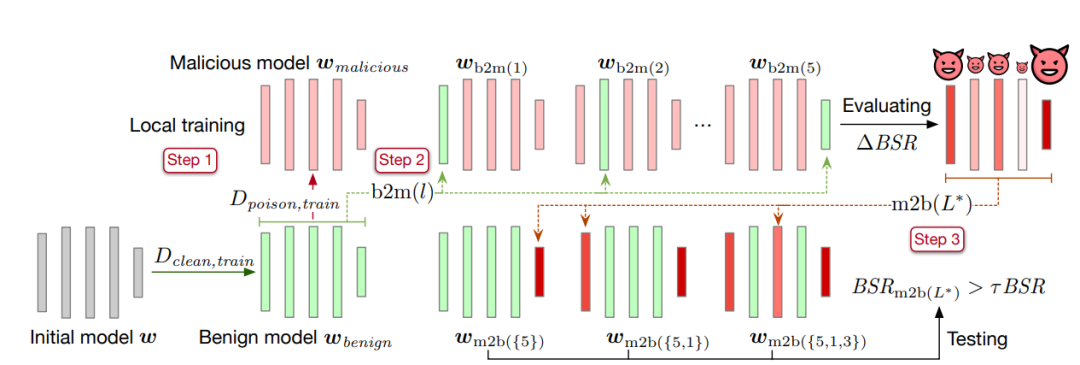

This article proposes a layer substitution method to identify backdoor-critical layers. The procedure is as follows:

The first step is to train a model on a clean data set until convergence and save its parameters as the benign model. Then copy the benign model and train it on a data set containing the backdoor. After convergence, save the parameters as the malicious model.

The second step is to substitute one layer of the benign model into the malicious model and calculate the backdoor attack success rate (BSR) of the resulting model. The difference between this value and the malicious model's BSR is ΔBSR, which measures the impact of that layer on the backdoor attack. Applying the same procedure to every layer of the neural network yields a list of the impact of all layers on the backdoor attack.

The third step is to sort all layers by their impact on the backdoor attack. Take the layer with the greatest impact from the list and add it to the set of backdoor-critical layers, then implant the parameters of the layers in this set from the malicious model into the benign model and calculate the BSR of the resulting model. If this BSR is greater than the threshold τ multiplied by the malicious model's BSR, the algorithm stops. Otherwise, keep adding the remaining layer with the greatest impact to the set until the condition is met.
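The three steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the authors' implementation: models are plain dictionaries of flattened weights, ΔBSR is taken as the malicious model's BSR minus the BSR after substitution, and `toy_bsr` is a made-up stand-in for evaluating a real model on triggered inputs. All names (`substitute`, `find_critical_layers`, `CONTRIBUTION`) are hypothetical.

```python
from typing import Callable, Dict, List

Params = Dict[str, List[float]]  # assumption: layer name -> flattened weights


def substitute(base: Params, donor: Params, layers: List[str]) -> Params:
    """Return a copy of `base` whose `layers` are taken from `donor`."""
    mixed = {name: list(weights) for name, weights in base.items()}
    for name in layers:
        mixed[name] = list(donor[name])
    return mixed


def find_critical_layers(
    benign: Params,
    malicious: Params,
    bsr: Callable[[Params], float],
    tau: float,
) -> List[str]:
    bsr_malicious = bsr(malicious)
    # Step 2: put one benign layer into the malicious model and record
    # how much the backdoor success rate drops (assumed sign of delta BSR).
    delta = {
        name: bsr_malicious - bsr(substitute(malicious, benign, [name]))
        for name in malicious
    }
    # Step 3: add layers in order of impact until the benign model with
    # those malicious layers implanted exceeds tau * BSR of the malicious model.
    ranked = sorted(delta, key=lambda name: delta[name], reverse=True)
    critical: List[str] = []
    for name in ranked:
        critical.append(name)
        if bsr(substitute(benign, malicious, critical)) > tau * bsr_malicious:
            break
    return critical


# Toy setup: each layer contributes a fixed share of the backdoor effect.
BENIGN = {"conv1": [0.0], "conv2": [0.0], "fc": [0.0]}
MALICIOUS = {"conv1": [1.0], "conv2": [1.0], "fc": [1.0]}
CONTRIBUTION = {"conv1": 0.125, "conv2": 0.25, "fc": 0.5}


def toy_bsr(params: Params) -> float:
    """Hypothetical BSR: sum the shares of layers that carry malicious weights."""
    return sum(
        share for name, share in CONTRIBUTION.items()
        if params[name] == MALICIOUS[name]
    )


critical = find_critical_layers(BENIGN, MALICIOUS, toy_bsr, tau=0.8)
```

In this toy setup the search stops after two layers, because implanting them already exceeds the threshold. With a real network, `bsr` would run the mixed model on a triggered test set, so the search costs one evaluation per layer plus one per layer added to the set.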

After obtaining the set of backdoor-critical layers, this article proposes bypassing the detection of defense methods by attacking only those layers. In addition, the paper introduces simulated aggregation and a benign model center to further reduce the distance between the malicious model and the other benign models.

Experimental results

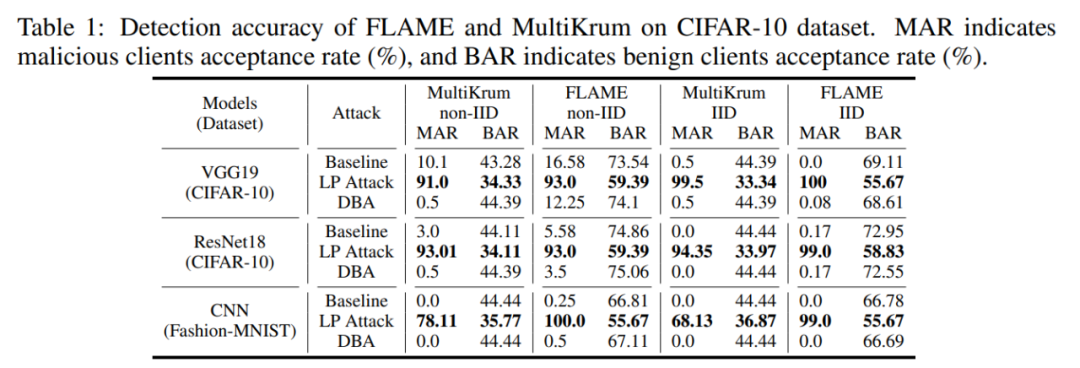

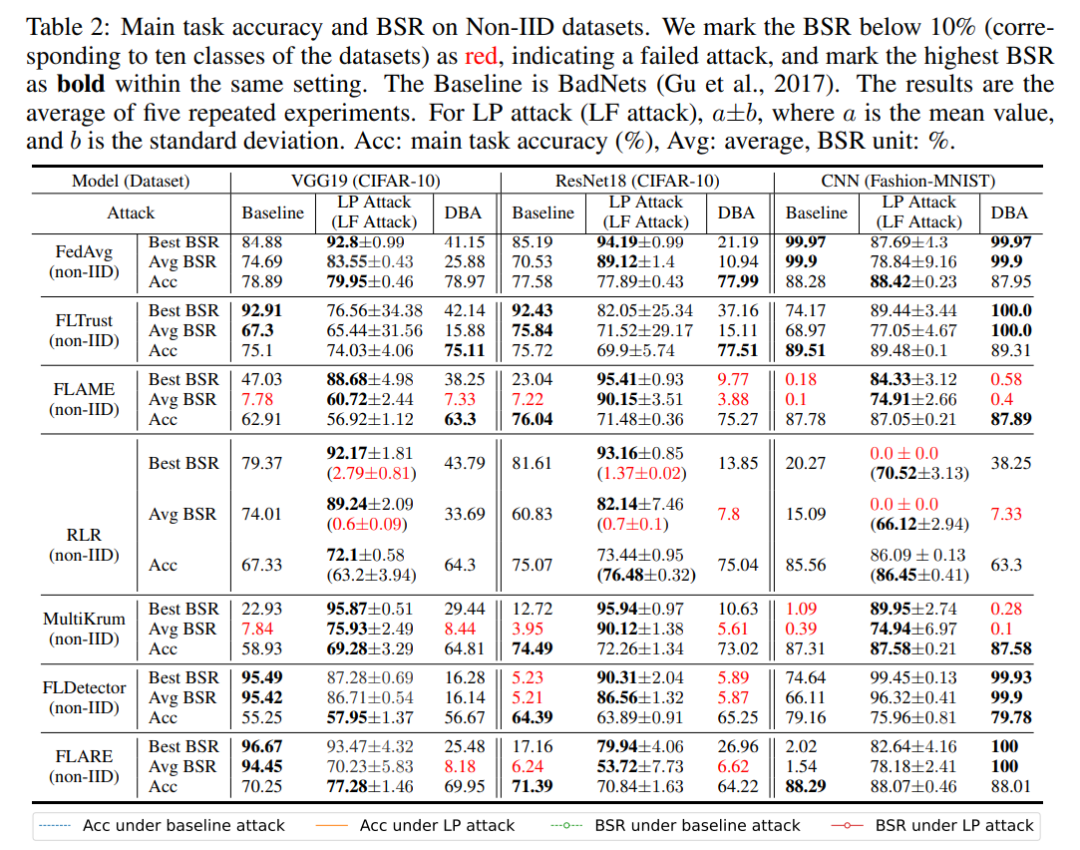

This article verifies the effectiveness of the backdoor-critical-layer attack against multiple defense methods on the CIFAR-10 and MNIST data sets. The experiments use the backdoor attack success rate (BSR) and the malicious model acceptance rate (MAR), alongside the benign model acceptance rate (BAR), as indicators of attack effectiveness.
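As a rough illustration of how these indicators could be computed, the sketch below assumes that BSR is the fraction of triggered inputs classified as the attacker's target label, and that MAR and BAR are the fractions of malicious and benign client updates that the defense accepts. These definitions are assumptions made for the example, and the function names and client IDs are hypothetical.

```python
from typing import Sequence


def backdoor_success_rate(predictions: Sequence[int], target_label: int) -> float:
    """Fraction of triggered inputs that the model assigns to the target label."""
    if not predictions:
        raise ValueError("need at least one prediction")
    return sum(p == target_label for p in predictions) / len(predictions)


def acceptance_rate(accepted_ids: Sequence[int], group_ids: Sequence[int]) -> float:
    """Fraction of the clients in `group_ids` whose updates were accepted."""
    group = set(group_ids)
    if not group:
        raise ValueError("group must not be empty")
    return len(group & set(accepted_ids)) / len(group)


# Toy round: client 0 is malicious, clients 1..9 are benign,
# and the defense accepted the updates of clients 0, 1, 2 and 3.
malicious_ids = [0]
benign_ids = list(range(1, 10))
accepted = [0, 1, 2, 3]

bsr = backdoor_success_rate([7, 7, 3, 7], target_label=7)
mar = acceptance_rate(accepted, malicious_ids)
bar = acceptance_rate(accepted, benign_ids)
```

Comparing MAR with BAR shows whether the defense treats malicious updates as more or less trustworthy than benign ones, which is the comparison the acceptance-rate results rely on.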

First, the layer-wise attack, LP Attack, lets malicious clients obtain a high selection rate. As shown in the table below, LP Attack achieved an acceptance rate of 90% on the CIFAR-10 dataset, much higher than the 34% of benign users.

Then, LP Attack can achieve a high backdoor attack success rate, even in a setting with only 10% malicious clients. As shown in the table below, LP Attack can achieve a high backdoor attack success rate BSR under the protection of different data sets and different defense methods.

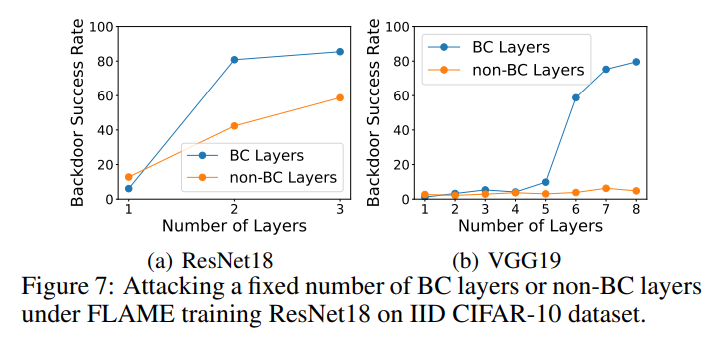

In the ablation experiment, this article poisoned the backdoor key layer and the non-backdoor key layer respectively and measured the backdoor attack success rate of the two experiments. As shown in the figure below, when attacking the same number of layers, the success rate of poisoning non-backdoor key layers is much lower than that of poisoning backdoor key layers. This shows that the algorithm in this article can select effective backdoor attack key layers.

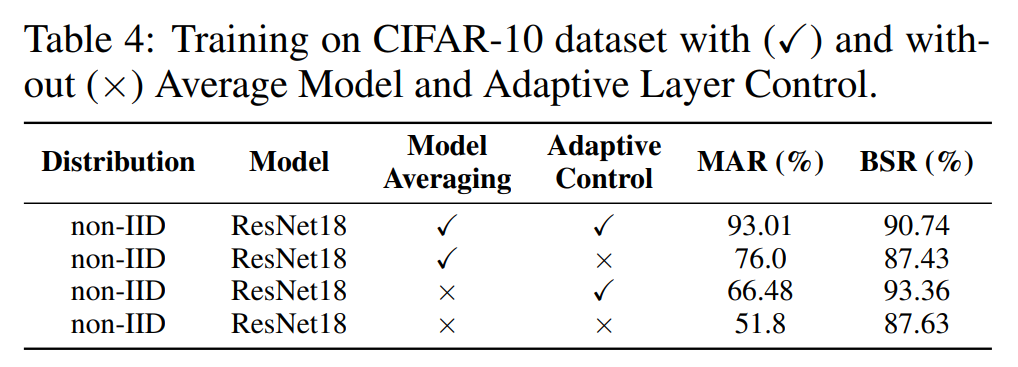

In addition, we conduct ablation experiments on the model aggregation module Model Averaging and the adaptive control module Adaptive Control. As shown in the table below, both modules improve the selection rate and backdoor attack success rate, proving the effectiveness of these two modules.

Summary

This article found that backdoor attacks are closely related to a subset of layers and proposed an algorithm to search for these backdoor-critical layers. Building on this, the paper proposes a layer-wise attack that targets the backdoor-critical layers in order to defeat the defense algorithms used in federated learning. The proposed attack reveals the vulnerabilities of the current three types of defense methods, indicating that more sophisticated defense algorithms will be needed to protect federated learning in the future.

Introduction to the author

Zhuang Haomin graduated from South China University of Technology with a bachelor's degree, worked as a research assistant in the IntelliSys Laboratory of Louisiana State University, and is currently studying for a doctoral degree at the University of Notre Dame. His main research directions are backdoor attacks and adversarial sample attacks.
