AI aids brain-computer interface research: New York University's breakthrough neural speech decoding technology published in a Nature sub-journal

Author | Chen Xupeng

Aphasia caused by defects in the nervous system can lead to serious disability and can limit people's professional and social lives.

In recent years, rapid progress in deep learning and brain-computer interface (BCI) technology has made it feasible to develop neural speech prostheses that could help people with aphasia communicate. However, decoding speech from neural signals remains challenging.

Recently, researchers from VideoLab and Flinker Lab at New York University developed a new type of differentiable speech synthesizer. A lightweight convolutional neural network encodes speech into a series of interpretable speech parameters (such as pitch, loudness, and formant frequencies), and the differentiable speech synthesizer re-synthesizes speech from these parameters.

By mapping neural signals to these speech parameters, the researchers built a neural speech decoding system that is highly interpretable, works with small amounts of data, and produces natural-sounding speech.

The research is titled "A neural speech decoding framework leveraging deep learning and speech synthesis" and was published in Nature Machine Intelligence on April 8, 2024.

Paper link: https://www.nature.com/articles/s42256-024-00824-8

Research Background

Most attempts to develop neural speech decoders rely on a special kind of data: electrocorticography (ECoG) recordings from patients undergoing epilepsy surgery. Electrodes implanted in these patients record cortical activity during speech production. The data have high spatiotemporal resolution and have helped researchers achieve a series of notable results in speech decoding, advancing the field of brain-computer interfaces.

Speech decoding of neural signals faces two major challenges.

First, the data available to train a personalized neural-to-speech decoding model is very limited, usually only about ten minutes per patient, while deep learning models often need large amounts of training data.

Second, human pronunciation is highly variable. Even when the same person repeats the same word, speaking rate, intonation, and pitch change, which adds complexity to the representation space the model has to build.

Early attempts to decode neural signals into speech relied mainly on linear models. These models usually did not require large training datasets and were highly interpretable, but their accuracy was low.

Recent work based on deep neural networks, especially convolutional and recurrent architectures, differs along two key dimensions: the intermediate latent representation used to model speech and the quality of the synthesized speech. For example, some studies decode cortical activity into a space of articulatory (mouth) movements and then convert it into speech. Although the decoding performance is strong, the reconstructed voice sounds unnatural.

On the other hand, some methods reconstruct natural-sounding speech by using WaveNet vocoders, generative adversarial networks (GANs), and similar techniques, but their accuracy is limited. Recently, a study in a patient with an implanted device produced speech waveforms that were both accurate and natural by using quantized HuBERT features as the intermediate representation space and a pretrained speech synthesizer to convert these features into speech.

However, HuBERT features cannot represent speaker-specific acoustic information and can only generate a fixed, generic speaker's voice, so an additional model is needed to convert this generic voice into the voice of a specific patient. Furthermore, that study and most previous attempts adopted non-causal architectures, which may limit their use in practical brain-computer interface applications that require temporally causal operation.
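To make the causal versus non-causal distinction concrete, here is a minimal sketch of a one-dimensional temporal convolution in both modes. It is an illustration only, not the architecture used in the paper: the function name `temporal_conv`, the kernel, and the impulse input are all hypothetical. A causal layer pads only on the left, so each output sample depends on current and past inputs; a non-causal layer pads on both sides and therefore looks into the future.

```python
import numpy as np

def temporal_conv(x, kernel, causal=True):
    """1-D sliding-window filter over time; output has the same length as x."""
    k = len(kernel)
    if causal:
        # Left padding only: output[t] depends on x[t-k+1 .. t].
        padded = np.concatenate([np.zeros(k - 1), x])
    else:
        # Symmetric padding: output[t] also depends on future samples.
        left = (k - 1) // 2
        right = k - 1 - left
        padded = np.concatenate([np.zeros(left), x, np.zeros(right)])
    return np.array([np.dot(padded[t:t + k], kernel) for t in range(len(x))])

# Hypothetical input: a single impulse at time step 5.
x = np.zeros(8)
x[5] = 1.0
kernel = np.ones(3) / 3.0

causal_out = temporal_conv(x, kernel, causal=True)
noncausal_out = temporal_conv(x, kernel, causal=False)
```

In the causal output nothing happens before time step 5, while the non-causal output already responds at time step 4, one step before the impulse arrives. That look-ahead is what a real-time decoder cannot have.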

Main model framework

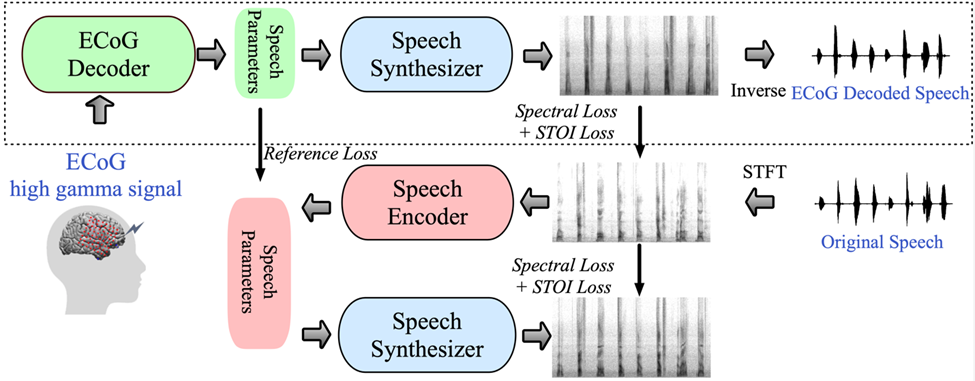

To address these challenges, the researchers introduce a new framework for decoding electrocorticography (ECoG) signals into speech. They construct a low-dimensional intermediate representation (a low-dimensional latent representation) that is generated by a speech encoding and decoding model using only speech signals (Figure 1).

The proposed framework consists of two parts. The first is the ECoG decoder, which converts ECoG signals into interpretable acoustic speech parameters (such as pitch, voicing, loudness, and formant frequencies). The second is the speech synthesizer, which converts these speech parameters into a spectrogram.

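The researchers built a differentiable speech synthesizer, which allows the synthesizer to take part in training while the ECoG decoder is being trained, so that the two are jointly optimized to reduce the spectrogram reconstruction error. This low-dimensional latent space is highly interpretable. Together with a lightweight pre-trained speech encoder that generates reference speech parameters, it helped the researchers build an efficient neural speech decoding framework that overcomes the problem of data scarcity.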
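One way to picture this joint training objective is as a spectrogram reconstruction term plus a term that pulls the decoded speech parameters toward the reference parameters from the speech encoder. The sketch below is an assumption made for illustration: the article does not state the exact loss terms, and the function name `joint_loss`, the use of mean squared error, and the weight `param_weight` are hypothetical.

```python
import numpy as np

def joint_loss(decoded_spec, target_spec, decoded_params, reference_params,
               param_weight=1.0):
    """Hypothetical training objective (mean squared error is an assumption)."""
    # Error between the spectrogram synthesized from decoded parameters
    # and the spectrogram of the actual speech.
    spec_loss = np.mean((decoded_spec - target_spec) ** 2)
    # Error between decoded speech parameters and the reference parameters
    # produced by the pre-trained speech encoder.
    param_loss = np.mean((decoded_params - reference_params) ** 2)
    return float(spec_loss + param_weight * param_loss)

# Toy arrays with hypothetical shapes (time frames, features).
target_spec = np.zeros((2, 3))
decoded_spec = np.ones((2, 3))
reference_params = np.zeros((2, 4))
decoded_params = np.full((2, 4), 2.0)

loss = joint_loss(decoded_spec, target_spec, decoded_params, reference_params,
                  param_weight=0.5)
```

Because the synthesizer is differentiable, the gradient of the spectrogram term can flow back through it into the ECoG decoder. The NumPy version here only evaluates the loss; an autodiff framework would be needed to train with it.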

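The framework can produce natural speech that closely resembles the speaker's own voice. The ECoG decoder component can be swapped for different deep learning architectures, and it also supports causal operations. The researchers collected and processed ECoG data from 48 neurosurgical patients and used several deep learning architectures (convolutional, recurrent, and Transformer) as the ECoG decoder.

The framework achieved high accuracy across these models. The convolutional (ResNet) architecture performed best, with a Pearson correlation coefficient (PCC) of 0.806 between the original and decoded spectrograms. The proposed framework reaches high accuracy using only causal operations and a relatively low spatial sampling density (low-density grids with 10 mm spacing).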
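For readers unfamiliar with the metric, the sketch below computes a Pearson correlation coefficient between an original and a decoded spectrogram. Flattening the whole spectrogram before correlating is an assumption made for brevity, and the paper may aggregate differently (for example per trial or per participant). The function name `spectrogram_pcc` and the random test data are hypothetical.

```python
import numpy as np

def spectrogram_pcc(original, decoded):
    """Pearson correlation between two spectrograms of identical shape."""
    a = np.asarray(original, dtype=float).ravel()
    b = np.asarray(decoded, dtype=float).ravel()
    a = a - a.mean()
    b = b - b.mean()
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical data: 64 frequency bins by 100 time frames, plus a noisy copy.
rng = np.random.default_rng(0)
original = rng.random((64, 100))
decoded = original + 0.1 * rng.standard_normal(original.shape)

pcc = spectrogram_pcc(original, decoded)
```

A PCC of 1 means the decoded spectrogram is a perfect linear match to the original; values near 0 mean no linear relationship.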

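The researchers also showed that speech can be decoded effectively from both the left and right hemispheres, extending neural speech decoding to the right hemisphere.

The code for the study is open source: https://github.com/flinkerlab/neural_speech_decoding

An important innovation of the study is a differentiable speech synthesizer, which makes the speech re-synthesis task very efficient: high-fidelity audio that closely matches the original voice can be synthesized from a very small amount of speech.

The differentiable speech synthesizer borrows from the principles of the human vocal system and divides speech into two parts, Voice (used to model vowels) and Unvoice (used to model consonants):

For the Voice part, harmonics are first generated from the fundamental frequency signal and then passed through a filter composed of the formants F1 to F6 to obtain the spectral features of the vowel component. For the Unvoice part, white noise is passed through a corresponding filter to obtain its spectrum. A learnable parameter controls the mixing ratio of the two parts at each time step. The mixture is then amplified by the loudness signal, and background noise is added to obtain the final speech spectrogram. Based on this speech synthesizer, the paper designs an efficient speech re-synthesis framework and a neural speech decoding framework.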
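The signal flow described above can be sketched directly in the spectral domain. This is a toy illustration under several assumptions, not the authors' implementation: the Gaussian shapes for harmonics and filters, the fixed harmonic width of 20 Hz, the single noise band, and every name in `synthesize_spectrogram` are hypothetical. The example uses two formants for brevity, whereas the article describes F1 to F6.

```python
import numpy as np

def synthesize_spectrogram(f0, formants, bandwidths, noise_center, noise_bw,
                           alpha, loudness, background, freqs):
    """Toy spectral synthesizer. T frames, K formants, F frequency bins.

    f0, alpha, loudness, noise_center, noise_bw: shape (T,), f0 must be > 0
    formants, bandwidths: shape (T, K); background, freqs: shape (F,)
    Returns a spectrogram of shape (T, F).
    """
    f = freqs[None, None, :]                                  # (1, 1, F)
    # Voice excitation: narrow bumps at integer multiples of f0 (harmonics).
    n_harm = int(freqs.max() // f0.min())
    k = np.arange(1, n_harm + 1)[None, :, None]               # (1, H, 1)
    harmonics = np.exp(-0.5 * ((f - k * f0[:, None, None]) / 20.0) ** 2).sum(axis=1)
    # Formant filter: sum of resonance peaks centered on each formant.
    formant_filter = np.exp(
        -0.5 * ((f - formants[:, :, None]) / bandwidths[:, :, None]) ** 2
    ).sum(axis=1)
    voiced = harmonics * formant_filter                       # (T, F)
    # Unvoice: flat (white) noise spectrum shaped by a broadband filter.
    unvoiced = np.exp(
        -0.5 * ((freqs[None, :] - noise_center[:, None]) / noise_bw[:, None]) ** 2
    )
    # Per-frame mixing weight, then loudness scaling, then background noise.
    mix = alpha[:, None] * voiced + (1.0 - alpha[:, None]) * unvoiced
    return loudness[:, None] * mix + background[None, :]

T, F = 5, 256
freqs = np.linspace(0.0, 8000.0, F)
spec = synthesize_spectrogram(
    f0=np.full(T, 120.0),
    formants=np.tile([500.0, 1500.0], (T, 1)),
    bandwidths=np.full((T, 2), 100.0),
    noise_center=np.full(T, 4000.0),
    noise_bw=np.full(T, 1500.0),
    alpha=np.linspace(1.0, 0.0, T),   # fully voiced to fully unvoiced
    loudness=np.ones(T),
    background=np.full(F, 1e-3),
    freqs=freqs,
)
```

Every operation here is smooth in the speech parameters, so the same computation written in an autodiff framework would let gradients flow from the spectrogram back to the parameters. NumPy itself does not compute those gradients.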

Research results

Speech decoding results with temporal causality

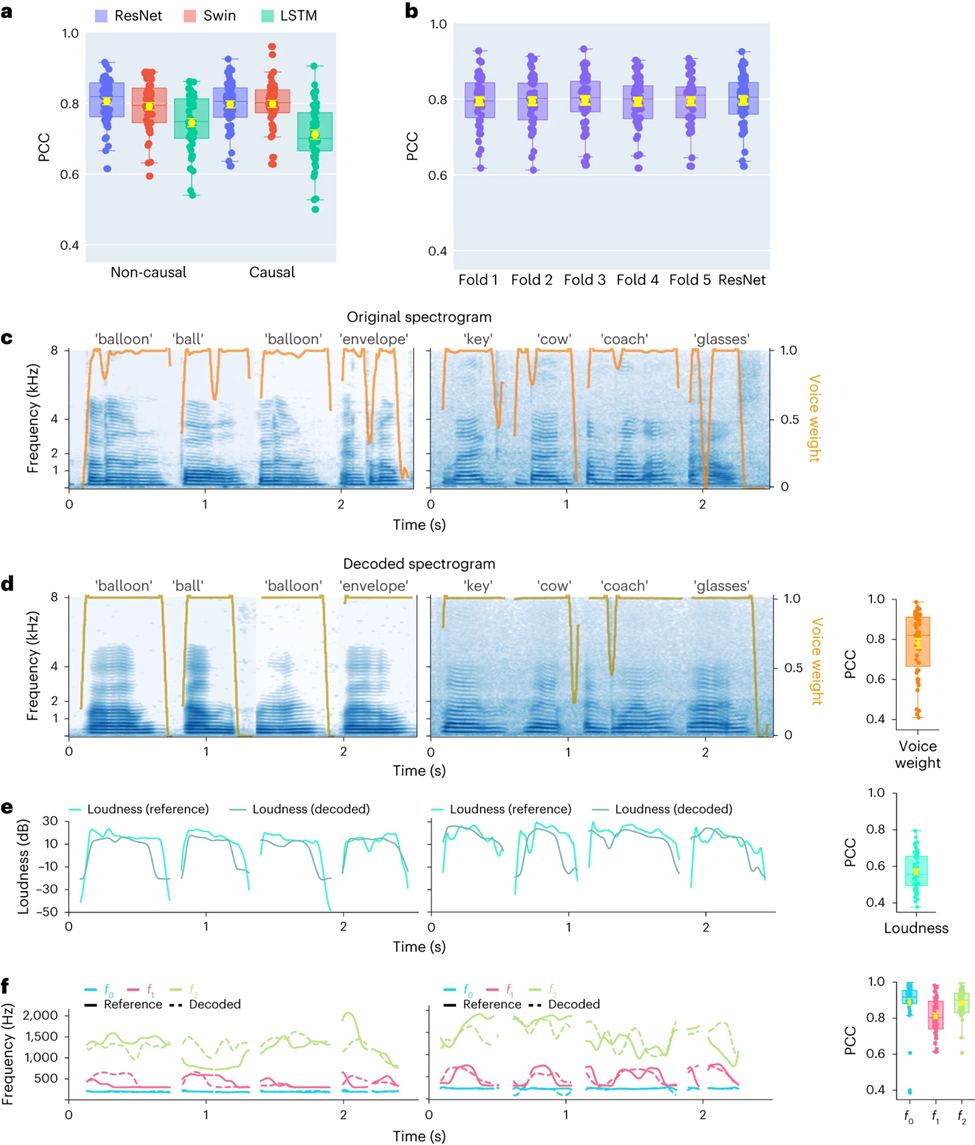

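First, the researchers directly compared the speech decoding performance of different model architectures: convolutional (ResNet), recurrent (LSTM), and Transformer (3D Swin). Notably, each of these models can operate either non-causally or causally in time. A causal model uses only past and current neural signals, whereas a non-causal model also uses future neural signals. The ResNet model performed best, with a mean Pearson correlation coefficient (PCC) of 0.806 for the non-causal version and 0.797 for the causal version, followed closely by the Swin model (mean PCC of 0.792 non-causal and 0.798 causal) (Figure 2a).

Evaluation with the STOI metric led to similar findings. Even the causal version of the ResNet model was comparable to the non-causal version, with no significant difference between the two. Both ResNet and Swin outperformed the recurrent (LSTM) architecture, so the researchers focused mainly on the ResNet and Swin models in subsequent analyses.

The models were evaluated with cross-validation in which different trials of the same word never appear in both the training set and the test set. The models also decoded words unseen during training well, mainly because the model built in the paper decodes speech at the level of phonemes or similar units. The researchers further showed word-level results of the causal ResNet decoder for two participants (with low-density ECoG sampling). The decoded spectrograms accurately preserve the spectro-temporal structure of the original speech (Figure 2c,d).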
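The word-level split used in the cross-validation can be sketched as follows: folds are assigned per word rather than per trial, so every repetition of a word lands in the same fold. The number of folds, the function name `word_level_folds`, and the toy word list are hypothetical, since the article does not preserve the exact protocol.

```python
import random
from collections import defaultdict

def word_level_folds(trial_words, n_folds=5, seed=0):
    """Group trial indices into folds so that each word lives in one fold only."""
    words = sorted(set(trial_words))
    random.Random(seed).shuffle(words)
    # Assign whole words (not individual trials) to folds in round-robin order.
    fold_of_word = {w: i % n_folds for i, w in enumerate(words)}
    folds = defaultdict(list)
    for trial_idx, word in enumerate(trial_words):
        folds[fold_of_word[word]].append(trial_idx)
    return [folds[i] for i in range(n_folds)]

# Hypothetical trial list: each entry is the word spoken in that trial.
trial_words = ["cat", "dog", "cat", "sun", "dog", "tree", "sun", "cat"]
folds = word_level_folds(trial_words, n_folds=2)
```

Holding out one fold as the test set then guarantees that every test word is unseen during training, which is what makes the reported generalization to new words meaningful.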

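The researchers also compared the speech parameters predicted by the neural decoder with the parameters encoded by the speech encoder (used as reference values). They reported the mean PCC (N=48) for several key speech parameters, including the voice weight (used to distinguish vowels from consonants), loudness, pitch f0, the first formant f1, and the second formant f2. Accurately reconstructing these speech parameters, especially pitch, voice weight, and the first two formants, is essential for precise speech decoding and for a reconstruction that naturally mimics the participant's voice.

These findings show that both non-causal and causal models can produce reasonable decoding results, which provides positive guidance for future research and applications.

Speech decoding from left- and right-hemisphere neural signals and the effect of spatial sampling density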

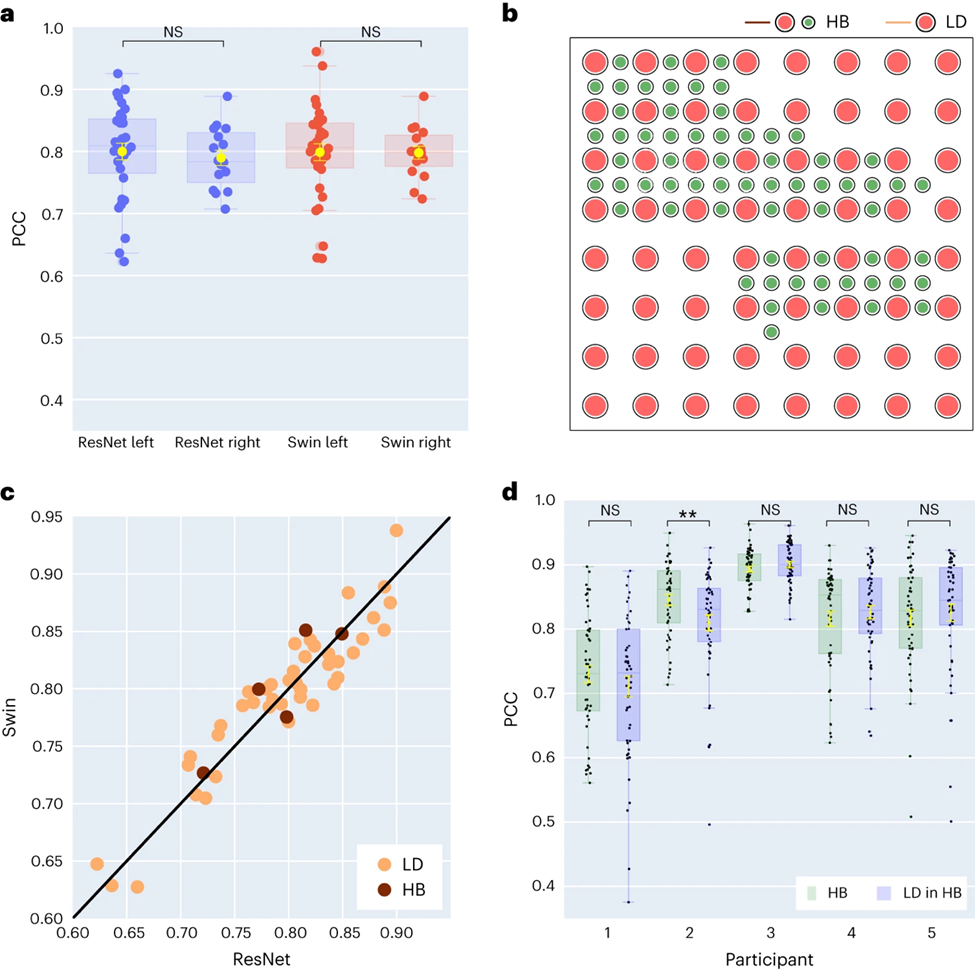

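The researchers further compared speech decoding results from the left and right hemispheres. Most studies have focused on the left hemisphere, which is dominant for speech and language function, and little is known about how to decode language information from the right hemisphere. To address this, the researchers compared decoding performance between participants' left and right hemispheres to test whether the right hemisphere could be used for speech restoration.

Of the 48 participants in the study, 16 had ECoG signals recorded from the right hemisphere. Comparing the ResNet and Swin decoders, the researchers found that speech can also be decoded reliably from the right hemisphere (PCC of 0.790 for ResNet and 0.798 for Swin), with only a small difference from the left hemisphere (Figure 3a).

The same holds for evaluation with STOI. This suggests that for patients who have lost language ability because of left-hemisphere damage, restoring language using neural signals from the right hemisphere may be a viable option.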

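Next, the researchers examined how electrode sampling density affects speech decoding. Previous studies mostly used higher-density electrode grids (0.4 mm), whereas the grids typically used clinically have lower density (LD, 1 cm).

Five participants had hybrid-density (HB) electrode grids (see Figure 3b), which are primarily low-density grids with additional electrodes interspersed. The remaining forty-three participants had low-density grids. Decoding performance with hybrid-density (HB) grids was similar to that with conventional low-density (LD) grids, though slightly better on STOI.

The researchers compared decoding using only the low-density electrodes with decoding using all electrodes of the hybrid grids and found no significant difference between the two (see Figure 3d). This indicates that the model can learn speech information from cortical recordings at different spatial sampling densities, and it suggests that the sampling density typically used clinically may be sufficient for future brain-computer interface applications.

Contribution of different brain regions in the left and right hemispheres to speech decoding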

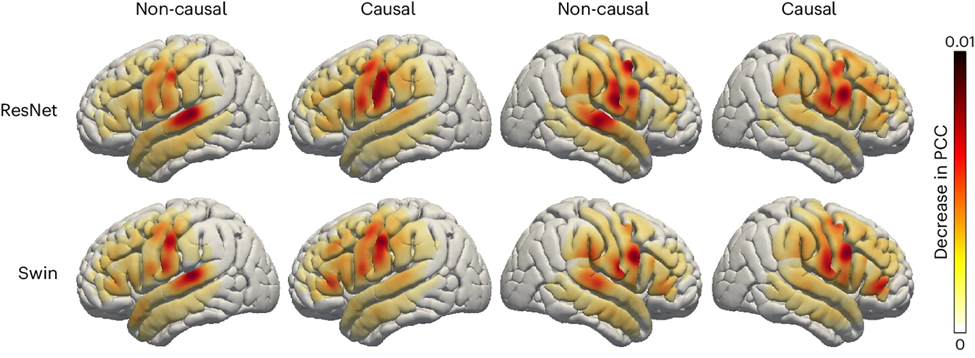

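Finally, the researchers examined how much speech-related brain regions contribute to speech decoding, which provides an important reference for future implantation of speech restoration devices in the left or right hemisphere. They used occlusion analysis to assess the contribution of different brain regions to speech decoding.

In short, if a region is critical for decoding, occluding the electrode signals in that region (that is, setting them to zero) will reduce the accuracy (PCC) of the reconstructed speech.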
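The occlusion procedure can be sketched in a few lines: zero the electrodes of one region, decode again, and record how far the PCC falls. The linear toy decoder, the region names, and the electrode grouping below are hypothetical stand-ins for a trained ECoG decoder and an anatomical electrode map.

```python
import numpy as np

def pcc(a, b):
    """Pearson correlation between two arrays of the same shape."""
    a = np.ravel(a) - np.mean(a)
    b = np.ravel(b) - np.mean(b)
    # Undefined (division by zero) if either input is constant.
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def occlusion_contribution(decoder, ecog, target, region_electrodes):
    """Return the PCC drop caused by zeroing each region's electrodes.

    ecog has shape (electrodes, time); region_electrodes maps a region
    name to the list of electrode indices belonging to it.
    """
    baseline = pcc(decoder(ecog), target)
    drops = {}
    for region, idx in region_electrodes.items():
        occluded = ecog.copy()
        occluded[idx, :] = 0.0          # occlude: set the signals to zero
        drops[region] = baseline - pcc(decoder(occluded), target)
    return drops

# Toy setup: a linear "decoder" that relies mostly on electrodes 0 and 1.
rng = np.random.default_rng(0)
ecog = rng.standard_normal((4, 200))
weights = np.array([1.0, 1.0, 0.1, 0.1])
decoder = lambda x: weights @ x
target = decoder(ecog)
regions = {"region_A": [0, 1], "region_B": [2, 3]}

drops = occlusion_contribution(decoder, ecog, target, regions)
```

In this toy case occluding `region_A` removes most of the signal the decoder depends on, so its PCC drop is large, while occluding `region_B` barely changes the output.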

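With this method, the researchers measured the reduction in PCC when each region was occluded. Comparing the causal and non-causal versions of the ResNet and Swin decoders, they found that the auditory cortex contributes more in the non-causal models. This underscores that real-time speech decoding applications must use causal models, because real-time decoding cannot exploit neural feedback signals.

In addition, the contribution of the sensorimotor cortex, especially its ventral portion, is similar in the right and left hemispheres, which suggests that implanting a neural prosthesis in the right hemisphere may be feasible.

Conclusions and outlook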

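The researchers developed a new type of differentiable speech synthesizer: a lightweight convolutional neural network encodes speech into a series of interpretable speech parameters (such as pitch, loudness, and formant frequencies), and the differentiable speech synthesizer re-synthesizes speech from them.

By mapping neural signals to these speech parameters, the researchers built a neural speech decoding system that is highly interpretable, works with small amounts of data, and produces natural-sounding speech. The method is highly reproducible across participants (48 in total), and the researchers demonstrated effective causal decoding with convolutional and Transformer (3D Swin) architectures, both of which outperformed the recurrent architecture (LSTM).

The framework handles both high and low spatial sampling densities and can process ECoG signals from either the left or the right hemisphere, showing strong potential for speech decoding.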

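Most previous studies did not consider the temporal causality of decoding in real-time brain-computer interface applications. Many non-causal models rely on auditory sensory feedback signals. The researchers' analysis shows that non-causal models rely mainly on contributions from the superior temporal gyrus, whereas causal models largely eliminate this reliance. The researchers argue that, because of their over-reliance on feedback signals, non-causal models have limited generalizability in real-time BCI applications.

Some approaches try to avoid feedback during training, for example by decoding speech that participants imagine. Nevertheless, most studies still use non-causal models and cannot rule out the influence of feedback during training and inference. Moreover, the recurrent neural networks widely used in the literature are usually bidirectional, which leads to non-causal behavior and prediction delays, whereas the researchers' experiments show that recurrent networks trained unidirectionally perform worst.

Although the study did not test real-time decoding, the researchers achieved a latency of less than 50 milliseconds when synthesizing speech from neural signals, which barely affects auditory delay and allows normal speech production.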

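The study also examined whether higher-density coverage improves decoding performance. The researchers found that both low-density and higher (hybrid) density grid coverage achieve high decoding performance (see Figure 3c). In addition, decoding with all electrodes did not differ significantly from decoding with only the low-density electrodes (Figure 3d).

This shows that, as long as perisylvian coverage is sufficient, the proposed ECoG decoder can extract speech parameters from neural signals to reconstruct speech even in participants with low-density grids. Another notable finding is the contribution of right-hemisphere cortical structures, including the right perisylvian cortex, to speech decoding. Although some previous studies showed that the right hemisphere may contribute to decoding vowels and sentences, the researchers' results provide evidence of robust speech representations in the right hemisphere.

The researchers also noted some limitations of the current model. For example, the decoding pipeline requires speech training data paired with ECoG recordings, which may not be available for patients with aphasia. In the future, the researchers hope to develop model architectures that can handle non-grid data and to make better use of multi-patient, multimodal neural data.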

First authors of the paper: Xupeng Chen and Ran Wang. Corresponding author: Adeen Flinker.

Funding: National Science Foundation under Grant No. IIS-1912286, 2309057 (Y.W., A.F.) and National Institute of Health R01NS109367, R01NS115929, R01DC018805 (A.F.).

For more discussion of causality in neural speech decoding, see another paper by the authors, "Distributed feedforward and feedback cortical processing supports human speech production": https://www.pnas.org/doi/10.1073/pnas.2300255120

Source: Brain-Computer Interface Community
