Within hours of release, Microsoft abruptly deleted a large open source model billed as comparable to GPT-4! The team forgot to run the toxicity test

Last week, Microsoft dropped an open source model called WizardLM-2 that was billed as GPT-4 level.

Unexpectedly, it was deleted just a few hours after it was posted.

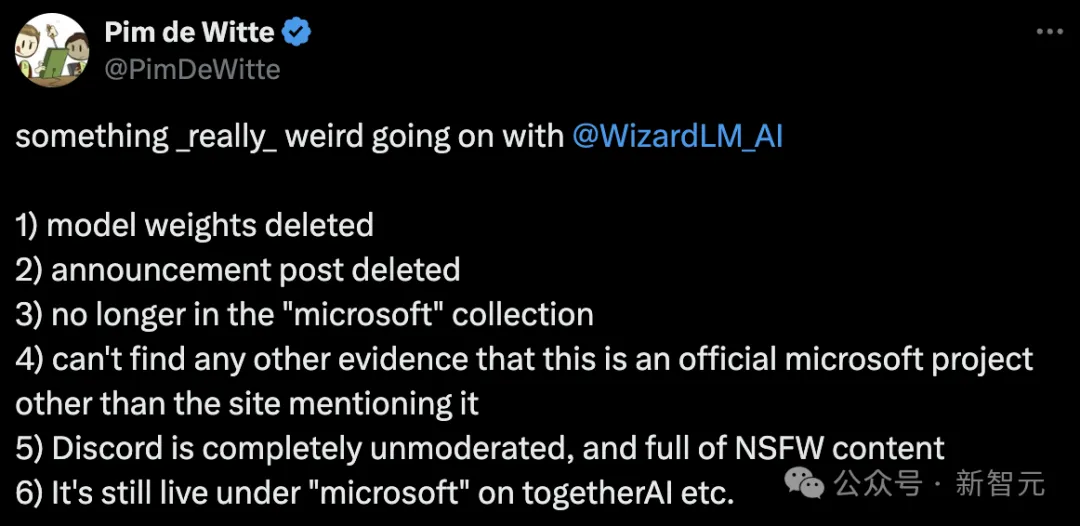

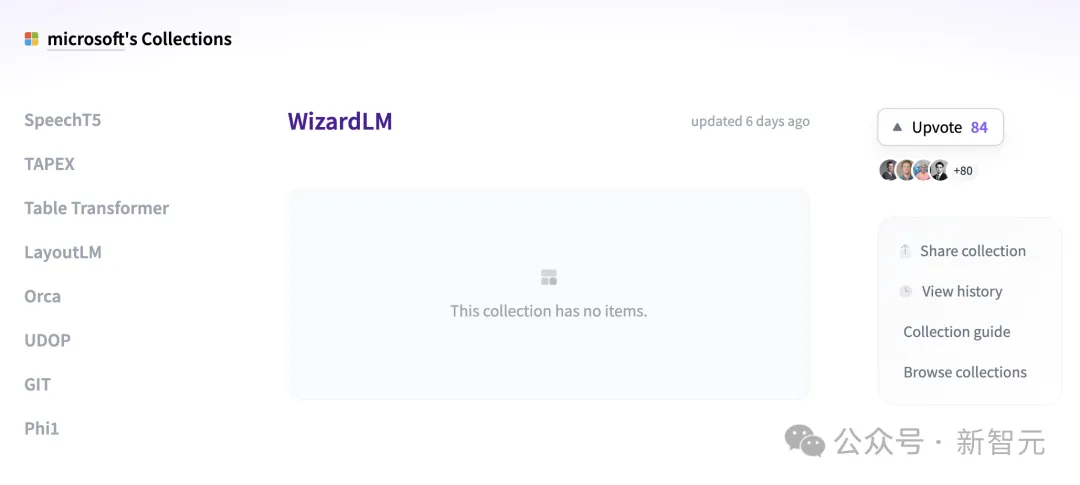

Some netizens suddenly discovered that WizardLM's model weights and announcement posts had all been deleted and were no longer in the Microsoft collection. Apart from a mention on the project site, they could find no evidence that this was an official Microsoft project.

The GitHub project homepage has become a 404.

Project address: https://wizardlm.github.io/

All of the model weights hosted on Hugging Face (HF) have disappeared as well...

The whole internet was confused: why is WizardLM gone?

As it turned out, Microsoft did this because the team forgot to run a required test on the model.

Later, the Microsoft team apologized and explained that it had been a few months since they last released a model, so they were not familiar with the new release process.

As a result, they accidentally left out an item that is required in the model release process: toxicity testing (the "poison test" of the headline).

Microsoft upgrades WizardLM to its second generation

In June last year, the first-generation WizardLM, which was fine-tuned from LLaMA, was released and attracted a lot of attention from the open source community.

Paper address: https://arxiv.org/pdf/2304.12244.pdf

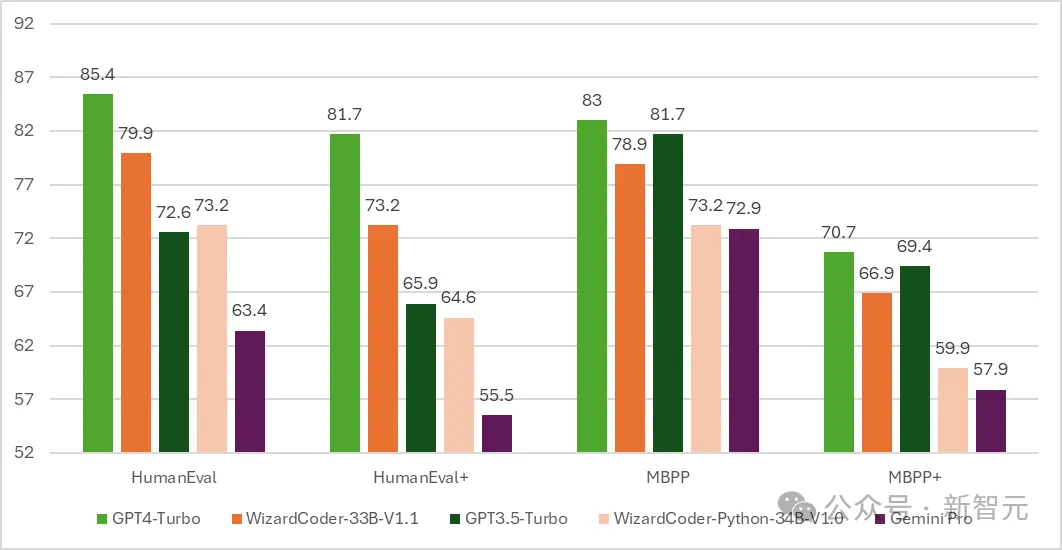

Next came WizardCoder, the code-focused version: a model based on Code Llama and fine-tuned using Evol-Instruct.

The test results show that WizardCoder’s pass@1 on HumanEval reached an astonishing 73.2%, surpassing the original GPT-4.
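For readers unfamiliar with the metric, here is a minimal sketch of how pass@k is commonly estimated on HumanEval-style benchmarks. The article does not say how WizardCoder's score was computed, so this is only the widely used unbiased estimator, and the function names are chosen for illustration. For each problem, `n` samples are generated and `c` of them pass the unit tests; the benchmark score is the mean over problems.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate the chance that at least one of k samples passes,
    given that c out of n generated samples passed the tests."""
    if n - c < k:
        # Fewer than k failing samples exist, so any k samples include a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: List[Tuple[int, int]], k: int) -> float:
    """Average pass@k over problems; each entry is (n, c) for one problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

With `k = 1` the estimator reduces to `c / n`, so a pass@1 of 73.2% means that, averaged over problems, a single generated solution passes the tests about 73.2% of the time.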

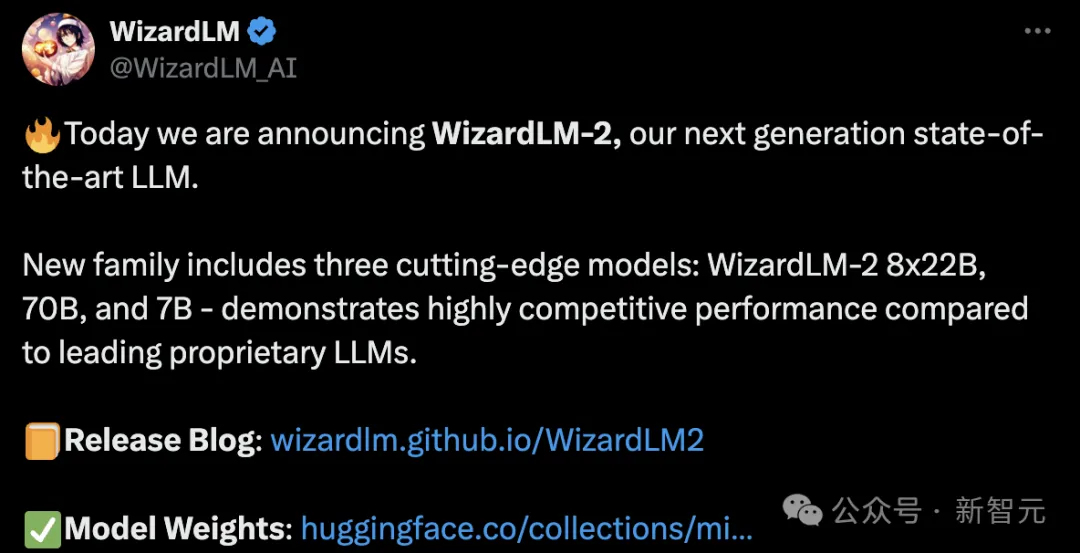

On April 15, Microsoft developers officially announced a new generation of WizardLM, this time fine-tuned from Mixtral 8x22B.

It comes in three sizes: 8x22B, 70B and 7B.

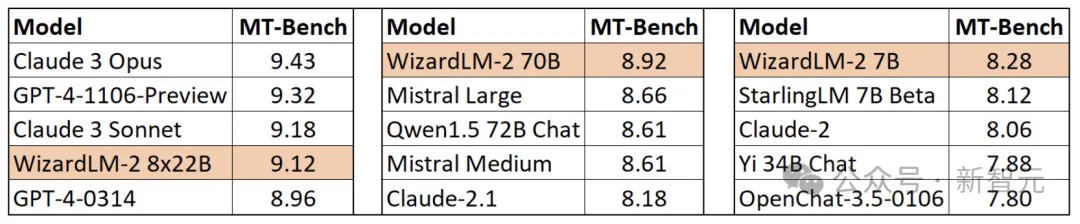

Most notably, the new models took a leading position on the MT-Bench benchmark.

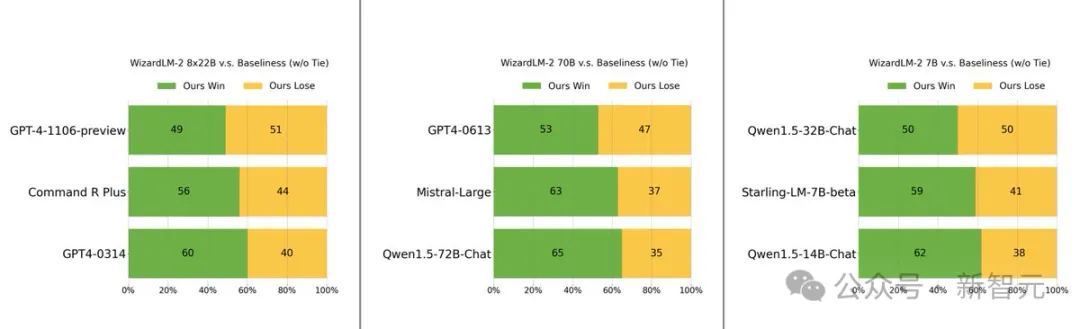

Specifically, the largest version, WizardLM 8x22B, comes close to GPT-4 and Claude 3 in performance.

Among models of the same parameter scale, the 70B version ranks first.

The 7B version is the fastest and can even match leading models with 10 times as many parameters.

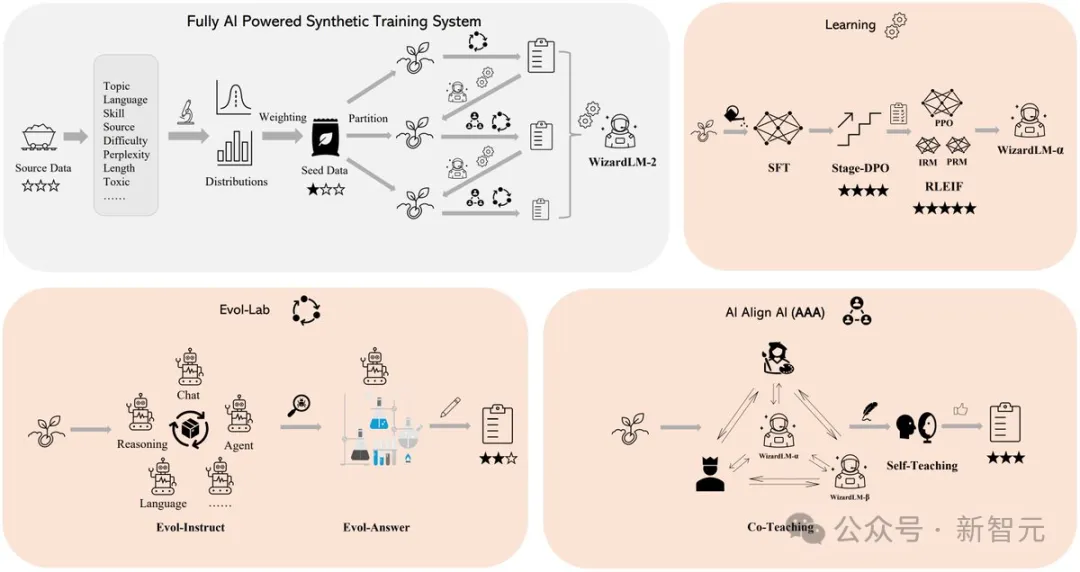

The secret behind WizardLM 2’s outstanding performance lies in Evol-Instruct, a revolutionary training methodology developed by Microsoft.

Evol-Instruct utilizes large language models to iteratively rewrite the initial instruction set into increasingly complex variants. These evolved instruction data are then used to fine-tune the base model, significantly improving its ability to handle complex tasks.
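The iterative rewriting described above can be sketched roughly as follows. This is a simplified illustration, not Microsoft's implementation: the prompt templates are hypothetical, and `stub_rewrite` is a stand-in for a call to a large language model so that the example runs on its own.

```python
import random
from typing import Callable, List

# Hypothetical rewriting prompts; the actual Evol-Instruct prompts are not
# given in this article.
EVOLUTION_PROMPTS = [
    "Add one more constraint to this instruction: {instruction}",
    "Rewrite this instruction so it needs multi-step reasoning: {instruction}",
    "Make the concepts in this instruction more specific: {instruction}",
]


def evolve_instructions(
    seeds: List[str],
    rewrite: Callable[[str], str],
    rounds: int = 3,
    seed: int = 0,
) -> List[str]:
    """Return the seed instructions plus every evolved variant."""
    rng = random.Random(seed)
    pool = list(seeds)
    current = list(seeds)
    for _ in range(rounds):
        next_generation = []
        for instruction in current:
            template = rng.choice(EVOLUTION_PROMPTS)
            evolved = rewrite(template.format(instruction=instruction))
            # Discard failed evolutions (empty or unchanged rewrites).
            if evolved and evolved != instruction:
                next_generation.append(evolved)
        pool.extend(next_generation)
        current = next_generation  # the next round evolves the new variants
    return pool


def stub_rewrite(prompt: str) -> str:
    """Stand-in for an LLM call: appends a requirement to the instruction."""
    instruction = prompt.split(": ", 1)[1]
    return instruction + " Explain each step."
```

Calling `evolve_instructions(["Sort a list."], stub_rewrite, rounds=2)` yields the seed plus two progressively longer variants. In a real pipeline, `rewrite` would query a language model, and the resulting pool would become the fine-tuning dataset.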

Another ingredient is the reinforcement learning framework RLEIF, which also played an important role in the development of WizardLM 2.

WizardLM 2 training also uses the AI Align AI (AAA) method, which allows multiple leading large models to guide and improve each other.

The AAA framework consists of two main components, namely "co-teaching" and "self-study".

In the co-teaching phase, WizardLM works with a variety of licensed open source and proprietary advanced models to conduct simulated chats, critique quality, suggest improvements, and close skill gaps.

By communicating with each other and providing feedback, models learn from their peers and improve their capabilities.

In the self-study phase, WizardLM actively generates new evolved training data for supervised learning and preference data for reinforcement learning.

This self-learning mechanism allows the model to continuously improve performance by learning from the data and feedback information it generates.

In addition, the WizardLM 2 model was trained using the generated synthetic data.

In the researchers' view, natural training data for large models is running out, and they believe that data carefully created by AI, together with models progressively supervised by AI, will be the only path to more powerful artificial intelligence.

So they created a fully AI-driven synthetic training system to improve WizardLM-2.

Quick netizens have already downloaded the weights

However, before the repository was deleted, many people had already downloaded the model weights.

Several users also tested the model on some additional benchmarks before it was removed.

The netizens who tested it were impressed by the 7B model and said that it would be their first choice for local assistant tasks.

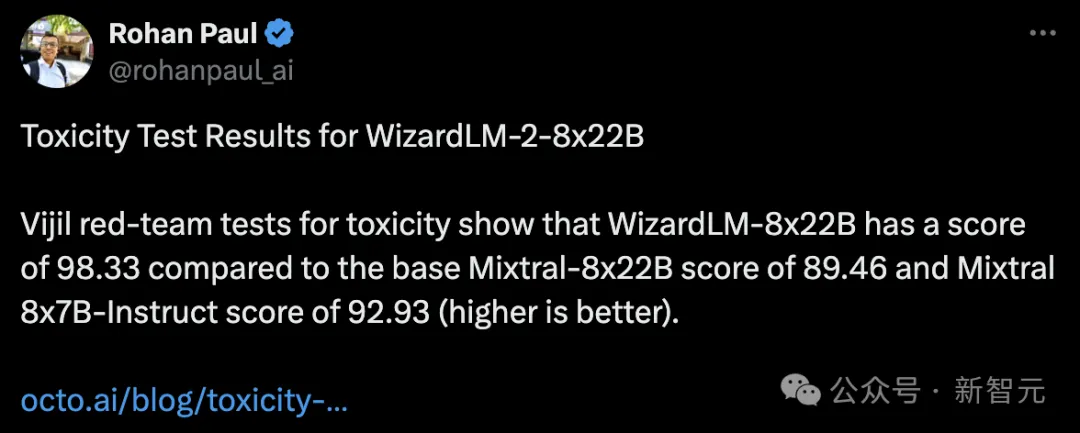

Someone also ran a toxicity test and found that WizardLM-8x22B scored 98.33, while the base Mixtral-8x22B scored 89.46 and Mixtral 8x7B-Instruct scored 92.93.

The higher the score, the better, which means that WizardLM-8x22B is still very strong.

Without a toxicity test, releasing the model is simply out of the question.

It is well known that large models are prone to hallucinations.

If WizardLM 2 produced "toxic, biased, and incorrect" content in its answers, that would reflect badly on large models in general.

In particular, such mistakes would attract the attention of the whole internet, and Microsoft itself would face criticism and might even be investigated by the authorities.

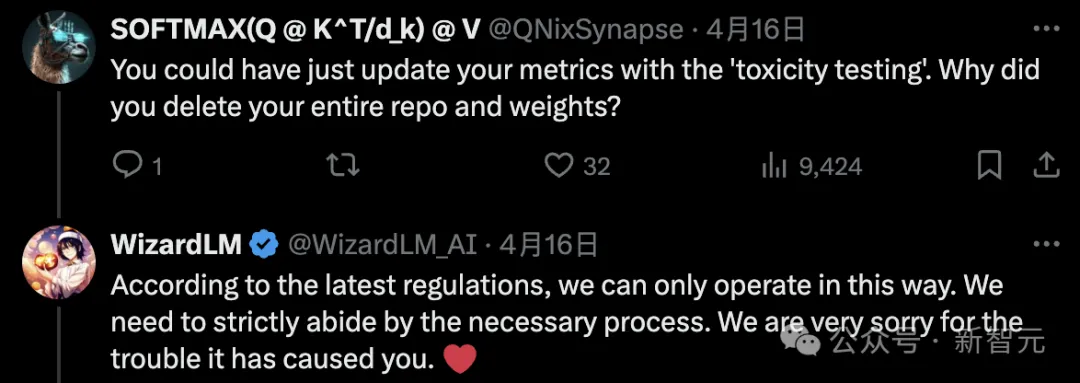

Some netizens wondered: the metrics could simply be updated once the toxicity test is done, so why delete the entire repository and the weights?

The Microsoft author stated that, under the latest internal regulations, this was the only option.

Others said that they want models that have not been "lobotomized".

For now, developers will have to wait patiently; the Microsoft team has promised that the model will go back online once the test is completed.
