Three secrets for deploying large models in the cloud

Orchestration tools that support stateful deployments, such as Kubernetes, are helpful here. They can use persistent storage for large language models and can be configured to maintain and manage model state across sessions. Doing so is necessary to preserve the continuity and performance of large language model deployments.
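Here is a minimal sketch of what this can look like in Kubernetes. The Python function below builds a StatefulSet manifest whose `volumeClaimTemplates` give each replica its own persistent volume, so downloaded model weights and any on-disk state survive pod restarts and rescheduling. Several details are illustrative assumptions, not part of the original article:

- The function name `llm_statefulset`, the container image, the `/models` mount path, the `standard` storage class and the 200Gi size are all made up for the example.
- The `nvidia.com/gpu` limit assumes the NVIDIA device plugin is installed on the cluster.
- A headless Service with the same name as the StatefulSet is assumed to exist separately.

The manifest is emitted as JSON, which `kubectl apply -f` accepts just as it accepts YAML.

```python
import json


def llm_statefulset(name: str, image: str, replicas: int = 2,
                    model_storage: str = "200Gi",
                    storage_class: str = "standard") -> dict:
    """Build a Kubernetes StatefulSet manifest for a hypothetical LLM server.

    Each replica gets its own PersistentVolumeClaim (via volumeClaimTemplates),
    so model weights and on-disk session state persist across pod restarts.
    """
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name},
        "spec": {
            # Assumed headless Service that gives pods stable DNS names.
            "serviceName": name,
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": "llm-server",
                        "image": image,
                        # Assumes the NVIDIA device plugin exposes this resource.
                        "resources": {"limits": {"nvidia.com/gpu": 1}},
                        # Hypothetical path where the server loads model weights.
                        "volumeMounts": [{"name": "model-data", "mountPath": "/models"}],
                    }],
                },
            },
            # One PVC per replica, named after this template (e.g. model-data-llm-0).
            "volumeClaimTemplates": [{
                "metadata": {"name": "model-data"},
                "spec": {
                    "accessModes": ["ReadWriteOnce"],
                    "storageClassName": storage_class,
                    "resources": {"requests": {"storage": model_storage}},
                },
            }],
        },
    }


if __name__ == "__main__":
    manifest = llm_statefulset("llm", "example.com/llm-server:latest")
    print(json.dumps(manifest, indent=2))
```

Saving the printed output to a file and running `kubectl apply -f` on it would create the StatefulSet. A plain Deployment does not give each replica its own stable volume claim, which is why the stateful workload type is used in this sketch.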

With the explosive growth of generative AI, deploying large language models on cloud platforms has become a foregone conclusion; for most businesses, avoiding the cloud is simply too inconvenient. My worry about the ensuing rush is that we will overlook some easy-to-solve problems and make large, expensive mistakes that were mostly avoidable.

Hot Topics

Understand Tokenization in one article!

Apr 12, 2024 pm 02:31 PM

Understand Tokenization in one article!

Apr 12, 2024 pm 02:31 PM

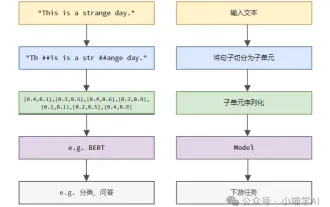

Language models reason about text, which is usually in the form of strings, but the input to the model can only be numbers, so the text needs to be converted into numerical form. Tokenization is a basic task of natural language processing. It can divide a continuous text sequence (such as sentences, paragraphs, etc.) into a character sequence (such as words, phrases, characters, punctuation, etc.) according to specific needs. The units in it Called a token or word. According to the specific process shown in the figure below, the text sentences are first divided into units, then the single elements are digitized (mapped into vectors), then these vectors are input to the model for encoding, and finally output to downstream tasks to further obtain the final result. Text segmentation can be divided into Toke according to the granularity of text segmentation.

How to use Vue for data encryption and secure transmission

Aug 02, 2023 pm 02:58 PM

How to use Vue for data encryption and secure transmission

Aug 02, 2023 pm 02:58 PM

How to use Vue for data encryption and secure transmission Introduction: With the development of the Internet, data security has received more and more attention. In web application development, data encryption and secure transmission are important means to protect user privacy and sensitive information. As a popular JavaScript framework, Vue provides a wealth of tools and plug-ins that can help us achieve data encryption and secure transmission. This article will introduce how to use Vue for data encryption and secure transmission, and provide code examples for reference. 1. Data encryption and data encryption

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

Editor |ScienceAI Question Answering (QA) data set plays a vital role in promoting natural language processing (NLP) research. High-quality QA data sets can not only be used to fine-tune models, but also effectively evaluate the capabilities of large language models (LLM), especially the ability to understand and reason about scientific knowledge. Although there are currently many scientific QA data sets covering medicine, chemistry, biology and other fields, these data sets still have some shortcomings. First, the data form is relatively simple, most of which are multiple-choice questions. They are easy to evaluate, but limit the model's answer selection range and cannot fully test the model's ability to answer scientific questions. In contrast, open-ended Q&A

Apr 24, 2024, 3:00 PM

Compiled by: Xingxuan | Produced by: 51CTO Technology Stack (WeChat ID: blog51cto)

Over the past two years, I have worked more on generative AI projects built on large language models (LLMs) than on traditional systems, and I'm starting to miss serverless cloud computing. LLM applications range from enhancing conversational AI to delivering complex analytics solutions across industries, among many other capabilities. Many enterprises deploy these models on cloud platforms because public cloud providers already offer a ready-made ecosystem, which makes it the path of least resistance. That path does not come cheap, however. The cloud also offers other benefits, such as scalability, efficiency, and advanced computing capabilities (GPUs available on demand). There are some little-known aspects of deploying LLMs on public cloud platforms.

One article to understand the technical challenges and optimization strategies for fine-tuning large language models

Mar 20, 2024 pm 11:01 PM

One article to understand the technical challenges and optimization strategies for fine-tuning large language models

Mar 20, 2024 pm 11:01 PM

Hello everyone, my name is Luga. Today we will continue to explore technologies in the artificial intelligence ecosystem, especially LLMFine-Tuning. This article will continue to analyze LLMFine-Tuning technology in depth to help everyone better understand its implementation mechanism so that it can be better applied to market development and other fields. LLMs (LargeLanguageModels) are leading a new wave of artificial intelligence technology. This advanced AI simulates human cognitive and language abilities by analyzing massive amounts of data using statistical models to learn complex patterns between words and phrases. The powerful functions of LLMs have aroused strong interest from many leading companies and technology enthusiasts, who are rushing to adopt these artificial intelligence-driven

Conveniently trained the biggest ViT in history? Google upgrades visual language model PaLI: supports 100+ languages

Apr 12, 2023 am 09:31 AM

Conveniently trained the biggest ViT in history? Google upgrades visual language model PaLI: supports 100+ languages

Apr 12, 2023 am 09:31 AM

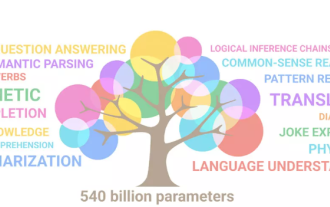

The progress of natural language processing in recent years has largely come from large-scale language models. Each new model released pushes the amount of parameters and training data to new highs, and at the same time, the existing benchmark rankings will be slaughtered. ! For example, in April this year, Google released the 540 billion-parameter language model PaLM (Pathways Language Model), which successfully surpassed humans in a series of language and reasoning tests, especially its excellent performance in few-shot small sample learning scenarios. PaLM is considered to be the development direction of the next generation language model. In the same way, visual language models actually work wonders, and performance can be improved by increasing the scale of the model. Of course, if it is just a multi-tasking visual language model

Efficient parameter fine-tuning of large-scale language models--BitFit/Prefix/Prompt fine-tuning series

Oct 07, 2023 pm 12:13 PM

Efficient parameter fine-tuning of large-scale language models--BitFit/Prefix/Prompt fine-tuning series

Oct 07, 2023 pm 12:13 PM

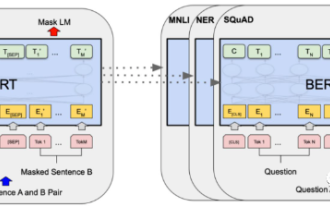

In 2018, Google released BERT. Once it was released, it defeated the State-of-the-art (Sota) results of 11 NLP tasks in one fell swoop, becoming a new milestone in the NLP world. The structure of BERT is shown in the figure below. On the left is the BERT model preset. The training process, on the right is the fine-tuning process for specific tasks. Among them, the fine-tuning stage is for fine-tuning when it is subsequently used in some downstream tasks, such as text classification, part-of-speech tagging, question and answer systems, etc. BERT can be fine-tuned on different tasks without adjusting the structure. Through the task design of "pre-trained language model + downstream task fine-tuning", it brings powerful model effects. Since then, "pre-training language model + downstream task fine-tuning" has become the mainstream training in the NLP field.

RoSA: A new method for efficient fine-tuning of large model parameters

Jan 18, 2024 pm 05:27 PM

RoSA: A new method for efficient fine-tuning of large model parameters

Jan 18, 2024 pm 05:27 PM

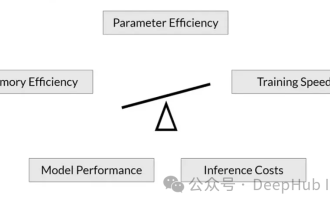

As language models scale to unprecedented scale, comprehensive fine-tuning for downstream tasks becomes prohibitively expensive. In order to solve this problem, researchers began to pay attention to and adopt the PEFT method. The main idea of the PEFT method is to limit the scope of fine-tuning to a small set of parameters to reduce computational costs while still achieving state-of-the-art performance on natural language understanding tasks. In this way, researchers can save computing resources while maintaining high performance, bringing new research hotspots to the field of natural language processing. RoSA is a new PEFT technique that, through experiments on a set of benchmarks, is found to outperform previous low-rank adaptive (LoRA) and pure sparse fine-tuning methods using the same parameter budget. This article will go into depth